Prometheus

1 概念

1.1 什么是 Prometheus

Prometheus 是一套集数据采集、时序存储、汇总分析、告警功能于一体的开源监控系统,专为分布式系统(尤其是云原生环境)设计。

1.2 特点

- 数据采集方式:通过 HTTP 协议采集数据(核心为 Pull 拉取模式);

- 存储能力:自带 TSDB(时序数据库),支持数据按时间顺序追加存储,也可集成 InfluxDB、OpenTSDB 等外置时序数据库;

- 查询能力:提供强大的查询功能,支持通过 PromQL 对时序数据进行过滤、聚合、分析;

- 可视化支持:可结合第三方插件 Grafana,以友好的图表形式展示监控数据;

- 灵活扩展:通过 Exporter、Pushgateway 等组件适配不同类型的监控目标。

1.3 核心组件

- Exporter:用于将原生不支持 Prometheus 协议的应用 / 系统指标,转换为 Prometheus 可识别的格式,暴露

/metrics接口供采集(如 Node Exporter、MySQL Exporter); - Pushgateway:针对短期临时任务(无法被 Prometheus 持续拉取),接收任务主动推送的指标数据,再由 Prometheus 从其拉取;

- TSDB 时序数据库:Prometheus 内置存储组件,专门用于高效存储按时间顺序生成的监控指标数据;

- Rule 告警规则:基于 PromQL 定义的监控条件(如

cpu_usage > 90%),用于判断指标是否触发告警; - Alertmanager:告警通知模块,接收 Prometheus 触发的告警信息,经过去重、分组、路由后,向管理员发送通知(如邮件、Slack 等);

- Grafana:第三方可视化插件,支持接入 Prometheus 数据源,生成自定义监控仪表盘。

2 Prometheus 完整工作流程

主机/服务

▲

│

├──采集指标

│

Exporter(Node Exporter、nginx-Exporter)

▲

│

│ Exporter提供 metrics接口暴露服务指标,供 Prometheus采集数据

│

Prometheus

│

├── TSDB存储

│

├── PromQL查询

│ │

│ ▼

│ Grafana

│

└── Alert Rules

│

▼

Alertmanager

│

├── 告警

┌───────┼────────┐

▼ ▼ ▼

Email Slack 钉钉

2.1 Exporter——服务暴露指标

应用或系统需要提供 metrics接口。

| 组件 | 作用 |

|---|---|

| Node Exporter | 主机CPU、内存、磁盘 |

| kube-state-metrics | Kubernetes资源状态 |

| 应用自带metrics | 如SpringBoot |

2.2 Prometheus 配置文件关键模块

prometheus.yml 是 Prometheus 的核心配置文件,主要由 全局配置、抓取配置、告警规则、告警管理器、远程存储 等模块组成,控制 数据采集、告警触发、数据存储与转发。

global # 全局配置

rule_files # 告警规则文件

scrape_configs # 监控目标

alerting # Alertmanager地址

remote_write # 远程写入

remote_read # 远程读取

2.2.1 global——全局配置

🔹 作用:定义 Prometheus 的默认运行参数

常见参数

| 参数 | 作用 |

|---|---|

| scrape_interval | 抓取监控数据的默认间隔 |

| scrape_timeout | 抓取超时时间 |

| evaluation_interval | 规则计算间隔 |

示例

global:

scrape_interval: 15s

evaluation_interval: 15s

工作流程

Prometheus

│

│ 每15秒

▼

抓取监控目标 metrics

📌 说明

scrape_interval可以被 job 覆盖evaluation_interval用于 告警规则计算

2.2.2 rule_files——告警规则配置

🔹 作用:加载告警规则和记录规则

groups

├─ name # 规则组名

├─ rules # 规则

│ ├─ alert # 告警规则注释

│ ├─ expr # 告警规则触发条件(#核心:promQL表达式)

│ ├─ for # 达到触发条件后持续多久触发告警

│ ├─ labels # 告警标签

│ └─ annotations # 告警通知的内容

示例

prometheus.yml:

rule_files: |

- rules.yml

rules.yml: |

groups:

- name: cpu告警

rules:

- alert: kube-proxy的cpu使用率大于80%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-kube-proxy"}[1m]) * 100 > 80

for: 1m

labels:

severity: warnning

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过80%"

2.2.3 scrape_configs——监控目标

🔹 作用:定义 Prometheus 要监控的目标

scrape_configs

├── job_name # 监控任务名称

├── metrics_path # 指定 metrics 地址

├── static_configs # 静态目标

├── kubernetes_sd_configs # K8s服务发现

└── relabel_configs # 标签重写

1️⃣ kubernetes_sd_configs(K8s服务发现)

| role | 监控对象 |

|---|---|

| node | Node |

| pod | Pod |

| service | Service |

| endpoints(推荐) | Endpoint |

示例

kubernetes_sd_configs:

- role: endpoints

📌 role: service 和 role: endpoints 的区别

假设:

Service

nginx-service(10.96.10.20:80)

│

┌───┴────┐

Pod1(10.244.1.3:80) Pod2(10.244.2.5:80)

Service 监控,Prometheus 只看到1个目标,不能看到具体的资源

Prometheus target

10.96.10.20:80

Endpoints 监控,Prometheus 看到2个目标

Prometheus targets

10.244.1.3:80

10.244.2.5:80

**2️⃣ relabel_configs(标签重写 ⭐) **

🔹 作用:过滤或修改监控目标

常见用途:

- 过滤 Pod

- 修改标签

- 生成 target

📌 常用的action(动作)

keep / drop → 过滤 target

replace → 修改/新增标签

labelmap → 批量映射 k8s label

📌 示例1:

# 含义:只保留 service_name = nginx-exporter 的 target

relabel_configs:

- source_labels: [__meta_kubernetes_service_name]

action: keep

regex: nginx-exporter

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: k8s-namespace

- action: labelmap

regex: __meta_kubernetes_service_label_(.+)

📌 示例2:

labelmap实际上就是把pod在k8s中的标签,映射给了Prometheus

2.3 Prometheus 发现监控目标

Prometheus需要知道 要监控谁,Prometheus监控的底层原理不是监控某个服务,而是监控Exporter,通过Exporter采集指标

配置文件:

prometheus.yml

2.2.1 传统环境部署的Prometheus监控nginx

scrape_configs:

- job_name: nginx

metrics_path: "/metrics"

static_configs:

# //监控部署在nginx服务器上nginx-exporter 暴露出来的地址

- targets:

- 192.168.10.13:9913

labels:

service: nginx

2.2.1 k8s内部署的Prometheus监控nginx

scrape_configs:

- job_name: "nginx"

kubernetes_sd_configs:

- role: endpoints # 监控的资源类型

relabel_configs:

# //监控service_name为nginx-exporter,且endpoint_port_name(端口名)为metrics的资源

- source_labels:

- __meta_kubernetes_service_name

action: keep

regex: nginx-exporter

- source_labels:

- __meta_kubernetes_endpoint_port_name

action: keep

regex: metrics

-----------------------------------------------------------------------------------------

# 以下是部署在k8s中nginx-exporter的service的yaml文件,Prometheus实际上监控的就是这个

apiVersion: v1

kind: Service

metadata:

name: nginx-exporter # 监控service_name为nginx-exporter

namespace: default

labels:

app: nginx-exporter

spec:

selector:

app: nginx

ports:

- name: metrics # 且endpoint_port_name(端口名)为metrics的资源

port: 9113

targetPort: 9113

2.2.1 传统环境部署的Prometheus监控 k8s内部署的 nginx

scrape_configs:

- job_name: "kubernetes-nginx-service"

kubernetes_sd_configs:

- role: endpoints # 监控的资源类型

api_server: https://192.168.10.68:6443 # 先找到api_server

# //身份认证

bearer_token_file: /data/token

tls_config:

ca_file: /data/ca.crt

relabel_configs:

# //再找到nginx-exporer的地址

- source_labels: [__meta_kubernetes_service_name]

action: keep

regex: nginx

- source_labels: [__meta_kubernetes_endpoint_port_name]

action: keep

regex: metrics

总结:Prometheus定期抓取这些地址的metrics

- Prometheus监控传统环境下的服务通过IP:端口来监控 exporter

- Prometheus监控 k8s 内的服务通过 svc/endporter 来监控 exporter

2.3 TSDB——时序数据库

Prometheus把数据存入 本地时序数据库。

| 特性 | 说明 |

|---|---|

| TSDB | 时序数据库 |

| 数据格式 | 时间 + 指标 + 标签 |

| 本地存储 | /data目录 |

| 自动压缩 | 节省空间 |

数据结构:

metric_name{label=value} timestamp value

示例:

node_cpu_seconds_total{instance="node01"} 1710000000 0.23

2.4 PromQL 查询

📌 promQL的基本语法和格式

语法:函数(指标名 {标签过滤} [时间])

✅ Prometheus 自定义指标常用函数(业务监控向)

| 函数 | 主要用途 | 示例 |

|---|---|---|

rate() |

计算业务请求增长速率(QPS、下单量等) | 以1个小时前的时间点为起点,计算5分钟内的增长速率rate(order_total[5m]offset 1h) |

increase() |

计算一段时间内数据增长量(下单总数、支付成功数) | increase(payment_success_total[1h]) |

sum() |

汇总多实例或多服务的指标 | sum(rate(http_requests_total[5m])) by (service) |

avg() |

计算平均值(平均响应时间、平均耗时) | avg(http_request_duration_seconds) |

max() |

检查业务峰值(最大并发、最大延迟) | max(active_user_count) |

histogram_quantile() |

计算分位响应时间(P90 / P95 / P99) | histogram_quantile(0.95, sum(rate(http_request_duration_seconds_bucket[5m])) by (le)) |

predict_linear() |

预测业务量趋势或容量告警 | predict_linear(order_total[1h], 3600) |

📌 by 具有分别计算的功能

2.5 Alertmanager 告警

2.5.1 发送告警

Prometheus不会直接发通知,而是发送给Alertmanager。

prometheus.yml 配置

alerting:

alertmanagers:

- static_configs:

- targets:

- alertmanager:9093

2.5.2 处理告警

alertmanager.yml

-----------------------------------------------------------------------------------------

route:

receiver: email

receivers:

- name: email

email_configs:

- to: ops@example.com

2.6 Grafana 展示

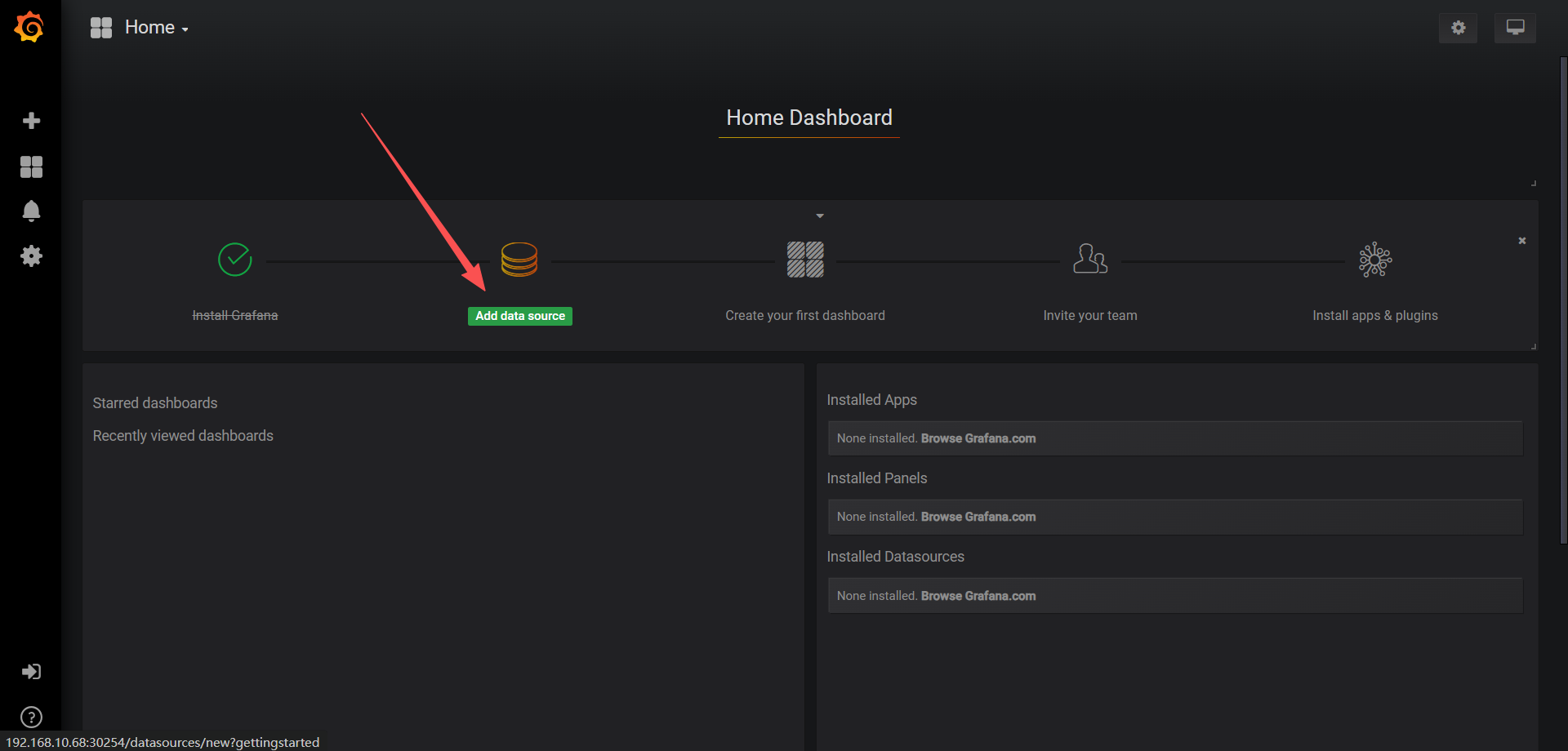

浏览器访问http://192.168.10.68:30254 ,登陆 Grafana

2.6.1 配置Grafana UI 界面

选择 Add data source

【Name】设置成 Prometheus【Type】选择 Prometheus【URL】设置成 http://prometheus.prometheus.svc:9090

点击【Save & Test】完成设置

2.6.2 导入监控模板

官方链接搜索:https://grafana.com/dashboards?dataSource=prometheus&search=kubernetes

可导入 json文件,也可输入 ID号

点击左侧+号选择【Import】

点击【Upload .json File】导入 json 模板【Prometheus】选择 Prometheus

点击【Import】

3 部署 Prometheus

3.1 镜像准备

# 镜像格式:harbor的IP/仓库名/镜像名:标签

# node-exporter

192.168.10.100/myharbor/prom/node-exporter:v0.16.0

# kube-state-metrics

swr.cn-north-4.myhuaweicloud.com/ddn-k8s/quay.io/coreos/kube-state-metrics:v1.9.5

# Prometheus

192.168.10.100/myharbor/prometheus:v2.21.0

# Grafana

192.168.10.100/myharbor/grafana:v5.3.4

# Alertmanager

192.168.10.100/myharbor/alertmanager:v0.24.0

3.2 创建RBAC

# 创建目录

mkdir /opt/prometheus && cd /opt/prometheus/

vim prometheus-rbac.yaml

-----------------------------------------------------------------------------------------

---

# 1. 创建 Namespace

apiVersion: v1

kind: Namespace

metadata:

name: prometheus

labels:

name: prometheus

app: prometheus

---

# 1. 创建 ServiceAccount

apiVersion: v1

kind: ServiceAccount

metadata:

name: prometheus-sa

namespace: prometheus

labels:

app: prometheus

component: rbac

---

# 2. 创建 ClusterRole(精细权限控制)

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: prometheus-clusterrole

labels:

app: prometheus

component: rbac

rules:

# 核心监控权限 - 只读权限

- apiGroups: [""]

resources:

- nodes

- nodes/proxy

- nodes/metrics

- services

- endpoints

- pods

- namespaces

- configmaps

- replicationcontrollers

- persistentvolumes

- persistentvolumeclaims

verbs: ["get", "list", "watch"]

# 扩展 API 组权限

- apiGroups: ["extensions", "apps", "networking.k8s.io"]

resources:

- deployments

- daemonsets

- replicasets

- statefulsets

- ingresses

verbs: ["get", "list", "watch"]

# 批处理任务权限

- apiGroups: ["batch"]

resources:

- jobs

- cronjobs

verbs: ["get", "list", "watch"]

# 自动扩缩容相关

- apiGroups: ["autoscaling"]

resources:

- horizontalpodautoscalers

verbs: ["get", "list", "watch"]

# 存储相关

- apiGroups: ["storage.k8s.io"]

resources:

- storageclasses

- volumeattachments

verbs: ["get", "list", "watch"]

# 指标相关(如果安装了 metrics-server)

- apiGroups: ["metrics.k8s.io"]

resources:

- pods

- nodes

verbs: ["get", "list", "watch"]

# 自定义资源监控

- apiGroups: ["apiextensions.k8s.io"]

resources:

- customresourcedefinitions

verbs: ["get", "list", "watch"]

# Prometheus Operator 相关(如果使用)

- apiGroups: ["monitoring.coreos.com"]

resources:

- prometheuses

- servicemonitors

- prometheusrules

- alertmanagers

verbs: ["get", "list", "watch"]

# 事件监控权限

- apiGroups: [""]

resources:

- events

verbs: ["get", "list", "watch", "create", "update", "patch"]

# 允许 Prometheus 进行健康检查

- apiGroups: [""]

resources:

- componentstatuses

verbs: ["get", "list"]

# 允许访问 API 服务

- nonResourceURLs:

- /metrics

- /healthz

- /ready

- /live

verbs: ["get"]

---

# 3. 创建 ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: prometheus-clusterrolebinding

labels:

app: prometheus

component: rbac

subjects:

- kind: ServiceAccount

name: prometheus-sa

namespace: prometheus

roleRef:

kind: ClusterRole

name: prometheus-clusterrole

apiGroup: rbac.authorization.k8s.io

-----------------------------------------------------------------------------------------

kubectl apply -f prometheus-rbac.yaml

3.3 拉取 harbor 镜像仓库的 secret

# 创建连接harbor仓库的secret

kubectl create secret docker-registry harbor-secret \

--docker-server=192.168.10.100 \

--docker-username=harbor-secret \

--docker-password=Harbor12345 \

--docker-email=1194743653@qq.com \

-n prometheus

# 给命名空间<promethues> 加入secret

kubectl patch serviceaccount default \

-p '{"imagePullSecrets": [{"name": "harbor-secret"}]}' \

-n prometheus

3.3 部署 node-exporter

# 部署 node-exporter

vim node-export.yaml

-----------------------------------------------------------------------------------------

---

apiVersion: apps/v1

kind: DaemonSet #可以保证 k8s 集群的每个节点都运行完全一样的 pod

metadata:

name: node-exporter

namespace: prometheus

labels:

name: node-exporter

spec:

selector:

matchLabels:

name: node-exporter

template:

metadata:

labels:

name: node-exporter

spec:

hostPID: true

hostIPC: true

hostNetwork: true

containers:

- name: node-exporter

image: 192.168.10.100/myharbor/prom/node-exporter:v0.16.0

ports:

- containerPort: 9100

resources:

requests:

cpu: 0.15 #这个容器运行至少需要0.15核cpu

securityContext:

privileged: true #开启特权模式

args:

- --path.procfs

- /host/proc

- --path.sysfs

- /host/sys

- --collector.filesystem.ignored-mount-points

- '"^/(sys|proc|dev|host|etc)($|/)"'

volumeMounts:

- name: dev

mountPath: /host/dev

- name: proc

mountPath: /host/proc

- name: sys

mountPath: /host/sys

- name: rootfs

mountPath: /rootfs

tolerations:

- key: "node-role.kubernetes.io/control-plane"

operator: "Exists"

effect: "NoSchedule"

volumes:

- name: proc

hostPath:

path: /proc

- name: dev

hostPath:

path: /dev

- name: sys

hostPath:

path: /sys

- name: rootfs

hostPath:

path: /

-----------------------------------------------------------------------------------------

kubectl apply -f node-export.yaml

curl -Ls http://192.168.10.80:9100/metrics | grep node_cpu_seconds

curl -Ls http://192.168.10.80:9100/metrics | grep node_load

3.4 部署 kube-state-metrics 组件

# 创建 sa,并对 sa 授权

vim kube-state-metrics-rbac.yaml

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: kube-state-metrics

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: kube-state-metrics

rules:

- apiGroups: [""]

resources: ["nodes", "pods", "services", "resourcequotas", "replicationcontrollers", "limitranges", "persistentvolumeclaims", "persistentvolumes", "namespaces", "endpoints"]

verbs: ["list", "watch"]

- apiGroups: ["extensions"]

resources: ["daemonsets", "deployments", "replicasets"]

verbs: ["list", "watch"]

- apiGroups: ["apps"]

resources: ["statefulsets"]

verbs: ["list", "watch"]

- apiGroups: ["batch"]

resources: ["cronjobs", "jobs"]

verbs: ["list", "watch"]

- apiGroups: ["autoscaling"]

resources: ["horizontalpodautoscalers"]

verbs: ["list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: kube-state-metrics

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: kube-state-metrics

subjects:

- kind: ServiceAccount

name: kube-state-metrics

namespace: kube-system

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: kube-state-metrics

namespace: kube-system

spec:

replicas: 1

selector:

matchLabels:

app: kube-state-metrics

template:

metadata:

labels:

app: kube-state-metrics

spec:

serviceAccountName: kube-state-metrics

containers:

- name: kube-state-metrics

image: swr.cn-north-4.myhuaweicloud.com/ddn-k8s/quay.io/coreos/kube-state-metrics:v1.9.5

ports:

- containerPort: 8080

---

apiVersion: v1

kind: Service

metadata:

annotations:

prometheus.io/scrape: 'true'

name: kube-state-metrics

namespace: kube-system

labels:

app: kube-state-metrics

spec:

ports:

- name: kube-state-metrics

port: 8080

protocol: TCP

selector:

app: kube-state-metrics

-----------------------------------------------------------------------------------------

kubectl apply -f kube-state-metrics-rbac.yaml

3.4 部署 Configmap 存放配置

# # 创建 Prometheus的配置文件

vim prometheus-configmap.yaml

-----------------------------------------------------------------------------------------

kind: ConfigMap

apiVersion: v1

metadata:

labels:

app: prometheus

name: prometheus-configmap

namespace: prometheus

data:

prometheus.yml: |

rule_files: #指定报警规则文,prometheus根据这些规则信息,会推送报警信息到alertmanager中

- "rules.yml"

alerting:

alertmanagers:

- static_configs:

- targets: ["localhost:9093"]

global:

scrape_interval: 15s

scrape_timeout: 10s

evaluation_interval: 1m

scrape_configs:

- job_name: 'kubernetes-node'

kubernetes_sd_configs:

- role: node

relabel_configs:

- source_labels: [__address__]

regex: '(.*):10250'

replacement: '${1}:9100'

target_label: __address__

action: replace

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- job_name: 'kubernetes-node-cadvisor'

kubernetes_sd_configs:

- role: node

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- target_label: __address__

replacement: kubernetes.default.svc:443

- source_labels: [__meta_kubernetes_node_name]

regex: (.+)

target_label: __metrics_path__

replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

- job_name: 'kubernetes-apiserver'

kubernetes_sd_configs:

- role: endpoints

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]

action: keep

regex: default;kubernetes;https

- job_name: 'kubernetes-service-endpoints'

kubernetes_sd_configs:

- role: endpoints

relabel_configs:

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]

action: keep

regex: true

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]

action: replace

target_label: __scheme__

regex: (https?)

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]

action: replace

target_label: __metrics_path__

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]

action: replace

target_label: __address__

regex: ([^:]+)(?::\d+)?;(\d+)

replacement: $1:$2

- action: labelmap

regex: __meta_kubernetes_service_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_service_name]

action: replace

target_label: kubernetes_name

- job_name: 'kubernetes-pods'

kubernetes_sd_configs:

- role: pod

relabel_configs:

- action: keep

regex: true

source_labels:

- __meta_kubernetes_pod_annotation_prometheus_io_scrape

- action: replace

regex: (.+)

source_labels:

- __meta_kubernetes_pod_annotation_prometheus_io_path

target_label: __metrics_path__

- action: replace

regex: ([^:]+)(?::\d+)?;(\d+)

replacement: $1:$2

source_labels:

- __address__

- __meta_kubernetes_pod_annotation_prometheus_io_port

target_label: __address__

- action: labelmap

regex: __meta_kubernetes_pod_label_(.+)

- action: replace

source_labels:

- __meta_kubernetes_namespace

target_label: kubernetes_namespace

- action: replace

source_labels:

- __meta_kubernetes_pod_name

target_label: kubernetes_pod_name

- job_name: 'kubernetes-schedule' #监控作业的名称

scrape_interval: 5s #拉取数据的时间间隔,默认为 1 分钟默认继承 global 值

static_configs: #表示静态目标配置,就是固定从某个target拉取数据

- targets: ['192.168.10.66:10251'] #指定监控的目标,表示从哪儿拉取数据

- job_name: 'kubernetes-controller-manager'

scrape_interval: 5s

static_configs:

- targets: ['192.168.10.66:10252']

- job_name: 'kubernetes-kube-proxy'

scrape_interval: 5s

static_configs:

- targets: ['192.168.10.66:10249','192.168.10.67:10249','192.168.10.68:10249']

- job_name: 'kubernetes-etcd'

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/k8s-certs/etcd/ca.crt

cert_file: /var/run/secrets/kubernetes.io/k8s-certs/etcd/server.crt

key_file: /var/run/secrets/kubernetes.io/k8s-certs/etcd/server.key

scrape_interval: 5s

static_configs:

- targets: ['192.168.10.66:2379']

rules.yml: |

groups:

- name: example

rules:

- alert: kube-proxy的cpu使用率大于80%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-kube-proxy"}[1m]) * 100 > 80

for: 2s

labels:

severity: warnning

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过80%"

- alert: kube-proxy的cpu使用率大于90%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-kube-proxy"}[1m]) * 100 > 90

for: 2s

labels:

severity: critical

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过90%"

- alert: scheduler的cpu使用率大于80%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-schedule"}[1m]) * 100 > 80

for: 2s

labels:

severity: warnning

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过80%"

- alert: scheduler的cpu使用率大于90%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-schedule"}[1m]) * 100 > 90

for: 2s

labels:

severity: critical

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过90%"

- alert: controller-manager的cpu使用率大于80%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-controller-manager"}[1m]) * 100 > 80

for: 2s

labels:

severity: warnning

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过80%"

- alert: controller-manager的cpu使用率大于90%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-controller-manager"}[1m]) * 100 > 0

for: 2s

labels:

severity: critical

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过90%"

- alert: apiserver的cpu使用率大于80%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-apiserver"}[1m]) * 100 > 80

for: 2s

labels:

severity: warnning

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过80%"

- alert: apiserver的cpu使用率大于90%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-apiserver"}[1m]) * 100 > 90

for: 2s

labels:

severity: critical

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过90%"

- alert: etcd的cpu使用率大于80%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-etcd"}[1m]) * 100 > 80

for: 2s

labels:

severity: warnning

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过80%"

- alert: etcd的cpu使用率大于90%

expr: rate(process_cpu_seconds_total{job=~"kubernetes-etcd"}[1m]) * 100 > 90

for: 2s

labels:

severity: critical

annotations:

description: "{{$labels.instance}}的{{$labels.job}}组件的cpu使用率超过90%"

- alert: kube-state-metrics的cpu使用率大于80%

expr: rate(process_cpu_seconds_total{k8s_app=~"kube-state-metrics"}[1m]) * 100 > 80

for: 2s

labels:

severity: warnning

annotations:

description: "{{$labels.instance}}的{{$labels.k8s_app}}组件的cpu使用率超过80%"

value: "{{ $value }}%"

threshold: "80%"

- alert: kube-state-metrics的cpu使用率大于90%

expr: rate(process_cpu_seconds_total{k8s_app=~"kube-state-metrics"}[1m]) * 100 > 90

for: 2s

labels:

severity: critical

annotations:

description: "{{$labels.instance}}的{{$labels.k8s_app}}组件的cpu使用率超过90%"

value: "{{ $value }}%"

threshold: "90%"

- alert: coredns的cpu使用率大于80%

expr: rate(process_cpu_seconds_total{k8s_app=~"kube-dns"}[1m]) * 100 > 80

for: 2s

labels:

severity: warnning

annotations:

description: "{{$labels.instance}}的{{$labels.k8s_app}}组件的cpu使用率超过80%"

value: "{{ $value }}%"

threshold: "80%"

- alert: coredns的cpu使用率大于90%

expr: rate(process_cpu_seconds_total{k8s_app=~"kube-dns"}[1m]) * 100 > 90

for: 2s

labels:

severity: critical

annotations:

description: "{{$labels.instance}}的{{$labels.k8s_app}}组件的cpu使用率超过90%"

value: "{{ $value }}%"

threshold: "90%"

- alert: HttpRequestsAvg

expr: sum(rate(rest_client_requests_total{job=~"kubernetes-kube-proxy|kubernetes-kubelet|kubernetes-schedule|kubernetes-control-manager|kubernetes-apiservers"}[1m])) > 1000

for: 2s

labels:

team: admin

annotations:

description: "组件{{$labels.job}}({{$labels.instance}}): TPS超过1000"

value: "{{ $value }}"

threshold: "1000"

=========================================================================================

# 创建 alermanager的配置文件

vim alertmanager-configmap.yaml

-----------------------------------------------------------------------------------------

kind: ConfigMap

apiVersion: v1

metadata:

name: alertmanager-configmap

namespace: prometheus

data:

alertmanager.yml: |-

global: #设置发件人邮箱信息

resolve_timeout: 1m

smtp_smarthost: 'smtp.qq.com:465' 改成465 587 ssl

smtp_from: '你的邮箱'

smtp_auth_username: '你的邮箱'

smtp_auth_password: '授权码' #此处为授权码,登录QQ邮箱【设置】->【账户】中的【生成授权码】获取

smtp_require_tls: false

route: #用于设置告警的分发策略

group_by: [alertname] #采用哪个标签来作为分组依据

group_wait: 10s #组告警等待时间。也就是告警产生后等待10s,如果有同组告警一起发出

group_interval: 10s #上下两组发送告警的间隔时间

repeat_interval: 10m #重复发送告警的时间,减少相同邮件的发送频率,默认是1h

receiver: default-receiver #定义谁来收告警

receivers: #设置收件人邮箱信息

- name: 'default-receiver'

email_configs:

- to: '你的邮箱' #设置收件人邮箱地址

send_resolved: true

=========================================================================================

kubectl apply -f prometheus-configmap.yaml

kubectl apply -f alertmanager-configmap.yaml

3.5 部署 Prometheus + Alertmanager

(3)通过 deployment 部署 prometheus

#将 prometheus 调度到 node1 节点,在 node1 节点创建 prometheus 数据存储目录

mkdir /data && chmod 777 /data

#通过 deployment 部署 prometheus

vim prometheus-alertmanager-server.yaml

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: prometheus-server

namespace: prometheus

labels:

app: prometheus

spec:

replicas: 1

selector:

matchLabels:

app: prometheus

component: server

#matchExpressions:

#- {key: app, operator: In, values: [prometheus]}

#- {key: component, operator: In, values: [server]}

template:

metadata:

labels:

app: prometheus

component: server

annotations:

prometheus.io/scrape: 'false'

spec:

nodeName: node01

serviceAccountName: prometheus-sa

containers:

- name: prometheus

image: 192.168.10.100/myharbor/prometheus:v2.21.0

imagePullPolicy: IfNotPresent

securityContext: # 添加安全上下文

runAsUser: 0 # 以 root 用户运行

runAsGroup: 0

command:

- "/bin/prometheus"

args:

- "--config.file=/etc/prometheus/prometheus.yml"

- "--storage.tsdb.path=/prometheus"

- "--storage.tsdb.retention=24h"

- "--web.enable-lifecycle"

ports:

- containerPort: 9090

protocol: TCP

volumeMounts:

- mountPath: /etc/prometheus

name: prometheus-configmap

- mountPath: /prometheus/

name: prometheus-storage-volume

- name: k8s-certs

mountPath: /var/run/secrets/kubernetes.io/k8s-certs/etcd/

- name: localtime

mountPath: /etc/localtime

- name: alertmanager

image: 192.168.10.100/myharbor/alertmanager:v0.24.0

imagePullPolicy: IfNotPresent

args:

- "--config.file=/etc/alertmanager/alertmanager.yml"

- "--log.level=debug"

- "--storage.path=/alertmanager/data"

ports:

- containerPort: 9093

protocol: TCP

name: alertmanager

volumeMounts:

- name: alertmanager-configmap

mountPath: /etc/alertmanager

- name: alertmanager-storage

mountPath: /alertmanager

- name: localtime

mountPath: /etc/localtime

volumes:

- name: prometheus-configmap

configMap:

name: prometheus-configmap

- name: prometheus-storage-volume

hostPath:

path: /data

type: Directory

- name: k8s-certs

secret:

secretName: etcd-certs

- name: alertmanager-configmap

configMap:

name: alertmanager-configmap

- name: alertmanager-storage

hostPath:

path: /data/alertmanager

type: DirectoryOrCreate

- name: localtime

hostPath:

path: /usr/share/zoneinfo/Asia/Shanghai

---

apiVersion: v1

kind: Service

metadata:

name: prometheus

namespace: prometheus

labels:

app: prometheus

spec:

type: NodePort

ports:

- port: 9090

targetPort: 9090

protocol: TCP

nodePort: 31000

selector:

app: prometheus

component: server

---

apiVersion: v1

kind: Service

metadata:

name: alertmanager

namespace: prometheus

labels:

name: prometheus

kubernetes.io/cluster-service: 'true'

spec:

ports:

- name: alertmanager

nodePort: 30066

port: 9093

protocol: TCP

targetPort: 9093

selector:

app: prometheus

sessionAffinity: None

type: NodePort

=========================================================================================

kubectl apply -f prometheus-alertmanager-server.yaml

# 浏览器访问prometheus: http://192.168.10.68:31000

# 浏览器访问alertmanager: http://192.168.10.80:30066

3.6 部署 Grafana

vim grafana.yaml

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: monitoring-grafana

namespace: kube-system

spec:

replicas: 1

selector:

matchLabels:

task: monitoring

k8s-app: grafana

template:

metadata:

labels:

task: monitoring

k8s-app: grafana

spec:

containers:

- name: grafana

image: 192.168.10.100/myharbor/grafana:v5.3.4

ports:

- containerPort: 3000

protocol: TCP

volumeMounts:

- mountPath: /etc/ssl/certs

name: ca-certificates

readOnly: true

- mountPath: /var/lib/grafana

name: grafana-storage

env:

- name: INFLUXDB_HOST

value: monitoring-influxdb

- name: GF_SERVER_HTTP_PORT

value: "3000"

# The following env variables are required to make Grafana accessible via

# the kubernetes api-server proxy. On production clusters, we recommend

# removing these env variables, setup auth for grafana, and expose the grafana

# service using a LoadBalancer or a public IP.

- name: GF_AUTH_BASIC_ENABLED

value: "false"

- name: GF_AUTH_ANONYMOUS_ENABLED

value: "true"

- name: GF_AUTH_ANONYMOUS_ORG_ROLE

value: Admin

- name: GF_SERVER_ROOT_URL

# If you're only using the API Server proxy, set this value instead:

# value: /api/v1/namespaces/kube-system/services/monitoring-grafana/proxy

value: /

volumes:

- name: ca-certificates

hostPath:

path: /etc/ssl/certs

- name: grafana-storage

emptyDir: {}

---

apiVersion: v1

kind: Service

metadata:

labels:

# For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)

# If you are NOT using this as an addon, you should comment out this line.

kubernetes.io/cluster-service: 'true'

kubernetes.io/name: monitoring-grafana

name: monitoring-grafana

namespace: kube-system

spec:

# In a production setup, we recommend accessing Grafana through an external Loadbalancer

# or through a public IP.

# type: LoadBalancer

# You could also use NodePort to expose the service at a randomly-generated port

# type: NodePort

ports:

- port: 80

targetPort: 3000

selector:

k8s-app: grafana

type: NodePort

-----------------------------------------------------------------------------------------

kubectl apply -f grafana.yaml

kubectl get svc -n kube-system | grep grafana

3.7 Grafana UI 配置

详见 2.6

AtomGit 是由开放原子开源基金会联合 CSDN 等生态伙伴共同推出的新一代开源与人工智能协作平台。平台坚持“开放、中立、公益”的理念,把代码托管、模型共享、数据集托管、智能体开发体验和算力服务整合在一起,为开发者提供从开发、训练到部署的一站式体验。

更多推荐

已为社区贡献1条内容

已为社区贡献1条内容

所有评论(0)