6. AI Learning: Model Invocation and Integration


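I. Core Model Invocation Patterns: From Basic to Efficient

1. Native Single-Machine Invocation (Quick Validation)

Suited to development, testing, and small-scale inference. Hugging Face Transformers, the core tool here, supports both PyTorch and TensorFlow backends: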

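# 1.1 Basic invocation (Chinese text generation example)
import os

# Use a mirror inside mainland China to speed up downloads. Set this before
# importing transformers, because huggingface_hub reads HF_ENDPOINT at import time.
os.environ["HF_ENDPOINT"] = "https://hf-mirror.com"

from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

MODEL_ID = "Qwen/Qwen3-235B-A22B-Instruct-2507"

# Load the model (quantization reduces VRAM usage)
tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
model = AutoModelForCausalLM.from_pretrained(
    MODEL_ID,
    device_map="auto",  # place layers on GPU/CPU automatically
    quantization_config=BitsAndBytesConfig(load_in_8bit=True),  # 8-bit (requires bitsandbytes)
    trust_remote_code=True,
)

# Run generation; the prompt means "Explain what LoRA fine-tuning is"
inputs = tokenizer("解释什么是LoRA微调", return_tensors="pt").to(model.device)
outputs = model.generate(
    **inputs,
    max_new_tokens=512,
    do_sample=True,  # temperature and top_p only take effect when sampling
    temperature=0.7,
    top_p=0.95,
)
print(tokenizer.decode(outputs[0], skip_special_tokens=True))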
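
To make the temperature and top_p arguments above concrete, here is a minimal pure-Python sketch of temperature scaling followed by nucleus (top-p) filtering. It illustrates the general technique and is not the Transformers implementation; the function names and the toy logits are invented for this example.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Convert logits to probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def top_p_filter(probs, top_p=0.95):
    """Return indices of the smallest high-probability set whose mass reaches top_p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break
    return kept

toy_logits = [2.0, 1.0, 0.5, -1.0, -3.0]  # hypothetical scores for five tokens
probs = softmax_with_temperature(toy_logits, temperature=0.7)
candidates = top_p_filter(probs, top_p=0.95)
print(candidates)
```

With these toy logits the three most likely tokens are kept and the two unlikely ones are excluded before sampling. Raising the temperature flattens the distribution, so more tokens are needed to reach the same cumulative mass.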

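# 1.2 Quantized model loading (maximum VRAM savings)
import torch
from transformers import AutoModelForCausalLM, BitsAndBytesConfig

bnb_config = BitsAndBytesConfig(
    load_in_4bit=True,
    bnb_4bit_quant_type="nf4",  # NF4, the 4-bit data type introduced with QLoRA
    bnb_4bit_use_double_quant=True,
    bnb_4bit_compute_dtype=torch.float16,
)

# 4-bit loading (a 7B model fits within roughly 10 GB of VRAM)
model = AutoModelForCausalLM.from_pretrained(
    "Qwen/Qwen-7B",
    quantization_config=bnb_config,
    device_map="auto",
    trust_remote_code=True,  # Qwen-7B ships custom modeling code
)

2. Batch Invocation and Asynchronous Inference (Higher Throughput)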

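For high-concurrency workloads, batch processing and an asynchronous task queue improve throughput:

# 2.1 Batch text classification (sentiment analysis example)
import torch
from transformers import pipeline

# Initialize the batch classifier.
# Note: bert-base-chinese is a pretrained base model without a fine-tuned
# classification head; substitute a sentiment checkpoint for meaningful labels.
classifier = pipeline(
    "text-classification",
    model="bert-base-chinese",
    device=0 if torch.cuda.is_available() else -1,
    batch_size=16,  # tune to the available VRAM
)

# Data to process
texts = [
    "这款产品体验极佳",  # "This product is a great experience"
    "服务态度太差了",  # "The service attitude was terrible"
    "性价比超高,值得推荐",  # "Great value, worth recommending"
    # ... more texts
]

results = classifier(texts)  # the pipeline batches the inputs internally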
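
The batch_size argument groups inputs so that the model handles several texts per forward pass. A minimal sketch of that grouping in plain Python, with a stand-in function in place of a real model (the names batched and fake_classify are hypothetical):

```python
def batched(items, batch_size):
    """Yield consecutive slices of items, each holding at most batch_size elements."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]

def fake_classify(batch):
    """Stand-in for a model call: returns one result per input text."""
    return [{"text": t, "length": len(t)} for t in batch]

texts = [f"sample text {i}" for i in range(10)]
results = []
calls = 0
for batch in batched(texts, batch_size=4):
    results.extend(fake_classify(batch))  # one simulated forward pass per batch
    calls += 1

print(calls, len(results))
```

Ten inputs with a batch size of 4 need three calls instead of ten. On a GPU, fewer and larger calls are usually where the throughput gain comes from, as long as the batch fits in memory.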

# 2.2 Asynchronous inference (FastAPI + Celery)
from celery import Celery
from fastapi import FastAPI

app = FastAPI()
celery = Celery(
    "model_tasks",
    broker="redis://localhost:6379/0",
    backend="redis://localhost:6379/0",
)

@celery.task
def async_generate(text):
    # Runs in the Celery worker, so tokenizer and model must be loaded
    # in that process (for example as in section 1.1)
    inputs = tokenizer(text, return_tensors="pt").to(model.device)
    outputs = model.generate(**inputs, max_new_tokens=256)
    return tokenizer.decode(outputs[0], skip_special_tokens=True)

@app.post("/generate")
async def generate_text(text: str):
    # Enqueue the task and return immediately
    task = async_generate.delay(text)
    return {"task_id": task.id}

@app.get("/result/{task_id}")
async def get_result(task_id: str):
    task = async_generate.AsyncResult(task_id)
    if task.failed():
        # task.result holds the exception, which is not JSON-serializable
        return {"status": "error", "detail": str(task.result)}
    if task.ready():
        return {"result": task.result}
    return {"status": "processing"}

3. Quantized and Accelerated Invocation (Preferred for Production)

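Use bitsandbytes to cut memory use and ONNX Runtime to lower inference latency:

# 3.1 bitsandbytes 8-bit inference (about half the VRAM of FP16 weights)
import torch
from transformers import AutoModelForCausalLM, BitsAndBytesConfig

# If AutoModelForCausalLM does not support this checkpoint in your
# Transformers version, use the class named on its model card.
model = AutoModelForCausalLM.from_pretrained(
    "mistralai/Voxtral-Mini-3B-2507",
    quantization_config=BitsAndBytesConfig(load_in_8bit=True),
    device_map="auto",
    torch_dtype=torch.float16,
)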
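
As a rough intuition for why 8-bit weights take half the memory of FP16, the sketch below applies simple absmax quantization to a list of floats: each value is stored as one signed 8-bit code plus a shared scale. This is a simplified illustration and not the bitsandbytes algorithm, which additionally keeps outlier features in higher precision; the function names are hypothetical.

```python
def quantize_absmax_int8(values):
    """Map floats to integer codes in [-127, 127] using a single shared scale."""
    absmax = max(abs(v) for v in values)
    scale = absmax / 127 if absmax > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]

weights = [0.12, -0.5, 0.33, 1.27, -1.0]
codes, scale = quantize_absmax_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(codes, max_error)
```

The rounding error per weight is bounded by half the scale, which is why a single large outlier (which inflates the scale) hurts precision for every other value sharing it.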

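# 3.2 ONNX Runtime acceleration (works on CPU and GPU)
import onnxruntime as ort
import torch
from transformers import AutoModelForSequenceClassification, AutoTokenizer

# Export to ONNX (only needed once)
model = AutoModelForSequenceClassification.from_pretrained("bert-base-chinese")
model.eval()
model.config.return_dict = False  # export plain tuples instead of a ModelOutput
tokenizer = AutoTokenizer.from_pretrained("bert-base-chinese")
dummy_input = tokenizer("测试文本", return_tensors="pt")  # "test text"

torch.onnx.export(
    model,
    (dummy_input["input_ids"], dummy_input["attention_mask"]),
    "bert_classifier.onnx",
    opset_version=14,
    input_names=["input_ids", "attention_mask"],
    output_names=["logits"],
    # Let batch size and sequence length vary at inference time; without this
    # the graph only accepts inputs shaped like the dummy input
    dynamic_axes={
        "input_ids": {0: "batch", 1: "sequence"},
        "attention_mask": {0: "batch", 1: "sequence"},
        "logits": {0: "batch"},
    },
)

# ONNX Runtime inference
ort_session = ort.InferenceSession(
    "bert_classifier.onnx",
    providers=["CUDAExecutionProvider", "CPUExecutionProvider"],  # prefer GPU
)
inputs = tokenizer("产品质量很好", return_tensors="np")  # "The product quality is very good"
outputs = ort_session.run(
    None,
    {
        "input_ids": inputs["input_ids"],
        "attention_mask": inputs["attention_mask"],
    },
)

II. Multi-Model Integration: Coordination and Fusion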

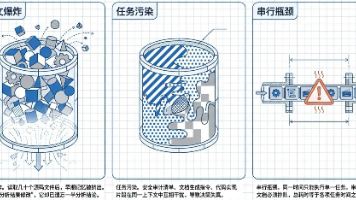

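1. Task-Splitting Integration (Pipeline Pattern)

Break a complex task into subtasks and chain one model per subtask:

# Example: document processing pipeline (OCR → translation → summarization)
import pytesseract
from PIL import Image
from transformers import pipeline

# Initialize the subtask models.
# Note: the "translation" and "summarization" pipelines expect
# sequence-to-sequence checkpoints. The model IDs below are kept from the
# original and may need replacing with compatible ones.
def ocr_model(image_path):
    # OCR; lang="chi_sim" assumes the Simplified Chinese Tesseract data is installed
    return pytesseract.image_to_string(Image.open(image_path), lang="chi_sim")

translation_model = pipeline("translation", model="ByteDance-Seed/Seed-X-PPO-7B")  # translation
summarization_model = pipeline("summarization", model="Qwen/Qwen3-Coder-480B-A35B-Instruct")  # summarization

# Pipeline execution
def document_process_pipeline(image_path, target_lang="en"):
    # 1. Extract text with OCR
    text = ocr_model(image_path)
    # 2. Translate (Chinese → target language); the pipeline argument is tgt_lang
    translated = translation_model(text, src_lang="zh", tgt_lang=target_lang)[0]["translation_text"]
    # 3. Summarize
    summary = summarization_model(translated, max_length=150, min_length=50)[0]["summary_text"]
    return {
        "original_text": text,
        "translated_text": translated,
        "summary": summary,
    }

# Run the pipeline
result = document_process_pipeline("document.png", target_lang="en")

2. Model Fusion (Ensembling for Accuracy)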

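Combine the predictions of several models through weighted voting, stacking, or similar methods:

# Text classification ensemble (three models working together)
import numpy as np
from transformers import pipeline

# Initialize the classifiers. All three must be fine-tuned on the same label
# set, in the same label order, for their score vectors to be comparable.
# The model IDs are kept from the original; check that they resolve on the Hub.
model1 = pipeline("text-classification", model="bert-base-chinese", return_all_scores=True)
model2 = pipeline("text-classification", model="roberta-base-chinese", return_all_scores=True)
model3 = pipeline("text-classification", model="ernie-base", return_all_scores=True)

def ensemble_classify(text, weights=(0.4, 0.3, 0.3)):
    # Per-model class scores
    pred1 = np.array([x["score"] for x in model1(text)[0]])
    pred2 = np.array([x["score"] for x in model2(text)[0]])
    pred3 = np.array([x["score"] for x in model3(text)[0]])
    # Weighted average (soft voting)
    ensemble_pred = pred1 * weights[0] + pred2 * weights[1] + pred3 * weights[2]
    label_id = int(np.argmax(ensemble_pred))
    return {
        "label_id": label_id,  # plain int/float so the result is JSON-serializable
        "confidence": float(ensemble_pred[label_id]),
    }

# Run the ensemble; the text means "Battery life beats expectations and the camera is excellent"
result = ensemble_classify("这款手机续航超预期,拍照效果出色")

3. Cross-Modal Integration (Text + Image + Audio)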

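Combine Diffusers with captioning and speech models to chain modalities:

# Cross-modal generation (text → image → caption → speech)
import numpy as np
import scipy.io.wavfile
import torch
from diffusers import StableDiffusionPipeline
from transformers import pipeline

# Initialize the models. The model IDs are kept from the original; if a
# checkpoint is not compatible with the class or task shown here, use the
# loader named on its model card.
text2img = StableDiffusionPipeline.from_pretrained(
    "neta-art/Neta-Lumina",
    torch_dtype=torch.float16,
).to("cuda")
img2text = pipeline("image-to-text", model="nlpconnect/vit-gpt2-image-captioning")
text2speech = pipeline("text-to-speech", model="bosonai/higgs-audio-v2-generation-3B-base")

# Cross-modal pipeline
def multimodal_pipeline(text_prompt):
    # 1. Text → image
    image = text2img(text_prompt).images[0]
    image.save("generated_image.png")
    # 2. Image → caption
    img_caption = img2text("generated_image.png")[0]["generated_text"]
    # 3. Caption → speech; the pipeline returns a waveform array and its
    # sampling rate, which must be written through a WAV encoder
    speech = text2speech(img_caption)
    scipy.io.wavfile.write(
        "output_audio.wav",
        rate=speech["sampling_rate"],
        data=np.squeeze(speech["audio"]),
    )
    return {
        "image_path": "generated_image.png",
        "image_caption": img_caption,
        "audio_path": "output_audio.wav",
    }

# Run the pipeline; the prompt means "A golden wheat field with windmills in the distance"
result = multimodal_pipeline("一片金色的麦田,远处有风车")

III. System Integration in Practice: From Model to Product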

1. Serving the Model (FastAPI)

Wrap the model in a RESTful API so that multiple clients can call it concurrently:

# Complete model service
import os

# Set the mirror before importing transformers so that it takes effect
os.environ["HF_ENDPOINT"] = "https://hf-mirror.com"

from fastapi import FastAPI
from pydantic import BaseModel
from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

app = FastAPI(title="LLM Inference Service")

# Load the model once at module level so that requests do not reload it
MODEL_ID = "Qwen/Qwen3-235B-A22B-Instruct-2507"
tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
model = AutoModelForCausalLM.from_pretrained(
    MODEL_ID,
    device_map="auto",
    quantization_config=BitsAndBytesConfig(load_in_8bit=True),
    trust_remote_code=True,
)

# Request schema
class GenerateRequest(BaseModel):
    text: str
    max_new_tokens: int = 512
    temperature: float = 0.7

# Response schema
class GenerateResponse(BaseModel):
    result: str
    status: str = "success"

# Text generation endpoint. Declared with def rather than async def so that
# FastAPI runs the blocking generate() call in its thread pool instead of
# stalling the event loop.
@app.post("/generate", response_model=GenerateResponse)
def generate(request: GenerateRequest):
    try:
        inputs = tokenizer(request.text, return_tensors="pt").to(model.device)
        outputs = model.generate(
            **inputs,
            max_new_tokens=request.max_new_tokens,
            temperature=request.temperature,
            top_p=0.95,
            do_sample=True,
        )
        result = tokenizer.decode(outputs[0], skip_special_tokens=True)
        return GenerateResponse(result=result)
    except Exception as e:
        return GenerateResponse(result=str(e), status="error")

# Health check endpoint
@app.get("/health")
async def health_check():
    return {"status": "healthy", "model": "Qwen3-235B-Instruct"}

# Start the service. Each worker process loads its own copy of the model,
# so size --workers to the available memory:
# uvicorn main:app --host 0.0.0.0 --port 8000 --workers 4

2. Distributed Deployment (Accelerate + DeepSpeed)

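For very large models or heavy workloads, distributed frameworks coordinate multiple GPUs or multiple machines:

# Distributed inference script (inference.py). Assumes model (loaded without
# device_map), tokenizer, and a list of prompt strings named prompts already exist.
from accelerate import Accelerator
from accelerate.utils import gather_object

accelerator = Accelerator()
model.to(accelerator.device)  # data parallel: each process holds a full copy
tokenizer.padding_side = "left"  # decoder-only models need left padding for batched generation

# Multi-GPU batch inference: each process receives its own slice of the prompts
results = []
with accelerator.split_between_processes(prompts) as shard:
    for start in range(0, len(shard), 8):
        batch = shard[start:start + 8]
        inputs = tokenizer(batch, return_tensors="pt", padding=True, truncation=True).to(accelerator.device)
        outputs = model.generate(**inputs, max_new_tokens=256)
        results.extend(tokenizer.batch_decode(outputs, skip_special_tokens=True))

# Collect the results from all processes
results = gather_object(results)

# Launch the distributed job
# accelerate launch --config_file accelerate_config.yaml inference.py

3. Edge Device Integration (Quantization + Lightweight Deployment)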

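Adapt to edge scenarios such as ARM devices and embedded systems:

# Edge deployment with a quantized model (INT4, GGUF format)
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

GGUF_REPO = "unsloth/Qwen3-Coder-480B-A35B-Instruct-GGUF"
GGUF_FILE = "qwen3-coder-480b-a35b-instruct.Q4_K_M.gguf"

# Load the GGUF checkpoint (requires the gguf package). Note that Transformers
# dequantizes GGUF weights on load, so the in-memory model is no longer 4-bit;
# a GGUF-native runtime such as llama.cpp keeps the weights quantized.
tokenizer = AutoTokenizer.from_pretrained(GGUF_REPO, gguf_file=GGUF_FILE)
model = AutoModelForCausalLM.from_pretrained(
    GGUF_REPO,
    gguf_file=GGUF_FILE,
    device_map="auto",
    low_cpu_mem_usage=True,
)

# Edge inference (low-power mode)
def edge_inference(text, max_tokens=128):
    inputs = tokenizer(text, return_tensors="pt").to(model.device)
    with torch.no_grad():  # skip gradient tracking to save memory
        outputs = model.generate(
            **inputs,
            max_new_tokens=max_tokens,
            do_sample=False,  # greedy decoding is deterministic, so no temperature is set
        )
    return tokenizer.decode(outputs[0], skip_special_tokens=True)

IV. Key Optimization Strategies: Performance and Stability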

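1. Memory Optimization

- Quantization: 4-bit or 8-bit quantization (bitsandbytes) cuts weight memory by roughly 75% or 50% relative to FP16;

- Gradient checkpointing: gradient_checkpointing=True saves around 30% of VRAM during training or fine-tuning at the cost of recomputation; it has no effect on pure inference;

- Model sharding: device_map="auto" distributes model layers across the available GPUs and CPU;

- Cache release: torch.cuda.empty_cache() returns unused cached memory to the GPU once the tensors that held it have been freed.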
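
The 75% and 50% figures follow directly from the number of bits stored per weight. A back-of-the-envelope estimate, counting weights only (activations, the KV cache, and quantization metadata are ignored, and 1 GB is taken as 10^9 bytes):

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

params_7b = 7e9  # a 7B-parameter model
fp16 = weight_memory_gb(params_7b, 16)
int8 = weight_memory_gb(params_7b, 8)
int4 = weight_memory_gb(params_7b, 4)

for name, gb in [("FP16", fp16), ("8-bit", int8), ("4-bit", int4)]:
    saving = 1 - gb / fp16
    print(f"{name}: {gb:.1f} GB of weights, {saving:.0%} smaller than FP16")
```

Real usage is higher than these numbers because of activations, the KV cache, and framework overhead, so treat the result as a lower bound when sizing hardware.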

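2. Speed Optimization

- Batch inference: increase batch_size as far as VRAM allows; throughput typically improves 2-5x;

- Inference engines: TensorRT or ONNX Runtime can cut latency by 40% or more;

- Model warm-up: run a few inference calls at startup so that the first real request does not pay the cold-start cost;

- Parallelism: multi-threaded preprocessing (tokenization) and multi-GPU parallel inference.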
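
Warm-up can be sketched with a stand-in model whose first call pays a one-time setup cost, much as real engines do for kernel compilation or cache allocation. The class SlowStartModel and its timings are invented for illustration.

```python
import time

class SlowStartModel:
    """Stand-in for an inference engine with a one-time initialization cost."""
    def __init__(self):
        self._initialized = False

    def predict(self, text):
        if not self._initialized:
            time.sleep(0.05)  # simulated lazy setup on the first call
            self._initialized = True
        return text.upper()

def warm_up(model, rounds=3):
    """Run a few throwaway requests so real traffic never hits the cold path."""
    for _ in range(rounds):
        model.predict("warm-up")

def timed_call(model, text):
    """Return the prediction together with its wall-clock latency in seconds."""
    start = time.perf_counter()
    result = model.predict(text)
    return result, time.perf_counter() - start

cold_model = SlowStartModel()
_, cold_latency = timed_call(cold_model, "hello")

warm_model = SlowStartModel()
warm_up(warm_model)
_, warm_latency = timed_call(warm_model, "hello")

print(f"cold: {cold_latency * 1000:.1f} ms, warm: {warm_latency * 1000:.1f} ms")
```

In a web service the warm_up call would typically run in a startup hook, before the process begins accepting requests.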

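3. Stability Optimization

- Timeouts: set an inference timeout so that a slow request cannot block the service indefinitely;

- Rate limiting: throttle the API with Redis or a token-bucket algorithm;

- Monitoring: integrate Prometheus and Grafana to track VRAM, CPU, and inference latency;

- Fault tolerance: retry failed requests automatically and fall back to a backup model.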
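
Two of the items above, rate limiting and fallback to a backup model, can be sketched in plain Python. The token bucket here lives in a single process (a Redis-backed limiter would share its state across workers), and primary and backup are hypothetical stand-ins for real model calls.

```python
import time

class TokenBucket:
    """Allow bursts up to capacity, refilled at refill_rate tokens per second."""
    def __init__(self, capacity, refill_rate, clock=time.monotonic):
        self.capacity = capacity
        self.refill_rate = refill_rate
        self.clock = clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self):
        now = self.clock()
        elapsed = now - self.last
        self.last = now
        # Refill in proportion to elapsed time, never beyond capacity
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

def call_with_fallback(primary, backup, text, retries=2):
    """Try the primary model up to retries times, then degrade to the backup."""
    for _ in range(retries):
        try:
            return primary(text)
        except Exception:
            continue  # a real service would log the error and back off here
    return backup(text)

# Demo with a fake clock so that the behaviour is deterministic
fake_time = [0.0]
bucket = TokenBucket(capacity=2, refill_rate=1.0, clock=lambda: fake_time[0])
decisions = [bucket.allow() for _ in range(3)]  # burst of three requests
fake_time[0] += 1.0                             # one second later
decisions.append(bucket.allow())

def primary(text):
    raise RuntimeError("primary model unavailable")

def backup(text):
    return f"[backup] {text}"

print(decisions, call_with_fallback(primary, backup, "hello"))
```

The third request in the burst is rejected because the bucket is empty, and one second later a single token has been refilled. Injecting the clock keeps the limiter testable without sleeping.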

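V. Common Problems and Solutions

| Problem | Solution |
| --- | --- |
| Slow or failed model downloads | Set HF_ENDPOINT=https://hf-mirror.com; download with the hfd tool |
| Out of VRAM | Enable quantization (load_in_4bit / load_in_8bit), reduce batch_size, enable gradient checkpointing when training |
| High inference latency | Batch requests, accelerate with ONNX, shard the model across multiple GPUs |
| Conflicts between models | Isolate with Docker containers; load models one at a time |
| Edge deployment fails | Convert to GGUF or TFLite, use INT4 quantization, slim down the model architecture |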

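VI. Toolchain and Resources

1. Core Tools

- Model invocation: Hugging Face Transformers, Diffusers, PEFT

- Quantization and acceleration: bitsandbytes, ONNX Runtime, TensorRT

- Deployment frameworks: FastAPI, Celery, Accelerate, DeepSpeed

- Edge deployment and model management: GGUF, TFLite, MLflow

2. Resources in China

- Model mirror: https://hf-mirror.com (fast downloads of Qwen, Kimi, and other models)

- Chinese datasets: CLUE Benchmark (https://github.com/CLUEbenchmark/CLUE)

- Lightweight tooling: PyCLUE (quick integration of Chinese-language tasks)

3. Further Reading

- Hugging Face deployment documentation: https://huggingface.co/docs/inference-endpoints

- Accelerate distributed guide: https://huggingface.co/docs/accelerate

- bitsandbytes repository (QLoRA quantization): https://github.com/TimDettmers/bitsandbytes

- FastAPI tutorial for building model services: https://fastapi.tiangolo.com/tutorial/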
