Model Compression Techniques in Deep Learning: Principles and Practice


背景

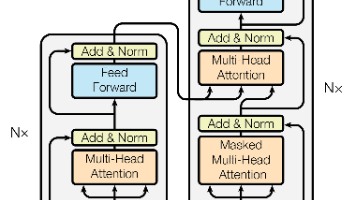

随着深度学习模型的不断增大,模型的部署和推理变得越来越具有挑战性。模型压缩技术旨在减小模型的大小和计算复杂度,同时保持模型的性能,使得深度学习模型能够在资源受限的设备上高效运行。本文将深入探讨模型压缩的原理,介绍常用的模型压缩技术,并提供实践案例。

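Basic Principles of Model Compression

1. Goals of model compression

- Smaller model size: reduce the storage the model requires

- Faster inference: speed up the forward pass

- Lower memory usage: reduce memory consumption at run time

- Lower energy consumption: reduce the energy the model uses at run time

2. Evaluation metrics for model compression

- Compression ratio: the original model size divided by the compressed model size

- Accuracy loss: the difference in task performance between the compressed and the original model

- Inference speed: the inference time of the compressed model

- Memory usage: the memory consumed by the compressed model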
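The metrics above reduce to a few simple ratios and differences. The following is a minimal sketch in plain Python; the `CompressionReport` class, its field names, and the example numbers are assumptions made for illustration and are not measurements from this article.

```python
from dataclasses import dataclass


@dataclass
class CompressionReport:
    """Hypothetical container for before/after measurements of one model."""
    original_size_mb: float
    compressed_size_mb: float
    original_accuracy: float      # fraction in [0, 1]
    compressed_accuracy: float    # fraction in [0, 1]
    original_latency_ms: float
    compressed_latency_ms: float

    @property
    def compression_ratio(self) -> float:
        # Original size divided by compressed size (larger is better)
        return self.original_size_mb / self.compressed_size_mb

    @property
    def accuracy_drop(self) -> float:
        # Positive values mean the compressed model is less accurate
        return self.original_accuracy - self.compressed_accuracy

    @property
    def speedup(self) -> float:
        # Original latency divided by compressed latency (larger is better)
        return self.original_latency_ms / self.compressed_latency_ms


# Made-up numbers, used only to exercise the formulas
report = CompressionReport(40.0, 10.0, 0.92, 0.91, 20.0, 8.0)
print(f"Compression ratio: {report.compression_ratio:.2f}x")
print(f"Accuracy drop: {report.accuracy_drop * 100:.2f} percentage points")
print(f"Speedup: {report.speedup:.2f}x")
```

Reporting all of these together makes the trade-off visible: a high compression ratio means little if the accuracy drop is unacceptable for the task.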

Common Model Compression Techniques

1. Model Pruning

Model pruning removes unimportant weights or neurons from a model, reducing its size and computational cost.
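Before turning to a framework, the core idea of magnitude-based pruning can be shown on a plain list of numbers. This is a minimal sketch; the function name `magnitude_prune` and the sample weights are illustrative assumptions, and "importance" is taken to mean absolute value, which is one common criterion among several.

```python
def magnitude_prune(weights, prune_ratio):
    """Zero the `prune_ratio` fraction of weights with the smallest magnitude.

    Returns the pruned weights and a 0/1 mask of the same length.
    """
    k = int(len(weights) * prune_ratio)  # number of weights to remove
    # Indices ordered by absolute value, smallest first
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned_idx = set(order[:k])
    mask = [0 if i in pruned_idx else 1 for i in range(len(weights))]
    pruned = [w if m else 0.0 for w, m in zip(weights, mask)]
    return pruned, mask


weights = [0.8, -0.05, 0.3, -0.6, 0.01, 0.2]
pruned, mask = magnitude_prune(weights, prune_ratio=0.5)
sparsity = mask.count(0) / len(mask)
print(pruned, mask, sparsity)
```

The mask is worth keeping: during fine-tuning it has to be re-applied after every update, otherwise the pruned positions drift away from zero again.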

import io

import torch
import torch.nn as nn


# A simple fully connected model
class SimpleModel(nn.Module):
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(784, 1024)
        self.fc2 = nn.Linear(1024, 512)
        self.fc3 = nn.Linear(512, 10)

    def forward(self, x):
        x = torch.relu(self.fc1(x))
        x = torch.relu(self.fc2(x))
        return self.fc3(x)


def serialized_size_mb(module):
    """Size in MB of the module's state_dict when serialized with torch.save."""
    buffer = io.BytesIO()
    torch.save(module.state_dict(), buffer)
    return buffer.getbuffer().nbytes / 1024 / 1024


# Initialize the model (training is omitted; assume it is already trained)
model = SimpleModel()

# Measure the size BEFORE pruning. Measuring both sizes after pruning
# would compare the pruned model with itself.
original_size = serialized_size_mb(model)

# Magnitude pruning of fc1
# 1. Absolute values of the weights
weights = torch.abs(model.fc1.weight.data)

# 2. Pruning threshold: the k-th smallest magnitude
prune_ratio = 0.5  # prune 50% of the weights
k = int(weights.numel() * prune_ratio)
threshold = torch.kthvalue(weights.view(-1), k).values

# 3. Zero every weight whose magnitude is at or below the threshold
mask = weights > threshold
model.fc1.weight.data *= mask.float()

# 4. Fine-tune the model (omitted). Re-apply the mask after each optimizer
#    step, otherwise the pruned weights become non-zero again.

# Report sparsity and serialized size
sparsity = 1.0 - mask.float().mean().item()
pruned_size = serialized_size_mb(model)
print(f"fc1 sparsity: {sparsity:.2%}")
print(f"Original model size: {original_size:.2f} MB")
print(f"Pruned model size: {pruned_size:.2f} MB")
# The zeros are still stored as dense float32 values, so this ratio stays
# close to 1.00x. The file only shrinks with sparse storage or with
# structured pruning that removes whole neurons or channels.
print(f"Compression ratio: {original_size / pruned_size:.2f}x")

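2. Model Quantization

Model quantization lowers the numerical precision of weights and activations (for example, from 32-bit floats to 8-bit integers), reducing the model's size and computational cost.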
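To make "lowering precision" concrete, here is a minimal sketch of affine (asymmetric) int8 quantization in plain Python. The function names and the sample values are assumptions for illustration; real libraries add per-channel scales, calibration, and optimized integer kernels on top of this arithmetic.

```python
def quantization_params(values, qmin=-128, qmax=127):
    """Compute scale and zero point so that [min, max] maps onto [qmin, qmax]."""
    lo = min(min(values), 0.0)  # the range must contain 0 so that 0.0 is exact
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0  # avoid a zero scale
    zero_point = round(qmin - lo / scale)
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to integers in [qmin, qmax]."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(q_values, scale, zero_point):
    """Map integers back to approximate floats."""
    return [(q - zero_point) * scale for q in q_values]


values = [-0.4, 0.0, 0.1, 0.75, 1.6]
scale, zero_point = quantization_params(values)
q_values = quantize(values, scale, zero_point)
restored = dequantize(q_values, scale, zero_point)
max_error = max(abs(v - r) for v, r in zip(values, restored))
print(q_values, f"scale={scale:.6f}", f"max error={max_error:.6f}")
```

Each value is stored in one byte instead of four, and the rounding error of any in-range value is bounded by half the scale. That bound is why a wide value range (large scale) costs more accuracy than a narrow one.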

import io

import torch
import torch.nn as nn


# A simple fully connected model
class SimpleModel(nn.Module):
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(784, 1024)
        self.fc2 = nn.Linear(1024, 512)
        self.fc3 = nn.Linear(512, 10)

    def forward(self, x):
        x = torch.relu(self.fc1(x))
        x = torch.relu(self.fc2(x))
        return self.fc3(x)


def serialized_size_mb(module):
    """Size in MB of the module's state_dict when serialized with torch.save."""
    buffer = io.BytesIO()
    torch.save(module.state_dict(), buffer)
    return buffer.getbuffer().nbytes / 1024 / 1024


# Initialize the model (training is omitted; assume it is already trained)
model = SimpleModel()
model.eval()  # post-training quantization expects a model in eval mode

# Dynamic quantization: weights are stored as int8, activations are
# quantized on the fly at inference time
quantized_model = torch.quantization.quantize_dynamic(
    model,
    {nn.Linear},
    dtype=torch.qint8
)

original_size = serialized_size_mb(model)
quantized_size = serialized_size_mb(quantized_model)
print(f"Original model size: {original_size:.2f} MB")
print(f"Quantized model size: {quantized_size:.2f} MB")
print(f"Compression ratio: {original_size / quantized_size:.2f}x")

# Static quantization (requires calibration)
# 1. Calibration data (random here; use representative real inputs in practice)
calibration_data = torch.randn(100, 784)

# 2. Wrap the model so that inputs are quantized and outputs dequantized.
#    Without QuantStub/DeQuantStub the converted model cannot consume
#    float inputs.
static_model = torch.quantization.QuantWrapper(model)
static_model.eval()
# 'fbgemm' targets x86 CPUs; 'qnnpack' is the usual choice on ARM
static_model.qconfig = torch.quantization.get_default_qconfig('fbgemm')

# 3. Prepare the model (inserts observers; returns a copy by default)
prepared_model = torch.quantization.prepare(static_model)

# 4. Calibrate: run data through the model so observers record value ranges
with torch.no_grad():
    for i in range(10):
        prepared_model(calibration_data[i * 10:(i + 1) * 10])

# 5. Convert to a quantized model
quantized_model_static = torch.quantization.convert(prepared_model)

quantized_static_size = serialized_size_mb(quantized_model_static)
print(f"Static quantized model size: {quantized_static_size:.2f} MB")
print(f"Compression ratio: {original_size / quantized_static_size:.2f}x")

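3. Knowledge Distillation

Knowledge distillation transfers the knowledge of a large teacher model to a small student model, so that the student performs better than it would if trained on the labels alone.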
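The "knowledge" being transferred is usually the teacher's softened output distribution. The sketch below writes out one common form of the distillation loss in plain Python, assuming a temperature-scaled KL term mixed with an ordinary cross-entropy term; the function names, the weighting `alpha`, and the sample logits are illustrative assumptions.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature gives a flatter distribution."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, label,
                      temperature=4.0, alpha=0.5):
    """alpha * T^2 * KL(teacher || student) + (1 - alpha) * cross-entropy."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(p * math.log(p / q) for p, q in zip(p_teacher, p_student) if p > 0)
    ce = -math.log(softmax(student_logits)[label])
    # The T^2 factor keeps the soft-label gradients on a scale comparable
    # to the hard-label term when the temperature changes
    return alpha * temperature ** 2 * kl + (1 - alpha) * ce


teacher_logits = [6.0, 2.0, 1.0]
student_logits = [3.0, 2.5, 0.5]
print("teacher probs at T=1:", softmax(teacher_logits, 1.0))
print("teacher probs at T=4:", softmax(teacher_logits, 4.0))
print("loss:", distillation_loss(student_logits, teacher_logits, label=0))
```

At temperature 1 the teacher's distribution is nearly one-hot; at a higher temperature the relative probabilities of the wrong classes become visible, and that is the extra signal the student learns from.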

import io

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim


# Teacher model (the larger network)
class TeacherModel(nn.Module):
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(784, 2048)
        self.fc2 = nn.Linear(2048, 1024)
        self.fc3 = nn.Linear(1024, 512)
        self.fc4 = nn.Linear(512, 10)

    def forward(self, x):
        x = torch.relu(self.fc1(x))
        x = torch.relu(self.fc2(x))
        x = torch.relu(self.fc3(x))
        return self.fc4(x)


# Student model (the smaller network)
class StudentModel(nn.Module):
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(784, 512)
        self.fc2 = nn.Linear(512, 256)
        self.fc3 = nn.Linear(256, 10)

    def forward(self, x):
        x = torch.relu(self.fc1(x))
        x = torch.relu(self.fc2(x))
        return self.fc3(x)


def serialized_size_mb(module):
    """Size in MB of the module's state_dict when serialized with torch.save."""
    buffer = io.BytesIO()
    torch.save(module.state_dict(), buffer)
    return buffer.getbuffer().nbytes / 1024 / 1024


# Initialize the models
teacher_model = TeacherModel()
student_model = StudentModel()

# Training of the teacher is omitted; assume it is already trained
teacher_model.eval()

# reduction='batchmean' matches the definition of KL divergence;
# the default 'mean' averages over every element instead
kd_criterion = nn.KLDivLoss(reduction='batchmean')
ce_criterion = nn.CrossEntropyLoss()
optimizer = optim.Adam(student_model.parameters(), lr=0.001)
temperature = 10.0  # distillation temperature
alpha = 0.5  # weight of the soft-label term (an assumed value; tune per task)

# Simulated training data
train_data = torch.randn(1000, 784)
train_labels = torch.randint(0, 10, (1000,))
batch_size = 32

# Train the student model
for epoch in range(10):
    running_loss = 0.0
    num_batches = 0
    for i in range(0, len(train_data), batch_size):
        batch_data = train_data[i:i + batch_size]
        batch_labels = train_labels[i:i + batch_size]

        # Teacher output (soft labels)
        with torch.no_grad():
            teacher_output = teacher_model(batch_data)
            soft_labels = F.softmax(teacher_output / temperature, dim=1)

        # Student output
        student_output = student_model(batch_data)
        soft_preds = F.log_softmax(student_output / temperature, dim=1)

        # Soft-label loss, scaled by T^2 so its gradients stay comparable
        # to the hard-label cross-entropy term it is mixed with
        kd_loss = kd_criterion(soft_preds, soft_labels) * temperature ** 2
        ce_loss = ce_criterion(student_output, batch_labels)
        loss = alpha * kd_loss + (1 - alpha) * ce_loss

        # Backpropagation
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        num_batches += 1

    print(f"Epoch {epoch + 1}, Loss: {running_loss / num_batches:.4f}")

# Compare the serialized sizes of teacher and student
teacher_size = serialized_size_mb(teacher_model)
student_size = serialized_size_mb(student_model)
print(f"Teacher model size: {teacher_size:.2f} MB")
print(f"Student model size: {student_size:.2f} MB")
print(f"Compression ratio: {teacher_size / student_size:.2f}x")

4. Neural Architecture Search (NAS)

Neural architecture search automatically explores a space of candidate architectures to find a smaller, more efficient model.

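# Note: a full NAS implementation is complex; this is a heavily simplified example
import math

import torch
import torch.nn as nn
import torch.optim as optim
from torch.utils.data import DataLoader, TensorDataset


# Search space: a three-layer MLP whose hidden size is the searched parameter
class SearchSpace(nn.Module):
    def __init__(self, hidden_size=64):
        super().__init__()
        self.fc1 = nn.Linear(784, hidden_size)
        self.fc2 = nn.Linear(hidden_size, hidden_size)
        self.fc3 = nn.Linear(hidden_size, 10)

    def forward(self, x):
        x = torch.relu(self.fc1(x))
        x = torch.relu(self.fc2(x))
        return self.fc3(x)


# Controller (proposes model structures)
class Controller(nn.Module):
    def __init__(self):
        super().__init__()
        self.rnn = nn.LSTM(input_size=1, hidden_size=64, num_layers=1, batch_first=True)
        self.fc = nn.Linear(64, 1)  # outputs the mean of log(hidden size)
        # Start near log(64) so early samples fall inside the [16, 128] range
        nn.init.constant_(self.fc.bias, math.log(64))

    def forward(self, x):
        out, _ = self.rnn(x)
        return self.fc(out[:, -1, :])


# Initialize the controller
controller = Controller()
controller_optimizer = optim.Adam(controller.parameters(), lr=0.001)

# Simulated training data
train_data = torch.randn(1000, 784)
train_labels = torch.randint(0, 10, (1000,))
train_dataset = TensorDataset(train_data, train_labels)
train_loader = DataLoader(train_dataset, batch_size=32, shuffle=True)


# Model evaluation function
def evaluate_model(model, data_loader):
    model.eval()
    correct = 0
    total = 0
    with torch.no_grad():
        for inputs, labels in data_loader:
            outputs = model(inputs)
            _, predicted = torch.max(outputs, 1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    return correct / total


# Search loop
baseline = 0.0  # moving average of rewards, reduces gradient variance
best_accuracy, best_hidden_size = 0.0, None
for step in range(10):
    # Sample a structure: the controller gives the mean of a Gaussian over
    # log(hidden size). Sampling is what makes the policy gradient below valid.
    controller_input = torch.randn(1, 5, 1)  # random input
    mean = controller(controller_input).squeeze()
    dist = torch.distributions.Normal(mean, 0.5)
    sample = dist.sample()
    hidden_size = int(math.exp(min(sample.item(), 10.0)))
    hidden_size = max(16, min(hidden_size, 128))  # clamp the hidden size

    # Build the candidate model
    model = SearchSpace(hidden_size=hidden_size)
    optimizer = optim.Adam(model.parameters(), lr=0.001)
    criterion = nn.CrossEntropyLoss()

    # Train the candidate
    for epoch in range(5):
        model.train()
        for inputs, labels in train_loader:
            optimizer.zero_grad()
            outputs = model(inputs)
            loss = criterion(outputs, labels)
            loss.backward()
            optimizer.step()

    # Evaluate the candidate (on the training data here for brevity;
    # use a held-out validation set in practice)
    accuracy = evaluate_model(model, train_loader)

    # Reward for the controller (accuracy)
    reward = accuracy

    # REINFORCE update: -(reward - baseline) * log-probability of the sample.
    # Multiplying the reward by the raw controller output, as a naive version
    # would, only pushes the output upward regardless of the result.
    controller_loss = -(reward - baseline) * dist.log_prob(sample)
    controller_optimizer.zero_grad()
    controller_loss.backward()
    controller_optimizer.step()
    baseline = 0.9 * baseline + 0.1 * reward

    if accuracy > best_accuracy:
        best_accuracy, best_hidden_size = accuracy, hidden_size

    print(f"Step {step + 1}, Hidden size: {hidden_size}, Accuracy: {accuracy:.4f}")

print(f"Best hidden size: {best_hidden_size}, Accuracy: {best_accuracy:.4f}")

# Saving the best model is omitted here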

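Performance Comparison of Model Compression Techniques

The figures below are rough, indicative ranges. Actual results depend on the model, the task, and the target hardware. For unstructured pruning in particular, size and memory savings only materialize with sparse storage or with structured pruning.

| Technique | Compression ratio | Accuracy loss | Inference speedup | Memory reduction |
|---|---|---|---|---|
| Pruning | 2-10x | <1% | 1.5-3x | 2-10x |
| 8-bit quantization | 4x | <1% | 2-4x | 4x |
| 4-bit quantization | 8x | 1-3% | 4-8x | 8x |
| Knowledge distillation | 2-5x | <1% | 1.5-3x | 2-5x |
| NAS | 2-10x | <1% | 2-5x | 2-10x |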
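The 4x and 8x compression ratios listed for quantization follow directly from the bit width, relative to 32-bit floats. As a quick worked check, the sketch below counts the parameters of the 784-1024-512-10 fully connected network used in the earlier examples and estimates its weight storage at different precisions. The helper names are illustrative, and the estimate ignores per-tensor scales, zero points, and file-format overhead.

```python
def linear_params(in_features, out_features):
    """Weights plus biases of one fully connected layer."""
    return in_features * out_features + out_features


def size_mb(num_params, bits_per_param):
    """Storage needed for the parameters alone, in MB."""
    return num_params * bits_per_param / 8 / 1024 / 1024


layers = [(784, 1024), (1024, 512), (512, 10)]
num_params = sum(linear_params(i, o) for i, o in layers)
print(f"Parameters: {num_params:,}")

fp32_size = size_mb(num_params, 32)
for bits in (32, 8, 4):
    size = size_mb(num_params, bits)
    print(f"{bits:>2}-bit: {size:.2f} MB ({fp32_size / size:.0f}x smaller than fp32)")
```

Measured file sizes will be somewhat larger than these estimates, because quantized checkpoints also store quantization parameters and some layers or biases may stay in floating point.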

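Best Practices for Model Compression

1. Choosing a compression technique

- Pruning: applicable to most models, especially those with many fully connected layers

- Quantization: suited to models deployed on mobile or edge devices

- Knowledge distillation: suited to scenarios where high accuracy must be preserved

- NAS: suited to scenarios with enough compute available for the search

2. Compression workflow

- Train the original model: obtain a base model that performs well

- Choose a compression technique: pick one that fits the deployment environment and performance requirements

- Apply compression: run the chosen technique

- Fine-tune: fine-tune the compressed model to recover accuracy

- Evaluate: measure the compressed model's performance on the test set

3. Things to watch for

- Balancing compression ratio and accuracy: a higher compression ratio usually means a larger accuracy loss

- Hardware compatibility: some techniques perform better on specific hardware

- Training cost: some techniques, such as NAS, can require large amounts of compute

- Deployment tool support: make sure the deployment toolchain handles the compressed model correctly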

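Code Optimization Tips

- Performance optimization:

  - Use a dedicated model compression library (such as PyTorch's quantization module)

  - Use hardware acceleration (such as a GPU) for compression and fine-tuning

- Memory optimization:

  - Run the compression process in batches

  - Use generators to reduce memory usage

- Effect optimization:

  - Combine several compression techniques (for example, prune first, then quantize)

  - Tune the compression parameters to the model type and deployment environment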
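How much pruning followed by quantization saves depends on how the zeros are stored. The following back-of-the-envelope sketch compares dense storage with a CSR-style sparse layout for a single 1024 x 784 weight matrix. The function names, the 4-byte index width, and the choice of CSR are assumptions for illustration, not a description of any particular runtime.

```python
def dense_bytes(rows, cols, bytes_per_value):
    """Every element is stored, including zeros."""
    return rows * cols * bytes_per_value


def csr_bytes(rows, cols, sparsity, bytes_per_value, bytes_per_index=4):
    """CSR-style layout: non-zero values + column indices + row pointers."""
    nnz = round(rows * cols * (1.0 - sparsity))
    return nnz * (bytes_per_value + bytes_per_index) + (rows + 1) * bytes_per_index


rows, cols = 1024, 784
for sparsity in (0.5, 0.9):
    for name, width in (("fp32", 4), ("int8", 1)):
        dense = dense_bytes(rows, cols, width)
        sparse = csr_bytes(rows, cols, sparsity, width)
        print(f"sparsity={sparsity:.0%} {name}: "
              f"dense={dense / 1024:.0f} KB, csr={sparse / 1024:.0f} KB")
```

Under these assumptions, 50% unstructured sparsity does not pay off in CSR form because each surviving value carries a 4-byte index, while 90% sparsity does. This is one reason structured pruning, which removes whole rows or channels and keeps the tensors dense, is often easier to combine with quantization.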

Case Study: Deploying a Model on Mobile Devices

import io

import torch
import torch.nn as nn
import torch.nn.utils.prune as prune
import torch.optim as optim
from torch.utils.data import DataLoader
import torchvision
import torchvision.transforms as transforms

# Load the CIFAR-10 dataset
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))
])
trainset = torchvision.datasets.CIFAR10(root='./data', train=True, download=True, transform=transform)
trainloader = DataLoader(trainset, batch_size=128, shuffle=True)
testset = torchvision.datasets.CIFAR10(root='./data', train=False, download=True, transform=transform)
testloader = DataLoader(testset, batch_size=128, shuffle=False)


# The original (uncompressed) model
class OriginalModel(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(3, 64, 3, padding=1)
        self.conv2 = nn.Conv2d(64, 128, 3, padding=1)
        self.conv3 = nn.Conv2d(128, 256, 3, padding=1)
        self.pool = nn.MaxPool2d(2, 2)
        self.fc1 = nn.Linear(256 * 4 * 4, 1024)
        self.fc2 = nn.Linear(1024, 512)
        self.fc3 = nn.Linear(512, 10)

    def forward(self, x):
        x = self.pool(torch.relu(self.conv1(x)))
        x = self.pool(torch.relu(self.conv2(x)))
        x = self.pool(torch.relu(self.conv3(x)))
        x = torch.flatten(x, 1)
        x = torch.relu(self.fc1(x))
        x = torch.relu(self.fc2(x))
        return self.fc3(x)


def train(model, loader, optimizer, criterion, device, epochs, tag):
    """Train for `epochs` epochs and print the mean loss of each epoch."""
    model.train()
    for epoch in range(epochs):
        running_loss = 0.0
        for inputs, labels in loader:
            inputs, labels = inputs.to(device), labels.to(device)
            optimizer.zero_grad()
            loss = criterion(model(inputs), labels)
            loss.backward()
            optimizer.step()
            running_loss += loss.item()
        print(f"{tag} {epoch + 1}, Loss: {running_loss / len(loader):.4f}")


def evaluate(model, loader, device):
    """Return top-1 accuracy in percent."""
    model.eval()
    correct = 0
    total = 0
    with torch.no_grad():
        for images, labels in loader:
            images, labels = images.to(device), labels.to(device)
            _, predicted = torch.max(model(images), 1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    return 100 * correct / total


def serialized_size_mb(module):
    """Size in MB of the module's state_dict when serialized with torch.save."""
    buffer = io.BytesIO()
    torch.save(module.state_dict(), buffer)
    return buffer.getbuffer().nbytes / 1024 / 1024


# Train the original model
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
model = OriginalModel().to(device)
criterion = nn.CrossEntropyLoss()
optimizer = optim.Adam(model.parameters(), lr=0.001)
train(model, trainloader, optimizer, criterion, device, epochs=10, tag="Epoch")

# Evaluate and measure the original model before it is modified
print(f"Original model accuracy: {evaluate(model, testloader, device):.2f}%")
original_size = serialized_size_mb(model)

# Pruning: 50% of each convolutional layer, 70% of each fully connected layer.
# torch.nn.utils.prune keeps a mask on each layer, so pruned weights stay
# at zero during fine-tuning.
for module in model.modules():
    if isinstance(module, nn.Conv2d):
        prune.l1_unstructured(module, name='weight', amount=0.5)
    elif isinstance(module, nn.Linear):
        prune.l1_unstructured(module, name='weight', amount=0.7)

# Fine-tune the pruned model
optimizer = optim.Adam(model.parameters(), lr=0.0001)
train(model, trainloader, optimizer, criterion, device, epochs=5, tag="Fine-tuning epoch")

# Make the pruning permanent (folds each mask into its weight tensor)
for module in model.modules():
    if isinstance(module, (nn.Conv2d, nn.Linear)):
        prune.remove(module, 'weight')

# Evaluate the pruned model
print(f"Pruned model accuracy: {evaluate(model, testloader, device):.2f}%")
pruned_size = serialized_size_mb(model)

# Quantization. Dynamic quantization runs on the CPU and covers nn.Linear
# (and recurrent layers) but not nn.Conv2d; quantizing the convolutions
# requires static quantization.
model.cpu()
model.eval()
quantized_model = torch.quantization.quantize_dynamic(
    model,
    {nn.Linear},
    dtype=torch.qint8
)

# Evaluate the quantized model
cpu = torch.device('cpu')
print(f"Quantized model accuracy: {evaluate(quantized_model, testloader, cpu):.2f}%")
quantized_size = serialized_size_mb(quantized_model)

# Compare sizes. The pruned model is stored densely, so its size matches
# the original; the reduction comes from quantization.
print(f"Original model size: {original_size:.2f} MB")
print(f"Pruned model size: {pruned_size:.2f} MB")
print(f"Quantized model size: {quantized_size:.2f} MB")

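Conclusion

Model compression is a key part of deploying deep learning models. By shrinking the model, speeding up inference, and lowering memory usage, it lets models run efficiently on resource-constrained devices. This article introduced several common techniques (pruning, quantization, knowledge distillation, and neural architecture search) and worked through a practical case.

In practice, choose the technique according to the deployment environment and performance requirements, and combine several methods where that gives better results. Always measure the compressed model's performance to make sure compression has not caused a serious loss of accuracy.

Used sensibly, model compression makes it possible to build more efficient, lighter deep learning models that serve a wide range of applications, particularly on mobile devices, edge devices, and other resource-constrained environments.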
