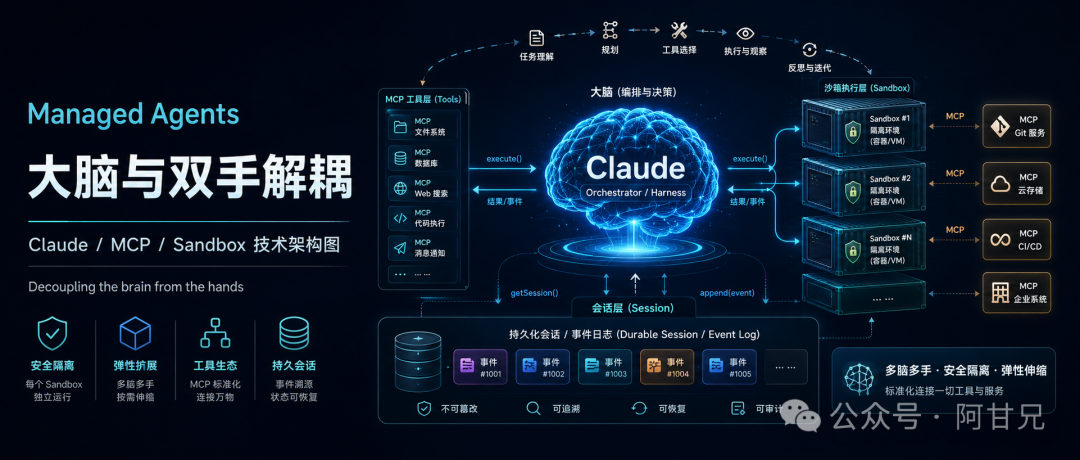

Claude’s Next-Generation Agent Architecture: Decoupling the Brain from the Hands (Translation)

Original article: https://www.anthropic.com/engineering/managed-agents

Harnesses encode assumptions that go stale as models improve. Managed Agents—our hosted service for long-horizon agent work—is built around interfaces that stay stable as harnesses change.

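Traditional agent harnesses tend to build in many assumptions about what the model can do, and those assumptions quickly stop holding as models grow stronger. Anthropic’s Managed Agents targets this problem for long-horizon agent tasks: the system is designed around a set of stable interfaces, so the harness underneath can keep evolving while the capabilities exposed above it stay stable.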

A running topic on the Engineering Blog is how to build effective agents and design harnesses for long-running work. A common thread across this work is that harnesses encode assumptions about what Claude can’t do on its own. However, those assumptions need to be frequently questioned because they can go stale as models improve.

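A recurring topic on our engineering blog is how to build effective agents and how to design a suitable harness (the orchestration framework) for long-running tasks.

What this work has in common is that harnesses are often built on assumptions about what Claude cannot yet do on its own. As model capabilities improve, those assumptions can go stale quickly, so they have to be re-examined continually.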

As just one example, in prior work we found that Claude Sonnet 4.5 would wrap up tasks prematurely as it sensed its context limit approaching—a behavior sometimes called “context anxiety.” We addressed this by adding context resets to the harness. But when we used the same harness on Claude Opus 4.5, we found that the behavior was gone. The resets had become dead weight.

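For example, in earlier work we found that Claude Sonnet 4.5 tended to wrap up tasks early once it sensed its context window was about to run out, a behavior sometimes called “context anxiety.” To address it, we added a context reset mechanism to the harness. But when we later ran the same harness with Claude Opus 4.5, the behavior was gone. The reset logic that had been added as a fix had become dead weight.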

We expect harnesses to continue evolving. So we built Managed Agents: a hosted service in the Claude Platform that runs long-horizon agents on your behalf through a small set of interfaces meant to outlast any particular implementation—including the ones we run today.

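We expect harnesses to keep evolving, so we built Managed Agents: a hosted agent service that runs on the Claude Platform.

It runs long-horizon agent tasks for users through a small, stable set of interfaces. These interfaces are not designed to serve any one implementation; they are meant to outlast every particular implementation, including the ones we run today.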

Building Managed Agents meant solving an old problem in computing: how to design a system for “programs as yet unthought of.” Decades ago, operating systems solved this problem by virtualizing hardware into abstractions—process, file—general enough for programs that didn’t exist yet. The abstractions outlasted the hardware. The read() command is agnostic as to whether it’s accessing a disk pack from the 1970s or a modern SSD. The abstractions on top stayed stable while the implementations underneath changed freely.

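Building Managed Agents meant tackling a classic problem in computing: how do you design a system ahead of time for programs that do not exist yet?

Decades ago, operating systems solved this problem through abstraction. They virtualized the underlying hardware into general-purpose interfaces such as process and file. These abstractions were general enough to accommodate entirely new kinds of programs, and it was the abstraction layer, not the hardware, that endured. The read() system call, for instance, does not care whether it is reading from a 1970s disk pack or a modern SSD. The implementation underneath can keep changing while the interface on top stays stable.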

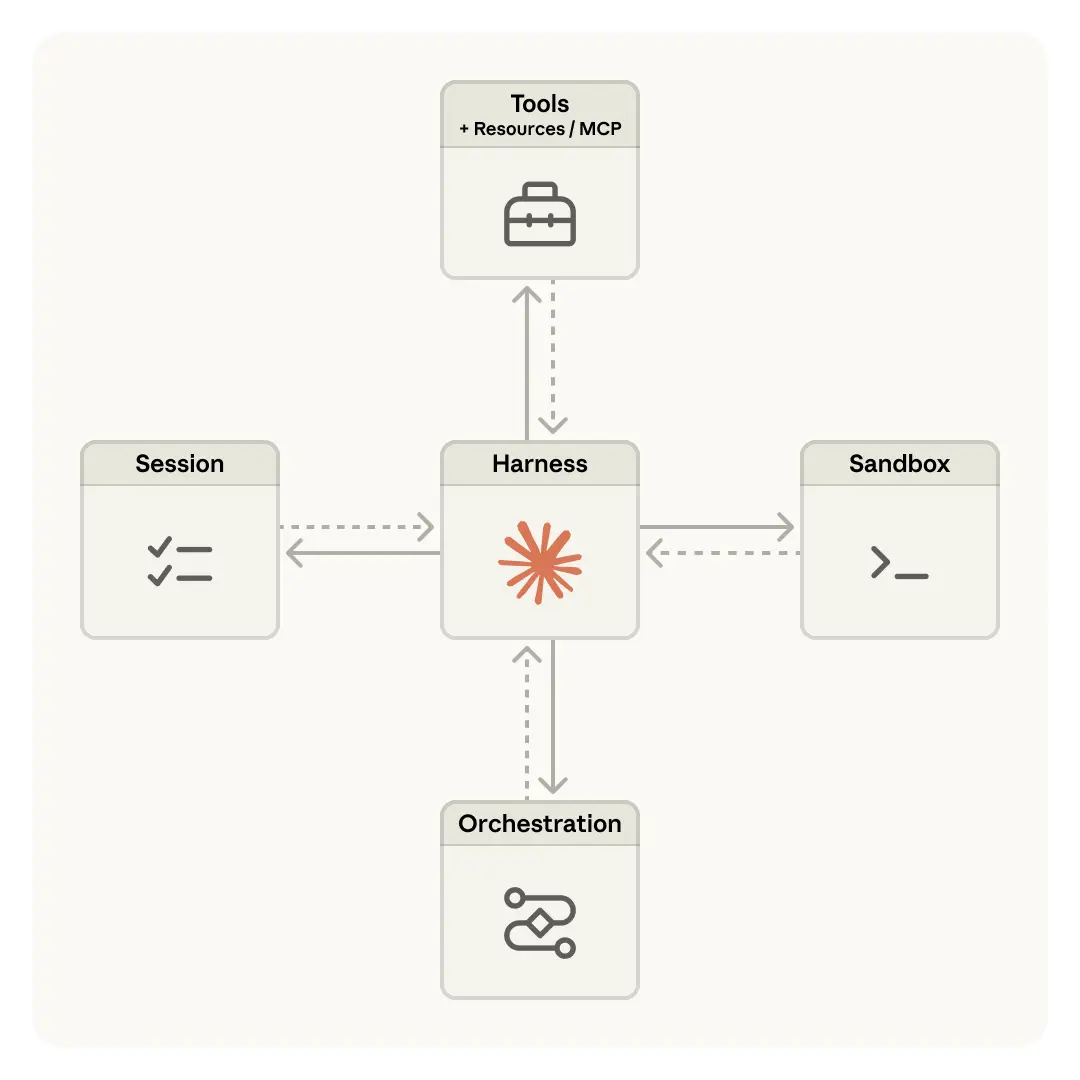

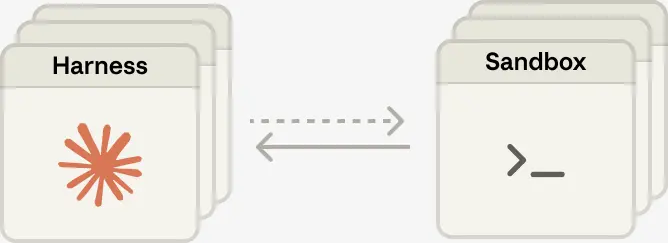

Managed Agents follow the same pattern. We virtualized the components of an agent: a session (the append-only log of everything that happened), a harness (the loop that calls Claude and routes Claude’s tool calls to the relevant infrastructure), and a sandbox (an execution environment where Claude can run code and edit files). This allows the implementation of each to be swapped without disturbing the others. We’re opinionated about the shape of these interfaces, not about what runs behind them.

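Managed Agents follows the same design approach. We virtualized the components of an agent into three abstractions:

- Session: an append-only log that records everything that happened

- Harness: the loop that calls Claude and routes Claude’s tool calls to the relevant infrastructure

- Sandbox: the execution environment where Claude runs code and edits files

As a result, each component can be replaced or upgraded independently without disturbing the others. What we hold firm opinions about is the shape of these interfaces, not the implementation running behind them.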
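The three components can be pictured as three small interfaces. The sketch below is only an illustration under stated assumptions: the class names, the event dictionaries, and the `EchoSandbox` stand-in are hypothetical and are not taken from the Managed Agents API. It shows one harness step that routes a tool call to a sandbox and records both sides in an append-only session.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Protocol


class Sandbox(Protocol):
    """Hands: anything that can execute a named tool call."""

    def execute(self, name: str, input: str) -> str: ...


@dataclass
class Session:
    """Append-only log of everything that happened (illustrative shape)."""

    events: List[dict] = field(default_factory=list)

    def emit_event(self, event: dict) -> None:
        # Append only: this sketch offers no update or delete.
        self.events.append(event)

    def get_events(self, start: int = 0, end: Optional[int] = None) -> List[dict]:
        return self.events[start:end]


class EchoSandbox:
    """Hypothetical stand-in sandbox used only for this illustration."""

    def execute(self, name: str, input: str) -> str:
        return f"{name}:{input}"


def run_step(session: Session, sandbox: Sandbox, name: str, input: str) -> str:
    """One harness step: route a tool call and log the call and its result."""
    session.emit_event({"type": "tool_call", "name": name, "input": input})
    result = sandbox.execute(name, input)
    session.emit_event({"type": "tool_result", "output": result})
    return result
```

Because `run_step` depends only on the two interfaces, either the sandbox or the session store could be swapped for another implementation without changing the harness code.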

Don’t adopt a pet

We started by placing all agent components into a single container, which meant the session, agent harness, and sandbox all shared an environment. There were benefits to this approach, including that file edits are direct syscalls, and there were no service boundaries to design.

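At first, we ran all agent components in a single container, so the session, agent harness, and sandbox shared one environment.

This approach had real benefits. File edits were direct system calls (syscalls) with no remote communication involved, and with everything in one container there were no service boundaries to design.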

But by coupling everything into one container, we ran into an old infrastructure problem: we’d adopted a pet. In the pets-vs-cattle analogy, a pet is a named, hand-tended individual you can’t afford to lose, while cattle are interchangeable. In our case, the server became that pet; if a container failed, the session was lost. If a container was unresponsive, we had to nurse it back to health.

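But with everything tightly coupled in one container, we hit a classic infrastructure problem: we had adopted a pet. The term comes from the well-known “pets vs. cattle” analogy.

A pet is a named machine that needs hands-on care and cannot simply be discarded when something goes wrong, while cattle are standardized, interchangeable resources that are rebuilt when they fail. In our architecture the server had become the pet: if a container crashed, the session was lost, and if a container stopped responding, we had to troubleshoot it by hand and nurse it back to health.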

Nursing containers meant debugging unresponsive stuck sessions. Our only window in was the WebSocket event stream, but that couldn’t tell us where failures arose, which meant that a bug in the harness, a packet drop in the event stream, or a container going offline all presented the same. To figure out what went wrong, an engineer had to open a shell inside the container, but because that container often also held user data, that approach essentially meant we lacked the ability to debug.

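Nursing containers in practice meant debugging stuck, unresponsive sessions. Our only window into the system was the WebSocket event stream, which could show that something had gone wrong but not where. As a result, the following failures were indistinguishable from the outside:

- a bug in the harness

- a dropped packet in the WebSocket event stream

- a container going offline

To locate the real cause, an engineer had to open a shell inside the container, yet that container often also held user data. We could not safely enter it to debug, which in effect meant we had no ability to debug at all.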

A second issue was that the harness assumed that whatever Claude worked on lived in the container with it. When customers asked us to connect Claude to their virtual private cloud, they had to either peer their network with ours, or run our harness in their own environment. An assumption baked into the harness became a problem when we wanted to connect it to different infrastructure.

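The second problem was that the harness assumed everything Claude worked on lived in the same container as the harness itself.

When customers wanted to connect Claude to their own virtual private cloud (VPC), that assumption left them two options:

- peer their network with ours (VPC peering)

- run our harness inside their own environment

An architectural assumption baked into the harness had become an obstacle to connecting it to different infrastructure.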

Decouple the brain from the hands

The solution we arrived at was to decouple what we thought of as the “brain” (Claude and its harness) from both the “hands” (sandboxes and tools that perform actions) and the “session” (the log of session events). Each became an interface that made few assumptions about the others, and each could fail or be replaced independently.

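The solution we arrived at was to separate the “brain,” the “hands,” and the “session” from one another:

- The “brain” is Claude and its harness

- The “hands” are the sandboxes and tools that perform actions

- The “session” is the log of session events

After the split, each part is an interface that makes few assumptions about the others, so each component can run, fail, or be replaced independently without taking the rest of the system down.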

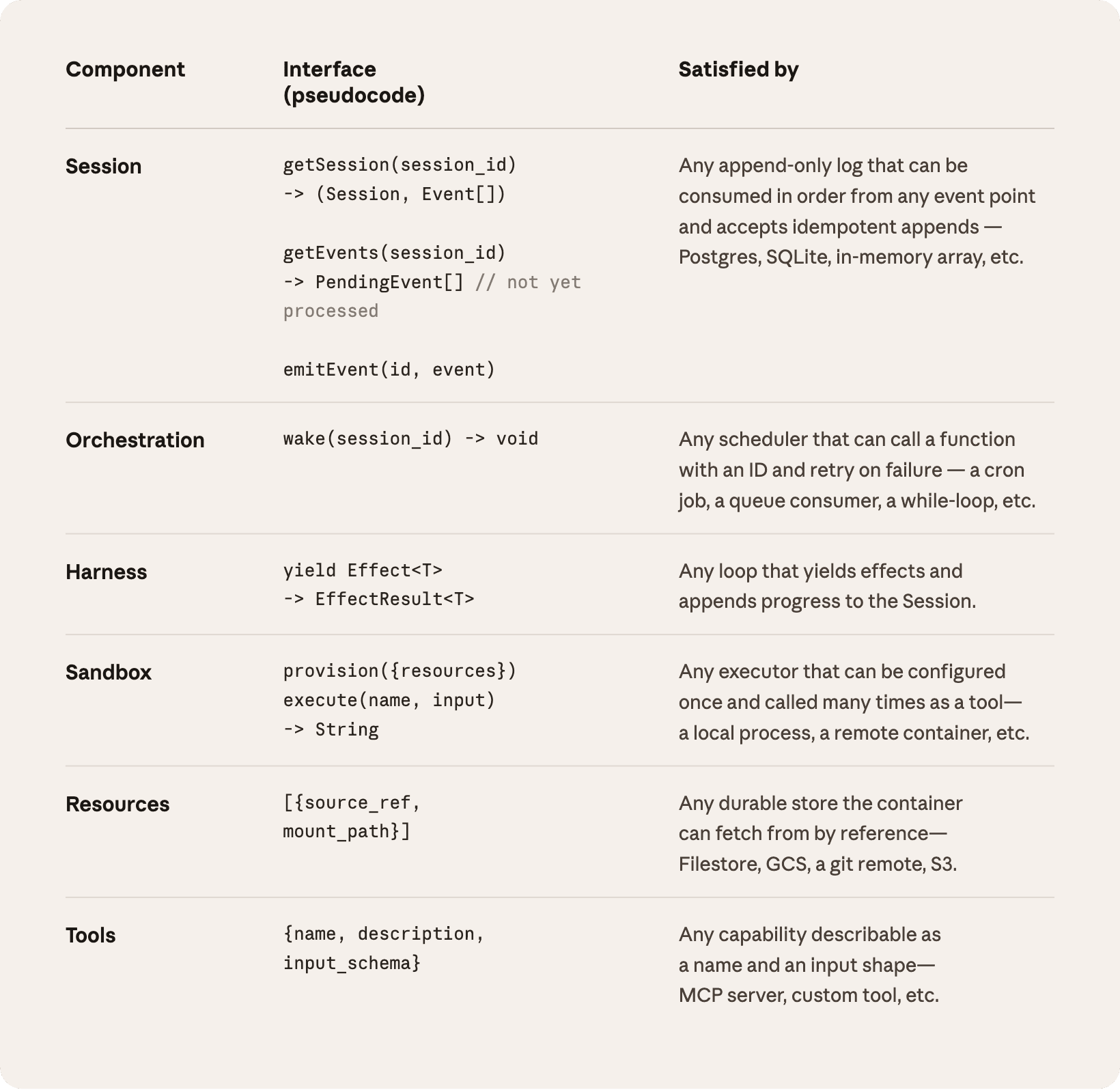

The harness leaves the container. Decoupling the brain from the hands meant the harness no longer lived inside the container. It called the container the way it called any other tool: execute(name, input) → string. The container became cattle. If the container died, the harness caught the failure as a tool-call error and passed it back to Claude. If Claude decided to retry, a new container could be reinitialized with a standard recipe: provision({resources}). We no longer had to nurse failed containers back to health.

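The harness leaves the container.

Once the brain was decoupled from the hands, the harness no longer lived inside the container. It called the container the way it called any other tool: execute(name, input) → string. From the harness’s point of view the container was just another callable resource, and the container went from pet to cattle.

If a container died, the harness caught the failure as an ordinary tool-call error and passed it back to Claude. If Claude decided to retry, a new container could be initialized from a standard recipe: provision({resources}). No manual repair was involved, and we no longer had to nurse failed containers back to health.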
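The failure path described above can be sketched in a few lines. This is a minimal illustration, not the service’s implementation: `ContainerDied`, `FlakyContainer`, and the result dictionaries are hypothetical, and the retry is unconditional here, whereas in the article Claude decides whether to retry.

```python
class ContainerDied(Exception):
    """Raised by the hypothetical container client when the container is gone."""


class FlakyContainer:
    """Stand-in container that can be created in a dead state for the demo."""

    def __init__(self, healthy: bool = True):
        self.healthy = healthy

    def execute(self, name: str, input: str) -> str:
        if not self.healthy:
            raise ContainerDied("container unreachable")
        return f"ran {name}({input})"


def provision(resources: dict) -> FlakyContainer:
    """Standard recipe: always yields a fresh, healthy container."""
    return FlakyContainer(healthy=True)


def call_tool(container: FlakyContainer, name: str, input: str) -> dict:
    """Harness side: a dead container becomes an ordinary tool-call error."""
    try:
        return {"ok": True, "output": container.execute(name, input)}
    except ContainerDied as exc:
        return {"ok": False, "output": f"tool error: {exc}"}


def run_with_retry(container: FlakyContainer, resources: dict,
                   name: str, input: str) -> dict:
    result = call_tool(container, name, input)
    if not result["ok"]:
        # Assumption: we always retry. The real decision belongs to the model.
        container = provision(resources)
        result = call_tool(container, name, input)
    return result
```

Nothing in `run_with_retry` tries to repair the dead container; it is simply replaced, which is what makes it cattle rather than a pet.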

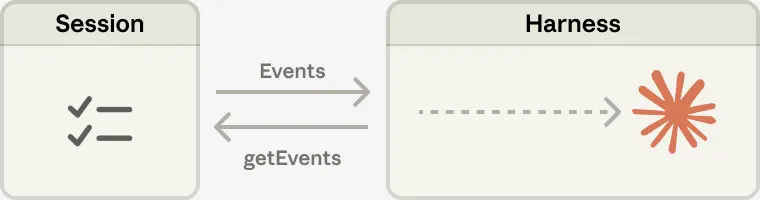

Recovering from harness failure. The harness also became cattle. Because the session log sits outside the harness, nothing in the harness needs to survive a crash. When one fails, a new one can be rebooted with wake(sessionId), use getSession(id) to get back the event log, and resume from the last event. During the agent loop, the harness writes to the session with emitEvent(id, event) in order to keep a durable record of events.

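Recovering from harness failure.

The harness became cattle too. Because the session log sits outside the harness, the harness holds no state that must survive a crash. When a harness fails, a new one can be started with wake(sessionId), fetch the event log with getSession(id), and resume from the last event. During the agent loop, the harness writes events to the session with emitEvent(id, event), which keeps a durable record of the whole run.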
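To make the recovery flow concrete, here is a small sketch in which a module-level dictionary stands in for the durable session store. The function names mirror wake, getSession, and emitEvent from the text in Python naming style; their signatures, the event shape, and the `Harness` class are assumptions made for illustration.

```python
from typing import Dict, List

# Stand-in for durable storage that lives outside every harness process.
SESSIONS: Dict[str, List[dict]] = {}


def emit_event(session_id: str, event: dict) -> None:
    SESSIONS.setdefault(session_id, []).append(event)


def get_session(session_id: str) -> List[dict]:
    return list(SESSIONS.get(session_id, []))


class Harness:
    """Stateless apart from what it re-reads from the session log."""

    def __init__(self, session_id: str):
        self.session_id = session_id
        # Resume right after the last recorded event.
        self.next_step = len(get_session(session_id))

    def step(self) -> None:
        emit_event(self.session_id, {"step": self.next_step})
        self.next_step += 1


def wake(session_id: str) -> Harness:
    """Start a replacement harness for an existing session."""
    return Harness(session_id)
```

If the first harness disappears after two steps, calling `wake` again with the same session ID yields a new harness that continues at step 2, because the only state it needs is in the log.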

The security boundary. In the coupled design, any untrusted code that Claude generated was run in the same container as credentials—so a prompt injection only had to convince Claude to read its own environment. Once an attacker has those tokens, they can spawn fresh, unrestricted sessions and delegate work to them. Narrow scoping is an obvious mitigation, but this encodes an assumption about what Claude can’t do with a limited token—and Claude is getting increasingly smart. The structural fix was to make sure the tokens are never reachable from the sandbox where Claude’s generated code runs.

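The security boundary.

In the earlier coupled design, any untrusted code Claude generated ran in the same container as the credentials. A prompt injection only had to convince Claude to read its own environment to reach sensitive tokens. With those tokens, an attacker could spawn fresh, unrestricted sessions and delegate work to them.

Narrowing token scope is an obvious mitigation, but it encodes an assumption about what Claude cannot do with a limited token, and Claude keeps getting smarter. The structural fix is to make sure tokens can never be reached from the sandbox where Claude’s generated code runs.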

We used two patterns to ensure this. Auth can be bundled with a resource or held in a vault outside the sandbox. For Git, we use each repository’s access token to clone the repo during sandbox initialization and wire it into the local git remote. Git push and pull work from inside the sandbox without the agent ever handling the token itself. For custom tools, we support MCP and store OAuth tokens in a secure vault. Claude calls MCP tools via a dedicated proxy; this proxy takes in a token associated with the session. The proxy can then fetch the corresponding credentials from the vault and make the call to the external service. The harness is never made aware of any credentials.

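We used two patterns to ensure this:

- Bundle the auth with the resource itself

- Hold the credentials in a secure vault outside the sandbox

Take Git as an example. During sandbox initialization, we use the repository’s access token to clone the repo and wire it into the local git remote. Claude can then run git push and git pull inside the sandbox without ever handling the token itself.

For custom tools, we support MCP (Model Context Protocol) and store OAuth tokens in a separate secure vault.

When Claude calls an MCP tool, it does not receive the credentials. The call goes through a dedicated proxy, which takes a token associated with the current session, fetches the corresponding credentials from the vault, and calls the external service. The harness is never made aware of any credentials.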
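The vault-and-proxy pattern can be illustrated with a toy model. Everything named here (`Vault`, `McpProxy`, `fake_issue_tracker`, the token strings) is hypothetical and stands in for components the article describes only at a high level. The point it shows is that the caller supplies a session token and gets back a result, while the real credential is looked up and used entirely inside the proxy.

```python
class Vault:
    """Hypothetical secret store that lives outside the sandbox."""

    def __init__(self):
        self._secrets = {}  # (session_token, service) -> credential

    def put(self, session_token: str, service: str, credential: str) -> None:
        self._secrets[(session_token, service)] = credential

    def get(self, session_token: str, service: str) -> str:
        return self._secrets[(session_token, service)]


class McpProxy:
    """Holds the only code path that ever sees a real credential."""

    def __init__(self, vault: Vault, services: dict):
        self._vault = vault
        self._services = services  # service name -> callable(credential, payload)

    def call(self, session_token: str, service: str, payload: str) -> str:
        credential = self._vault.get(session_token, service)
        # The credential is used here and never returned to the caller.
        return self._services[service](credential, payload)


def fake_issue_tracker(credential: str, payload: str) -> str:
    """Stand-in external service that checks the credential it is given."""
    if credential != "oauth-secret":
        raise PermissionError("bad credential")
    return f"created issue: {payload}"
```

In this sketch, code running on the caller’s side holds only the session token, so reading its own environment would not reveal the OAuth credential.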

The session is not Claude’s context window

Long-horizon tasks often exceed the length of Claude’s context window, and the standard ways to address this all involve irreversible decisions about what to keep. We’ve explored these techniques in prior work on context engineering. For example, compaction lets Claude save a summary of its context window and the memory tool lets Claude write context to files, enabling learning across sessions. This can be paired with context trimming, which selectively removes tokens such as old tool results or thinking blocks.

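Long-horizon tasks often exceed the capacity of Claude’s context window, and the standard remedies all involve irreversible decisions about which context to keep and which to discard.

We explored several of these techniques in earlier work on context engineering. For example:

- Compaction: lets Claude save a summary of its current context window

- Memory tool: lets Claude write context to files, which enables learning across sessions

These techniques can be paired with context trimming, which makes room for new context by selectively removing tokens such as:

- old tool results

- thinking blocks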

But irreversible decisions to selectively retain or discard context can lead to failures. It is difficult to know which tokens the future turns will need. If messages are transformed by a compaction step, the harness removes compacted messages from Claude’s context window, and these are recoverable only if they are stored. Prior work has explored ways to address this by storing context as an object that lives outside the context window. For example, context can be an object in a REPL that the LLM programmatically accesses by writing code to filter or slice it.

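But irreversible decisions about what to keep or discard are risky, because it is hard to know in advance which tokens future turns will need.

For example, after a compaction step the harness removes the compacted messages from Claude’s context window, and they can be recovered only if they were stored somewhere else.

Prior work has therefore explored a different approach: store the context as an object that lives outside the context window.

For example, context can be an object in a REPL. Instead of placing everything in the prompt, the LLM writes code to filter or slice that object and pulls in only the context it needs.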

In Managed Agents, the session provides this same benefit, serving as a context object that lives outside Claude’s context window. But rather than be stored within the sandbox or REPL, context is durably stored in the session log. The interface, getEvents(), allows the brain to interrogate context by selecting positional slices of the event stream. The interface can be used flexibly, allowing the brain to pick up from wherever it last stopped reading, rewinding a few events before a specific moment to see the lead up, or rereading context before a specific action.

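In Managed Agents, the session plays a similar role: it is a context object that lives outside Claude’s context window. The difference is that the context is not stored inside the sandbox or a REPL but durably in the session log. The getEvents() interface lets the brain (Claude and its harness) interrogate that context by selecting positional slices of the event stream.

The interface is flexible. For example, the brain can:

- pick up from wherever it last stopped reading

- rewind a few events before a specific moment to see the lead-up

- reread the relevant context before taking a specific action

In other words, context is no longer static content loaded into the prompt once; it becomes a data source that can be queried, rewound, and read on demand.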
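The access patterns listed above reduce to slicing an ordered event list by position. The sketch below assumes a `get_events(start, end)` signature; the article names getEvents() but does not specify its parameters, so the arguments, the `EVENTS` fixture, and the helper names are illustrative only.

```python
from typing import List, Optional

# Illustrative session log: ten events, each tagged with its position.
EVENTS: List[dict] = [{"pos": i, "text": f"event {i}"} for i in range(10)]


def get_events(start: int = 0, end: Optional[int] = None) -> List[dict]:
    """Return a positional slice of the event stream (assumed signature)."""
    return EVENTS[start:end]


def resume_from(cursor: int) -> List[dict]:
    """Pick up from wherever the brain last stopped reading."""
    return get_events(start=cursor)


def lead_up_to(pos: int, window: int = 3) -> List[dict]:
    """Rewind a few events before a specific moment to see the lead-up."""
    return get_events(start=max(0, pos - window), end=pos)
```

For instance, `lead_up_to(5)` returns the events at positions 2, 3, and 4, and `resume_from(7)` returns positions 7 through 9. Nothing is deleted from the log by reading it, so any slice can be read again later.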

Any fetched events can also be transformed in the harness before being passed to Claude’s context window. These transformations can be whatever the harness encodes, including context organization to achieve a high prompt cache hit rate and context engineering. We separated the concerns of recoverable context storage in the session and arbitrary context management in the harness because we can’t predict what specific context engineering will be required in future models. The interfaces push that context management into the harness, and only guarantee that the session is durable and available for interrogation.

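Events fetched through getEvents() can also be transformed by the harness before they reach Claude’s context window, and the harness decides what those transformations are. For example:

- organizing context to achieve a high prompt cache hit rate

- applying other context engineering strategies

We designed it this way because we cannot predict what context engineering future models will require. So we separated the two concerns:

- The session is responsible for storing context durably and making it recoverable

- The harness is responsible for how context is managed and organized

The interfaces do not prescribe how context is optimized. They guarantee only that the session is durable and available for interrogation.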
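One way to picture this split is a harness-side function that builds the model’s context from session events without touching the stored log. The example below applies one possible policy, replacing all but the most recent tool result with a stub. The event shape, the `"[trimmed]"` placeholder, and the policy itself are assumptions for illustration; the article leaves the actual transformations up to each harness.

```python
from typing import List


def build_context(events: List[dict], keep_recent_results: int = 1) -> List[dict]:
    """Return messages for the model; older tool results become a short stub.

    The input list is never modified, so the full log stays recoverable.
    """
    result_positions = [i for i, e in enumerate(events) if e["type"] == "tool_result"]
    if keep_recent_results > 0:
        keep = set(result_positions[-keep_recent_results:])
    else:
        keep = set()

    context = []
    for i, event in enumerate(events):
        if event["type"] == "tool_result" and i not in keep:
            context.append({"type": "tool_result", "text": "[trimmed]"})
        else:
            context.append(dict(event))  # copy, so callers cannot mutate the log
    return context
```

Because trimming happens on a copy, a later harness with a different policy could rebuild a fuller context from the same session.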

Many brains, many hands

Many brains. Decoupling the brain from the hands solved one of our earliest customer complaints. When teams wanted Claude to work against resources in their own VPC, the only path was to peer their network with ours, because the container holding the harness assumed every resource sat next to it. Once the harness was no longer in the container, that assumption went away. The same change had a performance payoff. When we initially put the brain in a container, it meant that many brains required as many containers. For each brain, no inference could happen until that container was provisioned; every session paid the full container setup cost up front. Every session, even ones that would never touch the sandbox, had to clone the repo, boot the process, fetch pending events from our servers.

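Many brains.

Decoupling the brain from the hands resolved one of our earliest customer complaints. Previously, when customers wanted Claude to work with resources in their own VPC, the only path was to peer their network with ours, because the container running the harness assumed every resource sat in the same environment. Once the harness moved out of the container, that assumption went away.

The same change brought a clear performance benefit. In the original design, the brain (Claude and its harness) also ran in a container, so many brains required as many containers, and no inference could start until the container was fully provisioned. Every session paid the full container setup cost up front. Even a session that would never touch the sandbox still had to:

- clone the repository

- boot the process

- fetch pending events from our servers

All of this happened before inference began.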

That dead time is expressed in time-to-first-token (TTFT), which measures how long a session waits between accepting work and producing its first response token. TTFT is the latency the user most acutely feels.

That dead time shows up in a key metric: TTFT (time to first token), which measures how long a session waits between accepting work and producing its first response token. TTFT is the latency users feel most acutely.

Decoupling the brain from the hands means that containers are provisioned by the brain via a tool call (execute(name, input) → string) only if they are needed. So a session that didn’t need a container right away didn’t wait for one. Inference could start as soon as the orchestration layer pulled pending events from the session log. Using this architecture, our p50 TTFT dropped roughly 60% and p95 dropped over 90%. Scaling to many brains just meant starting many stateless harnesses, and connecting them to hands only if needed.

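With the brain decoupled from the hands, a container is no longer a prerequisite for starting a session. The brain provisions one through a tool call, execute(name, input) → string, only when it actually needs a sandbox or tool.

A session that does not need a sandbox right away therefore never waits for a container. Inference can start as soon as the orchestration layer has pulled pending events from the session log. The gains were substantial:

- p50 TTFT dropped by roughly 60%

- p95 TTFT dropped by more than 90%

Scaling to many brains also became simpler: it just means starting more stateless harnesses and connecting them to hands (sandboxes and tools) only when needed.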
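The latency gain comes from deferring container setup until the first tool call. A minimal sketch of that lazy pattern follows; `LazyHands`, `fake_provision`, and the counter are hypothetical names used only to make the behavior observable, and the stand-in container is just a function.

```python
from typing import Callable, Optional


class LazyHands:
    """Provisions a container on the first execute call, not at session start."""

    def __init__(self, provision: Callable[[], Callable[[str, str], str]]):
        self._provision = provision
        self._container: Optional[Callable[[str, str], str]] = None
        self.provision_count = 0  # exposed so the laziness can be checked

    def execute(self, name: str, input: str) -> str:
        if self._container is None:
            # Setup cost is paid here, only by sessions that need a sandbox.
            self._container = self._provision()
            self.provision_count += 1
        return self._container(name, input)


def fake_provision() -> Callable[[str, str], str]:
    """Stand-in for container setup; returns a callable 'container'."""
    return lambda name, input: f"{name} -> {input.upper()}"
```

A session that never calls `execute` never triggers `fake_provision`, so under this sketch its first model call would not wait on any container work.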

Many hands. We also wanted the ability to connect each brain to many hands. In practice, this means Claude must reason about many execution environments and decide where to send work—a harder cognitive task than operating in a single shell. We started with the brain in a single container because earlier models weren’t capable of this. As intelligence scaled, the single container became the limitation instead: when that container failed, we lost state for every hand that the brain was reaching into.

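Many hands.

Beyond many brains, we also wanted each brain to be able to connect to many hands. In practice this means Claude must reason about several execution environments and decide where to send each piece of work, which is much harder than operating in a single shell. We originally put the brain in a single container because earlier models could not handle this. As model intelligence grew, the single container became the limitation instead: when that container failed, we lost state for every hand the brain was reaching into.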

Decoupling the brain from the hands makes each hand a tool, execute(name, input) → string: a name and input go in, and a string is returned. That interface supports any custom tool, any MCP server, and our own tools. The harness doesn’t know whether the sandbox is a container, a phone, or a Pokémon emulator. And because no hand is coupled to any brain, brains can pass hands to one another.

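With the brain decoupled from the hands, each hand becomes a tool behind one interface: execute(name, input) → string. A name and input go in, and a string comes back. This interface supports:

- any custom tool

- any MCP server

- our own tools

The harness does not need to know whether the sandbox behind the interface is:

- a container

- a phone

- a Pokémon emulator

To the harness they are all just callable tools. And because no hand is bound to a particular brain, brains can pass hands to one another.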
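The uniform interface can be modeled as a registry of named callables. The following is a rough sketch under assumptions: the `Brain` class, the `pass_hand` method, and the error string are invented for illustration and do not describe how Managed Agents represents hands internally.

```python
from typing import Callable, Dict

# A hand is anything that takes an input string and returns a string.
Hand = Callable[[str], str]


class Brain:
    """Routes execute(name, input) to whichever hand is registered under name."""

    def __init__(self, hands: Dict[str, Hand]):
        self.hands = dict(hands)

    def execute(self, name: str, input: str) -> str:
        if name not in self.hands:
            # An unknown hand surfaces as an ordinary tool error string.
            return f"tool error: unknown hand {name!r}"
        return self.hands[name](input)

    def pass_hand(self, name: str, other: "Brain") -> None:
        """Hand over a tool to another brain; it is no longer reachable here."""
        other.hands[name] = self.hands.pop(name)
```

The routing code never inspects what a hand is, so a container shell and an emulator button press look identical to it: a name and an input go in, and a string comes back.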

Conclusion

The challenge we faced is an old one: how to design a system for “programs as yet unthought of.” Operating systems have lasted decades by virtualizing the hardware into abstractions general enough for programs that didn’t exist yet. With Managed Agents, we aimed to design a system that accommodates future harnesses, sandboxes, or other components around Claude.

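The challenge we faced is an old one: how to design a system for programs as yet unthought of. Operating systems have lasted for decades because they virtualized hardware into abstractions, such as process and file, general enough for programs that did not exist yet to run on them later. Our goal with Managed Agents is similar: to design a system that can accommodate the future harnesses, sandboxes, and other components that will surround Claude.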

Managed Agents is a meta-harness in the same spirit, unopinionated about the specific harness that Claude will need in the future. Rather, it is a system with general interfaces that allow many different harnesses. For example, Claude Code is an excellent harness that we use widely across tasks. We’ve also shown that task-specific agent harnesses excel in narrow domains. Managed Agents can accommodate any of these, matching Claude’s intelligence over time.

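Managed Agents is a meta-harness in the same spirit: it does not presume which specific harness Claude will need in the future. Instead, it provides general interfaces that many different kinds of harness can plug into.

Claude Code, for example, is an excellent harness that we use widely across tasks. We have also shown that task-specific agent harnesses excel in narrow domains. Managed Agents can accommodate any of these and keep pace with Claude’s intelligence over time.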

Meta-harness design means being opinionated about the interfaces around Claude: we expect that Claude will need the ability to manipulate state (the session) and perform computation (the sandbox). We also expect that Claude will require the ability to scale to many brains and many hands. We designed the interfaces so that these can be run reliably and securely over long time horizons. But we make no assumptions about the number or location of brains or hands that Claude will need.

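Meta-harness design means being opinionated about the interfaces around Claude. We expect Claude to need the ability to manipulate state (the session) and the ability to perform computation (the sandbox).

We also expect Claude to need to scale to many brains and many hands, so we designed the interfaces to run reliably and securely over long time horizons.

But we make no assumptions about how many brains or hands Claude will need, or about where they will run.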
