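YOLOv26 Improvements | Conv Series | C3k2 Redesigned with ScConv, the Spatial and Channel Reconstruction Convolution (exclusive network structure diagrams included)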

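Before we start, a quick note on my column: its content supports classification, detection, segmentation, tracking, and keypoint detection. The column is currently discounted for a limited time and is updated with 5-7 of the latest mechanisms every week. Subscribers also get the files for all of my modifications and access to a discussion group, where I regularly share methods and experience on publishing papers.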

1. Introduction

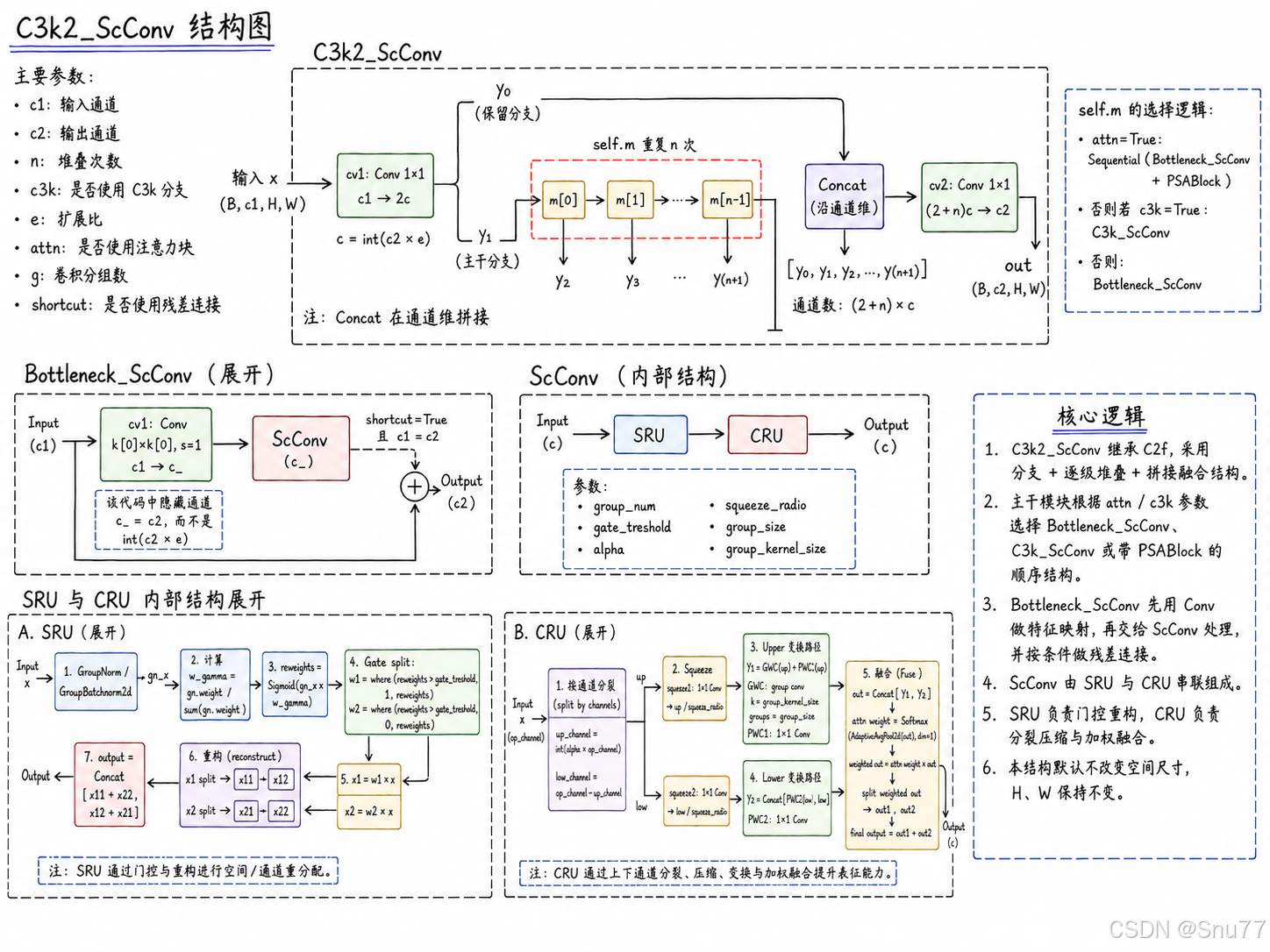

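This article introduces SCConv (Spatial and Channel Reconstruction Convolution), an improvement mechanism released in September 2023. Its core innovation is that it handles the spatial information (shape, structure) and the channel information (color, depth) of an image together, which makes its analysis both finer and more efficient. The technique suits complex scenes and also gives higher accuracy in ordinary object detection: in my own tests it improved results on both small-object detection and regular detection. Its advantage is most visible when processing large amounts of data and complex images. I first walk through the basic structure and principle of SCConv so that you have a general picture of the convolution, then show how to add it to your own network (exclusive network structure diagrams included).

Column link: YOLOv26 Effective Improvements column, covering Conv, attention mechanisms, backbones, loss functions, optimizers, post-processing, and other improvements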


2. Network Structure

Paper: official paper link

Code: official code link

2.1 The Main Idea of SCConv

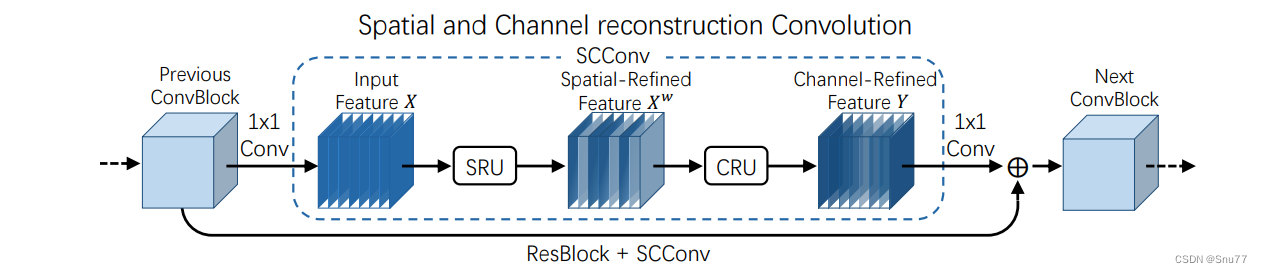

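SCConv (Spatial and Channel Reconstruction Convolution) is an efficient convolution module that reduces spatial and channel redundancy in convolutional neural networks (CNNs). By streamlining feature extraction, it aims to lower compute cost while improving network performance. The module consists of two units:

1. Spatial Reconstruction Unit (SRU): reduces spatial redundancy with a separate-and-reconstruct method.

2. Channel Reconstruction Unit (CRU): reduces channel redundancy with a split-transform-fuse strategy.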
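To make the resource claim concrete, here is a back-of-the-envelope sketch that counts the learnable weights of the CRU convolutions for one channel width and compares them with a plain 3x3 convolution of the same width. The defaults (alpha = 1/2, squeeze ratio 2, 2 groups, 3x3 group kernel) are taken from the code in Section 3; the helper names `cru_param_count` and `plain_conv_param_count` are made up for this illustration and are not part of the official code.

```python
def cru_param_count(op_channel, alpha=0.5, squeeze_ratio=2, group_size=2, k=3):
    """Count learnable weights in the CRU convolutions (hypothetical helper)."""
    up = int(alpha * op_channel)
    low = op_channel - up
    up_s, low_s = up // squeeze_ratio, low // squeeze_ratio
    squeeze1 = up * up_s                      # 1x1 conv, no bias
    squeeze2 = low * low_s                    # 1x1 conv, no bias
    # Grouped k x k conv: each output channel sees only up_s / group_size inputs; bias is on by default.
    gwc = (up_s // group_size) * op_channel * k * k + op_channel
    pwc1 = up_s * op_channel                  # 1x1 conv, no bias
    pwc2 = low_s * (op_channel - low_s)       # 1x1 conv, no bias
    return squeeze1 + squeeze2 + gwc + pwc1 + pwc2


def plain_conv_param_count(channels, k=3):
    """Weights of a bias-free k x k convolution with equal input and output width."""
    return channels * channels * k * k


if __name__ == "__main__":
    c = 64
    print(cru_param_count(c), plain_conv_param_count(c))
```

For 64 channels this gives 7,488 weights against 36,864, roughly a fifth. The SRU adds only the GroupNorm scale and shift on top, two values per channel.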

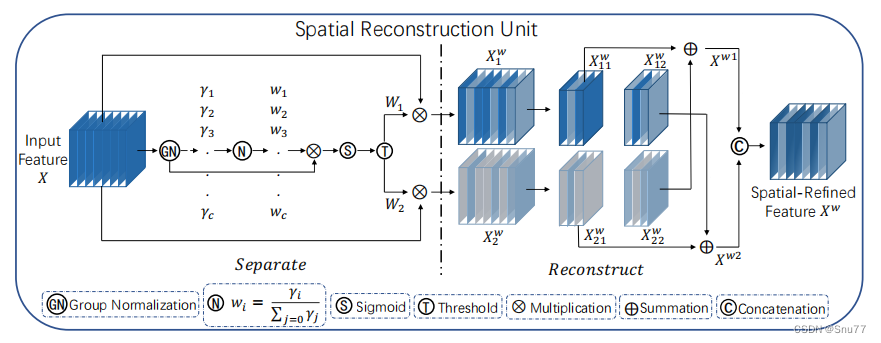

The diagram above shows the structure of SCConv.

The next two subsections explain each unit in turn.

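2.2 Spatial Reconstruction Unit (SRU)

The Spatial Reconstruction Unit (SRU) is the part of the SCConv module that reduces redundancy along the spatial dimension. It takes the input features and processes them in four steps:

1. Group Normalization: the input features are normalized first, to reduce scale differences between feature maps.

2. Weight generation: weights are produced from the normalized feature maps by normalizing the channel scale factors and applying an activation function such as Sigmoid.

3. Feature separation: the generated weights separate the input into sub-feature sets, one carrying the informative responses and one carrying the less informative ones.

4. Feature reconstruction: the separated sets are transformed and recombined into a spatially refined output for further processing.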

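The SRU architecture figure illustrates this workflow:

1. Input feature \( X \): group normalization (GN) is applied first.

2. Separate: the features are re-weighted by a set of weights \( w_1, w_2, \ldots, w_C \). These weights are computed from the per-channel GN scale factors \( \gamma \), which are normalized and then passed, together with the normalized features, through a nonlinear activation (Sigmoid). A gate with threshold 0.5 turns the result into two complementary weight maps.

3. Reconstruct: the weighted features form two parts, \( X_1^W \) and \( X_2^W \). Each part is split in half along the channel dimension, the halves are cross-added, and the results are concatenated to give the spatially refined feature \( X^W \).

Summary: this unit is designed to reduce the spatial redundancy of the input features, which makes the network more efficient at processing them.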
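The separate-and-reconstruct steps can be traced by hand on a toy input. The sketch below uses plain Python with one value per channel at a single spatial position, so it runs without PyTorch; the group-normalized values are passed in directly instead of being computed, and the name `sru_gate` is a hypothetical helper that only mirrors the logic of the `SRU` class in Section 3.

```python
import math


def sru_gate(x, gn_x, gamma, threshold=0.5):
    """Apply SRU-style gating and cross reconstruction to one value per channel.

    x: raw channel values; gn_x: the same values after group normalization (given, not computed);
    gamma: per-channel GN scale factors. Hypothetical helper for illustration only.
    """
    total = sum(gamma)
    w_gamma = [g / total for g in gamma]                       # normalized channel importance
    reweights = [1.0 / (1.0 + math.exp(-v * w)) for v, w in zip(gn_x, w_gamma)]  # Sigmoid
    w1 = [1.0 if r > threshold else r for r in reweights]      # weights for the informative part
    w2 = [0.0 if r > threshold else r for r in reweights]      # weights for the less informative part
    x1 = [w * v for w, v in zip(w1, x)]
    x2 = [w * v for w, v in zip(w2, x)]
    half = len(x) // 2
    # Cross reconstruction: first half of x1 plus second half of x2, and vice versa, then concatenate.
    return [x1[i] + x2[half + i] for i in range(half)] + [x1[half + i] + x2[i] for i in range(half)]


out = sru_gate(x=[1.0, 1.0, 1.0, 1.0], gn_x=[2.0, -2.0, 2.0, -2.0], gamma=[1.0, 1.0, 1.0, 1.0])
print(out)
```

Channels whose Sigmoid response exceeds the threshold pass through unchanged, while the others are kept at reduced strength and folded into the opposite half, so no channel is discarded outright.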

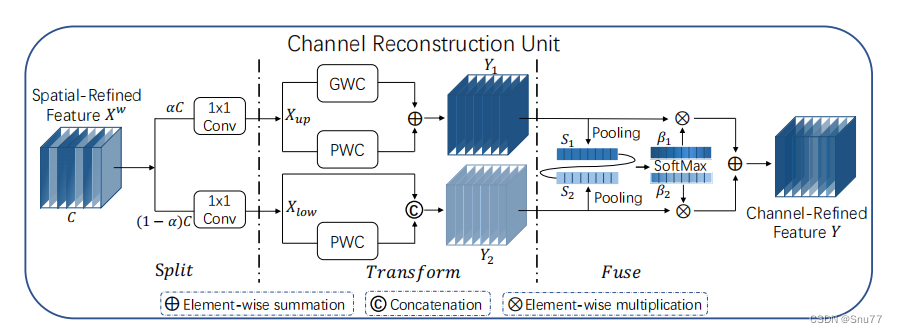

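2.3 Channel Reconstruction Unit (CRU)

The Channel Reconstruction Unit (CRU) is the second part of the SCConv module and targets channel redundancy. It operates on the features that the Spatial Reconstruction Unit (SRU) has already refined.

The figure above details the CRU architecture, which starts from the spatially refined feature \( X^W \). Its workflow has three steps:

1. Split: \( X^W \) is divided along the channel dimension into two parts with ratios \( \alpha \) and \( 1-\alpha \), and each part is squeezed by its own 1x1 convolution.

2. Transform: the two parts are transformed further with group-wise convolution (GWC) and point-wise convolution (PWC).

3. Fuse: the two transformed features \( Y_1 \) and \( Y_2 \) are fused through pooling and SoftMax weighting to form the final channel-refined feature \( Y \).

Summary: by treating the channels selectively, this structure discards unnecessary information and improves the overall performance and efficiency of the network. The CRU makes the feature representation more efficient while reducing the model's parameter count and compute cost.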
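The split-transform-fuse steps put constraints on which channel widths work. As a rough aid, the sketch below traces the channel counts through the unit and flags widths that would fail. It assumes the default arguments from the Section 3 code (alpha = 1/2, squeeze ratio 2, group size 2, and 4 GroupNorm groups as in `ScConv`); `cru_channel_plan` is a hypothetical helper, not part of the official code.

```python
def cru_channel_plan(op_channel, alpha=0.5, squeeze_ratio=2, group_size=2, group_num=4):
    """Trace channel counts through SRU + CRU and list reasons a width would fail (hypothetical helper)."""
    up = int(alpha * op_channel)
    low = op_channel - up
    up_s, low_s = up // squeeze_ratio, low // squeeze_ratio
    y1 = op_channel                        # GWC(up_s) + PWC1(up_s): both output op_channel channels
    y2 = (op_channel - low_s) + low_s      # concat of PWC2(low_s) and the squeezed low part itself
    problems = []
    if op_channel % group_num:
        problems.append("GroupNorm needs op_channel divisible by group_num")
    if up_s == 0 or low_s == 0:
        problems.append("a squeezed branch has zero channels")
    if up_s % group_size or op_channel % group_size:
        problems.append("GWC needs input and output channels divisible by group_size")
    return {"up": up, "low": low, "up_squeezed": up_s, "low_squeezed": low_s,
            "Y1": y1, "Y2": y2, "out": (y1 + y2) // 2, "problems": problems}


if __name__ == "__main__":
    for width in (4, 8, 16, 64):
        print(width, cru_channel_plan(width))
```

With these defaults a width of 4 is flagged, while 8, 16, and 64 pass; the fused output always has the same channel count as the input because the concatenation of \( Y_1 \) and \( Y_2 \) is split in half and summed.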

3. Core Code

See Section 4 for how to use the core code!

import torch
import torch.nn.functional as F
import torch.nn as nn

__all__ = ['ScConv', 'C3k2_ScConv']


class GroupBatchnorm2d(nn.Module):
    def __init__(self, c_num: int,
                 group_num: int = 16,
                 eps: float = 1e-10
                 ):
        super(GroupBatchnorm2d, self).__init__()
        assert c_num >= group_num
        self.group_num = group_num
        self.weight = nn.Parameter(torch.randn(c_num, 1, 1))
        self.bias = nn.Parameter(torch.zeros(c_num, 1, 1))
        self.eps = eps

    def forward(self, x):
        N, C, H, W = x.size()
        x = x.view(N, self.group_num, -1)
        mean = x.mean(dim=2, keepdim=True)
        std = x.std(dim=2, keepdim=True)
        x = (x - mean) / (std + self.eps)
        x = x.view(N, C, H, W)
        return x * self.weight + self.bias

class SRU(nn.Module):
    def __init__(self,
                 oup_channels: int,
                 group_num: int = 16,
                 gate_treshold: float = 0.5,
                 torch_gn: bool = True
                 ):
        super().__init__()
        self.gn = nn.GroupNorm(num_channels=oup_channels, num_groups=group_num) if torch_gn else GroupBatchnorm2d(
            c_num=oup_channels, group_num=group_num)
        self.gate_treshold = gate_treshold
        self.sigomid = nn.Sigmoid()

    def forward(self, x):
        gn_x = self.gn(x)
        w_gamma = self.gn.weight / sum(self.gn.weight)
        w_gamma = w_gamma.view(1, -1, 1, 1)
        reweigts = self.sigomid(gn_x * w_gamma)
        # Gate
        # w1: set to 1 where the weight exceeds the threshold, otherwise keep the weight
        w1 = torch.where(reweigts > self.gate_treshold, torch.ones_like(reweigts), reweigts)
        # w2: set to 0 where the weight exceeds the threshold, otherwise keep the weight
        w2 = torch.where(reweigts > self.gate_treshold, torch.zeros_like(reweigts), reweigts)
        x_1 = w1 * x
        x_2 = w2 * x
        y = self.reconstruct(x_1, x_2)
        return y

    def reconstruct(self, x_1, x_2):
        x_11, x_12 = torch.split(x_1, x_1.size(1) // 2, dim=1)
        x_21, x_22 = torch.split(x_2, x_2.size(1) // 2, dim=1)
        return torch.cat([x_11 + x_22, x_12 + x_21], dim=1)

class CRU(nn.Module):
    '''
    alpha: 0<alpha<1
    '''

    def __init__(self,
                 op_channel: int,
                 alpha: float = 1 / 2,
                 squeeze_radio: int = 2,
                 group_size: int = 2,
                 group_kernel_size: int = 3,
                 ):
        super().__init__()
        self.up_channel = up_channel = int(alpha * op_channel)
        self.low_channel = low_channel = op_channel - up_channel
        self.squeeze1 = nn.Conv2d(up_channel, up_channel // squeeze_radio, kernel_size=1, bias=False)
        self.squeeze2 = nn.Conv2d(low_channel, low_channel // squeeze_radio, kernel_size=1, bias=False)
        # up
        self.GWC = nn.Conv2d(up_channel // squeeze_radio, op_channel, kernel_size=group_kernel_size, stride=1,
                             padding=group_kernel_size // 2, groups=group_size)
        self.PWC1 = nn.Conv2d(up_channel // squeeze_radio, op_channel, kernel_size=1, bias=False)
        # low
        self.PWC2 = nn.Conv2d(low_channel // squeeze_radio, op_channel - low_channel // squeeze_radio, kernel_size=1,
                              bias=False)
        self.advavg = nn.AdaptiveAvgPool2d(1)

    def forward(self, x):
        # Split
        up, low = torch.split(x, [self.up_channel, self.low_channel], dim=1)
        up, low = self.squeeze1(up), self.squeeze2(low)
        # Transform
        Y1 = self.GWC(up) + self.PWC1(up)
        Y2 = torch.cat([self.PWC2(low), low], dim=1)
        # Fuse
        out = torch.cat([Y1, Y2], dim=1)
        out = F.softmax(self.advavg(out), dim=1) * out
        out1, out2 = torch.split(out, out.size(1) // 2, dim=1)
        return out1 + out2

def autopad(k, p=None, d=1):  # kernel, padding, dilation
    """Pad to 'same' shape outputs."""
    if d > 1:
        k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]  # actual kernel-size
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
    return p


class Conv(nn.Module):
    """Standard convolution with args(ch_in, ch_out, kernel, stride, padding, groups, dilation, activation)."""

    default_act = nn.SiLU()  # default activation

    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):
        """Initialize Conv layer with given arguments including activation."""
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

    def forward(self, x):
        """Apply convolution, batch normalization and activation to input tensor."""
        return self.act(self.bn(self.conv(x)))

    def forward_fuse(self, x):
        """Apply convolution and activation only, skipping batch normalization (for fused layers)."""
        return self.act(self.conv(x))

class ScConv(nn.Module):
    def __init__(self,
                 op_channel: int,
                 group_num: int = 4,
                 gate_treshold: float = 0.5,
                 alpha: float = 1 / 2,
                 squeeze_radio: int = 2,
                 group_size: int = 2,
                 group_kernel_size: int = 3,
                 ):
        super().__init__()
        self.SRU = SRU(op_channel,
                       group_num=group_num,
                       gate_treshold=gate_treshold)
        self.CRU = CRU(op_channel,
                       alpha=alpha,
                       squeeze_radio=squeeze_radio,
                       group_size=group_size,
                       group_kernel_size=group_kernel_size)

    def forward(self, x):
        x = self.SRU(x)
        x = self.CRU(x)
        return x

class Bottleneck(nn.Module):
    """Standard bottleneck."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):
        """Initializes a standard bottleneck module with optional shortcut connection and configurable parameters."""
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, k[0], 1)
        self.cv2 = Conv(c_, c2, k[1], 1, g=g)
        self.add = shortcut and c1 == c2

    def forward(self, x):
        """Apply both convolutions, adding the input back when the shortcut is enabled."""
        return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))


class Bottleneck_ScConv(nn.Module):
    """Bottleneck whose second convolution is replaced by ScConv."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):
        """Initializes a bottleneck module with given input/output channels, shortcut option, group, kernels, and
        expansion.
        """
        super().__init__()
        c_ = c2  # hidden channels (ScConv keeps the channel count, so the hidden width equals c2)
        self.cv1 = Conv(c1, c_, k[0], 1)
        self.cv2 = ScConv(c_)
        self.add = shortcut and c1 == c2

    def forward(self, x):
        """Apply Conv then ScConv, adding the input back when the shortcut is enabled."""
        return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

class C2f(nn.Module):
    """Faster Implementation of CSP Bottleneck with 2 convolutions."""

    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        """Initializes a CSP bottleneck with 2 convolutions and n Bottleneck blocks for faster processing."""
        super().__init__()
        self.c = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, 2 * self.c, 1, 1)
        self.cv2 = Conv((2 + n) * self.c, c2, 1)  # optional act=FReLU(c2)
        self.m = nn.ModuleList(Bottleneck(self.c, self.c, shortcut, g, k=((3, 3), (3, 3)), e=1.0) for _ in range(n))

    def forward(self, x):
        """Forward pass through C2f layer."""
        y = list(self.cv1(x).chunk(2, 1))
        y.extend(m(y[-1]) for m in self.m)
        return self.cv2(torch.cat(y, 1))

    def forward_split(self, x):
        """Forward pass using split() instead of chunk()."""
        y = list(self.cv1(x).split((self.c, self.c), 1))
        y.extend(m(y[-1]) for m in self.m)
        return self.cv2(torch.cat(y, 1))


class C3(nn.Module):
    """CSP Bottleneck with 3 convolutions."""

    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):
        """Initialize the CSP Bottleneck with given channels, number, shortcut, groups, and expansion values."""
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c1, c_, 1, 1)
        self.cv3 = Conv(2 * c_, c2, 1)  # optional act=FReLU(c2)
        self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, k=((1, 1), (3, 3)), e=1.0) for _ in range(n)))

    def forward(self, x):
        """Forward pass through the CSP bottleneck with 3 convolutions."""
        return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), 1))

class Attention_YOLOv26(nn.Module):
    """Attention module that performs self-attention on the input tensor.

    Args:
        dim (int): The input tensor dimension.
        num_heads (int): The number of attention heads.
        attn_ratio (float): The ratio of the attention key dimension to the head dimension.

    Attributes:
        num_heads (int): The number of attention heads.
        head_dim (int): The dimension of each attention head.
        key_dim (int): The dimension of the attention key.
        scale (float): The scaling factor for the attention scores.
        qkv (Conv): Convolutional layer for computing the query, key, and value.
        proj (Conv): Convolutional layer for projecting the attended values.
        pe (Conv): Convolutional layer for positional encoding.
    """

    def __init__(self, dim: int, num_heads: int = 8, attn_ratio: float = 0.5):
        """Initialize multi-head attention module.

        Args:
            dim (int): Input dimension.
            num_heads (int): Number of attention heads.
            attn_ratio (float): Attention ratio for key dimension.
        """
        super().__init__()
        self.num_heads = num_heads
        self.head_dim = dim // num_heads
        self.key_dim = int(self.head_dim * attn_ratio)
        self.scale = self.key_dim**-0.5
        nh_kd = self.key_dim * num_heads
        h = dim + nh_kd * 2
        self.qkv = Conv(dim, h, 1, act=False)
        self.proj = Conv(dim, dim, 1, act=False)
        self.pe = Conv(dim, dim, 3, 1, g=dim, act=False)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        """Forward pass of the Attention module.

        Args:
            x (torch.Tensor): The input tensor.

        Returns:
            (torch.Tensor): The output tensor after self-attention.
        """
        B, C, H, W = x.shape
        N = H * W
        qkv = self.qkv(x)
        q, k, v = qkv.view(B, self.num_heads, self.key_dim * 2 + self.head_dim, N).split(
            [self.key_dim, self.key_dim, self.head_dim], dim=2
        )
        attn = (q.transpose(-2, -1) @ k) * self.scale
        attn = attn.softmax(dim=-1)
        x = (v @ attn.transpose(-2, -1)).view(B, C, H, W) + self.pe(v.reshape(B, C, H, W))
        x = self.proj(x)
        return x

class PSABlock(nn.Module):
    """PSABlock class implementing a Position-Sensitive Attention block for neural networks.

    This class encapsulates the functionality for applying multi-head attention and feed-forward neural network layers
    with optional shortcut connections.

    Attributes:
        attn (Attention): Multi-head attention module.
        ffn (nn.Sequential): Feed-forward neural network module.
        add (bool): Flag indicating whether to add shortcut connections.

    Methods:
        forward: Performs a forward pass through the PSABlock, applying attention and feed-forward layers.
    """

    def __init__(self, c: int, attn_ratio: float = 0.5, num_heads: int = 4, shortcut: bool = True) -> None:
        """Initialize the PSABlock.

        Args:
            c (int): Input and output channels.
            attn_ratio (float): Attention ratio for key dimension.
            num_heads (int): Number of attention heads.
            shortcut (bool): Whether to use shortcut connections.
        """
        super().__init__()
        self.attn = Attention_YOLOv26(c, attn_ratio=attn_ratio, num_heads=num_heads)
        self.ffn = nn.Sequential(Conv(c, c * 2, 1), Conv(c * 2, c, 1, act=False))
        self.add = shortcut

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        """Execute a forward pass through PSABlock.

        Args:
            x (torch.Tensor): Input tensor.

        Returns:
            (torch.Tensor): Output tensor after attention and feed-forward processing.
        """
        x = x + self.attn(x) if self.add else self.attn(x)
        x = x + self.ffn(x) if self.add else self.ffn(x)
        return x

class C3k_ScConv(C3):
    """C3k is a CSP bottleneck module with customizable kernel sizes for feature extraction in neural networks."""

    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5, k=3):
        """Initializes the C3k module with specified channels, number of layers, and configurations."""
        super().__init__(c1, c2, n, shortcut, g, e)
        c_ = int(c2 * e)  # hidden channels
        # self.m = nn.Sequential(*(RepBottleneck(c_, c_, shortcut, g, k=(k, k), e=1.0) for _ in range(n)))
        self.m = nn.Sequential(*(Bottleneck_ScConv(c_, c_, shortcut, g, k=(k, k), e=1.0) for _ in range(n)))


class C3k2_ScConv(C2f):
    """Faster Implementation of CSP Bottleneck with 2 convolutions."""

    def __init__(
        self,
        c1: int,
        c2: int,
        n: int = 1,
        c3k: bool = False,
        e: float = 0.5,
        attn: bool = False,
        g: int = 1,
        shortcut: bool = True,
    ):
        """Initialize C3k2 module.

        Args:
            c1 (int): Input channels.
            c2 (int): Output channels.
            n (int): Number of blocks.
            c3k (bool): Whether to use C3k blocks.
            e (float): Expansion ratio.
            attn (bool): Whether to use attention blocks.
            g (int): Groups for convolutions.
            shortcut (bool): Whether to use shortcut connections.
        """
        super().__init__(c1, c2, n, shortcut, g, e)
        self.m = nn.ModuleList(
            nn.Sequential(
                Bottleneck_ScConv(self.c, self.c, shortcut, g),
                PSABlock(self.c, attn_ratio=0.5, num_heads=max(self.c // 64, 1)),
            )
            if attn
            else C3k_ScConv(self.c, self.c, 2, shortcut, g)
            if c3k
            else Bottleneck_ScConv(self.c, self.c, shortcut, g)
            for _ in range(n)
        )

if __name__ == "__main__":
    # Generate a sample input
    image_size = (1, 64, 240, 240)
    image = torch.rand(*image_size)

    # Model
    model = ScConv(64)
    out = model(image)
    print(out.size())

4. Step-by-Step: Adding the Mechanism

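If the steps below are unfamiliar or you would rather skip the manual work, you can contact the author for the source code of a project with every mechanism in this column already added, ready to train.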

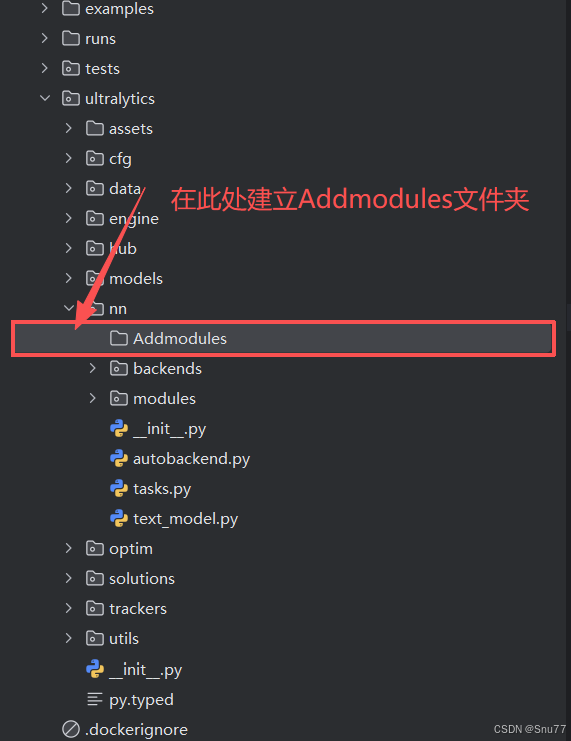

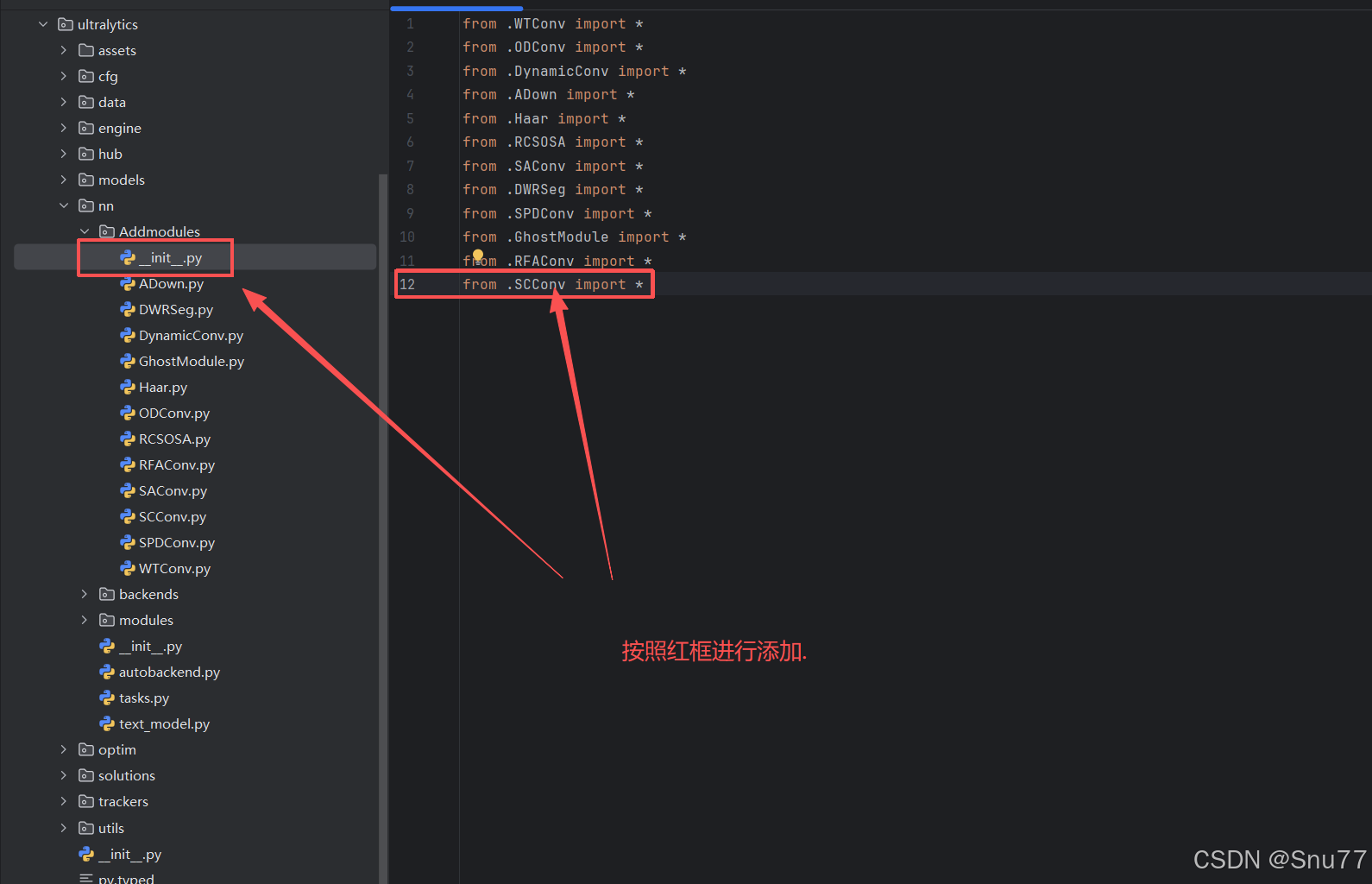

4.1 Modification 1

First, create a directory: under the ultralytics/nn folder, create a new folder named 'Addmodules'.

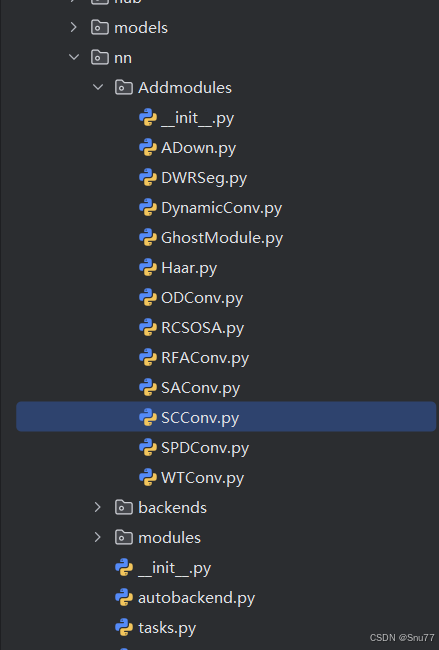

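4.2 Modification 2

Then create a new .py file inside the Addmodules folder and paste in the core code from Section 3.

4.3 Modification 3

Next, create another file in the same directory named '__init__.py' and import our file inside it, as shown in the figure below.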

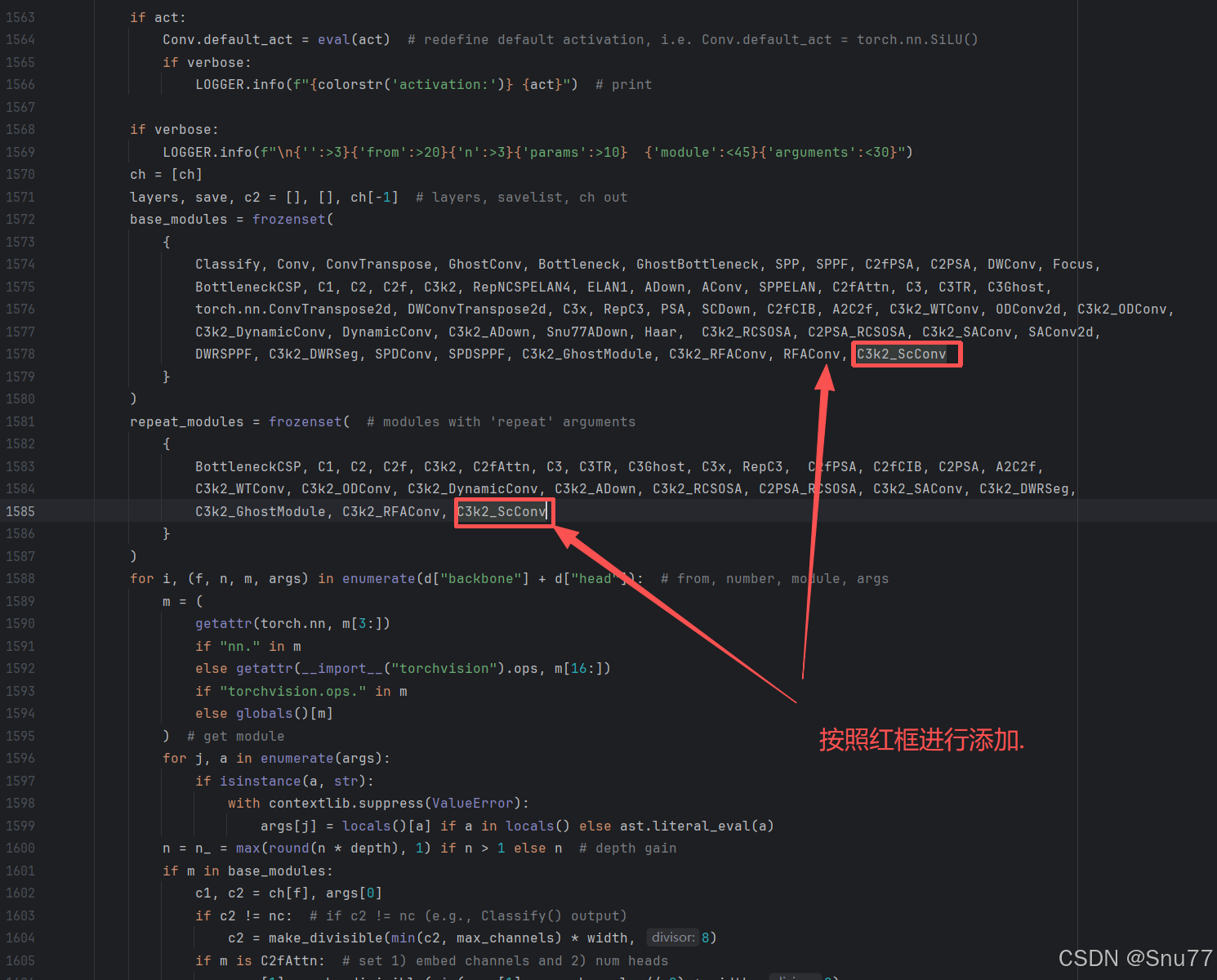
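The screenshot is the reference for this step. As a text-only sketch of the same import pattern, the script below builds a throwaway package in a temporary directory and shows what a one-line `__init__.py` re-exports. The file name `scconv_module.py` and the stub classes are assumptions made for this demonstration; substitute the file name you chose in 4.2.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Build a throwaway package that mimics ultralytics/nn/Addmodules (layout is an assumption).
root = Path(tempfile.mkdtemp())
pkg = root / "Addmodules"
pkg.mkdir()

# Stand-in for the file holding the Section 3 code; the name scconv_module.py is hypothetical.
(pkg / "scconv_module.py").write_text(
    "__all__ = ['ScConv', 'C3k2_ScConv']\n"
    "class ScConv: pass\n"
    "class C3k2_ScConv: pass\n"
    "class Bottleneck_ScConv: pass  # not listed in __all__, so not re-exported\n"
)

# The single line __init__.py needs: re-export whatever the module lists in __all__.
(pkg / "__init__.py").write_text("from .scconv_module import *\n")

sys.path.insert(0, str(root))
addmodules = importlib.import_module("Addmodules")
candidates = ("ScConv", "C3k2_ScConv", "Bottleneck_ScConv")
exported = sorted(name for name in candidates if hasattr(addmodules, name))
print(exported)
```

Only the names listed in `__all__` become visible on the package, which is why the core code declares `__all__ = ['ScConv', 'C3k2_ScConv']`: those are the two modules the yaml files need to reference.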

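4.4 Modification 4

Then open 'ultralytics/nn/tasks.py' and import and register our module there (this only needs to be done once; if you use my other improvement mechanisms, you do not repeat this step).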

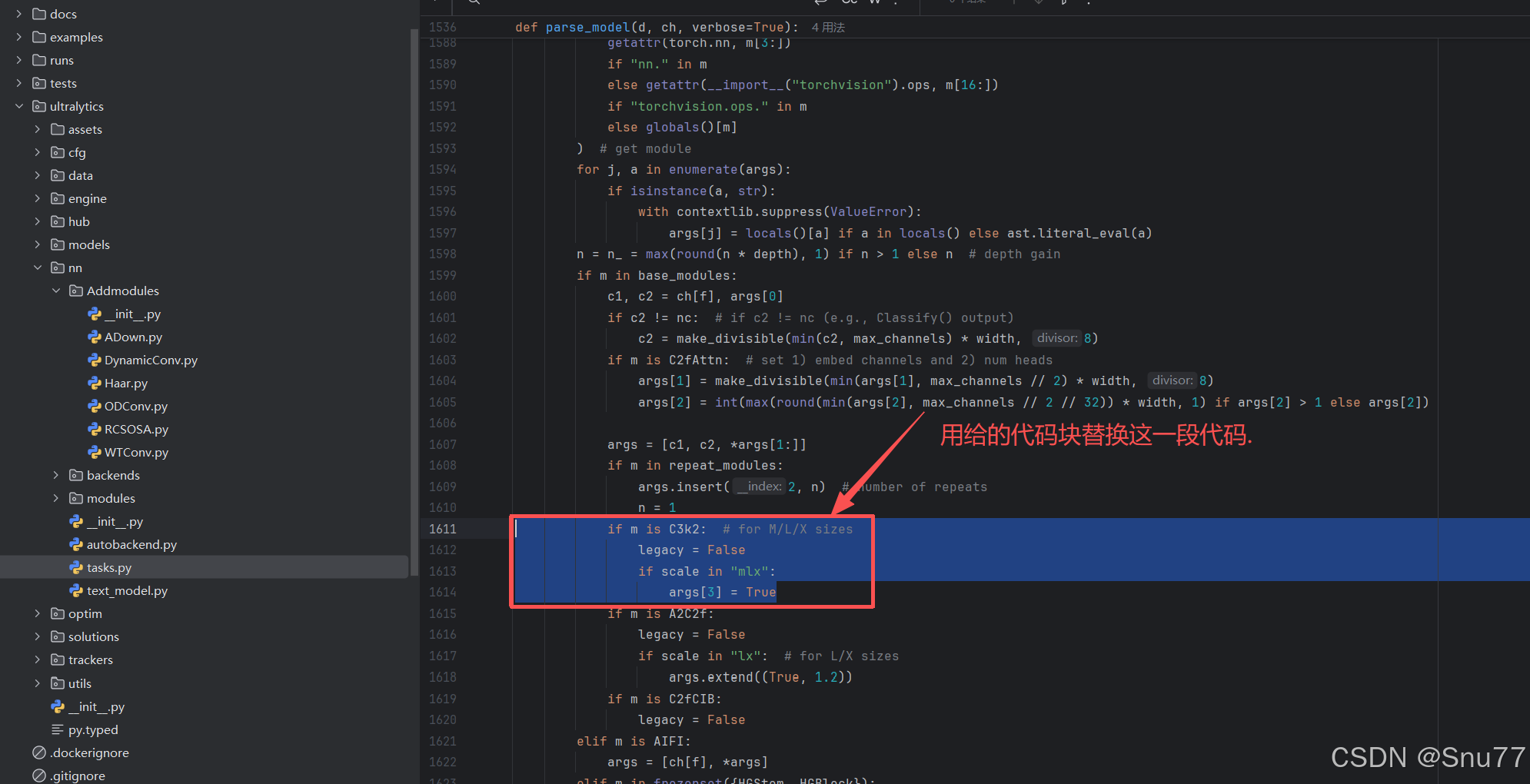

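4.5 Modification 5

Inside the parse_model function in 'ultralytics/nn/tasks.py' (around line 1500+), add the module at the position shown in the figure. This takes some judgment; if you are unsure, contact the author for a video tutorial.

4.6 Modification 6

Inside the parse_model function in 'ultralytics/nn/tasks.py' (around line 1600+), replace the code at the position shown in the figure. If you skip this and every C3k2 in your yaml file has been renamed, the detection head falls back to the legacy v8 head and the parameter count rises sharply. Training still runs, which is why many people miss this step.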

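if "C3k2" in getattr(m, "__name__", str(m)):
    legacy = False
    if scale in "mlx":
        args[3] = True

That completes the modifications. You can copy the yaml files below and run them. For more usage options, contact the author for the usage video; this article lists only the common ones.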
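A note on the condition used in 4.6: it matches on the class name as a substring, so any renamed variant whose name contains "C3k2" is treated like the original. The sketch below isolates the two checks with stub classes; `uses_c3k2_rules` and `forces_c3k` are hypothetical names introduced here, and the stubs stand in for the real modules.

```python
class C3k2:            # stub standing in for the stock module
    pass


class C3k2_ScConv:     # stub standing in for the module from Section 3
    pass


class Conv:            # stub for an unrelated module
    pass


def uses_c3k2_rules(m):
    """Same predicate as in 4.6: substring match on the class name."""
    return "C3k2" in getattr(m, "__name__", str(m))


def forces_c3k(scale):
    """Same check as in 4.6: true for the m, l, and x scales."""
    return scale in "mlx"


if __name__ == "__main__":
    for cls in (C3k2, C3k2_ScConv, Conv):
        print(cls.__name__, uses_c3k2_rules(cls))
    for scale in "nsmlx":
        print(scale, forces_c3k(scale))
```

Because `scale in "mlx"` is a substring test on a string, it is only meaningful when `scale` is a single letter, which is the case for the scale keys in the yaml files.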

5. Training

5.1 yaml Files

5.1.1 yaml File 1

Training info: YOLO26-C3k2-ScConv-1 summary: 297 layers, 2,435,148 parameters, 2,435,148 gradients, 5.7 GFLOPs

# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license
# Ultralytics YOLO26 object detection model with P3/8 - P5/32 outputs
# Model docs: https://docs.ultralytics.com/models/yolo26
# Task docs: https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
end2end: True # whether to use end-to-end mode
reg_max: 1 # DFL bins
scales: # model compound scaling constants, i.e. 'model=yolo26n.yaml' will call yolo26.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 260 layers, 2,572,280 parameters, 2,572,280 gradients, 6.1 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 260 layers, 10,009,784 parameters, 10,009,784 gradients, 22.8 GFLOPs
  m: [0.50, 1.00, 512] # summary: 280 layers, 21,896,248 parameters, 21,896,248 gradients, 75.4 GFLOPs
  l: [1.00, 1.00, 512] # summary: 392 layers, 26,299,704 parameters, 26,299,704 gradients, 93.8 GFLOPs
  x: [1.00, 1.50, 512] # summary: 392 layers, 58,993,368 parameters, 58,993,368 gradients, 209.5 GFLOPs

# YOLO26n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2_ScConv, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2_ScConv, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2_ScConv, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2_ScConv, [1024, True]]
  - [-1, 1, SPPF, [1024, 5, 3, True]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO26n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, True]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, True]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, True]] # 19 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 1, C3k2, [1024, True, 0.5, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)
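To see what width ScConv actually receives, it helps to apply the scale constants to one yaml row by hand. The sketch below reflects my understanding of how the depth, width, and max_channels values are applied when the model is built (repeats scaled and rounded, channels capped, scaled, and rounded up to a multiple of 8). Treat `scaled_layer` and `make_divisible` here as an approximation written for this article, not as Ultralytics code.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def scaled_layer(repeats, out_channels, depth, width, max_channels):
    """Apply the scale constants to one yaml row (hypothetical helper)."""
    n = max(round(repeats * depth), 1) if repeats > 1 else repeats
    c2 = make_divisible(min(out_channels, max_channels) * width, 8)
    return n, c2


# Row "[-1, 2, C3k2_ScConv, [256, False, 0.25]]" at scale n = [0.50, 0.25, 1024]
depth, width, max_channels = 0.50, 0.25, 1024
n, c2 = scaled_layer(2, 256, depth, width, max_channels)
hidden = int(c2 * 0.25)  # e = 0.25 from the row's args; this is self.c inside C3k2_ScConv
print(n, c2, hidden)
```

Under these assumptions the first C3k2_ScConv layer at scale n runs a single block with 64 output channels and a hidden width of 16, so ScConv sees 16 channels, which still satisfies the divisibility requirements of its GroupNorm and grouped convolution.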

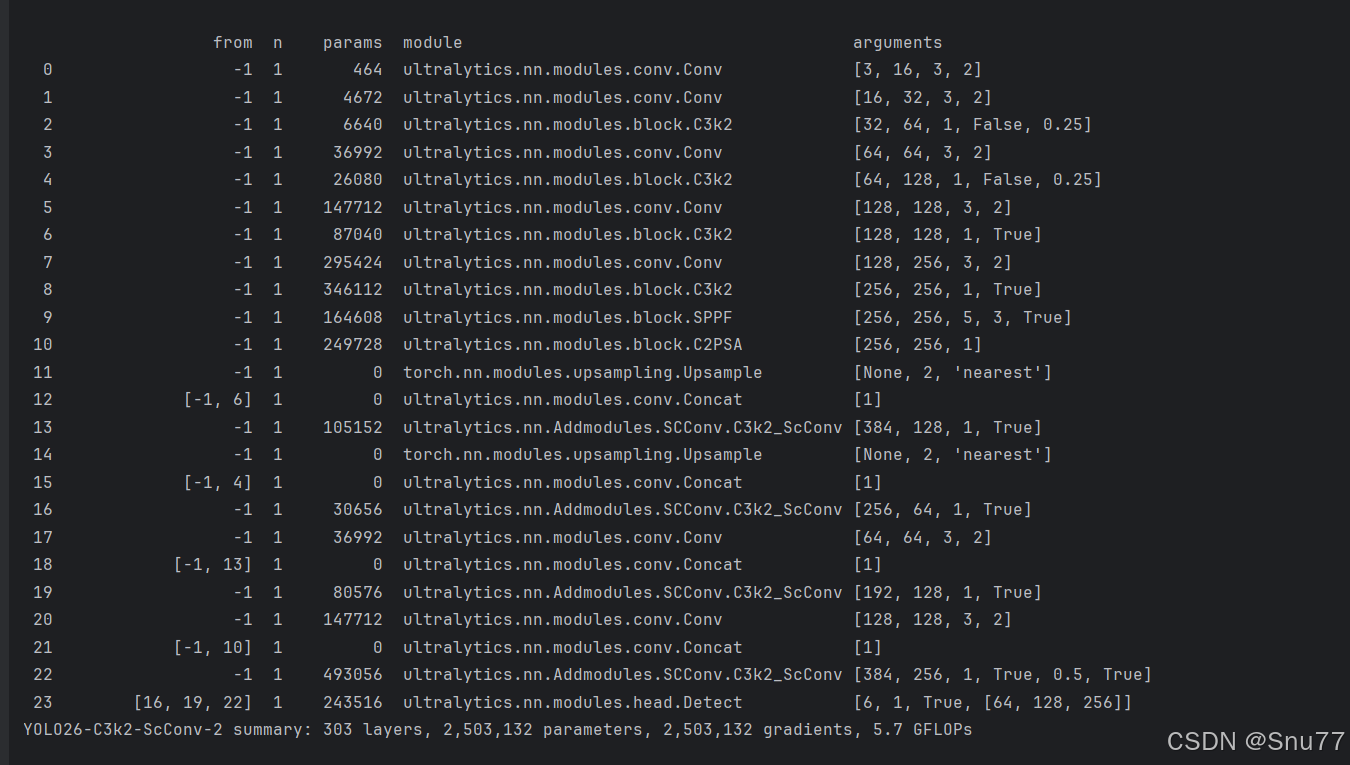

5.1.2 yaml File 2

Training info: YOLO26-C3k2-ScConv-2 summary: 303 layers, 2,503,132 parameters, 2,503,132 gradients, 5.7 GFLOPs

# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license
# Ultralytics YOLO26 object detection model with P3/8 - P5/32 outputs
# Model docs: https://docs.ultralytics.com/models/yolo26
# Task docs: https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
end2end: True # whether to use end-to-end mode
reg_max: 1 # DFL bins
scales: # model compound scaling constants, i.e. 'model=yolo26n.yaml' will call yolo26.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 260 layers, 2,572,280 parameters, 2,572,280 gradients, 6.1 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 260 layers, 10,009,784 parameters, 10,009,784 gradients, 22.8 GFLOPs
  m: [0.50, 1.00, 512] # summary: 280 layers, 21,896,248 parameters, 21,896,248 gradients, 75.4 GFLOPs
  l: [1.00, 1.00, 512] # summary: 392 layers, 26,299,704 parameters, 26,299,704 gradients, 93.8 GFLOPs
  x: [1.00, 1.50, 512] # summary: 392 layers, 58,993,368 parameters, 58,993,368 gradients, 209.5 GFLOPs

# YOLO26n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5, 3, True]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO26n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2_ScConv, [512, True]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2_ScConv, [256, True]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2_ScConv, [512, True]] # 19 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 1, C3k2_ScConv, [1024, True, 0.5, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)

5.1.3 yaml File 3

Training info: YOLO26-C3k2-ScConv-3 summary: 339 layers, 2,432,140 parameters, 2,432,140 gradients, 5.6 GFLOPs

# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license
# Ultralytics YOLO26 object detection model with P3/8 - P5/32 outputs
# Model docs: https://docs.ultralytics.com/models/yolo26
# Task docs: https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
end2end: True # whether to use end-to-end mode
reg_max: 1 # DFL bins
scales: # model compound scaling constants, i.e. 'model=yolo26n.yaml' will call yolo26.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 260 layers, 2,572,280 parameters, 2,572,280 gradients, 6.1 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 260 layers, 10,009,784 parameters, 10,009,784 gradients, 22.8 GFLOPs
  m: [0.50, 1.00, 512] # summary: 280 layers, 21,896,248 parameters, 21,896,248 gradients, 75.4 GFLOPs
  l: [1.00, 1.00, 512] # summary: 392 layers, 26,299,704 parameters, 26,299,704 gradients, 93.8 GFLOPs
  x: [1.00, 1.50, 512] # summary: 392 layers, 58,993,368 parameters, 58,993,368 gradients, 209.5 GFLOPs

# YOLO26n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2_ScConv, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2_ScConv, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2_ScConv, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2_ScConv, [1024, True]]
  - [-1, 1, SPPF, [1024, 5, 3, True]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO26n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2_ScConv, [512, True]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2_ScConv, [256, True]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2_ScConv, [512, True]] # 19 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 1, C3k2_ScConv, [1024, True, 0.5, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)

5.2 Training Code

Create a .py file, paste in the code below, and set your own file paths; it is then ready to run.

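import warnings
warnings.filterwarnings('ignore')
from ultralytics import YOLO

if __name__ == '__main__':
    model = YOLO('path to the model config file, i.e. where you saved the yaml from 5.1')
    # To switch model size, name the yaml file above e.g. yolo26s.yaml to use 26s.
    # Likewise, if a modified yaml is called yolo26-XXX.yaml and you want another size, pass yolo26l-XXX.yaml instead
    # (change the name passed to YOLO above, not the name of the config file itself)!
    # model.load('yolo26n.pt')  # whether to load pretrained weights; not recommended for research, as it makes accuracy gains hard to achieve
    model.train(
        data=r"path to the dataset file",
        # For other tasks, open 'ultralytics/cfg/default.yaml' and change task to detect, segment, classify, or pose
        cache=False,
        imgsz=640,
        epochs=20,
        single_cls=False,  # whether this is single-class detection
        batch=16,
        close_mosaic=0,
        workers=0,
        device='0',
        optimizer='MuSGD',  # using SGD/MuSGD
        # resume=,  # path to last.pt goes here
        amp=True,  # turn amp off if the training loss becomes NaN
        project='runs/train',
        name='exp',
    )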
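The comments in the training script say that the size letter in the file name passed to `YOLO(...)` selects the scale. A rough way to picture this is a pattern match on the name, sketched below. The regular expression and the helper name `guess_scale` are my own illustration of the idea, not the library's implementation.

```python
import re


def guess_scale(yaml_name):
    """Return the size letter that follows the version number, or None (hypothetical helper)."""
    match = re.search(r"yolov?\d+([nslmx])", yaml_name)
    return match.group(1) if match else None


if __name__ == "__main__":
    for name in ("yolo26s.yaml", "yolo26l-XXX.yaml", "yolo26-XXX.yaml"):
        print(name, guess_scale(name))
```

A name such as yolo26-XXX.yaml carries no size letter; my understanding is that the first entry under `scales` (n in the yaml files above) is then used.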

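5.3 Training Screenshot

6. Summary

That concludes the main content of this article. I recommend my YOLOv26 Effective Improvements column: it is newly opened, with an average quality score of 98. Going forward I will reproduce papers from the latest top conferences and also fill in some older improvement mechanisms. If this article helped you, subscribe to the column and follow for more updates.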

