Gemini Nano: An AI Model Built for On-Device Use

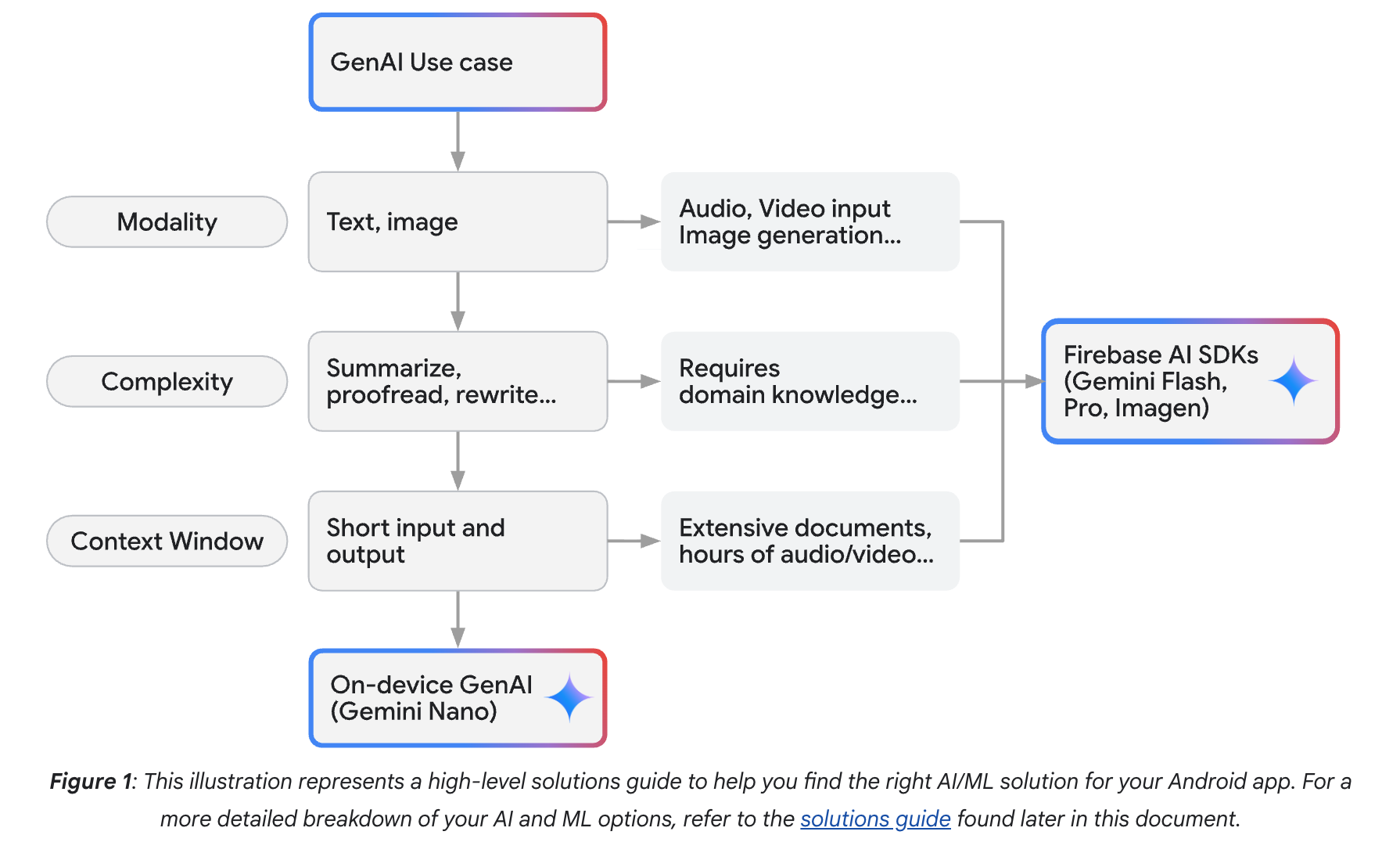

This guide is designed to help you integrate Google's generative artificial intelligence and machine learning (AI/ML) solutions into your applications. It provides guidance to help you navigate the various artificial intelligence and machine learning solutions available and choose the one that best fits your needs. The goal of this document is to help you determine which tool to use and why, by focusing on your needs and use cases.

To assist you in selecting the most suitable AI/ML solution for your specific requirements, this document includes a solutions guide. By answering a series of questions about your project's goals and constraints, the guide directs you towards the most appropriate tools and technologies.

To choose the best AI solution for your app, consider three factors: the type of data (text, images, audio, video), the complexity of the task (from simple summarization to complex tasks that need specialized knowledge), and the size of the data (short inputs versus large documents). Together, these factors help you decide between running Gemini Nano on the device and using Firebase's cloud-based AI (Gemini Flash, Gemini Pro, or Imagen).

Harness the power of on-device inference

When you're adding AI and ML features to your Android app, you can choose different ways to deliver them – either on the device or using the cloud.

On-device solutions like Gemini Nano process input data locally, so they deliver results at no additional cost, enhance user privacy, and work reliably offline. These benefits can be critical for use cases such as message summarization, which makes on-device processing a priority when you choose a solution.

Gemini Nano lets you run inference directly on an Android-powered device. If you're working with text, images, or audio, start with ML Kit's GenAI APIs for out-of-the-box solutions. These APIs are powered by Gemini Nano and fine-tuned for specific on-device tasks, and their higher-level interface and scalability make them an ideal path to production. They let you summarize, proofread, and rewrite text, generate image descriptions, and perform speech recognition.

To move beyond the fundamental use cases provided by the ML Kit GenAI APIs, consider Gemini Nano Experimental Access. Gemini Nano Experimental Access gives you more direct access to custom prompting with Gemini Nano.

For traditional machine learning tasks, you have the flexibility to implement your own custom models. We provide robust tools like ML Kit, MediaPipe, LiteRT, and Google Play delivery features to streamline your development process.

For applications that require highly specialized solutions, you can use your own custom model, such as Gemma or another model that is tailored to your specific use case. Run your model directly on the user's device with LiteRT, which provides pre-designed model architectures for optimized performance.

You can also consider building a hybrid solution by leveraging both on-device and cloud models.

Mobile apps commonly utilize local models for small text data, such as chat conversations or blog articles. However, for larger data sources (like PDFs) or when additional knowledge is required, a cloud-based solution with more powerful Gemini models may be necessary.
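The hybrid approach described above can be sketched as a small routing rule. This is an illustration only: `HybridRouter`, `Target`, and the 4,000-character cutoff are hypothetical names and values chosen for the example, not part of any Android, ML Kit, or Firebase SDK. The sketch assumes the app already knows the input length, whether the task needs knowledge beyond the input, and whether the device is online.

```java
// Hypothetical sketch: none of these names come from an Android or Firebase SDK.
final class HybridRouter {

    /** Where a request should run. */
    enum Target { ON_DEVICE, CLOUD, UNAVAILABLE }

    // Assumed cutoff for "small text data"; tune it for your model and devices.
    static final int MAX_ON_DEVICE_CHARS = 4_000;

    /**
     * Picks a target for one request.
     *
     * @param inputChars             length of the input text in characters
     * @param needsExternalKnowledge true if the task needs knowledge beyond the input
     * @param online                 true if the device currently has network access
     */
    static Target route(int inputChars, boolean needsExternalKnowledge, boolean online) {
        boolean fitsOnDevice =
                inputChars <= MAX_ON_DEVICE_CHARS && !needsExternalKnowledge;
        if (fitsOnDevice) {
            return Target.ON_DEVICE; // Small, self-contained input: stay local.
        }
        // Larger inputs or knowledge-heavy tasks need the cloud, which needs a network.
        return online ? Target.CLOUD : Target.UNAVAILABLE;
    }
}
```

For example, `HybridRouter.route(500, false, false)` keeps a short chat message on the device even when offline, while `HybridRouter.route(50_000, false, true)` sends a large document to the cloud. Returning `UNAVAILABLE` rather than silently falling back lets the app decide how to tell the user that the feature needs a connection.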

Integrate advanced Gemini models

Android developers can integrate Google's advanced generative AI capabilities, including the powerful Gemini Pro, Gemini Flash, and Imagen models, into their applications using the Firebase AI Logic SDK. This SDK is designed for larger data needs and provides expanded capabilities and adaptability by enabling access to these high-performing, multimodal AI models.

With the Firebase AI Logic SDK, developers can make client-side calls to Google's AI models with minimal effort. These models, such as Gemini Pro and Gemini Flash, run inference in the cloud and empower Android apps to process a variety of inputs including image, audio, video, and text. Gemini Pro excels at reasoning over complex problems and analyzing extensive data, while the Gemini Flash series offers superior speed and a context window large enough for most tasks.

When to use traditional machine learning

While generative AI is useful for creating and editing content like text, images, and code, many real-world problems are better solved using traditional Machine Learning (ML) techniques. These established methods excel at tasks involving prediction, classification, detection, and understanding patterns within existing data, often with greater efficiency, lower computational cost, and simpler implementation than generative models.

Traditional ML frameworks offer robust, optimized, and often more practical solutions for applications focused on analyzing input, identifying features, or making predictions based on learned patterns—rather than generating entirely new output. Tools like Google's ML Kit, LiteRT, and MediaPipe provide powerful capabilities tailored for these non-generative use cases, particularly in mobile and edge computing environments.

Kickstart your machine learning integration with ML Kit

ML Kit offers production-ready, mobile-optimized solutions for common machine learning tasks, requiring no prior ML expertise. This easy-to-use mobile SDK brings Google's ML expertise directly to your Android and iOS apps, allowing you to focus on feature development instead of model training and optimization. ML Kit provides prebuilt APIs and ready-to-use models for features like barcode scanning, text recognition (OCR), face detection, image labeling, object detection and tracking, language identification, and smart reply.

These models are typically optimized for on-device execution, ensuring low latency, offline functionality, and enhanced user privacy, because data often remains on the device. Choose ML Kit to quickly add established ML features to your mobile app when you don't need to train models or generate new output. It's ideal for efficiently enhancing apps with "smart" capabilities using Google's optimized models or by deploying custom LiteRT (formerly TensorFlow Lite) models.

Get started with our comprehensive guides and documentation at the ML Kit developer site.

Custom ML deployment with LiteRT

For greater control or to deploy your own ML models, use a custom ML stack built on LiteRT and Google Play services. This stack provides the essentials for deploying high-performance ML features. LiteRT is a toolkit optimized for running TensorFlow models efficiently on resource-constrained mobile, embedded, and edge devices, giving you the ability to run significantly smaller and faster models that consume less memory, power, and storage. The LiteRT runtime is highly optimized for various hardware accelerators (GPUs, DSPs, NPUs) on edge devices, enabling low-latency inference.

Choose LiteRT when you need to efficiently deploy trained ML models (commonly for classification, regression, or detection) on devices with limited computational power or battery life, such as smartphones, IoT devices, or microcontrollers. It's the preferred solution for deploying custom or standard predictive models at the edge where speed and resource conservation are paramount.

Learn more about ML deployment with LiteRT.

Build real-time perception into your apps with MediaPipe

MediaPipe provides open-source, cross-platform, and customizable machine learning solutions designed for live and streaming media. Benefit from optimized, prebuilt tools for complex tasks like hand tracking, pose estimation, face mesh detection, and object detection, all enabling high-performance, real-time interaction even on mobile devices.

MediaPipe's graph-based pipelines are highly customizable, allowing you to tailor solutions for Android, iOS, web, desktop, and backend applications. Choose MediaPipe when your application needs to understand and react instantly to live sensor data, especially video streams, for use cases such as gesture recognition, AR effects, fitness tracking, or avatar control—all focused on analyzing and interpreting input.

Explore the solutions and start building with MediaPipe.
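The guidance in this section can be condensed into a simple decision rule. The sketch below is one possible reading of it, not an official selection algorithm: `TraditionalMlPicker`, `Tool`, and the three boolean inputs are hypothetical names invented for the example. It also simplifies one point, since ML Kit can host custom models too; here any custom trained model is routed to LiteRT.

```java
// Hypothetical sketch of the selection guidance; not an official decision tool.
final class TraditionalMlPicker {

    /** The families of tools discussed in this section. */
    enum Tool { GENERATIVE_AI, MEDIAPIPE, LITERT, ML_KIT }

    /**
     * Suggests a starting point for a feature.
     *
     * @param generatesNewContent true if the feature must create new text, images, or code
     * @param liveMediaStream     true if the feature reacts to live sensor data such as video
     * @param customTrainedModel  true if you need to deploy a model you trained yourself
     */
    static Tool pick(boolean generatesNewContent,
                     boolean liveMediaStream,
                     boolean customTrainedModel) {
        if (generatesNewContent) {
            return Tool.GENERATIVE_AI; // Outside the scope of traditional ML.
        }
        if (liveMediaStream) {
            return Tool.MEDIAPIPE;     // Real-time perception on streaming media.
        }
        if (customTrainedModel) {
            return Tool.LITERT;        // Efficient deployment of your own model.
        }
        return Tool.ML_KIT;            // Prebuilt APIs for common tasks.
    }
}
```

Under these assumptions, a barcode scanner resolves to `ML_KIT`, a gesture-controlled AR effect resolves to `MEDIAPIPE`, and a bespoke on-device classifier resolves to `LITERT`. The order of the checks encodes a priority, so a live-video feature backed by a custom model still starts from MediaPipe in this sketch.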

Choose an approach: On-device or cloud

When integrating AI/ML features into your Android app, a crucial early decision is whether to perform processing directly on the user's device or in the cloud. Tools like ML Kit, Gemini Nano, and LiteRT (formerly TensorFlow Lite) enable on-device capabilities, while the Gemini cloud APIs with Firebase AI Logic can provide powerful cloud-based processing. Making the right choice depends on a variety of factors specific to your use case and user needs.

Consider the following aspects to guide your decision:

- Connectivity and offline functionality: If your application needs to function reliably without an internet connection, on-device solutions like Gemini Nano are ideal. Cloud-based processing, by its nature, requires network access.

- Data privacy: For use cases where user data must remain on the device for privacy reasons, on-device processing offers a distinct advantage by keeping sensitive information local.

- Model capabilities and task complexity: Cloud-based models are often significantly larger, more powerful, and updated more frequently, making them suitable for highly complex AI tasks or when processing larger inputs where higher output quality and extensive capabilities are paramount. Simpler tasks might be well-handled by on-device models.

- Cost considerations: Cloud APIs typically involve usage-based pricing, meaning costs can scale with the number of inferences or amount of data processed. On-device inference, while generally free from direct per-use charges, incurs development costs and can impact device resources like battery life and overall performance.

- Device resources: On-device models consume storage space on the user's device. It's also important to be aware of the device compatibility of specific on-device models, such as Gemini Nano, to ensure your target audience can use the features.

- Fine-tuning and customization: If you require the ability to fine-tune models for your specific use case, cloud-based solutions generally offer greater flexibility and more extensive options for customization.

- Cross-platform consistency: If consistent AI features across multiple platforms, including iOS, are critical, be mindful that some on-device solutions, like Gemini Nano, may not yet be available on all operating systems.
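One way to apply the checklist above is to separate requirements that force on-device processing from requirements that favor the cloud, and to flag the cases where both apply. The sketch below is a simplified illustration under that assumption: `DeploymentAdvisor`, `Advice`, and the parameter names are hypothetical, and cost and device-resource trade-offs are left out because they depend on figures specific to your app.

```java
// Hypothetical sketch of the checklist; cost and device resources are not modeled.
final class DeploymentAdvisor {

    /** Outcome of weighing the requirements against each other. */
    enum Advice { ON_DEVICE, CLOUD, EITHER, CONFLICT }

    static Advice advise(boolean mustWorkOffline,
                         boolean dataMustStayOnDevice,
                         boolean needsLargeModelOrInputs,
                         boolean needsFineTuning,
                         boolean needsCrossPlatformParity) {
        // Offline use and strict data locality can only be met on the device.
        boolean requiresDevice = mustWorkOffline || dataMustStayOnDevice;
        // Complex tasks, fine-tuning, and iOS parity point toward cloud models.
        boolean favorsCloud =
                needsLargeModelOrInputs || needsFineTuning || needsCrossPlatformParity;

        if (requiresDevice && favorsCloud) {
            return Advice.CONFLICT; // Requirements pull both ways; revisit scope.
        }
        if (requiresDevice) {
            return Advice.ON_DEVICE;
        }
        if (favorsCloud) {
            return Advice.CLOUD;
        }
        return Advice.EITHER;       // No deciding factor; compare cost and resources.
    }
}
```

A `CONFLICT` result is a prompt to rethink the feature, for instance by narrowing the task so that an on-device model can handle it, or by splitting it into a local step and a cloud step.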

By carefully considering your use case requirements and the available options, you can find the perfect AI/ML solution to enhance your Android app and deliver intelligent and personalized experiences to your users.

Gemini Nano lets you deliver rich generative AI experiences without needing a network connection or sending data to the cloud. On-device AI is a great solution for use cases where low cost and privacy safeguards are your primary concerns.

For on-device use-cases, you can take advantage of Google's Gemini Nano foundation model. Gemini Nano runs in Android's AICore system service, which leverages device hardware to enable low inference latency and keeps the model up-to-date.

ML Kit GenAI APIs

ML Kit's GenAI APIs harness the power of Gemini Nano to help your apps perform tasks. These APIs provide out-of-the-box quality for popular use cases through a high-level interface. They are built on top of AICore, an Android system service that runs GenAI foundation models on-device. Because data is processed locally, your app gains enhanced functionality and improved user privacy.

Note: The ML Kit GenAI API Additional Terms of Service apply to the use of the GenAI APIs. Developers are solely responsible for the safety of their API client and their app's user experience.

Key features

The ML Kit GenAI APIs support the following features:

- Prompt: Generate text content based on a custom text-only or multimodal prompt.

- Summarization: Summarize articles or conversations as a bulleted list.

- Proofreading: Proofread short chat messages.

- Rewriting: Rewrite short chat messages in different tones or styles.

- Image Description: Generate a short description of a given image.

- Speech Recognition: Transcribe spoken audio to text.

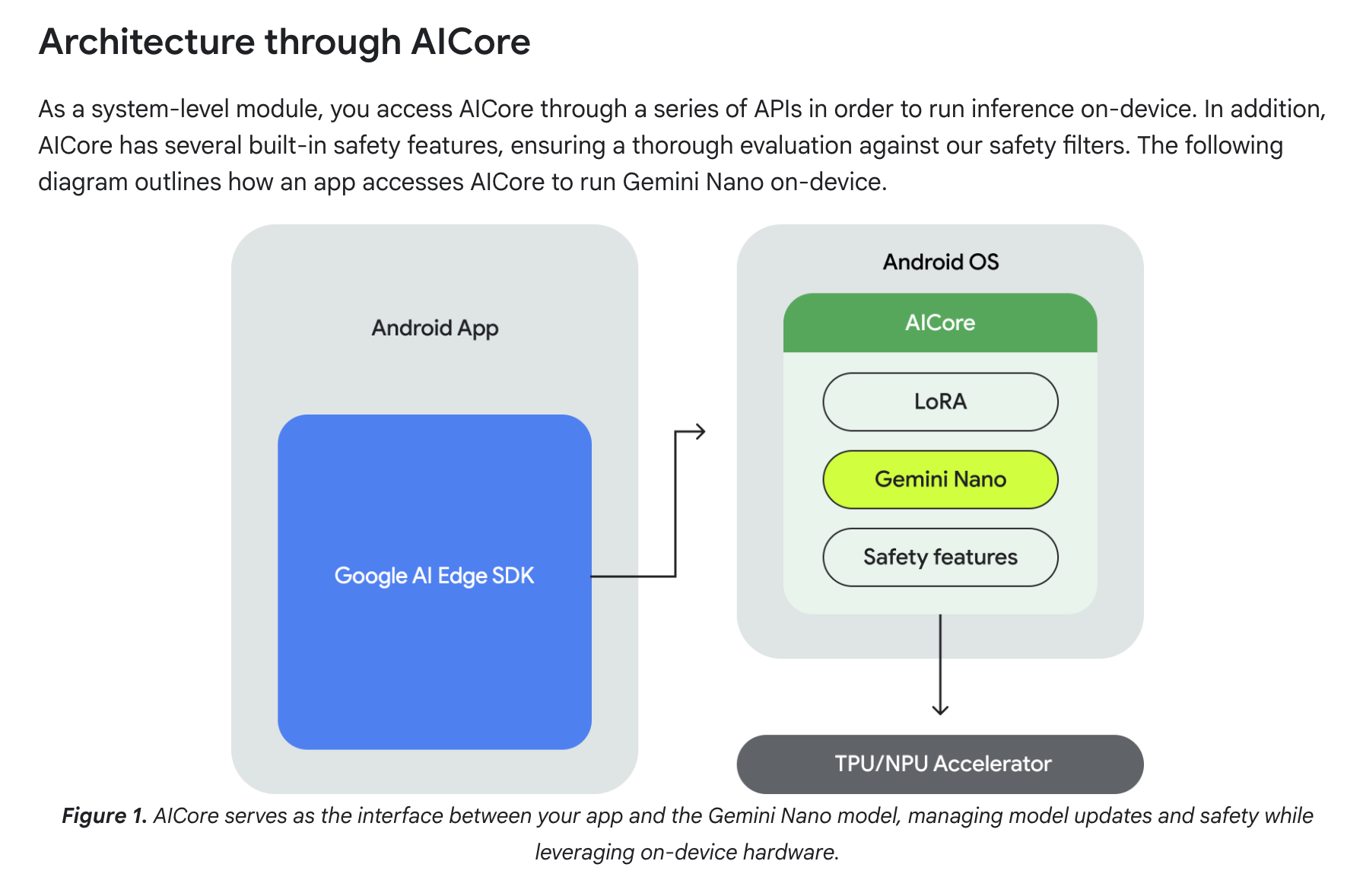

Architecture through AICore

AICore is a system-level module that you access through a series of APIs to run inference on-device. AICore also has several built-in safety features, so requests are thoroughly evaluated against our safety filters. The following diagram outlines how an app accesses AICore to run Gemini Nano on-device.

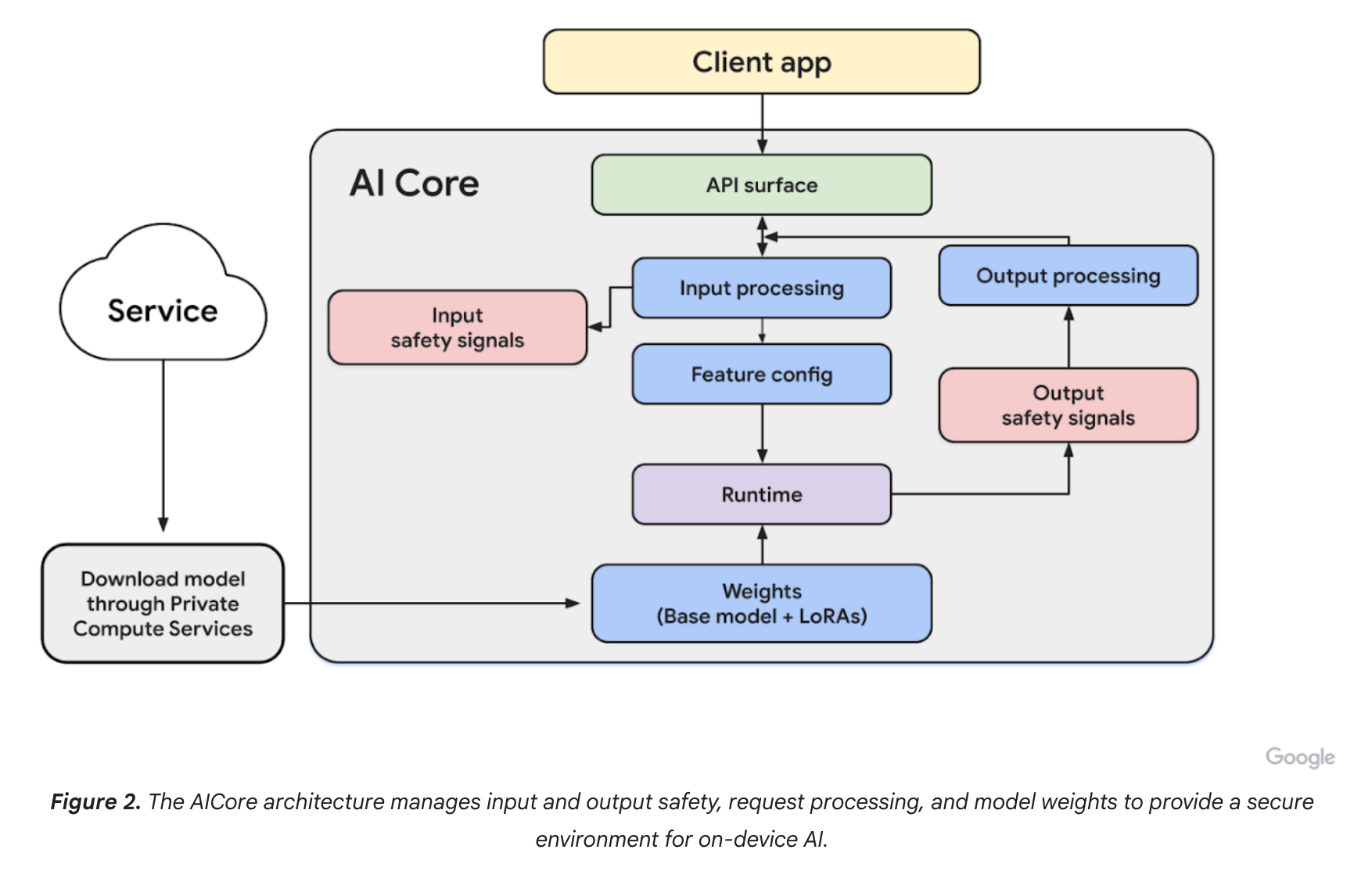

Keep user data private and secure

On-device generative AI executes prompts locally, eliminating server calls. While this removes network latency, inference speed depends on device hardware. This approach enhances privacy by keeping sensitive data on the device, enables offline functionality, and reduces inference costs.

AICore adheres to the Private Compute Core principles, with the following key characteristics:

- Restricted Package Binding: AICore is isolated from most other packages, with limited exceptions for specific system packages. Any modifications to this allowed list can only occur during a full Android OTA update.

- Indirect Internet Access: AICore does not have direct internet access. All internet requests, including model downloads, are routed through the open-source Private Compute Services companion APK. APIs within Private Compute Services must explicitly demonstrate their privacy-centric nature.

Additionally, AICore is built to isolate each request and doesn't store any record of the input data or the resulting outputs after processing them to protect user privacy. Read the blog post An Introduction to Privacy and Safety for Gemini Nano to learn more.

Benefits of accessing AI foundation models with AICore

AICore enables the Android OS to provide and manage AI foundation models. This significantly reduces the cost of using these large models in your app, principally due to the following:

- Ease of deployment: AICore manages the distribution of Gemini Nano and handles future updates. You don't need to worry about downloading or updating large models over the network, or about the impact on your app's disk and runtime memory budget.

- Accelerated inference: AICore leverages on-device hardware to accelerate inference. Your app gets the best performance on each device, and you don't need to worry about the underlying hardware interfaces.
