【端侧部署yolo系列】yolact部署至全志开发板

注:awnpu_model_zoo\docs里有详细的开发文档以及参考指南

本文是根据《NPU开发环境部署参考指南》,部署PC的ubuntu环境,使用Docker镜像环境为例进行说明。

如果想对部署流程进行更加详细的了解,可以参考《NPU_模型部署_开发指南》

重要说明:在awnpu_model_zoo内没有的模型,可参考awnpu_model_zoo\examples里面对应系列的模型进行修改部署,需要自行编写对应模型cpp前后处理代码以及修改相应的配置文件(如model_config.h、CMakeLists.txt)。如果遇到模型导出量化失败的情况,需要对模型进行相应的裁剪、或者采用不同的量化方式等。本文的yolact已经在板端经过了推理验证。

环境配置

关于参考之前部署yolox的文章【端侧部署yolo系列】yolox部署至全志开发板T736![]() https://blog.csdn.net/troyteng/article/details/155444386?spm=1011.2124.3001.6209

https://blog.csdn.net/troyteng/article/details/155444386?spm=1011.2124.3001.6209

下载镜像文件和AWNPU_Model_Zoo,创建自己的容器。

模型准备

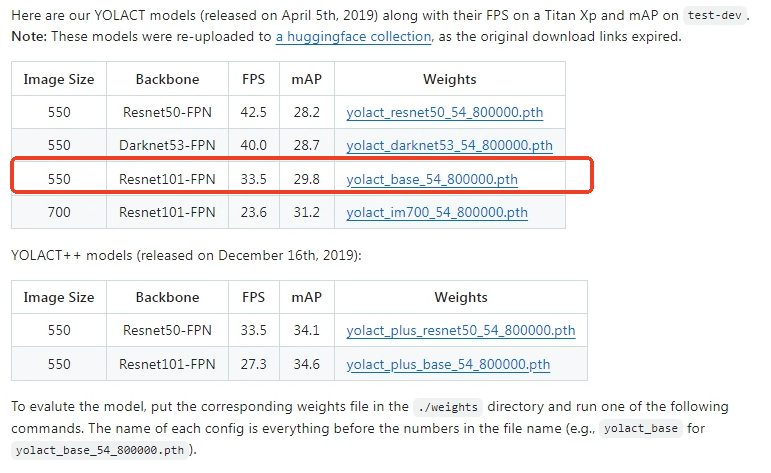

源码地址yolact,这里以yolact_base_54_800000.pth模型为例。

导出onnx模型

需要下载源码配置相应的环境,运行export_onnx.py导出onnx模型。

# 导出onnx模型

python export_onnx.py --trained_model=weights/yolact_base_54_800000.pth --config=yolact_base_configexport_onnx.py代码如下:

import torch

import torch.nn as nn

import argparse

import os

from yolact import Yolact

from data.config import cfg, set_cfg, Config

from collections import OrderedDict

def str2bool(v):

if v.lower() in ('yes', 'true', 't', 'y', '1'):

return True

elif v.lower() in ('no', 'false', 'f', 'n', '0'):

return False

else:

raise argparse.ArgumentTypeError('Boolean value expected.')

def parse_args():

parser = argparse.ArgumentParser(description='YOLACT ONNX Export')

parser.add_argument('--trained_model', default='weights/yolact_base_54_800000.pth', type=str,

help='Trained state_dict file path to open.')

parser.add_argument('--config', default='yolact_base_config', type=str,

help='The config object to use.')

parser.add_argument('--output', default=None, type=str,

help='Output ONNX file path. Default: same as trained_model with .onnx extension')

parser.add_argument('--batch_size', default=1, type=int,

help='Batch size for the model input')

parser.add_argument('--img_size', default=550, type=int,

help='Input image size (square)')

parser.add_argument('--opset_version', default=11, type=int,

help='ONNX opset version')

parser.add_argument('--simplify', default=True, type=str2bool,

help='Simplify the ONNX model using onnx-simplifier')

parser.add_argument('--dynamic_axes', default=False, type=str2bool,

help='Export with dynamic batch and image sizes')

return parser.parse_args()

class YolactONNXWrapper(nn.Module):

"""

Wrapper for YOLACT to make it ONNX-exportable.

This wraps the model to return only the essential outputs for inference.

"""

def __init__(self, yolact_model):

super().__init__()

self.model = yolact_model

# Disable the Detect layer for ONNX export (it has NMS which is hard to export)

# We'll export the raw predictions and do NMS separately

self.model.detect = None

def forward(self, x):

"""

Forward pass that returns raw predictions suitable for ONNX export.

Returns: loc, conf, mask, proto (if applicable)

"""

# Set image size for priors calculation

_, _, img_h, img_w = x.size()

cfg._tmp_img_h = img_h

cfg._tmp_img_w = img_w

# Backbone

outs = self.model.backbone(x)

# FPN

if cfg.fpn is not None:

outs = [outs[i] for i in cfg.backbone.selected_layers]

outs = self.model.fpn(outs)

# ProtoNet

proto_out = None

if cfg.mask_type == 1 and cfg.eval_mask_branch: # mask_type.lincomb == 1

proto_x = x if self.model.proto_src is None else outs[self.model.proto_src]

if self.model.num_grids > 0:

grids = self.model.grid.repeat(proto_x.size(0), 1, 1, 1)

proto_x = torch.cat([proto_x, grids], dim=1)

proto_out = self.model.proto_net(proto_x)

proto_out = cfg.mask_proto_prototype_activation(proto_out)

proto_out = proto_out.permute(0, 2, 3, 1).contiguous()

if cfg.mask_proto_bias:

bias_shape = [x for x in proto_out.size()]

bias_shape[-1] = 1

proto_out = torch.cat([proto_out, torch.ones(*bias_shape, device=proto_out.device)], -1)

# Prediction heads

pred_outs = {'loc': [], 'conf': [], 'mask': [], 'priors': []}

for idx, pred_layer in zip(self.model.selected_layers, self.model.prediction_layers):

pred_x = outs[idx]

if cfg.mask_type == 1 and cfg.mask_proto_prototypes_as_features: # lincomb

proto_downsampled = F.interpolate(proto_out.permute(0, 3, 1, 2),

size=outs[idx].size()[2:],

mode='bilinear',

align_corners=False)

pred_x = torch.cat([pred_x, proto_downsampled], dim=1)

p = pred_layer(pred_x)

for k, v in p.items():

if k in pred_outs:

pred_outs[k].append(v)

# Concatenate predictions from all layers

for k, v in pred_outs.items():

if len(v) > 0:

pred_outs[k] = torch.cat(v, -2)

# Apply activations

loc = pred_outs['loc']

conf = pred_outs['conf']

mask = pred_outs['mask']

# Apply softmax to conf

conf = torch.softmax(conf, dim=-1)

# Apply mask activation

if cfg.mask_type == 1: # lincomb

mask = cfg.mask_proto_coeff_activation(mask)

if proto_out is not None:

return loc, conf, mask, proto_out

else:

return loc, conf, mask

def export_to_onnx(args):

# Set configuration

if args.config is not None:

set_cfg(args.config)

print(f"Using config: {args.config}")

print(f"Number of classes: {cfg.num_classes}")

print(f"Input image size: {args.img_size}")

# Create model

print("Creating model...")

net = Yolact()

# Load weights

print(f"Loading weights from: {args.trained_model}")

if os.path.exists(args.trained_model):

net.load_weights(args.trained_model)

print("Weights loaded successfully!")

else:

print(f"Warning: Weight file not found at {args.trained_model}")

print("Exporting with random weights...")

net.eval()

# Wrap model for ONNX export

wrapped_model = YolactONNXWrapper(net)

wrapped_model.eval()

# Create dummy input

dummy_input = torch.randn(args.batch_size, 3, args.img_size, args.img_size)

# Determine output path

if args.output is None:

output_path = args.trained_model.replace('.pth', '.onnx')

else:

output_path = args.output

print(f"Exporting to: {output_path}")

# Define input/output names

input_names = ['input']

output_names = ['loc', 'conf', 'mask']

# Check if proto output exists

with torch.no_grad():

test_output = wrapped_model(dummy_input)

if len(test_output) == 4:

output_names.append('proto')

print("Model includes proto output for mask generation")

# Define dynamic axes

dynamic_axes = None

if args.dynamic_axes:

dynamic_axes = {

'input': {0: 'batch_size', 2: 'height', 3: 'width'},

'loc': {0: 'batch_size'},

'conf': {0: 'batch_size'},

'mask': {0: 'batch_size'},

}

if 'proto' in output_names:

dynamic_axes['proto'] = {0: 'batch_size'}

print("Using dynamic axes for batch and image sizes")

# Export to ONNX

try:

torch.onnx.export(

wrapped_model,

dummy_input,

output_path,

input_names=input_names,

output_names=output_names,

dynamic_axes=dynamic_axes,

opset_version=args.opset_version,

do_constant_folding=True,

verbose=False

)

print(f"ONNX model exported successfully to: {output_path}")

# Verify the model

try:

import onnx

onnx_model = onnx.load(output_path)

onnx.checker.check_model(onnx_model)

print("ONNX model validation passed!")

# Print model info

print("\nModel inputs:")

for input in onnx_model.graph.input:

print(f" {input.name}: {[dim.dim_value if dim.dim_value else dim.dim_param for dim in input.type.tensor_type.shape.dim]}")

print("\nModel outputs:")

for output in onnx_model.graph.output:

print(f" {output.name}: {[dim.dim_value if dim.dim_value else dim.dim_param for dim in output.type.tensor_type.shape.dim]}")

except ImportError:

print("onnx package not installed, skipping validation")

except Exception as e:

print(f"ONNX validation warning: {e}")

# Simplify model if requested

if args.simplify:

try:

import onnxsim

print("\nSimplifying ONNX model...")

onnx_model = onnx.load(output_path)

simplified_model, check = onnxsim.simplify(onnx_model)

if check:

onnx.save(simplified_model, output_path)

print("Model simplified successfully!")

else:

print("Model simplification check failed, keeping original model")

except ImportError:

print("onnx-simplifier not installed, skipping simplification")

print("Install with: pip install onnx-simplifier")

except Exception as e:

print(f"Simplification error: {e}")

# Test inference

try:

import onnxruntime as ort

print("\nTesting ONNX inference...")

ort_session = ort.InferenceSession(output_path)

# Run inference

onnx_input = dummy_input.numpy()

ort_inputs = {ort_session.get_inputs()[0].name: onnx_input}

ort_outputs = ort_session.run(None, ort_inputs)

print("ONNX inference test passed!")

for i, name in enumerate(output_names):

print(f" {name} shape: {ort_outputs[i].shape}")

except ImportError:

print("onnxruntime not installed, skipping inference test")

print("Install with: pip install onnxruntime")

except Exception as e:

print(f"Inference test error: {e}")

print(f"\nExport complete! Model saved to: {output_path}")

print("\nNote: This ONNX model outputs raw predictions (loc, conf, mask, proto).")

print("Post-processing (NMS, mask assembly) needs to be done separately.")

except Exception as e:

print(f"Export failed: {e}")

import traceback

traceback.print_exc()

def main():

args = parse_args()

export_to_onnx(args)

if __name__ == '__main__':

main()进入容器完成后续的操作。

模型简化

进入下载好的模型库文件awnpu_model_zoo/eamples/yolact/

# 运行onnx_extract.py对模型进行裁剪,生成yolact_base_54_800000_rt.onnx

python3 onnx_extract.py

# 运行推理

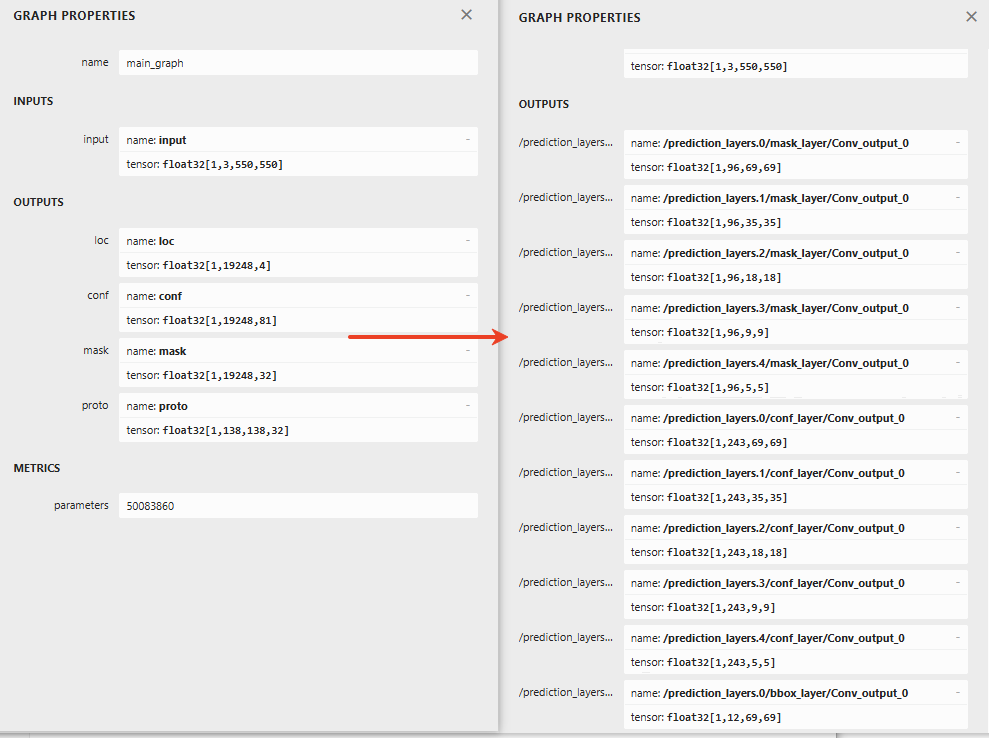

python3 inference_onnx.py --model=../yolact_base_54_800000_rt.onnx --image=../../model/bus.jpgyolact网络的后处理部分8bit量化会产生较大的精度损失,通过onnx_extract.py对模型剪枝,修改输出结构,同时将后处理结构移至外部使用cpu进行相应的处理。模型输出差异如下,左边是官方模型,右边是修改后的模型。这里有直接裁剪好的官方模型

模型配置

修改config_yml.py文件的相关参数配置:

# "database"

DATASET = '../../dataset/coco_12/dataset.txt'

DATASET_TYPE = "TEXT"

# mean, scale

MEAN = [123.68, 116.78, 103.94]

SCALE = [0.017123, 0.017507, 0.0174276]

# reverse_channel: True bgr, False rgb

REVERSE_CHANNEL = False

# add_preproc_node, True or False

ADD_PREPROC_NODE = True

# "preproc_type"

PREPROC_TYPE = "IMAGE_RGB"

# add_postproc_node, quant output -> float32 output

ADD_POSTPROC_NODE = True模型前后处理

后处理说明:yolact的特征图和先验框(锚框)分别有5个

feature_maps = [(69, 69), (35, 35), (18, 18), (9, 9), (5, 5)]

scales = [[24], [48], [96], [192], [384]]由于源码有一个bug,导致所有的先验框为正方形h = w;

在yolact.py的242行,作者注释:

# This is for backward compatability with a bug where I made everything square by accident

if cfg.backbone.use_square_anchors:

源码中还有重打分 (Rescoring) 机制以及Fast NMS(是 YOLACT 使用的改进版本,通过矩阵操作加速 ),本文采用的都是标准的nms。

配置文件model_config.h

配置文件model_config.h可以直接复制其他yolo官方系列的模型(yolov5、yolov8、yolo11、yolo26)。

前处理yolact_pre.cpp

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#include <iostream>

#include <stdio.h>

#include <stdint.h>

#include <string.h>

#include <math.h>

#include "model_config.h"

void get_input_data_yolact(const char* image_file, unsigned char* input_data, int input_rows, int input_cols)

{

cv::Mat sample = cv::imread(image_file, 1);

if (sample.empty()) {

fprintf(stderr, "cv::imread %s failed\n", image_file);

return;

}

// Convert BGR to RGB

cv::Mat img;

cv::cvtColor(sample, img, cv::COLOR_BGR2RGB);

// Resize to input size (550x550), no letterbox padding for YOLACT

cv::resize(img, img, cv::Size(input_cols, input_rows));

// Copy to output buffer (HWC format, uint8)

memcpy(input_data, img.data, input_rows * input_cols * 3 * sizeof(uint8_t));

}

uint8_t *yolact_preprocess(const char* imagepath, unsigned int *data_size)

{

int img_c = 3;

// YOLACT input size: 550x550

int input_rows = LETTERBOX_ROWS;

int input_cols = LETTERBOX_COLS;

int img_size = input_rows * input_cols * img_c;

*data_size = img_size * sizeof(uint8_t);

uint8_t *tensorData = NULL;

tensorData = (uint8_t *)malloc(1 * img_size * sizeof(uint8_t));

get_input_data_yolact(imagepath, tensorData, input_rows, input_cols);

return tensorData;

}

int yolact_preprocess_no_copy(const char* imagepath, void* buff_ptr, unsigned int buff_size)

{

int img_c = 3;

// YOLACT input size: 550x550

int input_rows = LETTERBOX_ROWS;

int input_cols = LETTERBOX_COLS;

int img_size = input_rows * input_cols * img_c;

unsigned int data_size = img_size * sizeof(uint8_t);

if (data_size > buff_size) {

printf("data size > buff size, please check code. \n");

return -1;

}

get_input_data_yolact(imagepath, (unsigned char*)buff_ptr, input_rows, input_cols);

return 0;

}

后处理yolact_post.cpp

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#include <iostream>

#include <stdio.h>

#include <vector>

#include <cmath>

#include <chrono>

#include <algorithm>

#include <cstring>

#include "model_config.h"

using namespace std;

// Forward declaration

void draw_yolact_detections(const cv::Mat& bgr, const std::vector<struct YOLACTDet>& dets);

// YOLACT detection result structure

struct YOLACTDet

{

cv::Rect_<float> rect;

int label;

float prob;

std::vector<float> mask_coeffs;

cv::Mat mask;

};

// Prior box parameters

static const int PRIOR_FEATURE_MAPS_H[5] = {69, 35, 18, 9, 5};

static const int PRIOR_FEATURE_MAPS_W[5] = {69, 35, 18, 9, 5};

static const int PRIOR_SCALES[5] = {24, 48, 96, 192, 384};

static const float PRIOR_ASPECT_RATIOS[3] = {1.0f, 0.7071067811865476f, 1.4142135623730951f}; // sqrt(0.5), sqrt(2)

static const float PRIOR_VARIANCES[2] = {0.1f, 0.2f};

// Global prior boxes cache

static std::vector<float> g_prior_boxes;

static bool g_priors_generated = false;

static inline float sigmoid(float x)

{

return static_cast<float>(1.f / (1.f + exp(-x)));

}

static inline float tanh_func(float x)

{

return static_cast<float>(tanh(x));

}

// Generate prior boxes (called once)

static void generate_priors()

{

if (g_priors_generated) return;

g_prior_boxes.clear();

int input_size = 550;

for (int k = 0; k < 5; k++) {

int conv_h = PRIOR_FEATURE_MAPS_H[k];

int conv_w = PRIOR_FEATURE_MAPS_W[k];

int scale = PRIOR_SCALES[k];

for (int j = 0; j < conv_h; j++) {

for (int i = 0; i < conv_w; i++) {

float cx = (i + 0.5f) / conv_w;

float cy = (j + 0.5f) / conv_h;

for (int ar_idx = 0; ar_idx < 3; ar_idx++) {

float ar = PRIOR_ASPECT_RATIOS[ar_idx];

float prior_w = scale * ar / input_size;

float prior_h = scale / ar / input_size;

// Use square anchors (as in original code)

prior_h = prior_w;

g_prior_boxes.push_back(cx);

g_prior_boxes.push_back(cy);

g_prior_boxes.push_back(prior_w);

g_prior_boxes.push_back(prior_h);

}

}

}

}

g_priors_generated = true;

}

// Decode box predictions

static void decode_boxes(const float* loc, float* boxes, int num_priors)

{

for (int i = 0; i < num_priors; i++) {

int p_idx = i * 4;

int l_idx = i * 4;

float prior_cx = g_prior_boxes[p_idx + 0];

float prior_cy = g_prior_boxes[p_idx + 1];

float prior_w = g_prior_boxes[p_idx + 2];

float prior_h = g_prior_boxes[p_idx + 3];

float loc_0 = loc[l_idx + 0];

float loc_1 = loc[l_idx + 1];

float loc_2 = loc[l_idx + 2];

float loc_3 = loc[l_idx + 3];

float box_cx = prior_cx + loc_0 * PRIOR_VARIANCES[0] * prior_w;

float box_cy = prior_cy + loc_1 * PRIOR_VARIANCES[0] * prior_h;

float box_w = prior_w * exp(loc_2 * PRIOR_VARIANCES[1]);

float box_h = prior_h * exp(loc_3 * PRIOR_VARIANCES[1]);

// Convert to [x1, y1, x2, y2] format

boxes[i * 4 + 0] = box_cx - box_w / 2.0f;

boxes[i * 4 + 1] = box_cy - box_h / 2.0f;

boxes[i * 4 + 2] = box_cx + box_w / 2.0f;

boxes[i * 4 + 3] = box_cy + box_h / 2.0f;

}

}

// Softmax function for confidence scores

static void softmax(const float* input, float* output, int n)

{

float max_val = input[0];

for (int i = 1; i < n; i++) {

if (input[i] > max_val) max_val = input[i];

}

float sum = 0.0f;

for (int i = 0; i < n; i++) {

output[i] = exp(input[i] - max_val);

sum += output[i];

}

for (int i = 0; i < n; i++) {

output[i] /= sum;

}

}

static inline float intersection_area(const YOLACTDet& a, const YOLACTDet& b)

{

cv::Rect_<float> inter = a.rect & b.rect;

return inter.area();

}

static void qsort_descent_inplace(std::vector<YOLACTDet>& objects, int left, int right)

{

int i = left;

int j = right;

float p = objects[(left + right) / 2].prob;

while (i <= j)

{

while (objects[i].prob > p)

i++;

while (objects[j].prob < p)

j--;

if (i <= j)

{

// swap

std::swap(objects[i], objects[j]);

i++;

j--;

}

}

#pragma omp parallel sections

{

#pragma omp section

{

if (left < j) qsort_descent_inplace(objects, left, j);

}

#pragma omp section

{

if (i < right) qsort_descent_inplace(objects, i, right);

}

}

}

static void qsort_descent_inplace(std::vector<YOLACTDet>& objects)

{

if (objects.empty())

return;

qsort_descent_inplace(objects, 0, objects.size() - 1);

}

static void nms_sorted_bboxes(const std::vector<YOLACTDet>& objects, std::vector<int>& picked, float nms_threshold, bool agnostic = false)

{

picked.clear();

const int n = objects.size();

std::vector<float> areas(n);

for (int i = 0; i < n; i++)

{

areas[i] = objects[i].rect.area();

}

for (int i = 0; i < n; i++)

{

const YOLACTDet& a = objects[i];

int keep = 1;

for (int j = 0; j < (int)picked.size(); j++)

{

const YOLACTDet& b = objects[picked[j]];

if (!agnostic && a.label != b.label)

continue;

// intersection over union

float inter_area = intersection_area(a, b);

float union_area = areas[i] + areas[picked[j]] - inter_area;

// float IoU = inter_area / union_area

if (inter_area / union_area > nms_threshold)

keep = 0;

}

if (keep)

picked.push_back(i);

}

}

// Assemble mask from prototypes and coefficients

static cv::Mat assemble_mask(const std::vector<float>& mask_coeffs, const float* proto_data, int proto_h, int proto_w, int mask_dim, const cv::Rect_<float>& box)

{

// mask = sigmoid(proto @ mask_coeffs.T)

cv::Mat mask(proto_h, proto_w, CV_32F);

for (int y = 0; y < proto_h; y++) {

for (int x = 0; x < proto_w; x++) {

float val = 0.0f;

for (int c = 0; c < mask_dim; c++) {

val += proto_data[(y * proto_w + x) * mask_dim + c] * mask_coeffs[c];

}

mask.at<float>(y, x) = sigmoid(val);

}

}

// Crop to box region

int x1 = std::max(0, (int)(box.x * proto_w));

int y1 = std::max(0, (int)(box.y * proto_h));

int x2 = std::min(proto_w, (int)((box.x + box.width) * proto_w));

int y2 = std::min(proto_h, (int)((box.y + box.height) * proto_h));

cv::Mat crop_mask = cv::Mat::zeros(proto_h, proto_w, CV_32F);

if (x2 > x1 && y2 > y1) {

cv::Rect roi(x1, y1, x2 - x1, y2 - y1);

cv::Mat(crop_mask, roi) = 1.0f;

mask = mask.mul(crop_mask);

}

return mask;

}

// Main post-processing function

int yolact_postprocess(const char* imagepath, float** output, int output_cnt)

{

std::chrono::steady_clock::time_point Tbegin, Tend;

Tbegin = std::chrono::steady_clock::now();

cv::Mat orig_img = cv::imread(imagepath, 1);

if (orig_img.empty()) {

fprintf(stderr, "cv::imread %s failed\n", imagepath);

return -1;

}

int orig_h = orig_img.rows;

int orig_w = orig_img.cols;

// Generate priors (first time only)

generate_priors();

// Model output structure (16 outputs):

// outputs[0-4]: mask coefficients [1, 96, H, W] (5 layers)

// outputs[5-9]: confidence scores [1, 243, H, W] (5 layers)

// outputs[10-14]: bbox predictions [1, 12, H, W] (5 layers)

// outputs[15]: mask prototypes [1, 138, 138, 32]

if (output_cnt < 16) {

fprintf(stderr, "Error: expected 16 outputs, got %d\n", output_cnt);

return -1;

}

// Process each feature map layer

std::vector<float> all_locs;

std::vector<float> all_confs;

std::vector<float> all_masks;

for (int layer = 0; layer < 5; layer++) {

int h = PRIOR_FEATURE_MAPS_H[layer];

int w = PRIOR_FEATURE_MAPS_W[layer];

int num_anchors = h * w * 3;

// Get output pointers

float* mask_data = output[layer];

float* conf_data = output[5 + layer];

float* bbox_data = output[10 + layer];

// Process bbox (shape: [1, 12, h, w] -> 12 = 3*4)

// Transpose from NCHW to NHWC

for (int y = 0; y < h; y++) {

for (int x = 0; x < w; x++) {

for (int a = 0; a < 3; a++) {

for (int c = 0; c < 4; c++) {

// NCHW: [0, a*4+c, y, x] -> NHWC

int nchw_idx = ((a * 4 + c) * h + y) * w + x;

all_locs.push_back(bbox_data[nchw_idx]);

}

}

}

}

// Process confidence (shape: [1, 243, h, w] -> 243 = 3*81)

for (int y = 0; y < h; y++) {

for (int x = 0; x < w; x++) {

for (int a = 0; a < 3; a++) {

float conf_raw[81];

float conf_softmax[81];

// Read 81 class scores

for (int c = 0; c < 81; c++) {

int nchw_idx = ((a * 81 + c) * h + y) * w + x;

conf_raw[c] = conf_data[nchw_idx];

}

// Apply softmax

softmax(conf_raw, conf_softmax, 81);

// Store (skip background class 0)

for (int c = 1; c < 81; c++) {

all_confs.push_back(conf_softmax[c]);

}

}

}

}

// Process mask coefficients (shape: [1, 96, h, w] -> 96 = 3*32)

for (int y = 0; y < h; y++) {

for (int x = 0; x < w; x++) {

for (int a = 0; a < 3; a++) {

for (int c = 0; c < 32; c++) {

int nchw_idx = ((a * 32 + c) * h + y) * w + x;

// Apply tanh activation

all_masks.push_back(tanh_func(mask_data[nchw_idx]));

}

}

}

}

}

// Decode boxes

int num_priors = all_locs.size() / 4;

std::vector<float> boxes(num_priors * 4);

decode_boxes(all_locs.data(), boxes.data(), num_priors);

// Filter by confidence threshold

float conf_thresh = CONF_THRESH; // Use fixed threshold

std::vector<YOLACTDet> detections;

for (int i = 0; i < num_priors; i++) {

// Find max class score

float max_score = 0.0f;

int max_class = 0;

for (int c = 0; c < 80; c++) {

float score = all_confs[i * 80 + c];

if (score > max_score) {

max_score = score;

max_class = c;

}

}

if (max_score > conf_thresh) {

YOLACTDet det;

det.rect.x = boxes[i * 4 + 0];

det.rect.y = boxes[i * 4 + 1];

det.rect.width = boxes[i * 4 + 2] - boxes[i * 4 + 0];

det.rect.height = boxes[i * 4 + 3] - boxes[i * 4 + 1];

det.label = max_class;

det.prob = max_score;

// Store mask coefficients

det.mask_coeffs.resize(32);

for (int c = 0; c < 32; c++) {

det.mask_coeffs[c] = all_masks[i * 32 + c];

}

detections.push_back(det);

}

}

// Use yolov5 style NMS

qsort_descent_inplace(detections);

std::vector<int> picked;

nms_sorted_bboxes(detections, picked, NMS_THRESHOLD);

int count = picked.size();

std::vector<YOLACTDet> filtered_dets(count);

for (int i = 0; i < count; i++)

{

filtered_dets[i] = detections[picked[i]];

}

// Keep top MAX_DETECTIONS

int max_dets = MAX_DETECTIONS;

if ((int)filtered_dets.size() > max_dets) {

filtered_dets.resize(max_dets);

}

// Get proto data

float* proto_data = output[15];

int proto_h = PROTO_H;

int proto_w = PROTO_W;

int mask_dim = MASK_DIM;

// Assemble masks

for (auto& det : filtered_dets) {

det.mask = assemble_mask(det.mask_coeffs, proto_data, proto_h, proto_w, mask_dim, det.rect);

// Resize mask to original image size

cv::resize(det.mask, det.mask, cv::Size(orig_w, orig_h));

// Apply threshold

det.mask = (det.mask > MASK_THRESHOLD);

// Scale box to original image

det.rect.x *= orig_w;

det.rect.y *= orig_h;

det.rect.width *= orig_w;

det.rect.height *= orig_h;

// Clip to image bounds

det.rect.x = std::max(0.0f, std::min(det.rect.x, (float)orig_w - 1));

det.rect.y = std::max(0.0f, std::min(det.rect.y, (float)orig_h - 1));

det.rect.width = std::min(det.rect.width, orig_w - det.rect.x);

det.rect.height = std::min(det.rect.height, orig_h - det.rect.y);

}

Tend = std::chrono::steady_clock::now();

float f = std::chrono::duration_cast<std::chrono::milliseconds>(Tend - Tbegin).count();

std::cout << "post process time : " << f << " ms" << std::endl;

// Draw results

draw_yolact_detections(orig_img, filtered_dets);

return 0;

}

// Draw detection results with masks

void draw_yolact_detections(const cv::Mat& bgr, const std::vector<YOLACTDet>& dets)

{

cv::Mat result = bgr.clone();

fprintf(stderr, "detection num: %zu\n", dets.size());

for (size_t i = 0; i < dets.size(); i++) {

const YOLACTDet& det = dets[i];

int color_idx = (i * 5) % 19;

cv::Scalar color(g_colors[color_idx][0], g_colors[color_idx][1], g_colors[color_idx][2]);

// Draw mask overlay

cv::Mat mask_colored = cv::Mat::zeros(result.size(), result.type());

mask_colored.setTo(color, det.mask);

cv::addWeighted(result, 1.0, mask_colored, 0.5, 0, result);

// Draw bounding box

cv::rectangle(result, det.rect, color, 1);

// Draw label

char text[256];

sprintf(text, "%s %.1f%%", g_classes_name[det.label].c_str(), det.prob * 100);

int baseline = 0;

cv::Size label_size = cv::getTextSize(text, cv::FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseline);

int x = det.rect.x;

int y = det.rect.y - label_size.height - baseline;

if (y < 0) y = 0;

if (x + label_size.width > result.cols) x = result.cols - label_size.width;

cv::rectangle(result, cv::Rect(cv::Point(x, y), cv::Size(label_size.width, label_size.height + baseline)),

cv::Scalar(255, 255, 255), -1);

cv::putText(result, text, cv::Point(x, y + label_size.height), cv::FONT_HERSHEY_SIMPLEX, 0.5,

cv::Scalar(0, 0, 0), 1);

fprintf(stderr, "%2d: %3.0f%%, [%4.0f, %4.0f, %4.0f, %4.0f], %s\n",

(int)i + 1, det.prob * 100,

det.rect.x, det.rect.y, det.rect.x + det.rect.width, det.rect.y + det.rect.height,

g_classes_name[det.label].c_str());

}

cv::imwrite("output_yolact.png", result);

}

主函数main()

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <sys/time.h>

#include "npulib.h"

#include "model_config.h"

/*-------------------------------------------

Macros and Variables

-------------------------------------------*/

extern uint8_t *yolact_preprocess(const char* imagepath, unsigned int *data_size);

extern int yolact_preprocess_no_copy(const char* imagepath, void* buff_ptr, unsigned int buff_size);

extern int yolact_postprocess(const char *imagepath, float **output, int output_cnt);

const char *usage =

"model_demo -nb modle_path -i input_path -l loop_run_count -m malloc_mbyte \n"

"-nb modle_path: the NBG file path.\n"

"-i input_path: the input file path.\n"

"-l loop_run_count: the number of loop run network.\n"

"-m malloc_mbyte: npu_unit init memory Mbytes.\n"

"-h : help\n"

"example: model_demo -nb model.nb -i input.jpg -l 10 -m 20 \n";

enum time_idx_e {

NPU_INIT = 0,

NETWORK_CREATE,

NETWORK_PREPARE,

NETWORK_PREPROCESS,

NETWORK_RUN,

NETWORK_LOOP,

TIME_IDX_MAX = 9

};

#if defined(__linux__)

#define TIME_SLOTS 10

static uint64_t time_begin[TIME_SLOTS];

static uint64_t time_end[TIME_SLOTS];

static uint64_t GetTime(void)

{

struct timeval time;

gettimeofday(&time, NULL);

return (uint64_t)(time.tv_usec + time.tv_sec * 1000000);

}

static void TimeBegin(int id)

{

time_begin[id] = GetTime();

}

static void TimeEnd(int id)

{

time_end[id] = GetTime();

}

static uint64_t TimeGet(int id)

{

return time_end[id] - time_begin[id];

}

#endif

int main(int argc, char** argv)

{

int status = 0;

int i = 0, k = 0, j = 0;

unsigned int count = 0;

long long total_infer_time = 0;

char *model_file = nullptr;

char *input_file = nullptr;

unsigned int loop_count = 1;

unsigned int malloc_mbyte = 10;

if (argc < 2) {

printf("%s\n", usage);

return -1;

}

for (i = 0; i< argc; i++) {

if (!strcmp(argv[i], "-nb")) {

model_file = argv[++i];

}

else if (!strcmp(argv[i], "-i")) {

input_file = argv[++i];

}

else if (!strcmp(argv[i], "-l")) {

loop_count = atoi(argv[++i]);

}

else if (!strcmp(argv[i], "-m")) {

malloc_mbyte = atoi(argv[++i]);

}

else if (!strcmp(argv[i], "-h")) {

printf("%s\n", usage);

return 0;

}

}

printf("model_file=%s, input=%s, loop_count=%d, malloc_mbyte=%d \n", model_file, input_file, loop_count, malloc_mbyte);

if (model_file == nullptr)

return -1;

/* NPU init*/

NpuUint npu_uint;

int ret = npu_uint.npu_init();

if (ret != 0) {

return -1;

}

NetworkItem yolact;

unsigned int network_id = 0;

status = yolact.network_create(model_file, network_id);

if (status != 0) {

printf("network %d create failed.\n", network_id);

return -1;

}

status = yolact.network_prepare();

if (status != 0) {

printf("network prepare fail, status=%d\n", status);

return -1;

}

TimeBegin(NETWORK_PREPROCESS);

#if 0

// input jpg file

void *file_data = nullptr;

unsigned int file_data_size = 0;

file_data = (void *)yolact_preprocess(input_file, &file_data_size);

status = yolact.network_load_input_buffer(file_data, file_data_size);

if (status != 0) {

printf("network load input file fail, status=%d\n", status);

}

if (file_data != nullptr) {

free(file_data);

file_data = nullptr;

}

#else

// input jpg file, no copy way

void *input_buffer_ptr = nullptr;

unsigned int input_buffer_size = 0;

yolact.get_network_input_buff_info(0, &input_buffer_ptr, &input_buffer_size);

printf("buffer ptr: %p, buffer size: %d \n", input_buffer_ptr, input_buffer_size);

yolact_preprocess_no_copy(input_file, input_buffer_ptr, input_buffer_size);

#endif

TimeEnd(NETWORK_PREPROCESS);

printf("feed input cost: %lu us.\n", (unsigned long)TimeGet(NETWORK_PREPROCESS));

// create yolact output buffer

int output_cnt = yolact.get_output_cnt(); // network output count (should be 16)

// printf("YOLACT output count: %d\n", output_cnt);

float **output_data = new float*[output_cnt]();

// for (int i = 0; i < output_cnt; i++) {

// output_data[i] = new float[yolact.m_output_data_len[i]];

// printf("output[%d] size: %d\n", i, yolact.m_output_data_len[i]);

// }

i = network_id;

/* run network */

TimeBegin(NETWORK_LOOP);

while (count < loop_count) {

count++;

printf("network: %d, loop count: %d\n", i, count);

status = yolact.network_input_output_set();

if (status != 0) {

printf("set network input/output %d failed.\n", i);

return -1;

}

#if defined (__linux__)

TimeBegin(NETWORK_RUN);

#endif

status = yolact.network_run();

if (status != 0) {

printf("fail to run network, status=%d, batchCount=%d\n", status, i);

return -2;

}

#if defined (__linux__)

TimeEnd(NETWORK_RUN);

printf("run time for this network %d: %lu us.\n", i, (unsigned long)TimeGet(NETWORK_RUN));

#endif

total_infer_time += (unsigned long)TimeGet(NETWORK_RUN);

yolact.get_output(output_data);

yolact_postprocess(input_file, output_data, output_cnt);

}

TimeEnd(NETWORK_LOOP);

if (loop_count > 1) {

printf("network: %d, this network run avg inference time=%d us, total avg cost: %d us\n", i,

(uint32_t)(total_infer_time / loop_count), (unsigned int)(TimeGet(NETWORK_LOOP) / loop_count));

}

// free output buffer

for (int i = 0; i < output_cnt; i++) {

delete[] output_data[i];

output_data[i] = nullptr;

}

if (output_data != nullptr)

delete[] output_data;

return ret;

}

模型转换

后续的一系列和其他模型一样,这里就直接给出转换的命令了,详细的说明可以参考yolox的部署,或者《NPU_模型部署_开发指南》

# using xxx_env.sh to create softlink

./convert_model_env.sh

# 导入

# pegasus_import.sh <model_name>

./pegasus_import.sh yolact_base_54_800000_rt

# 量化

# pegasus_quantize.sh <model_name> <quantize_type> <calibration_set_size>

./pegasus_quantize.sh yolact_base_54_800000_rt uint8 12

# 仿真(可选)

# pegasus_inference.sh <model_name> <quantize_type>

./pegasus_inference.sh yolact_base_54_800000_rt uint8

# 导出nb模型

# pegasus_export_ovx_nbg.sh <model_name> <quantize_type> <platform>

./pegasus_export_ovx_nbg.sh yolact_base_54_800000_rt uint8 t736

# 导出的模型文件存放在../model目录

# 例如 ../model/yolact_base_54_800000_rt_uint8_t736.nb编译、推理

这里以在Linux系统下进行编译推理,Android系统参考yolox的部署,这里直接给出命令行,根据自己的平台和系统进行修改就行了。

cd ../example/yolact/

./../build_linux.sh -t t736在examples目录下生成install目录,推送yolov5_seg_demo_linux_t736文件至板端,方式有多种,这里使用adb

adb push .\install\yolact_demo_linux_t736 /mnt/UDISK/进入板端相应的文件进行推理

chmod +x ./yolact_demo_t736

./yolact_demo_t736 -nb model/yolact_base_54_800000_rt_uint8_t736.nb -i model/bus.jpg输出结果

detection num: 4

1: 99%, [ 43, 404, 227, 919], person

2: 99%, [ 1, 254, 810, 776], bus

3: 42%, [ 661, 368, 810, 894], person

4: 42%, [ 219, 408, 351, 861], person

destory npu finished.

~NpuUint.

输出解析后保存的图片output_yolact.png

AtomGit 是由开放原子开源基金会联合 CSDN 等生态伙伴共同推出的新一代开源与人工智能协作平台。平台坚持“开放、中立、公益”的理念,把代码托管、模型共享、数据集托管、智能体开发体验和算力服务整合在一起,为开发者提供从开发、训练到部署的一站式体验。

更多推荐

已为社区贡献7条内容

已为社区贡献7条内容

所有评论(0)