[On-device YOLO deployment series] Deploying ppyoloe to an Allwinner development board

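Note: awnpu_model_zoo\docs contains detailed development documentation and reference guides.

This article follows 《NPU开发环境部署参考指南》 (the NPU development environment deployment reference guide) and uses an Ubuntu PC with the Docker image environment as its example setup.

For a more detailed look at the deployment flow, see 《NPU_模型部署_开发指南》 (the NPU model deployment development guide).

Important: for models that are not in awnpu_model_zoo, adapt the example of the corresponding model family under awnpu_model_zoo\examples. You need to write the C++ pre- and post-processing code for the model yourself and update the related configuration files (such as model_config.h and CMakeLists.txt). If model export or quantization fails, prune the model accordingly or try a different quantization method. The ppyoloe model in this article has been verified with inference on the board.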

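Environment setup

This model comes from the PaddleDetection repository of Baidu PaddlePaddle. To start the conversion from the official PaddlePaddle model, you need to set up a paddlepaddle environment. Alternatively, download the already converted ONNX model files directly: ppyoloe_s.onnx (not simplified, dynamic shape) or ppyoloe_s_4sim (simplified, fixed shape).

PaddlePaddle environment setup (optional)

Note: if you have set up a YOLO environment before, setting up PaddlePaddle should cause little trouble. See the PaddlePaddle installation documentation for details.

The environment here is based on Python 3.10 and CUDA 12.0. The requirements.txt is listed below; if a library turns out to be missing, simply install it.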

paddlepaddle-gpu==2.6.0

paddle2onnx==1.2.5

paddledet==0.0.0

onnx==1.17.0

onnxruntime==1.23.2

onnxoptimizer==0.3.13

onnxsim==0.4.36

opencv-python==4.5.5.64

numpy==1.26.4

pycocotools==2.0.11

Pillow==12.1.0

matplotlib==3.10.8

scikit-learn==1.7.2

scikit-image==0.25.2

scipy==1.15.3

pandas==2.3.3

tqdm==4.67.1

Cython==3.2.4

pyclipper==1.4.0

shapely==2.1.2

imgaug==0.4.0

PyYAML==6.0.3

requests==2.32.5

six==1.17.0

protobuf==6.33.4

Model conversion (PaddlePaddle to ONNX)

cd PaddleDetection

# First export the Paddle inference model (model.pdmodel and model.pdiparams)
python \
  tools/export_model.py \
  -c configs/ppyoloe/ppyoloe_crn_s_300e_coco.yml \
  -o weights=https://paddledet.bj.bcebos.com/models/ppyoloe_crn_s_300e_coco.pdparams

# Then convert the exported model to ONNX
paddle2onnx \
  --model_dir output_inference/ppyoloe_crn_s_300e_coco \
  --model_filename model.pdmodel \
  --params_filename model.pdiparams \
  --opset_version 11 \
  --save_file output_inference/ppyoloe_crn_s_300e_coco/ppyoloe_s.onnx

The output is as follows:

[Paddle2ONNX] Start to parse PaddlePaddle model...

[Paddle2ONNX] Model file path: output_inference/ppyoloe_crn_s_300e_coco/model.pdmodel

[Paddle2ONNX] Parameters file path: output_inference/ppyoloe_crn_s_300e_coco/model.pdiparams

[Paddle2ONNX] Start to parsing Paddle model...

[Paddle2ONNX] Use opset_version = 11 for ONNX export.

[WARN][Paddle2ONNX] [multiclass_nms3: multiclass_nms3_0.tmp_1] [WARNING] Due to the operator multiclass_nms3, the exported ONNX model will only supports inference with input batch_size == 1.

[Paddle2ONNX] PaddlePaddle model is exported as ONNX format now.

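Allwinner environment setup (required)

For this step, refer to the earlier article on deploying yolox, "[On-device YOLO deployment series] Deploying yolox to the Allwinner T736 development board":

https://blog.csdn.net/troyteng/article/details/155444386?spm=1011.2124.3001.6209

Download the image file and AWNPU_Model_Zoo, create your own container, and enter the container to carry out the remaining steps.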

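Model preparation

Copy the model saved at output_inference/ppyoloe_crn_s_300e_coco/ppyoloe_s.onnx to awnpu_model_zoo/examples/ppyoloe/model. Alternatively, use the already converted ONNX model file ppyoloe_s.onnx (not simplified, dynamic shape) directly.

Create a ppyoloe directory, using the directories of the other YOLO models as a reference, then enter awnpu_model_zoo/examples/ppyoloe. The directory structure is as follows: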

├── CMakeLists.txt
├── convert_model
│   ├── config_yml.py            # model configuration file
│   ├── convert_model_env.sh     # toolchain generation script
│   └── python
│       ├── ppyoloe_infer.py     # inference with the simplified model
│       └── sub_model.py         # model simplification
├── main.cpp                     # main function
├── model
│   └── dog.jpg                  # image used for prediction
├── model_config.h
├── ppyoloe_postprocess.cpp      # model post-processing
└── ppyoloe_preprocess.cpp       # model pre-processing

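Model configuration

# Enter the working directory for model conversion

cd /workspace/awnpu_model_zoo/examples/ppyoloe/convert_model

# Check or modify the parameters in config_yml.py

vim config_yml.py

Modify the configuration file so that it reads as follows: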

#!/usr/bin/env python3

import os

import sys

from acuitylib.vsi_nn import VSInn

import numpy as np

# "database" allowed types: "TEXT, NPY, H5FS, SQLITE, LMDB, GENERATOR, ZIP"

DATASET = '../../dataset/coco_12/dataset.txt'

DATASET_TYPE = "TEXT"

# mean, scale

MEAN = [123.675, 116.28, 103.53]

SCALE = [0.01712475, 0.0175070, 0.01742919]

# reverse_channel: True bgr, False rgb

REVERSE_CHANNEL = False

# add_preproc_node, True or False

ADD_PREPROC_NODE = True

# "preproc_type" allowed types:"IMAGE_RGB, IMAGE_RGB888_PLANAR, IMAGE_RGB888_PLANAR_SEP, IMAGE_I420,

# IMAGE_NV12,IMAGE_NV21, IMAGE_YUV444, IMAGE_YUYV422, IMAGE_UYVY422, IMAGE_GRAY, IMAGE_BGRA, TENSOR"

PREPROC_TYPE = "IMAGE_RGB"

# add_postproc_node, quant output -> float32 output

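ADD_POSTPROC_NODE = True

DATASET: the dataset used for quantization calibration.

DATASET_TYPE: the dataset type, usually TEXT or NPY.

MEAN and SCALE: adjust these to match the normalization used by your model.

The normalization formula is normalized = (img / 255.0 - mean) / std, which is the same as (img - MEAN) * SCALE with

MEAN = mean * 255

SCALE = 1 / (std * 255)

REVERSE_CHANNEL: whether to swap the channel order. (If the image after pre-processing is RGB, True means RGB→BGR and False leaves it unchanged; set it according to the input the model expects.)

ADD_PREPROC_NODE: whether to enable the pre-processing node. False means the converted nb model does not perform channel conversion or normalization.

ADD_POSTPROC_NODE: whether to enable the post-processing node. True enables the quantization and dequantization handling, so the final output is float32.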
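The link between the 0..1 mean/std statistics and the pixel-domain MEAN and SCALE values in config_yml.py can be checked with a few lines of Python. This is only a minimal sketch: the helper names (to_pixel_domain, normalize_reference, normalize_config) are made up for illustration and are not part of the toolchain, and the mean/std values are the defaults used in ppyoloe_infer.py.

```python
# Per-channel statistics in the 0..1 range (defaults from ppyoloe_infer.py).
mean = [0.485, 0.456, 0.406]
std = [0.229, 0.224, 0.225]


def to_pixel_domain(mean, std):
    """Convert 0..1 mean/std into MEAN/SCALE that apply to 0..255 pixels."""
    MEAN = [m * 255.0 for m in mean]
    SCALE = [1.0 / (s * 255.0) for s in std]
    return MEAN, SCALE


def normalize_reference(pixel, m, s):
    """Normalization as written in the inference script."""
    return (pixel / 255.0 - m) / s


def normalize_config(pixel, MEAN_c, SCALE_c):
    """Normalization expressed with the config-style MEAN and SCALE."""
    return (pixel - MEAN_c) * SCALE_c


MEAN, SCALE = to_pixel_domain(mean, std)
print("MEAN  =", [round(v, 3) for v in MEAN])
print("SCALE =", [round(v, 8) for v in SCALE])

# Both forms give the same result for an arbitrary pixel value.
for c in range(3):
    a = normalize_reference(200.0, mean[c], std[c])
    b = normalize_config(200.0, MEAN[c], SCALE[c])
    assert abs(a - b) < 1e-9
```

The printed values should agree with the MEAN and SCALE entries in config_yml.py up to rounding.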

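Model simplification

Run sub_model.py to prune the model and change its output structure. The post-processing part is moved out of the model and handled on the CPU instead.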
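As a rough illustration of what the CPU-side post-processing has to do once it lives outside the model, the sketch below decodes a single prediction the same way decode_bboxes in ppyoloe_infer.py does: the anchor point is the cell centre scaled by the stride, and the four regression values are left/top/right/bottom distances in units of the stride. The function names decode_box and sigmoid and the sample numbers are illustrative only.

```python
import math


def sigmoid(x):
    """Map a raw class logit to a 0..1 score."""
    return 1.0 / (1.0 + math.exp(-x))


def decode_box(col, row, stride, ltrb):
    """Turn (left, top, right, bottom) distances into x1, y1, x2, y2.

    col/row index the grid cell; the anchor point is the cell centre.
    """
    cx = (col + 0.5) * stride
    cy = (row + 0.5) * stride
    left, top, right, bottom = ltrb
    return (
        cx - left * stride,
        cy - top * stride,
        cx + right * stride,
        cy + bottom * stride,
    )


# Cell (col=10, row=5) on the stride-32 head (20x20 grid for a 640x640 input).
print(decode_box(10, 5, 32, (1.0, 0.5, 2.0, 1.5)))  # (304.0, 160.0, 400.0, 224.0)
print(sigmoid(0.0))  # 0.5
```

The resulting coordinates are in the 640x640 letterboxed image; mapping them back to the original image and running NMS are separate steps.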

# 进入python目录,运行sub_model.py对模型进行裁剪,生成ppyoloe_s_4.onnx

cd python

python3 sub_model.py

# 运行推理

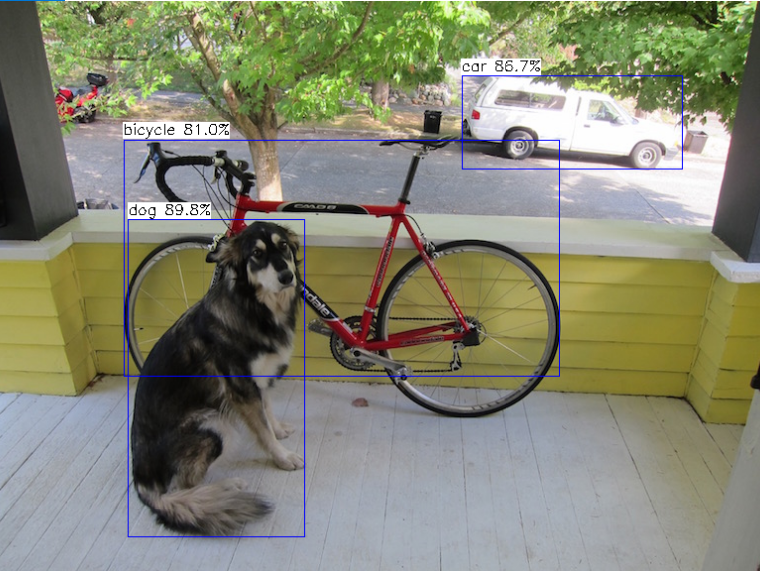

python3 ppyoloe_infer.py -m ../ppyoloe_s_4.onnx -i ../../model/dog.jpg -c 0.5推理结果如下:

detection num: 3

16: 88%, [ 131, 220, 309, 541], dog

2: 87%, [ 466, 74, 688, 170], car

1: 87%, [ 125, 117, 568, 419], bicycle

Result image saved to: result.jpg

模型简化脚本sub_model.py

#// https://onnx.ai/onnx/api/utils.html

import onnx

onnx.utils.extract_model('../ppyoloe_s.onnx', '../ppyoloe_s_4.onnx', ['image'],['conv2d_67.tmp_0',

'conv2d_74.tmp_0',

'conv2d_81.tmp_0',

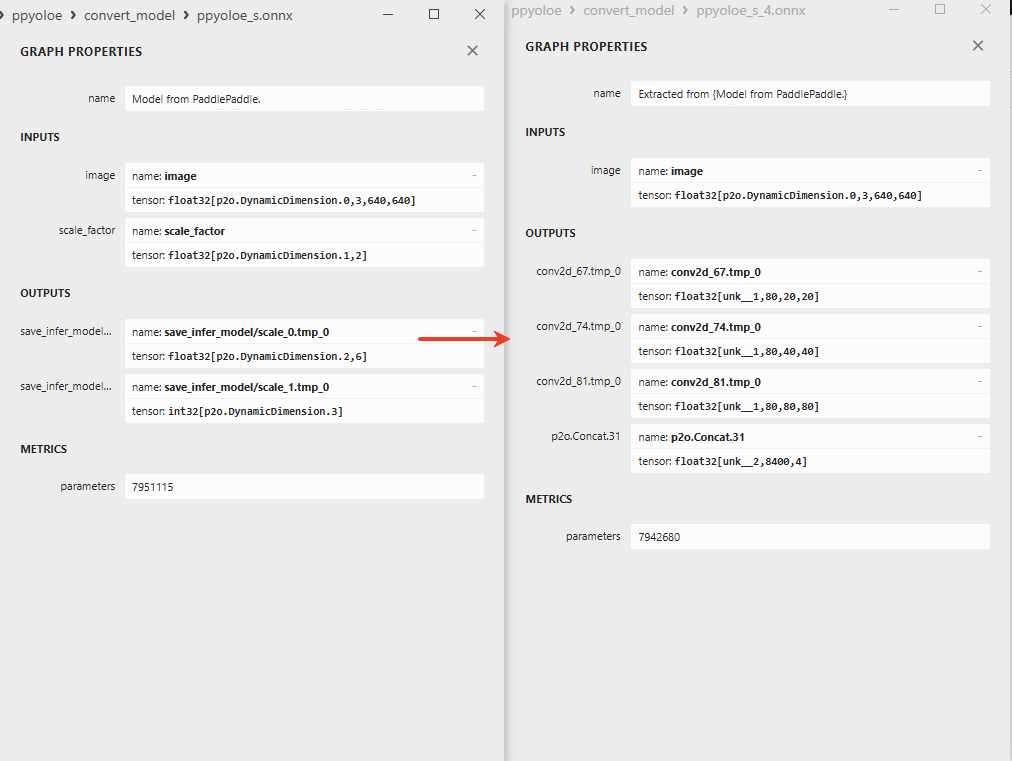

'p2o.Concat.31'])生成的ppyoloe_s_4.onnx模型在上级convert_model目录,模型输出差异如下,左边是官方导出模型,右边是修改后的模型(使用Netron查看):

代码中第一个参数是原始的模型,第二个参数是简化后模型,第三个参数是模型的输入名,第四个参数是模型的输出名,如果有多个输入输出,用逗号隔开。模型的输入输出名正好对应的是模型简化后的INPUTS和OUTPUTS中的name。

Model inference script ppyoloe_infer.py

# Copyright (c) 2022 PaddlePaddle Authors. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

import numpy as np

import cv2

from onnxruntime import InferenceSession

import argparse

coco_classes = [

'person', 'bicycle', 'car', 'motorcycle', 'airplane', 'bus', 'train', 'truck', 'boat', 'traffic light',

'fire hydrant', 'stop sign', 'parking meter', 'bench', 'bird', 'cat', 'dog', 'horse', 'sheep', 'cow',

'elephant', 'bear', 'zebra', 'giraffe', 'backpack', 'umbrella', 'handbag', 'tie', 'suitcase', 'frisbee',

'skis', 'snowboard', 'sports ball', 'kite', 'baseball bat', 'baseball glove', 'skateboard', 'surfboard',

'tennis racket', 'bottle', 'wine glass', 'cup', 'fork', 'knife', 'spoon', 'bowl', 'banana', 'apple',

'sandwich', 'orange', 'broccoli', 'carrot', 'hot dog', 'pizza', 'donut', 'cake', 'chair', 'couch',

'potted plant', 'bed', 'dining table', 'toilet', 'tv', 'laptop', 'mouse', 'remote', 'keyboard', 'cell phone',

'microwave', 'oven', 'toaster', 'sink', 'refrigerator', 'book', 'clock', 'vase', 'scissors', 'teddy bear',

'hair drier', 'toothbrush'

]

class PPYOLOEPreprocessor:
    def __init__(self, target_size=640, mean=(0.485, 0.456, 0.406), std=(0.229, 0.224, 0.225)):
        self.target_size = target_size
        self.mean = np.array(mean, dtype=np.float32)
        self.std = np.array(std, dtype=np.float32)

    def decode_image(self, img_path):
        # Read the file as bytes, decode it with OpenCV and convert BGR to RGB
        with open(img_path, 'rb') as f:
            im_read = f.read()
        img = cv2.imdecode(np.frombuffer(im_read, dtype='uint8'), 1)
        img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
        return img

    def resize(self, img):
        # Keep the aspect ratio so that the longer side fits target_size
        h, w = img.shape[:2]
        im_size_min = np.min([h, w])
        im_size_max = np.max([h, w])
        target_size_min = np.min([self.target_size, self.target_size])
        target_size_max = np.max([self.target_size, self.target_size])
        im_scale = float(target_size_min) / float(im_size_min)
        if np.round(im_scale * im_size_max) > target_size_max:
            im_scale = float(target_size_max) / float(im_size_max)
        new_h = int(np.round(h * im_scale))
        new_w = int(np.round(w * im_scale))
        resized_img = cv2.resize(
            img,
            (new_w, new_h),
            interpolation=cv2.INTER_LINEAR
        )
        return resized_img, im_scale

    def normalize(self, img):
        img = img.astype(np.float32) / 255.0
        img = (img - self.mean) / self.std
        return img

    def permute(self, img):
        # HWC -> CHW
        return img.transpose((2, 0, 1))

    def pad(self, img):
        # Centre the resized image on a target_size x target_size canvas
        c, h, w = img.shape
        pad_h = max(self.target_size - h, 0)
        pad_w = max(self.target_size - w, 0)
        pad_top = pad_h // 2
        pad_left = pad_w // 2
        padded_img = np.zeros((c, self.target_size, self.target_size), dtype=np.float32)
        padded_img[:, pad_top:pad_top+h, pad_left:pad_left+w] = img
        return padded_img, (pad_h, pad_w)

    def process(self, img_path):
        img = self.decode_image(img_path)
        original_shape = img.shape[:2]
        img, scale = self.resize(img)
        resized_shape = img.shape[:2]
        img = self.normalize(img)
        img = self.permute(img)
        img, pad_hw = self.pad(img)
        return img, scale, original_shape, resized_shape, pad_hw

class PPYOLOEPredictor:
    def __init__(self, onnx_file_path, conf_threshold=0.5):
        self.predictor = InferenceSession(onnx_file_path)
        self.preprocessor = PPYOLOEPreprocessor()
        self.draw_threshold = conf_threshold
        self.num_classes = 80
        # One entry per classification head: 20x20, 40x40 and 80x80 grids
        self.fpn_strides = [32, 16, 8]
        self.grid_sizes = [(20, 20), (40, 40), (80, 80)]

    def decode_bboxes(self, cls_scores, bbox_preds):
        # Flatten each head to (bs, h*w, num_classes) and apply sigmoid
        all_cls_scores = []
        for cls_score in cls_scores:
            bs, num_classes, h, w = cls_score.shape
            cls_score_flat = cls_score.reshape(bs, num_classes, -1).transpose(0, 2, 1)
            cls_score_sigmoid = 1 / (1 + np.exp(-cls_score_flat))
            all_cls_scores.append(cls_score_sigmoid)
        all_cls_scores = np.concatenate(all_cls_scores, axis=1)

        # Build the anchor points (cell centres) and the stride of each prediction
        all_anchors = []
        strides_per_pred = []
        for stride, grid_size in zip(self.fpn_strides, self.grid_sizes):
            grid_h, grid_w = grid_size
            grid_y, grid_x = np.mgrid[0:grid_h, 0:grid_w]
            grid_x = (grid_x + 0.5) * stride
            grid_y = (grid_y + 0.5) * stride
            anchors = np.stack([grid_x.reshape(-1), grid_y.reshape(-1)], axis=1)
            all_anchors.append(anchors)
            strides_per_pred.extend([stride] * (grid_h * grid_w))
        all_anchors = np.concatenate(all_anchors, axis=0)
        strides_arr = np.array(strides_per_pred).reshape(-1, 1)

        # Convert (left, top, right, bottom) distances to absolute x1, y1, x2, y2
        concat_output = bbox_preds[0].copy()
        bbox_preds_abs = np.zeros_like(concat_output)
        bbox_preds_abs[:, 0] = all_anchors[:, 0] - concat_output[:, 0] * strides_arr[:, 0]
        bbox_preds_abs[:, 1] = all_anchors[:, 1] - concat_output[:, 1] * strides_arr[:, 0]
        bbox_preds_abs[:, 2] = all_anchors[:, 0] + concat_output[:, 2] * strides_arr[:, 0]
        bbox_preds_abs[:, 3] = all_anchors[:, 1] + concat_output[:, 3] * strides_arr[:, 0]
        return all_cls_scores, bbox_preds_abs

    def postprocess(self, cls_scores, bbox_preds, original_shape, resized_shape, pad_hw):
        all_results = []
        if cls_scores.size == 0:
            return np.array(all_results)

        batch_cls_scores = cls_scores[0]
        batch_bbox_preds = bbox_preds
        pad_h, pad_w = pad_hw
        resized_h, resized_w = resized_shape
        original_h, original_w = original_shape
        pad_left = pad_w // 2
        pad_top = pad_h // 2

        # Keep only predictions whose best class score passes the threshold
        max_scores = np.max(batch_cls_scores, axis=1)
        max_classes = np.argmax(batch_cls_scores, axis=1)
        keep_mask = max_scores > self.draw_threshold
        if not np.any(keep_mask):
            return np.array(all_results)
        filtered_scores = max_scores[keep_mask]
        filtered_classes = max_classes[keep_mask]
        filtered_bboxes = batch_bbox_preds[keep_mask]

        # Drop boxes that extend into the padded border
        in_image_mask = (
            (filtered_bboxes[:, 0] >= pad_left) & (filtered_bboxes[:, 0] < pad_left + resized_w) &
            (filtered_bboxes[:, 1] >= pad_top) & (filtered_bboxes[:, 1] < pad_top + resized_h) &
            (filtered_bboxes[:, 2] >= pad_left) & (filtered_bboxes[:, 2] < pad_left + resized_w) &
            (filtered_bboxes[:, 3] >= pad_top) & (filtered_bboxes[:, 3] < pad_top + resized_h)
        )
        filtered_scores = filtered_scores[in_image_mask]
        filtered_classes = filtered_classes[in_image_mask]
        filtered_bboxes = filtered_bboxes[in_image_mask]
        if len(filtered_bboxes) == 0:
            return np.array(all_results)

        keep_indices = self.nms(filtered_bboxes, filtered_scores)

        # Map the boxes back to the original image and clip them to its bounds
        scale_x = original_w / resized_w
        scale_y = original_h / resized_h
        for idx in keep_indices:
            result = np.zeros(6, dtype=np.float32)
            result[0] = filtered_classes[idx]
            result[1] = filtered_scores[idx]
            x1, y1, x2, y2 = filtered_bboxes[idx]
            result[2] = (x1 - pad_left) * scale_x  # x1
            result[3] = (y1 - pad_top) * scale_y  # y1
            result[4] = (x2 - pad_left) * scale_x  # x2
            result[5] = (y2 - pad_top) * scale_y  # y2
            result[2] = max(0, min(result[2], original_w - 1))
            result[3] = max(0, min(result[3], original_h - 1))
            result[4] = max(0, min(result[4], original_w - 1))
            result[5] = max(0, min(result[5], original_h - 1))
            all_results.append(result)
        return np.array(all_results)

    def nms(self, boxes, scores, iou_threshold=0.5):
        # Class-agnostic greedy NMS
        if len(boxes) == 0:
            return []
        x1 = boxes[:, 0]
        y1 = boxes[:, 1]
        x2 = boxes[:, 2]
        y2 = boxes[:, 3]
        areas = (x2 - x1 + 1) * (y2 - y1 + 1)
        order = scores.argsort()[::-1]
        keep = []
        while order.size > 0:
            i = order[0]
            keep.append(i)
            xx1 = np.maximum(x1[i], x1[order[1:]])
            yy1 = np.maximum(y1[i], y1[order[1:]])
            xx2 = np.minimum(x2[i], x2[order[1:]])
            yy2 = np.minimum(y2[i], y2[order[1:]])
            w = np.maximum(0.0, xx2 - xx1 + 1)
            h = np.maximum(0.0, yy2 - yy1 + 1)
            inter = w * h
            iou = inter / (areas[i] + areas[order[1:]] - inter)
            inds = np.where(iou <= iou_threshold)[0]
            order = order[inds + 1]
        return keep

    def predict(self, img_path):
        img, scale, original_shape, resized_shape, pad_hw = self.preprocessor.process(img_path)
        input_data = img[None, ]
        outputs = self.predictor.run(output_names=None, input_feed={'image': input_data})
        # The first three outputs are class scores, the fourth is the box regression
        cls_scores = outputs[:3]
        bbox_preds = outputs[3]
        decoded_cls_scores, decoded_bboxes = self.decode_bboxes(cls_scores, bbox_preds)
        bboxes = self.postprocess(decoded_cls_scores, decoded_bboxes, original_shape, resized_shape, pad_hw)
        return bboxes, original_shape

    def draw_bboxes(self, img_path, bboxes):
        """Draw the bounding boxes."""
        img = cv2.imread(img_path)
        if img is None:
            return None
        for bbox in bboxes:
            label_id = int(bbox[0])
            score = bbox[1]
            left = int(bbox[2])
            top = int(bbox[3])
            right = int(bbox[4])
            bottom = int(bbox[5])
            cv2.rectangle(img, (left, top), (right, bottom), (0, 255, 0), 2)
            class_name = coco_classes[label_id] if label_id < len(coco_classes) else f'class_{label_id}'
            label = f"{class_name}: {score*100:.0f}%"
            cv2.putText(img, label, (left, top - 5), cv2.FONT_HERSHEY_SIMPLEX, 0.5, (0, 255, 0), 2)
        return img

def main():
    parser = argparse.ArgumentParser(description="PPYOLOE ONNX Inference")
    parser.add_argument(
        "-m", "--onnx_file", type=str, required=True, help="ONNX model file path"
    )
    parser.add_argument(
        "-i", "--image_file", type=str, required=True, help="Input image file path"
    )
    parser.add_argument(
        "-s", "--save_image",
        # type=bool would treat any non-empty string (even "False") as True
        type=lambda s: s.lower() in ("1", "true", "yes"),
        default=True, help="Whether to save output image"
    )
    parser.add_argument(
        "-o", "--output_file", type=str, default="result.jpg", help="Output image name"
    )
    parser.add_argument(
        "-c", "--conf_threshold", type=float, default=0.5, help="Confidence threshold for detection"
    )
    args = parser.parse_args()

    predictor = PPYOLOEPredictor(args.onnx_file, args.conf_threshold)
    bboxes, original_shape = predictor.predict(args.image_file)

    print(f"detection num: {len(bboxes)}")
    for bbox in bboxes:
        label_id = int(bbox[0])
        score = bbox[1]
        left, top, right, bottom = map(int, bbox[2:6])
        class_name = coco_classes[label_id] if label_id < len(coco_classes) else f'class_{label_id}'
        print(f" {label_id}: {score*100:.0f}%, [ {left:3d}, {top:3d}, {right:3d}, {bottom:3d}], {class_name}")

    if args.save_image:
        result_img = predictor.draw_bboxes(args.image_file, bboxes)
        if result_img is not None:
            cv2.imwrite(args.output_file, result_img)
            print(f"Result image saved to: {args.output_file}")


if __name__ == '__main__':
    main()

Fixing the input size

Newer versions of paddlepaddle export models with dynamic shapes, so the input has to be changed to a static size for NPU deployment.
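With the input fixed at 1x3x640x640, the size of every detection head is fixed as well, which is why the post-processing code can hard-code the 20, 40 and 80 grids and the 8400 anchors. A minimal sketch of that arithmetic, assuming the strides 32, 16 and 8 used in ppyoloe_infer.py (head_layout is a hypothetical helper, not part of any toolchain):

```python
def head_layout(input_size=640, strides=(32, 16, 8)):
    """Return the grid size of each head and the total number of anchor points."""
    grids = [(input_size // s, input_size // s) for s in strides]
    total_anchors = sum(h * w for h, w in grids)
    return grids, total_anchors


grids, total_anchors = head_layout()
print(grids, total_anchors)  # [(20, 20), (40, 40), (80, 80)] 8400
```

If a different fixed input size is chosen, the grid sizes and the anchor count in the pre- and post-processing code would need to change to match.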

# 回到convert_model

cd ..

# 方法一:直接使用onnxsim (推荐)

python3 -m onnxsim ppyoloe_s_4.onnx ppyoloe_s_4sim.onnx --overwrite-input-shape 1,3,640,640

# 方法二:使用paddle2onnx (需要使用paddle环境)

python -m paddle2onnx.optimize \

--input_model output_inference/ppyoloe_crn_s_300e_coco/ppyoloe_s_4.onnx \

--output_model output_inference/ppyoloe_crn_s_300e_coco/ppyoloe_s_4sim.onnx \

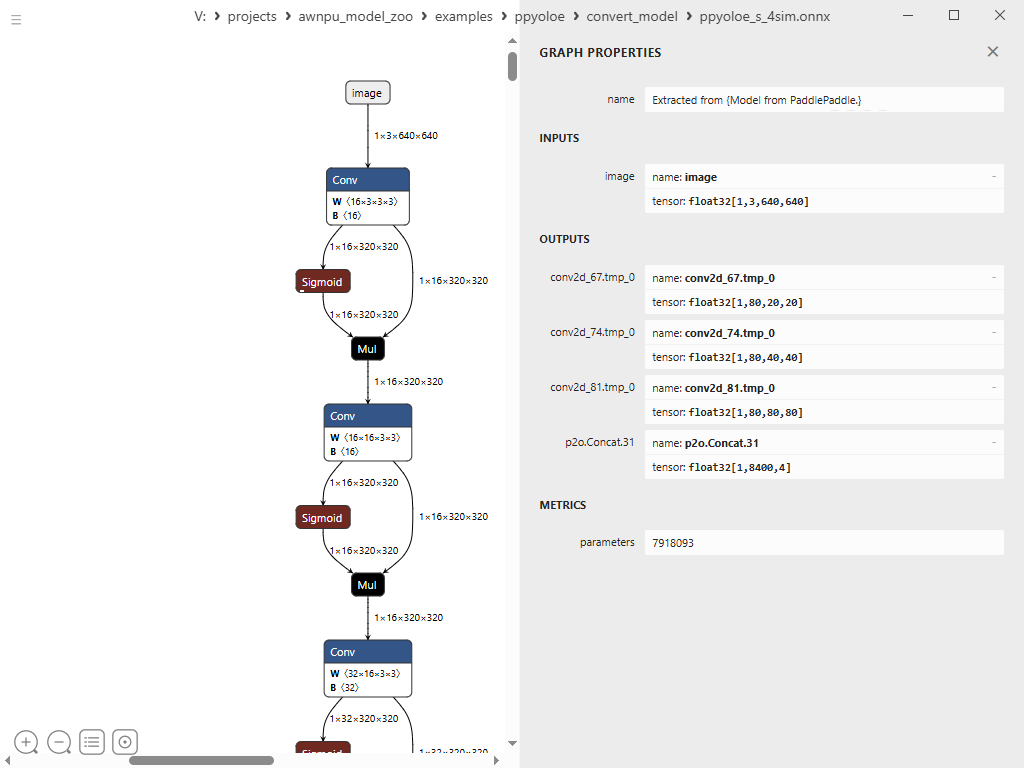

--input_shape_dict '{"image":[1,3,640,640]}'查看固定完成之后的ppyoloe_s_4sim.onnx模型如图所示

Model pre- and post-processing

Configuration file model_config.h

#ifndef _MODEL_CONFIG_H_

#define _MODEL_CONFIG_H_

#include <iostream>

#include <string>

#include <vector>

#define COCO 1

//#define COCO 0

#if COCO

// coco, 80 class

#define CLASS_NUM 80

/* 640 * 640 */

#define LETTERBOX_ROWS 640

#define LETTERBOX_COLS 640

#define SCORE_THRESHOLD 0.45f

#define NMS_THRESHOLD 0.45f

const std::vector<std::string> g_classes_name{

"person", "bicycle", "car", "motorcycle", "airplane", "bus", "train", "truck", "boat", "traffic_light",

"fire_hydrant", "stop_sign", "parking_meter", "bench", "bird", "cat", "dog", "horse", "sheep", "cow",

"elephant", "bear", "zebra", "giraffe", "backpack", "umbrella", "handbag", "tie", "suitcase", "frisbee",

"skis", "snowboard", "sports_ball", "kite", "baseball_bat", "baseball_glove", "skateboard", "surfboard",

"tennis_racket", "bottle", "wine_glass", "cup", "fork", "knife", "spoon", "bowl", "banana", "apple",

"sandwich", "orange", "broccoli", "carrot", "hot_dog", "pizza", "donut", "cake", "chair", "couch",

"potted_plant", "bed", "dining_table", "toilet", "tv", "laptop", "mouse", "remote", "keyboard", "cell_phone",

"microwave", "oven", "toaster", "sink", "refrigerator", "book", "clock", "vase", "scissors", "teddy_bear",

"hair_drier", "toothbrush"

};

#else

// eg: plant, 1 class

#define CLASS_NUM 1

#define LETTERBOX_ROWS 640

#define LETTERBOX_COLS 640

#define SCORE_THRESHOLD 0.4f

#define NMS_THRESHOLD 0.45f

const std::vector<std::string> g_classes_name{

"plant"

};

#endif

#endif

Pre-processing ppyoloe_preprocess

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#include <iostream>

#include <stdio.h>

#include <stdint.h>

#include <string.h>

#include <math.h>

#include <chrono>

#include "model_config.h"

/* model_inputmeta.yml file param modify, eg:

preproc_node_params:

add_preproc_node: True

preproc_type: IMAGE_RGB

demo model: model_rgb_xxx.nb.

*/

void get_input_data(const char* image_file, unsigned char* input_data, int letterbox_rows, int letterbox_cols)

{

cv::Mat sample = cv::imread(image_file, 1);

if (sample.empty()) {

fprintf(stderr, "cv::imread %s failed\n", image_file);

return;

}

cv::Mat img;

cv::cvtColor(sample, img, cv::COLOR_BGR2RGB);

/* letterbox process to support different letterbox size */

float scale_letterbox = 1.f;

if ((letterbox_rows * 1.0 / img.rows) < (letterbox_cols * 1.0 / img.cols))

{

scale_letterbox = letterbox_rows * 1.0 / img.rows;

}

else

{

scale_letterbox = letterbox_cols * 1.0 / img.cols;

}

int resize_cols = int(round(scale_letterbox * img.cols));

int resize_rows = int(round(scale_letterbox * img.rows));

float dh = (float)(letterbox_rows - resize_rows);

float dw = (float)(letterbox_cols - resize_cols);

dh /= 2.0f;

dw /= 2.0f;

cv::resize(img, img, cv::Size(resize_cols, resize_rows));

// create a mat with input_data ptr

cv::Mat img_new(letterbox_rows, letterbox_cols, CV_8UC3, input_data);

int top = (int)(round(dh - 0.1));

int bot = (int)(round(dh + 0.1));

int left = (int)(round(dw - 0.1));

int right = (int)(round(dw + 0.1));

// Letterbox filling

cv::copyMakeBorder(img, img_new, top, bot, left, right, cv::BORDER_CONSTANT, cv::Scalar(114, 114, 114));

}

int ppyoloe_preprocess(const char* imagepath, void* buff_ptr, unsigned int buff_size)

{

int img_c = 3;

// set default letterbox size

int letterbox_rows = LETTERBOX_ROWS;

int letterbox_cols = LETTERBOX_COLS;

int img_size = letterbox_rows * letterbox_cols * img_c;

unsigned int data_size = img_size * sizeof(uint8_t);

if (data_size > buff_size) {

printf("data size > buff size, please check code. data_size=%u, buff_size=%u\n", data_size, buff_size);

return -1;

}

get_input_data(imagepath, (unsigned char*)buff_ptr, letterbox_rows, letterbox_cols);

printf("ppyoloe preprocess completed: %s -> %dx%d, buffer size: %u\n",

imagepath, letterbox_cols, letterbox_rows, data_size);

return 0;

}

Post-processing ppyoloe_postprocess

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#include <opencv2/dnn.hpp>

#include <iostream>

#include <stdio.h>

#include <vector>

#include <cfloat>

#include <chrono>

#include <cmath>

#include <algorithm>

#include "model_config.h"

using namespace std;

struct Object

{

cv::Rect_<float> rect;

int label;

float prob;

};

static inline float intersection_area(const Object& a, const Object& b)

{

cv::Rect_<float> inter = a.rect & b.rect;

return inter.area();

}

static void qsort_descent_inplace(std::vector<Object>& objects, int left, int right)

{

int i = left;

int j = right;

float p = objects[(left + right) / 2].prob;

while (i <= j)

{

while (objects[i].prob > p)

i++;

while (objects[j].prob < p)

j--;

if (i <= j)

{

std::swap(objects[i], objects[j]);

i++;

j--;

}

}

#pragma omp parallel sections

{

#pragma omp section

{

if (left < j) qsort_descent_inplace(objects, left, j);

}

#pragma omp section

{

if (i < right) qsort_descent_inplace(objects, i, right);

}

}

}

static void qsort_descent_inplace(std::vector<Object>& objects)

{

if (objects.empty())

return;

qsort_descent_inplace(objects, 0, objects.size() - 1);

}

static void nms_sorted_bboxes(const std::vector<Object>& objects, std::vector<int>& picked, float nms_threshold, bool agnostic = true)

{

picked.clear();

const int n = objects.size();

std::vector<float> areas(n);

for (int i = 0; i < n; i++)

{

areas[i] = objects[i].rect.area();

}

for (int i = 0; i < n; i++)

{

const Object& a = objects[i];

int keep = 1;

for (int j = 0; j < (int)picked.size(); j++)

{

const Object& b = objects[picked[j]];

// Different categories do not undergo NMS

if (!agnostic && a.label != b.label)

continue;

float inter_area = intersection_area(a, b);

float union_area = areas[i] + areas[picked[j]] - inter_area;

if (inter_area / union_area > nms_threshold)

keep = 0;

}

if (keep)

picked.push_back(i);

}

}

static inline float sigmoid(float x)

{

return 1.0f / (1.0f + expf(-x));

}

std::vector<std::vector<float>> decode_bboxes_ppyoloe(const std::vector<std::vector<float>>& cls_outputs, const float* bbox_output, const int num_classes)

{

std::vector<std::vector<float>> results;

std::vector<float> all_cls_scores;

std::vector<int> grid_heights = {20, 40, 80};

std::vector<int> grid_widths = {20, 40, 80};

std::vector<int> fpn_strides = {32, 16, 8};

for (int scale_idx = 0; scale_idx < 3; ++scale_idx) {

int grid_h = grid_heights[scale_idx];

int grid_w = grid_widths[scale_idx];

int num_anchors = grid_h * grid_w;

const float* cls_data = cls_outputs[scale_idx].data();

for (int a = 0; a < num_anchors; ++a) {

for (int c = 0; c < num_classes; ++c) {

int cls_index = c * num_anchors + a;

float score = sigmoid(cls_data[cls_index]);

all_cls_scores.push_back(score);

}

}

}

std::vector<float> all_anchors_x;

std::vector<float> all_anchors_y;

std::vector<float> strides_per_pred;

for (int scale_idx = 0; scale_idx < 3; ++scale_idx) {

int grid_h = grid_heights[scale_idx];

int grid_w = grid_widths[scale_idx];

int stride = fpn_strides[scale_idx];

for (int i = 0; i < grid_h; ++i) {

for (int j = 0; j < grid_w; ++j) {

float anchor_x = (j + 0.5f) * stride;

float anchor_y = (i + 0.5f) * stride;

all_anchors_x.push_back(anchor_x);

all_anchors_y.push_back(anchor_y);

strides_per_pred.push_back(stride);

}

}

}

int total_anchors = all_anchors_x.size();

std::vector<float> bbox_preds_abs(total_anchors * 4, 0.0f);

for (int i = 0; i < total_anchors; ++i) {

int bbox_idx = i * 4;

float anchor_x = all_anchors_x[i];

float anchor_y = all_anchors_y[i];

float stride = strides_per_pred[i];

float l_offset = bbox_output[bbox_idx + 0];

float t_offset = bbox_output[bbox_idx + 1];

float r_offset = bbox_output[bbox_idx + 2];

float b_offset = bbox_output[bbox_idx + 3];

bbox_preds_abs[bbox_idx + 0] = anchor_x - l_offset * stride; // x1

bbox_preds_abs[bbox_idx + 1] = anchor_y - t_offset * stride; // y1

bbox_preds_abs[bbox_idx + 2] = anchor_x + r_offset * stride; // x2

bbox_preds_abs[bbox_idx + 3] = anchor_y + b_offset * stride; // y2

}

return {all_cls_scores, bbox_preds_abs};

}

int detect_ppyoloe_post(const cv::Mat& bgr, std::vector<Object>& objects, float **output)

{

std::chrono::steady_clock::time_point Tbegin, Tend;

Tbegin = std::chrono::steady_clock::now();

std::vector<std::vector<float>> cls_outputs(3);

const float prob_threshold = SCORE_THRESHOLD;

const float nms_threshold = NMS_THRESHOLD;

const int num_classes = CLASS_NUM;

const int letterbox_cols = LETTERBOX_COLS;

const int letterbox_rows = LETTERBOX_ROWS;

const float *cls_output0 = output[0]; // (num_classes x 20 x 20)

const float *cls_output1 = output[1]; // (num_classes x 40 x 40)

const float *cls_output2 = output[2]; // (num_classes x 80 x 80)

const float *reg_output = output[3]; // 8400x4 (total_anchors x 4)

int grid_h0 = 20;

int grid_w0 = 20;

int size0 = num_classes * grid_h0 * grid_w0; // num_classes x 20 x 20

int grid_h1 = 40;

int grid_w1 = 40;

int size1 = num_classes * grid_h1 * grid_w1; // num_classes x 40 x 40

int grid_h2 = 80;

int grid_w2 = 80;

int size2 = num_classes * grid_h2 * grid_w2; // num_classes x 80 x 80

cls_outputs[0].resize(size0);

std::copy(cls_output0, cls_output0 + size0, cls_outputs[0].begin());

cls_outputs[1].resize(size1);

std::copy(cls_output1, cls_output1 + size1, cls_outputs[1].begin());

cls_outputs[2].resize(size2);

std::copy(cls_output2, cls_output2 + size2, cls_outputs[2].begin());

// decode

auto decoded_results = decode_bboxes_ppyoloe(cls_outputs, reg_output, num_classes);

const auto& all_cls_scores = decoded_results[0];

const auto& bbox_preds_abs = decoded_results[1];

std::vector<Object> proposals;

int total_anchors = 8400;

for (int i = 0; i < total_anchors; ++i) {

int max_class_idx = -1;

float max_score = -FLT_MAX;

for (int c = 0; c < num_classes; ++c) {

int score_idx = i * num_classes + c;

float score = all_cls_scores[score_idx];

if (score > max_score) {

max_score = score;

max_class_idx = c;

}

}

if (max_score >= prob_threshold) {

Object obj;

int bbox_idx = i * 4;

float x1 = bbox_preds_abs[bbox_idx + 0];

float y1 = bbox_preds_abs[bbox_idx + 1];

float x2 = bbox_preds_abs[bbox_idx + 2];

float y2 = bbox_preds_abs[bbox_idx + 3];

if (x1 < x2 && y1 < y2 && x1 >= 0 && y1 >= 0 && x2 <= letterbox_cols && y2 <= letterbox_rows) {

obj.rect.x = x1;

obj.rect.y = y1;

obj.rect.width = x2 - x1;

obj.rect.height = y2 - y1;

obj.label = max_class_idx;

obj.prob = max_score;

proposals.push_back(obj);

}

}

}

// Sort all proposals by score from highest to lowest.

qsort_descent_inplace(proposals);

// apply NMS

std::vector<int> picked;

nms_sorted_bboxes(proposals, picked, nms_threshold);

// Inverse coordinate transformation

float scale_letterbox = 1.0f;

if ((letterbox_rows * 1.0 / bgr.rows) < (letterbox_cols * 1.0 / bgr.cols))

{

scale_letterbox = letterbox_rows * 1.0 / bgr.rows;

}

else

{

scale_letterbox = letterbox_cols * 1.0 / bgr.cols;

}

float ratio = 1.0f / scale_letterbox;

int resize_cols = int(round(scale_letterbox * bgr.cols));

int resize_rows = int(round(scale_letterbox * bgr.rows));

int hpad = (letterbox_rows - resize_rows)/ 2;

int wpad = (letterbox_cols - resize_cols)/ 2;

int count = picked.size();

objects.resize(count);

for (int i = 0; i < count; i++)

{

objects[i] = proposals[picked[i]];

float x0 = (objects[i].rect.x - wpad) * ratio;

float y0 = (objects[i].rect.y - hpad) * ratio;

float x1 = (objects[i].rect.x + objects[i].rect.width - wpad) * ratio;

float y1 = (objects[i].rect.y + objects[i].rect.height - hpad) * ratio;

x0 = std::max(std::min(x0, (float)(bgr.cols - 1)), 0.f);

y0 = std::max(std::min(y0, (float)(bgr.rows - 1)), 0.f);

x1 = std::max(std::min(x1, (float)(bgr.cols - 1)), 0.f);

y1 = std::max(std::min(y1, (float)(bgr.rows - 1)), 0.f);

objects[i].rect.x = x0;

objects[i].rect.y = y0;

objects[i].rect.width = x1 - x0;

objects[i].rect.height = y1 - y0;

}

// Sort objects by area

struct

{

bool operator()(const Object& a, const Object& b) const

{

return a.rect.area() > b.rect.area();

}

} objects_area_greater;

std::sort(objects.begin(), objects.end(), objects_area_greater);

Tend = std::chrono::steady_clock::now();

float f = std::chrono::duration_cast<std::chrono::milliseconds>(Tend - Tbegin).count();

fprintf(stderr, "detection num: %d\n", count);

return 0;

}

static void draw_objects(const cv::Mat& bgr, const std::vector<Object>& objects, const char *imagepath)

{

cv::Mat image = bgr.clone();

for (size_t i = 0; i < objects.size(); i++)

{

const Object& obj = objects[i];

if (obj.prob > 1.0) {

fprintf(stderr, "%2d: %3.0f%%, [%4.0f, %4.0f, %4.0f, %4.0f], score is illegal ........ \n", obj.label, obj.prob * 100, obj.rect.x,

obj.rect.y, obj.rect.x + obj.rect.width, obj.rect.y + obj.rect.height);

continue;

}

fprintf(stderr, "%2d: %3.0f%%, [%4.0f, %4.0f, %4.0f, %4.0f], %s\n", obj.label, obj.prob * 100, obj.rect.x,

obj.rect.y, obj.rect.x + obj.rect.width, obj.rect.y + obj.rect.height, g_classes_name[obj.label].c_str());

cv::rectangle(image, obj.rect, cv::Scalar(255, 0, 0));

char text[256];

sprintf(text, "%s %.1f%%", g_classes_name[obj.label].c_str(), obj.prob * 100);

int baseLine = 0;

cv::Size label_size = cv::getTextSize(text, cv::FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);

int x = obj.rect.x;

int y = obj.rect.y - label_size.height - baseLine;

if (y < 0)

y = 0;

if (x + label_size.width > image.cols)

x = image.cols - label_size.width;

cv::rectangle(image, cv::Rect(cv::Point(x, y), cv::Size(label_size.width, label_size.height + baseLine)),

cv::Scalar(255, 255, 255), -1);

cv::putText(image, text, cv::Point(x, y + label_size.height),

cv::FONT_HERSHEY_SIMPLEX, 0.5, cv::Scalar(0, 0, 0));

}

cv::imwrite("output_ppyoloe.png", image);

}

int ppyoloe_postprocess(const char *imagepath, float **output)

{

cv::Mat m = cv::imread(imagepath, 1);

if (m.empty()) {

fprintf(stderr, "cv::imread %s failed\n", imagepath);

return -1;

}

std::vector<Object> objects;

detect_ppyoloe_post(m, objects, output);

draw_objects(m, objects, imagepath);

return 0;

}

Main function main

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <stdint.h>

#include <sys/time.h>

#include "npulib.h"

/*-------------------------------------------

Macros and Variables

-------------------------------------------*/

extern int ppyoloe_preprocess(const char* imagepath, void* buff_ptr, unsigned int buff_size);

extern int ppyoloe_postprocess(const char *imagepath, float **output);

const char *usage =

"ppyoloe_demo -nb model_path -i input_path -l loop_run_count -m malloc_mbyte \n"

"-nb model_path: the NBG file path.\n"

"-i input_path: the input file path.\n"

"-l loop_run_count: the number of loop run network.\n"

"-m malloc_mbyte: npu_unit init memory Mbytes.\n"

"-h : help\n"

"example: ppyoloe_demo -nb model.nb -i input.jpg -l 10 -m 20 \n";

enum time_idx_e {

NPU_INIT = 0,

NETWORK_CREATE,

NETWORK_PREPARE,

NETWORK_PREPROCESS,

NETWORK_RUN,

NETWORK_LOOP,

TIME_IDX_MAX = 9

};

#if defined(__linux__)

#define TIME_SLOTS 10

static uint64_t time_begin[TIME_SLOTS];

static uint64_t time_end[TIME_SLOTS];

static uint64_t GetTime(void)

{

struct timeval time;

gettimeofday(&time, NULL);

return (uint64_t)(time.tv_usec + time.tv_sec * 1000000);

}

static void TimeBegin(int id)

{

time_begin[id] = GetTime();

}

static void TimeEnd(int id)

{

time_end[id] = GetTime();

}

static uint64_t TimeGet(int id)

{

return time_end[id] - time_begin[id];

}

#endif

int main(int argc, char** argv)

{

int status = 0;

int i = 0;

unsigned int count = 0;

long long total_infer_time = 0;

char *model_file = NULL;

char *input_file = NULL;

unsigned int loop_count = 1;

unsigned int malloc_mbyte = 10;

if (argc < 2) {

printf("%s\n", usage);

return -1;

}

for (i = 1; i < argc; i++) {

// an option only consumes the next argument when one is actually present

if (!strcmp(argv[i], "-nb") && i + 1 < argc) {

model_file = argv[++i];

}

else if (!strcmp(argv[i], "-i") && i + 1 < argc) {

input_file = argv[++i];

}

else if (!strcmp(argv[i], "-l") && i + 1 < argc) {

loop_count = atoi(argv[++i]);

}

else if (!strcmp(argv[i], "-m") && i + 1 < argc) {

malloc_mbyte = atoi(argv[++i]);

}

else if (!strcmp(argv[i], "-h")) {

printf("%s\n", usage);

return 0;

}

}

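// both paths are required; check them before they are printed or used

if (model_file == nullptr || input_file == nullptr) {

printf("%s\n", usage);

return -1;

}

printf("model_file=%s, input=%s, loop_count=%u, malloc_mbyte=%u \n", model_file, input_file, loop_count, malloc_mbyte);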

/* NPU init*/

NpuUint npu_uint;

// int ret = npu_uint.npu_init(malloc_mbyte*1024*1024); // 85x

int ret = npu_uint.npu_init();

if (ret != 0) {

return -1;

}

NetworkItem ppyoloe_net;

unsigned int network_id = 0;

status = ppyoloe_net.network_create(model_file, network_id);

if (status != 0) {

printf("network %d create failed.\n", network_id);

return -1;

}

status = ppyoloe_net.network_prepare();

if (status != 0) {

printf("network prepare fail, status=%d\n", status);

return -1;

}

TimeBegin(NETWORK_PREPROCESS);

// preprocess the input JPEG directly into the network's input buffer (no extra copy)

void *input_buffer_ptr = nullptr;

unsigned int input_buffer_size = 0;

ppyoloe_net.get_network_input_buff_info(0, &input_buffer_ptr, &input_buffer_size);

printf("buffer ptr: %p, buffer size: %u \n", input_buffer_ptr, input_buffer_size);

ppyoloe_preprocess(input_file, input_buffer_ptr, input_buffer_size);

TimeEnd(NETWORK_PREPROCESS);

printf("feed input cost: %lu us.\n", (unsigned long)TimeGet(NETWORK_PREPROCESS));

// create ppyoloe output buffer

int output_cnt = ppyoloe_net.get_output_cnt(); // network output count

float **output_data = new float*[output_cnt]();

for (int i = 0; i < output_cnt; i++)

output_data[i] = new float[ppyoloe_net.m_output_data_len[i]];

i = network_id;

/* run network */

TimeBegin(NETWORK_LOOP);

while (count < loop_count) {

count++;

printf("network: %d, loop count: %d\n", i, count);

status = ppyoloe_net.network_input_output_set();

if (status != 0) {

printf("set network input/output %d failed.\n", i);

return -1;

}

#if defined (__linux__)

TimeBegin(NETWORK_RUN);

#endif

status = ppyoloe_net.network_run();

if (status != 0) {

printf("fail to run network, status=%d, batchCount=%d\n", status, i);

return -2;

}

#if defined (__linux__)

TimeEnd(NETWORK_RUN);

printf("run time for this network %d: %lu us.\n", i, (unsigned long)TimeGet(NETWORK_RUN));

#endif

total_infer_time += (unsigned long)TimeGet(NETWORK_RUN);

ppyoloe_net.get_output(output_data);

ppyoloe_postprocess(input_file, output_data);

}

TimeEnd(NETWORK_LOOP);

if (loop_count > 1) {

printf("network: %d, this network run avg inference time=%u us, total avg cost: %u us\n", i,

(uint32_t)(total_infer_time / loop_count), (unsigned int)(TimeGet(NETWORK_LOOP) / loop_count));

}

// free output buffer

for (int i = 0; i < output_cnt; i++) {

delete[] output_data[i];

output_data[i] = nullptr;

}

if (output_data != nullptr)

delete[] output_data;

return ret;

}

Model conversion (ONNX to board-side model)

# Run xxx_env.sh to create the symlinks

./convert_model_env.sh

export VSI_USE_IMAGE_PROCESS=1

The directory layout is as follows:

.

|-- config_yml.py

|-- convert_model_env.sh

|-- pegasus_export_ovx_nbg.sh -> ../../../scripts_model_convert/pegasus_export_ovx_nbg.sh

|-- pegasus_import.sh -> ../../../scripts_model_convert/pegasus_import.sh

|-- pegasus_inference.sh -> ../../../scripts_model_convert/pegasus_inference.sh

|-- pegasus_quantize.sh -> ../../../scripts_model_convert/pegasus_quantize.sh

|-- ppyoloe_s.onnx

|-- ppyoloe_s_4.onnx

|-- ppyoloe_s_4sim.onnx

`-- python

    |-- ppyoloe_infer.py

    |-- result.jpg

    `-- sub_model.py
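Step 2 below quantizes the imported model to uint8 using a small calibration set. As background, here is a minimal sketch of asymmetric affine uint8 quantization, which is assumed here to be roughly what the uint8 option does. The actual algorithm inside the Pegasus scripts is not shown in this article, and QuantParams, calibrate, quantize and dequantize are illustrative names only.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>
#include <vector>

// Illustrative names only; this is not the Pegasus implementation.
struct QuantParams {
    float scale;     // real-value step represented by one integer step
    int zero_point;  // integer code that represents real 0.0
};

// Derive scale and zero point from calibration samples.
// The range is widened to include 0 so that 0.0 maps to an exact code.
QuantParams calibrate(const std::vector<float>& samples) {
    float lo = 0.0f, hi = 0.0f;
    for (float v : samples) {
        lo = std::min(lo, v);
        hi = std::max(hi, v);
    }
    float scale = (hi - lo) / 255.0f;
    if (scale == 0.0f) scale = 1.0f;  // all-zero input: any scale works
    int zero_point = static_cast<int>(std::lround(-lo / scale));
    zero_point = std::min(255, std::max(0, zero_point));
    return {scale, zero_point};
}

// Map a real value to its uint8 code, saturating at the ends of the range.
std::uint8_t quantize(float x, const QuantParams& q) {
    int code = static_cast<int>(std::lround(x / q.scale)) + q.zero_point;
    return static_cast<std::uint8_t>(std::min(255, std::max(0, code)));
}

// Map a uint8 code back to the real value it stands for.
float dequantize(std::uint8_t code, const QuantParams& q) {
    return q.scale * static_cast<float>(static_cast<int>(code) - q.zero_point);
}
```

The round trip loses at most about one scale step per value inside the calibrated range, and values outside it saturate. This is why the calibration images should resemble the images the model will see on the board.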

1. Model import

# Import

# pegasus_import.sh <model_name>

./pegasus_import.sh ppyoloe_s_4sim

2. Model quantization

# Quantize

# pegasus_quantize.sh <model_name> <quantize_type> <calibration_set_size>

./pegasus_quantize.sh ppyoloe_s_4sim uint8 12

3. Simulation (optional)

# Simulate (optional)

# pegasus_inference.sh <model_name> <quantize_type>

./pegasus_inference.sh ppyoloe_s_4sim uint8

4. Model export

# pegasus_export_ovx_nbg.sh <model_name> <quantize_type> <platform>

./pegasus_export_ovx_nbg.sh ppyoloe_s_4sim uint8 t736

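# The exported model file is stored in the ../model directory,

# e.g. ../model/ppyoloe_s_4sim_uint8_t736.nb

Cross-compilation

The demo is usually built on a server and then pushed to the board together with the model for inference, so cross-compilation is required. If you build directly on the board, you can run inference there straight away. The archives below must be extracted outside the Docker container, so exit it first (Ctrl + D).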

1. Extract the OpenCV archive

# Enter the directory

cd ../../../3rdparty/opencv/

# Extract only the archive that matches your platform; linux aarch64 is used here

# armhf, e.g. V85x, R853

unzip opencv-3.4.16-gnueabihf-linux.zip

# linux aarch64, e.g. T527/MR527/MR536/T536/A733/T736

unzip opencv-4.9.0-aarch64-linux-sunxi-glibc.zip

# android aarch64, e.g. T527/A733/T736

unzip opencv-4.9.0-android.zip

2. Prepare the cross-compilation toolchain

# Enter the directory

cd ../../0-toolchains/

# Extract

# aarch64, MR527, T527, MR536

tar xvf gcc-arm-10.3-2021.07-x86_64-aarch64-none-linux-gnu.tar.xz

3. Build

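# Enter the examples directory

cd ../examples

chmod +x build_linux.sh

# ./build_linux.sh -t <platform> -p <model>

./build_linux.sh -t t736 -p ppyoloe

# When the build finishes, an install directory is generated inside the ppyoloe folder

tree ppyoloe/install/

The directory structure is as follows: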

install/

`-- ppyoloe_demo_linux_t736

    |-- model

    |   |-- dog.jpg

    |   `-- ppyoloe_s_4sim_uint8_t736.nb

    `-- ppyoloe_demo_t736
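If the demo later refuses to start on the board, one thing worth ruling out is that the wrong compiler was picked up during the build. The sketch below is a hypothetical standalone check, not part of awnpu_model_zoo: compiled with the same toolchain, it reports which architecture the compiler targets, using predefined macros that GCC and Clang set.

```cpp
#include <string>

// Returns a label for the architecture this file was compiled for,
// based on compiler-predefined macros (GCC/Clang).
std::string target_arch() {
#if defined(__aarch64__)
    return "aarch64";   // 64-bit Arm, e.g. the aarch64 Linux toolchain
#elif defined(__arm__)
    return "arm";       // 32-bit Arm, e.g. an armhf toolchain
#elif defined(__x86_64__)
    return "x86_64";    // host compiler on a typical build server
#else
    return "unknown";
#endif
}
```

Built with the aarch64 toolchain prepared in step 2, target_arch() should return "aarch64". A result of "x86_64" would suggest the host compiler was used instead of the cross compiler.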

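Model inference

Push the generated files to the development board. adb is only one of several ways to do this.

# Push the files to the board with ADB.

adb push Z:\projects\docker_data\awnpu_model_zoo\examples\ppyoloe\install /mnt/UDISK/

# On the board, enter the ppyoloe_demo_linux_t736 directory

cd /mnt/UDISK/install/ppyoloe_demo_linux_t736/

# Run inference

./ppyoloe_demo_t736 -nb model/ppyoloe_s_4sim_uint8_t736.nb -i model/dog.jpg

# The run prints a log with the detection results and saves the image with the boxes drawn as output_ppyoloe.png, which can be fetched with adb pull and viewed on the server.

The log output looks like this: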

model_file=model/ppyoloe_s_4sim_uint8_t736.nb, input=model/dog.jpg, loop_count=1, malloc_mbyte=10

VIPLite driver software version 2.0.3.2-AW-2024-08-30

input 0 dim 3 640 640 1, data_format=2, quant_format=0, name=image_295_out0, none-quant

output 0 dim 20 20 80 1, data_format=0, name=uid_7_out_02d_67.tmp_0/out0_0_out0, none-quant

output 1 dim 40 40 80 1, data_format=0, name=uid_6_out_02d_74.tmp_0/out0_1_out0, none-quant

output 2 dim 80 80 80 1, data_format=0, name=uid_5_out_02d_81.tmp_0/out0_2_out0, none-quant

output 3 dim 4 8400 1 0, data_format=0, name=attach_p2o.Concat.31/out0_3_out0, none-quant

nbg name=model/ppyoloe_s_4sim_uint8_t736.nb, size: 6940848.

create network 0: 13647 us.

prepare network: 7327 us.

buffer ptr: 0xfad6600, buffer size: 1228800

ppyoloe preprocess completed: model/dog.jpg -> 640x640, buffer size: 1228800

feed input cost: 28212 us.

network: 0, loop count: 1

run time for this network 0: 22718 us.

detection num: 3

1: 81%, [ 126, 140, 566, 379], bicycle

16: 90%, [ 130, 220, 309, 541], dog

2: 87%, [ 467, 75, 690, 170], car

destory npu finished.

~NpuUint.
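A few numbers in this log can be cross-checked by hand. The sketch below recomputes the input buffer size, the total number of grid cells across outputs 0 to 2 (which should match the 8400 in output 3), and the frame rate implied by a single run. The constants are copied from the log above; the function names are illustrative, and reading 3 as the channel count at one byte per value is an assumption.

```cpp
#include <cstdint>

// Values copied from the board log.
const std::uint32_t kInputWidth = 640;
const std::uint32_t kInputHeight = 640;
const std::uint32_t kInputChannels = 3;
const std::uint32_t kGridSizes[3] = {20, 40, 80};  // spatial size of outputs 0..2

// Bytes needed for one input image, assuming one byte per channel value.
std::uint32_t input_buffer_bytes() {
    return kInputWidth * kInputHeight * kInputChannels;
}

// Total number of grid cells over the three feature maps.
std::uint32_t total_grid_cells() {
    std::uint32_t total = 0;
    for (std::uint32_t g : kGridSizes) {
        total += g * g;
    }
    return total;
}

// Inferences per second implied by one run time given in microseconds.
double frames_per_second(std::uint64_t run_time_us) {
    return 1e6 / static_cast<double>(run_time_us);
}
```

With the values from the log this gives 1228800 bytes (matching the reported buffer size), 8400 cells, and about 44 inferences per second for the 22718 us run. That last figure covers NPU run time only and excludes preprocessing and postprocessing.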

Result on the board:
