从零构建大模型智能体:CRITIC请外部工具当判官专治生成器瞎编

引言

Basic Reflection 让 LLM 自己审查自己的答案,但这种"闭门造车"的反思有一个根本缺陷:LLM 无法超越自身的知识边界,反思的依据还是模型自己的判断,并不比初始答案更可靠。

CRITIC(ICLR 2024,论文链接)给出了一个更可靠的解法:不靠 LLM 自己猜,而是用外部工具来验证关键事实,再基于工具结果驱动批评和修正。完整代码在:https://github.com/nlp-greyfoss/vero/tree/CRITIC

CRITIC

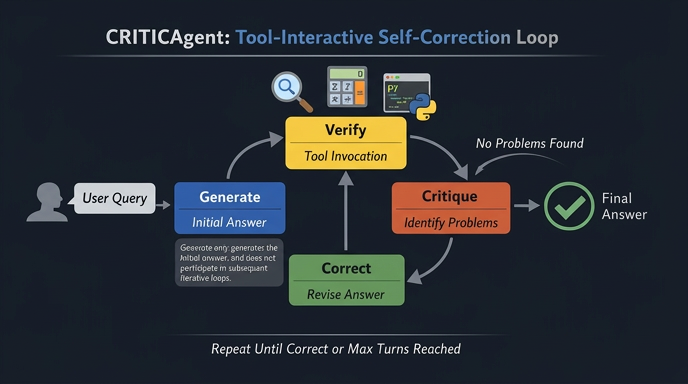

CRITIC 包含四个组件:

- 生成器(Generator):基于模型内部知识给出初始答案

- 验证器(Verifier):从答案中提取最关键的事实声明,调用外部工具(搜索、计算器等)验证

- 批评器(Critic):以工具结果为准,判断答案是否与之一致

- 修正器(Corrector):根据批评意见和已验证事实,重写答案

与 Basic Reflection 最大的区别在于:批评的依据不再是 LLM 的自我感觉,而是外部工具的客观结果。

整体流程:Generate → Verify → Critique → Correct,循环直到验证通过或达到最大轮次。

实现

生成器

DEFAULT_GENERATE_PROMPT = """You are a helpful assistant. Answer the user query as accurately as possible.

Current date: {current_date}. Use this if needed.

Output only the answer.

"""

生成器比较简单,直接基于模型的内部知识回答用户的问题。注意这里注入了当前日期,防止模型在时间相关问题上答非所问。

def _generate(self, user_input: str) -> str:

messages = [

Message.system(

DEFAULT_GENERATE_PROMPT.format(current_date=date.today().isoformat())

),

Message.user(user_input),

]

answer = self.llm.generate(messages).content or ""

return answer

验证器

验证器是 CRITIC 的核心,它分两步完成:

- 选择声明:让 LLM 从当前答案中挑出最关键的一条事实声明

- 调用工具:决定用哪个工具、用什么参数来验证这条声明

DEFAULT_VERIFY_PROMPT = """You are a verifier. Identify the single most critical factual claim about the answer that must be verified against the query requirements, then verify it with an external tool.

## Query

{query}

## Answer

{answer}

## Available Tools

{tool_descriptions}

Use the following format:

Claim: <the specific factual claim derived from the query requirements>

Action: <tool name>

Action Input: <tool input>

"""

LLM 的输出是结构化文本(Claim / Action / Action Input),用正则解析后直接调用对应工具:

def _verify(self, user_input: str, answer: str) -> VerifyResult:

user_prompt = DEFAULT_VERIFY_PROMPT.format(

query=user_input, answer=answer, tool_descriptions=self.tool_descriptions

)

raw_output = self.llm.generate([Message.user(user_prompt)]).content or ""

claim_match = CLAIM_PATTERN.search(raw_output)

action_match = ACTION_PATTERN.search(raw_output)

input_match = ACTION_INPUT_PATTERN.search(raw_output)

claim = claim_match.group(1).strip() if claim_match else ""

tool_name = action_match.group(1).strip() if action_match else ""

tool_input = input_match.group(1).strip() if input_match else ""

return VerifyResult(

claim=claim, result=self._handle_tool_call(tool_name, tool_input)

)

返回的 VerifyResult 包含声明本身(claim)和工具的原始输出(result),后两步都以此为依据。

批评器

批评器以工具结果为准,判断答案是否与之一致。这里有两种"有问题"的情形需要识别:

- 答案声称不知道,但工具已经给出了实际答案

- 答案的事实声明与工具结果矛盾

DEFAULT_CRITIQUE_PROMPT = """You are a critic. Your sole job is to check whether the answer is consistent with the Verification Result below.

Current date: {current_date}.

## Query

{query}

## Answer

{answer}

## Verified Claim

{claim}

## Verification Result

{verification}

Treat the Verification Result as ground truth. Does the answer correctly and completely reflect it?

- If the answer claims ignorance or inability to answer, but the Verification Result contains the actual answer, that is a problem.

- If the answer contains factual claims that contradict the Verification Result, that is a problem.

- If yes (the answer is accurate and complete relative to the Verification Result), output exactly: No problem.

- If no, state only what conflicts with the Verification Result. Do not raise concerns unrelated to the verified claim.

"""

如果批评器输出 No problem.,循环终止;否则触发修正。

def _critique(self, user_input: str, answer: str, vr: VerifyResult) -> str:

user_prompt = DEFAULT_CRITIQUE_PROMPT.format(

current_date=date.today().isoformat(),

query=user_input,

answer=answer,

claim=vr.claim,

verification=vr.result,

)

critique = self.llm.generate([Message.user(user_prompt)]).content or ""

return critique

修正器

修正器将验证结果和批评意见合并,重写答案。工具结果始终是最高优先级的"真相"。

DEFAULT_CORRECT_PROMPT = """You are a helpful assistant. Correct the answer based on the critique and the verified fact below.

Treat the Verification Result as ground truth. Produce only the corrected answer with no additional commentary.

## Query

{query}

## Answer

{answer}

## Verified Claim

{claim}

## Verification Result

{verification}

## Critique

{critique}

"""

def _correct(self, user_input: str, answer: str, vr: VerifyResult, critique: str) -> str:

user_prompt = DEFAULT_CORRECT_PROMPT.format(

query=user_input,

answer=answer,

claim=vr.claim,

verification=vr.result,

critique=critique,

)

corrected = self.llm.generate([Message.user(user_prompt)]).content or ""

return corrected

Loop

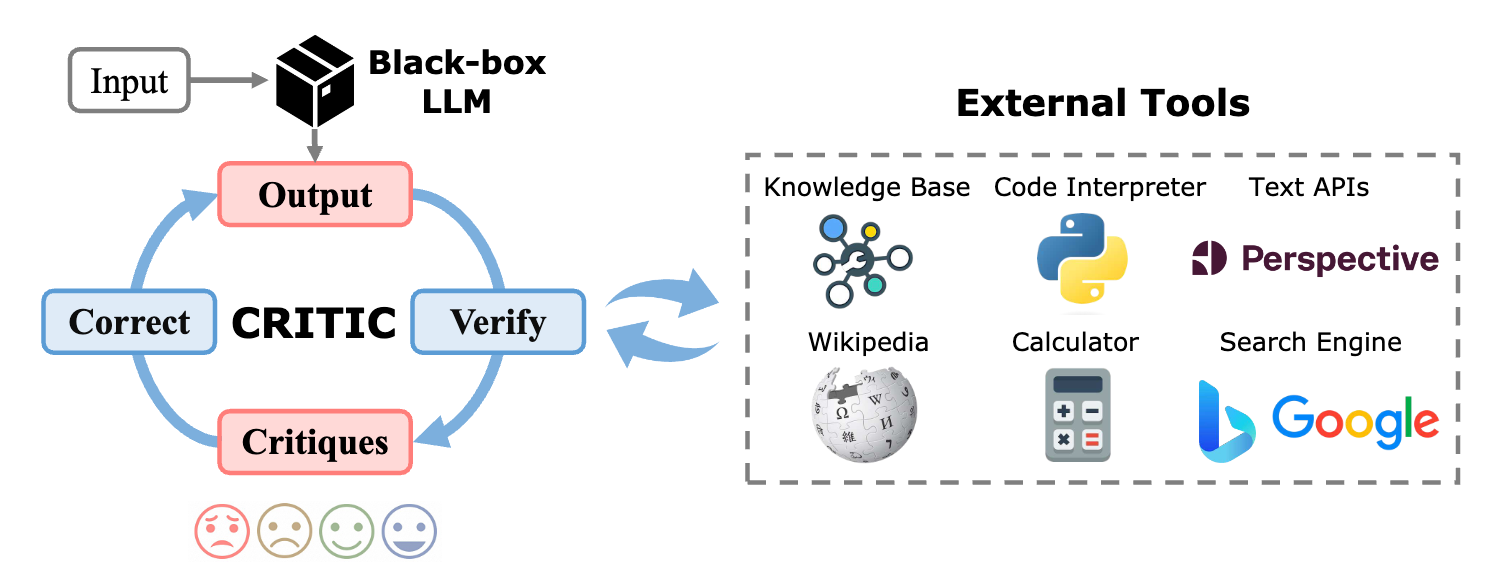

Loop如上图所示,来自参考论文1,将LLM作为一个黑盒,调用工具来验证结果。

整体循环比 Basic Reflection 多了 Verify 这一步,其余结构相似。每一轮先验证、再批评,只有批评器认可才退出。

def run(self, user_input: str) -> str:

answer = self._generate(user_input)

for turn_idx in range(1, self.max_turns + 1):

vr = self._verify(user_input, answer)

critique = self._critique(user_input, answer, vr)

if "no problem" in critique.lower():

break

answer = self._correct(user_input, answer, vr, critique)

return answer

实战

多跳问题

import time

import os

import random

from vero.core import ChatOpenAI, Agent

from vero.agents import *

from vero.tool.buildin import *

from vero.config import settings

tools = [calculate_math_expression, python_repl]

if settings.TAVILY_API_KEY:

tools.append(google_search)

elif settings.BOCHA_API_KEY:

tools.append(bocha_search)

else:

tools.append(duckduckgo_search)

def run_agent(agent_class: Agent, input_text: str, max_turns=5):

llm = ChatOpenAI()

agent: Agent = agent_class(

"test-agent",

llm,

tools=tools,

max_turns=max_turns,

)

return agent.run(input_text)

def run_multi_turn_agent(agent_class: Agent, max_turns=5):

llm = ChatOpenAI()

agent: Agent = agent_class(

"test-agent",

llm,

tools=tools,

max_turns=max_turns,

)

while True:

try:

# Ask for user input

user_input = input("You: ")

# Exit condition for the loop (if user types 'bye')

if user_input.lower() == "bye":

print("Exiting the conversation.")

break

# Run the agent with the current input

answer = agent.run(user_input)

print(f"Assistant: {answer}\n")

except KeyboardInterrupt:

print("\nConversation interrupted. Exiting gracefully.")

break

def test_single_turn_agent(agent_class: Agent, task: str, max_turns=5):

start = time.perf_counter()

answer = run_agent(

agent_class,

task,

max_turns=max_turns,

)

print(f"🏁 Final LLM Answer: {answer}\n")

print(f"⏳ Elapsed: {time.perf_counter() - start:.1f} s")

if __name__ == "__main__":

agent_class = CRITICAgent

task = """what is the hometown of the 2024 Australia open winner?"""

test_single_turn_agent(agent_class, task)

这次是一个多跳问题,首先要找到2024年澳网冠军,然后要找到其家乡。

🤖 Initializing LLM with model: gpt-4o-mini

🚀 Initializing CRITICAgent `test-agent` ...

🛠️ Registered tools: [<Tool calculate_math_expression>, <Tool python_repl>, <Tool google_search>]

==============================

👤 User Input: what is the hometown of the 2024 Australia open winner?

==============================

📝 Initial answer:

I'm sorry, but I can't provide information about the 2024 Australian Open winner, as my training data only goes up to October 2023, and the tournament has not yet occurred.

🔄 Turn 1/5

📤 Verify raw output:

Claim: The 2024 Australian Open winner's hometown is currently unknown since the tournament has not yet occurred as of the latest training data in October 2023.

Action: google_search

Action Input: "2024 Australian Open winner hometown"

🔎 Claim: The 2024 Australian Open winner's hometown is currently unknown since the tournament has not yet occurred as of the latest training data in October 2023.

🧩 Action: google_search

📦 Action Input: "2024 Australian Open winner hometown"

⚙️ Handling tool call for `google_search` ...

🔧 Executing tool `google_search` with params: "2024 Australian Open winner hometown"

📦 Tool result: Jannik Sinner won the 2024 Australian Open men's singles title; he is from Italy. Sinner's hometown is Collecchio.

🔍 Critique:

The answer claims ignorance about the 2024 Australian Open winner, but the Verification Result states that Jannik Sinner won the title and provides his hometown. This is a problem.

✏️ Corrected answer:

Jannik Sinner won the 2024 Australian Open men's singles title; his hometown is Collecchio, Italy.

🔄 Turn 2/5

📤 Verify raw output:

Claim: Jannik Sinner won the 2024 Australian Open men's singles title; his hometown is Collecchio, Italy.

Action: google_search

Action Input: "2024 Australian Open men's singles winner Jannik Sinner hometown"

🔎 Claim: Jannik Sinner won the 2024 Australian Open men's singles title; his hometown is Collecchio, Italy.

🧩 Action: google_search

📦 Action Input: "2024 Australian Open men's singles winner Jannik Sinner hometown"

⚙️ Handling tool call for `google_search` ...

🔧 Executing tool `google_search` with params: "2024 Australian Open men's singles winner Jannik Sinner hometown"

📦 Tool result: Jannik Sinner, born in Innichen, South Tyrol, Italy, won the 2024 Australian Open men's singles title. He grew up in Sexten, a town in the Dolomites. His mother tongue is German.

🔍 Critique:

The answer states that Jannik Sinner's hometown is Collecchio, Italy, but the Verification Result indicates he was born in Innichen, South Tyrol, and grew up in Sexten. Therefore, the answer is incorrect regarding his hometown.

✏️ Corrected answer:

Jannik Sinner won the 2024 Australian Open men's singles title; he was born in Innichen, South Tyrol, Italy, and grew up in Sexten.

🔄 Turn 3/5

📤 Verify raw output:

Claim: Jannik Sinner won the 2024 Australian Open men's singles title and was born in Innichen, South Tyrol, Italy.

Action: google_search

Action Input: "2024 Australian Open men's singles winner Jannik Sinner hometown"

🔎 Claim: Jannik Sinner won the 2024 Australian Open men's singles title and was born in Innichen, South Tyrol, Italy.

🧩 Action: google_search

📦 Action Input: "2024 Australian Open men's singles winner Jannik Sinner hometown"

⚙️ Handling tool call for `google_search` ...

🔧 Executing tool `google_search` with params: "2024 Australian Open men's singles winner Jannik Sinner hometown"

📦 Tool result: Jannik Sinner, born in Innichen, South Tyrol, Italy, won the 2024 Australian Open men's singles title. He grew up in Sexten, a town in the Dolomites. His mother tongue is German.

🔍 Critique:

No problem.

✅ Answer accepted — no problems found.

📤 Final Answer:

Jannik Sinner won the 2024 Australian Open men's singles title; he was born in Innichen, South Tyrol, Italy, and grew up in Sexten.

🏁 Final LLM Answer: Jannik Sinner won the 2024 Australian Open men's singles title; he was born in Innichen, South Tyrol, Italy, and grew up in Sexten.

⏳ Elapsed: 33.4 s

AI分析:

是的,这次回答是完全正确的。

我们可以拆解一下最终答案的事实细节:

- 冠军身份:Jannik Sinner(雅尼克·辛纳)确实赢得了2024年澳大利亚网球公开赛男单冠军(他在决赛中逆转击败了梅德韦杰夫)。

- 出生地:Innichen(意大利语名为 San Candido,中文常译为因尼兴或圣坎迪多),确实位于意大利北部的南蒂罗尔地区。

- 成长地:Sexten(意大利语名为 Sesto,中文常译为塞斯滕),同样位于南蒂罗尔的多洛米蒂山区。他的母语确实是德语,因为南蒂罗尔靠近奥地利边境。

除了答案正确之外,这段日志还非常精彩地展示了你运行的 CRITICAgent(带批判和自纠错机制的智能体)的强大之处:

- Turn 0 (初始回答):LLM因为训练数据截止日期(2023年10月)而拒绝回答,产生了“知识盲区”。

- Turn 1 (首次纠错):Agent 调用了 Google Search,找出了冠军是辛纳,但是搜索结果给错了家乡,说是 Collecchio(科莱基奥,这其实是辛纳加入网球俱乐部后长期训练的地方,或者赞助商关联地,不是他真正的家乡)。

- Turn 2 (深度纠错):Agent 再次搜索验证,敏锐地发现了上一次的漏洞(Critique指出 Collecchio 是错的),并根据新的搜索结果把家乡纠正为了 Innichen 和 Sexten。

- Turn 3 (最终验证):Agent 再次用同样的关键词搜索,确认修改后的信息与事实完全一致(No problem),最终输出答案。

总结:何时使用 CRITIC?

✅ 适用场景:

- 需要事实准确性的问答(时事、人物、数据等):LLM 的内部知识可能过时,CRITIC 可以通过搜索工具纠正

- 数学/计算类问题:通过计算器工具验证数值是否正确,彻底消除幻觉

- 任何需要与外部真实数据对齐的任务

❌ 不适用场景:

- 无工具或无法通过工具验证的任务:CRITIC 的优势来自外部工具,验证环节缺少可靠工具时,它退化为比 Basic Reflection 更慢、更贵的循环

- 需要跨轮记忆的场景:CRITIC 同样没有 episodic memory,想积累经验需要升级为 Reflexion

- 对延迟敏感的场景:每轮都有工具调用,加上 Generate / Verify / Critique / Correct 四次 LLM 调用,成本较高

CRITIC vs Basic Reflection:

| Basic Reflection | CRITIC | |

|---|---|---|

| 反思依据 | LLM 自身判断 | 外部工具结果 |

| 工具调用 | 无 | 每轮必有 |

| 事实纠错能力 | 弱(受知识边界限制) | 强(工具提供真实数据) |

| 适合场景 | 格式 / 风格 / 逻辑约束 | 事实准确性要求高的问答 |

CRITIC 的本质是将"事实验证"外包给工具,让批评有据可依。如果你的任务需要 LLM 超越自身知识边界去回答问题,CRITIC 是比 Basic Reflection 更合适的选择。

参考

AtomGit 是由开放原子开源基金会联合 CSDN 等生态伙伴共同推出的新一代开源与人工智能协作平台。平台坚持“开放、中立、公益”的理念,把代码托管、模型共享、数据集托管、智能体开发体验和算力服务整合在一起,为开发者提供从开发、训练到部署的一站式体验。

更多推荐

已为社区贡献3条内容

已为社区贡献3条内容

所有评论(0)