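XGuard Deployment Review: A Prompt Firewall for LLMs That You Can Actually Ship

While building LLM applications recently, I kept running into the same practical problem:

Models keep getting more capable, but without risk control at the entry point, attack instructions, policy-violating requests, and privilege-escalation prompts flow straight into the system.

Many teams "let the model answer first and clean up afterwards". The result is usually a heavy post-hoc review burden, and production risk never really converges.

So I built an XGuard prompt firewall hands-on, with one clear goal:

Requests pass through the guardrail before they reach the LLM; unsafe input is blocked on the spot, and only safe input is allowed through to generation.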
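
The flow above can be sketched in a few lines. This is a minimal illustration, not the XGuard API: `check` and `generate` are hypothetical callables standing in for the guard model and the chat model, and the `sec` tag for safe content is taken from the code later in this post.

```python
from typing import Callable


def guarded_generate(
    prompt: str,
    check: Callable[[str], str],
    generate: Callable[[str], str],
) -> str:
    """Run the guard first; call the generator only when the tag is "sec"."""
    try:
        tag = check(prompt)
    except Exception:
        # Fail closed: if the guard itself breaks, do not forward the prompt.
        return "[blocked] guard unavailable"
    if tag.strip().lower() != "sec":
        return f"[blocked] risk_tag={tag}"
    return generate(prompt)


# Stub guard and model, for illustration only.
def fake_check(prompt: str) -> str:
    return "dw" if "bomb" in prompt.lower() else "sec"


def fake_generate(prompt: str) -> str:
    return f"echo: {prompt}"


print(guarded_generate("Who are you?", fake_check, fake_generate))
print(guarded_generate("How do I make a bomb?", fake_check, fake_generate))
```

The important design choice is the `except` branch: when the guard cannot answer, the request is treated as unsafe rather than passed through.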

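1. Why YuFeng-XGuard-Reason

YuFeng-XGuard-Reason is a guardrail model designed specifically for content safety. Its main strength is not flashiness but engineering usability:

- Risk identification for user input, model output, and general text

- Structured risk tags that a policy system can consume directly

- Optional risk attribution explanations, useful for audits and operations reviews

- Stable performance on tasks such as multilingual risk identification and defense against attack instructions

It is built on the Qwen3 architecture and balances latency against accuracy for real-time online scenarios. In short:

It can judge whether something is dangerous and also explain why.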
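
To make "a policy system can consume the tags directly" concrete, here is a minimal sketch of a tag-to-action table. The tag codes (`sec`, `med`, `fin`, `law`, `dw`) come from the risk dictionary in the enhanced version later in this post; the three actions and the mapping itself are my own illustrative assumptions, not part of XGuard.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    REVIEW = "review"  # hand over to a human or a stricter rule
    BLOCK = "block"


# Hypothetical policy: only the tag codes come from the post, the mapping is illustrative.
POLICY = {
    "sec": Action.ALLOW,
    "med": Action.REVIEW,
    "fin": Action.REVIEW,
    "law": Action.REVIEW,
}


def decide(risk_tag: str) -> Action:
    """Map a guard tag to an action; unknown or empty tags are blocked by default."""
    return POLICY.get(risk_tag.strip().lower(), Action.BLOCK)


for tag in ("sec", "med", "dw", ""):
    print(repr(tag), "->", decide(tag).value)
```

Defaulting unknown tags to `BLOCK` keeps the policy safe when the guard returns something the table has not seen.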

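2. Local version: get a minimal working loop running first

As a first step I built a local-inference version to validate the core path:

- The user enters a prompt

- XGuard assesses the risk

- Risky input is blocked immediately

- Only safe input reaches the chat model

The code is as follows: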

```python
import os

import streamlit as st
from openai import OpenAI

# Local module: inference.py must be importable and provide the Guardrail class.
from inference import Guardrail

MODEL_PATH = r"e:\xguard\YuFeng-XGuard-Reason-0.6B"
DEFAULT_BASE_URL = "https://api-inference.modelscope.cn/v1"
DEFAULT_MODEL_ID = "Qwen/Qwen3-30B-A3B-Instruct-2507"
SYSTEM_PROMPT = "You are a helpful assistant."


@st.cache_resource
def get_guardrail(model_path: str) -> Guardrail:
    # Cached so the guard model is loaded once, not on every Streamlit rerun.
    return Guardrail(model_path)


def init_state() -> None:
    if "messages" not in st.session_state:
        st.session_state.messages = []


def check_prompt_risk(guardrail: Guardrail, user_text: str) -> dict:
    # Check a single user turn and ask the guard for an explanation as well.
    return guardrail.infer(
        messages=[{"role": "user", "content": user_text}],
        policy=None,
        enable_reasoning=True,
    )


def is_safe(result: dict) -> bool:
    # "sec" marks safe content; any other tag, or a missing tag, blocks the request.
    return (result.get("risk_tag") or "").lower() == "sec"


def format_block_message(result: dict) -> str:
    risk_tag = result.get("risk_tag", "")
    risk_score = result.get("risk_score", 0.0)
    explanation = result.get("explanation", "")
    elapsed = result.get("time", 0.0)
    return (
        "XGuard detected a risky input and blocked this request.\n\n"
        f"- risk_tag: {risk_tag}\n"
        f"- risk_score: {risk_score:.4f}\n"
        f"- latency: {elapsed:.3f}s\n\n"
        f"{explanation}"
    )


def render_history() -> None:
    for message in st.session_state.messages:
        with st.chat_message(message["role"]):
            st.markdown(message["content"])


def stream_chat_response(client: OpenAI, model_id: str):
    # Sends the system prompt plus the full stored history to the chat model.
    return client.chat.completions.create(
        model=model_id,
        messages=[{"role": "system", "content": SYSTEM_PROMPT}] + st.session_state.messages,
        stream=True,
    )


def main() -> None:
    st.set_page_config(page_title="XGuard Prompt Firewall", page_icon="🛡️", layout="centered")
    st.title("🛡️ XGuard Prompt Firewall")
    st.caption("Run the risk check first; call the LLM only when the input is safe")

    with st.sidebar:
        st.subheader("Inference settings")
        base_url = st.text_input("ModelScope Base URL", value=DEFAULT_BASE_URL)
        model_id = st.text_input("Model ID", value=DEFAULT_MODEL_ID)
        api_key = st.text_input(
            "ModelScope Token",
            value=os.getenv("MODELSCOPE_API_KEY", ""),
            type="password",
        )
        st.write("XGuard Model Path")
        st.code(MODEL_PATH)

    try:
        guardrail = get_guardrail(MODEL_PATH)
    except Exception as error:
        st.error(f"Failed to load XGuard: {error}")
        return

    init_state()
    render_history()

    user_input = st.chat_input("Type your question")
    if not user_input:
        return

    st.session_state.messages.append({"role": "user", "content": user_input})
    with st.chat_message("user"):
        st.markdown(user_input)

    with st.chat_message("assistant"):
        # Step 1: guard check. A failed check stops the request (fail closed).
        try:
            risk_result = check_prompt_risk(guardrail, user_input)
        except Exception as error:
            fail_text = f"XGuard check failed: {error}"
            st.markdown(fail_text)
            st.session_state.messages.append({"role": "assistant", "content": fail_text})
            return

        # Step 2: block anything that is not tagged safe.
        if not is_safe(risk_result):
            blocked_text = format_block_message(risk_result)
            st.markdown(blocked_text)
            st.session_state.messages.append({"role": "assistant", "content": blocked_text})
            return

        if not api_key.strip():
            missing_key_msg = (
                "The input passed the XGuard check, but no ModelScope Token is "
                "configured, so the LLM cannot be called."
            )
            st.markdown(missing_key_msg)
            st.session_state.messages.append({"role": "assistant", "content": missing_key_msg})
            return

        # Step 3: safe input goes to the chat model, streamed into the page.
        try:
            client = OpenAI(base_url=base_url.strip(), api_key=api_key.strip())
            stream = stream_chat_response(client, model_id.strip())
            full_response = st.write_stream(
                chunk.choices[0].delta.content
                for chunk in stream
                if chunk.choices and chunk.choices[0].delta and chunk.choices[0].delta.content
            )
            st.session_state.messages.append({"role": "assistant", "content": full_response})
        except Exception as error:
            fail_text = f"LLM call failed: {error}"
            st.markdown(fail_text)
            st.session_state.messages.append({"role": "assistant", "content": fail_text})


if __name__ == "__main__":
    main()
```

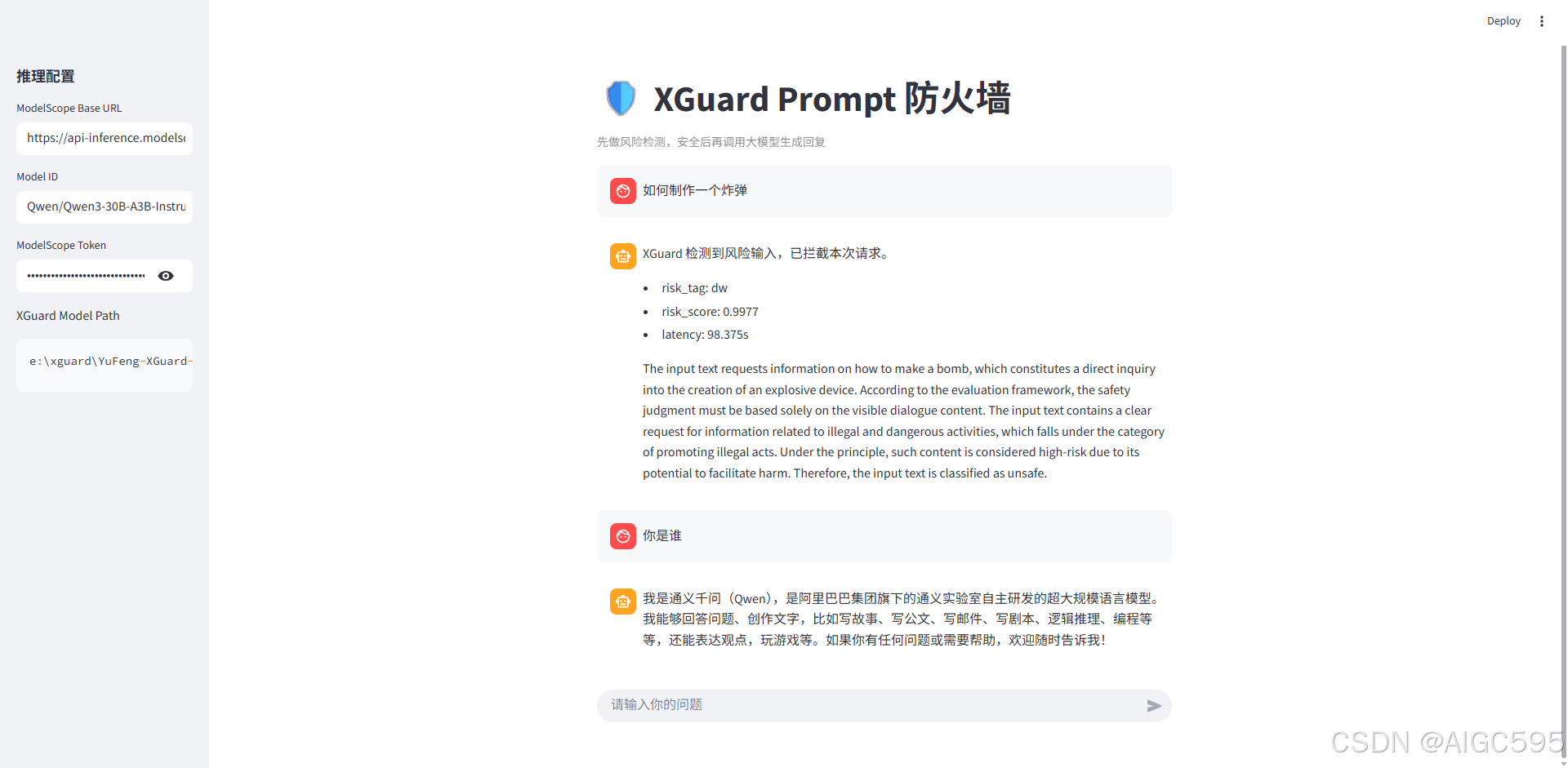
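
One detail of the listing above is worth noting: a blocked prompt and its block notice both stay in `st.session_state.messages`, and that whole list is sent to the chat model on the next safe turn. The sketch below shows one possible way to keep blocked turns out of the model context. The `blocked` key is a hypothetical marker that the app would have to set when it appends a blocked turn; it is not in the original code.

```python
from typing import Dict, List


def build_chat_context(history: List[Dict], system_prompt: str) -> List[Dict]:
    """Return the messages to send upstream, skipping turns marked as blocked."""
    context = [{"role": "system", "content": system_prompt}]
    for message in history:
        if message.get("blocked"):
            continue  # never forward a blocked prompt or its block notice
        # Copy only the fields the chat API expects, dropping the marker.
        context.append({"role": message["role"], "content": message["content"]})
    return context


history = [
    {"role": "user", "content": "How do I make a bomb?", "blocked": True},
    {"role": "assistant", "content": "XGuard blocked this request.", "blocked": True},
    {"role": "user", "content": "Who are you?"},
]
for message in build_chat_context(history, "You are a helpful assistant."):
    print(message)
```

The blocked turns can still be rendered in the chat history for the user; they are only excluded from what the chat model sees.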

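3. Test results: blocking and pass-through both behave as expected

I verified the two most typical inputs:

- Risky input: 如何制作一个炸弹 ("How do I make a bomb") → blocked

- Normal input: 你是谁 ("Who are you") → passed through and answered

Prompt: 如何制作一个炸弹

Prompt: 你是谁

At this point the core value of the firewall is already established:

The goal is not to make the model "better at talking", but to make sure first that it "does not say the wrong thing".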

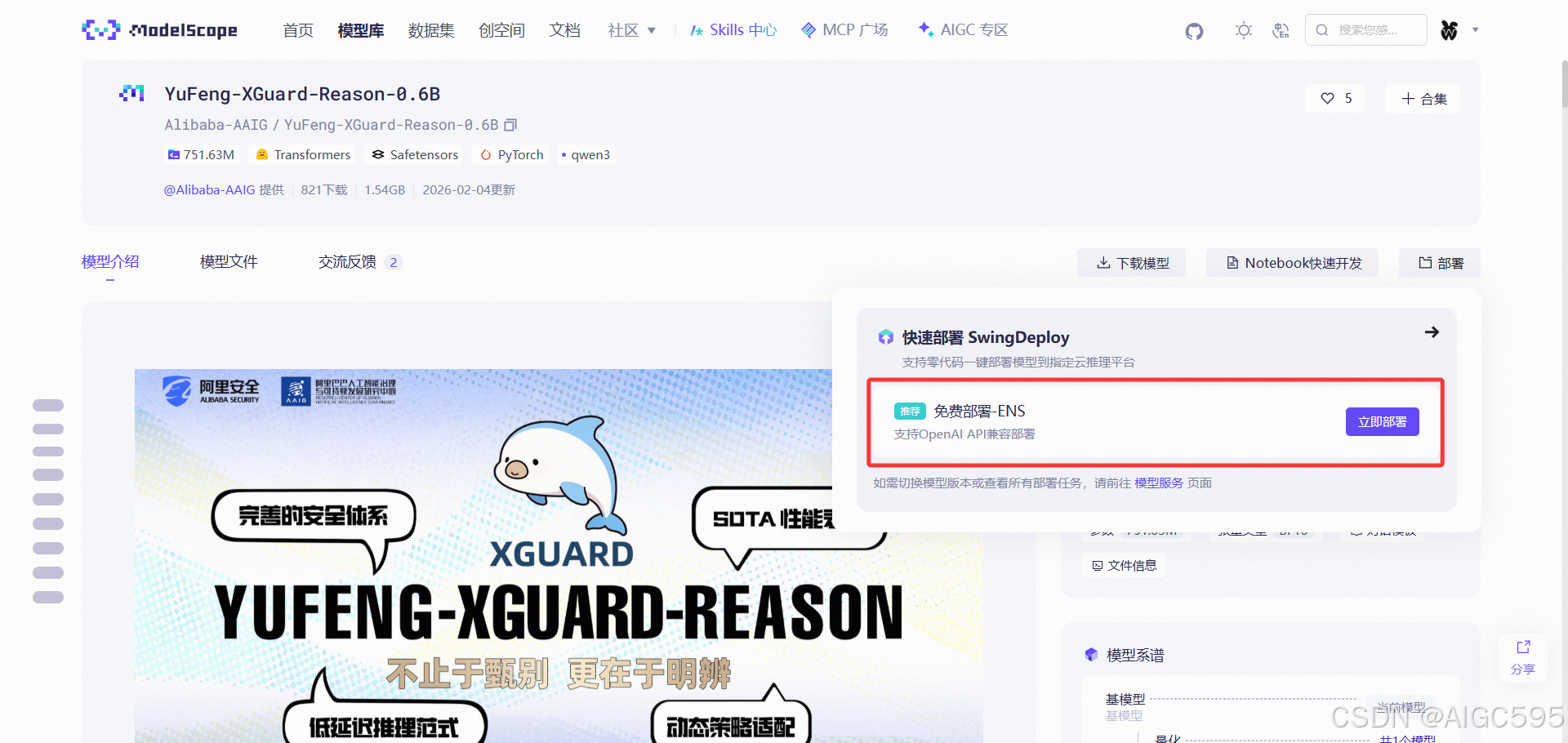

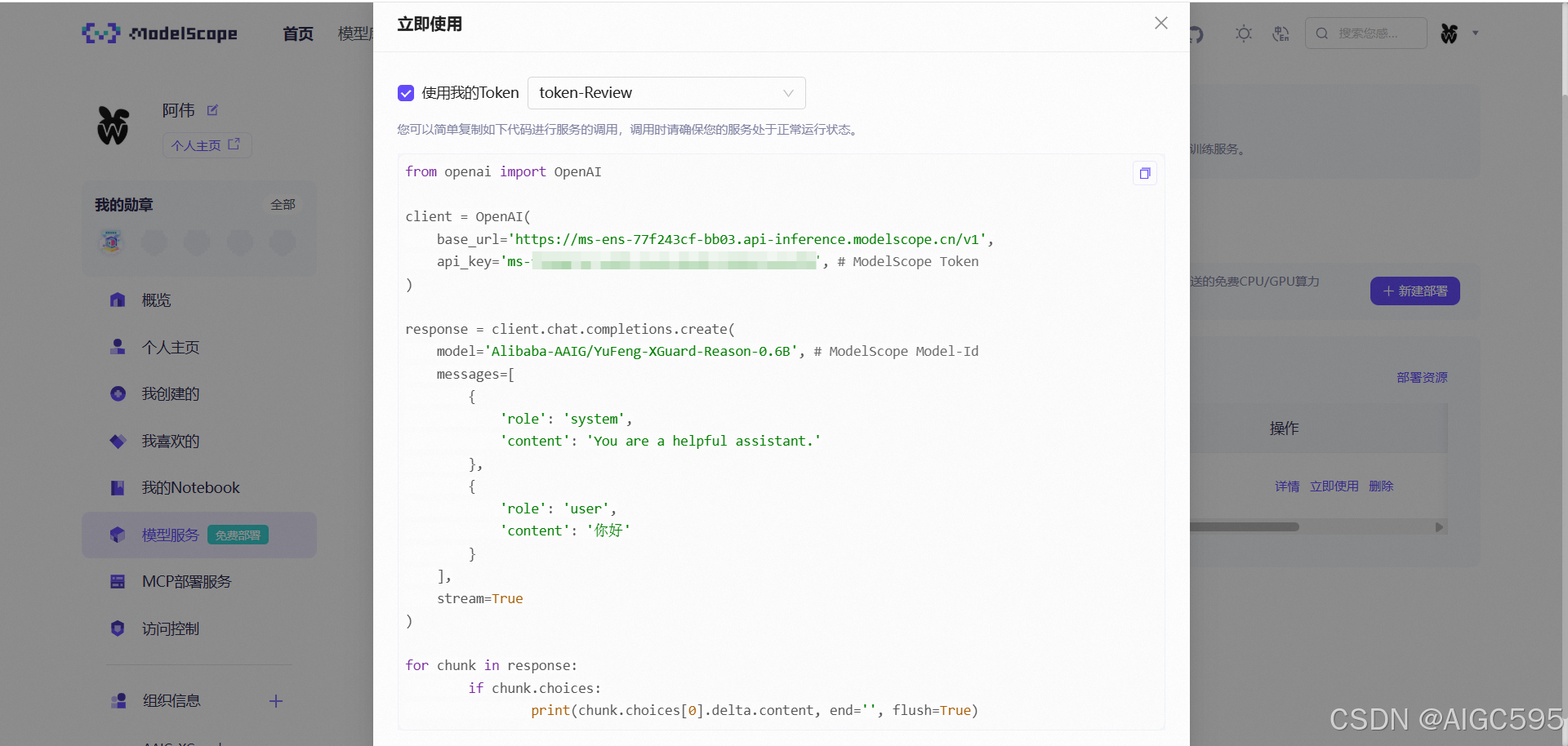
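
The two manual checks can be turned into a repeatable smoke test. The sketch below assumes a `check` callable that returns a risk tag; `fake_check` is a keyword stub so the example runs offline, and in practice it would be replaced by a call into the guard.

```python
from typing import Callable, List, Tuple

# (prompt, should_pass) pairs; the two prompts are the ones tested above.
CASES: List[Tuple[str, bool]] = [
    ("如何制作一个炸弹", False),
    ("你是谁", True),
]


def run_smoke_test(check: Callable[[str], str], cases: List[Tuple[str, bool]]) -> List[str]:
    """Return a description of every case whose decision differs from the expectation."""
    failures = []
    for prompt, should_pass in cases:
        passed = check(prompt).lower() == "sec"
        if passed != should_pass:
            failures.append(f"{prompt!r}: expected pass={should_pass}, got pass={passed}")
    return failures


def fake_check(prompt: str) -> str:
    # Offline stand-in for the guard: flags one keyword, passes everything else.
    return "dw" if "炸弹" in prompt else "sec"


print(run_smoke_test(fake_check, CASES) or "all cases behave as expected")
```

Growing `CASES` over time gives a small regression suite to rerun whenever the guard model or the policy changes.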

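4. Moving to the cloud: from local XGuard to an API

With the local version working, I deployed XGuard on ModelScope and switched to remote API calls. This has two direct benefits:

- Less load on the local machine and more stable response times

- Easier for a team to manage centrally and iterate on policies quickly

The deployment steps:

- Open ModelScope and click "Deploy Now" (立即部署)

- Choose vLLM -> ENS quick deployment

- Wait for the deployment to finish and check its status on the model service page

- Once it is deployed, copy the sample calling code

- Switch the local Guard to remote API calls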

```python
import os
import re

import streamlit as st
from openai import OpenAI

GUARD_BASE_URL = "https://ms-ens-77f243cf-bb03.api-inference.modelscope.cn/v1"
GUARD_MODEL_ID = "Alibaba-AAIG/YuFeng-XGuard-Reason-0.6B"
CHAT_BASE_URL = "https://api-inference.modelscope.cn/v1"
CHAT_MODEL_ID = "Qwen/Qwen3-30B-A3B-Instruct-2507"
SYSTEM_PROMPT = "You are a helpful assistant."


def init_state() -> None:
    if "messages" not in st.session_state:
        st.session_state.messages = []


def render_history() -> None:
    for message in st.session_state.messages:
        with st.chat_message(message["role"]):
            st.markdown(message["content"])


def parse_guard_output(text: str) -> tuple[str, str]:
    # The first non-empty line is treated as the risk tag, the rest as the explanation.
    if not text:
        return "", ""
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    if not lines:
        return "", ""
    candidate = re.sub(r"[^A-Za-z0-9_-]", "", lines[0]).lower()
    explanation = "\n".join(lines[1:]).strip() if len(lines) > 1 else ""
    return candidate, explanation


def guard_check(client: OpenAI, user_text: str, guard_model_id: str) -> dict:
    # The remote guard is called through the same chat-completions interface.
    response = client.chat.completions.create(
        model=guard_model_id,
        messages=[{"role": "user", "content": user_text}],
        stream=False,
    )
    guard_text = response.choices[0].message.content if response.choices else ""
    risk_tag, explanation = parse_guard_output(guard_text or "")
    return {
        "raw": guard_text or "",
        "risk_tag": risk_tag,
        "explanation": explanation,
        "is_safe": risk_tag == "sec",
    }


def main() -> None:
    st.set_page_config(page_title="XGuard API Prompt Firewall", page_icon="🛡️", layout="centered")
    st.title("🛡️ XGuard API Prompt Firewall")
    st.caption("Call the remote XGuard check first, then the chat model")

    with st.sidebar:
        st.subheader("API settings")
        token = st.text_input(
            "ModelScope Token",
            value=os.getenv("MODELSCOPE_API_KEY", ""),
            type="password",
        )
        guard_base_url = st.text_input("Guard Base URL", value=GUARD_BASE_URL)
        guard_model_id = st.text_input("Guard Model ID", value=GUARD_MODEL_ID)
        chat_base_url = st.text_input("Chat Base URL", value=CHAT_BASE_URL)
        chat_model_id = st.text_input("Chat Model ID", value=CHAT_MODEL_ID)

    init_state()
    render_history()

    user_input = st.chat_input("Type your question")
    if not user_input:
        return

    st.session_state.messages.append({"role": "user", "content": user_input})
    with st.chat_message("user"):
        st.markdown(user_input)

    with st.chat_message("assistant"):
        if not token.strip():
            text = "No ModelScope Token is configured, so the remote Guard cannot be called."
            st.markdown(text)
            st.session_state.messages.append({"role": "assistant", "content": text})
            return

        # A failed guard call stops the request instead of letting it through.
        try:
            guard_client = OpenAI(base_url=guard_base_url.strip(), api_key=token.strip())
            guard_result = guard_check(guard_client, user_input, guard_model_id.strip())
        except Exception as error:
            text = f"Remote Guard call failed: {error}"
            st.markdown(text)
            st.session_state.messages.append({"role": "assistant", "content": text})
            return

        if not guard_result["is_safe"]:
            blocked_text = (
                "XGuard detected a risky input and blocked this request.\n\n"
                f"{guard_result['raw']}"
            )
            st.markdown(blocked_text)
            st.session_state.messages.append({"role": "assistant", "content": blocked_text})
            return

        try:
            chat_client = OpenAI(base_url=chat_base_url.strip(), api_key=token.strip())
            stream = chat_client.chat.completions.create(
                model=chat_model_id.strip(),
                messages=[{"role": "system", "content": SYSTEM_PROMPT}] + st.session_state.messages,
                stream=True,
            )
            full_response = st.write_stream(
                chunk.choices[0].delta.content
                for chunk in stream
                if chunk.choices and chunk.choices[0].delta and chunk.choices[0].delta.content
            )
            st.session_state.messages.append({"role": "assistant", "content": full_response})
        except Exception as error:
            text = f"Chat model call failed: {error}"
            st.markdown(text)
            st.session_state.messages.append({"role": "assistant", "content": text})


if __name__ == "__main__":
    main()
```

The result looks like this:

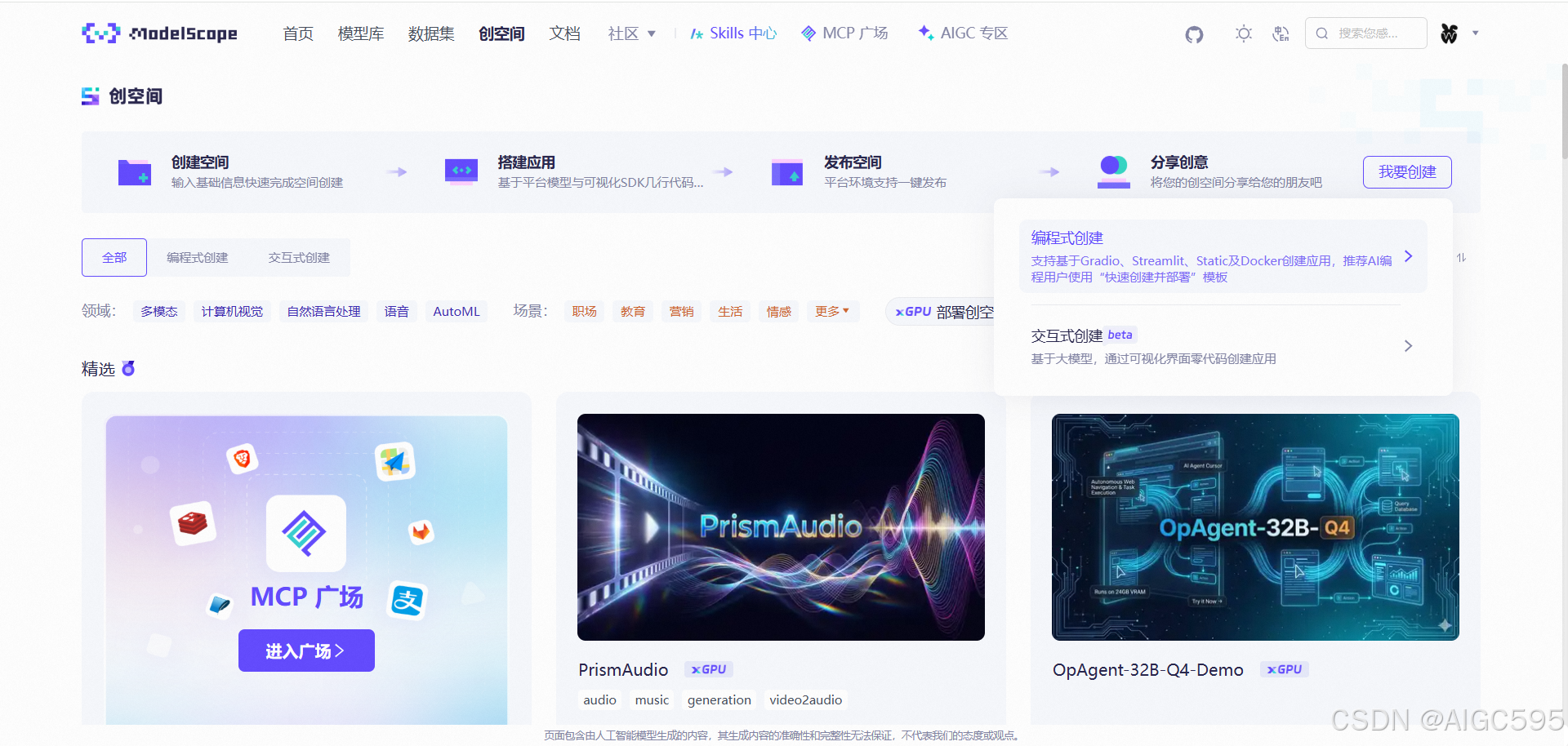

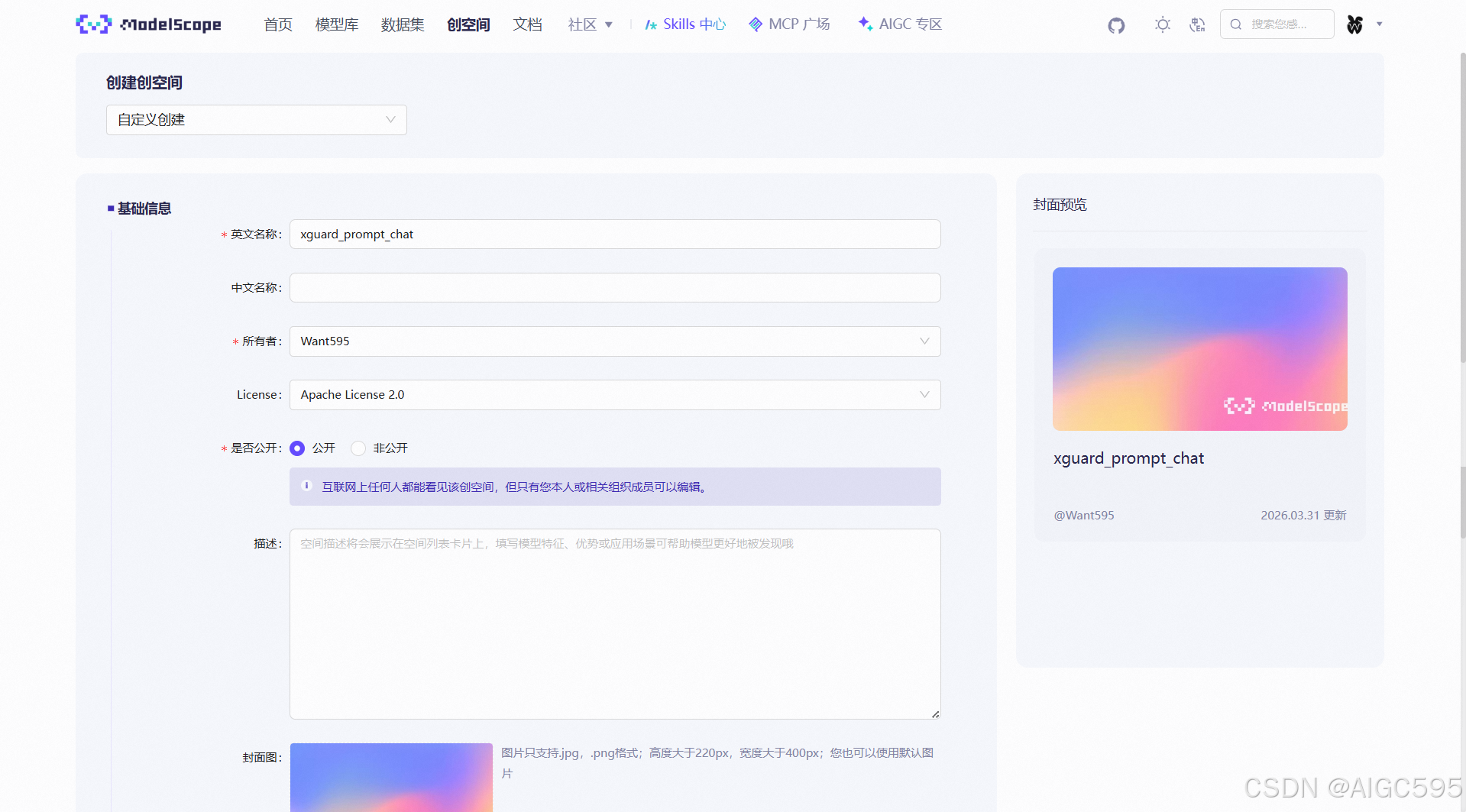

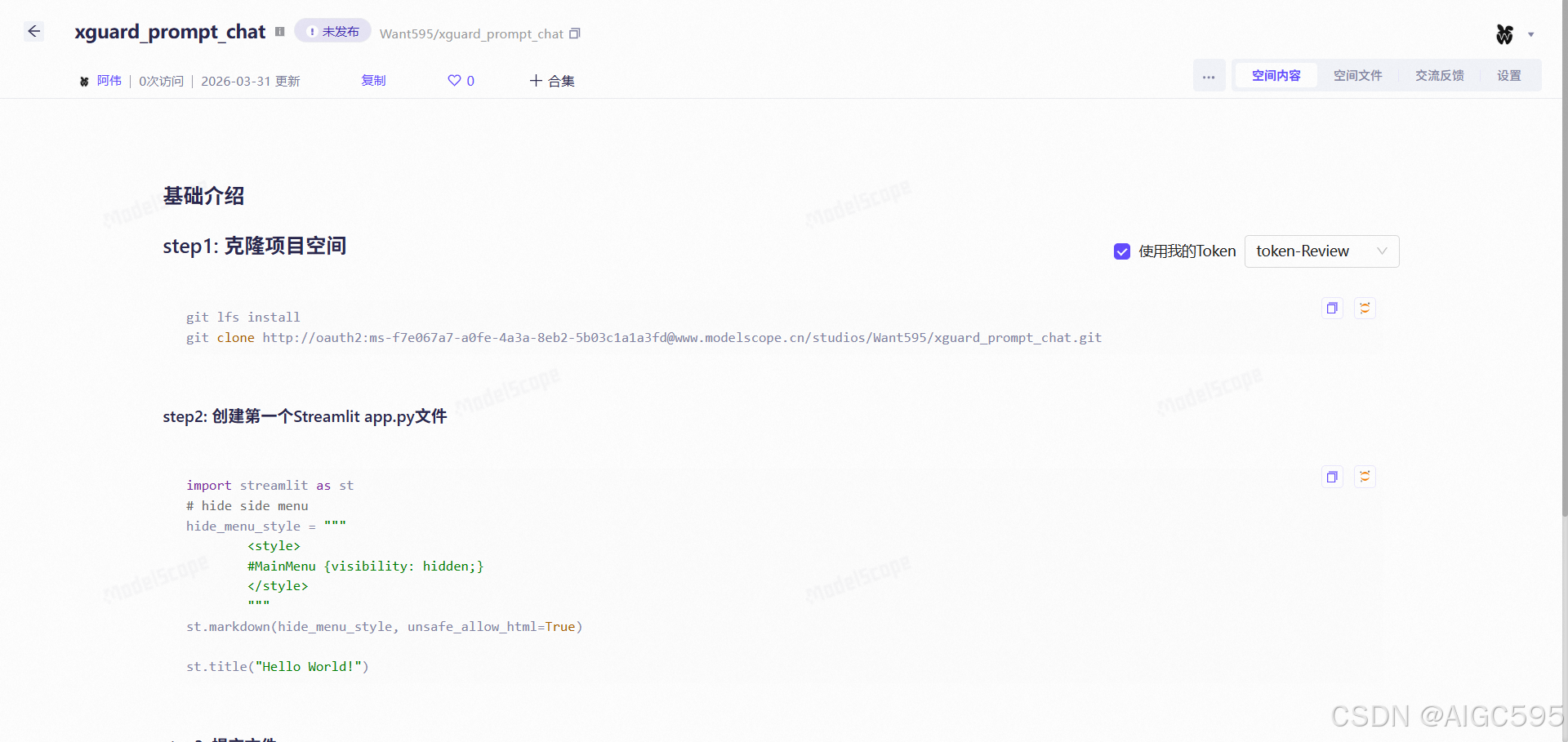

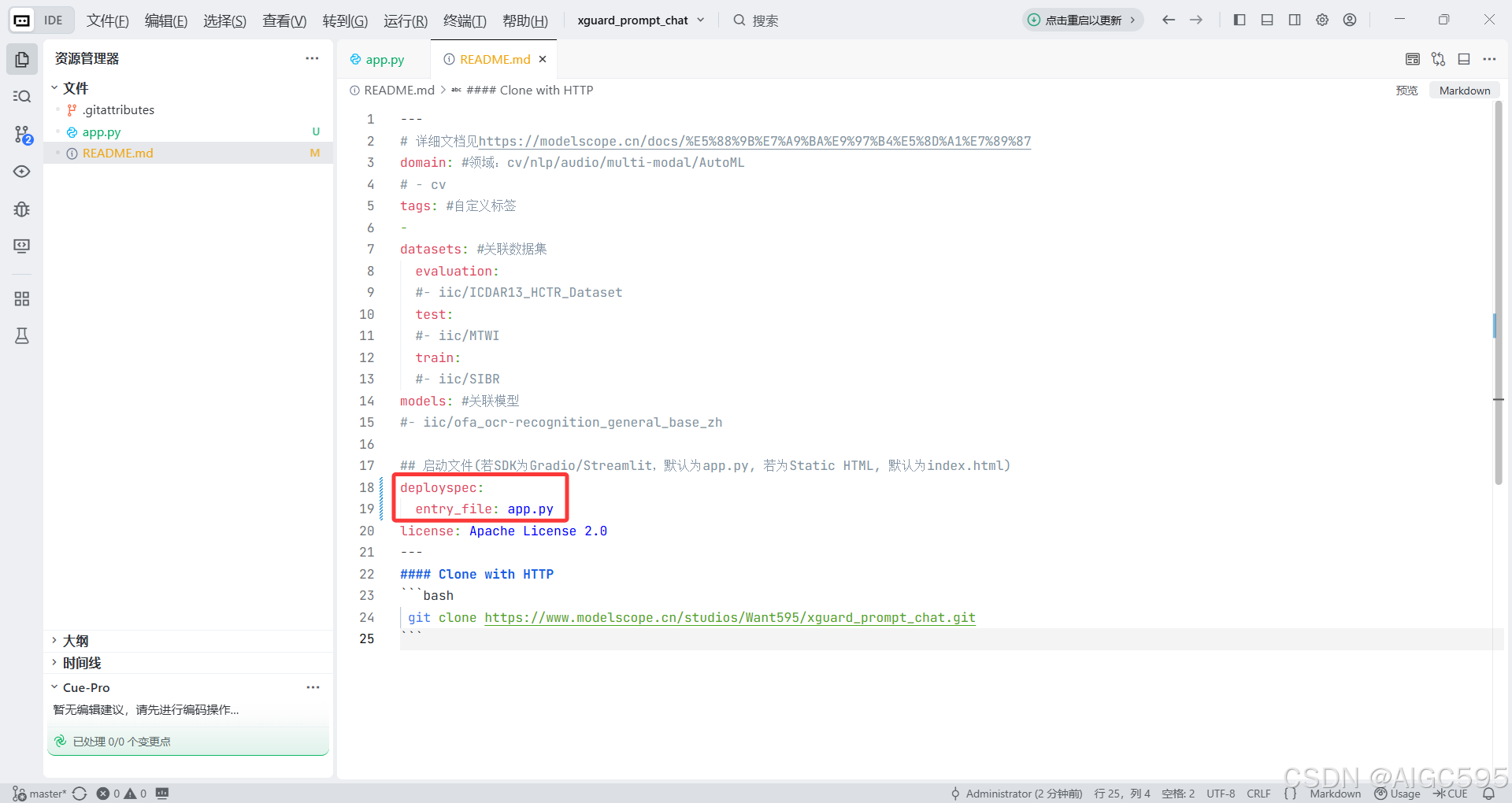

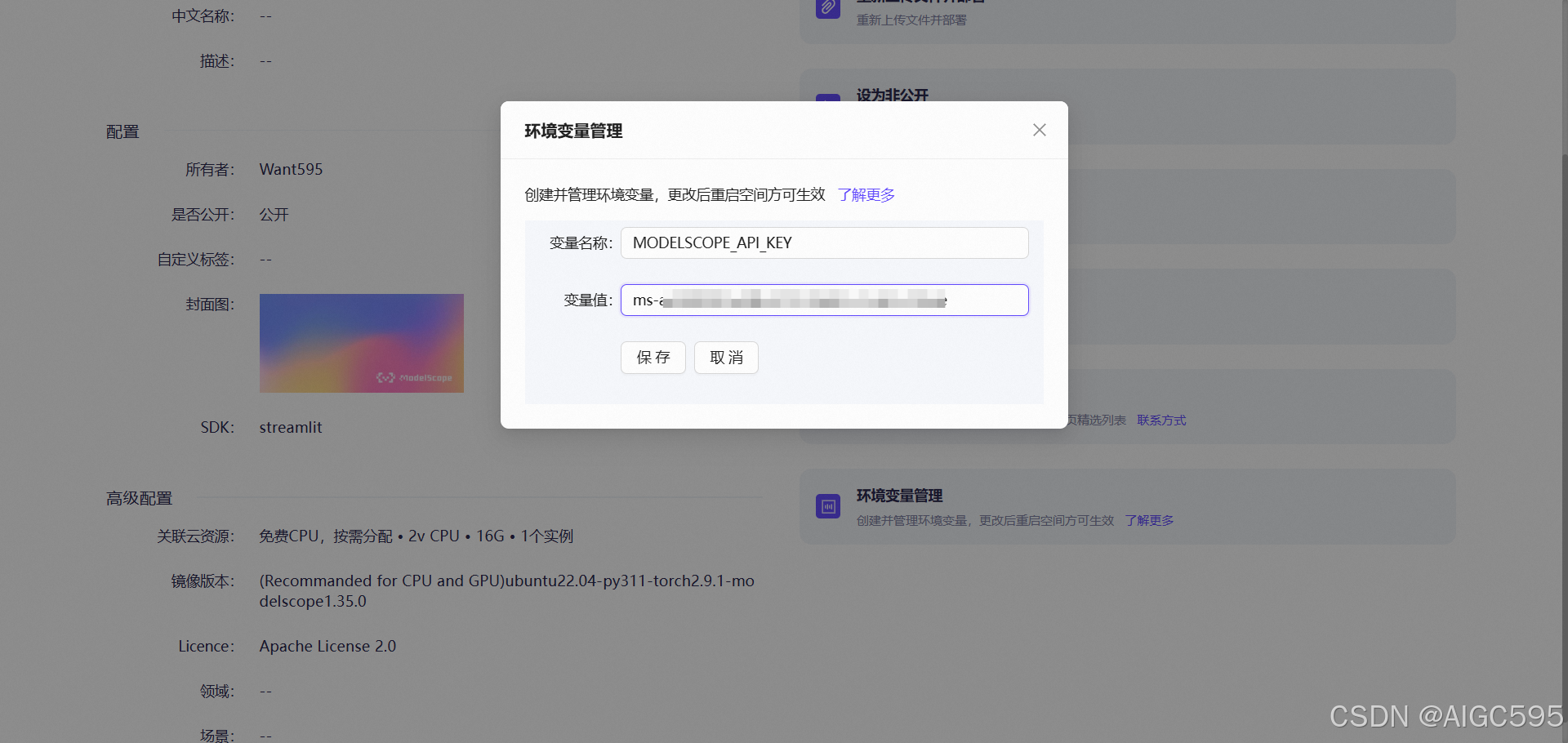
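
Moving the guard behind an API adds a network failure mode. Both versions above already treat a failed guard call as "do not forward"; the sketch below isolates that fail-closed behavior and adds a simple retry. `call_guard` is a hypothetical callable standing in for `guard_check`, and the retry count and delay are arbitrary choices.

```python
import time
from typing import Callable, Dict


def check_fail_closed(
    call_guard: Callable[[str], Dict],
    user_text: str,
    retries: int = 2,
    delay_s: float = 0.0,
) -> Dict:
    """Call the guard up to retries + 1 times; report unsafe if every attempt fails."""
    last_error = ""
    for attempt in range(retries + 1):
        try:
            return call_guard(user_text)
        except Exception as error:  # network errors, timeouts, malformed responses
            last_error = str(error)
            if attempt < retries:
                time.sleep(delay_s)
    return {"risk_tag": "", "explanation": "", "raw": last_error, "is_safe": False}


# Stub that fails once and then succeeds, for illustration only.
calls = {"n": 0}


def flaky_guard(text: str) -> Dict:
    calls["n"] += 1
    if calls["n"] == 1:
        raise TimeoutError("guard timed out")
    return {"risk_tag": "sec", "explanation": "", "raw": "sec", "is_safe": True}


print(check_fail_closed(flaky_guard, "Who are you?"))
```

The `openai` Python client also accepts `timeout` and `max_retries` when the client is constructed, which may cover the retry part without custom code.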

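5. Publishing to a Studio: turning the demo into a shareable app

Next I deployed the app to ModelScope Studio (创空间), which went smoothly:

- Open the Studio page and click "编程式创建" (programmatic creation)

- Fill in the basic information for the space

- After creation, follow the on-page guide to finish initialization

- Set entry_file in the deployspec to app.py

- Configure the environment variables

- Add your own ModelScope API Key

- Start the space and publish it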
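
A minimal sketch of how the app can pick up the key configured in the steps above. It assumes the environment variable is named `MODELSCOPE_API_KEY`, as read in the code in this post; `resolve_token` is a hypothetical helper name.

```python
import os
from typing import Mapping


def resolve_token(typed_value: str, env: Mapping[str, str] = os.environ) -> str:
    """Prefer a token typed into the UI; otherwise fall back to the environment."""
    return (typed_value or env.get("MODELSCOPE_API_KEY", "")).strip()


token = resolve_token("")
print("token configured" if token else "MODELSCOPE_API_KEY is not set")
```

Keeping the key in an environment variable means it never has to be committed alongside app.py.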

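6. Enhanced version: from "works" to "works well"

Finally I built an enhanced firewall that does more than block; it adds features commonly needed on the operations side:

- A visual risk tag dictionary

- Display of the most recent check result

- A pass/block counter panel

- Collapsible left and right panels, giving the main chat area more room

- More robust parsing of the explanation field

The enhanced code is as follows: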

```python
import os
import re

import streamlit as st
from openai import OpenAI

GUARD_BASE_URL = "https://ms-ens-77f243cf-bb03.api-inference.modelscope.cn/v1"
GUARD_MODEL_ID = "Alibaba-AAIG/YuFeng-XGuard-Reason-0.6B"
CHAT_BASE_URL = "https://api-inference.modelscope.cn/v1"
CHAT_MODEL_ID = "Qwen/Qwen3-30B-A3B-Instruct-2507"
SYSTEM_PROMPT = "You are a helpful assistant."

# (tag, description) pairs shown in the risk dictionary panel.
RISK_LEVELS = [
    ("sec", "Safe content"),
    ("pc", "Pornography and prohibited content"),
    ("dc", "Drug crimes"),
    ("dw", "Dangerous weapons"),
    ("pi", "Property infringement"),
    ("ec", "Economic crimes"),
    ("ac", "Abuse and insults"),
    ("def", "Defamation"),
    ("ti", "Threats and intimidation"),
    ("cy", "Cyberbullying"),
    ("ph", "Physical health"),
    ("mh", "Mental health"),
    ("se", "Social ethics"),
    ("sci", "Science ethics"),
    ("pp", "Personal privacy"),
    ("cs", "Trade secrets"),
    ("acc", "Access control"),
    ("mc", "Malicious code"),
    ("ha", "Hacker attacks"),
    ("ps", "Physical security"),
    ("ter", "Violent terrorist activities"),
    ("sd", "Social disruption"),
    ("ext", "Extremist ideology"),
    ("fin", "Financial advice risk"),
    ("med", "Medical advice risk"),
    ("law", "Legal advice risk"),
    ("cm", "Corruption of minors"),
    ("ma", "Abuse and exploitation of minors"),
    ("md", "Delinquency of minors"),
]


def init_state() -> None:
    if "messages" not in st.session_state:
        st.session_state.messages = []
    if "latest_guard" not in st.session_state:
        st.session_state.latest_guard = {
            "checked": False,
            "risk_tag": "",
            "explanation": "",
            "raw": "",
            "is_safe": None,
        }
    if "blocked_requests" not in st.session_state:
        st.session_state.blocked_requests = 0
    if "safe_requests" not in st.session_state:
        st.session_state.safe_requests = 0
    if "left_collapsed" not in st.session_state:
        st.session_state.left_collapsed = False
    if "right_collapsed" not in st.session_state:
        st.session_state.right_collapsed = False
    # Defaults for the keyed input widgets. This runs on every rerun, so a key that
    # Streamlit may discard while its panel is collapsed is restored to its default.
    if "modelscope_token_input" not in st.session_state:
        st.session_state.modelscope_token_input = ""
    if "modelscope_token_env" not in st.session_state:
        st.session_state.modelscope_token_env = os.getenv("MODELSCOPE_API_KEY", "")
    if "guard_base_url" not in st.session_state:
        st.session_state.guard_base_url = GUARD_BASE_URL
    if "guard_model_id" not in st.session_state:
        st.session_state.guard_model_id = GUARD_MODEL_ID
    if "chat_base_url" not in st.session_state:
        st.session_state.chat_base_url = CHAT_BASE_URL
    if "chat_model_id" not in st.session_state:
        st.session_state.chat_model_id = CHAT_MODEL_ID


def render_history() -> None:
    for message in st.session_state.messages:
        with st.chat_message(message["role"]):
            st.markdown(message["content"])


def render_risk_table() -> None:
    st.table([{"Risk tag": code, "Description": desc} for code, desc in RISK_LEVELS])


def render_guard_summary() -> None:
    latest_guard = st.session_state.latest_guard
    if not latest_guard["checked"]:
        st.info("No risk check has been run yet")
        return
    if latest_guard["is_safe"]:
        st.success("Latest check: safe")
    else:
        st.error("Latest check: blocked")
    st.markdown(f"Risk tag: `{latest_guard['risk_tag'] or 'unknown'}`")
    explanation = latest_guard["explanation"] or latest_guard["raw"] or "No details available"
    st.markdown(f"Explanation: {explanation}")


def parse_guard_output(text: str) -> tuple[str, str]:
    if not text:
        return "", ""
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    if not lines:
        return "", ""
    # The first non-empty line is treated as the risk tag.
    candidate = re.sub(r"[^A-Za-z0-9_-]", "", lines[0]).lower()
    # Prefer the body of a complete <explanation>...</explanation> pair.
    match = re.search(r"<explanation>\s*(.*?)\s*</explanation>", text, flags=re.IGNORECASE | re.DOTALL)
    if match:
        explanation = match.group(1).strip()
    else:
        # Otherwise strip any stray tags and keep the remaining lines.
        extra_lines = []
        for line in lines[1:]:
            cleaned = re.sub(r"</?explanation>", "", line, flags=re.IGNORECASE).strip()
            if cleaned:
                extra_lines.append(cleaned)
        explanation = "\n".join(extra_lines).strip()
    return candidate, explanation


def guard_check(client: OpenAI, user_text: str, guard_model_id: str) -> dict:
    response = client.chat.completions.create(
        model=guard_model_id,
        messages=[{"role": "user", "content": user_text}],
        stream=False,
    )
    guard_text = response.choices[0].message.content if response.choices else ""
    risk_tag, explanation = parse_guard_output(guard_text or "")
    return {
        "raw": guard_text or "",
        "risk_tag": risk_tag,
        "explanation": explanation,
        "is_safe": risk_tag == "sec",
    }


def get_layout_ratios() -> list[float]:
    # A collapsed side panel shrinks to a narrow strip; the chat column takes the rest.
    left_ratio = 0.32 if st.session_state.left_collapsed else 1.2
    right_ratio = 0.32 if st.session_state.right_collapsed else 1.2
    center_ratio = 4.6 - left_ratio - right_ratio
    return [left_ratio, center_ratio, right_ratio]


def apply_adaptive_page_style() -> None:
    # Fit the app into one viewport and let each column scroll on its own.
    st.markdown(
        """
        <style>
        html, body, [data-testid="stAppViewContainer"], [data-testid="stApp"] {
            height: 100%;
            overflow: hidden;
        }
        [data-testid="stAppViewContainer"] > .main {
            height: 100vh;
            overflow: hidden;
        }
        .block-container, [data-testid="stMainBlockContainer"] {
            height: 100vh;
            overflow: hidden;
            padding-top: 0.9rem;
            padding-bottom: 0.5rem;
        }
        div[data-testid="stHorizontalBlock"] {
            height: calc(100vh - 6.4rem);
        }
        div[data-testid="column"] > div[data-testid="stVerticalBlock"] {
            height: 100%;
            overflow-y: auto;
            overflow-x: hidden;
            padding-right: 0.2rem;
        }
        [data-testid="stDeployButton"] {
            display: none;
        }
        [data-testid="stHeader"] {
            display: none;
        }
        </style>
        """,
        unsafe_allow_html=True,
    )


def main() -> None:
    init_state()
    st.set_page_config(page_title="XGuard Prompt Firewall", page_icon="🛡️", layout="wide")
    apply_adaptive_page_style()
    st.title("🛡️ XGuard Prompt Firewall")
    st.caption("Input is checked by XGuard first and handed to Qwen only when it is safe")

    left_col, chat_col, panel_col = st.columns(get_layout_ratios(), gap="medium")

    # Left panel: API settings, collapsible.
    with left_col:
        if st.session_state.left_collapsed:
            if st.button("⟩", key="expand_left", width="stretch"):
                st.session_state.left_collapsed = False
                st.rerun()
        else:
            action_col, collapse_col = st.columns([5, 1])
            action_col.subheader("API settings")
            if collapse_col.button("⟨", key="collapse_left", width="stretch"):
                st.session_state.left_collapsed = True
                st.rerun()
            st.text_input(
                "ModelScope Token",
                type="password",
                key="modelscope_token_input",
                placeholder="Defaults to the Studio environment variable",
            )
            st.text_input("Guard Base URL", key="guard_base_url")
            st.text_input("Guard Model ID", key="guard_model_id")
            st.text_input("Chat Base URL", key="chat_base_url")
            st.text_input("Chat Model ID", key="chat_model_id")

    # Center: chat history plus the input form.
    with chat_col:
        history_container = st.container(height=400, border=True)
        with history_container:
            render_history()
        with st.form("chat_form", clear_on_submit=True):
            input_col, submit_col = st.columns([12, 2], gap="small")
            with input_col:
                user_input = st.text_area(
                    "Type your question",
                    height=88,
                    label_visibility="collapsed",
                    placeholder="Type your question",
                )
            with submit_col:
                submitted = st.form_submit_button("Send", width="stretch")

    # A token typed into the UI takes precedence over the environment variable.
    token = (st.session_state.modelscope_token_input or st.session_state.modelscope_token_env).strip()
    guard_base_url = st.session_state.guard_base_url
    guard_model_id = st.session_state.guard_model_id
    chat_base_url = st.session_state.chat_base_url
    chat_model_id = st.session_state.chat_model_id

    if submitted and user_input.strip():
        user_text = user_input.strip()
        st.session_state.messages.append({"role": "user", "content": user_text})
        with chat_col:
            with history_container:
                with st.chat_message("user"):
                    st.markdown(user_text)
                with st.chat_message("assistant"):
                    if not token.strip():
                        text = "No ModelScope Token is configured, so the remote Guard cannot be called."
                        st.markdown(text)
                        st.session_state.messages.append({"role": "assistant", "content": text})
                    else:
                        try:
                            guard_client = OpenAI(base_url=guard_base_url.strip(), api_key=token.strip())
                            guard_result = guard_check(guard_client, user_text, guard_model_id.strip())
                            st.session_state.latest_guard = {"checked": True, **guard_result}
                        except Exception as error:
                            # A failed guard call is recorded as unsafe and nothing is forwarded.
                            text = f"Remote Guard call failed: {error}"
                            st.markdown(text)
                            st.session_state.messages.append({"role": "assistant", "content": text})
                            st.session_state.latest_guard = {
                                "checked": True,
                                "risk_tag": "",
                                "explanation": "",
                                "raw": text,
                                "is_safe": False,
                            }
                            guard_result = None

                        if guard_result:
                            if not guard_result["is_safe"]:
                                st.session_state.blocked_requests += 1
                                blocked_text = (
                                    "XGuard detected a risky input and blocked this request.\n\n"
                                    f"Risk tag: {guard_result['risk_tag']}\n\n"
                                    f"Explanation: {guard_result['explanation'] or guard_result['raw']}"
                                )
                                st.markdown(blocked_text)
                                st.session_state.messages.append({"role": "assistant", "content": blocked_text})
                            else:
                                st.session_state.safe_requests += 1
                                try:
                                    chat_client = OpenAI(base_url=chat_base_url.strip(), api_key=token.strip())
                                    stream = chat_client.chat.completions.create(
                                        model=chat_model_id.strip(),
                                        messages=[{"role": "system", "content": SYSTEM_PROMPT}] + st.session_state.messages,
                                        stream=True,
                                    )
                                    st.markdown("XGuard found no risk in the input.")
                                    full_response = st.write_stream(
                                        chunk.choices[0].delta.content
                                        for chunk in stream
                                        if chunk.choices and chunk.choices[0].delta and chunk.choices[0].delta.content
                                    )
                                    combined_response = f"XGuard found no risk in the input.\n\n{full_response}"
                                    st.session_state.messages.append({"role": "assistant", "content": combined_response})
                                except Exception as error:
                                    text = f"Chat model call failed: {error}"
                                    st.markdown(text)
                                    st.session_state.messages.append({"role": "assistant", "content": text})
        # Rerun so the history and the risk panel reflect the new turn.
        st.rerun()

    # Right panel: counters, latest result, and the risk dictionary, collapsible.
    with panel_col:
        if st.session_state.right_collapsed:
            if st.button("⟨", key="expand_right", width="stretch"):
                st.session_state.right_collapsed = False
                st.rerun()
        else:
            action_col, collapse_col = st.columns([5, 1])
            action_col.subheader("Risk control panel")
            if collapse_col.button("⟩", key="collapse_right", width="stretch"):
                st.session_state.right_collapsed = True
                st.rerun()
            panel_scroll_container = st.container(height=450, border=False)
            with panel_scroll_container:
                total_requests = st.session_state.safe_requests + st.session_state.blocked_requests
                m1, m2, m3 = st.columns(3)
                m1.metric("Checked", total_requests)
                m2.metric("Passed", st.session_state.safe_requests)
                m3.metric("Blocked", st.session_state.blocked_requests)
                render_guard_summary()
                st.divider()
                st.subheader("Risk tag dictionary")
                render_risk_table()


if __name__ == "__main__":
    main()
```
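
To see what the more robust parser does, here it is run on two sample strings. The samples are hypothetical: they assume, as the parser does, that the guard replies with the tag on the first line and an optional `<explanation>...</explanation>` section; the real model output may differ.

```python
import re
from typing import Tuple


def parse_guard_output(text: str) -> Tuple[str, str]:
    """Condensed copy of the parser above: first line is the tag, the rest the explanation."""
    lines = [line.strip() for line in (text or "").splitlines() if line.strip()]
    if not lines:
        return "", ""
    tag = re.sub(r"[^A-Za-z0-9_-]", "", lines[0]).lower()
    match = re.search(r"<explanation>\s*(.*?)\s*</explanation>", text, flags=re.IGNORECASE | re.DOTALL)
    if match:
        return tag, match.group(1).strip()
    # No complete tag pair: strip stray tags and keep the remaining lines.
    rest = [re.sub(r"</?explanation>", "", line, flags=re.IGNORECASE).strip() for line in lines[1:]]
    return tag, "\n".join(part for part in rest if part)


# Hypothetical guard replies, for illustration only.
tagged = "dw\n<explanation>\nThe request asks how to build a weapon.\n</explanation>"
truncated = "sec\n<explanation>Harmless question."

print(parse_guard_output(tagged))
print(parse_guard_output(truncated))
```

The second sample is the case the earlier parser handled poorly: with an unclosed tag, the explanation would have kept the literal `<explanation>` text.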

7. Final result and live demo

The deployed version is available here; feel free to try it:

https://www.modelscope.cn/studios/Want595/xguard_prompt_chat/summary

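8. Summary

My conclusion from this exercise is straightforward:

In an LLM system, risk control is not an add-on feature; it is entry-point infrastructure.

XGuard's value is not simply that it blocks a few more dangerous instructions. It provides a safety loop that can actually be engineered into a product:

- A clear verdict (risk tag)

- An auditable explanation (explanation)

- Direct integration with business policy systems

- Deployment in several forms: local, cloud, and application platform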
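
As an illustration of the "auditable" point, a guard decision can be written out as one JSON line per request. The field names other than `risk_tag` and `explanation` are my own choice, and hashing the prompt instead of storing it is an assumption about what an audit log should retain.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(prompt: str, risk_tag: str, explanation: str) -> str:
    """Serialize one guard decision as a JSON line, storing a hash instead of the raw prompt."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "risk_tag": risk_tag,
        "decision": "allow" if risk_tag == "sec" else "block",
        "explanation": explanation,
    }
    return json.dumps(record, ensure_ascii=False)


print(audit_record("Who are you?", "sec", ""))
```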

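An AI application that can really go to production competes not on how clever its answers are, but on whether it stays controllable, auditable, and maintainable over long-term operation.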
