SenseVoice.cpp encoder: speech recognition [AI (Part 72)] | Eastern FairyAlliance (东方仙盟)

silero-vad.cc

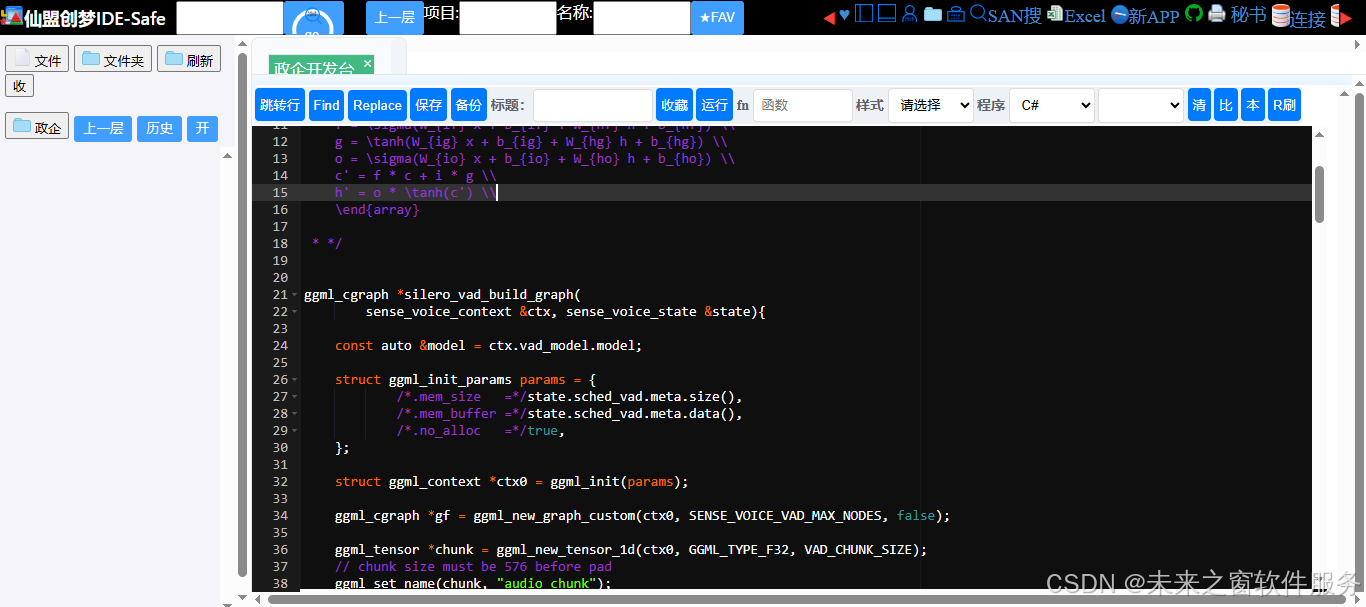

Core code

Complete code

```cpp
//
// Created by lovemefan on 2024/11/24.
//

#include "silero-vad.h"

#define SENSE_VOICE_VAD_MAX_NODES 1024
#define VAD_CHUNK_SIZE 640

/*
 * LSTM cell, as in the PyTorch LSTMCell documentation:
 *
 * \begin{array}{ll}
 * i = \sigma(W_{ii} x + b_{ii} + W_{hi} h + b_{hi}) \\
 * f = \sigma(W_{if} x + b_{if} + W_{hf} h + b_{hf}) \\
 * g = \tanh(W_{ig} x + b_{ig} + W_{hg} h + b_{hg}) \\
 * o = \sigma(W_{io} x + b_{io} + W_{ho} h + b_{ho}) \\
 * c' = f * c + i * g \\
 * h' = o * \tanh(c') \\
 * \end{array}
 */

ggml_cgraph *silero_vad_build_graph(sense_voice_context &ctx, sense_voice_state &state) {
    const auto &model = ctx.vad_model.model;

    struct ggml_init_params params = {
        /*.mem_size   =*/ state.sched_vad.meta.size(),
        /*.mem_buffer =*/ state.sched_vad.meta.data(),
        /*.no_alloc   =*/ true,
    };

    struct ggml_context *ctx0 = ggml_init(params);

    ggml_cgraph *gf = ggml_new_graph_custom(ctx0, SENSE_VOICE_VAD_MAX_NODES, false);

    // chunk size must be 576 before pad
    ggml_tensor *chunk = ggml_new_tensor_1d(ctx0, GGML_TYPE_F32, VAD_CHUNK_SIZE);
    ggml_set_name(chunk, "audio_chunk");
    ggml_set_input(chunk);

    ggml_tensor *cur;

    // stft: conv1d with the Fourier basis, stride (hop) 128, no padding
    {
        cur = ggml_conv_1d(ctx0, model->stft.forward_basis_buffer, chunk, 128, 0, 1);

        // chunk operation by ggml view, equals torch.chunk(x, 2) in pytorch
        struct ggml_tensor *real_part = ggml_view_2d(ctx0, cur, cur->ne[0], cur->ne[1] / 2, cur->nb[1], 0);
        ggml_set_name(real_part, "real_part");

        struct ggml_tensor *image_part = ggml_view_2d(ctx0, cur, cur->ne[0], cur->ne[1] / 2, cur->nb[1],
                                                      cur->nb[0] * cur->ne[0] * cur->ne[1] / 2);
        ggml_set_name(image_part, "image_part");

        // magnitude, equals torch.sqrt(real_part ** 2 + imag_part ** 2)
        cur = ggml_sqrt(ctx0,
                        ggml_add(ctx0,
                                 ggml_mul(ctx0, real_part, real_part),
                                 ggml_mul(ctx0, image_part, image_part)));
        ggml_set_name(cur, "magnitude");
    }

    // encoder: 4 x (conv1d -> add bias -> relu)
    // ggml_conv_1d(ctx, kernel, input, stride, padding, dilation)
    {
        const int strides[4] = {1, 2, 2, 1};
        for (int il = 0; il < 4; ++il) {
            const auto &layer = model->encoders_layer[il];
            cur = ggml_conv_1d(ctx0, layer.reparam_conv_w, cur, strides[il], 1, 1);
            cur = ggml_add(ctx0, cur, ggml_cont(ctx0, ggml_transpose(ctx0, layer.reparam_conv_b)));
            cur = ggml_relu(ctx0, cur);
        }
    }

    // decoder: one LSTM step (state carried across calls), then relu -> conv1d -> sigmoid
    {
        struct ggml_tensor *in_lstm_hidden_state = ggml_new_tensor_1d(ctx0, cur->type, cur->ne[1]);
        struct ggml_tensor *in_lstm_context      = ggml_new_tensor_1d(ctx0, cur->type, cur->ne[1]);
        struct ggml_tensor *out_lstm_hidden_state;
        struct ggml_tensor *out_lstm_context;

        ggml_set_name(in_lstm_context, "in_lstm_context");
        ggml_set_name(in_lstm_hidden_state, "in_lstm_hidden_state");

        // lstm cell
        // ref: https://github.com/pytorch/pytorch/blob/1a93b96815b5c87c92e060a6dca51be93d712d09/aten/src/ATen/native/RNN.cpp#L298-L304
        // gates = x @ self.weight_ih.T + self.bias_ih + hx[0] @ self.weight_hh.T + self.bias_hh
        // chunked_gates = gates.chunk(4, dim=-1)
        // ingate = torch.sigmoid(chunked_gates[0])
        // forgetgate = torch.sigmoid(chunked_gates[1])
        // cellgate = torch.tanh(chunked_gates[2])
        // outgate = torch.sigmoid(chunked_gates[3])
        // cy = forgetgate * hx[1] + ingate * cellgate
        // hy = outgate * torch.tanh(cy)
        struct ggml_tensor *gates = ggml_add(
            ctx0,
            ggml_add(ctx0,
                     ggml_mul_mat(ctx0, model->decoder.lstm_weight_ih, ggml_transpose(ctx0, cur)),
                     model->decoder.lstm_bias_ih),
            ggml_add(ctx0,
                     ggml_mul_mat(ctx0, model->decoder.lstm_weight_hh, in_lstm_hidden_state),
                     model->decoder.lstm_bias_hh));
        ggml_set_name(gates, "gates");

        // byte size of one gate slice; multiplying (ne0 / 4) by nb0 last keeps the
        // offsets correct for any element size, not only 4-byte F32
        const size_t gate_bytes = static_cast<size_t>(gates->ne[0] / 4) * gates->nb[0];

        struct ggml_tensor *input_gates  = ggml_sigmoid(ctx0, ggml_view_2d(ctx0, gates, gates->ne[0] / 4, gates->ne[1], gates->nb[1], 0));
        struct ggml_tensor *forget_gates = ggml_sigmoid(ctx0, ggml_view_2d(ctx0, gates, gates->ne[0] / 4, gates->ne[1], gates->nb[1], 1 * gate_bytes));
        struct ggml_tensor *cell_gate    = ggml_tanh(ctx0, ggml_view_2d(ctx0, gates, gates->ne[0] / 4, gates->ne[1], gates->nb[1], 2 * gate_bytes));
        struct ggml_tensor *out_gates    = ggml_sigmoid(ctx0, ggml_view_2d(ctx0, gates, gates->ne[0] / 4, gates->ne[1], gates->nb[1], 3 * gate_bytes));

        ggml_set_name(input_gates, "input_gates");
        ggml_set_name(forget_gates, "forget_gates");
        ggml_set_name(cell_gate, "cell_gates");
        ggml_set_name(out_gates, "out_gates");

        // c' = f * c + i * g
        out_lstm_context = ggml_add(ctx0,
                                    ggml_mul(ctx0, forget_gates, in_lstm_context),
                                    ggml_mul(ctx0, input_gates, cell_gate));
        ggml_set_name(out_lstm_context, "out_lstm_context");
        ggml_set_output(out_lstm_context);

        // h' = o * tanh(c')
        out_lstm_hidden_state = ggml_mul(ctx0, out_gates, ggml_tanh(ctx0, out_lstm_context));
        ggml_set_name(out_lstm_hidden_state, "out_lstm_hidden_state");
        ggml_set_output(out_lstm_hidden_state);

        cur = ggml_relu(ctx0, out_lstm_hidden_state);
        cur = ggml_conv_1d(ctx0, model->decoder.decoder_conv_w, ggml_cont(ctx0, ggml_transpose(ctx0, cur)), 1, 0, 1);
        cur = ggml_add(ctx0, cur, ggml_transpose(ctx0, model->decoder.decoder_conv_b));
        ggml_set_name(cur, "decoder_out");

        cur = ggml_sigmoid(ctx0, cur);
        ggml_set_name(cur, "logit");
    }

    ggml_set_output(cur);
    ggml_build_forward_expand(gf, cur);

    // the graph lives in the scheduler's meta buffer, so the context itself can be freed
    ggml_free(ctx0);

    return gf;
}
```

```cpp
bool silero_vad_encode_internal(sense_voice_context &ctx,
                                sense_voice_state &state,
                                std::vector<float> chunk,
                                const int n_threads,
                                float &speech_prob) {
    // the graph input holds exactly VAD_CHUNK_SIZE floats; reject shorter chunks
    // instead of reading past the end of the vector
    if (chunk.size() < VAD_CHUNK_SIZE) {
        return false;
    }

    {
        auto &sched = ctx.state->sched_vad.sched;

        ggml_cgraph *gf = silero_vad_build_graph(ctx, state);

        // ggml_backend_sched_set_eval_callback(sched, ctx->params.cb_eval, &ctx->params.cb_eval_user_data);

        if (!ggml_backend_sched_alloc_graph(sched, gf)) {
            // should never happen as we pre-allocate the memory
            return false;
        }

        // set the input: audio chunk plus the LSTM state saved by the previous call
        {
            struct ggml_tensor *data = ggml_graph_get_tensor(gf, "audio_chunk");
            ggml_backend_tensor_set(data, chunk.data(), 0, ggml_nbytes(data));

            struct ggml_tensor *in_lstm_context = ggml_graph_get_tensor(gf, "in_lstm_context");
            struct ggml_tensor *in_lstm_hidden_state = ggml_graph_get_tensor(gf, "in_lstm_hidden_state");
            ggml_backend_tensor_copy(state.vad_lstm_context, in_lstm_context);
            ggml_backend_tensor_copy(state.vad_lstm_hidden_state, in_lstm_hidden_state);
        }

        if (!ggml_graph_compute_helper(sched, gf, n_threads)) {
            return false;
        }

        // save output state so the next chunk continues from here
        {
            struct ggml_tensor *lstm_context = ggml_graph_get_tensor(gf, "out_lstm_context");
            ggml_backend_tensor_copy(lstm_context, state.vad_lstm_context);

            struct ggml_tensor *lstm_hidden_state = ggml_graph_get_tensor(gf, "out_lstm_hidden_state");
            ggml_backend_tensor_copy(lstm_hidden_state, state.vad_lstm_hidden_state);
        }

        // read the speech probability ("logit" holds the sigmoid output)
        ggml_backend_tensor_get(ggml_graph_get_tensor(gf, "logit"), &speech_prob, 0, sizeof(speech_prob));
    }

    return true;
}
```

Code explanation

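Purpose: determine whether the current audio contains human speech

- Output: a probability between 0 and 1

- Use: cut out silence and keep only the speech, which makes recognition faster and more accurate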

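What does it do? (An everyday analogy)

- VAD = Voice Activity Detection

- It acts as the "gatekeeper" for speech recognition

- Only segments that contain speech are let into the model

- Silence, noise and empty audio are all filtered out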

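Without VAD, speech recognition would:

- run slower, because the recognizer also processes the silence

- turn silence into garbage text

- consume more power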

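Overall flow

A fixed-length audio chunk → STFT to a magnitude spectrum → convolutional encoder extracts features → LSTM carries state across chunks → decoder outputs the speech probability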

Part-by-part explanation

1. The formula in the top comment

```plaintext
i = σ(...)
f = σ(...)
g = tanh(...)
o = σ(...)
c' = f * c + i * g
h' = o * tanh(c')
```

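These are the LSTM gate equations. You do not need every detail; it is enough to know that i, f and o are the input, forget and output gates, g is the candidate cell value, c is the cell state and h is the hidden state. Through c and h the LSTM remembers earlier audio, which helps decide whether someone is still speaking.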
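To make the equations concrete, here is a minimal single-step LSTM cell in plain C++. It is a sketch, not the project's ggml code: the weight layout (row-major W_ih of shape 4H×I and W_hh of shape 4H×H, gate rows ordered input, forget, cell, output) is assumed to follow the PyTorch convention the code comments reference, and LstmWeights and lstm_cell_step are hypothetical names introduced for illustration.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical weight container; layout assumed to mirror PyTorch's LSTMCell:
// w_ih is (4H x I), w_hh is (4H x H), row-major, gate rows ordered [i, f, g, o].
struct LstmWeights {
    std::size_t input_size;   // I
    std::size_t hidden_size;  // H
    std::vector<float> w_ih;  // 4H * I
    std::vector<float> w_hh;  // 4H * H
    std::vector<float> b_ih;  // 4H
    std::vector<float> b_hh;  // 4H
};

static float sigmoid(float v) { return 1.0f / (1.0f + std::exp(-v)); }

// One LSTM step: (h, c) <- LSTMCell(x, (h, c)), updated in place.
void lstm_cell_step(const LstmWeights &w, const std::vector<float> &x,
                    std::vector<float> &h, std::vector<float> &c) {
    const std::size_t I = w.input_size, H = w.hidden_size;
    assert(x.size() == I && h.size() == H && c.size() == H);

    // gates = W_ih x + b_ih + W_hh h + b_hh (all 4H rows computed before h changes)
    std::vector<float> gates(4 * H);
    for (std::size_t r = 0; r < 4 * H; ++r) {
        float acc = w.b_ih[r] + w.b_hh[r];
        for (std::size_t k = 0; k < I; ++k) acc += w.w_ih[r * I + k] * x[k];
        for (std::size_t k = 0; k < H; ++k) acc += w.w_hh[r * H + k] * h[k];
        gates[r] = acc;
    }

    for (std::size_t j = 0; j < H; ++j) {
        const float i = sigmoid(gates[j]);            // input gate
        const float f = sigmoid(gates[H + j]);        // forget gate
        const float g = std::tanh(gates[2 * H + j]);  // candidate cell value
        const float o = sigmoid(gates[3 * H + j]);    // output gate
        c[j] = f * c[j] + i * g;                      // c' = f * c + i * g
        h[j] = o * std::tanh(c[j]);                   // h' = o * tanh(c')
    }
}
```

With every weight and bias set to zero, all gates equal 0.5 and g = 0, so the update reduces to c' = c / 2 and h' = 0.5 · tanh(c'), which makes a handy sanity check.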

2. silero_vad_build_graph

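This function builds the computation graph of the VAD model, stacking the neural network layer by layer:

① STFT (time-frequency transform)

```cpp
cur = ggml_conv_1d(ctx0, model->stft.forward_basis_buffer, chunk, 128, 0, 1);
```

Converts the time-domain waveform into a frequency-domain spectrum, because the model works on the spectrum rather than on the raw waveform. The STFT is implemented as a 1D convolution with a precomputed Fourier basis and a stride (hop) of 128; the output is split into a real half and an imaginary half, and the magnitude sqrt(real² + imag²) is passed on to the encoder.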
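The sketch below shows why a 1D convolution can compute an STFT: each output value is a dot product between a window of the signal and one row of a Fourier basis (a cosine row for the real part, a negative sine row for the imaginary part), taken every hop samples. Only the hop of 128 matches the code above (the conv1d stride). The periodic Hann window, the window length, and the stft_magnitude name are assumptions; the real model loads its own basis as forward_basis_buffer.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Magnitude STFT computed as "conv1d with a Fourier basis", no padding.
// Returns mag[bin][frame], the same channels-by-time layout a conv1d produces.
// Assumptions: periodic Hann window folded into the basis, n_fft / 2 + 1 bins.
std::vector<std::vector<float>> stft_magnitude(const std::vector<float> &x,
                                               std::size_t n_fft, std::size_t hop) {
    assert(n_fft > 0 && hop > 0 && x.size() >= n_fft);
    const double pi = std::acos(-1.0);
    const std::size_t n_frames = (x.size() - n_fft) / hop + 1;  // conv1d output length
    const std::size_t n_bins = n_fft / 2 + 1;
    std::vector<std::vector<float>> mag(n_bins, std::vector<float>(n_frames));
    for (std::size_t k = 0; k < n_bins; ++k) {
        for (std::size_t t = 0; t < n_frames; ++t) {
            double re = 0.0, im = 0.0;
            for (std::size_t n = 0; n < n_fft; ++n) {
                const double win = 0.5 - 0.5 * std::cos(2.0 * pi * n / n_fft);
                const double s = win * x[t * hop + n];
                re += s * std::cos(2.0 * pi * k * n / n_fft);  // "real" basis row
                im -= s * std::sin(2.0 * pi * k * n / n_fft);  // "imaginary" basis row
            }
            mag[k][t] = static_cast<float>(std::sqrt(re * re + im * im));  // sqrt(re^2 + im^2)
        }
    }
    return mag;
}
```

For a 640-sample input with a 256-sample window and hop 128, this yields (640 - 256) / 128 + 1 = 4 frames.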

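② 4-layer convolutional encoder

```plaintext
conv1d (stride 1) → add bias → relu
conv1d (stride 2) → add bias → relu
conv1d (stride 2) → add bias → relu
conv1d (stride 1) → add bias → relu
```

Each layer is a 1D convolution with padding 1, followed by a bias add and a ReLU; the two stride-2 layers shorten the time axis. Together they extract the features that tell noise, silence and speech apart.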
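A plain C++ sketch of one encoder block (conv1d, bias add, ReLU) and of the standard conv1d output-length formula. The strides 1, 2, 2, 1 and padding 1 come from the ggml_conv_1d calls above; the kernel size of 3 used in the note below is an assumption, and conv1d_bias_relu and conv1d_out_len are hypothetical helpers.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Output length of a 1-D convolution with dilation 1: floor((L + 2p - k) / s) + 1.
std::size_t conv1d_out_len(std::size_t len, std::size_t kernel, std::size_t stride, std::size_t pad) {
    assert(stride > 0 && len + 2 * pad >= kernel);
    return (len + 2 * pad - kernel) / stride + 1;
}

// One encoder block: y = ReLU(conv1d(x, w) + b) with zero padding.
// x: [c_in][len], w: [c_out][c_in][kernel], b: [c_out].
std::vector<std::vector<float>> conv1d_bias_relu(
        const std::vector<std::vector<float>> &x,
        const std::vector<std::vector<std::vector<float>>> &w,
        const std::vector<float> &b, std::size_t stride, std::size_t pad) {
    const std::size_t c_in = x.size(), len = x[0].size();
    const std::size_t c_out = w.size(), kernel = w[0][0].size();
    const std::size_t out_len = conv1d_out_len(len, kernel, stride, pad);
    std::vector<std::vector<float>> y(c_out, std::vector<float>(out_len, 0.0f));
    for (std::size_t o = 0; o < c_out; ++o) {
        for (std::size_t t = 0; t < out_len; ++t) {
            float acc = b[o];  // bias add
            for (std::size_t i = 0; i < c_in; ++i) {
                for (std::size_t k = 0; k < kernel; ++k) {
                    // position in the unpadded input; taps outside it read zero padding
                    const long pos = static_cast<long>(t * stride + k) - static_cast<long>(pad);
                    if (pos >= 0 && pos < static_cast<long>(len))
                        acc += w[o][i][k] * x[i][static_cast<std::size_t>(pos)];
                }
            }
            y[o][t] = std::max(acc, 0.0f);  // ReLU
        }
    }
    return y;
}
```

If the STFT produced 4 frames and the kernel size is 3 (both assumptions), the four layers map the time length 4 → 4 → 2 → 1 → 1, so the LSTM would see a single frame per chunk.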

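③ LSTM decoder (the core)

```cpp
gates        = ...;           // x @ W_ih^T + b_ih + h @ W_hh^T + b_hh
input_gates  = sigmoid(...);  // 1st quarter of gates
forget_gates = sigmoid(...);  // 2nd quarter
cell_gate    = tanh(...);     // 3rd quarter
out_gates    = sigmoid(...);  // 4th quarter
```

The LSTM is the heart of the VAD:

- It remembers whether the previous chunks contained speech, because its hidden and cell states are saved after each call and fed back in with the next chunk

- It keeps the decision from flickering on and off

- It makes the judgment more stable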
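The gates tensor holds all four gates side by side, and the decoder slices it with ggml_view_2d byte offsets, the ggml counterpart of torch.chunk(gates, 4, dim=-1). The hypothetical helpers below compute those offsets and do the same split on a plain vector; multiplying the slice length by the element size last is what keeps the offsets valid for element sizes other than 4 bytes.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

// Byte offsets of the four gate slices (input, forget, cell, output) inside one
// row of ne0 = 4 * H elements, each elem_size bytes wide. Computing
// (ne0 / 4) * elem_size avoids the truncation of elem_size / 4 * ne0, which
// yields 0 when elem_size is 2 (e.g. F16).
std::array<std::size_t, 4> gate_offsets(std::size_t ne0, std::size_t elem_size) {
    assert(ne0 % 4 == 0);
    const std::size_t slice_bytes = (ne0 / 4) * elem_size;
    return {0, slice_bytes, 2 * slice_bytes, 3 * slice_bytes};
}

// Element-level equivalent: split a flat 4H gate vector into four H-long slices.
std::array<std::vector<float>, 4> chunk_gates(const std::vector<float> &gates) {
    assert(gates.size() % 4 == 0);
    const std::size_t h = gates.size() / 4;
    std::array<std::vector<float>, 4> out;
    for (std::size_t k = 0; k < 4; ++k) {
        out[k].assign(gates.begin() + static_cast<std::ptrdiff_t>(k * h),
                      gates.begin() + static_cast<std::ptrdiff_t>((k + 1) * h));
    }
    return out;
}
```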

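④ Final output

```cpp
cur = ggml_sigmoid(ctx0, cur);
```

After the LSTM, the hidden state passes through a ReLU and a 1D convolution (decoder_conv_w, decoder_conv_b); the sigmoid then maps the result to a probability between 0 and 1. With the commonly used threshold of 0.5:

- ≥ 0.5: speech

- < 0.5: silence / noise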
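A fixed 0.5 cut-off can flicker when the probability hovers around it. A common refinement, similar in spirit to the separate start and end thresholds in the upstream Silero Python utilities, is hysteresis: enter speech at one threshold and leave it only below a lower one. This is an illustrative sketch; the 0.35 exit threshold and the speech_flags name are assumptions, not values from this project.

```cpp
#include <cassert>
#include <vector>

// Per-chunk speech decisions with hysteresis: switch to speech when
// p >= on_threshold, switch back only when p < off_threshold.
// Setting off_threshold == on_threshold gives the plain single cut-off.
std::vector<bool> speech_flags(const std::vector<float> &probs,
                               float on_threshold = 0.5f, float off_threshold = 0.35f) {
    assert(off_threshold <= on_threshold);
    std::vector<bool> flags;
    flags.reserve(probs.size());
    bool in_speech = false;
    for (float p : probs) {
        if (!in_speech && p >= on_threshold) {
            in_speech = true;
        } else if (in_speech && p < off_threshold) {
            in_speech = false;
        }
        flags.push_back(in_speech);
    }
    return flags;
}
```

With the probabilities 0.1, 0.6, 0.45, 0.2, 0.55, the plain 0.5 cut-off drops out at 0.45, while the hysteresis version keeps that chunk as speech.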

3. silero_vad_encode_internal

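The function that actually runs the VAD does four things:

- Sets the input audio chunk and restores the saved LSTM state

- Runs the model

- Reads out speech_prob (the speech probability)

- Saves the LSTM state (its memory) for the next chunk

What do the input and output look like?

- Input: a fixed-length chunk of 640 floats (VAD_CHUNK_SIZE; per the code comment, 576 samples before padding)

- Output: speech_prob, e.g. 0.98 (speech) or 0.02 (silence)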
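The function copies exactly 640 floats into the graph, so the caller has to assemble each chunk. The sketch below shows one plausible way under stated assumptions: 64 samples of context carried over from the previous chunk plus 512 new samples give the 576 samples mentioned in the code comment, and 64 samples of right-side reflect padding bring the total to 640. These sizes and the padding mode are assumptions based on the upstream Silero model, not taken from this code, and make_vad_input is a hypothetical helper.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

constexpr std::size_t kContext = 64;   // assumed: samples kept from the previous chunk
constexpr std::size_t kWindow  = 512;  // assumed: new samples per step (32 ms at 16 kHz)
constexpr std::size_t kPad     = 64;   // assumed: right reflect padding, 576 -> 640

// Builds one model input: [64 context samples | 512 new samples | 64 reflected samples].
// `context` is replaced by the last 64 samples of this chunk for the next call.
std::vector<float> make_vad_input(std::vector<float> &context, const std::vector<float> &window) {
    assert(context.size() == kContext && window.size() == kWindow);
    std::vector<float> out;
    out.reserve(kContext + kWindow + kPad);
    out.insert(out.end(), context.begin(), context.end());
    out.insert(out.end(), window.begin(), window.end());  // 576 samples
    const std::size_t n = out.size();
    for (std::size_t i = 1; i <= kPad; ++i) {  // reflect: the edge sample is not repeated
        const float v = out[n - 1 - i];
        out.push_back(v);
    }
    context.assign(out.begin() + static_cast<std::ptrdiff_t>(n - kContext),
                   out.begin() + static_cast<std::ptrdiff_t>(n));
    return out;  // 640 samples
}
```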

Where it sits in the whole project

```plaintext
Microphone / WAV file
        ↓
[Silero VAD (this code)]
        ↓
Keep only the segments that contain speech
        ↓
SenseVoice speech recognition
        ↓
Output text
```
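The step from per-chunk decisions to the segments handed to SenseVoice can be sketched as follows. It assumes each chunk advances by 512 new samples (32 ms at 16 kHz) and that chunk i covers samples [i · 512, (i + 1) · 512); both are assumptions, and speech_segments is a hypothetical helper.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Converts per-chunk speech flags into [start, end) sample ranges that can be cut
// from the original audio and passed to the recognizer.
// hop = new samples per chunk (assumed 512, i.e. 32 ms at 16 kHz).
std::vector<std::pair<std::size_t, std::size_t>> speech_segments(const std::vector<bool> &flags,
                                                                 std::size_t hop = 512) {
    std::vector<std::pair<std::size_t, std::size_t>> segs;
    std::size_t start = 0;
    bool open = false;
    for (std::size_t i = 0; i < flags.size(); ++i) {
        if (flags[i] && !open) {         // speech starts at this chunk
            start = i * hop;
            open = true;
        } else if (!flags[i] && open) {  // speech ended at the previous chunk
            segs.emplace_back(start, i * hop);
            open = false;
        }
    }
    if (open) segs.emplace_back(start, flags.size() * hop);  // still speaking at the end
    return segs;
}
```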

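Key takeaways

This code = a pure C++ (ggml) implementation of Silero VAD

Purpose = detect whether speech is present

Strengths = lightweight, fast, accurate, and usable offline

It is an essential front-end module of a speech recognition system

Quick summary

- Silero VAD: the gatekeeper of speech recognition

- Input: audio

- Output: speech probability (0 to 1)

- Function: filter out silence, speeding up recognition and improving accuracy

- Structure: STFT → CNN → LSTM → probability output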

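Everyone is a creator; only by creating together can we grow together

Everyone is both a user and a creator: a consumer in the digital world, and even more a producer and sharer of value. In the wave of the intelligent era, the model of going it alone is long over. Only through open connection, creative co-creation and shared benefits can individual value converge into the strength of an ecosystem, let technology and creativity move toward each other, and let the platform and its partners grow quickly and win together over the long run.

Permanent revenue sharing for original work, heading for the stars and the sea together

Co-creating original ideas with permanent revenue sharing is the core principle the Eastern FairyAlliance has always upheld. We believe every piece of original work deserves respect and reward. Permanent revenue sharing anchors our commitment to co-creation, so creators keep enjoying the value of their work over the long term. Together with thousands of partners, we move steadily toward the stars and the sea of technology, embrace a future where silicon-based life and digital intelligence merge, and build a digital civilization that spans eras.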

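Eastern FairyAlliance: embracing open-source knowledge, building a new digital ecosystem

Amid globalization and digitalization, the Eastern FairyAlliance has always upheld open collaboration and knowledge sharing, and actively embraces open-source technology and open standards. We believe that only by breaking down technical barriers and pooling global wisdom can the industry truly develop sustainably.

Open source for small and medium merchants: by open-sourcing core capabilities such as front-end anomaly detection and cross-system data interconnection, the Eastern FairyAlliance offers small and medium merchants worldwide low-cost, highly reliable technical solutions, so that more businesses can share equally in the benefits of digital transformation.

Building industry standards together: we take an active part in the international technical community and work with developers and partners worldwide to define open protocols and technical specifications, promoting interoperability across cross-border retail, cultural tourism, food service and other sectors, and building a fairer, more efficient digital ecosystem.

Knowledge for everyone, development for all: through open-source communities, technical documentation and training programs, the Eastern FairyAlliance works to turn frontier technology into practical industry solutions, empower partners worldwide, cultivate innovative talent together, and drive inclusive growth of the digital economy.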

A Xue's Tech View

In this wave of technological progress, why not dive into tech sharing? Don't settle for being a beneficiary; step up and be a contributor too. Whether you share code snippets, write tech blogs, or help maintain and improve open-source projects, every small action may carry the energy to push technology forward. The Eastern FairyAlliance is a place where these efforts come together: let us team up here to explore silicon-based life and help fuel the growth of technology.
