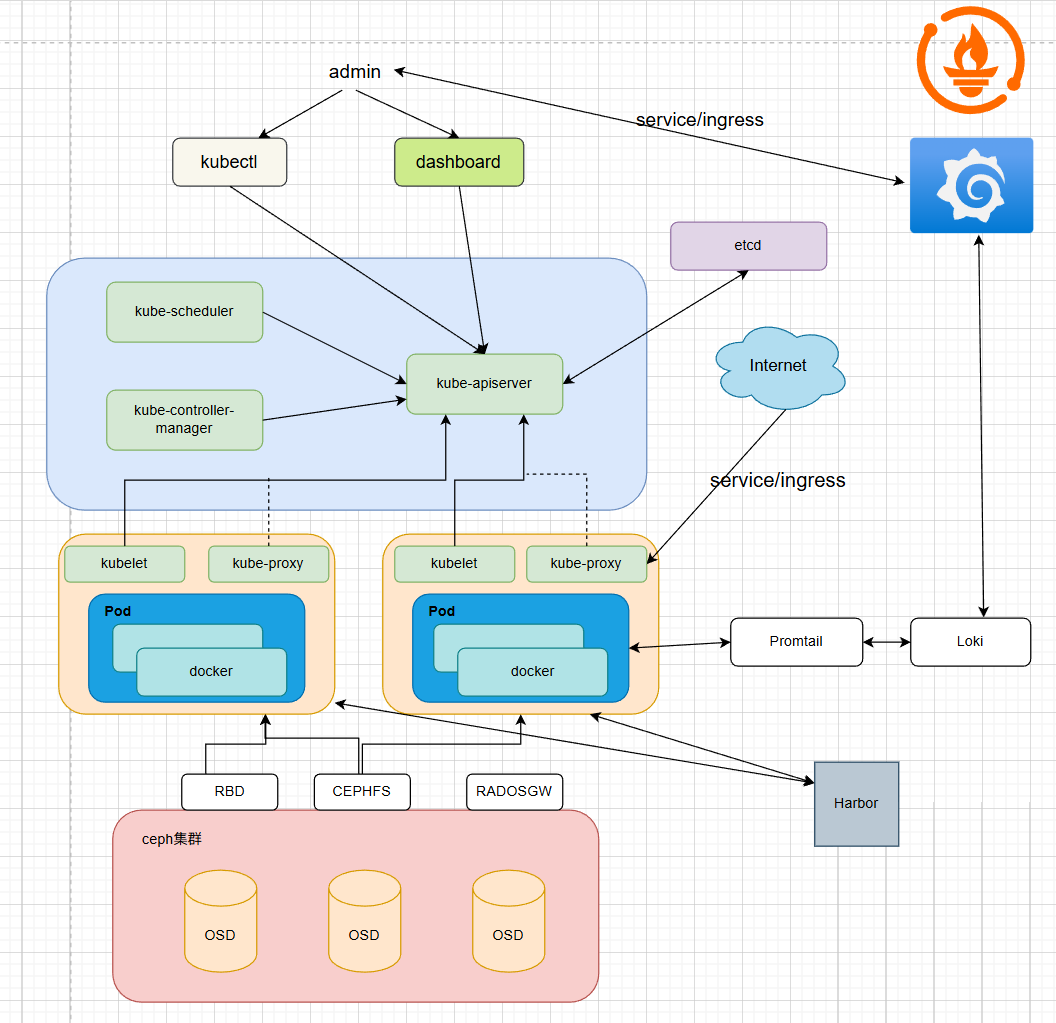

Building a Kubernetes Observability Platform with Ceph Storage Integration

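Project overview

- Built a Kubernetes cluster with kubeadm (1 control-plane node and 2 worker nodes). Calico provides cluster networking, and Docker (through cri-dockerd) is the container runtime underneath the cluster;

- Added a separate machine as a Harbor private image registry. It hosts the cluster's images centrally with TLS verification, and the cluster nodes are set up to pull from it;

- Deployed the Prometheus stack with Helm. Node Exporter and kube-state-metrics collect node, Pod and resource metrics, and Alertmanager sends WeCom (WeChat Work) alerts for real-time notification of anomalies;

- Chose the lightweight, cloud-native Loki + Promtail logging architecture to collect, store and search container logs in one place, which suits the cluster's modest resources;

- Connected Grafana to the Prometheus and Loki data sources and imported standard dashboards, giving one place to view both metrics and logs;

- Deployed a Ceph cluster with cephadm and configured RBD and CephFS StorageClasses through the Ceph CSI drivers. Prometheus, Loki and Grafana get persistent PVCs from these classes, which keeps storage separate from compute.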

Architecture diagram

Lab environment

| Hostname | IP | Role | OS |
|---|---|---|---|
| reg.harbor.org | 172.25.254.200 | Harbor registry | Rocky Linux 9.6 (minimal) |
| k8s-master | 172.25.254.100 | master, Kubernetes control-plane node | Rocky Linux 9.6 (minimal) |
| k8s-node1 | 172.25.254.10 | worker, Kubernetes worker node | Rocky Linux 9.6 (minimal) |
| k8s-node2 | 172.25.254.20 | worker, Kubernetes worker node | Rocky Linux 9.6 (minimal) |
| ceph1 | 172.25.254.91 | Ceph cluster node 1 | Rocky Linux 9.6 (minimal) |
| ceph2 | 172.25.254.92 | Ceph cluster node 2 | Rocky Linux 9.6 (minimal) |
| ceph3 | 172.25.254.93 | Ceph cluster node 3 | Rocky Linux 9.6 (minimal) |

Deploying the Kubernetes cluster with kubeadm

1. Switch the package repositories to the Aliyun mirror

All hosts

```bash
sed -e 's|^mirrorlist=|#mirrorlist=|g' \
    -e 's|^#baseurl=http://dl.rockylinux.org/$contentdir|baseurl=https://mirrors.aliyun.com/rockylinux|g' \
    -i.bak \
    /etc/yum.repos.d/Rocky-*.repo
```

dnf makecache

2. Install a minimal set of common tools

All hosts

dnf install wget tree bash-completion vim psmisc net-tools -y

source /etc/profile.d/bash_completion.sh

3. Disable firewalld and SELinux

All hosts

systemctl disable --now firewalld.service

systemctl mask firewalld.service

sed -i '/^SELINUX=/ c SELINUX=disabled' /etc/selinux/config

setenforce 0

4. Configure local name resolution in /etc/hosts

All hosts

cat > /etc/hosts << EOF

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.100 k8s-master m1

172.25.254.10 k8s-node1 n1

172.25.254.20 k8s-node2 n2

172.25.254.91 ceph1

172.25.254.92 ceph2

172.25.254.93 ceph3

172.25.254.200 reg.harbor.org reg

EOF

5. Configure time synchronization

All hosts

dnf install chrony -y

sed -i '/^pool/ c server ntp.aliyun.com iburst' /etc/chrony.conf

systemctl enable --now chronyd

systemctl restart chronyd

chronyc sources -v

6. Disable swap

Kubernetes cluster hosts

systemctl disable --now swap.target

systemctl mask swap.target

sed -i 's/.*swap.*/#&/' /etc/fstab

swapoff -a

free -h

#Check that the Swap line now shows 0
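The same check can be scripted before running kubeadm, instead of reading the `free -h` output by eye. This is a minimal sketch. It assumes bash and the standard `/proc/swaps` layout: one header line, then one line per active swap device. `active_swap_count` is a hypothetical helper name, not part of any tool:

```bash
#!/usr/bin/env bash
# Count active swap devices by reading /proc/swaps (or a file given as $1).
# The first line of /proc/swaps is a header; every following non-empty line
# is one active swap partition or file.
active_swap_count() {
  local swaps=${1:-/proc/swaps}
  awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "$swaps"
}

# Example guard before kubeadm init/join:
#   if [ "$(active_swap_count)" -ne 0 ]; then echo "swap is still enabled" >&2; exit 1; fi
```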

7. Raise Linux resource limits (open files, processes and locked memory)

Kubernetes cluster hosts

cat >> /etc/security/limits.conf <<EOF

* soft nofile 655350

* hard nofile 655350

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF

ulimit -SHn 655350

8. Enable IP forwarding and bridged traffic filtering, and install ipvsadm

Kubernetes cluster hosts

echo br_netfilter > /etc/modules-load.d/docker_mod.conf

modprobe -a br_netfilter

echo "net.ipv4.ip_forward=1" >> /etc/sysctl.conf

echo "net.bridge.bridge-nf-call-iptables = 1" >> /etc/sysctl.conf

echo "net.bridge.bridge-nf-call-ip6tables = 1" >> /etc/sysctl.conf

sysctl -p

dnf install ipvsadm -y
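These settings can also live in drop-in files. That approach also covers the IPVS kernel modules that kube-proxy needs for `mode: ipvs`, which is set later in kubeadm-init.yaml; the step above only installs the ipvsadm tool. This is a sketch under two assumptions:

- The file names `/etc/modules-load.d/k8s.conf` and `/etc/sysctl.d/k8s.conf` are chosen here for illustration.
- The module list is the set commonly loaded for IPVS mode on recent kernels.

```bash
# Kernel modules: br_netfilter for bridged traffic filtering; ip_vs* and
# nf_conntrack for kube-proxy in IPVS mode.
render_k8s_modules() {
  printf '%s\n' br_netfilter ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack
}

# sysctl keys needed by Kubernetes networking (the net.bridge.* keys only
# exist once br_netfilter is loaded).
render_k8s_sysctl() {
  cat <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
EOF
}

# Usage on a Kubernetes host (as root):
#   render_k8s_modules > /etc/modules-load.d/k8s.conf
#   render_k8s_modules | xargs modprobe -a
#   render_k8s_sysctl > /etc/sysctl.d/k8s.conf
#   sysctl --system
```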

9. Configure passwordless SSH (optional)

[root@k8s-master ~]# ssh-keygen -f ~/.ssh/id_rsa -N '' -q

[root@k8s-master ~]# ssh-copy-id root@k8s-node1

[root@k8s-master ~]# ssh-copy-id root@k8s-node2

10. Install Docker

All hosts

yum install -y yum-utils

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

dnf install docker-ce -y

systemctl enable --now docker

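11. Deploy Harbor

Harbor releases: https://github.com/goharbor/harbor/

1. Generate a self-signed TLS certificate and private key (`-subj` skips the interactive prompts for the certificate subject)

```bash
[root@reg ~]# mkdir -p /data/certs/
[root@reg ~]# openssl req -newkey rsa:4096 \
    -nodes -sha256 -keyout /data/certs/harbor.org.key \
    -subj "/CN=reg.harbor.org" \
    -addext "subjectAltName = DNS:reg.harbor.org" \
    -x509 -days 365 -out /data/certs/harbor.org.crt
```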
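Docker and other Go-based clients check the registry host name against the certificate's subjectAltName rather than its CN. It is therefore worth confirming that the SAN made it into the certificate. This is a small sketch that assumes OpenSSL is installed; `cert_has_san` is a hypothetical helper:

```bash
# Succeed only if the certificate's subjectAltName contains exactly DNS:<host>.
cert_has_san() {
  local crt=$1 host=$2
  openssl x509 -in "$crt" -noout -text \
    | grep -A1 'Subject Alternative Name' \
    | tr ',' '\n' \
    | sed 's/^[[:space:]]*//; s/[[:space:]]*$//' \
    | grep -qxF "DNS:${host}"
}

# Usage on the Harbor host:
#   cert_has_san /data/certs/harbor.org.crt reg.harbor.org && echo "SAN ok"
```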

2. Install and start Harbor

[root@reg ~]# wget https://github.com/goharbor/harbor/releases/download/v2.13.5/harbor-offline-installer-v2.13.5.tgz

[root@reg ~]# tar zxf harbor-offline-installer-v2.13.5.tgz

[root@reg ~]# cd harbor/

[root@reg harbor]# cp harbor.yml.tmpl harbor.yml

[root@reg harbor]# vim harbor.yml

```yaml
hostname: reg.harbor.org
......
https:
  certificate: /data/certs/harbor.org.crt
  private_key: /data/certs/harbor.org.key
......
harbor_admin_password: password
```

[root@reg harbor]# ./install.sh

[root@reg harbor]# docker compose up -d #install.sh already starts Harbor; this is only needed to bring it up again later

3. Distribute the certificate to the clients that pull images

#On the Harbor node

[root@reg harbor]# mkdir /etc/docker/certs.d/reg.harbor.org -p

[root@reg harbor]# cp /data/certs/harbor.org.crt /etc/docker/certs.d/reg.harbor.org/ca.crt

[root@reg harbor]# systemctl restart docker

[root@reg harbor]# for i in {100,10,20}

do

scp -r /etc/docker/certs.d/ root@172.25.254.$i:/etc/docker/

done

#On all Kubernetes nodes

systemctl restart docker

4. Configure a registry mirror

All Kubernetes hosts

cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": ["https://reg.harbor.org"]

}

EOF

systemctl restart docker
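Two details matter for this file:

- Write it with `cat >`, not `>>`, so that it holds exactly one JSON object.
- Docker applies `registry-mirrors` only to Docker Hub images, so images referenced as `reg.harbor.org/...` are pulled from Harbor directly either way.

This is a sketch that renders the file from a list of mirrors; `render_daemon_json` is a hypothetical helper name:

```bash
# Render /etc/docker/daemon.json with the given registry mirrors.
# Overwriting the whole file avoids ending up with two JSON objects in it,
# which Docker cannot parse.
render_daemon_json() {
  local sep="" mirror list=""
  for mirror in "$@"; do
    list+="${sep}\"${mirror}\""
    sep=", "
  done
  printf '{\n  "registry-mirrors": [%s]\n}\n' "$list"
}

# Usage (as root on each Kubernetes host):
#   render_daemon_json https://reg.harbor.org > /etc/docker/daemon.json
#   systemctl restart docker
```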

12. Install cri-dockerd

All Kubernetes hosts

Download page: https://github.com/Mirantis/cri-dockerd/releases/

Install the packages

wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.3.24/cri-dockerd-0.3.24-3.fc36.x86_64.rpm

wget https://rpmfind.net/linux/almalinux/8.10/BaseOS/x86_64/os/Packages/libcgroup-0.41-19.el8.x86_64.rpm #dependency of cri-dockerd

dnf install libcgroup-0.41-19.el8.x86_64.rpm -y

dnf install cri-dockerd-0.3.24-3.fc36.x86_64.rpm -y

Edit the systemd service file

vim /lib/systemd/system/cri-docker.service

......

ExecStart=/usr/bin/cri-dockerd --container-runtime-endpoint fd:// --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.10.1

......

systemctl daemon-reload

systemctl enable --now cri-docker.service

systemctl enable --now cri-docker.socket

Push the required images to the Harbor registry so the cluster nodes can pull them

[root@k8s-master ~]# docker tag registry.aliyuncs.com/google_containers/pause:3.10.1 reg.harbor.org/k8s/pause:3.10.1

[root@k8s-master ~]# docker push reg.harbor.org/k8s/pause:3.10.1

13. Install the Kubernetes packages

Kubernetes cluster hosts

cat <<EOF | tee /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.34/rpm/

enabled=1

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.34/rpm/repodata/repomd.xml.key

EOF

dnf makecache

dnf install -y kubelet kubeadm kubectl

systemctl enable --now kubelet.service

#Check the version

kubeadm version

Enable kubectl command completion

All Kubernetes cluster hosts

echo "source <(kubectl completion bash)" >> ~/.bashrc

source ~/.bashrc

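14. Initialize the control-plane node

```bash
# Pull the required images from the domestic mirror. docker-ce also installs
# containerd, so kubeadm finds two CRI sockets and must be told to use cri-dockerd.
[root@k8s-master ~]# kubeadm config images pull \
    --image-repository registry.aliyuncs.com/google_containers \
    --kubernetes-version v1.34.5 \
    --cri-socket unix:///var/run/cri-dockerd.sock
```

#Push the images to the local Harbor registry

[root@k8s-master ~]# docker login reg.harbor.org -u admin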

[root@k8s-master ~]# docker images --format "{{.Repository}}:{{.Tag}}" | awk -F "/" '/google/{system("docker tag "$0" reg.harbor.org/k8s/"$3)}'

[root@k8s-master ~]# docker images --format "{{.Repository}}:{{.Tag}}" | awk -F "/" '/harbor/{system("docker push "$0)}'
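The two awk one-liners above derive the Harbor name from the third `/`-separated field of each image reference. The sketch below does the same with named shell functions, which are easier to read and to dry-run. `harbor_ref` and `mirror_to_harbor` are hypothetical helper names. Their defaults assume the `reg.harbor.org/k8s` project used above:

```bash
# Map a source image reference to the Harbor project, keeping only the last
# path component, e.g.
#   registry.aliyuncs.com/google_containers/kube-apiserver:v1.34.5
#   -> reg.harbor.org/k8s/kube-apiserver:v1.34.5
harbor_ref() {
  local src=$1 harbor=${2:-reg.harbor.org} project=${3:-k8s}
  printf '%s/%s/%s\n' "$harbor" "$project" "${src##*/}"
}

# Tag and push every local image whose reference contains $1.
# Requires a prior "docker login" to Harbor and an existing project.
mirror_to_harbor() {
  local filter=$1 harbor=${2:-reg.harbor.org} project=${3:-k8s} img dst
  docker images --format '{{.Repository}}:{{.Tag}}' | grep -F -- "$filter" |
    while read -r img; do
      dst=$(harbor_ref "$img" "$harbor" "$project")
      docker tag "$img" "$dst" && docker push "$dst"
    done
}

# Usage: mirror_to_harbor google_containers
```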

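Run on the control-plane node

[root@k8s-master ~]# kubeadm config print init-defaults > kubeadm-init.yaml

[root@k8s-master ~]# vim kubeadm-init.yaml #edit the following lines (the numbers are line numbers in the generated file)

12 advertiseAddress: 172.25.254.100

15 criSocket: unix:///var/run/cri-dockerd.sock

18 name: k8s-master

41 imageRepository: registry.aliyuncs.com/google_containers #can be changed to the local Harbor project once the Kubernetes images have been pushed there (pull them from the Aliyun mirror first if they are missing)

43 kubernetesVersion: 1.34.5

47 podSubnet: 10.244.0.0/16 #new line; must match CALICO_IPV4POOL_CIDR in the Calico manifest

#Append at the end of the file

```yaml
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
```

#Validate the file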

kubeadm config validate --config=kubeadm-init.yaml

[root@k8s-master ~]# kubeadm init --config=kubeadm-init.yaml --ignore-preflight-errors=all

#If initialization fails, reset and clean up, then initialize again

kubeadm reset -f --cri-socket=unix:///var/run/cri-dockerd.sock && rm -rf $HOME/.kube /etc/cni/ /etc/kubernetes/ && ipvsadm --clear

#After a successful init, configure kubectl access

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source ~/.bash_profile

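15. Join the worker nodes

#On each worker node, run the join command that kubeadm init printed on the control-plane node, and add the cri-dockerd socket

kubeadm join 172.25.254.100:6443 --token abcdef.0123456789abcdef --discovery-token-ca-cert-hash sha256:1e412c3723d1b9830075632af4fd4e5bf2ec8901cce8d062d31f21a66025af73 --cri-socket=unix:///var/run/cri-dockerd.sock

#If the token has expired or the command was lost, generate a new one on the control-plane node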

kubeadm token create --print-join-command

#If joining fails, reset the node, restart the cri-docker service and join again

kubeadm reset --cri-socket=unix:///var/run/cri-dockerd.sock

systemctl restart cri-docker
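Sometimes only the token is at hand, for example after running `kubeadm token create` without `--print-join-command`. In that case the `sha256:` value for `--discovery-token-ca-cert-hash` can be recomputed from the cluster CA. The sketch below wraps the openssl pipeline described in the kubeadm documentation. The function name is illustrative, and it assumes the default RSA CA at `/etc/kubernetes/pki/ca.crt`:

```bash
# Print the hex SHA-256 of the CA's DER-encoded public key
# (SubjectPublicKeyInfo), which kubeadm join expects as sha256:<hash>.
ca_cert_hash() {
  local ca=${1:-/etc/kubernetes/pki/ca.crt}
  openssl x509 -pubkey -noout -in "$ca" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Usage on the control-plane node:
#   echo "sha256:$(ca_cert_hash)"
```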

16.k8s部署calico网络插件

获取calico部署的自定义资源:

https://docs.tigera.io/calico/latest/getting-started/kubernetes/self-managed-onprem/onpremises#install-calico

打开后点击下载超过50节点yaml文件

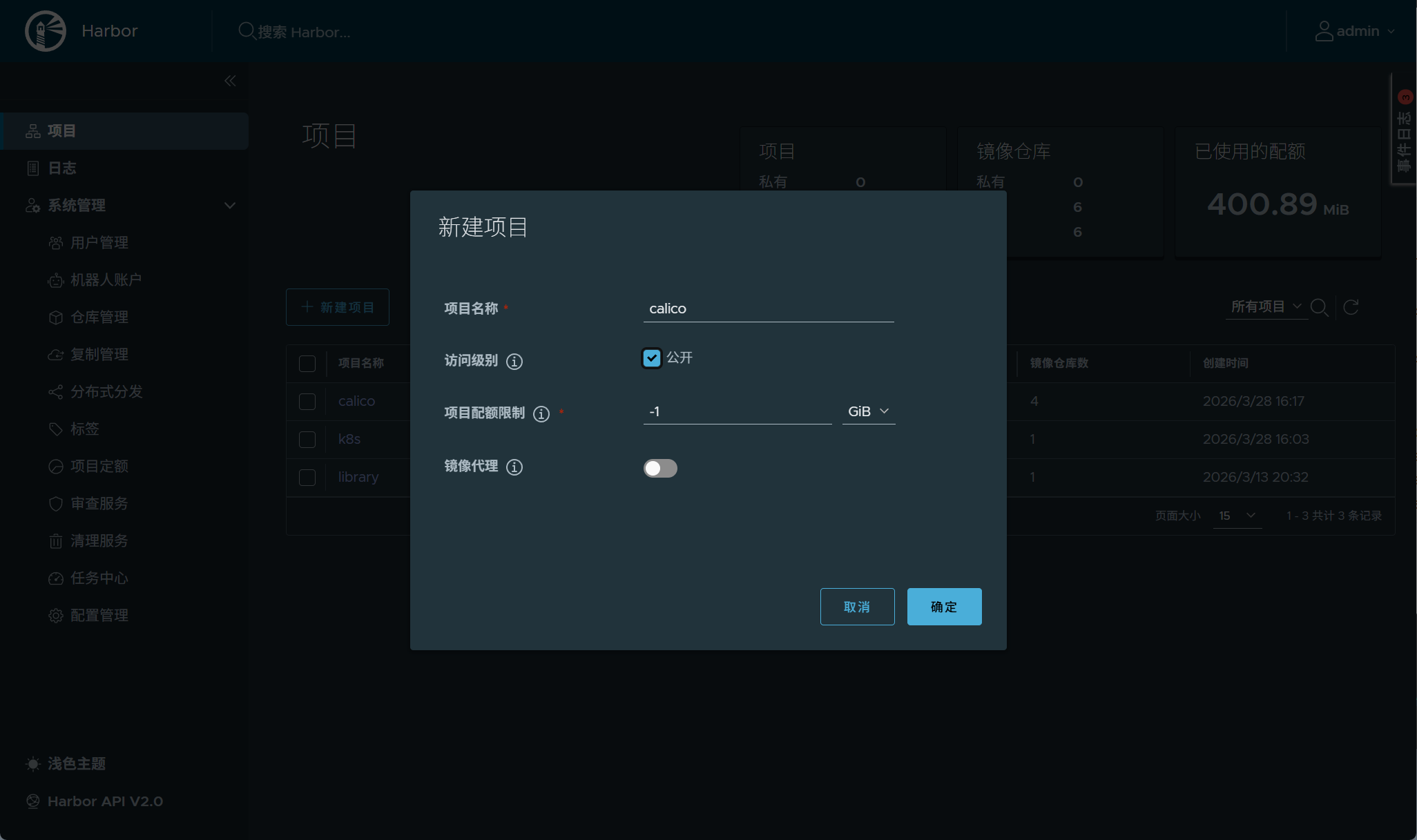

harbor仓库新建项目存放calico镜像

从本地信任的镜像把部署所需镜像打标签推送到本地harbor仓库

[root@k8s-master ~]# docker tag quay.io/calico/cni:v3.31.4 reg.harbor.org/calico/cni:v3.31.4

[root@k8s-master ~]# docker tag quay.io/calico/kube-controllers:v3.31.4 reg.harbor.org/calico/kube-controllers:v3.31.4

[root@k8s-master ~]# docker tag quay.io/calico/node:v3.31.4 reg.harbor.org/calico/node:v3.31.4

[root@k8s-node1 ~]# docker tag quay.io/calico/typha:v3.31.4 reg.harbor.org/calico/typha:v3.31.4

[root@k8s-master ~]# docker push reg.harbor.org/calico/cni:v3.31.4

[root@k8s-master ~]# docker push reg.harbor.org/calico/kube-controllers:v3.31.4

[root@k8s-master ~]# docker push reg.harbor.org/calico/node:v3.31.4

[root@k8s-node1 ~]# docker push reg.harbor.org/calico/typha:v3.31.4

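Run the following on the master node only

[root@k8s-master ~]# curl https://raw.githubusercontent.com/projectcalico/calico/v3.31.4/manifests/calico-typha.yaml -o calico.yaml

[root@k8s-master ~]# vim calico.yaml

#Also change every image: address to the local Harbor registry (reg.harbor.org/calico/...)

```yaml
......
# Enable IPIP
- name: CALICO_IPV4POOL_IPIP
  value: "Off"
# Enable or Disable VXLAN on the default IP pool.
- name: CALICO_IPV4POOL_VXLAN
  value: "Never"
# Enable or Disable VXLAN on the default IPv6 IP pool.
- name: CALICO_IPV6POOL_VXLAN
  value: "Never"
......
# Uncomment this entry and set it to the podSubnet from kubeadm-init.yaml
- name: CALICO_IPV4POOL_CIDR
  value: "10.244.0.0/16"
......
```

With both IPIP and VXLAN disabled, Calico routes Pod traffic without encapsulation (over BGP). This works here because all nodes are in the same 172.25.254.x subnet.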
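The registry prefix can be rewritten with sed instead of editing every `image:` line by hand. This sketch makes three assumptions:

- The manifest references images unquoted, as `<registry>/calico/<name>:<tag>`, whichever registry the downloaded file uses.
- The Harbor project is named calico, as above.
- GNU sed is available.

The function name is hypothetical:

```bash
# Rewrite "image: <any-registry>/calico/<name>:<tag>" to the Harbor project,
# keeping a backup of the original manifest as <file>.orig.
retarget_calico_images() {
  local harbor=$1 manifest=$2
  sed -E -i.orig \
    "s#(image:[[:space:]]*)[^[:space:]]*/calico/#\1${harbor}/calico/#" \
    "$manifest"
}

# Usage:
#   retarget_calico_images reg.harbor.org calico.yaml
#   grep 'image:' calico.yaml
```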

[root@k8s-master ~]# kubectl apply -f calico.yaml

#Wait until the Calico Pods are running, then check the nodes

[root@k8s-master ~]# kubectl get nodes

```
NAME         STATUS   ROLES           AGE   VERSION
k8s-master   Ready    control-plane   73m   v1.35.2
k8s-node1    Ready    <none>          46m   v1.35.2
k8s-node2    Ready    <none>          46m   v1.35.2
```

Deploying Helm

Install from the binary release: download the archive

wget https://get.helm.sh/helm-v4.1.3-linux-amd64.tar.gz

Extract it and copy the binary to /usr/local/bin

tar zxf helm-v4.1.3-linux-amd64.tar.gz

cp -p linux-amd64/helm /usr/local/bin/

Enable command completion and check the version

echo "source <(helm completion bash)" >> ~/.bashrc

source ~/.bashrc

helm version

version.BuildInfo{Version:"v4.1.3", GitCommit:"c94d381b03be117e7e57908edbf642104e00eb8f", GitTreeState:"clean", GoVersion:"go1.25.8", KubeClientVersion:"v1.35"}

Add commonly used Helm repositories

helm repo add azure http://mirror.azure.cn/kubernetes/charts

helm repo add aliyun https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts

helm repo add bitnami https://charts.bitnami.com/bitnami

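Deploying Metrics Server

Create a working directory

mkdir metrics

cd metrics/

Add the Helm chart repository

helm repo add metrics-server https://kubernetes-sigs.github.io/metrics-server/

Dump the chart's default values to a file and edit it

helm show values metrics-server/metrics-server > defaults-values.yaml

vim defaults-values.yaml

6 repository: registry.aliyuncs.com/google_containers/metrics-server #switch the image source

68 containerPort: 10300 #change the port

76 enabled: true #enable hostNetwork (use the host's network stack)

78 replicas: 2 #adjust to the number of worker nodes

101 args:

102 - --secure-port=10300

103 - --kubelet-insecure-tls #required when the kubelets use self-signed certificates

[!NOTE]

Note: with hostNetwork enabled, metrics-server uses the host's network stack. Its default port 10250 would then conflict with the kubelet's default port 10250, so the port is changed to 10300 above; containerPort and --secure-port must match.

Install with the edited values file

helm upgrade --install metrics-server metrics-server/metrics-server -n kube-system -f defaults-values.yaml

Check node and Pod resource usage to verify the installation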

kubectl top nodes

kubectl top pods --all-namespaces

Deploying the Ceph cluster

1. Switch the package repositories to the Aliyun mirror

All hosts

```bash
sed -e 's|^mirrorlist=|#mirrorlist=|g' \
    -e 's|^#baseurl=http://dl.rockylinux.org/$contentdir|baseurl=https://mirrors.aliyun.com/rockylinux|g' \
    -i.bak \
    /etc/yum.repos.d/Rocky-*.repo
```

dnf makecache

2. Install a minimal set of common tools

All hosts

dnf install wget tree bash-completion vim psmisc net-tools -y

source /etc/profile.d/bash_completion.sh

3. Configure time synchronization

All hosts

dnf install chrony -y

sed -i '/^pool/ c server ntp.aliyun.com iburst' /etc/chrony.conf

systemctl enable --now chronyd

systemctl restart chronyd

chronyc sources -v

4. Install cephadm

cat >> /etc/yum.repos.d/ceph.repo <<EOF

[ceph]

name=Ceph

baseurl=https://mirrors.aliyun.com/ceph/rpm-squid/el9/x86_64

gpgcheck=0

[ceph-noarch]

name=Ceph noarch

baseurl=https://mirrors.aliyun.com/ceph/rpm-squid/el9/noarch

gpgcheck=0

[ceph-source]

name=Ceph source

baseurl=https://mirrors.aliyun.com/ceph/rpm-squid/el9/SRPMS

gpgcheck=0

EOF

dnf install cephadm -y

5. Install Docker

All hosts

yum install -y yum-utils

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

dnf install docker-ce -y

systemctl enable --now docker

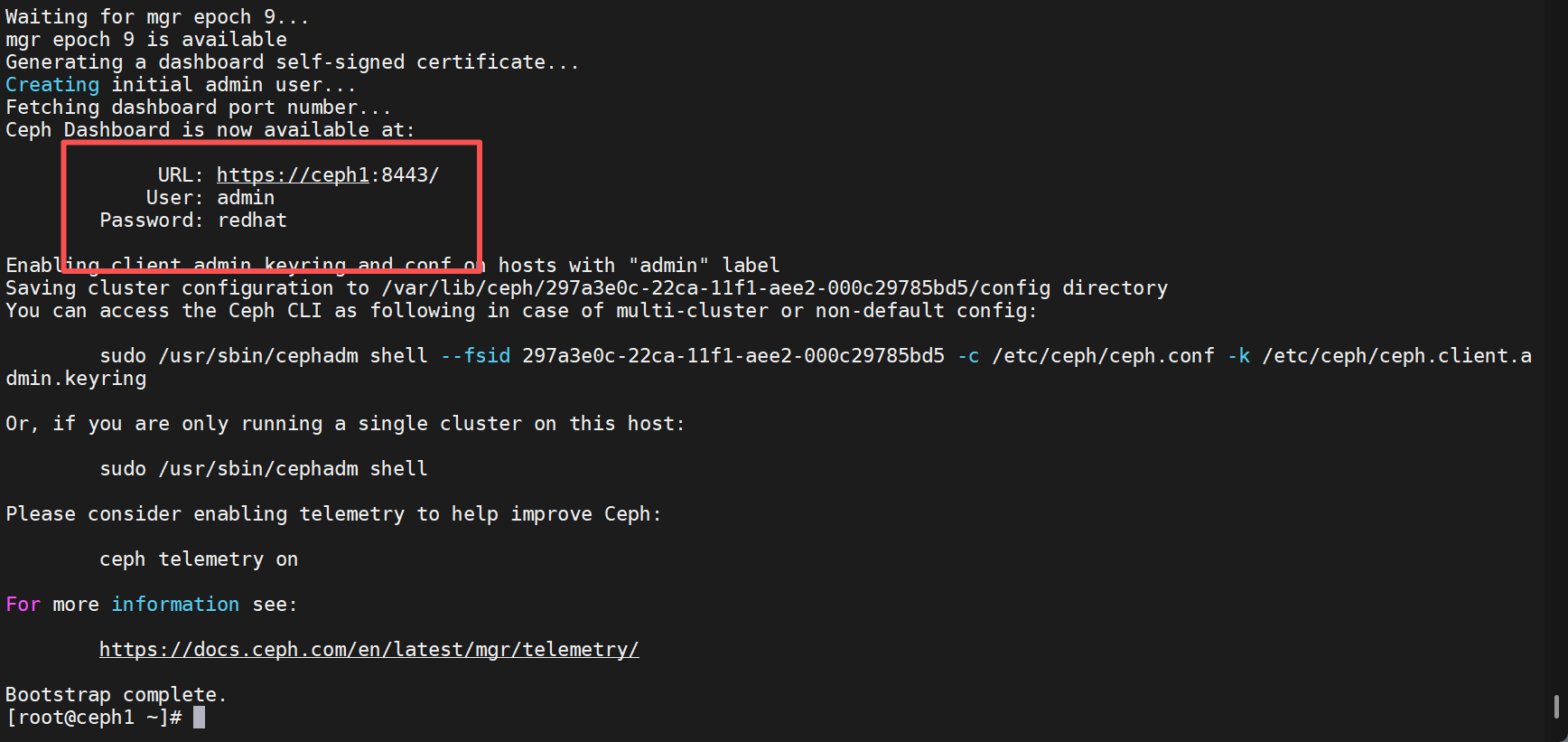

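6. Bootstrap the cluster with cephadm

Run on ceph1

```bash
cephadm --docker bootstrap \
    --mon-ip 172.25.254.91 \
    --initial-dashboard-user admin \
    --initial-dashboard-password redhat \
    --dashboard-password-noupdate \
    --allow-fqdn-hostname
```

Initialization is complete when the command ends with its summary (the dashboard URL and credentials, followed by "Bootstrap complete.").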

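7. Add the other nodes to the cluster

Copy the cluster's SSH public key to the nodes being added

ssh-copy-id -f -i /etc/ceph/ceph.pub root@ceph2

ssh-copy-id -f -i /etc/ceph/ceph.pub root@ceph3

Add the nodes to the cluster

cephadm shell -- ceph orch host add ceph2 172.25.254.92

cephadm shell -- ceph orch host add ceph3 172.25.254.93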

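8. Install ceph-common (the client tools)

Install on all Ceph cluster nodes

#ceph-common has dependencies in EPEL, so install the EPEL repository first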

yum install -y https://mirrors.aliyun.com/epel/epel-release-latest-9.noarch.rpm

sed -i 's|^#baseurl=https://download.example/pub|baseurl=https://mirrors.aliyun.com|' /etc/yum.repos.d/epel*

sed -i 's|^metalink|#metalink|' /etc/yum.repos.d/epel*

dnf install ceph-common -y


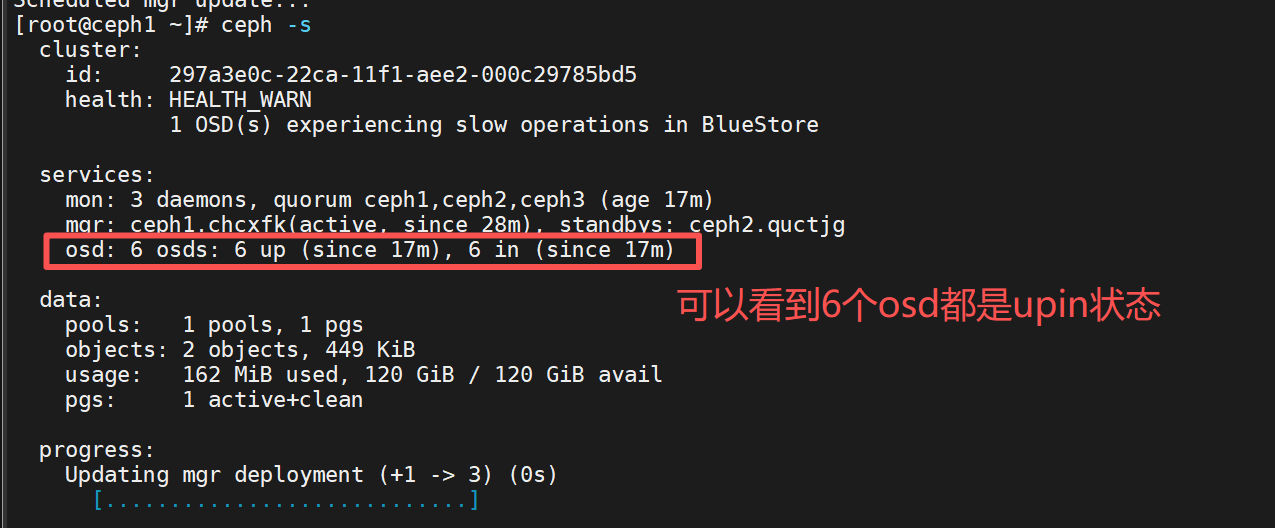

9. Add OSDs

#List the available devices

ceph orch device ls --wide

ceph orch apply osd --all-available-devices

Or add them one device at a time

ceph orch daemon add osd ceph1:/dev/nvme0n2

ceph orch daemon add osd ceph1:/dev/nvme0n3

ceph orch daemon add osd ceph2:/dev/nvme0n2

ceph orch daemon add osd ceph2:/dev/nvme0n3

ceph orch daemon add osd ceph3:/dev/nvme0n2

ceph orch daemon add osd ceph3:/dev/nvme0n3

......

#Check the OSD status

ceph osd tree

ceph osd stat

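10. Add an admin host

The _admin label tells cephadm to copy /etc/ceph/ceph.conf and the client.admin keyring to that host, so the ceph CLI can be used there.

ceph orch host label add ceph1 _admin

11. Deploy mon and mgr

ceph orch apply mon "ceph1 ceph2 ceph3"

ceph orch apply mgr --placement "ceph1 ceph2 ceph3"

12. Check the Ceph cluster status

ceph -s

ceph -w #watch the cluster status in real time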

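Connecting Kubernetes to the Ceph cluster

Project page: https://github.com/ceph/ceph-csi

#Download the source, then cd into the deploy directory for RBD or CephFS

cd ceph-csi-3.16.2/deploy/rbd/kubernetes/ #or ceph-csi-3.16.2/deploy/cephfs/kubernetes/ for CephFS

#The directory contains YAML files for:
#- RBAC (service accounts and roles)
#- the controller (provisioner) and node plugin
#- the ConfigMaps and Secret
#- the CSIDriver

https://github.com/ceph/ceph-csi/tree/devel/examples

#examples/ contains sample PVC, StorageClass and test Pod YAML files for RBD and CephFS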

Connecting Kubernetes to Ceph RBD

1. Install the Ceph client on the Kubernetes nodes

cat >> /etc/yum.repos.d/ceph.repo <<EOF

[ceph]

name=Ceph

baseurl=https://mirrors.aliyun.com/ceph/rpm-squid/el9/x86_64

gpgcheck=0

[ceph-noarch]

name=Ceph noarch

baseurl=https://mirrors.aliyun.com/ceph/rpm-squid/el9/noarch

gpgcheck=0

[ceph-source]

name=Ceph source

baseurl=https://mirrors.aliyun.com/ceph/rpm-squid/el9/SRPMS

gpgcheck=0

EOF

yum install -y https://mirrors.aliyun.com/epel/epel-release-latest-9.noarch.rpm

sed -i 's|^#baseurl=https://download.example/pub|baseurl=https://mirrors.aliyun.com|' /etc/yum.repos.d/epel*

sed -i 's|^metalink|#metalink|' /etc/yum.repos.d/epel*

dnf install ceph-common -y

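2. Copy the Ceph configuration

ceph-common can only manage the Ceph cluster once the configuration file and keyring from /etc/ceph are present. Copy them from ceph1: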

scp -r /etc/ceph/ root@k8s-master:/etc/

scp -r /etc/ceph/ root@k8s-node1:/etc/

scp -r /etc/ceph/ root@k8s-node2:/etc/

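3. Create and initialize the data pool

Create the Ceph pool (its Ceph user is created in step 9)

[root@k8s-master ~]# ceph osd pool create kubernetes

[root@k8s-master ~]# rbd pool init kubernetes

If the pool was created by mistake, delete it with the following commands

#List the existing pools

[root@k8s-master kubernetes]# ceph osd pool ls

#Pool deletion is disabled by default as a safety measure; enable it temporarily

[root@k8s-master kubernetes]# ceph config set mon mon_allow_pool_delete true

#The pool name must be typed twice to confirm the deletion

[root@k8s-master kubernetes]# ceph osd pool delete kubernetes kubernetes --yes-i-really-really-mean-it

#Disable pool deletion again

[root@k8s-master kubernetes]# ceph config set mon mon_allow_pool_delete false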

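4. Remove the taint from the master node

The upstream provisioner Deployment runs three replicas with pod anti-affinity. With only two worker nodes, the master must also accept Pods.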

[root@k8s-master kubernetes]# kubectl describe nodes k8s-master | grep -i taint

[root@k8s-master kubernetes]# kubectl taint node k8s-master node-role.kubernetes.io/control-plane:NoSchedule-

node/k8s-master untainted

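5. Deploy the service accounts and RBAC rules for the CSI controller and node plugin

#Create the service accounts and their RBAC permissions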

[root@k8s-master kubernetes]# kubectl apply -f csi-provisioner-rbac.yaml

[root@k8s-master kubernetes]# kubectl apply -f csi-nodeplugin-rbac.yaml

6. Deploy the CSI driver

[root@k8s-master kubernetes]# cat csidriver.yaml

```yaml
---
apiVersion: storage.k8s.io/v1
kind: CSIDriver
metadata:
  name: "rbd.csi.ceph.com"
spec:
  attachRequired: true
  podInfoOnMount: false
  seLinuxMount: true
  fsGroupPolicy: File
```

#Create the CSIDriver object, which registers the driver so Kubernetes can provision and mount volumes through it

[root@k8s-master kubernetes]# kubectl apply -f csidriver.yaml

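7. Deploy the CSI controller and node plugin

#Without internet access, point the sidecar images at a domestic mirror (the images are defined in the provisioner and node plugin manifests)

[root@k8s-master kubernetes]# sed -i 's#registry.k8s.io/sig-storage#registry.cn-hangzhou.aliyuncs.com/google-containers#' csi-rbdplugin-provisioner.yaml

[root@k8s-master kubernetes]# sed -i 's#registry.k8s.io/sig-storage#registry.cn-hangzhou.aliyuncs.com/google-containers#' csi-rbdplugin.yaml

#Deploy the CSI controller (provisioner) and the CSI node plugin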

[root@k8s-master kubernetes]# kubectl apply -f csi-rbdplugin-provisioner.yaml

[root@k8s-master kubernetes]# kubectl apply -f csi-rbdplugin.yaml

8. Create the ConfigMaps

#Show the cluster information; the fsid is the clusterID that the ConfigMaps and StorageClasses must reference

[root@k8s-master ~]# ceph mon dump

```
epoch 3
fsid 297a3e0c-22ca-11f1-aee2-000c29785bd5
last_changed 2026-03-18T13:27:47.857110+0000
created 2026-03-18T12:58:35.103687+0000
min_mon_release 19 (squid)
election_strategy: 1
0: [v2:172.25.254.91:3300/0,v1:172.25.254.91:6789/0] mon.ceph1
1: [v2:172.25.254.92:3300/0,v1:172.25.254.92:6789/0] mon.ceph2
2: [v2:172.25.254.93:3300/0,v1:172.25.254.93:6789/0] mon.ceph3
dumped monmap epoch 3
```
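The clusterID and monitor addresses for the ConfigMap can be pulled out of this output automatically. This sketch assumes the plain-text format shown above: an `fsid` line plus `v1:<ip>:<port>` monitor addresses. `mon_dump_to_csi_json` is a hypothetical helper. If jq is installed, `ceph mon dump -f json` would be a more robust input:

```bash
# Read `ceph mon dump` text on stdin and print the ceph-csi config.json array
# (one cluster entry with its clusterID and v1 monitor addresses).
mon_dump_to_csi_json() {
  local dump fsid mons
  dump=$(cat)
  fsid=$(printf '%s\n' "$dump" | awk '$1 == "fsid" { print $2; exit }')
  # Keep only the msgr v1 addresses, quoted and comma-separated.
  mons=$(printf '%s\n' "$dump" \
    | grep -o 'v1:[0-9.]*:[0-9]*' \
    | sed 's/^v1:\(.*\)$/"\1"/' \
    | paste -sd, -)
  printf '[{"clusterID":"%s","monitors":[%s]}]\n' "$fsid" "$mons"
}

# Usage on a host with ceph-common and /etc/ceph configured:
#   ceph mon dump 2>/dev/null | mon_dump_to_csi_json
```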

[root@k8s-master kubernetes]# vim csi-config-map.yaml

```yaml
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: "ceph-csi-config"
data:
  config.json: |-
    [
      {
        "clusterID": "297a3e0c-22ca-11f1-aee2-000c29785bd5",
        "monitors": [
          "172.25.254.91:6789",
          "172.25.254.92:6789",
          "172.25.254.93:6789"
        ]
      }
    ]
```

[root@k8s-master kubernetes]# kubectl apply -f csi-config-map.yaml

[root@k8s-master kubernetes]# cat ceph-config-map.yaml

```yaml
---
apiVersion: v1
kind: ConfigMap
data:
  ceph.conf: |
    [global]
    auth_cluster_required = cephx
    auth_service_required = cephx
    auth_client_required = cephx
  keyring: |
metadata:
  name: ceph-config
```

[root@k8s-master kubernetes]# kubectl apply -f ceph-config-map.yaml

[root@k8s-master kubernetes]# cat csi-kms-config-map.yaml

```yaml
---
apiVersion: v1
kind: ConfigMap
data:
  config.json: |-
    {}
metadata:
  name: ceph-csi-encryption-kms-config
```

[root@k8s-master kubernetes]# kubectl apply -f csi-kms-config-map.yaml

9. Create the authentication Secret

#Create a Ceph user for Kubernetes (ceph-csi) with RBD access to the kubernetes pool

[root@k8s-master ~]# ceph auth get-or-create client.kubernetes mon 'profile rbd' osd 'profile rbd pool=kubernetes' mgr 'profile rbd pool=kubernetes'

[client.kubernetes]

key = AQBui7tpyXcLNxAAfRIyG39SG4ogbTpUx5Knvw==

#Verify the user

[root@k8s-master ~]# ceph auth ls | grep -A 4 client.kubernetes

client.kubernetes

key: AQBui7tpyXcLNxAAfRIyG39SG4ogbTpUx5Knvw==

caps: [mgr] profile rbd pool=kubernetes

caps: [mon] profile rbd

caps: [osd] profile rbd pool=kubernetes

[root@k8s-master kubernetes]# cat csi-rbd-secret.yaml

```yaml
---
apiVersion: v1
kind: Secret
metadata:
  name: csi-rbd-secret
stringData:
  userID: kubernetes
  userKey: AQBui7tpyXcLNxAAfRIyG39SG4ogbTpUx5Knvw==
```

[root@k8s-master kubernetes]# kubectl apply -f csi-rbd-secret.yaml

10. Create the StorageClass

The clusterID must be the fsid used in the ceph-csi-config ConfigMap (297a3e0c-22ca-11f1-aee2-000c29785bd5). With any other value, ceph-csi cannot find the cluster and provisioning fails.

```bash
$ cat <<EOF > csi-rbd-sc.yaml
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: csi-rbd-sc
provisioner: rbd.csi.ceph.com
parameters:
  clusterID: 297a3e0c-22ca-11f1-aee2-000c29785bd5
  pool: kubernetes
  imageFeatures: layering
  csi.storage.k8s.io/provisioner-secret-name: csi-rbd-secret
  csi.storage.k8s.io/provisioner-secret-namespace: default
  csi.storage.k8s.io/controller-expand-secret-name: csi-rbd-secret
  csi.storage.k8s.io/controller-expand-secret-namespace: default
  csi.storage.k8s.io/node-stage-secret-name: csi-rbd-secret
  csi.storage.k8s.io/node-stage-secret-namespace: default
reclaimPolicy: Delete
allowVolumeExpansion: true
mountOptions:
  - discard
EOF
$ kubectl apply -f csi-rbd-sc.yaml
```

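Connecting Kubernetes to CephFS

1. Preparation

These steps are the same as for Ceph RBD:

- Install the Ceph client on the Kubernetes nodes

- Copy the Ceph configuration

2. Create the data pool, the metadata pool and the file system

Unlike RBD, CephFS also needs a metadata pool

#Create the data pool and the metadata pool (64 placement groups each)

ceph osd pool create k8s_cephfs_data 64

ceph osd pool create k8s_cephfs_metadata 64

#Create the file system from the metadata and data pools

ceph fs new <name> <metadata-pool> <data-pool>

ceph fs new k8s_cephfs k8s_cephfs_metadata k8s_cephfs_data

#Verify

ceph osd pool ls #list the pools

ceph fs ls #list the file systems

3. Deploy the MDS

The MDS (Metadata Server) is required for CephFS; it handles the file system's metadata

#Create the MDS daemons; --placement="3 ceph1 ceph2 ceph3" deploys 3 daemons on the listed hosts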

ceph orch apply mds k8s_cephfs --placement="3 ceph1 ceph2 ceph3"

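4. Create a subvolume group

#Create a subvolume group in the CephFS file system. Without it the PVC stays Pending. ceph-csi does not provision volumes at the file system root; it creates each one as a subvolume inside this group, whose name must match subvolumeGroup ("csi") in the CSI ConfigMap.

[root@k8s-master kubernetes]# ceph fs subvolumegroup create k8s_cephfs csi

[root@k8s-master kubernetes]# ceph fs subvolumegroup ls k8s_cephfs #the new group is listed

```
[
    {
        "name": "csi"
    }
]
```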

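5. Create users (optional)

A dedicated user like this can be the one CSI uses to access the Ceph cluster, with its permissions restricted as needed. The Secret below uses client.admin instead.

#Create Ceph users for mounting (optional)

[root@k8s-master kubernetes]# ceph fs authorize k8s_cephfs client.user01 / rwps -o /etc/ceph/ceph.client.user01.keyring

[root@k8s-master kubernetes]# ceph fs authorize k8s_cephfs client.user02 / r -o /etc/ceph/ceph.client.user02.keyring

CephFS client permissions:

r: read; unless further restricted, it also applies to subdirectories

w: write; unless further restricted, it also applies to subdirectories

p: set file layouts and quotas

s: create and delete snapshots

#Copy the keyrings to the client hosts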

scp /etc/ceph/ceph.client.user01.keyring client1:/etc/ceph/

scp /etc/ceph/ceph.client.user02.keyring client2:/etc/ceph/

6. Create the service accounts and RBAC rules for the CSI controller

#Create the service accounts and RBAC rules

[root@k8s-master kubernetes]# kubectl apply -f csi-nodeplugin-rbac.yaml -f csi-provisioner-rbac.yaml

7. Deploy the CSI driver

[root@k8s-master kubernetes]# cat csidriver.yaml

```yaml
---
apiVersion: storage.k8s.io/v1
kind: CSIDriver
metadata:
  name: "cephfs.csi.ceph.com"
spec:
  attachRequired: true
  podInfoOnMount: false
  fsGroupPolicy: File
  seLinuxMount: true
```

#Create the CSIDriver object, which registers the driver so Kubernetes can provision and mount volumes through it

[root@k8s-master kubernetes]# kubectl apply -f csidriver.yaml

8. Remove the taint from the master node (skip if already done for RBD)

[root@k8s-master kubernetes]# kubectl describe nodes k8s-master | grep -i taint

[root@k8s-master kubernetes]# kubectl taint node k8s-master node-role.kubernetes.io/control-plane:NoSchedule-

node/k8s-master untainted

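9. Deploy the CSI controller and node plugin

#Without internet access, point the sidecar images at a domestic mirror (the images are defined in the provisioner and node plugin manifests)

[root@k8s-master kubernetes]# sed -i 's#registry.k8s.io/sig-storage#registry.cn-hangzhou.aliyuncs.com/google-containers#' csi-cephfsplugin-provisioner.yaml

[root@k8s-master kubernetes]# sed -i 's#registry.k8s.io/sig-storage#registry.cn-hangzhou.aliyuncs.com/google-containers#' csi-cephfsplugin.yaml

Deploy the CSI provisioner and the CSI node plugin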

[root@k8s-master kubernetes]# kubectl apply -f csi-cephfsplugin-provisioner.yaml -f csi-cephfsplugin.yaml

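10. Create the ConfigMap

This ConfigMap has the same name (ceph-csi-config) as the one created for RBD, so applying it replaces that one. It keeps the same cluster entry and only adds the cephFS settings, so RBD keeps working.

[root@k8s-master kubernetes]# vim csi-cephfs-config-map.yaml

```yaml
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: "ceph-csi-config"
data:
  config.json: |-
    [
      {
        "clusterID": "297a3e0c-22ca-11f1-aee2-000c29785bd5",
        "monitors": [
          "172.25.254.91:6789",
          "172.25.254.92:6789",
          "172.25.254.93:6789"
        ],
        "cephFS": {
          "subvolumeGroup": "csi"
        }
      }
    ]
```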

[root@k8s-master kubernetes]# kubectl apply -f csi-cephfs-config-map.yaml

11. Create the Secret

#Here ceph-csi simply uses the client.admin user to access the Ceph cluster

[root@k8s-master kubernetes]# ceph auth get client.admin

[client.admin]

key = AQB5obppMzDDNRAAd5QM5eCYI3syzYr1tIdfYQ==

caps mds = "allow *"

caps mgr = "allow *"

caps mon = "allow *"

caps osd = "allow *"

[root@k8s-master kubernetes]# vim csi-cephfs-secret.yaml

```yaml
---
apiVersion: v1
kind: Secret
metadata:
  name: csi-cephfs-secret
stringData:
  userID: admin
  userKey: AQB5obppMzDDNRAAd5QM5eCYI3syzYr1tIdfYQ==
  adminID: admin
  adminKey: AQB5obppMzDDNRAAd5QM5eCYI3syzYr1tIdfYQ==
  encryptionPassphrase: test_passphrase
```

[root@k8s-master kubernetes]# kubectl apply -f csi-cephfs-secret.yaml

12. Create the StorageClass

[root@k8s-master kubernetes]# vim csi-cephfs-sc.yaml

```yaml
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: csi-cephfs-sc
provisioner: cephfs.csi.ceph.com
parameters:
  clusterID: 297a3e0c-22ca-11f1-aee2-000c29785bd5
  fsName: k8s_cephfs
  pool: k8s_cephfs_data
  csi.storage.k8s.io/provisioner-secret-name: csi-cephfs-secret
  csi.storage.k8s.io/provisioner-secret-namespace: default
  csi.storage.k8s.io/controller-expand-secret-name: csi-cephfs-secret
  csi.storage.k8s.io/controller-expand-secret-namespace: default
  csi.storage.k8s.io/controller-publish-secret-name: csi-cephfs-secret
  csi.storage.k8s.io/controller-publish-secret-namespace: default
  csi.storage.k8s.io/node-stage-secret-name: csi-cephfs-secret
  csi.storage.k8s.io/node-stage-secret-namespace: default
  mounter: kernel
reclaimPolicy: Delete
allowVolumeExpansion: true
```

[root@k8s-master kubernetes]# kubectl apply -f csi-cephfs-sc.yaml
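Before wiring either StorageClass into a Helm chart, a throwaway PVC is a quick way to check that it really provisions volumes. This is a sketch; the function name, PVC names and the 1Gi default size are illustrative assumptions. RBD volumes are normally used ReadWriteOnce, while CephFS also supports ReadWriteMany:

```bash
# Print a PersistentVolumeClaim manifest for the given StorageClass.
# Arguments: name, storageClassName, access mode (default ReadWriteOnce),
# requested size (default 1Gi).
render_test_pvc() {
  local name=$1 sc=$2 mode=${3:-ReadWriteOnce} size=${4:-1Gi}
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ${name}
spec:
  accessModes:
    - ${mode}
  storageClassName: ${sc}
  resources:
    requests:
      storage: ${size}
EOF
}

# Usage:
#   render_test_pvc rbd-test-pvc csi-rbd-sc | kubectl apply -f -
#   render_test_pvc cephfs-test-pvc csi-cephfs-sc ReadWriteMany | kubectl apply -f -
#   kubectl get pvc        # both should reach STATUS Bound
#   kubectl delete pvc rbd-test-pvc cephfs-test-pvc
```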

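Deploying Loki

Installing Loki

Create a working directory

[root@k8s-master ~]# mkdir loki

[root@k8s-master ~]# cd loki/

Add the Grafana Helm repository and pull the loki-stack chart

[root@k8s-master loki]# helm repo add grafana https://grafana.github.io/helm-charts

[root@k8s-master loki]# helm pull grafana/loki-stack --version 2.9.11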

[root@k8s-master loki]# ls

loki-stack-2.9.11.tgz

[root@k8s-master loki]# tar zxf loki-stack-2.9.11.tgz

[root@k8s-master loki]# cd loki-stack/

[root@k8s-master loki-stack]# ls

Chart.yaml charts requirements.yaml values-pre.yaml values.yaml

README.md requirements.lock templates values-pre.yaml.bak

Edit the custom values file

[root@k8s-master loki-stack]# cat values-pre.yaml

```yaml
loki:
  enabled: true
  persistence:
    enabled: true
    accessModes:
      - ReadWriteOnce
    size: 2Gi
    storageClassName: csi-cephfs-sc   # use the Ceph StorageClass
promtail:
  enabled: true
  defaultVolumes:
    - name: run
      hostPath:
        path: /run/promtail
    - name: containers
      hostPath:
        path: /var/lib/docker/containers/
    - name: pods
      hostPath:
        path: /var/log/pods
  defaultVolumeMounts:
    - name: run
      mountPath: /run/promtail
    - name: containers
      mountPath: /var/lib/docker/containers/
      readOnly: true
    - name: pods
      mountPath: /var/log/pods
      readOnly: true
grafana:
  enabled: true
  persistence:
    enabled: true
    accessModes:
      - ReadWriteOnce
    size: 2Gi
    storageClassName: csi-cephfs-sc
```

Create the namespace before installing

[root@k8s-master loki-stack]# kubectl create ns loki

Then install Loki

#Run from the chart directory (.) and pass the values file with -f

[root@k8s-master loki-stack]# helm install loki . -n loki -f values-pre.yaml

NAME: loki

LAST DEPLOYED: Thu Mar 26 00:27:22 2026

NAMESPACE: loki

STATUS: deployed

REVISION: 1

DESCRIPTION: Install complete

NOTES:

The Loki stack has been deployed to your cluster. Loki can now be added as a datasource in Grafana.

See http://docs.grafana.org/features/datasources/loki/ for more detail.

After installation, check the Pods and Services

[root@k8s-master loki-stack]# kubectl get pod,svc -n loki

```
NAME                              READY   STATUS    RESTARTS   AGE
pod/loki-0                        1/1     Running   0          2m48s
pod/loki-grafana-679c5987-9526m   2/2     Running   0          2m48s
pod/loki-promtail-5hl7w           1/1     Running   0          2m48s
pod/loki-promtail-hllvg           1/1     Running   0          2m48s
pod/loki-promtail-sqths           1/1     Running   0          2m48s

NAME                      TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
service/loki              ClusterIP   10.103.35.250   <none>        3100/TCP   2m48s
service/loki-grafana      ClusterIP   10.99.149.71    <none>        80/TCP     2m48s
service/loki-headless     ClusterIP   None            <none>        3100/TCP   2m48s
service/loki-memberlist   ClusterIP   None            <none>        7946/TCP   2m48s
```

Grafana is reachable through the Service named loki-grafana. Its type is ClusterIP, which cannot be reached from outside the cluster, so change it to NodePort or expose it through an Ingress. NodePort is used here; edit loki-grafana with:

[root@k8s-master loki-stack]# kubectl -n loki edit svc loki-grafana

Change type: ClusterIP to type: NodePort, save and exit, then check the Services:

[root@k8s-master loki-stack]# kubectl get svc -n loki

```
NAME              TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE
loki              ClusterIP   10.103.35.250   <none>        3100/TCP       6m33s
loki-grafana      NodePort    10.99.149.71    <none>        80:32322/TCP   6m33s
loki-headless     ClusterIP   None            <none>        3100/TCP       6m33s
loki-memberlist   ClusterIP   None            <none>        7946/TCP       6m33s
```

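loki-grafana is now of type NodePort. Open http://172.25.254.100:32322 in a browser to access it.

Tip: the IP of any cluster node plus the assigned NodePort (32322 here) works.

The default user name is admin. If you do not know the password, read it from the Secret: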

[root@k8s-master loki-stack]# kubectl get secrets -n loki

```
NAME                         TYPE                 DATA   AGE
loki                         Opaque               1      8m43s
loki-grafana                 Opaque               3      8m43s   # this Secret stores the password
loki-promtail                Opaque               1      8m43s
sh.helm.release.v1.loki.v1   helm.sh/release.v1   1      8m43s
```

[root@k8s-master loki-stack]# kubectl get secret loki-grafana -n loki -o yaml

```yaml
apiVersion: v1
data:
  admin-password: Nks5b3Z0SDh5MTRkU1ZjWDRXSE4xbnNtM25iTFRIQVRWSzVTRVJhSw==
  admin-user: YWRtaW4=
  ldap-toml: ""
kind: Secret
metadata:
  annotations:
    meta.helm.sh/release-name: loki
    meta.helm.sh/release-namespace: loki
  creationTimestamp: "2026-03-25T16:27:22Z"
  labels:
    app.kubernetes.io/instance: loki
    app.kubernetes.io/managed-by: Helm
    app.kubernetes.io/name: grafana
    app.kubernetes.io/version: 8.3.5
    helm.sh/chart: grafana-6.43.5
  name: loki-grafana
  namespace: loki
  resourceVersion: "8404"
  uid: 4e99734d-6390-4680-8486-8551bb501ec5
type: Opaque
```

Decode the password with:

[root@k8s-master loki-stack]# echo -n "Nks5b3Z0SDh5MTRkU1ZjWDRXSE4xbnNtM25iTFRIQVRWSzVTRVJhSw==" | base64 -d

6K9ovtH8y14dSVcX4WHN1nsm3nbLTHATVK5SERaK
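Instead of copying the base64 string by hand, a jsonpath query can read a single field and decode it in one step. This is a sketch with a few assumptions:

- `grafana_secret_field` is a hypothetical helper.
- It relies on the chart's `admin-user`/`admin-password` keys shown above.
- `KUBECTL` is an optional override, for example for a dry run.

```bash
# Print one field of a Grafana chart Secret, base64-decoded.
# KUBECTL may name another command or function; it defaults to kubectl.
grafana_secret_field() {
  local ns=$1 secret=$2 key=${3:-admin-password}
  "${KUBECTL:-kubectl}" -n "$ns" get secret "$secret" \
    -o "jsonpath={.data.${key}}" | base64 -d
  echo
}

# Usage:
#   grafana_secret_field loki loki-grafana                 # password
#   grafana_secret_field loki loki-grafana admin-user      # user name
#   grafana_secret_field kube-prometheus-stack kube-prometheus-stack-grafana
```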

Using Loki

After logging in with the user name and password, you will see the following screen

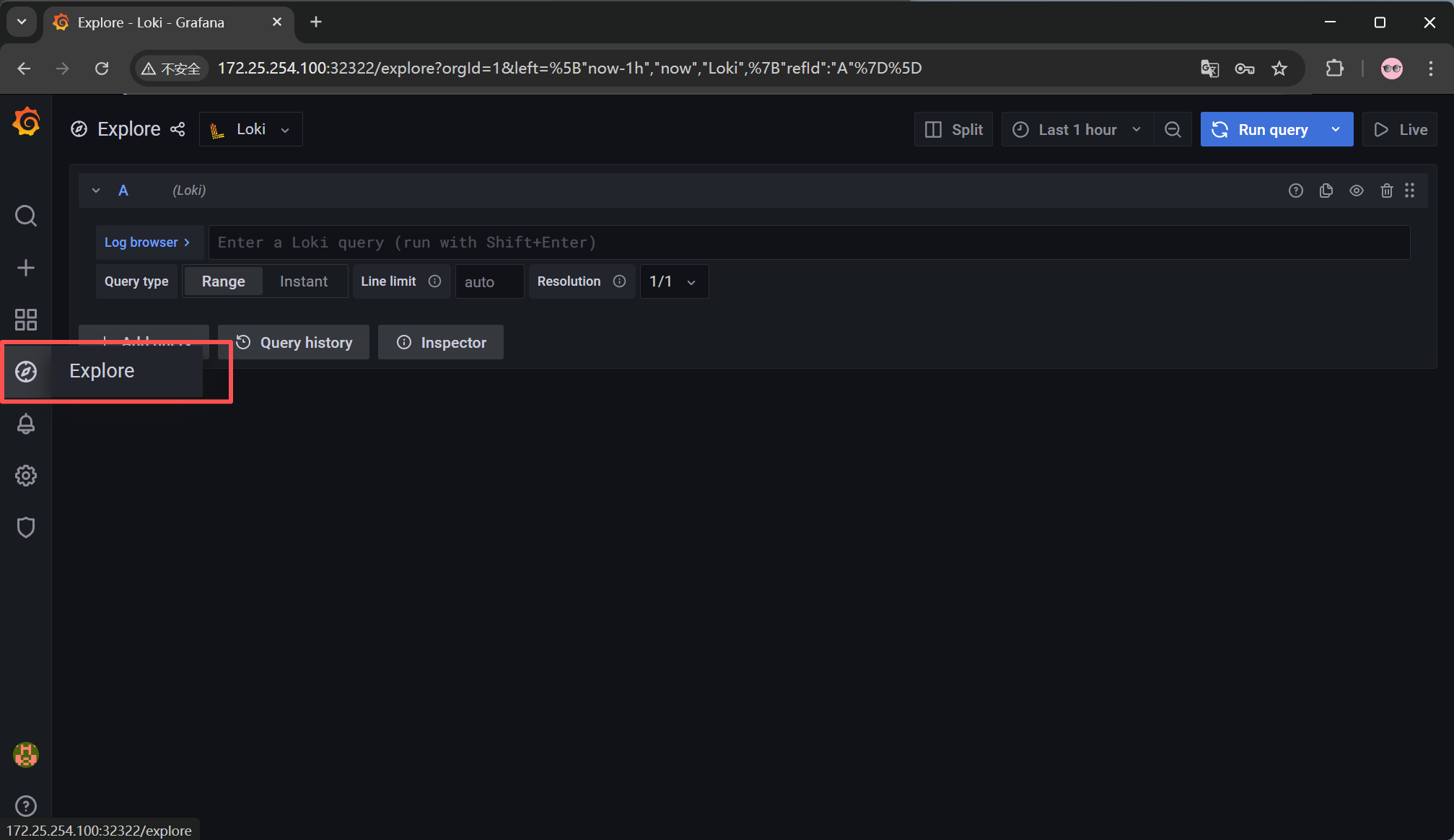

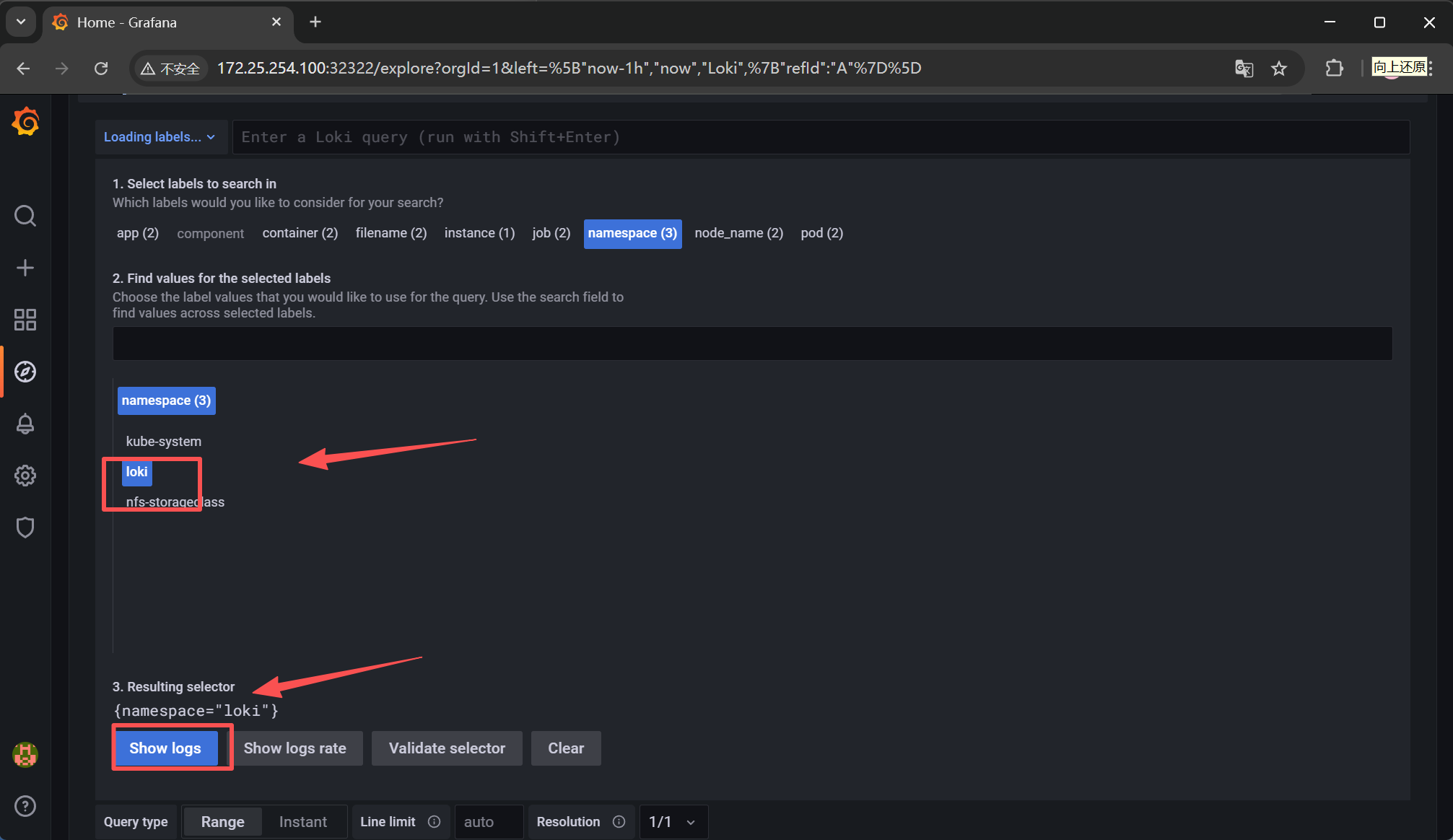

Click the compass icon, then the Explore link, to open the following page:

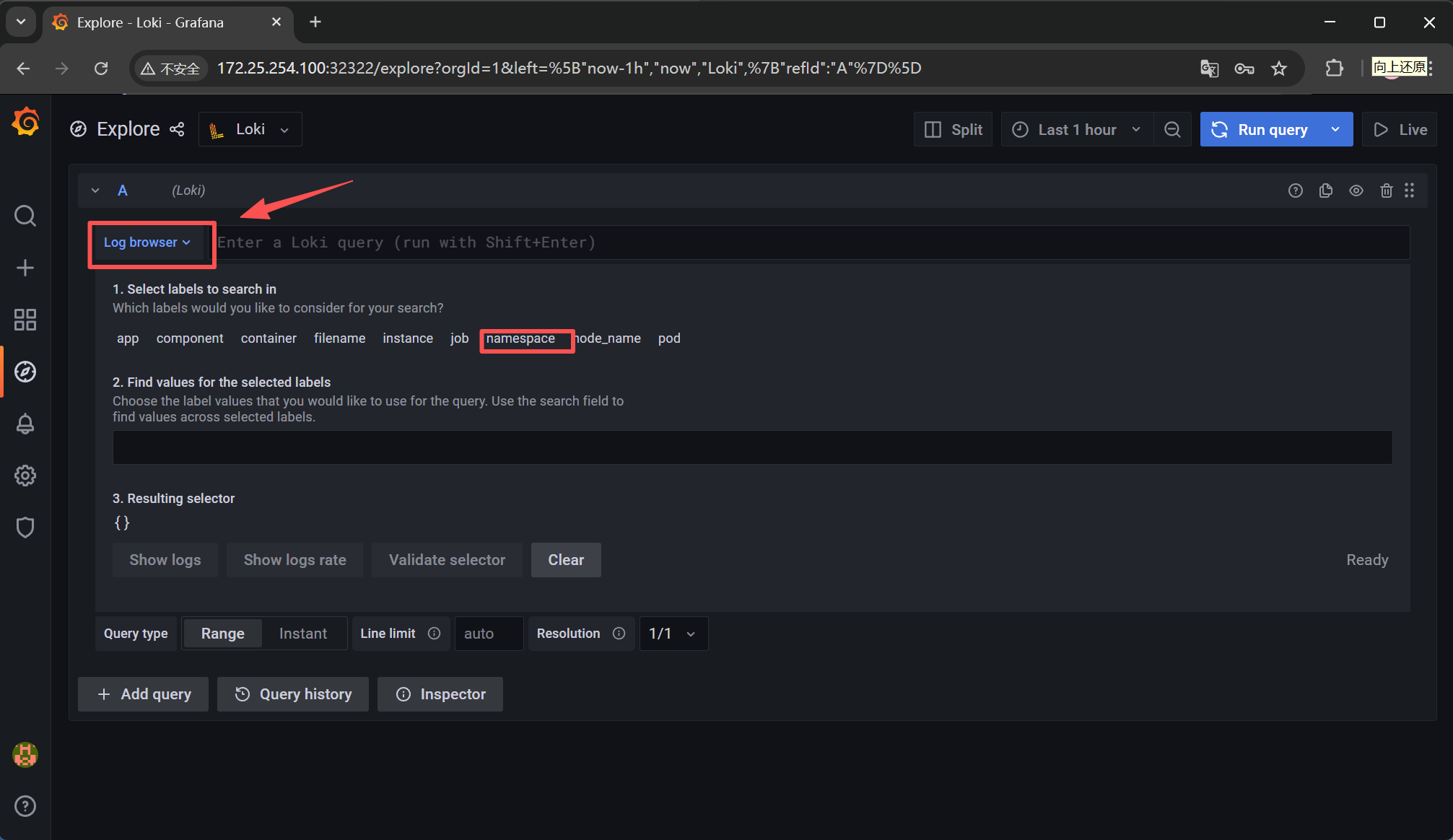

Then click the arrow shown in the figure and choose the namespace label

Next, choose loki and click the Show logs button

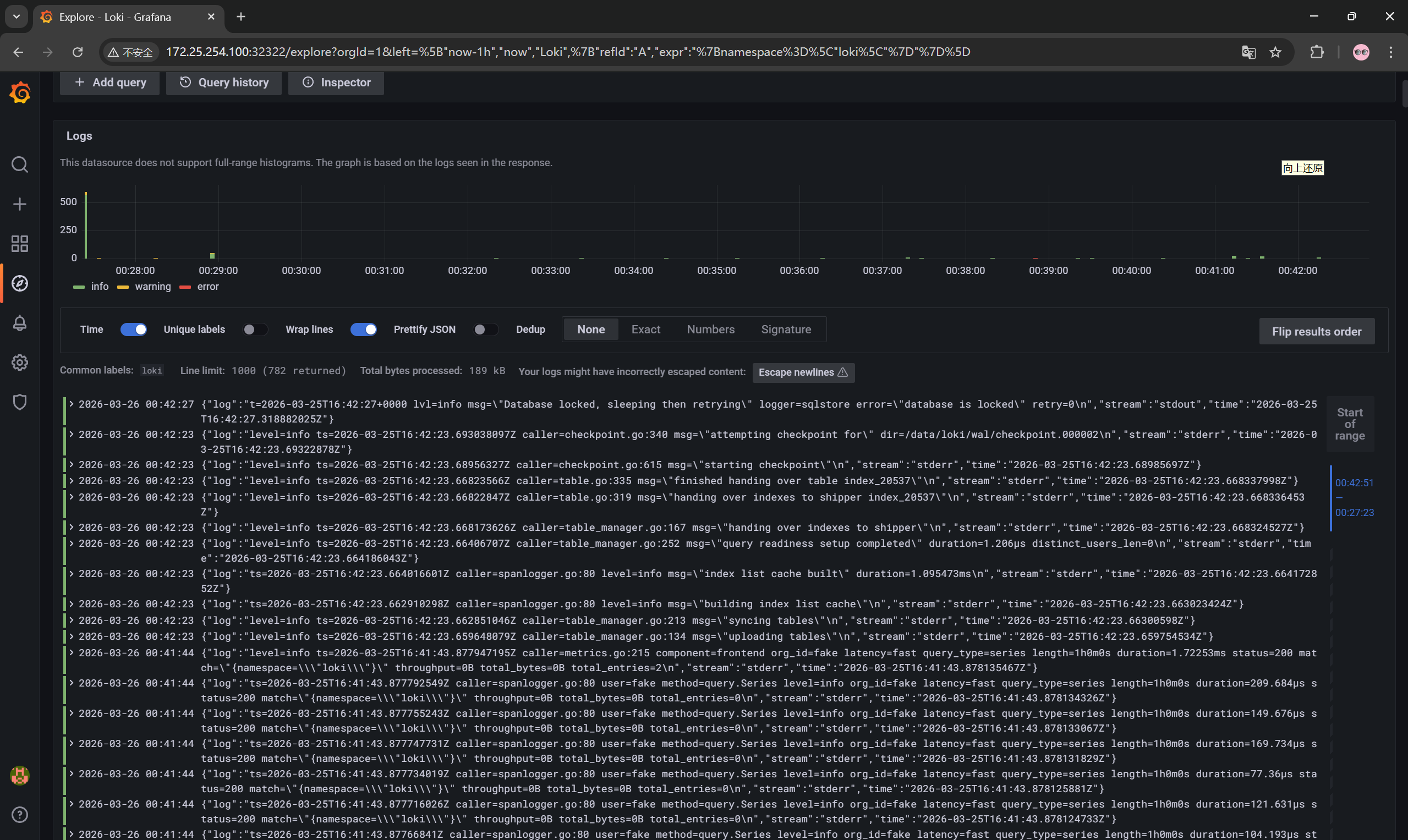

Scroll down to see the logs

Deploying Prometheus

Installing Prometheus

Create a working directory

[root@k8s-master ~]# mkdir prometheus

[root@k8s-master ~]# cd prometheus/

Add the Helm repository that hosts the project

[root@k8s-master prometheus]# helm repo add prometheus-community https://prometheus-community.github.io/helm-charts

Pull the chart from the repository

[root@k8s-master prometheus]# helm pull prometheus-community/kube-prometheus-stack

[root@k8s-master prometheus]# ls

kube-prometheus-stack-82.14.1.tgz

Extract the chart

[root@k8s-master prometheus]# tar zxf kube-prometheus-stack-82.14.1.tgz

[root@k8s-master prometheus]# ls

kube-prometheus-stack kube-prometheus-stack-82.14.1.tgz

[root@k8s-master prometheus]# cd kube-prometheus-stack/

[root@k8s-master kube-prometheus-stack]# ls

Chart.lock Chart.yaml README.md charts templates values.yaml

Edit the values file to give the components persistent storage

[root@k8s-master kube-prometheus-stack]# vim values.yaml

Search for: prometheus → prometheusSpec → storageSpec

```yaml
storageSpec:
  volumeClaimTemplate:
    spec:
      storageClassName: csi-cephfs-sc   # use the Ceph StorageClass
      accessModes: ["ReadWriteOnce"]
      resources:
        requests:
          storage: 10Gi
```

Search for: alertmanager → alertmanagerSpec → storage

```yaml
storage:
  volumeClaimTemplate:
    spec:
      storageClassName: csi-cephfs-sc   # same as above
      accessModes: ["ReadWriteOnce"]
      resources:
        requests:
          storage: 10Gi
```

Search for: grafana → persistence

```yaml
persistence:
  enabled: true
  type: sts
  storageClassName: csi-cephfs-sc
  accessModes:
    - ReadWriteOnce
  size: 20Gi
```

Create the namespace that will hold the resources

[root@k8s-master kube-prometheus-stack]# kubectl create namespace kube-prometheus-stack

Start the installation

[root@k8s-master kube-prometheus-stack]# helm -n kube-prometheus-stack install kube-prometheus-stack .

NAME: kube-prometheus-stack

LAST DEPLOYED: Thu Mar 26 13:12:01 2026

NAMESPACE: kube-prometheus-stack

STATUS: deployed #installation succeeded

REVISION: 1

DESCRIPTION: Install complete

TEST SUITE: None

NOTES:

......

Check that the Pods and Services are running

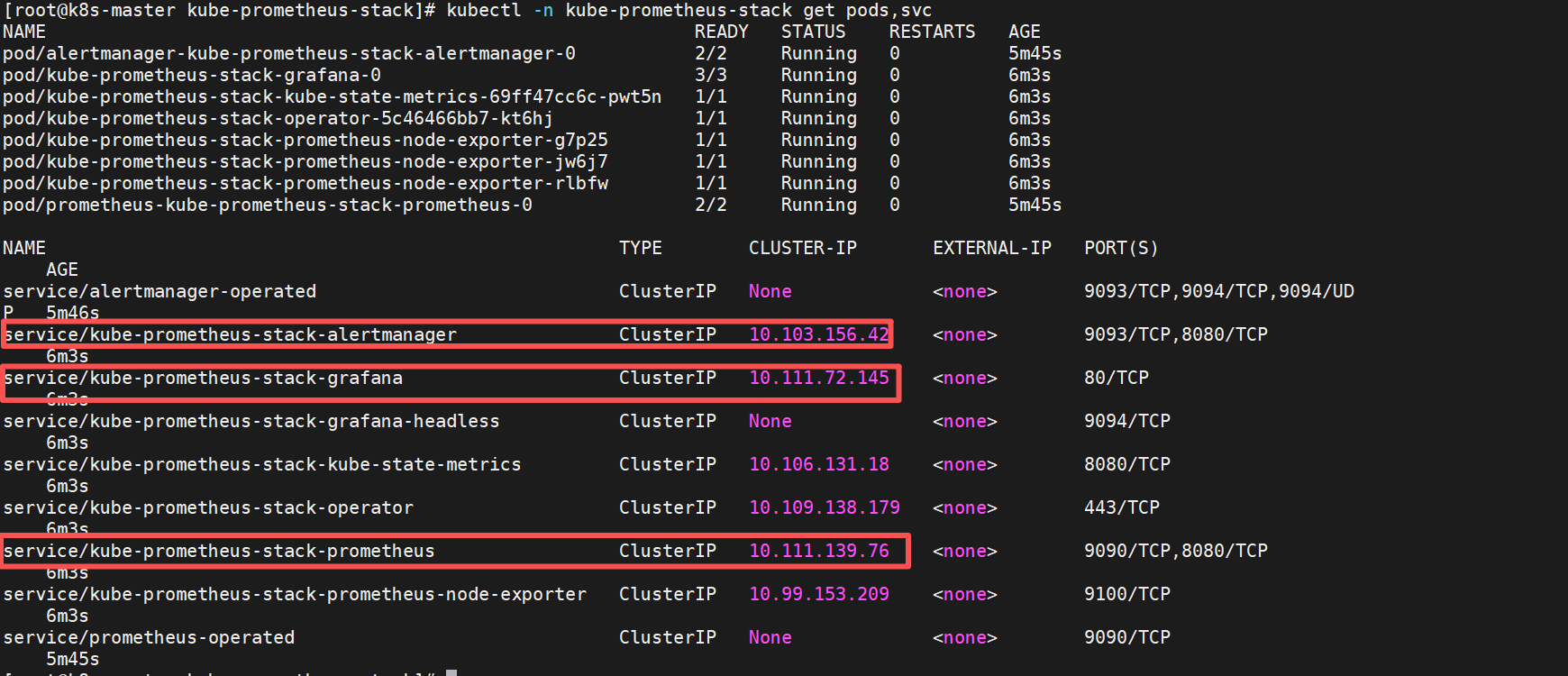

[root@k8s-master kube-prometheus-stack]# kubectl -n kube-prometheus-stack get pods,svc

Change the Services to type NodePort for access

#Change the type of the Grafana, Prometheus server and Alertmanager Services to NodePort so they can be reached over HTTP

[root@k8s-master kube-prometheus-stack]# kubectl -n kube-prometheus-stack edit svc kube-prometheus-stack-grafana

type: NodePort

[root@k8s-master kube-prometheus-stack]# kubectl -n kube-prometheus-stack edit svc kube-prometheus-stack-alertmanager

type: NodePort

[root@k8s-master kube-prometheus-stack]# kubectl -n kube-prometheus-stack edit svc kube-prometheus-stack-prometheus

type: NodePort
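The three interactive `kubectl edit` sessions can also be replaced with `kubectl patch`. This is a sketch; `expose_nodeport` is a hypothetical helper, and `KUBECTL` is an optional override for dry runs:

```bash
# Switch one or more Services in a namespace to type NodePort without an editor.
expose_nodeport() {
  local ns=$1 svc
  shift
  for svc in "$@"; do
    "${KUBECTL:-kubectl}" -n "$ns" patch svc "$svc" \
      --type merge -p '{"spec":{"type":"NodePort"}}'
  done
}

# Usage:
#   expose_nodeport kube-prometheus-stack \
#     kube-prometheus-stack-grafana \
#     kube-prometheus-stack-prometheus \
#     kube-prometheus-stack-alertmanager
#   kubectl -n kube-prometheus-stack get svc
```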

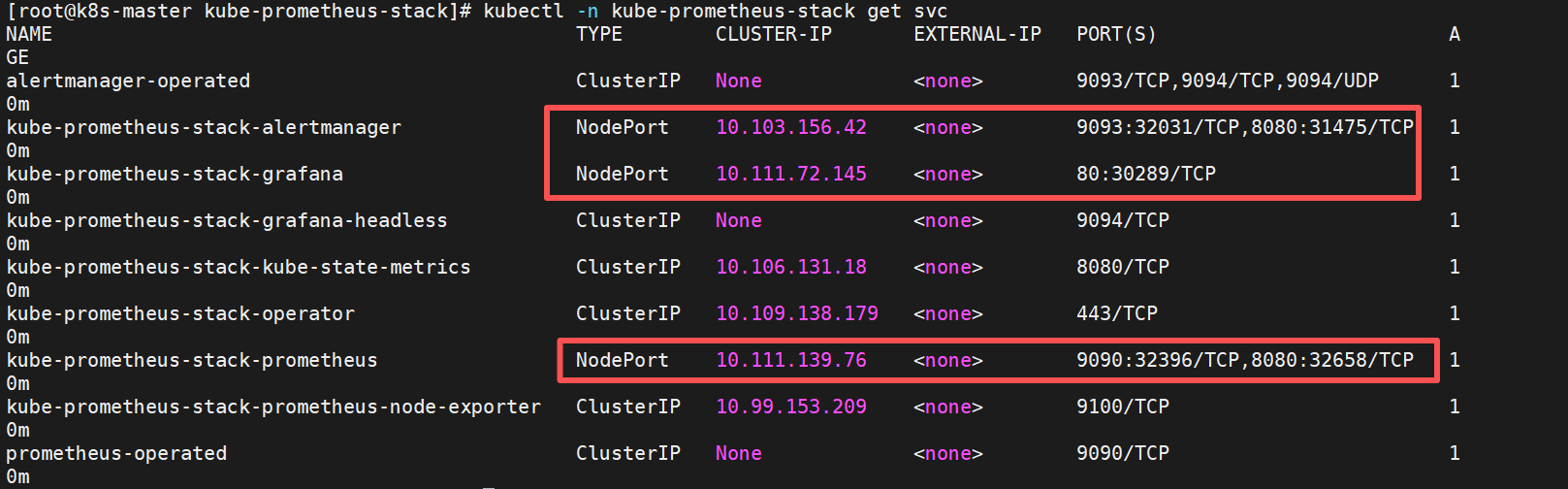

查看暴露的端口通过浏览器访问

Prometheus的使用

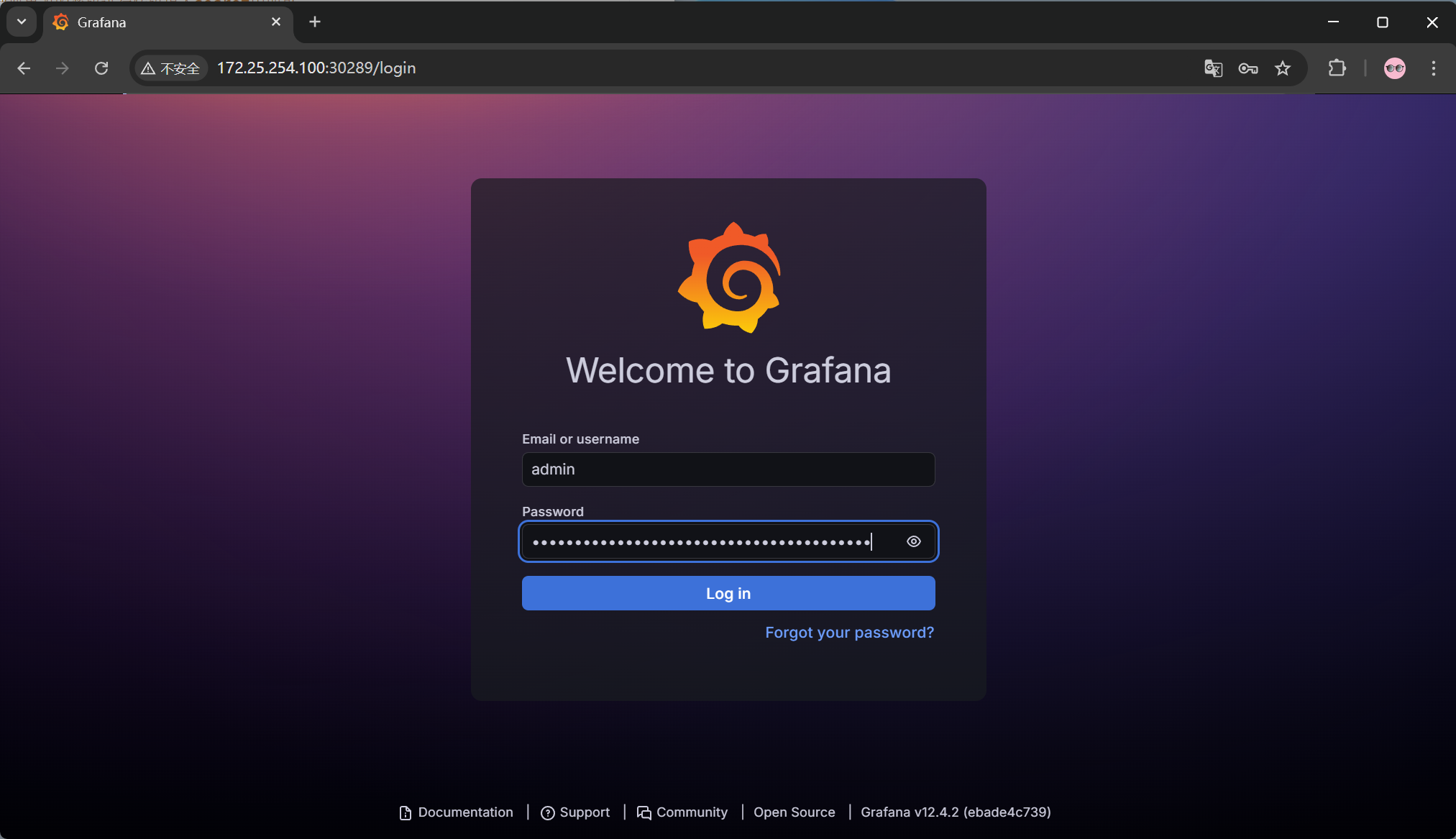

登录grafana,查看登录密码

#密码保存在这个secrets中导出yaml文件进行转码查看

[root@k8s-master kube-prometheus-stack]# kubectl -n kube-prometheus-stack get secrets kube-prometheus-stack-grafana -o yaml

```yaml
apiVersion: v1
data:
  admin-password: WU0xTEtVbm5FcEJuSjRPYTg4ZGI2UmpGOU0zbkxZb281RWZ5NEEycA==   # password
  admin-user: YWRtaW4=
  ldap-toml: ""
kind: Secret
......
```

#Decode the password

[root@k8s-master kube-prometheus-stack]# echo -n "WU0xTEtVbm5FcEJuSjRPYTg4ZGI2UmpGOU0zbkxZb281RWZ5NEEycA==" | base64 -d

YM1LKUnnEpBnJ4Oa88db6RjF9M3nLYoo5Efy4A2p

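#Likewise, decode admin-user to get the user name (usually admin)

#Grafana reads the admin password from this Secret only when it first initializes its database. With persistent storage enabled, change an existing password in the Grafana UI rather than by editing the Secret.

Log in to Grafana through the NodePort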
