SenseVoice.cpp sense-voice-frontend: Speech Feature Extraction for Recognition [AI Artificial Intelligence (70)], Eastern Fairy Alliance (东方仙盟)

sense-voice-frontend

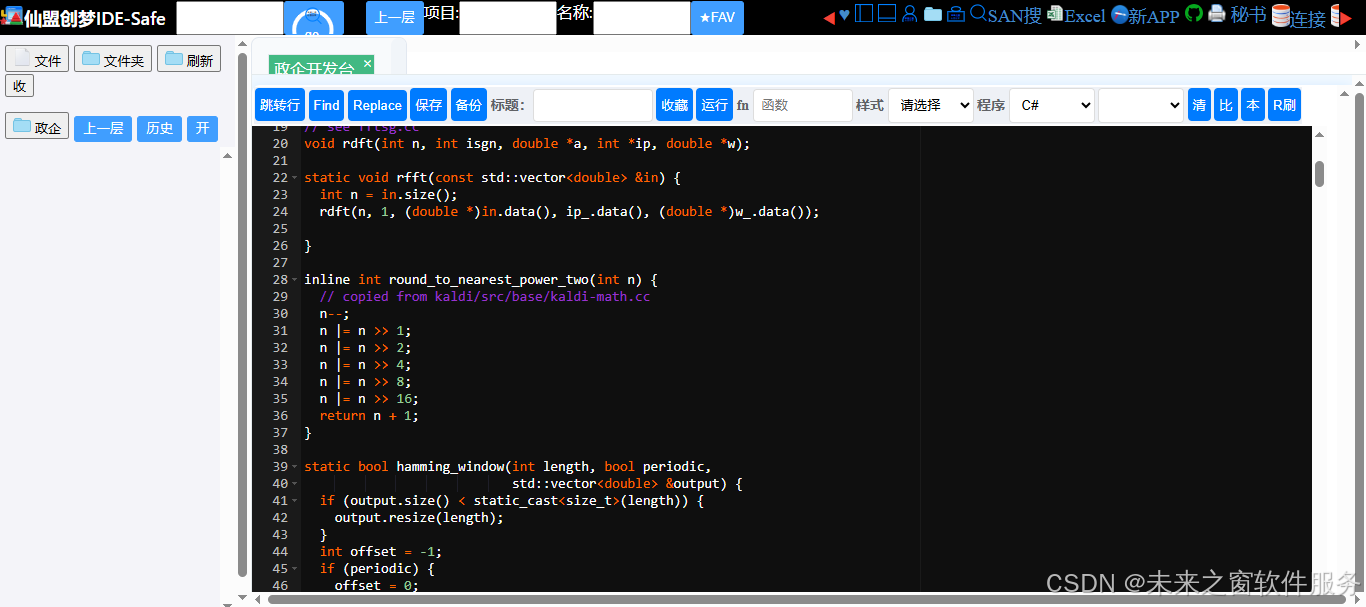

Core code

Full code

// Copyright 2024 lovemefan

// Created by lovemefan on 2023/10/3.

//

#include "sense-voice-frontend.h"

#include <algorithm>

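#include <cassert>

#include <cmath>

#include <cstdint>

#include <cstring>

#include <fstream>

#include <functional>

#include <iostream>

#include <vector>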

#include "ThreadPool.h"

#include "log-mel-filter-bank.h"

#define M_2PI 6.283185307179586476925286766559005

#define SIN_COS_N_COUNT 512

// In FFT, we frequently use sine and cosine operations with the same values.

// We can use precalculated values to speed up the process.

std::vector<int> ip_(2 + std::sqrt(SIN_COS_N_COUNT / 2));

std::vector<double> w_(SIN_COS_N_COUNT / 2);

// see fftsg.cc

void rdft(int n, int isgn, double *a, int *ip, double *w);

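// In-place real FFT. The tables ip_ and w_ are shared globals that rdft

// fills in on its first call, so concurrent first calls from several

// threads write to them at the same time.

static void rfft(std::vector<double> &in) {

int n = static_cast<int>(in.size());

rdft(n, 1, in.data(), ip_.data(), w_.data());

}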

inline int round_to_nearest_power_two(int n) {

// copied from kaldi/src/base/kaldi-math.cc

n--;

n |= n >> 1;

n |= n >> 2;

n |= n >> 4;

n |= n >> 8;

n |= n >> 16;

return n + 1;

}

static bool hamming_window(int length, bool periodic,

std::vector<double> &output) {

if (output.size() < static_cast<size_t>(length)) {

output.resize(length);

}

int offset = -1;

if (periodic) {

offset = 0;

}

for (int i = 0; i < length; i++) {

output[i] = 0.54 - 0.46 * std::cos((M_2PI * i) / (length + offset));

}

return true;

}

static void fbank_feature_worker_thread(int ith,

const std::vector<double> &hamming,

const std::vector<double> &samples,

int n_samples, int frame_size,

int frame_step, int n_threads,

sense_voice_feature &mel) {

// make sure n_fft == 1 + (sense_voice_N_FFT / 2), bin_0 to bin_nyquist

int i = ith;

std::vector<double> window;

const int padded_window_size = round_to_nearest_power_two(frame_size);

window.resize(padded_window_size);

// n_fft is the number of frames to process (not the FFT size)

int n_fft = std::min(n_samples / frame_step + 1, mel.n_len);

for (; i < n_fft; i += n_threads) {

const int offset = i * frame_step;

std::copy(samples.begin() + offset, samples.begin() + offset + frame_size,

window.begin());

{

// Zero the padded tail; uninitialized values may produce NaN on ARM CPUs.

for (int k = frame_size; k < padded_window_size; k++) {

window[k] = 0;

}

}

// remove dc offset

{

double sum = 0;

for (int32_t k = 0; k < frame_size; ++k) {

sum += window[k];

}

double mean = sum / frame_size;

for (int32_t k = 0; k < frame_size; ++k) {

window[k] -= mean;

}

}

// pre-emphasis

{

for (int32_t k = frame_size - 1; k > 0; --k) {

window[k] -= PREEMPH_COEFF * window[k - 1];

}

window[0] -= PREEMPH_COEFF * window[0];

}

// apply Hamming window

{

for (int j = 0; j < frame_size; j++) {

window[j] *= hamming[j];

}

}

// FFT

// window is input and output

rfft(window);

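// Power spectrum: squared modulus of each complex bin.

// Note: rdft packs the Nyquist real part into window[1], so bin 0 below

// mixes the DC and Nyquist terms.

// Using pow(fft_out[2 * j + 0], 2) + pow(fft_out[2 * j + 1], 2) here

// reportedly causes an inference quality problem.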

for (int j = 0; j < padded_window_size / 2; j++) {

window[j] = (window[2 * j + 0] * window[2 * j + 0] +

window[2 * j + 1] * window[2 * j + 1]);

}

// log-Mel filter bank energies aka: "fbank"

{

auto num_fft_bins = padded_window_size / 2;

int n_mel = mel.n_mel;

for (int j = 0; j < n_mel; j++) {

double sum = 0.0;

for (int k = 0; k < num_fft_bins; k++) {

sum += window[k] * LogMelFilterMelArray[j * num_fft_bins + k];

}

sum = log(sum > 1.19e-7 ? sum : 1.19e-7);

mel.data[i * n_mel + j] = static_cast<float>(sum);

}

}

}

}

bool fbank_lfr_cmvn_feature(const std::vector<double> &samples,

const int n_samples, const int frame_size,

const int frame_step, const int n_feats,

const int n_threads, const bool debug,

sense_voice_cmvn &cmvn, sense_voice_feature &feats) {

// const int64_t t_start_us = ggml_time_us();

const int32_t n_frames_per_ms = SENSE_VOICE_SAMPLE_RATE * 0.001f;

feats.n_mel = n_feats;

feats.n_len = 1 + ((n_samples - frame_size * n_frames_per_ms) /

(frame_step * n_frames_per_ms));

feats.data.resize(feats.n_mel * feats.n_len);

std::vector<double> hamming;

hamming_window(frame_size * n_frames_per_ms, true, hamming);

{

if (n_threads > 1) {

ThreadPool pool(n_threads);

for (int iw = 0; iw < n_threads - 1; ++iw) {

pool.enqueue(fbank_feature_worker_thread, iw + 1, std::cref(hamming),

std::cref(samples), n_samples, frame_size * n_frames_per_ms,

frame_step * n_frames_per_ms, n_threads, std::ref(feats));

}

}

// main thread

fbank_feature_worker_thread(0, hamming, samples, n_samples,

frame_size * n_frames_per_ms,

frame_step * n_frames_per_ms, n_threads, feats);

}

if (debug) {

auto &mel = feats.data;

std::ofstream outFile("fbank_lfr_cmvn_feature.json");

outFile << "[";

for (size_t i = 0; i < mel.size(); i++) {

if (i > 0) outFile << ", ";

outFile << mel[i];

}

outFile << "]";

outFile.close();

}

std::vector<std::vector<float>> out_feats;

// Apply LFR: merge lfr_m frames into one, advancing lfr_n frames per window

// ref:

// https://github.com/alibaba-damo-academy/FunASR/blob/main/runtime/onnxruntime/src/paraformer.cpp#L409-L440

int T = feats.n_len;

int lfr_m = feats.lfr_m; // 7

int lfr_n = feats.lfr_n; // 6

int T_lrf = ceil(1.0 * T / feats.lfr_n);

int left_pad = (feats.lfr_m - 1) / 2;

int left_pad_offset = (lfr_m - left_pad) * feats.n_mel;

// Merge lfr_m frames into one, advancing lfr_n frames per window; T now includes the left padding

T = T + (lfr_m - 1) / 2;

std::vector<float> p;

for (int i = 0; i < T_lrf; i++) {

// the first frames need left padding

if (i == 0) {

// left padding

for (int j = 0; j < left_pad; j++) {

p.insert(p.end(), feats.data.begin(), feats.data.begin() + feats.n_mel);

}

p.insert(p.end(), feats.data.begin(), feats.data.begin() + left_pad_offset);

out_feats.push_back(p);

p.clear();

} else {

if (lfr_m <= T - i * lfr_n) {

p.insert(p.end(), feats.data.begin() + (i * lfr_n - left_pad) * feats.n_mel,

feats.data.begin() + (i * lfr_n - left_pad + lfr_m) * feats.n_mel);

out_feats.push_back(p);

p.clear();

} else {

// Last window: copy the remaining frames once, then repeat the last

// frame until the window holds lfr_m frames.

int num_padding = lfr_m - (T - i * lfr_n);

p.insert(p.end(),

feats.data.begin() + (i * lfr_n - left_pad) * feats.n_mel,

feats.data.end());

for (int j = 0; j < num_padding; j++) {

p.insert(p.end(), feats.data.end() - feats.n_mel, feats.data.end());

}

out_feats.push_back(p);

p.clear();

}

}

}

feats.data.resize(T_lrf * feats.lfr_m * feats.n_mel);

// Apply CMVN: (x + cmvn_means[j]) * cmvn_vars[j]

for (int i = 0; i < T_lrf; i++) {

for (int j = 0; j < feats.lfr_m * feats.n_mel; j++) {

feats.data[i * feats.lfr_m * feats.n_mel + j] = (out_feats[i][j] + cmvn.cmvn_means[j]) * cmvn.cmvn_vars[j];

}

}

return true;

}

bool load_wav_file(const char *filename, int32_t *sampling_rate,

std::vector<double> &data) {

struct WaveHeader header {};

std::ifstream is(filename, std::ifstream::binary);

is.read(reinterpret_cast<char *>(&header), sizeof(header));

if (!is) {

std::cout << "Failed to read " << filename << std::endl;

return false;

}

if (!header.Validate()) {

return false;

}

header.SeekToDataChunk(is);

if (!is) {

return false;

}

*sampling_rate = header.sample_rate;

// header.subchunk2_size contains the number of bytes in the data.

// As we assume each sample contains two bytes, so it is divided by 2 here

auto speech_len = header.subchunk2_size / 2;

data.resize(speech_len);

// A std::vector owns the buffer, so it is released on every return path.

std::vector<int16_t> speech_buff(speech_len);

is.read(reinterpret_cast<char *>(speech_buff.data()),

static_cast<std::streamsize>(speech_buff.size() * sizeof(int16_t)));

if (!is) {

std::cout << "Failed to read " << filename << std::endl;

return false;

}

// Samples stay at int16 scale (they are not divided by 32768).

for (size_t i = 0; i < speech_buff.size(); ++i) {

data[i] = static_cast<double>(speech_buff[i]);

}

return true;

}

// Float version of fbank_feature_worker_thread

static void fbank_feature_worker_thread_float(int ith,

const std::vector<double> &hamming,

const std::vector<float> &samples,

int n_samples, int frame_size,

int frame_step, int n_threads,

sense_voice_feature &mel) {

// make sure n_fft == 1 + (sense_voice_N_FFT / 2), bin_0 to bin_nyquist

int i = ith;

std::vector<double> window;

const int padded_window_size = round_to_nearest_power_two(frame_size);

window.resize(padded_window_size);

// n_fft is the number of frames to process (not the FFT size)

int n_fft = std::min(n_samples / frame_step + 1, mel.n_len);

for (; i < n_fft; i += n_threads) {

const int offset = i * frame_step;

// Convert float to double for processing

for (int j = 0; j < frame_size; j++) {

window[j] = static_cast<double>(samples[offset + j]);

}

{

// Zero the padded tail; uninitialized values may produce NaN on ARM CPUs.

for (int k = frame_size; k < padded_window_size; k++) {

window[k] = 0;

}

}

// remove dc offset

{

double sum = 0;

for (int32_t k = 0; k < frame_size; ++k) {

sum += window[k];

}

double mean = sum / frame_size;

for (int32_t k = 0; k < frame_size; ++k) {

window[k] -= mean;

}

}

// pre-emphasis

{

for (int32_t k = frame_size - 1; k > 0; --k) {

window[k] -= PREEMPH_COEFF * window[k - 1];

}

window[0] -= PREEMPH_COEFF * window[0];

}

// apply Hamming window

{

for (int j = 0; j < frame_size; j++) {

window[j] *= hamming[j];

}

}

// FFT

// window is input and output

rfft(window);

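// Power spectrum: squared modulus of each complex bin.

// Note: rdft packs the Nyquist real part into window[1], so bin 0 below

// mixes the DC and Nyquist terms.

// Using pow(fft_out[2 * j + 0], 2) + pow(fft_out[2 * j + 1], 2) here

// reportedly causes an inference quality problem.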

for (int j = 0; j < padded_window_size / 2; j++) {

window[j] = (window[2 * j + 0] * window[2 * j + 0] +

window[2 * j + 1] * window[2 * j + 1]);

}

// log-Mel filter bank energies aka: "fbank"

{

auto num_fft_bins = padded_window_size / 2;

int n_mel = mel.n_mel;

for (int j = 0; j < n_mel; j++) {

double sum = 0.0;

for (int k = 0; k < num_fft_bins; k++) {

sum += window[k] * LogMelFilterMelArray[j * num_fft_bins + k];

}

sum = log(sum > 1.19e-7 ? sum : 1.19e-7);

mel.data[i * n_mel + j] = static_cast<float>(sum);

}

}

}

}

// Float version of fbank_lfr_cmvn_feature

bool fbank_lfr_cmvn_feature(const std::vector<float> &samples,

const int n_samples, const int frame_size,

const int frame_step, const int n_feats,

const int n_threads, const bool debug,

sense_voice_cmvn &cmvn, sense_voice_feature &feats) {

// const int64_t t_start_us = ggml_time_us();

const int32_t n_frames_per_ms = SENSE_VOICE_SAMPLE_RATE * 0.001f;

feats.n_mel = n_feats;

feats.n_len = 1 + ((n_samples - frame_size * n_frames_per_ms) /

(frame_step * n_frames_per_ms));

feats.data.resize(feats.n_mel * feats.n_len);

std::vector<double> hamming;

hamming_window(frame_size * n_frames_per_ms, true, hamming);

{

if (n_threads > 1) {

ThreadPool pool(n_threads);

for (int iw = 0; iw < n_threads - 1; ++iw) {

pool.enqueue(fbank_feature_worker_thread_float, iw + 1, std::cref(hamming),

std::cref(samples), n_samples, frame_size * n_frames_per_ms,

frame_step * n_frames_per_ms, n_threads, std::ref(feats));

}

}

// main thread

fbank_feature_worker_thread_float(0, hamming, samples, n_samples,

frame_size * n_frames_per_ms,

frame_step * n_frames_per_ms, n_threads, feats);

}

if (debug) {

auto &mel = feats.data;

std::ofstream outFile("fbank_lfr_cmvn_feature_float.json");

outFile << "[";

for (size_t i = 0; i < mel.size(); i++) {

if (i > 0) outFile << ", ";

outFile << mel[i];

}

outFile << "]";

outFile.close();

}

std::vector<std::vector<float>> out_feats;

// Apply LFR: merge lfr_m frames into one, advancing lfr_n frames per window

// ref:

// https://github.com/alibaba-damo-academy/FunASR/blob/main/runtime/onnxruntime/src/paraformer.cpp#L409-L440

int T = feats.n_len;

int lfr_m = feats.lfr_m; // 7

int lfr_n = feats.lfr_n; // 6

int T_lrf = ceil(1.0 * T / feats.lfr_n);

int left_pad = (feats.lfr_m - 1) / 2;

int left_pad_offset = (lfr_m - left_pad) * feats.n_mel;

// Merge lfr_m frames into one, advancing lfr_n frames per window; T now includes the left padding

T = T + (lfr_m - 1) / 2;

std::vector<float> p;

for (int i = 0; i < T_lrf; i++) {

// the first frames need left padding

if (i == 0) {

// left padding

for (int j = 0; j < left_pad; j++) {

p.insert(p.end(), feats.data.begin(), feats.data.begin() + feats.n_mel);

}

p.insert(p.end(), feats.data.begin(), feats.data.begin() + left_pad_offset);

out_feats.push_back(p);

p.clear();

} else {

if (lfr_m <= T - i * lfr_n) {

p.insert(p.end(), feats.data.begin() + (i * lfr_n - left_pad) * feats.n_mel,

feats.data.begin() + (i * lfr_n - left_pad + lfr_m) * feats.n_mel);

out_feats.push_back(p);

p.clear();

} else {

// Last window: copy the remaining frames once, then repeat the last

// frame until the window holds lfr_m frames.

int num_padding = lfr_m - (T - i * lfr_n);

p.insert(p.end(),

feats.data.begin() + (i * lfr_n - left_pad) * feats.n_mel,

feats.data.end());

for (int j = 0; j < num_padding; j++) {

p.insert(p.end(), feats.data.end() - feats.n_mel, feats.data.end());

}

out_feats.push_back(p);

p.clear();

}

}

}

feats.data.resize(T_lrf * feats.lfr_m * feats.n_mel);

// Apply CMVN: (x + cmvn_means[j]) * cmvn_vars[j]

for (int i = 0; i < T_lrf; i++) {

for (int j = 0; j < feats.lfr_m * feats.n_mel; j++) {

feats.data[i * feats.lfr_m * feats.n_mel + j] = (out_feats[i][j] + cmvn.cmvn_means[j]) * cmvn.cmvn_vars[j];

}

}

return true;

}

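Code explanation

This code does one job: it converts a raw speech waveform (a WAV file) into features the neural network can consume (Fbank + LFR + CMVN).

It is the first step of speech recognition; the model cannot work on raw audio without it.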

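An everyday analogy

- Raw audio = a jumble of sound waves

- This code = processes, filters, and arranges the sound into a standard format

- Output = a log-Mel spectrogram (a matrix of numbers)

- Passed to the model = the encoder turns it into text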

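Core functionality (the short version)

It does four main things:

1. Read the WAV audio file

- Open the .wav file

- Read the sample rate and the audio data

- Convert the 16-bit integer samples to floating point (the values are not rescaled, since scale is 1.0)

Corresponding function: load_wav_file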
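
The sample conversion in step 1 can be sketched in isolation. This is a minimal illustration, not the project's code: the helper names pcm16_to_double and pcm16_sample_count are hypothetical, and it assumes 16-bit mono PCM, as load_wav_file does when it divides the data-chunk size by 2.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: number of 16-bit samples in a data chunk of
// `data_bytes` bytes (a trailing odd byte is ignored).
std::size_t pcm16_sample_count(std::uint32_t data_bytes) {
  return data_bytes / 2;
}

// Hypothetical helper: convert 16-bit PCM to double without rescaling,
// so values stay in [-32768, 32767] as with scale = 1.0 in the listing.
std::vector<double> pcm16_to_double(const std::vector<std::int16_t> &pcm) {
  std::vector<double> out(pcm.size());
  for (std::size_t i = 0; i < pcm.size(); ++i) {
    out[i] = static_cast<double>(pcm[i]);
  }
  return out;
}
```

Keeping the int16 range instead of dividing by 32768 only shifts the log-Mel features by a constant, so it has to match whatever scale the CMVN statistics were computed with.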

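2. Framing + Hamming window

- Cut the long audio into short frames (25 ms each); the framing itself happens inside fbank_feature_worker_thread

- Taper each frame with a window so its edges fade out, which reduces spectral leakage in the FFT

Corresponding function: hamming_window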
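
To make the framing arithmetic concrete, here is a small sketch of the frame count and the window formula. The names num_frames and make_hamming are hypothetical; the frame-count formula follows feats.n_len in the listing, and the 400/160 sample sizes in the note below assume 25 ms frames with a 10 ms step at 16 kHz, which the post does not state explicitly.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Number of complete frames: 1 + (n_samples - frame_size) / frame_step,
// or 0 when the signal is shorter than one frame.
int num_frames(int n_samples, int frame_size, int frame_step) {
  if (n_samples < frame_size) return 0;
  return 1 + (n_samples - frame_size) / frame_step;
}

// Hamming window w[i] = 0.54 - 0.46 * cos(2*pi*i / denom), where denom is
// `length` for the periodic form and `length - 1` for the symmetric form.
std::vector<double> make_hamming(int length, bool periodic) {
  assert(length > 1);
  const double two_pi = 6.283185307179586476925286766559005;
  const int denom = periodic ? length : length - 1;
  std::vector<double> w(length);
  for (int i = 0; i < length; ++i) {
    w[i] = 0.54 - 0.46 * std::cos(two_pi * i / denom);
  }
  return w;
}
```

Under those assumed sizes, one second of 16 kHz audio gives num_frames(16000, 400, 160) == 98. The listing calls hamming_window with periodic set to true.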

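3. FFT + Mel filtering (the core step)

- Transform each frame from the time domain to the frequency domain

- Apply the Mel filter bank to extract features on a scale that matches human hearing

- Take the logarithm to get the log-Mel Fbank features

Corresponding function: fbank_feature_worker_thread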
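
The last part of step 3, going from a power spectrum to log-Mel energies, is just a matrix-vector product followed by a floored logarithm. The sketch below assumes a row-major filter matrix like LogMelFilterMelArray in the listing; the function name log_mel and the toy filter values in the note are illustrative only.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Apply a row-major [n_mel x n_bins] filter matrix to a power spectrum and
// take the natural log, flooring the energy at 1.19e-7 as the listing does.
std::vector<float> log_mel(const std::vector<double> &power,
                           const std::vector<double> &filters, int n_mel) {
  const int n_bins = static_cast<int>(power.size());
  assert(static_cast<int>(filters.size()) == n_mel * n_bins);
  std::vector<float> out(n_mel);
  for (int j = 0; j < n_mel; ++j) {
    double sum = 0.0;
    for (int k = 0; k < n_bins; ++k) {
      sum += power[k] * filters[j * n_bins + k];
    }
    out[j] = static_cast<float>(std::log(sum > 1.19e-7 ? sum : 1.19e-7));
  }
  return out;
}
```

With power = {1, 2, 3, 4} and a two-row filter {1, 1, 0, 0, 0, 0, 0, 0}, the first output is log(3) and the second hits the floor, log(1.19e-7). The floor keeps silent frames from producing negative infinity.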

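4. LFR stacking + CMVN normalization

- LFR (low frame rate): stack lfr_m = 7 neighboring frames into one and advance lfr_n = 6 frames at a time, which lowers the frame rate

- CMVN: normalize every feature dimension with precomputed statistics, which makes the model more stable

Corresponding function: fbank_lfr_cmvn_feature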
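
Step 4 is easier to follow on a tiny input. The sketch below is not the listing's implementation: it stores one vector per frame and clamps indices instead of inserting padding explicitly, which is an assumed simplification that yields the same "repeat the first frame on the left, the last frame on the right" behavior. The names Frame, apply_lfr, and apply_cmvn are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

using Frame = std::vector<float>;

// LFR stacking: output frame i concatenates input frames
// [i*n - left_pad, i*n - left_pad + m), with indices clamped to [0, T-1].
std::vector<Frame> apply_lfr(const std::vector<Frame> &in, int m, int n) {
  std::vector<Frame> out;
  if (in.empty()) return out;
  const int T = static_cast<int>(in.size());
  const int left_pad = (m - 1) / 2;
  const int T_lfr = (T + n - 1) / n;  // ceil(T / n)
  for (int i = 0; i < T_lfr; ++i) {
    Frame stacked;
    for (int k = 0; k < m; ++k) {
      int idx = i * n - left_pad + k;
      if (idx < 0) idx = 0;
      if (idx > T - 1) idx = T - 1;
      stacked.insert(stacked.end(), in[idx].begin(), in[idx].end());
    }
    out.push_back(stacked);
  }
  return out;
}

// CMVN in the same form as the listing: (x + shift[j]) * scale[j].
void apply_cmvn(std::vector<Frame> &feats, const Frame &shift,
                const Frame &scale) {
  for (Frame &f : feats) {
    assert(f.size() == shift.size() && f.size() == scale.size());
    for (std::size_t j = 0; j < f.size(); ++j) {
      f[j] = (f[j] + shift[j]) * scale[j];
    }
  }
}
```

For eight one-dimensional frames with values 0 to 7, m = 7 and n = 6 produce two stacked frames: {0, 0, 0, 0, 1, 2, 3} and {3, 4, 5, 6, 7, 7, 7}. Because the shift is added rather than subtracted, the stored statistics are presumably a negated mean and an inverse standard deviation.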

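The full pipeline (the key part)

- Read the WAV → get the raw waveform

- Framing → cut into 25 ms frames

- DC removal → subtract each frame's mean

- Pre-emphasis → boost the high frequencies

- Windowing → taper the frame edges

- FFT → convert to the frequency domain

- Mel filtering → extract features on a perceptual scale

- Logarithm → get the fbank features

- LFR frame stacking → lower the frame rate

- CMVN normalization → standardize

- Output → passed to the model for recognition into text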
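
The two per-frame steps that run before windowing, DC removal and pre-emphasis, can be sketched as one small function. The name remove_dc_and_preemphasize is hypothetical, and the coefficient is passed in because the value of PREEMPH_COEFF is not shown in the post (0.97 in the note below is an assumed, commonly used value).

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Subtract the frame mean, then apply pre-emphasis in place. The loop runs
// backwards so each sample still sees its unmodified predecessor.
void remove_dc_and_preemphasize(std::vector<double> &frame, double coeff) {
  if (frame.empty()) return;
  double sum = 0.0;
  for (double v : frame) sum += v;
  const double mean = sum / static_cast<double>(frame.size());
  for (double &v : frame) v -= mean;
  for (std::size_t k = frame.size() - 1; k > 0; --k) {
    frame[k] -= coeff * frame[k - 1];
  }
  // The first sample has no predecessor, so it is scaled against itself,
  // matching window[0] -= PREEMPH_COEFF * window[0] in the listing.
  frame[0] -= coeff * frame[0];
}
```

For the frame {1, 2, 3} with coeff = 0.97, the mean-removed frame is {-1, 0, 1} and the result is approximately {-0.03, 0.97, 1.0}.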

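The important functions at a glance

1. load_wav_file( )

Reads a WAV file and converts it to floating-point audio data.

2. hamming_window( )

Generates the Hamming window that tapers each audio frame.

3. rfft( )

Fast Fourier transform: waveform → frequency spectrum.

4. fbank_feature_worker_thread( )

The core worker: each thread handles every n_threads-th frame, so the Mel features are computed in parallel.

5. fbank_lfr_cmvn_feature( )

The top-level interface: audio samples in → model-ready features out.

6. Both double and float versions are supported

fbank_lfr_cmvn_feature is overloaded for std::vector<double> and std::vector<float>, so callers can pass either sample type.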
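
The double and float worker functions differ only in how a frame is copied into the working buffer. As a possible alternative to maintaining two copies, that one step could be a template; the sketch below shows the idea with a hypothetical helper load_frame and is not taken from the project.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Copy one frame of float or double samples into a zero-padded double
// buffer of `padded_size` elements (the FFT size).
template <typename Sample>
std::vector<double> load_frame(const std::vector<Sample> &samples,
                               std::size_t offset, std::size_t frame_size,
                               std::size_t padded_size) {
  assert(frame_size <= padded_size);
  assert(offset + frame_size <= samples.size());
  std::vector<double> window(padded_size, 0.0);
  for (std::size_t j = 0; j < frame_size; ++j) {
    window[j] = static_cast<double>(samples[offset + j]);
  }
  return window;
}
```

Calling load_frame on a std::vector<float> and on a std::vector<double> with the same values returns identical buffers, so everything after this step (DC removal, pre-emphasis, FFT, Mel filtering) could be shared.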

Where it sits in the overall project

WAV audio file

↓

[This code: front-end preprocessing]

↓

Log-Mel + LFR + CMVN features

↓

Encoder (sense-voice-encoder)

↓

Decoder

↓

Output text

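Bottom line

This is the "audio processing pipeline" of speech recognition:

- Input: sound

- Output: numeric features the model can recognize

- Role: the indispensable first step

- Complexity: the most classic pipeline in signal processing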

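Everyone is a creator; only by creating together can we grow together

Everyone is both a user and a creator: a consumer of the digital world and, even more, a producer and sharer of value. In the age of intelligence, going it alone is a thing of the past. Only open connection, shared creativity, and shared benefit can gather individual value into the strength of an ecosystem, bring technology and creativity together, and let the platform and its partners grow quickly and succeed together.

Permanent revenue sharing for original work, onward to the stars

Co-creating original ideas and sharing revenue permanently is a core principle that Eastern Fairy Alliance (东方仙盟) has always upheld. We believe every piece of original thinking deserves respect and reward. Permanent revenue sharing anchors that commitment and lets creators benefit from their work over the long term, as we move forward with our many partners toward a future where silicon-based life and digital intelligence merge, and build a digital civilization that spans eras.

Eastern Fairy Alliance: embracing open knowledge, building a new digital ecosystem

Amid globalization and digitalization, Eastern Fairy Alliance has always held to open collaboration and knowledge sharing, and actively embraces open-source technology and open standards. We believe that only by breaking down technical barriers and pooling global expertise can the industry develop sustainably.

Open source for small and medium-sized merchants: by open-sourcing core capabilities such as front-end anomaly detection and cross-system data interconnection, Eastern Fairy Alliance offers small and medium-sized merchants worldwide low-cost, highly reliable technical solutions, so that more businesses can share equally in the benefits of digital transformation.

Building industry standards together: we take part in international technical communities and work with developers and partners worldwide on open protocols and technical specifications, promoting interoperability across cross-border retail, cultural tourism, catering, and other sectors, and building a fairer and more efficient digital ecosystem.

Knowledge for all: through open-source communities, technical documentation, and training, Eastern Fairy Alliance works to turn frontier technology into practical industry applications, empower partners worldwide, cultivate innovative talent together, and promote inclusive growth of the digital economy.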

Axue's view on technology

In the tide of technological progress, we should take an active part in sharing technology: not content to be beneficiaries, but stepping up as contributors. Whether it is sharing code, writing technical blog posts, or helping maintain and improve open-source projects, every small act can carry great power to move technology forward. Eastern Fairy Alliance is a place where that effort comes together; here we explore silicon-based life side by side and do our part for technological progress.
