【端侧部署yolo系列】yolov5_seg部署至全志开发板

注:awnpu_model_zoo\docs里有详细的开发文档以及参考指南

本文是根据《NPU开发环境部署参考指南》,部署PC的ubuntu环境,使用Docker镜像环境为例进行说明。

如果想对部署流程进行更加详细的了解,可以参考《NPU_模型部署_开发指南》

重要说明:在awnpu_model_zoo内没有的模型,可参考awnpu_model_zoo\examples里面对应系列的模型进行修改部署,需要自行编写对应模型cpp前后处理代码以及修改相应的配置文件(如model_config.h、CMakeLists.txt)。如果遇到模型导出量化失败的情况,需要对模型进行相应的裁剪、或者采用不同的量化方式等。本文的yolov5_seg已经在板端经过了推理验证。

环境配置

关于参考之前部署yolox的文章【端侧部署yolo系列】yolox部署至全志开发板T736![]() https://blog.csdn.net/troyteng/article/details/155444386?spm=1011.2124.3001.6209

https://blog.csdn.net/troyteng/article/details/155444386?spm=1011.2124.3001.6209

下载镜像文件和AWNPU_Model_Zoo,创建自己的容器。

模型准备

原始模型下载链接请参见yolov5s-seg.pt。

导出onnx模型

# 下载官方源码

git clone https://github.com/ultralytics/yolov5 # clone

cd yolov5

pip install -r requirements.txt # install

# onnx格式转换

python export.py --weights yolov5s-seg.pt --include onnx进入容器完成后续的操作。

模型简化

进入下载好的模型库文件awnpu_model_zoo/eamples

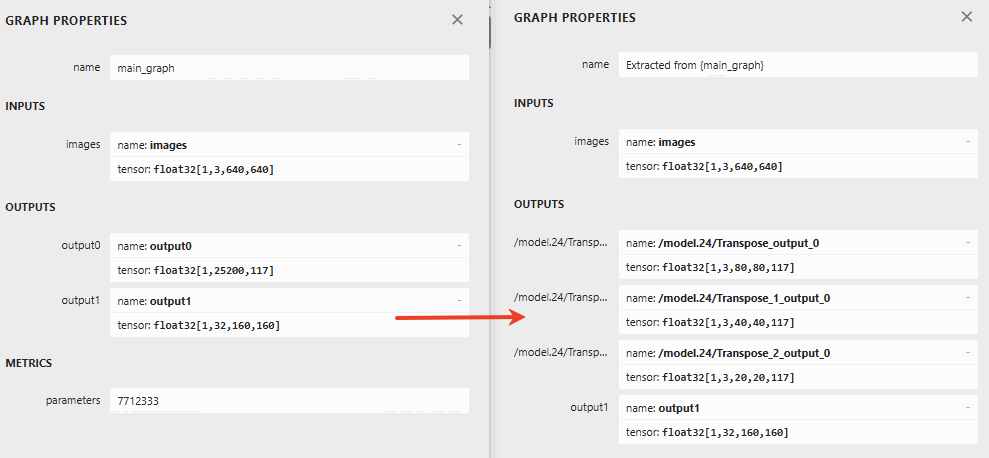

yolov5-seg网络的后处理部分8bit量化会产生较大的精度损失,通过onnx_extract.py对模型剪枝,修改输出结构,其中ouput0节点为3个输出head后处理解析后的节点;同时将后处理结构移至外部使用cpu进行相应的处理,最终模型输出差异如下,左边是官方模型,右边是修改后的模型

# 进入python目录,运行onnx_extract.py对模型进行裁剪,在convert_model目录下生成yolov5s-seg_rt.onnx

cd ./convert_model/python/

python3 onnx_extract.py

# 运行推理

python3 yolov5-seg-onnx.py -m ../yolov5s-seg_rt.onnx -i ../../model/bus.jpg -o output -s 0.25

模型配置

模型配置还是参考yolox的部署,yolo系列的模型配置(归一化、前处理、RGB、量化校准数据集)都是一样的。

模型前后处理

后处理说明:对于yolov5_seg模型,就是在目标检测模型的基础上,多了一个output1掩码输出,形状为[1,32,160,160]。因此在分类和回归的后处理部分,我们可以直接参考yolov5后处理。

掩码部分的处理简单概括如下:

从每个特征图中的第五个维度117提取32个字段,是每个检测框的掩码特征系数,将目标的掩码特征与掩码原型output1进行矩阵乘法,应用sigmoid函数,调整掩码尺寸一匹配原始图像,裁剪掩码到目标边界区域。最后将结果可视化。

配置文件model_config.h

配置文件model_config.h可以直接复制其他yolo官方系列的模型(yolov5、yolov8、yolo11、yolo26)。

前处理yolov5_seg_pre.cpp

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#include <iostream>

#include <stdio.h>

#include <stdint.h>

#include <string.h>

#include <math.h>

#include "model_config.h"

/* model_inputmeta.yml file param modify, eg:

preproc_node_params:

add_preproc_node: true

preproc_type: IMAGE_RGB

*/

void get_input_data(const char* image_file, unsigned char* input_data, int letterbox_rows, int letterbox_cols)

{

cv::Mat sample = cv::imread(image_file, 1);

if (sample.empty()) {

fprintf(stderr, "cv::imread %s failed\n", image_file);

return;

}

cv::Mat img;

cv::cvtColor(sample, img, cv::COLOR_BGR2RGB);

/* letterbox process to support different letterbox size */

float scale_letterbox;

if ((letterbox_rows * 1.0 / img.rows) < (letterbox_cols * 1.0 / img.cols))

{

scale_letterbox = letterbox_rows * 1.0 / img.rows;

}

else

{

scale_letterbox = letterbox_cols * 1.0 / img.cols;

}

int resize_cols = int(scale_letterbox * img.cols);

int resize_rows = int(scale_letterbox * img.rows);

cv::resize(img, img, cv::Size(resize_cols, resize_rows));

// create a mat with input_data ptr

cv::Mat img_new(letterbox_rows, letterbox_cols, CV_8UC3, input_data);

int top = (letterbox_rows - resize_rows) / 2;

int bot = (letterbox_rows - resize_rows + 1) / 2;

int left = (letterbox_cols - resize_cols) / 2;

int right = (letterbox_cols - resize_cols + 1) / 2;

// Letterbox filling

cv::copyMakeBorder(img, img_new, top, bot, left, right, cv::BORDER_CONSTANT, cv::Scalar(114, 114, 114));

}

int yolov5_seg_preprocess(const char* imagepath, void* buff_ptr, unsigned int buff_size)

{

int img_c = 3;

// set default letterbox size

int letterbox_rows = LETTERBOX_ROWS;

int letterbox_cols = LETTERBOX_COLS;

int img_size = letterbox_rows * letterbox_cols * img_c;

unsigned int data_size = img_size * sizeof(uint8_t);

if (data_size > buff_size) {

printf("data size > buff size, please check code. \n");

return -1;

}

get_input_data(imagepath, (unsigned char*)buff_ptr, letterbox_rows, letterbox_cols);

return 0;

}

后处理yolov5_seg_post.cpp

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#include <iostream>

#include <stdio.h>

#include <vector>

#include <cmath>

#include <chrono>

#include "model_config.h"

using namespace std;

struct Object

{

cv::Rect_<float> rect;

int label;

float prob;

std::vector<float> mask_feat;

cv::Mat mask;

};

static inline float sigmoid(float x)

{

return static_cast<float>(1.f / (1.f + exp(-x)));

}

static inline float desigmoid(float x)

{

return static_cast<float>(-log(1.f/x - 1.f));

}

static inline float intersection_area(const Object& a, const Object& b)

{

cv::Rect_<float> inter = a.rect & b.rect;

return inter.area();

}

static void qsort_descent_inplace(std::vector<Object>& objects, int left, int right)

{

int i = left;

int j = right;

float p = objects[(left + right) / 2].prob;

while (i <= j)

{

while (objects[i].prob > p)

i++;

while (objects[j].prob < p)

j--;

if (i <= j)

{

// swap

std::swap(objects[i], objects[j]);

i++;

j--;

}

}

#pragma omp parallel sections

{

#pragma omp section

{

if (left < j) qsort_descent_inplace(objects, left, j);

}

#pragma omp section

{

if (i < right) qsort_descent_inplace(objects, i, right);

}

}

}

static void qsort_descent_inplace(std::vector<Object>& objects)

{

if (objects.empty())

return;

qsort_descent_inplace(objects, 0, objects.size() - 1);

}

static void nms_sorted_bboxes(const std::vector<Object>& objects, std::vector<int>& picked, float nms_threshold, bool agnostic = false)

{

picked.clear();

const int n = objects.size();

std::vector<float> areas(n);

for (int i = 0; i < n; i++)

{

areas[i] = objects[i].rect.area();

}

for (int i = 0; i < n; i++)

{

const Object& a = objects[i];

int keep = 1;

for (int j = 0; j < (int)picked.size(); j++)

{

const Object& b = objects[picked[j]];

// if (!agnostic && a.label != b.label)

// continue;

// intersection over union

float inter_area = intersection_area(a, b);

float union_area = areas[i] + areas[picked[j]] - inter_area;

// float IoU = inter_area / union_area

if (inter_area / union_area > nms_threshold)

keep = 0;

}

if (keep)

picked.push_back(i);

}

}

static void generate_proposals_rt(int stride, const float* feat, float prob_threshold,

std::vector<Object>& objects,

int letterbox_cols, int letterbox_rows)

{

static const float anchors[18] = {10,13,16,30,33,23,30,61,62,45,59,119,116,90,156,198,373,326};

const int anchor_num = 3;

const int feat_w = letterbox_cols / stride;

const int feat_h = letterbox_rows / stride;

const int cls_num = CLASS_NUM;

const int mask_num = 32;

const int single_anchor_dim = cls_num + 5 + mask_num;

int anchor_group = 1;

if (stride == 8)

anchor_group = 1;

if (stride == 16)

anchor_group = 2;

if (stride == 32)

anchor_group = 3;

const float deprob_threshold = desigmoid(prob_threshold);

const int feat_size = feat_w * feat_h;

const int feat_size_cls_5_mask = feat_size * single_anchor_dim;

const float* anchor_data[3] = {

feat,

feat + feat_size_cls_5_mask,

feat + feat_size_cls_5_mask * 2

};

const int feat_size_4 = feat_size * 4;

const int feat_size_5 = feat_size * 5;

for (int a = 0; a < anchor_num; a++)

{

const float* anchor_ptr = anchor_data[a];

for (int h = 0; h < feat_h; h++)

{

const int h_offset = h * feat_w;

for (int w = 0; w < feat_w; w++)

{

const int loc_idx = (h_offset + w);

const float* pos_ptr = anchor_ptr + loc_idx;

// box score

const float box_score = *(pos_ptr + feat_size_4);

if (box_score < deprob_threshold)

continue;

// class score

int class_index = 0;

float max_score = -FLT_MAX;

float* class_score_ptr = (float*)pos_ptr + 5 * feat_size;

for (int s = 0; s < cls_num; s++) {

if (*class_score_ptr > max_score) {

class_index = s;

max_score = *class_score_ptr;

}

class_score_ptr += feat_size;

}

if (max_score < deprob_threshold)

continue;

const float sig_box_score = sigmoid(box_score);

const float sig_class_score = sigmoid(max_score);

const float final_score = sig_box_score * sig_class_score;

if (final_score < prob_threshold)

continue;

// get box info

const float dx = sigmoid(*pos_ptr);

const float dy = sigmoid(*(pos_ptr + feat_size));

const float dw = sigmoid(*(pos_ptr + 2 * feat_size));

const float dh = sigmoid(*(pos_ptr + 3 * feat_size));

const float pred_cx = (dx * 2.0f - 0.5f + w) * stride;

const float pred_cy = (dy * 2.0f - 0.5f + h) * stride;

float anchor_w = anchors[(anchor_group - 1) * 6 + a * 2 + 0];

float anchor_h = anchors[(anchor_group - 1) * 6 + a * 2 + 1];

const float pred_w = dw * dw * 4.0f * anchor_w;

const float pred_h = dh * dh * 4.0f * anchor_h;

Object obj;

obj.rect.x = pred_cx - pred_w * 0.5f;

obj.rect.y = pred_cy - pred_h * 0.5f;

obj.rect.width = pred_w;

obj.rect.height = pred_h;

obj.label = class_index;

obj.prob = final_score;

// get mask features

obj.mask_feat.resize(mask_num);

float* mask_feat_ptr = (float*)pos_ptr + (5 + cls_num) * feat_size;

for (int m = 0; m < mask_num; m++) {

obj.mask_feat[m] = *(mask_feat_ptr + m * feat_size);

}

objects.push_back(obj);

}

}

}

}

static int detect_yolov5_rt(const cv::Mat& bgr, std::vector<Object>& objects, float **output, float *proto){

std::chrono::steady_clock::time_point Tbegin, Tend;

Tbegin = std::chrono::steady_clock::now();

const float *p8_data_ptr = output[0];

const float *p16_data_ptr = output[1];

const float *p32_data_ptr = output[2];

// set default letterbox size

int letterbox_rows = LETTERBOX_ROWS;

int letterbox_cols = LETTERBOX_COLS;

/* postprocess */

const float prob_threshold = SCORE_THRESHOLD;

const float nms_threshold = NMS_THRESHOLD;

std::vector<Object> proposals;

std::vector<Object> objects8;

std::vector<Object> objects16;

std::vector<Object> objects32;

generate_proposals_rt(32, p32_data_ptr, prob_threshold, objects32, letterbox_cols, letterbox_rows);

proposals.insert(proposals.end(), objects32.begin(), objects32.end());

generate_proposals_rt(16, p16_data_ptr, prob_threshold, objects16, letterbox_cols, letterbox_rows);

proposals.insert(proposals.end(), objects16.begin(), objects16.end());

generate_proposals_rt( 8, p8_data_ptr, prob_threshold, objects8, letterbox_cols, letterbox_rows);

proposals.insert(proposals.end(), objects8.begin(), objects8.end());

qsort_descent_inplace(proposals);

std::vector<int> picked;

nms_sorted_bboxes(proposals, picked, nms_threshold);

float scale_letterbox;

int resize_rows;

int resize_cols;

if ((letterbox_rows * 1.0 / bgr.rows) < (letterbox_cols * 1.0 / bgr.cols))

{

scale_letterbox = letterbox_rows * 1.0 / bgr.rows;

}

else

{

scale_letterbox = letterbox_cols * 1.0 / bgr.cols;

}

resize_cols = int(scale_letterbox * bgr.cols);

resize_rows = int(scale_letterbox * bgr.rows);

int tmp_h = (letterbox_rows - resize_rows) / 2;

int tmp_w = (letterbox_cols - resize_cols) / 2;

float ratio_y = (float)bgr.rows / resize_rows;

float ratio_x = (float)bgr.cols / resize_cols;

int count = picked.size();

objects.resize(count);

for (int i = 0; i < count; i++)

{

objects[i] = proposals[picked[i]];

float x0 = (objects[i].rect.x);

float y0 = (objects[i].rect.y);

float x1 = (objects[i].rect.x + objects[i].rect.width);

float y1 = (objects[i].rect.y + objects[i].rect.height);

x0 = (x0 - tmp_w) * ratio_x;

y0 = (y0 - tmp_h) * ratio_y;

x1 = (x1 - tmp_w) * ratio_x;

y1 = (y1 - tmp_h) * ratio_y;

x0 = std::max(std::min(x0, (float)(bgr.cols - 1)), 0.f);

y0 = std::max(std::min(y0, (float)(bgr.rows - 1)), 0.f);

x1 = std::max(std::min(x1, (float)(bgr.cols - 1)), 0.f);

y1 = std::max(std::min(y1, (float)(bgr.rows - 1)), 0.f);

objects[i].rect.x = x0;

objects[i].rect.y = y0;

objects[i].rect.width = x1 - x0;

objects[i].rect.height = y1 - y0;

}

if (proto) {

int proto_c = 32;

int proto_h = 160;

int proto_w = 160;

cv::Mat mask_protos = cv::Mat(proto_c, proto_h * proto_w, CV_32FC1, const_cast<float*>(proto));

for (int i = 0; i < count; i++) {

Object& obj = objects[i];

cv::Mat mask_proposals = cv::Mat(1, proto_c, CV_32FC1, obj.mask_feat.data());

cv::Mat masks_feature = mask_proposals * mask_protos;

cv::Mat mask = masks_feature.reshape(1, proto_h);

cv::Mat ones_mat = cv::Mat::ones(mask.size(), CV_32FC1);

cv::exp(-mask, mask);

mask = ones_mat / (ones_mat + mask);

cv::resize(mask, mask, cv::Size(letterbox_cols, letterbox_rows));

cv::Rect valid_roi(tmp_w, tmp_h, resize_cols, resize_rows);

mask = mask(valid_roi).clone();

cv::resize(mask, mask, cv::Size(bgr.cols, bgr.rows));

cv::Rect bbox_roi(obj.rect.x, obj.rect.y, obj.rect.width, obj.rect.height);

bbox_roi.x = std::max(0, bbox_roi.x);

bbox_roi.y = std::max(0, bbox_roi.y);

bbox_roi.width = std::min(bgr.cols - bbox_roi.x, bbox_roi.width);

bbox_roi.height = std::min(bgr.rows - bbox_roi.y, bbox_roi.height);

if (bbox_roi.width > 0 && bbox_roi.height > 0) {

mask = mask(bbox_roi).clone();

cv::threshold(mask, obj.mask, MASK_THRESHOLD, 255, cv::THRESH_BINARY);

obj.mask.convertTo(obj.mask, CV_8UC1);

} else {

obj.mask = cv::Mat();

}

}

}

Tend = std::chrono::steady_clock::now();

float f = std::chrono::duration_cast <std::chrono::milliseconds> (Tend - Tbegin).count();

std::cout << "post process time : " << f << " ms" << std::endl;

fprintf(stderr, "detection num: %d\n", count);

return 0;

}

static void draw_objects(const cv::Mat& bgr, const std::vector<Object>& objects)

{

cv::Mat image = bgr.clone();

std::vector<cv::Scalar> colors(CLASS_NUM);

for (int i = 0; i < CLASS_NUM; i++)

colors[i] = cv::Scalar(rand()%256, rand()%256, rand()%256);

for (size_t i = 0; i < objects.size(); i++) {

const Object& obj = objects[i];

if (!obj.mask.empty()) {

cv::Rect original_rect = obj.rect;

if (original_rect.width != obj.mask.cols || original_rect.height != obj.mask.rows) {

original_rect.width = obj.mask.cols;

original_rect.height = obj.mask.rows;

original_rect.x = std::min(original_rect.x, image.cols - original_rect.width);

original_rect.y = std::min(original_rect.y, image.rows - original_rect.height);

original_rect.x = std::max(0, original_rect.x);

original_rect.y = std::max(0, original_rect.y);

}

cv::Mat mask_color(obj.mask.size(), CV_8UC3, colors[obj.label]);

cv::Mat masked_image;

cv::bitwise_and(mask_color, mask_color, masked_image, obj.mask);

cv::addWeighted(image(original_rect), 0.5, masked_image, 0.5, 0, image(original_rect));

}

fprintf(stderr, "%2d: %3.0f%%, [%4.0f, %4.0f, %4.0f, %4.0f], %s\n", obj.label, obj.prob * 100, obj.rect.x,

obj.rect.y, obj.rect.x + obj.rect.width, obj.rect.y + obj.rect.height, g_classes_name[obj.label].c_str());

cv::rectangle(image, obj.rect, cv::Scalar(255, 0, 0), 2);

char text[256];

sprintf(text, "%s %.1f%%", g_classes_name[obj.label].c_str(), obj.prob * 100);

int baseLine = 0;

cv::Size label_size = cv::getTextSize(text, cv::FONT_HERSHEY_SIMPLEX , 0.5, 1, &baseLine);

int x = obj.rect.x;

int y = obj.rect.y - label_size.height - baseLine;

if (y < 0)

y = 0;

if (x + label_size.width > image.cols)

x = image.cols - label_size.width;

cv::rectangle(image, cv::Rect(cv::Point(x, y), cv::Size(label_size.width, label_size.height + baseLine)),

cv::Scalar(255, 255, 255), -1);

cv::putText(image, text, cv::Point(x, y + label_size.height), cv::FONT_HERSHEY_SIMPLEX , 0.5,

cv::Scalar(0, 0, 0));

}

cv::imwrite("output_yolov5_seg.png", image);

}

int yolov5_seg_postprocess(const char *imagepath, float **output)

{

cv::Mat m = cv::imread(imagepath, 1);

if (m.empty()) return -1;

std::vector<Object> objects;

detect_yolov5_rt(m, objects, output, output[3]); // output[3]为原型掩码

draw_objects(m, objects);

return 0;

}

主函数:main()

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <sys/time.h>

#include "npulib.h"

#include "model_config.h"

/*-------------------------------------------

Macros and Variables

-------------------------------------------*/

extern int yolov5_seg_preprocess(const char* imagepath, void* buff_ptr, unsigned int buff_size);

extern int yolov5_seg_postprocess(const char *imagepath, float **output);

const char *usage =

"model_demo -nb modle_path -i input_path -l loop_run_count -m malloc_mbyte \n"

"-nb modle_path: the NBG file path.\n"

"-i input_path: the input file path.\n"

"-l loop_run_count: the number of loop run network.\n"

"-m malloc_mbyte: npu_unit init memory Mbytes.\n"

"-h : help\n"

"example: model_demo -nb model.nb -i input.tensor -l 10 -m 20 \n";

enum time_idx_e {

NPU_INIT = 0,

NETWORK_CREATE,

NETWORK_PREPARE,

NETWORK_PREPROCESS,

NETWORK_RUN,

NETWORK_LOOP,

TIME_IDX_MAX = 9

};

#if defined(__linux__)

#define TIME_SLOTS 10

static uint64_t time_begin[TIME_SLOTS];

static uint64_t time_end[TIME_SLOTS];

static uint64_t GetTime(void)

{

struct timeval time;

gettimeofday(&time, NULL);

return (uint64_t)(time.tv_usec + time.tv_sec * 1000000);

}

static void TimeBegin(int id)

{

time_begin[id] = GetTime();

}

static void TimeEnd(int id)

{

time_end[id] = GetTime();

}

static uint64_t TimeGet(int id)

{

return time_end[id] - time_begin[id];

}

#endif

int main(int argc, char** argv)

{

int status = 0;

int i = 0;

unsigned int count = 0;

long long total_infer_time = 0;

char *model_file = nullptr;

char *input_file = nullptr;

unsigned int loop_count = 1;

if (argc < 2) {

printf("%s\n", usage);

return -1;

}

for (i = 0; i< argc; i++) {

if (!strcmp(argv[i], "-nb")) {

model_file = argv[++i];

}

else if (!strcmp(argv[i], "-i")) {

input_file = argv[++i];

}

else if (!strcmp(argv[i], "-l")) {

loop_count = atoi(argv[++i]);

}

else if (!strcmp(argv[i], "-h")) {

printf("%s\n", usage);

return 0;

}

}

printf("model_file=%s, input=%s, loop_count=%d \n", model_file, input_file, loop_count);

if (model_file == nullptr)

return -1;

/* NPU init*/

NpuUint npu_uint;

int ret = npu_uint.npu_init();

if (ret != 0) {

return -1;

}

NetworkItem yolov5;

unsigned int network_id = 0;

status = yolov5.network_create(model_file, network_id);

if (status != 0) {

printf("network %d create failed.\n", network_id);

return -1;

}

status = yolov5.network_prepare();

if (status != 0) {

printf("network prepare fail, status=%d\n", status);

return -1;

}

TimeBegin(NETWORK_PREPROCESS);

// input jpg file, no copy way

void *input_buffer_ptr = nullptr;

unsigned int input_buffer_size = 0;

yolov5.get_network_input_buff_info(0, &input_buffer_ptr, &input_buffer_size);

printf("buffer ptr: %p, buffer size: %d \n", input_buffer_ptr, input_buffer_size);

yolov5_seg_preprocess(input_file, input_buffer_ptr, input_buffer_size);

TimeEnd(NETWORK_PREPROCESS);

printf("feed input cost: %lu us.\n", (unsigned long)TimeGet(NETWORK_PREPROCESS));

// create yolov5 output buffer

int output_cnt = yolov5.get_output_cnt(); // network output count

float **output_data = new float*[output_cnt]();

for (int i = 0; i < output_cnt; i++)

output_data[i] = new float[yolov5.m_output_data_len[i]];

i = 0;

/* run network */

TimeBegin(NETWORK_LOOP);

while (count < loop_count) {

count++;

printf("network: %d, loop count: %d\n", i, count);

status = yolov5.network_input_output_set();

if (status != 0) {

printf("set network input/output %d failed.\n", i);

return -1;

}

#if defined (__linux__)

TimeBegin(NETWORK_RUN);

#endif

status = yolov5.network_run();

if (status != 0) {

printf("fail to run network, status=%d, batchCount=%d\n", status, i);

return -2;

}

#if defined (__linux__)

TimeEnd(NETWORK_RUN);

printf("run time for this network %d: %lu us.\n", i, (unsigned long)TimeGet(NETWORK_RUN));

#endif

total_infer_time += (unsigned long)TimeGet(NETWORK_RUN);

yolov5.get_output(output_data);

yolov5_seg_postprocess(input_file, output_data);

}

TimeEnd(NETWORK_LOOP);

if (loop_count > 1) {

printf("network: %d, this network run avg inference time=%d us, total avg cost: %d us\n", i,

(uint32_t)(total_infer_time / loop_count), (unsigned int)(TimeGet(NETWORK_LOOP) / loop_count));

}

// free output buffer

for (int i = 0; i < output_cnt; i++) {

delete[] output_data[i];

output_data[i] = nullptr;

}

if (output_data != nullptr)

delete[] output_data;

return ret;

}

模型转换

后续的一系列和其他模型一样,这里就直接给出转换的命令了,详细的说明可以参考yolox的部署,或者《NPU_模型部署_开发指南》

# using xxx_env.sh to create softlink

./convert_model_env.sh

# 导入

# pegasus_import.sh <model_name>

./pegasus_import.sh yolov5s-seg_rt

# 量化

# pegasus_quantize.sh <model_name> <quantize_type> <calibration_set_size>

./pegasus_quantize.sh yolov5s-seg_rt uint8 12

# 仿真(可选)

# pegasus_inference.sh <model_name> <quantize_type>

./pegasus_inference.sh yolov5s-seg_rt uint8

# 导出nb模型

# pegasus_export_ovx_nbg.sh <model_name> <quantize_type> <platform>

./pegasus_export_ovx_nbg.sh yolov5s-seg_rt uint8 t736

# 导出的模型文件存放在../model目录

# 例如 ../model/yolov5s-seg_rt_uint8_t736.nb编译、推理

这里以在Linux系统下进行编译推理,Android系统参考yolox的部署,这里直接给出命令行,根据自己的平台和系统进行修改就行了。

# 进入yolov5_seg目录,进行编译

cd ../examples/yolov5_seg/

./../build_linux.sh -t t736在examples目录下生成install目录,推送yolov5_seg_demo_linux_t736文件至板端,方式有多种,这里使用adb

adb push install\yolov5_seg_demo_linux_t736 /mnt/UDISK/进入板端相应的文件进行推理

# 运行可执行文件

# ./yolov5_seg_demo_t736 -h 查看执行示例说明

chmod +x ./yolov5_seg_demo_t736

./yolov5_seg_demo_t736 -nb model/yolov5s-seg_rt_uint8_t736.nb -i model/bus.jpg输出结果

detection num: 5

0: 85%, [ 216, 408, 354, 860], person

0: 83%, [ 57, 395, 241, 909], person

0: 82%, [ 665, 391, 809, 873], person

5: 77%, [ 11, 229, 804, 775], bus

0: 45%, [ 4, 543, 77, 890], person

destory npu finished.

~NpuUint.

AtomGit 是由开放原子开源基金会联合 CSDN 等生态伙伴共同推出的新一代开源与人工智能协作平台。平台坚持“开放、中立、公益”的理念,把代码托管、模型共享、数据集托管、智能体开发体验和算力服务整合在一起,为开发者提供从开发、训练到部署的一站式体验。

更多推荐

已为社区贡献7条内容

已为社区贡献7条内容

所有评论(0)