Flume多跳架构深度解析:构建可扩展的数据采集管道

·

Flume多跳架构深度解析:构建可扩展的数据采集管道

|

🌺The Begin🌺点点关注,收藏不迷路🌺

|

在大型数据采集系统中,单层Flume架构往往难以应对复杂的网络拓扑、跨数据中心传输以及海量数据汇聚的需求。多跳架构(Multi-hop Architecture)通过将数据流分解为多个阶段,解决了单点瓶颈、网络隔离和安全管控等核心问题。本文将深入剖析Flume多跳架构的设计原理,并通过实战案例展示如何优化数据流。

引言:为什么需要多跳架构?

在单层Flume架构中,所有采集Agent直接写入最终存储(如HDFS或Kafka),这种模式存在以下问题:

- 连接数爆炸:1000台服务器直接连接HDFS NameNode,导致元数据压力巨大

- 网络隔离:采集层无法直接访问核心机房

- 安全管理:需要在每台服务器上配置存储系统的认证信息

- 扩展性差:难以应对数据量线性增长

多跳架构通过引入中间层(汇聚层)解决了这些问题:

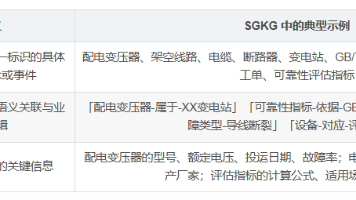

多跳架构的核心设计原则

1. 分层解耦原则

每层只负责特定的功能,层与层之间通过标准协议通信:

| 层级 | 主要职责 | 关键技术 | 部署位置 |

|---|---|---|---|

| 采集层 | 日志采集、初步过滤 | TailDir/SpoolDir Source | 应用服务器 |

| 汇聚层 | 数据聚合、路由、缓冲 | Avro Source/Sink, File Channel | 数据中心边缘节点 |

| 存储层 | 最终写入、格式转换 | HDFS/Kafka/HBase Sink | 核心机房 |

2. 负载均衡与高可用原则

每层都支持水平扩展,通过负载均衡分发流量:

3. 数据可靠性原则

- 采集层到汇聚层:使用File Channel,确保网络故障时数据不丢

- 汇聚层到存储层:使用Failover SinkGroup,实现自动故障转移

- 跨数据中心:启用压缩,节省带宽

多跳架构的实现机制

Avro RPC:Flume的跨节点通信协议

Flume多跳架构的核心是Avro Source和Avro Sink,它们通过Avro RPC进行跨节点数据传输。

Avro RPC工作原理:

Avro Sink配置(发送端)

# 采集层Agent:发送数据到汇聚层

agent.sources = tailSource

agent.channels = fileChannel

agent.sinks = avroSink

# Source配置

agent.sources.tailSource.type = taildir

agent.sources.tailSource.filegroups = f1

agent.sources.tailSource.filegroups.f1 = /var/log/app/access.log

# File Channel(保证可靠性)

agent.channels.fileChannel.type = file

agent.channels.fileChannel.dataDirs = /data/flume/channel

agent.channels.fileChannel.checkpointDir = /ssd/flume/checkpoint

# Avro Sink配置

agent.sinks.avroSink.type = avro

agent.sinks.avroSink.hostname = collector-lb.example.com # 负载均衡地址

agent.sinks.avroSink.port = 4141

agent.sinks.avroSink.batch-size = 5000 # 批次大小

agent.sinks.avroSink.connect-timeout = 20000 # 连接超时

agent.sinks.avroSink.request-timeout = 30000 # 请求超时

agent.sinks.avroSink.compression-type = deflate # 压缩节省带宽

agent.sinks.avroSink.compression-level = 6 # 压缩级别

# 连接关系

agent.sources.tailSource.channels = fileChannel

agent.sinks.avroSink.channel = fileChannel

Avro Source配置(接收端)

# 汇聚层Agent:接收采集层数据

collector.sources = avroSource

collector.channels = memChannel

collector.sinks = hdfsSink kafkaSink

# Avro Source配置

collector.sources.avroSource.type = avro

collector.sources.avroSource.bind = 0.0.0.0 # 监听所有接口

collector.sources.avroSource.port = 4141

collector.sources.avroSource.threads = 50 # 线程数

collector.sources.avroSource.batchSize = 5000

collector.sources.avroSource.compression-type = deflate # 与发送端一致

# Channel配置

collector.channels.memChannel.type = memory

collector.channels.memChannel.capacity = 100000

collector.channels.memChannel.transactionCapacity = 10000

# Sink配置...

collector.sources.avroSource.channels = memChannel

实战案例:三层多跳架构实现

需求场景

某互联网公司需要采集全网5000台服务器的日志,要求:

- 采集层只能访问边缘网络,无法直连核心机房

- 需要实时分析和离线存储两条路径

- 跨数据中心传输要节省带宽

- 任意节点故障不影响数据采集

架构设计

完整配置

采集层Agent配置:

# agent.conf - 部署在5000台应用服务器上

agent.name = app_log_agent

agent.sources = fileSource

agent.channels = fileChannel

agent.sinks = avroSinkGroup

# Source: 采集日志

agent.sources.fileSource.type = taildir

agent.sources.fileSource.filegroups = f1

agent.sources.fileSource.filegroups.f1 = /var/log/app/.*log

agent.sources.fileSource.batchSize = 3000

agent.sources.fileSource.positionFile = /data/flume/position.json

# Channel: File Channel保证数据不丢

agent.channels.fileChannel.type = file

agent.channels.fileChannel.dataDirs = /data1/flume/channel,/data2/flume/channel

agent.channels.fileChannel.checkpointDir = /ssd/flume/checkpoint

agent.channels.fileChannel.capacity = 2000000

agent.channels.fileChannel.transactionCapacity = 6000

# 多Sink实现负载均衡(连接多个Collector)

agent.sinks = avroSink1 avroSink2 avroSink3

agent.sinkgroups = g1

agent.sinkgroups.g1.sinks = avroSink1 avroSink2 avroSink3

agent.sinkgroups.g1.processor.type = load_balance

agent.sinkgroups.g1.processor.selector = round_robin

agent.sinkgroups.g1.processor.backoff = true

# Sink1配置

agent.sinks.avroSink1.type = avro

agent.sinks.avroSink1.hostname = collector01.datacenter-a

agent.sinks.avroSink1.port = 4141

agent.sinks.avroSink1.batch-size = 4000

agent.sinks.avroSink1.compression-type = deflate

agent.sinks.avroSink1.compression-level = 6

agent.sinks.avroSink1.channel = fileChannel

# Sink2配置(类似Sink1,指向collector02)

agent.sinks.avroSink2.type = avro

agent.sinks.avroSink2.hostname = collector02.datacenter-a

agent.sinks.avroSink2.port = 4141

# ... 其他参数相同

agent.sinks.avroSink2.channel = fileChannel

# Sink3配置(指向collector03)

agent.sinks.avroSink3.type = avro

agent.sinks.avroSink3.hostname = collector03.datacenter-a

# ...

agent.sinks.avroSink3.channel = fileChannel

汇聚层Collector配置:

# collector.conf - 部署在汇聚层服务器

collector.name = datacenter_a_collector

collector.sources = avroSource

collector.channels = fileChannel

collector.sinks = hdfsAvroSink kafkaSink

# Avro Source: 接收采集层数据

collector.sources.avroSource.type = avro

collector.sources.avroSource.bind = 0.0.0.0

collector.sources.avroSource.port = 4141

collector.sources.avroSource.threads = 50

collector.sources.avroSource.batchSize = 5000

collector.sources.avroSource.compression-type = deflate

# File Channel: 临时缓冲

collector.channels.fileChannel.type = file

collector.channels.fileChannel.dataDirs = /data1/flume/channel,/data2/flume/channel

collector.channels.fileChannel.checkpointDir = /ssd/flume/checkpoint

collector.channels.fileChannel.capacity = 5000000

collector.channels.fileChannel.transactionCapacity = 10000

# 多Sink: 分别发往不同目的地

collector.sinks = hdfsAvroSink kafkaSink

collector.sinkgroups = g1

collector.sinkgroups.g1.sinks = hdfsAvroSink kafkaSink

collector.sinkgroups.g1.processor.type = load_balance

collector.sinkgroups.g1.processor.selector = round_robin

# Sink1: 通过Avro发往HDFS集群

collector.sinks.hdfsAvroSink.type = avro

collector.sinks.hdfsAvroSink.hostname = hdfs-gateway.datacenter-b

collector.sinks.hdfsAvroSink.port = 4142

collector.sinks.hdfsAvroSink.batch-size = 5000

collector.sinks.hdfsAvroSink.compression-type = deflate

collector.sinks.hdfsAvroSink.compression-level = 6

collector.sinks.hdfsAvroSink.channel = fileChannel

# Sink2: 直接写入Kafka

collector.sinks.kafkaSink.type = org.apache.flume.sink.kafka.KafkaSink

collector.sinks.kafkaSink.kafka.topic = realtime-topic

collector.sinks.kafkaSink.kafka.bootstrap.servers = kafka01.datacenter-b:9092

collector.sinks.kafkaSink.kafka.flumeBatchSize = 3000

collector.sinks.kafkaSink.kafka.producer.acks = 1

collector.sinks.kafkaSink.kafka.producer.compression.type = snappy

collector.sinks.kafkaSink.channel = fileChannel

# 连接关系

collector.sources.avroSource.channels = fileChannel

存储层HDFS Agent配置:

# hdfs-agent.conf - 部署在HDFS网关

hdfs.sources = avroSource

hdfs.channels = fileChannel

hdfs.sinks = hdfsSink

# Avro Source: 接收汇聚层数据

hdfs.sources.avroSource.type = avro

hdfs.sources.avroSource.bind = 0.0.0.0

hdfs.sources.avroSource.port = 4142

hdfs.sources.avroSource.threads = 20

hdfs.sources.avroSource.batchSize = 5000

hdfs.sources.avroSource.compression-type = deflate

# File Channel

hdfs.channels.fileChannel.type = file

hdfs.channels.fileChannel.dataDirs = /data/flume/channel

hdfs.channels.fileChannel.capacity = 2000000

# HDFS Sink

hdfs.sinks.hdfsSink.type = hdfs

hdfs.sinks.hdfsSink.hdfs.path = /flume/logs/%Y%m%d/%H

hdfs.sinks.hdfsSink.hdfs.filePrefix = app

hdfs.sinks.hdfsSink.hdfs.batchSize = 5000

hdfs.sinks.hdfsSink.hdfs.rollSize = 1073741824

hdfs.sinks.hdfsSink.hdfs.rollInterval = 600

hdfs.sinks.hdfsSink.hdfs.codeC = gzip

hdfs.sinks.hdfsSink.hdfs.fileType = CompressedStream

hdfs.sinks.hdfsSink.channel = fileChannel

hdfs.sources.avroSource.channels = fileChannel

多跳传输的性能优化

1. 批次大小优化

不同跳数应设置合理的batchSize:

| 跳数 | 推荐BatchSize | 原因 |

|---|---|---|

| 采集层→汇聚层 | 2000-5000 | 平衡延迟和吞吐,避免频繁RPC |

| 汇聚层→存储层 | 3000-8000 | 网络带宽好,可更大批次 |

| 跨数据中心 | 5000-10000 | 压缩后传输,减少RTT影响 |

2. 压缩策略

# 跨数据中心链路启用压缩

agent.sinks.avroSink.compression-type = deflate

agent.sinks.avroSink.compression-level = 6

# 数据中心内部可禁用压缩

collector.sinks.hdfsAvroSink.compression-type = none

3. 连接池优化

# Avro Source增加线程数

collector.sources.avroSource.threads = 50 # 根据CPU核心数调整

# 操作系统层面

# 增加文件句柄数

ulimit -n 100000

# 网络参数调优

sysctl -w net.core.somaxconn=1024

多跳架构的可靠性保障

端到端可靠性配置

# 每跳都使用File Channel

agent.channels.fileChannel.type = file

agent.channels.fileChannel.dataDirs = /data1/flume/data,/data2/flume/data

agent.channels.fileChannel.checkpointDir = /ssd/flume/checkpoint

# Sink启用重试

agent.sinks.avroSink.connect-timeout = 30000

agent.sinks.avroSink.request-timeout = 60000

# 跨跳失败转移

agent.sinkgroups.g1.processor.type = failover

agent.sinkgroups.g1.processor.priority.sink1 = 100

agent.sinkgroups.g1.processor.priority.sink2 = 80

故障恢复机制

当某一跳发生故障时:

监控多跳架构

分层监控指标

#!/usr/bin/env python3

import requests

import json

def monitor_multi_hop():

"""监控多跳架构各层状态"""

layers = {

'采集层': ['agent1:36001', 'agent2:36001'],

'汇聚层': ['collector1:36001', 'collector2:36001'],

'存储层': ['hdfs-agent:36001']

}

for layer, hosts in layers.items():

print(f"\n=== {layer} ===")

for host in hosts:

try:

metrics = requests.get(f"http://{host}/metrics", timeout=3).json()

# 提取关键指标

for channel, values in metrics.get('CHANNEL', {}).items():

size = values.get('ChannelSize', 0)

print(f"{host} - {channel}: 积压 {size}")

if size > 100000:

print(f" WARNING: 积压过高!")

# Sink成功率

for sink, values in metrics.get('SINK', {}).items():

success = values.get('EventDrainSuccessCount', 0)

attempts = values.get('EventDrainAttemptCount', 0)

if attempts > 0:

rate = success / attempts

print(f"{host} - {sink}: 成功率 {rate:.2%}")

except Exception as e:

print(f"{host}: 无法连接 - {e}")

if __name__ == "__main__":

monitor_multi_hop()

延迟监控

# 监控端到端延迟

# 在入口和出口分别记录时间戳

agent.sources.tailSource.interceptors = timestamp

agent.sources.tailSource.interceptors.timestamp.type = timestamp

# 在HDFS Sink中查看事件头中的原始时间戳

# 计算端到端延迟 = 当前时间 - 事件头中的timestamp

多跳架构的最佳实践

1. 合理规划跳数

| 场景 | 推荐跳数 | 说明 |

|---|---|---|

| 单数据中心 | 2跳(采集→汇聚→存储) | 平衡性能和可靠性 |

| 跨数据中心 | 3跳(采集→汇聚A→汇聚B→存储) | 应对网络隔离 |

| 全球部署 | 4跳+ | 可能需要多级汇聚 |

2. 负载均衡策略

# 采集层到汇聚层:随机/轮询

agent.sinkgroups.g1.processor.selector = random

# 汇聚层到存储层:自定义权重

# 可以通过自定义SinkSelector实现权重分配

3. 容量规划公式

每层需要的Agent数 = 峰值吞吐量 / 单Agent处理能力

示例:

- 总数据量:100GB/s

- 单Agent处理能力:50MB/s

- 每层Agent数 = 100GB/s ÷ 50MB/s = 2000个

4. 避免数据重复

多跳架构中可能因重试导致数据重复,解决方案:

# 在最终存储层做去重

# 使用事件头中的唯一ID

agent.sources.tailSource.interceptors = uuid

agent.sources.tailSource.interceptors.uuid.type = org.apache.flume.interceptor.UUIDInterceptor$Builder

agent.sources.tailSource.interceptors.uuid.headerName = eventId

# HDFS Sink写入时,文件名包含eventId范围,便于下游去重

总结

Flume多跳架构通过分层设计,解决了大规模数据采集中的核心挑战:

| 挑战 | 多跳架构的解决方案 |

|---|---|

| 连接数爆炸 | 汇聚层聚合连接,减少对存储层的直接压力 |

| 网络隔离 | 通过Avro RPC穿透网络边界 |

| 安全管理 | 各层独立配置认证信息,减少暴露面 |

| 水平扩展 | 每层均可独立扩展,无单点瓶颈 |

| 可靠性 | 每跳File Channel + Failover保证端到端可靠性 |

核心收益:

- 可扩展性:支持从百台到万台服务器的线性扩展

- 可靠性:任意节点故障不影响整体数据流

- 灵活性:可在不同跳添加数据清洗、过滤、路由功能

- 可管理性:分层监控、独立运维

通过合理设计多跳架构,Flume可以支撑从GB到PB级的数据采集需求,成为企业数据管道中不可或缺的一环。

|

🌺The End🌺点点关注,收藏不迷路🌺

|

AtomGit 是由开放原子开源基金会联合 CSDN 等生态伙伴共同推出的新一代开源与人工智能协作平台。平台坚持“开放、中立、公益”的理念,把代码托管、模型共享、数据集托管、智能体开发体验和算力服务整合在一起,为开发者提供从开发、训练到部署的一站式体验。

更多推荐

已为社区贡献37条内容

已为社区贡献37条内容

所有评论(0)