ceph-基于rockylinux8.7构建企业级分布式存储

目录

1.安装前准备工作(所有节点)

1.1环境描述

|

主机名 |

IP地址 |

操作系统 |

磁盘 |

备注 |

|

ceph01 |

10.9.254.81 |

rockylinux8.7 |

sdb 100G sdc 100G |

|

|

ceph02 |

10.9.254.82 |

rockylinux8.7 |

sdb 100G sdc 100G |

|

|

ceph03 |

10.9.254.83 |

rockylinux8.7 |

sdb 100G sdc 100G |

|

|

ceph04 |

10.9.254.84 |

rockylinux8.7 |

sdb 100G sdc 100G |

|

|

ceph05 |

10.9.254.85 |

rockylinux8.7 |

sdb 100G sdc 100G |

1.2修改主机名和hosts(所有节点)

|

[root@poc /etc/sysconfig/network-scripts]# hostnamectl set-hostname ceph01 [root@poc /etc/sysconfig/network-scripts]# bash [root@ceph01 ~]# vim /etc/hosts |

|

#public network 10.9.254.81 ceph01 10.9.254.82 ceph02 10.9.254.83 ceph03 10.9.254.84 ceph04 10.9.254.85 ceph05 #cluster network 192.168.254.81 ceph01-cl 192.168.254.82 ceph02-cl 192.168.254.83 ceph03-cl 192.168.254.84 ceph04-cl 192.168.254.85 ceph05-cl |

|

[root@ceph01 ~]# ip a |

|

1: lo: mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000 link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00 inet 127.0.0.1/8 scope host lo valid_lft forever preferred_lft forever inet6 ::1/128 scope host valid_lft forever preferred_lft forever 2: eth0: mtu 1500 qdisc fq_codel state UP group default qlen 1000 link/ether 00:50:56:98:2b:ba brd ff:ff:ff:ff:ff:ff altname enp2s0 altname ens32 inet 10.9.254.81/24 brd 10.9.254.255 scope global noprefixroute eth0 valid_lft forever preferred_lft forever inet6 fe80::250:56ff:fe98:2bba/64 scope link noprefixroute valid_lft forever preferred_lft forever 3: eth1: mtu 1500 qdisc fq_codel state UP group default qlen 1000 link/ether 00:50:56:98:84:6b brd ff:ff:ff:ff:ff:ff altname enp2s2 altname ens34 inet 192.168.254.81/24 brd 192.168.254.255 scope global noprefixroute eth1 valid_lft forever preferred_lft forever inet6 fe80::250:56ff:fe98:846b/64 scope link noprefixroute valid_lft forever preferred_lft forever |

1.3关闭防火墙(所有节点)

|

[root@ceph01 ~]# systemctl disable --now firewalld [root@ceph01 ~]# setenforce 0 [root@ceph01 ~]# sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux [root@ceph01 ~]# sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config [root@ceph01 ~]# cat /etc/selinux/config |

|

# This file controls the state of SELinux on the system. # SELINUX= can take one of these three values: # enforcing - SELinux security policy is enforced. # permissive - SELinux prints warnings instead of enforcing. # disabled - No SELinux policy is loaded. SELINUX=disabled # SELINUXTYPE= can take one of these three values: # targeted - Targeted processes are protected, # minimum - Modification of targeted policy. Only selected processes are protected. # mls - Multi Level Security protection. SELINUXTYPE=targeted |

1.4修改内核参数及资源限制参数(所有节点)

|

[root@ceph01 ~]# vim /etc/modules-load.d/ceph.conf |

|

overlay br_netfilter |

|

[root@ceph01 ~]# modprobe overlay [root@ceph01 ~]# modprobe br_netfilter [root@ceph01 ~]# lsmod | grep br_netfilter |

|

br_netfilter 24576 0 bridge 290816 1 br_netfilter |

|

[root@ceph01 ~]# vim /etc/sysctl.d/ceph.conf |

|

net.bridge.bridge-nf-call-iptables = 1 net.bridge.bridge-nf-call-ip6tables = 1 net.ipv4.ip_forward = 1 net.ipv4.ip_local_port_range = 1024 65335 net.netfilter.nf_conntrack_max = 2621440 net.nf_conntrack_max = 2621440 vm.max_map_count = 1048576 net.ipv4.tcp_wmem = 4096 16384 4194304 net.ipv4.tcp_rmem = 4096 87380 6291456 net.ipv4.tcp_mem = 381462 508616 762924 net.core.rmem_default = 8388608 net.core.rmem_max = 26214400 net.core.wmem_max = 26214400 fs.nr_open = 16777216 fs.file-max = 16777216 net.ipv4.neigh.default.gc_thresh1 = 40960 net.ipv4.neigh.default.gc_thresh2 = 81920 net.ipv4.neigh.default.gc_thresh3 = 102400 net.ipv4.tcp_max_syn_backlog = 65535 net.core.somaxconn = 65535 net.core.netdev_max_backlog = 250000 net.ipv4.tcp_syncookies = 1 net.ipv4.tcp_tw_reuse = 0 net.ipv4.tcp_fin_timeout = 30 net.ipv4.tcp_fastopen = 3 net.ipv4.tcp_orphan_retries = 3 net.ipv4.tcp_abort_on_overflow = 1 |

|

[root@ceph01 ~]# sysctl -p /etc/sysctl.d/ceph.conf |

|

net.bridge.bridge-nf-call-iptables = 1 net.bridge.bridge-nf-call-ip6tables = 1 net.ipv4.ip_forward = 1 net.ipv4.ip_local_port_range = 1024 65335 sysctl: cannot stat /proc/sys/net/netfilter/nf_conntrack_max: No such file or directory sysctl: cannot stat /proc/sys/net/nf_conntrack_max: No such file or directory vm.max_map_count = 1048576 net.ipv4.tcp_wmem = 4096 16384 4194304 net.ipv4.tcp_rmem = 4096 87380 6291456 net.ipv4.tcp_mem = 381462 508616 762924 net.core.rmem_default = 8388608 net.core.rmem_max = 26214400 net.core.wmem_max = 26214400 fs.nr_open = 16777216 fs.file-max = 16777216 net.ipv4.neigh.default.gc_thresh1 = 40960 net.ipv4.neigh.default.gc_thresh2 = 81920 net.ipv4.neigh.default.gc_thresh3 = 102400 net.ipv4.tcp_max_syn_backlog = 65535 net.core.somaxconn = 65535 net.core.netdev_max_backlog = 250000 net.ipv4.tcp_syncookies = 1 net.ipv4.tcp_tw_reuse = 0 net.ipv4.tcp_fin_timeout = 30 net.ipv4.tcp_fastopen = 3 net.ipv4.tcp_orphan_retries = 3 net.ipv4.tcp_abort_on_overflow = 1 |

|

[root@ceph01 ~]# vim /etc/security/limits.d/ceph.conf |

|

* hard nofile 655360 * soft nofile 655360 * soft core 655360 * hard core 655360 * soft nproc unlimited root soft nproc unlimited |

1.5配置时钟同步(所有节点)

|

[root@ceph01 ~]# dnf -y install chrony |

|

Installed: chrony-4.1-1.el8.x86_64 timedatex-0.5-3.el8.x86_64 Complete! |

|

[root@ceph01 ~]# ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime [root@ceph01 ~]# echo 'Asia/Shanghai' >/etc/timezone [root@ceph01 ~]# systemctl start chronyd [root@ceph01 ~]# systemctl enable chronyd [root@ceph01 ~]# vi /etc/chrony.conf |

|

#pool 2.pool.ntp.org iburst server ntp.aliyun.com iburst server cn.ntp.org.cn iburst |

|

[root@ceph01 ~]# systemctl restart chronyd [root@ceph01 ~]# chronyc sources -v |

|

.-- Source mode '^' = server, '=' = peer, '#' = local clock. / .- Source state '*' = current best, '+' = combined, '-' = not combined, | / 'x' = may be in error, '~' = too variable, '?' = unusable. || .- xxxx [ yyyy ] +/- zzzz || Reachability register (octal) -. | xxxx = adjusted offset, || Log2(Polling interval) --. | | yyyy = measured offset, || \ | | zzzz = estimated error. || | | MS Name/IP address Stratum Poll Reach LastRx Last sample ^- 203.107.6.88 2 6 17 4 +3408us[+3408us] +/- 19ms ^* 106.75.107.80 2 6 17 5 -940us[-2018us] +/- 17ms |

1.6containerd安装(所有节点)

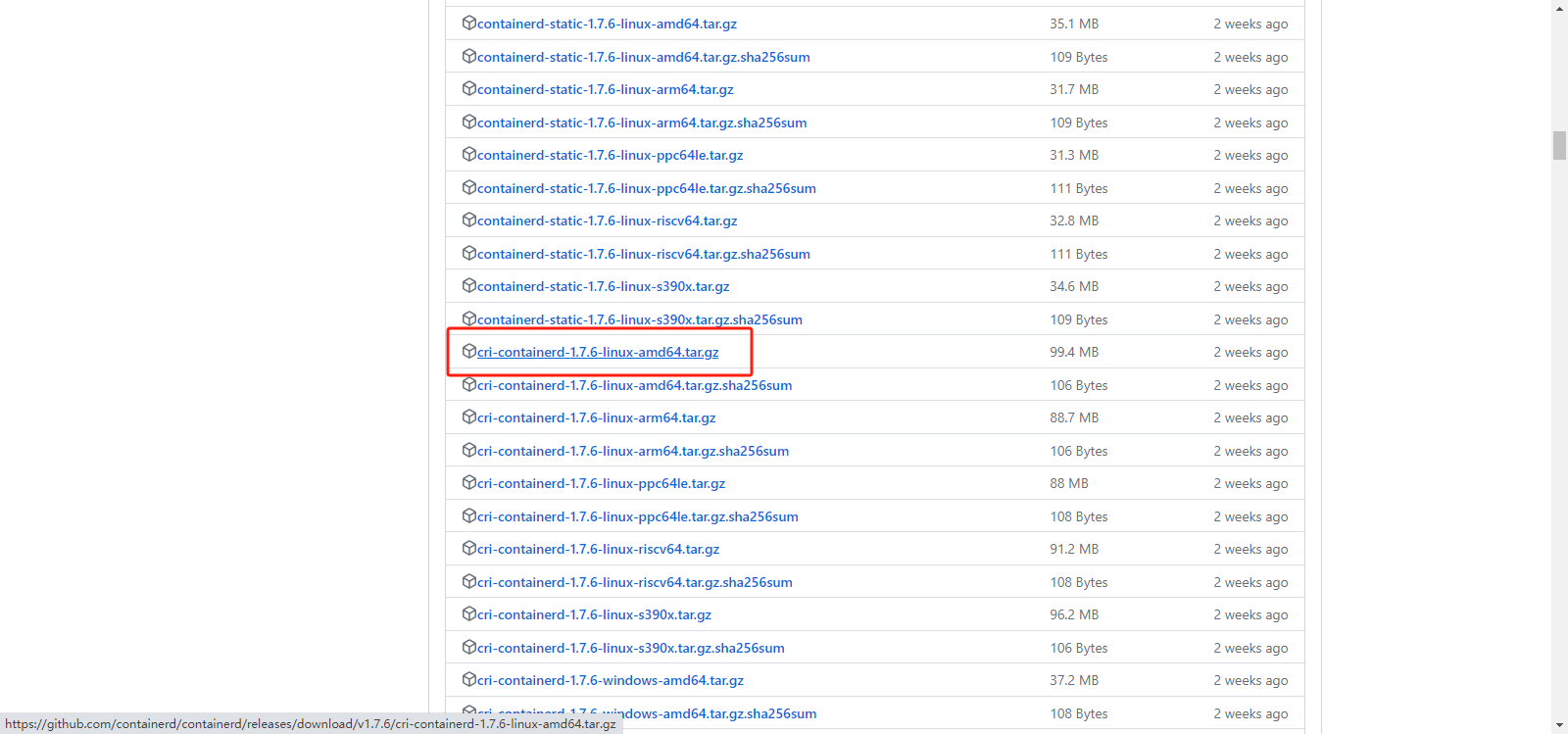

1.6.1containerd下载

https://github.com/containerd/containerd/releases

1.6.2containerd安装

|

[root@ceph01 ~]# ll |

|

-rw-r--r-- 1 root root 104215835 Sep 28 15:55 cri-containerd-1.7.6-linux-amd64.tar.gz |

|

[root@ceph01 ~]# tar xf cri-containerd-1.7.6-linux-amd64.tar.gz -C / [root@ceph01 ~]# ls -lt /usr/local/bin/ |

|

-rwxr-xr-x 1 root root 26243584 Sep 13 01:54 ctd-decoder -rwxr-xr-x 1 root root 54137463 Sep 13 01:54 crictl -rwxr-xr-x 1 root root 56286175 Sep 13 01:54 critest -rwxr-xr-x 1 root root 38972912 Sep 13 01:51 containerd -rwxr-xr-x 1 root root 6598656 Sep 13 01:51 containerd-shim -rwxr-xr-x 1 root root 8306688 Sep 13 01:51 containerd-shim-runc-v1 -rwxr-xr-x 1 root root 12062720 Sep 13 01:51 containerd-shim-runc-v2 -rwxr-xr-x 1 root root 17653760 Sep 13 01:51 containerd-stress -rwxr-xr-x 1 root root 18681856 Sep 13 01:51 ctr |

|

[root@ceph01 ~]# ls -lt /usr/local/sbin |

|

-rwxr-xr-x 1 root root 13511400 Sep 13 01:51 runc |

|

[root@ceph01 ~]# runc |

|

NAME: runc - Open Container Initiative runtime runc is a command line client for running applications packaged according to the Open Container Initiative (OCI) format and is a compliant implementation of the Open Container Initiative specification. runc integrates well with existing process supervisors to provide a production container runtime environment for applications. It can be used with your existing process monitoring tools and the container will be spawned as a direct child of the process supervisor. Containers are configured using bundles. A bundle for a container is a directory that includes a specification file named "config.json" and a root filesystem. The root filesystem contains the contents of the container. To start a new instance of a container: # runc run [ -b bundle ] Where "" is your name for the instance of the container that you are starting. The name you provide for the container instance must be unique on your host. Providing the bundle directory using "-b" is optional. The default value for "bundle" is the current directory. USAGE: runc [global options] command [command options] [arguments...] VERSION: 1.1.9 commit: v1.1.9-0-gccaecfcb spec: 1.0.2-dev go: go1.20.8 libseccomp: 2.5.2 COMMANDS: checkpoint checkpoint a running container create create a container delete delete any resources held by the container often used with detached container events display container events such as OOM notifications, cpu, memory, and IO usage statistics exec execute new process inside the container kill kill sends the specified signal (default: SIGTERM) to the container's init process list lists containers started by runc with the given root pause pause suspends all processes inside the container ps ps displays the processes running inside a container restore restore a container from a previous checkpoint resume resumes all processes that have been previously paused run create and run a container spec create a new specification file start executes the user defined process in a created container state output the state of a container update update container resource constraints features show the enabled features help, h Shows a list of commands or help for one command GLOBAL OPTIONS: --debug enable debug logging --log value set the log file to write runc logs to (default is '/dev/stderr') --log-format value set the log format ('text' (default), or 'json') (default: "text") --root value root directory for storage of container state (this should be located in tmpfs) (default: "/run/runc") --criu value path to the criu binary used for checkpoint and restore (default: "criu") --systemd-cgroup enable systemd cgroup support, expects cgroupsPath to be of form "slice:prefix:name" for e.g. "system.slice:runc:434234" --rootless value ignore cgroup permission errors ('true', 'false', or 'auto') (default: "auto") --help, -h show help --version, -v print the version |

|

[root@ceph01 ~]# ctr |

|

NAME: ctr - __ _____/ /______ / ___/ __/ ___/ / /__/ /_/ / \___/\__/_/ containerd CLI USAGE: ctr [global options] command [command options] [arguments...] VERSION: v1.7.6 DESCRIPTION: ctr is an unsupported debug and administrative client for interacting with the containerd daemon. Because it is unsupported, the commands, options, and operations are not guaranteed to be backward compatible or stable from release to release of the containerd project. COMMANDS: plugins, plugin Provides information about containerd plugins version Print the client and server versions containers, c, container Manage containers content Manage content events, event Display containerd events images, image, i Manage images leases Manage leases namespaces, namespace, ns Manage namespaces pprof Provide golang pprof outputs for containerd run Run a container snapshots, snapshot Manage snapshots tasks, t, task Manage tasks install Install a new package oci OCI tools sandboxes, sandbox, sb, s Manage sandboxes info Print the server info shim Interact with a shim directly help, h Shows a list of commands or help for one command GLOBAL OPTIONS: --debug Enable debug output in logs --address value, -a value Address for containerd's GRPC server (default: "/run/containerd/containerd.sock") [$CONTAINERD_ADDRESS] --timeout value Total timeout for ctr commands (default: 0s) --connect-timeout value Timeout for connecting to containerd (default: 0s) --namespace value, -n value Namespace to use with commands (default: "default") [$CONTAINERD_NAMESPACE] --help, -h show help --version, -v print the version |

|

[root@ceph01 ~]# crictl |

|

NAME: crictl - client for CRI USAGE: crictl [global options] command [command options] [arguments...] VERSION: 1.27.0 COMMANDS: attach Attach to a running container create Create a new container exec Run a command in a running container version Display runtime version information images, image, img List images inspect Display the status of one or more containers inspecti Return the status of one or more images imagefsinfo Return image filesystem info inspectp Display the status of one or more pods logs Fetch the logs of a container port-forward Forward local port to a pod ps List containers pull Pull an image from a registry run Run a new container inside a sandbox runp Run a new pod rm Remove one or more containers rmi Remove one or more images rmp Remove one or more pods pods List pods start Start one or more created containers info Display information of the container runtime stop Stop one or more running containers stopp Stop one or more running pods update Update one or more running containers config Get and set crictl client configuration options stats List container(s) resource usage statistics statsp List pod resource usage statistics completion Output shell completion code checkpoint Checkpoint one or more running containers help, h Shows a list of commands or help for one command GLOBAL OPTIONS: --config value, -c value Location of the client config file. If not specified and the default does not exist, the program's directory is searched as well (default: "/etc/crictl.yaml") [$CRI_CONFIG_FILE] --debug, -D Enable debug mode (default: false) --image-endpoint value, -i value Endpoint of CRI image manager service (default: uses 'runtime-endpoint' setting) [$IMAGE_SERVICE_ENDPOINT] --runtime-endpoint value, -r value Endpoint of CRI container runtime service (default: uses in order the first successful one of [unix:///var/run/dockershim.sock unix:///run/containerd/containerd.sock unix:///run/crio/crio.sock unix:///var/run/cri-dockerd.sock]). Default is now deprecated and the endpoint should be set instead. [$CONTAINER_RUNTIME_ENDPOINT] --timeout value, -t value Timeout of connecting to the server in seconds (e.g. 2s, 20s.). 0 or less is set to default (default: 2s) --help, -h show help --version, -v print the version |

|

[root@ceph01 ~]# vim /etc/crictl.yaml |

|

runtime-endpoint: unix:///run/containerd/containerd.sock image-endpoint: unix:///var/run/containerd/containerd.sock timeout: 10 debug: false |

|

[root@ceph01 ~]# mkdir /etc/containerd [root@ceph01 ~]# containerd config default > /etc/containerd/config.toml [root@ceph01 ~]# vim /etc/containerd/config.toml |

|

max_container_log_line_size = 163840 device_ownership_from_security_context = true SystemdCgroup = true 65 #sandbox_image = "registry.k8s.io/pause:3.8" 66 sandbox_image = "registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.8" 169 [plugins."io.containerd.grpc.v1.cri".registry.mirrors] 170 [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"] 171 endpoint = ["https://7slsp6vu.mirror.aliyuncs.com"] |

|

[root@ceph01 ~]# vim /etc/systemd/system/containerd.service |

|

LimitNOFILE=655360 |

|

[root@ceph01 ~]# systemctl enable containerd && systemctl restart containerd [root@ceph01 /var/log]# ls -l /var/run/containerd |

|

srw-rw---- 1 root root 0 Sep 28 18:24 containerd.sock srw-rw---- 1 root root 0 Sep 28 18:24 containerd.sock.ttrpc drwx--x--x 2 root root 40 Sep 28 16:40 io.containerd.runtime.v1.linux drwx--x--x 2 root root 40 Sep 28 16:40 io.containerd.runtime.v2.task |

|

[root@ceph01 /var/log]# systemctl status containerd |

|

● containerd.service - containerd container runtime Loaded: loaded (/etc/systemd/system/containerd.service; enabled; vendor preset: disabled) Active: active (running) since Thu 2023-09-28 18:24:47 CST; 2min 20s ago Docs: https://containerd.io Process: 3698 ExecStartPre=/sbin/modprobe overlay (code=exited, status=0/SUCCESS) Main PID: 3700 (containerd) Tasks: 10 Memory: 19.8M CGroup: /system.slice/containerd.service └─3700 /usr/local/bin/containerd Sep 28 18:24:47 ceph01 containerd[3700]: time="2023-09-28T18:24:47.122049553+08:00" level=info msg="Start subscribing containerd ev> Sep 28 18:24:47 ceph01 containerd[3700]: time="2023-09-28T18:24:47.122290883+08:00" level=info msg="Start recovering state" Sep 28 18:24:47 ceph01 containerd[3700]: time="2023-09-28T18:24:47.122332027+08:00" level=info msg=serving... address=/run/containe> Sep 28 18:24:47 ceph01 containerd[3700]: time="2023-09-28T18:24:47.122381718+08:00" level=info msg=serving... address=/run/containe> Sep 28 18:24:47 ceph01 containerd[3700]: time="2023-09-28T18:24:47.122458973+08:00" level=info msg="Start event monitor" Sep 28 18:24:47 ceph01 containerd[3700]: time="2023-09-28T18:24:47.122516664+08:00" level=info msg="Start snapshots syncer" Sep 28 18:24:47 ceph01 containerd[3700]: time="2023-09-28T18:24:47.122541767+08:00" level=info msg="Start cni network conf syncer f> Sep 28 18:24:47 ceph01 containerd[3700]: time="2023-09-28T18:24:47.122556870+08:00" level=info msg="Start streaming server" Sep 28 18:24:47 ceph01 containerd[3700]: time="2023-09-28T18:24:47.122702480+08:00" level=info msg="containerd successfully booted > Sep 28 18:24:47 ceph01 systemd[1]: Started containerd container runtime. |

1.7安装docker

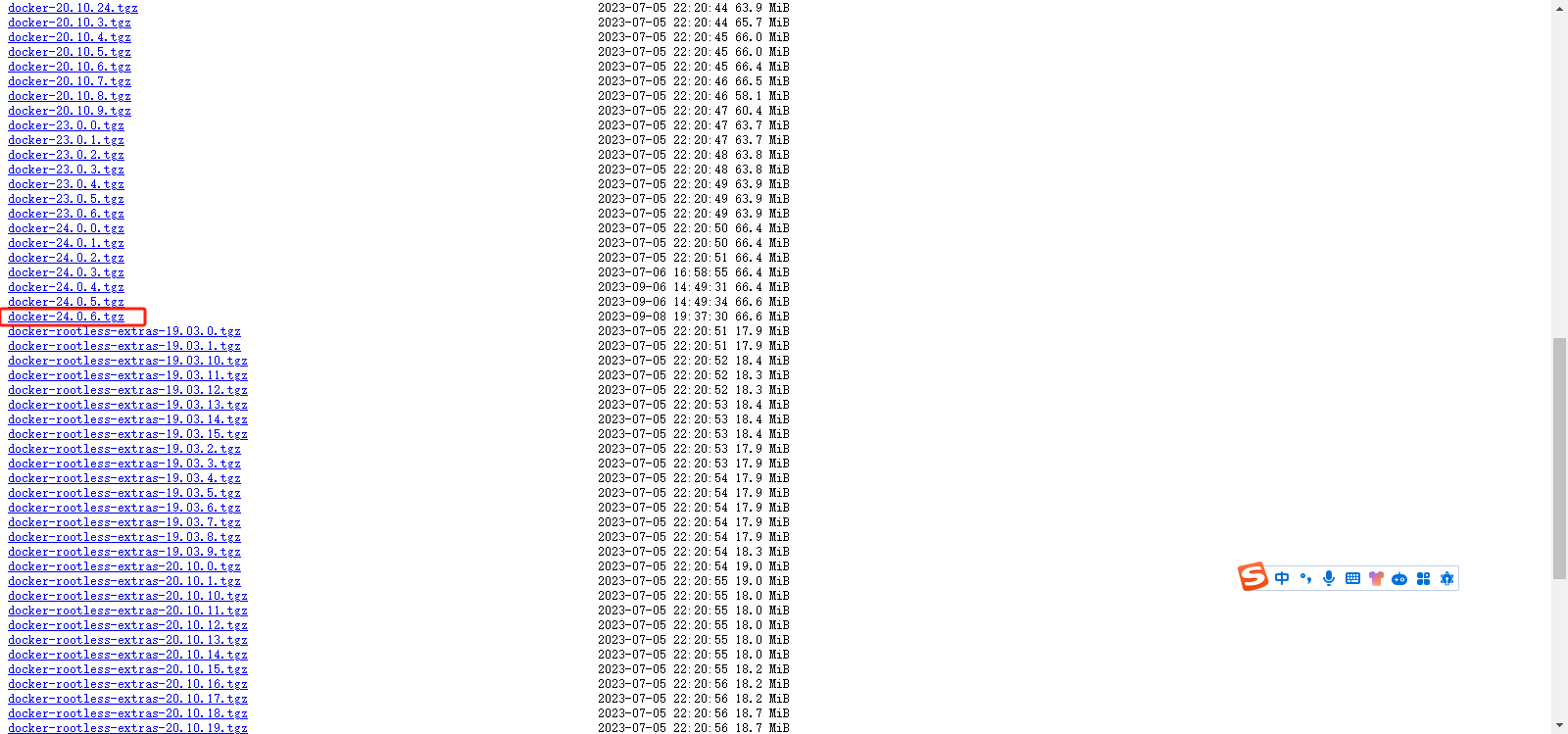

1.7.1下载docker

https://download.docker.com/linux/static/stable/x86_64/

https://download.docker.com/linux/static/stable/x86_64/docker-24.0.6.tgz

1.7.2docker安装(所有节点)

|

[root@ceph01 ~]# ll |

|

-rw-r--r-- 1 root root 69797795 Sep 28 18:32 docker-24.0.6.tgz |

|

[root@ceph01 ~]# tar xf docker-24.0.6.tgz [root@ceph01 ~]# ls -lt |

|

-rw-r--r-- 1 root root 69797795 Sep 28 18:32 docker-24.0.6.tgz -rw-r--r-- 1 root root 104215835 Sep 28 15:55 cri-containerd-1.7.6-linux-amd64.tar.gz drwxrwxr-x 2 1000 1000 146 Sep 4 20:34 docker |

|

[root@ceph01 ~]# ls -l docker |

|

-rwxr-xr-x 1 1000 1000 39129088 Sep 4 20:34 containerd -rwxr-xr-x 1 1000 1000 12374016 Sep 4 20:34 containerd-shim-runc-v2 -rwxr-xr-x 1 1000 1000 19140608 Sep 4 20:34 ctr -rwxr-xr-x 1 1000 1000 34752096 Sep 4 20:34 docker -rwxr-xr-x 1 1000 1000 63346888 Sep 4 20:34 dockerd -rwxr-xr-x 1 1000 1000 761712 Sep 4 20:34 docker-init -rwxr-xr-x 1 1000 1000 1965694 Sep 4 20:34 docker-proxy -rwxr-xr-x 1 1000 1000 15142440 Sep 4 20:34 runc |

|

[root@ceph01 ~]# mv docker/docker* /usr/bin/ [root@ceph01 ~]# useradd -s /sbin/nologin docker [root@ceph01 ~]# vim /usr/lib/systemd/system/docker.service |

|

[Unit] Description=Docker Application Container Engine Documentation=https://docs.docker.com After=network-online.target docker.socket firewalld.service containerd.service Wants=network-online.target Requires=docker.socket [Service] Type=notify ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock ExecReload=/bin/kill -s HUP $MAINPID TimeoutSec=0 RestartSec=2 Restart=always StartLimitBurst=3 StartLimitInterval=60s LimitNOFILE=infinity LimitNPROC=infinity LimitCORE=infinity Delegate=yes KillMode=process [Install] WantedBy=sockets.target |

|

[root@ceph01 ~]# vim /usr/lib/systemd/system/docker.socket |

|

[Unit] Description=Docker Socket for the API PartOf=docker.service [Socket] ListenStream=/var/run/docker.sock SocketMode=0660 SocketUser=root SocketGroup=docker [Install] WantedBy=sockets.traget |

|

[root@ceph01 ~]# systemctl daemon-reload && systemctl enable docker --now |

|

Created symlink /etc/systemd/system/sockets.target.wants/docker.service → /usr/lib/systemd/system/docker.service. |

|

[root@ceph01 ~]# docker info |

|

Client: Version: 24.0.6 Context: default Debug Mode: false Server: Containers: 0 Running: 0 Paused: 0 Stopped: 0 Images: 0 Server Version: 24.0.6 Storage Driver: overlay2 Backing Filesystem: xfs Supports d_type: true Using metacopy: false Native Overlay Diff: true userxattr: false Logging Driver: json-file Cgroup Driver: cgroupfs Cgroup Version: 1 Plugins: Volume: local Network: bridge host ipvlan macvlan null overlay Log: awslogs fluentd gcplogs gelf journald json-file local logentries splunk syslog Swarm: inactive Runtimes: io.containerd.runc.v2 runc Default Runtime: runc Init Binary: docker-init containerd version: 091922f03c2762540fd057fba91260237ff86acb runc version: v1.1.9-0-gccaecfcb init version: de40ad0 Security Options: seccomp Profile: builtin Kernel Version: 4.18.0-425.3.1.el8.x86_64 Operating System: Rocky Linux 8.7 (Green Obsidian) OSType: linux Architecture: x86_64 CPUs: 4 Total Memory: 7.769GiB Name: ceph01 ID: 434da3e4-5b29-448b-ad25-4268c357135b Docker Root Dir: /var/lib/docker Debug Mode: false Experimental: false Insecure Registries: 127.0.0.0/8 Live Restore Enabled: false Product License: Community Engine |

|

[root@ceph01 ~]# systemctl status docker |

|

● docker.service - Docker Application Container Engine Loaded: loaded (/usr/lib/systemd/system/docker.service; enabled; vendor preset: disabled) Active: active (running) since Thu 2023-09-28 19:01:03 CST; 3min 44s ago Docs: https://docs.docker.com Main PID: 3880 (dockerd) Tasks: 10 (limit: 50662) Memory: 32.0M CGroup: /system.slice/docker.service └─3880 /usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock Sep 28 19:01:02 ceph01 systemd[1]: Starting Docker Application Container Engine... Sep 28 19:01:02 ceph01 dockerd[3880]: time="2023-09-28T19:01:02.382303308+08:00" level=info msg="Starting up" Sep 28 19:01:02 ceph01 dockerd[3880]: time="2023-09-28T19:01:02.552352691+08:00" level=info msg="Loading containers: start." Sep 28 19:01:03 ceph01 dockerd[3880]: time="2023-09-28T19:01:03.258877322+08:00" level=info msg="Loading containers: done." Sep 28 19:01:03 ceph01 dockerd[3880]: time="2023-09-28T19:01:03.302782480+08:00" level=info msg="Docker daemon" commit=1a79695 grap> Sep 28 19:01:03 ceph01 dockerd[3880]: time="2023-09-28T19:01:03.302943601+08:00" level=info msg="Daemon has completed initializatio> Sep 28 19:01:03 ceph01 dockerd[3880]: time="2023-09-28T19:01:03.423734187+08:00" level=info msg="API listen on /var/run/docker.sock" Sep 28 19:01:03 ceph01 systemd[1]: Started Docker Application Container Engine. |

|

[root@ceph01 ~]# mkdir /etc/docker [root@ceph01 ~]# vim /etc/docker/daemon.json |

|

{ "registry-mirrors":[ "https://docker.m.daocloud.io", ] } |

|

[root@ceph01 ~]# systemctl daemon-reload [root@ceph01 ~]# systemctl restart docker [root@ceph01 ~]# docker info |

|

Client: Version: 24.0.6 Context: default Debug Mode: false Server: Containers: 0 Running: 0 Paused: 0 Stopped: 0 Images: 0 Server Version: 24.0.6 Storage Driver: overlay2 Backing Filesystem: xfs Supports d_type: true Using metacopy: false Native Overlay Diff: true userxattr: false Logging Driver: json-file Cgroup Driver: cgroupfs Cgroup Version: 1 Plugins: Volume: local Network: bridge host ipvlan macvlan null overlay Log: awslogs fluentd gcplogs gelf journald json-file local logentries splunk syslog Swarm: inactive Runtimes: io.containerd.runc.v2 runc Default Runtime: runc Init Binary: docker-init containerd version: 091922f03c2762540fd057fba91260237ff86acb runc version: v1.1.9-0-gccaecfcb init version: de40ad0 Security Options: seccomp Profile: builtin Kernel Version: 4.18.0-425.3.1.el8.x86_64 Operating System: Rocky Linux 8.7 (Green Obsidian) OSType: linux Architecture: x86_64 CPUs: 4 Total Memory: 7.769GiB Name: ceph01 ID: 434da3e4-5b29-448b-ad25-4268c357135b Docker Root Dir: /var/lib/docker Debug Mode: false Experimental: false Insecure Registries: 127.0.0.0/8 Registry Mirrors: "https://docker.m.daocloud.io" Live Restore Enabled: false Product License: Community Engine |

1.8配置ssh免密登录(ceph01)

|

[root@ceph01 ~]# ssh-keygen -t rsa |

|

Generating public/private rsa key pair. Enter file in which to save the key (/root/.ssh/id_rsa): Enter passphrase (empty for no passphrase): Enter same passphrase again: Your identification has been saved in /root/.ssh/id_rsa. Your public key has been saved in /root/.ssh/id_rsa.pub. The key fingerprint is: SHA256:Rt8DfyPlaWVZw4cxa2D+e1++KoFMIlUNuSXedDQONi0 root@ceph01 The key's randomart image is: +---[RSA 3072]----+ | .o++=o++.| | . o.E++o+=| | . o B +.+.+| | . o = = = + | | . S o = B | | . o . = o | | . . o| | . oo| | ....+| +----[SHA256]-----+ |

|

[root@ceph01 ~]# for i in {1..5};do ssh-copy-id ceph0$i;done [root@ceph01 ~]# ssh ceph02 [root@ceph02 ~]# |

2.CEPH集群安装

O版本开始就不再支持ceph-deploy工具

2.1安装编排工具cephadm(所有节点)

cephadm安装前提

Python3

Systemd

Podman or Docker

Chrony or NTP

LVM2

|

[root@ceph01 ~]# vim /etc/yum.repos.d/ceph.repo |

|

[ceph] name=Ceph packages for $basearch baseurl=https://mirrors.aliyun.com/ceph/rpm-18.2.0/el8//x86_64 enabled=1 priority=2 gpgcheck=1 gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc [ceph-noarch] name=Ceph noarch packages baseurl=https://mirrors.aliyun.com/ceph/rpm-18.2.0/el8//noarch enabled=1 priority=2 gpgcheck=1 gpgkey=https://mirrors.aliyun.com/ceph/keys/release.asc [ceph-source] name=Ceph source packages baseurl=https://mirrors.aliyun.com/ceph/rpm-18.2.0/el8//SRPMS enabled=0 priority=2 gpgcheck=1 |

|

[root@ceph01 ~]# yum clean all [root@ceph01 ~]# yum makecache |

|

Ceph packages for x86_64 129 kB/s | 81 kB 00:00 Ceph noarch packages 19 kB/s | 9.6 kB 00:00 Rocky Linux 8 - AppStream 2.0 MB/s | 11 MB 00:05 Rocky Linux 8 - BaseOS 2.2 MB/s | 7.1 MB 00:03 Rocky Linux 8 - Extras 28 kB/s | 14 kB 00:00 Extra Packages for Enterprise Linux 8 - x86_64 1.8 MB/s | 16 MB 00:08 Metadata cache created. |

|

[root@ceph01 ~]# yum -y install cephadm |

|

Upgraded: platform-python-setuptools-39.2.0-7.el8.noarch Installed: cephadm-2:18.2.0-0.el8.noarch python3-pip-9.0.3-22.el8.rocky.0.noarch python3-setuptools-39.2.0-7.el8.noarch python36-3.6.8-38.module+el8.5.0+671+195e4563.x86_64 Complete! |

|

[root@ceph01 ~]# rpm -ql cephadm |

|

/usr/sbin/cephadm /usr/share/man/man8/cephadm.8.gz /var/lib/cephadm /var/lib/cephadm/.ssh /var/lib/cephadm/.ssh/authorized_keys |

|

[root@ceph01 ~]# cephadm install |

|

Installing packages ['cephadm']... |

导入镜像

|

[root@ceph01 ~]# docker images |

|

REPOSITORY TAG IMAGE ID CREATED SIZE quay.io/ceph/ceph v18 2ddfbd2845f4 12 days ago 1.25GB quay.io/ceph/ceph-grafana 9.4.7 2c41d148cca3 6 months ago 633MB quay.io/prometheus/prometheus v2.43.0 a07b618ecd1d 6 months ago 234MB quay.io/prometheus/alertmanager v0.25.0 c8568f914cd2 9 months ago 65.1MB quay.io/prometheus/node-exporter v1.5.0 0da6a335fe13 10 months ago 22.5MB |

检查ceph各节点是否满足安装ceph集群,该命令需要在当前节点执行,比如要判断ceph02是否支持安装ceph集群,则在ceph02上执行

|

[root@ceph02 ~]# cephadm check-host --expect-hostname ceph01 |

|

docker (/usr/bin/docker) is present systemctl is present lvcreate is present Unit chronyd.service is enabled and running Hostname "ceph01" matches what is expected. Host looks OK |

|

[root@ceph02 ~]# cephadm check-host --expect-hostname ceph02 |

|

docker (/usr/bin/docker) is present systemctl is present lvcreate is present Unit chronyd.service is enabled and running Hostname "ceph02" matches what is expected. Host looks OK |

|

[root@ceph03 ~]# cephadm check-host --expect-hostname ceph03 |

|

docker (/usr/bin/docker) is present systemctl is present lvcreate is present Unit chronyd.service is enabled and running Hostname "ceph03" matches what is expected. Host looks OK |

|

[root@ceph04 ~]# cephadm check-host --expect-hostname ceph04 |

|

docker (/usr/bin/docker) is present systemctl is present lvcreate is present Unit chronyd.service is enabled and running Hostname "ceph04" matches what is expected. Host looks OK |

|

[root@ceph05 ~]# cephadm check-host --expect-hostname ceph05 |

|

docker (/usr/bin/docker) is present systemctl is present lvcreate is present Unit chronyd.service is enabled and running Hostname "ceph05" matches what is expected. Host looks OK |

2.2使用cephadm初始化ceph最小集群

|

[root@ceph01 ~]# cephadm bootstrap --help |

|

usage: cephadm bootstrap [-h] [--config CONFIG] [--mon-id MON_ID] [--mon-addrv MON_ADDRV | --mon-ip MON_IP] [--mgr-id MGR_ID] [--fsid FSID] [--output-dir OUTPUT_DIR] [--output-keyring OUTPUT_KEYRING] [--output-config OUTPUT_CONFIG] [--output-pub-ssh-key OUTPUT_PUB_SSH_KEY] [--skip-admin-label] [--skip-ssh] [--initial-dashboard-user INITIAL_DASHBOARD_USER] [--initial-dashboard-password INITIAL_DASHBOARD_PASSWORD] [--ssl-dashboard-port SSL_DASHBOARD_PORT] [--dashboard-key DASHBOARD_KEY] [--dashboard-crt DASHBOARD_CRT] [--ssh-config SSH_CONFIG] [--ssh-private-key SSH_PRIVATE_KEY] [--ssh-public-key SSH_PUBLIC_KEY] [--ssh-user SSH_USER] [--skip-mon-network] [--skip-dashboard] [--dashboard-password-noupdate] [--no-minimize-config] [--skip-ping-check] [--skip-pull] [--skip-firewalld] [--allow-overwrite] [--allow-fqdn-hostname] [--allow-mismatched-release] [--skip-prepare-host] [--orphan-initial-daemons] [--skip-monitoring-stack] [--with-centralized-logging] [--apply-spec APPLY_SPEC] [--shared_ceph_folder CEPH_SOURCE_FOLDER] [--registry-url REGISTRY_URL] [--registry-username REGISTRY_USERNAME] [--registry-password REGISTRY_PASSWORD] [--registry-json REGISTRY_JSON] [--cluster-network CLUSTER_NETWORK] [--single-host-defaults] [--log-to-file] optional arguments: -h, --help show this help message and exit --config CONFIG, -c CONFIG ceph conf file to incorporate --mon-id MON_ID mon id (default: local hostname) --mon-addrv MON_ADDRV mon IPs (e.g., [v2:localipaddr:3300,v1:localipaddr:6789]) --mon-ip MON_IP mon IP --mgr-id MGR_ID mgr id (default: randomly generated) --fsid FSID cluster FSID --output-dir OUTPUT_DIR directory to write config, keyring, and pub key files --output-keyring OUTPUT_KEYRING location to write keyring file with new cluster admin and mon keys --output-config OUTPUT_CONFIG location to write conf file to connect to new cluster --output-pub-ssh-key OUTPUT_PUB_SSH_KEY location to write the cluster's public SSH key --skip-admin-label do not create admin label for ceph.conf and client.admin keyring distribution --skip-ssh skip setup of ssh key on local host --initial-dashboard-user INITIAL_DASHBOARD_USER Initial user for the dashboard --initial-dashboard-password INITIAL_DASHBOARD_PASSWORD Initial password for the initial dashboard user --ssl-dashboard-port SSL_DASHBOARD_PORT Port number used to connect with dashboard using SSL --dashboard-key DASHBOARD_KEY Dashboard key --dashboard-crt DASHBOARD_CRT Dashboard certificate --ssh-config SSH_CONFIG SSH config --ssh-private-key SSH_PRIVATE_KEY SSH private key --ssh-public-key SSH_PUBLIC_KEY SSH public key --ssh-user SSH_USER set user for SSHing to cluster hosts, passwordless sudo will be needed for non-root users --skip-mon-network set mon public_network based on bootstrap mon ip --skip-dashboard do not enable the Ceph Dashboard --dashboard-password-noupdate stop forced dashboard password change --no-minimize-config do not assimilate and minimize the config file --skip-ping-check do not verify that mon IP is pingable --skip-pull do not pull the default image before bootstrapping --skip-firewalld Do not configure firewalld --allow-overwrite allow overwrite of existing --output-* config/keyring/ssh files --allow-fqdn-hostname allow hostname that is fully-qualified (contains ".") --allow-mismatched-release allow bootstrap of ceph that doesn't match this version of cephadm --skip-prepare-host Do not prepare host --orphan-initial-daemons Set mon and mgr service to `unmanaged`, Do not create the crash service --skip-monitoring-stack Do not automatically provision monitoring stack (prometheus, grafana, alertmanager, node-exporter) --with-centralized-logging Automatically provision centralized logging (promtail, loki) --apply-spec APPLY_SPEC Apply cluster spec after bootstrap (copy ssh key, add hosts and apply services) --shared_ceph_folder CEPH_SOURCE_FOLDER Development mode. Several folders in containers are volumes mapped to different sub-folders in the ceph source folder --registry-url REGISTRY_URL url for custom registry --registry-username REGISTRY_USERNAME username for custom registry --registry-password REGISTRY_PASSWORD password for custom registry --registry-json REGISTRY_JSON json file with custom registry login info (URL, Username, Password) --cluster-network CLUSTER_NETWORK subnet to use for cluster replication, recovery and heartbeats (in CIDR notation network/mask) --single-host-defaults adjust configuration defaults to suit a single-host cluster --log-to-file configure cluster to log to traditional log files in /var/log/ceph/$fsid |

2.2.1初始化单节点集群

cephadm bootstrap过程是在单一节点上创建一个小型的ceph集群,包括一个ceph monitor和一个ceph mgr,以及监控组件,包括prometheus、node-exporter等。

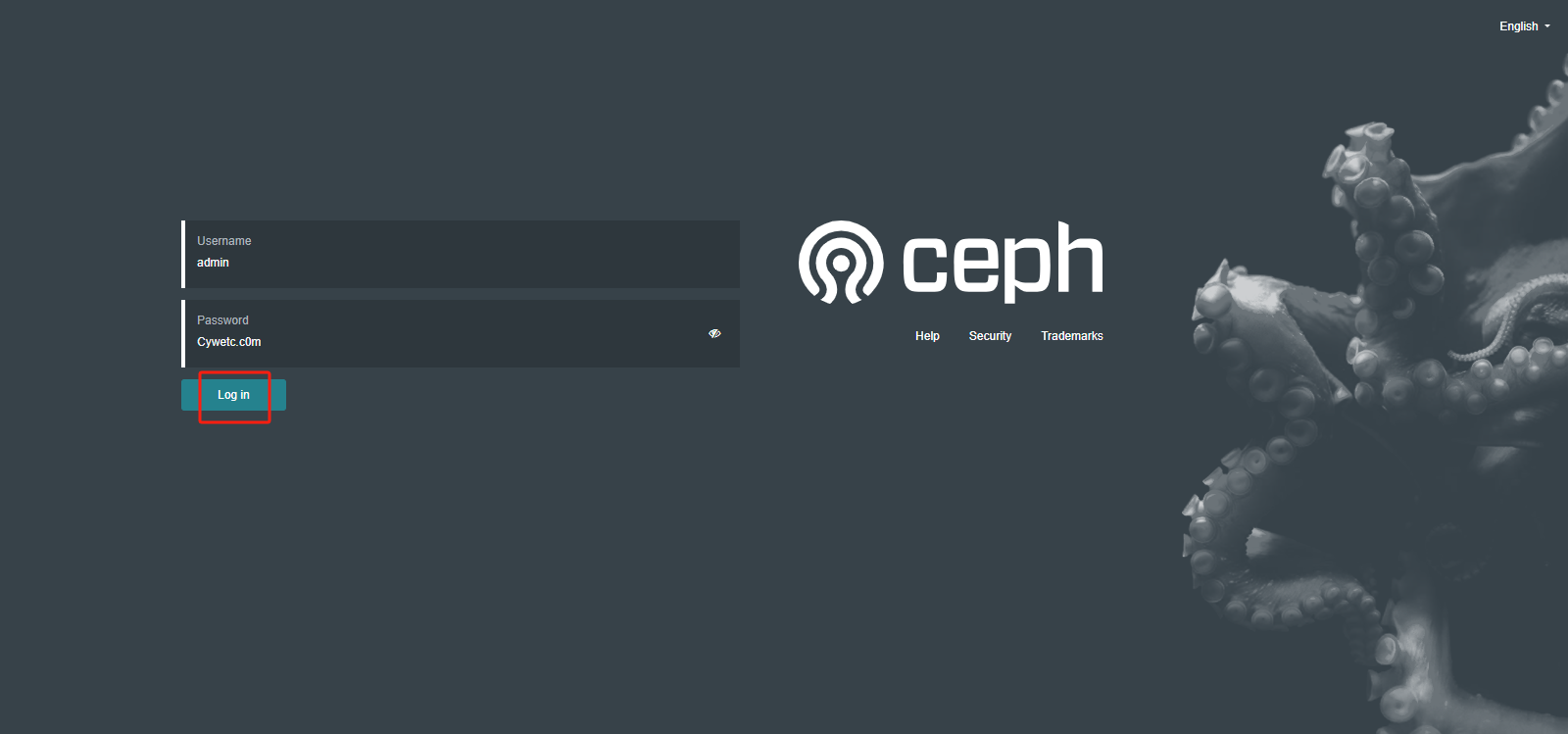

#初始化时,指定了mon-ip、集群网段、dashboard初始用户名和密码

|

[root@ceph01 ~]# cephadm bootstrap --mon-ip 10.9.254.81 --cluster-network 192.168.254.0/24 --initial-dashboard-user admin --initial-dashboard-password Cywetc.c0m |

|

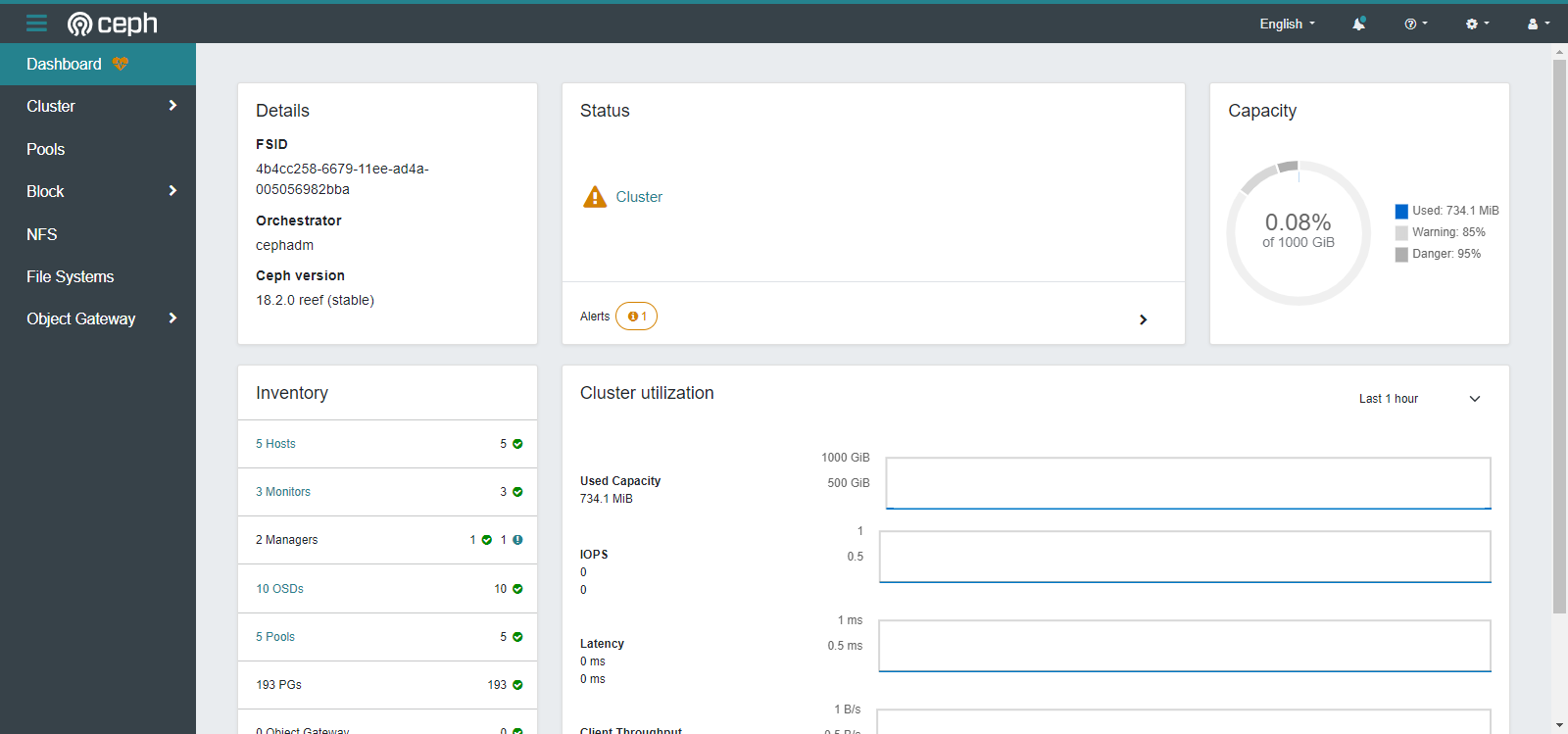

Creating directory /etc/ceph for ceph.conf Verifying podman|docker is present... Verifying lvm2 is present... Verifying time synchronization is in place... Unit chronyd.service is enabled and running Repeating the final host check... docker (/usr/bin/docker) is present systemctl is present lvcreate is present Unit chronyd.service is enabled and running Host looks OK Cluster fsid: 4b4cc258-6679-11ee-ad4a-005056982bba Verifying IP 10.9.254.81 port 3300 ... Verifying IP 10.9.254.81 port 6789 ... Mon IP `10.9.254.81` is in CIDR network `10.9.254.0/24` Mon IP `10.9.254.81` is in CIDR network `10.9.254.0/24` Pulling container image quay.io/ceph/ceph:v18... Ceph version: ceph version 18.2.0 (5dd24139a1eada541a3bc16b6941c5dde975e26d) reef (stable) Extracting ceph user uid/gid from container image... Creating initial keys... Creating initial monmap... Creating mon... Waiting for mon to start... Waiting for mon... mon is available Assimilating anything we can from ceph.conf... Generating new minimal ceph.conf... Restarting the monitor... Setting mon public_network to 10.9.254.0/24 Setting cluster_network to 192.168.254.0/24 Wrote config to /etc/ceph/ceph.conf Wrote keyring to /etc/ceph/ceph.client.admin.keyring Creating mgr... Verifying port 9283 ... Verifying port 8765 ... Verifying port 8443 ... Waiting for mgr to start... Waiting for mgr... mgr not available, waiting (1/15)... mgr not available, waiting (2/15)... mgr not available, waiting (3/15)... mgr not available, waiting (4/15)... mgr not available, waiting (5/15)... mgr not available, waiting (6/15)... mgr is available Enabling cephadm module... Waiting for the mgr to restart... Waiting for mgr epoch 5... mgr epoch 5 is available Setting orchestrator backend to cephadm... Generating ssh key... Wrote public SSH key to /etc/ceph/ceph.pub Adding key to root@localhost authorized_keys... Adding host ceph01... Deploying mon service with default placement... Deploying mgr service with default placement... Deploying crash service with default placement... Deploying ceph-exporter service with default placement... Deploying prometheus service with default placement... Deploying grafana service with default placement... Deploying node-exporter service with default placement... Deploying alertmanager service with default placement... Enabling the dashboard module... Waiting for the mgr to restart... Waiting for mgr epoch 9... mgr epoch 9 is available Generating a dashboard self-signed certificate... Creating initial admin user... Fetching dashboard port number... Ceph Dashboard is now available at: URL: https://ceph01:8443/ User: admin Password: Cywetc.c0m Enabling client.admin keyring and conf on hosts with "admin" label Saving cluster configuration to /var/lib/ceph/4b4cc258-6679-11ee-ad4a-005056982bba/config directory Enabling autotune for osd_memory_target You can access the Ceph CLI as following in case of multi-cluster or non-default config: sudo /usr/sbin/cephadm shell --fsid 4b4cc258-6679-11ee-ad4a-005056982bba -c /etc/ceph/ceph.conf -k /etc/ceph/ceph.client.admin.keyring Or, if you are only running a single cluster on this host: sudo /usr/sbin/cephadm shell Please consider enabling telemetry to help improve Ceph: ceph telemetry on For more information see: https://docs.ceph.com/en/latest/mgr/telemetry/ Bootstrap complete. |

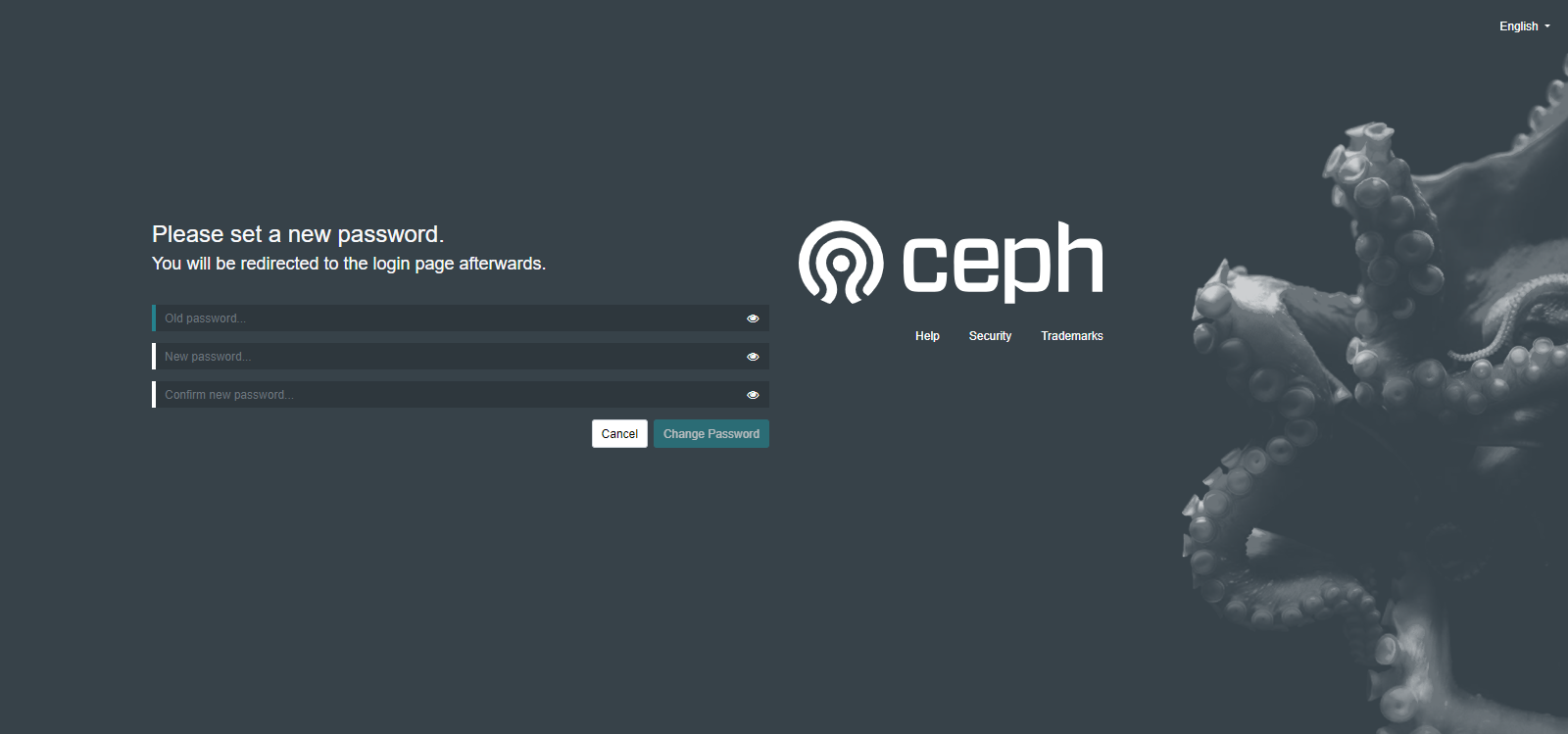

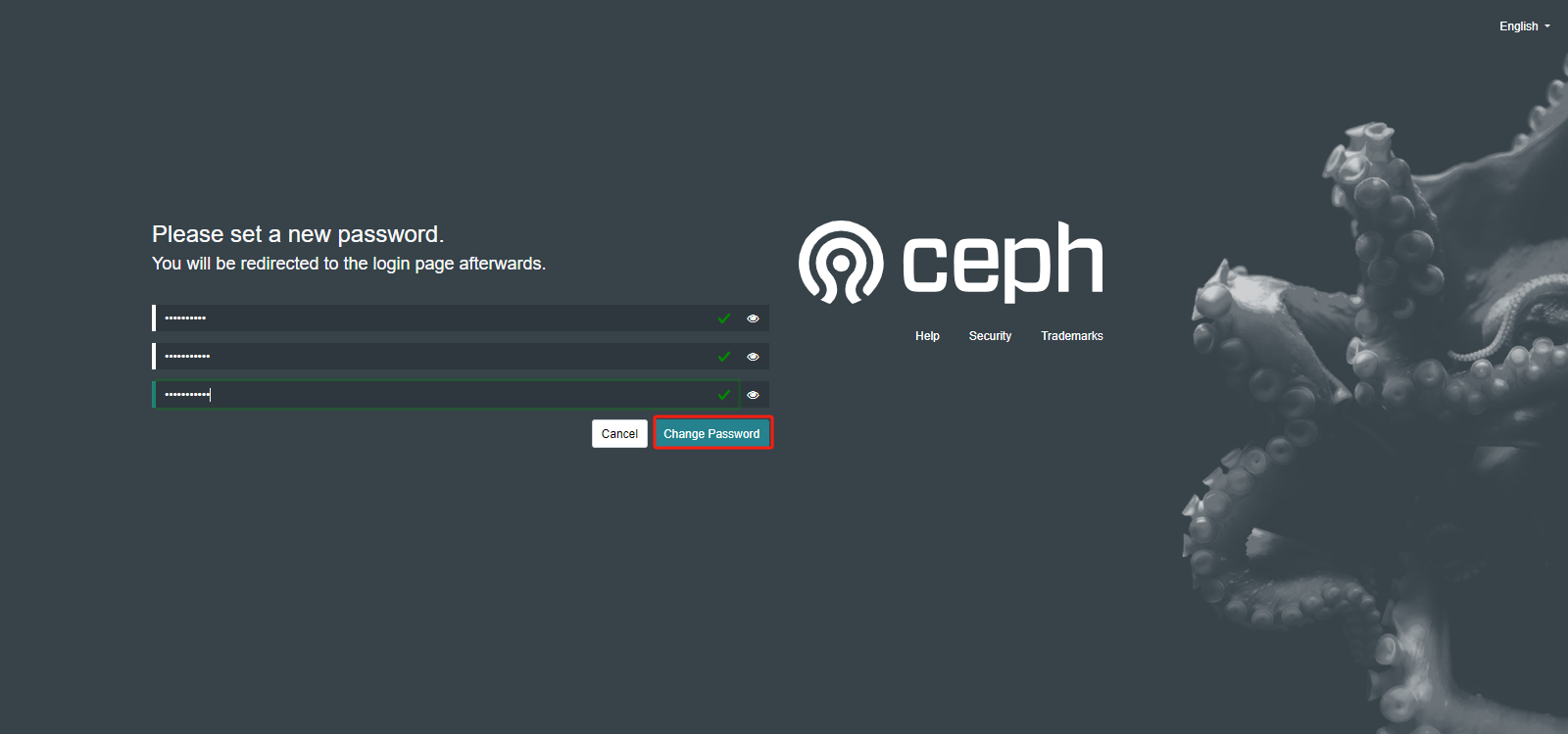

将密码修改成Cywetc.c0m@

查看集群状态,进入容器查看

|

[root@ceph01 ~]# cephadm shell |

|

Inferring fsid 94c0209a-64e1-11ee-a238-005056982bba Inferring config /var/lib/ceph/94c0209a-64e1-11ee-a238-005056982bba/mon.ceph01/config Using ceph image with id '2ddfbd2845f4' and tag 'v18' created on 2023-09-27 00:11:26 +0800 CST quay.io/ceph/ceph@sha256:f239715e1c7756e32a202a572e2763a4ce15248e09fc6e8990985f8a09ffa784 [ceph: root@ceph01 /]# ceph -s cluster: id: 94c0209a-64e1-11ee-a238-005056982bba health: HEALTH_WARN OSD count 0 < osd_pool_default_size 3 services: mon: 1 daemons, quorum ceph01 (age 11m) mgr: ceph01.cvsxkx(active, since 7m) osd: 0 osds: 0 up, 0 in data: pools: 0 pools, 0 pgs objects: 0 objects, 0 B usage: 0 B used, 0 B / 0 B avail pgs: [ceph: root@ceph01 /]# |

|

[ceph: root@ceph01 /]# ceph orch ps |

|

NAME HOST PORTS STATUS REFRESHED AGE MEM USE MEM LIM VERSION IMAGE ID CONTAINER ID alertmanager.ceph01 ceph01 *:9093,9094 running (8m) 6m ago 10m 15.2M - 0.25.0 c8568f914cd2 fb5005d76e7a ceph-exporter.ceph01 ceph01 running (11m) 6m ago 11m 6515k - 18.2.0 2ddfbd2845f4 d51ccac8cd1f crash.ceph01 ceph01 running (11m) 6m ago 11m 7088k - 18.2.0 2ddfbd2845f4 6b7423f6d37e grafana.ceph01 ceph01 *:3000 running (7m) 6m ago 9m 74.2M - 9.4.7 2c41d148cca3 091d300a7385 mgr.ceph01.cvsxkx ceph01 *:9283,8765,8443 running (12m) 6m ago 12m 449M - 18.2.0 2ddfbd2845f4 17c249dcd085 mon.ceph01 ceph01 running (12m) 6m ago 12m 34.2M 2048M 18.2.0 2ddfbd2845f4 3f636825cfea node-exporter.ceph01 ceph01 *:9100 running (10m) 6m ago 11m 16.0M - 1.5.0 0da6a335fe13 6b5265321413 prometheus.ceph01 ceph01 *:9095 running (8m) 6m ago 8m 31.0M - 2.43.0 a07b618ecd1d b12bc5375181 |

|

[ceph: root@ceph01 /]# ceph orch ls #列出服务 |

|

NAME PORTS RUNNING REFRESHED AGE PLACEMENT alertmanager ?:9093,9094 1/1 7m ago 12m count:1 ceph-exporter 1/1 7m ago 12m * crash 1/1 7m ago 12m * grafana ?:3000 1/1 7m ago 12m count:1 mgr 1/2 7m ago 12m count:2 mon 1/5 7m ago 12m count:5 node-exporter ?:9100 1/1 7m ago 12m * prometheus ?:9095 1/1 7m ago 12m count:1 |

|

[ceph: root@ceph01 /]# ceph mgr services |

|

{ "dashboard": "https://10.9.254.81:8443/", "prometheus": "http://10.9.254.81:9283/" } |

|

[ceph: root@ceph01 /]# ls -l /etc/ceph |

|

-rw------- 1 ceph ceph 217 Oct 7 07:26 ceph.conf -rw------- 1 root root 151 Oct 7 07:26 ceph.keyring -rw-r--r-- 1 root root 92 Aug 3 19:43 rbdmap |

|

[root@ceph01 ~]# ls -l /etc/ceph |

|

-rw------- 1 root root 151 Oct 7 15:26 ceph.client.admin.keyring -rw-r--r-- 1 root root 173 Oct 7 15:26 ceph.conf -rw-r--r-- 1 root root 595 Oct 7 15:22 ceph.pub |

|

[root@ceph01 ~]# cat /etc/ceph/ceph.conf |

|

# minimal ceph.conf for 94c0209a-64e1-11ee-a238-005056982bba [global] fsid = 94c0209a-64e1-11ee-a238-005056982bba mon_host = [v2:10.9.254.81:3300/0,v1:10.9.254.81:6789/0] |

2.3添加节点到集群

将公钥复制到其它节点

|

[ceph: root@ceph01 /]# ls -l /etc/ceph |

|

-rw------- 1 ceph ceph 217 Oct 9 07:58 ceph.conf -rw------- 1 root root 151 Oct 9 07:58 ceph.keyring -rw-r--r-- 1 root root 92 Aug 3 19:43 rbdmap |

|

[root@ceph01 ~]# ll /etc/ceph |

|

-rw------- 1 root root 151 Oct 9 15:58 ceph.client.admin.keyring -rw-r--r-- 1 root root 173 Oct 9 15:58 ceph.conf -rw-r--r-- 1 root root 595 Oct 9 15:57 ceph.pub |

|

[root@ceph01 ~]# cat /etc/ceph/ceph.conf |

|

# minimal ceph.conf for 4b4cc258-6679-11ee-ad4a-005056982bba [global] fsid = 4b4cc258-6679-11ee-ad4a-005056982bba mon_host = [v2:10.9.254.81:3300/0,v1:10.9.254.81:6789/0] |

|

[root@ceph01 ~]# for i in {2..5};do ssh-copy-id -f -i /etc/ceph/ceph.pub ceph0$i; done |

|

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/etc/ceph/ceph.pub" Number of key(s) added: 1 Now try logging into the machine, with: "ssh 'ceph02'" and check to make sure that only the key(s) you wanted were added. /usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/etc/ceph/ceph.pub" Number of key(s) added: 1 Now try logging into the machine, with: "ssh 'ceph03'" and check to make sure that only the key(s) you wanted were added. /usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/etc/ceph/ceph.pub" Number of key(s) added: 1 Now try logging into the machine, with: "ssh 'ceph04'" and check to make sure that only the key(s) you wanted were added. /usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/etc/ceph/ceph.pub" Number of key(s) added: 1 Now try logging into the machine, with: "ssh 'ceph05'" and check to make sure that only the key(s) you wanted were added. |

|

[root@ceph05 ~]# cat /root/.ssh/authorized_keys |

|

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABgQDBqVrsHNP6BehExuL0QhsNk++CLufUHMUWKo7Y2XMYcB7+Kwdy/TzQI+ee2GKd0R8uqflLWing8ouRe0YXNZBEd1VBIMnI/rqzV5kPfpgrXFQSbGvb285dtulMvO9mT+uc1toFWL8LjKDr5a8qsKSzeCHvYFMZMgU4atvDMp/rhyUtRl322fc0hXXq7bDt6+QFHLLCWNjowCjV2NAVUNavJSPNZe1RvbT1+dD/GUguLddMRECmuYaB4nR0faORvNrgsJpnn2ufZ9Hx75LbbwYyfgt/ffVAcG3OAZL5I4wqhz9U3dXjGUfpcuQOIHXYJ6C8Ae0VsaYRB8zCYRf2Aw10ccLadPb18AzOQJ2jC3IaXlYBmEHccZDq+jzKgqxWURiPF7W+erqcNiNiEG19yLsRjhixwsI9LAgZWFPk83homM2Lmd/2uYAbOJJ02r7DEfO3r6sz9eFwNeLYISkSLwOyvw5I90ebap3N9+f7bIWPhNRYtsmeEqCNjWSUtetoNkE= root@ceph01 ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABgQDASd7BS/Xp6nGR6TT8QWkGsq0kpwaoZlMbPDNjlWRctflnAh1NIIi/rEOkaAeP34QXs619Fj5RtPREiix6IN56UI0oC/o3/qyRd8IrYlm9zc0BNDrLD1t/KmrSEIW7hniRA+W6wZjxw6tv5N1Eo8XtHOgoaaWGtAFEyvzfRVwfOTRKZ7z/SaV+jfXIMmIwL+dn6Ed+qxIBjBxbxhqgmxMrokb42+fRYQp3/gZELETSaKsvZSj7uHTCYVjA45apGQE99hlJiCLqdn09PrpX2WJSTpuELmMLzrAYS60StzEJb+jmIrq+YZA/dyLpwCTsLe6SMJri0IYMMfN0L220gkHlNblBTxrpq9XGlPPBD1zGQ6h0nCogHy6syhaAXYaCnS0D2VGOX7E9DMgqtFD2MvOiVbtOq31BmeGt2k8YgKw3qf2lAFWTnDqJpE1I8PT4mU4o/wbm2ZJ/iGa9xfjsPk4iCICEchRy0EHMNbV9B2con6KJuerKz+CES06ud74zoC0= ceph-4b4cc258-6679-11ee-ad4a-005056982bba |

使用cephadm将主机添加到存储集群中,执行添加节点命令后,会再目标节点拉到ceph /node-exporter镜像,需要一定时间,所以可提前在节点上将镜像导入。

|

[root@ceph01 ~]# cephadm shell [ceph: root@ceph01 /]# ceph orch host add ceph02 10.9.254.82 --labels=mon,mgr |

|

Added host 'ceph02' with addr '10.9.254.82' |

|

[ceph: root@ceph01 /]# ceph orch ls |

|

NAME PORTS RUNNING REFRESHED AGE PLACEMENT alertmanager ?:9093,9094 1/1 2m ago 2h count:1 ceph-exporter 1/1 2m ago 2h * crash 1/1 2m ago 2h * grafana ?:3000 1/1 2m ago 2h count:1 mgr 1/2 2m ago 2h count:2 mon 1/5 2m ago 2h count:5 node-exporter ?:9100 1/1 2m ago 2h * prometheus ?:9095 1/1 2m ago 2h count:1 |

|

[ceph: root@ceph01 /]# ceph orch host ls |

|

HOST ADDR LABELS STATUS ceph01 10.9.254.81 _admin ceph02 10.9.254.82 mon,mgr 2 hosts in cluster |

|

[ceph: root@ceph01 /]# ceph orch host add ceph03 10.9.254.83 --labels=mon |

|

Added host 'ceph03' with addr '10.9.254.83' |

|

[ceph: root@ceph01 /]# ceph orch host add ceph04 10.9.254.84 |

|

Added host 'ceph04' with addr '10.9.254.84' |

|

[ceph: root@ceph01 /]# ceph orch host add ceph05 10.9.254.85 |

|

Added host 'ceph05' with addr '10.9.254.85' |

|

[ceph: root@ceph01 /]# ceph orch host ls |

|

HOST ADDR LABELS STATUS ceph01 10.9.254.81 _admin ceph02 10.9.254.82 mon,mgr ceph03 10.9.254.83 mon ceph04 10.9.254.84 ceph05 10.9.254.85 5 hosts in cluster |

|

[root@ceph01 ~]# yum -y install ceph |

|

Upgraded: libibverbs-44.0-2.el8.1.x86_64 Installed: ceph-2:18.2.0-0.el8.x86_64 ceph-base-2:18.2.0-0.el8.x86_64 ceph-common-2:18.2.0-0.el8.x86_64 ceph-grafana-dashboards-2:18.2.0-0.el8.noarch ceph-mds-2:18.2.0-0.el8.x86_64 ceph-mgr-2:18.2.0-0.el8.x86_64 ceph-mgr-cephadm-2:18.2.0-0.el8.noarch ceph-mgr-dashboard-2:18.2.0-0.el8.noarch ceph-mgr-k8sevents-2:18.2.0-0.el8.noarch ceph-mgr-modules-core-2:18.2.0-0.el8.noarch ceph-mgr-rook-2:18.2.0-0.el8.noarch ceph-mon-2:18.2.0-0.el8.x86_64 ceph-osd-2:18.2.0-0.el8.x86_64 ceph-prometheus-alerts-2:18.2.0-0.el8.noarch ceph-selinux-2:18.2.0-0.el8.x86_64 gperftools-libs-1:2.7-9.el8.x86_64 libbabeltrace-1.5.4-4.el8.x86_64 libcephfs2-2:18.2.0-0.el8.x86_64 libcephsqlite-2:18.2.0-0.el8.x86_64 libconfig-1.5-9.el8.x86_64 libicu-60.3-2.el8_1.x86_64 liboath-2.6.2-3.el8.x86_64 librabbitmq-0.9.0-3.el8.x86_64 librados2-2:18.2.0-0.el8.x86_64 libradosstriper1-2:18.2.0-0.el8.x86_64 librbd1-2:18.2.0-0.el8.x86_64 librdkafka-0.11.4-3.el8.x86_64 librdmacm-44.0-2.el8.1.x86_64 librgw2-2:18.2.0-0.el8.x86_64 libstoragemgmt-1.9.1-3.el8.x86_64 libunwind-1.3.1-3.el8.x86_64 lttng-ust-2.8.1-11.el8.x86_64 nvme-cli-1.16-7.el8.x86_64 python3-asyncssh-2.7.0-2.el8.noarch python3-babel-2.5.1-7.el8.noarch python3-bcrypt-3.1.6-2.el8.1.x86_64 python3-beautifulsoup4-4.6.3-2.el8.1.noarch python3-cachetools-3.1.1-4.el8.noarch python3-ceph-argparse-2:18.2.0-0.el8.x86_64 python3-ceph-common-2:18.2.0-0.el8.x86_64 python3-cephfs-2:18.2.0-0.el8.x86_64 python3-certifi-2018.10.15-7.el8.noarch python3-cffi-1.11.5-5.el8.x86_64 python3-chardet-3.0.4-7.el8.noarch python3-cheroot-8.5.2-1.el8.noarch python3-cherrypy-18.4.0-1.el8.noarch python3-cryptography-3.2.1-5.el8.x86_64 python3-cssselect-0.9.2-10.el8.noarch python3-defusedxml-0.6.0-1.el8.noarch python3-google-auth-1:1.1.1-10.el8.noarch python3-html5lib-1:0.999999999-6.el8.noarch python3-idna-2.5-5.el8.noarch python3-influxdb-5.3.1-1.el8.noarch python3-isodate-0.6.0-1.el8.noarch python3-jaraco-6.2-6.el8.noarch python3-jaraco-functools-2.0-4.el8.noarch python3-jinja2-2.10.1-3.el8.noarch python3-jsonpatch-1.21-2.el8.noarch python3-jsonpointer-1.10-11.el8.noarch python3-jwt-1.6.1-2.el8.noarch python3-kubernetes-1:11.0.0-6.el8.noarch python3-libstoragemgmt-1.9.1-3.el8.x86_64 python3-logutils-0.3.5-11.el8.noarch python3-lxml-4.2.3-4.el8.x86_64 python3-mako-1.0.6-14.el8.noarch python3-markupsafe-0.23-19.el8.x86_64 python3-more-itertools-7.2.0-3.el8.noarch python3-msgpack-0.6.2-1.el8.x86_64 python3-natsort-7.1.1-2.el8.noarch python3-oauthlib-2.1.0-1.el8.noarch python3-pecan-1.3.2-9.el8.noarch python3-pkgconfig-1.5.1-5.el8.noarch python3-ply-3.9-9.el8.noarch python3-portend-2.6-1.el8.noarch python3-prettytable-0.7.2-14.el8.noarch python3-pyOpenSSL-19.0.0-1.el8.noarch python3-pyasn1-0.3.7-6.el8.noarch python3-pyasn1-modules-0.3.7-6.el8.noarch python3-pycparser-2.14-14.el8.noarch python3-pysocks-1.6.8-3.el8.noarch python3-pytz-2017.2-9.el8.noarch python3-pyyaml-3.12-12.el8.x86_64 python3-rados-2:18.2.0-0.el8.x86_64 python3-rbd-2:18.2.0-0.el8.x86_64 python3-repoze-lru-0.7-6.el8.noarch python3-requests-2.20.0-3.el8_8.noarch python3-requests-oauthlib-1.0.0-1.el8.noarch python3-rgw-2:18.2.0-0.el8.x86_64 python3-routes-2.4.1-12.el8.noarch python3-rsa-4.9-2.el8.noarch python3-saml-1.9.0-3.el8.noarch python3-simplegeneric-0.8.1-17.el8.noarch python3-singledispatch-3.4.0.3-18.el8.noarch python3-tempora-1.14.1-5.el8.noarch python3-trustme-0.6.0-4.el8.noarch python3-urllib3-1.24.2-5.el8.noarch python3-waitress-1.4.3-1.el8.noarch python3-webencodings-0.5.1-6.el8.noarch python3-webob-1.8.5-1.el8.1.noarch python3-websocket-client-0.56.0-5.el8.noarch python3-webtest-2.0.33-1.el8.noarch python3-werkzeug-0.12.2-4.el8.noarch python3-xmlsec-1.3.3-7.el8.x86_64 python3-zc-lockfile-2.0-2.el8.noarch smartmontools-1:7.1-1.el8.x86_64 thrift-0.13.0-2.el8.x86_64 userspace-rcu-0.10.1-4.el8.x86_64 Complete! |

|

[root@ceph01 ~]# ceph -v |

|

ceph version 18.2.0 (5dd24139a1eada541a3bc16b6941c5dde975e26d) reef (stable) |

|

[root@ceph01 ~]# ceph orch host ls |

|

HOST ADDR LABELS STATUS ceph01 10.9.254.81 _admin ceph02 10.9.254.82 mon,mgr ceph03 10.9.254.83 mon ceph04 10.9.254.84 ceph05 10.9.254.85 5 hosts in cluster |

|

[root@ceph01 ~]# ceph -s |

|

cluster: id: 4b4cc258-6679-11ee-ad4a-005056982bba health: HEALTH_WARN OSD count 0 < osd_pool_default_size 3 services: mon: 5 daemons, quorum ceph01,ceph03,ceph04,ceph05,ceph02 (age 3m) mgr: ceph01.ywwete(active, since 65m), standbys: ceph04.ykcgel osd: 0 osds: 0 up, 0 in data: pools: 0 pools, 0 pgs objects: 0 objects, 0 B usage: 0 B used, 0 B / 0 B avail pgs: |

查看其它节点应用

|

[root@ceph02 ~]# docker ps -a |

|

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES b511e94994b0 quay.io/ceph/ceph "/usr/bin/ceph-mon -…" 5 minutes ago Up 5 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-mon-ceph02 3b4fd4cadb3e quay.io/ceph/ceph "/usr/bin/ceph-crash…" 5 minutes ago Up 5 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-crash-ceph02 2546817aab89 quay.io/ceph/ceph "/usr/bin/ceph-expor…" 5 minutes ago Up 5 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-ceph-exporter-ceph02 8cd0b6e2eb9f quay.io/prometheus/node-exporter:v1.5.0 "/bin/node_exporter …" 6 minutes ago Up 6 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-node-exporter-ceph02 |

|

[root@ceph03 ~]# docker ps -a |

|

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES 344830d1f960 quay.io/ceph/ceph "/usr/bin/ceph-mon -…" 19 minutes ago Up 19 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-mon-ceph03 5d0023a9d9ac quay.io/prometheus/node-exporter:v1.5.0 "/bin/node_exporter …" 19 minutes ago Up 19 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-node-exporter-ceph03 51342f1a2ecd quay.io/ceph/ceph "/usr/bin/ceph-crash…" 19 minutes ago Up 19 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-crash-ceph03 e70e11057e78 quay.io/ceph/ceph "/usr/bin/ceph-expor…" 19 minutes ago Up 19 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-ceph-exporter-ceph03 |

|

[root@ceph04 ~]# docker ps -a |

|

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES ce7f89b9d81f quay.io/ceph/ceph "/usr/bin/ceph-mgr -…" 9 minutes ago Up 9 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-mgr-ceph04-ykcgel c940390bea81 quay.io/ceph/ceph "/usr/bin/ceph-mon -…" 19 minutes ago Up 19 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-mon-ceph04 ad2a61c7a224 quay.io/prometheus/node-exporter:v1.5.0 "/bin/node_exporter …" 19 minutes ago Up 19 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-node-exporter-ceph04 4824930bada2 quay.io/ceph/ceph "/usr/bin/ceph-crash…" 19 minutes ago Up 19 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-crash-ceph04 f294ff260174 quay.io/ceph/ceph "/usr/bin/ceph-expor…" 20 minutes ago Up 20 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-ceph-exporter-ceph04 |

|

[root@ceph05 ~]# docker ps -a |

|

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES 9f9c36ed2c19 quay.io/ceph/ceph "/usr/bin/ceph-mon -…" 10 minutes ago Up 10 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-mon-ceph05 dd0f095c4c68 quay.io/prometheus/node-exporter:v1.5.0 "/bin/node_exporter …" 10 minutes ago Up 10 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-node-exporter-ceph05 cf92ac9f3a67 quay.io/ceph/ceph "/usr/bin/ceph-crash…" 10 minutes ago Up 10 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-crash-ceph05 e87140fa642c quay.io/ceph/ceph "/usr/bin/ceph-expor…" 10 minutes ago Up 10 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-ceph-exporter-ceph05 |

2.4为节点添加标签并调整mon个数

给节点打上指标标签后,后续可以按标签进行编排。

给节点打_admin标签,默认情况下,_admin标签应用于存储集群中的bootstrapped主机,client.admin密钥被分发到该主机(ceph orch client-keyring{ls|set|rm})。将这个标签添加到其他主机后,其他主机的/etc/ceph下也将有client.admin密钥。

#给ceph02、ceph03加上_admin标签

|

[root@ceph01 ~]# ceph orch host ls |

|

HOST ADDR LABELS STATUS ceph01 10.9.254.81 _admin ceph02 10.9.254.82 mon,mgr ceph03 10.9.254.83 mon ceph04 10.9.254.84 ceph05 10.9.254.85 5 hosts in cluster |

|

[root@ceph01 ~]# ceph orch host label add ceph02 _admin |

|

Added label _admin to host ceph02 |

|

[root@ceph01 ~]# ceph orch host label add ceph03 _admin |

|

Added label _admin to host ceph03 |

|

[root@ceph01 ~]# ceph orch host ls |

|

HOST ADDR LABELS STATUS ceph01 10.9.254.81 _admin ceph02 10.9.254.82 mon,mgr,_admin ceph03 10.9.254.83 mon,_admin ceph04 10.9.254.84 ceph05 10.9.254.85 5 hosts in cluster |

查看ceph02和ceph03上的密钥文件

|

[root@ceph02 ~]# ll /etc/ceph/ |

|

-rw------- 1 root root 151 Oct 9 17:24 ceph.client.admin.keyring -rw-r--r-- 1 root root 357 Oct 9 17:24 ceph.conf -rw-r--r-- 1 root root 92 Aug 4 03:43 rbdmap |

|

[root@ceph03 ~]# ll /etc/ceph |

|

-rw------- 1 root root 151 Oct 9 17:24 ceph.client.admin.keyring -rw-r--r-- 1 root root 357 Oct 9 17:24 ceph.conf -rw-r--r-- 1 root root 92 Aug 4 03:43 rbdmap |

|

[root@ceph04 ~]# ll /etc/ceph |

|

-rw-r--r-- 1 root root 92 Aug 4 03:43 rbdmap |

|

[root@ceph05 ~]# ll /etc/ceph |

|

-rw-r--r-- 1 root root 92 Aug 4 03:43 rbdmap |

给ceph01-ceph03加上mon标签,ceph03加上mgr标签。

|

[root@ceph01 ~]# ceph orch host label add ceph01 mon |

|

Added label mon to host ceph01 |

|

[root@ceph01 ~]# ceph orch host label add ceph03 mgr |

|

Added label mgr to host ceph03 |

|

[root@ceph01 ~]# ceph orch host ls |

|

HOST ADDR LABELS STATUS ceph01 10.9.254.81 _admin,mon ceph02 10.9.254.82 mon,mgr,_admin ceph03 10.9.254.83 mon,_admin,mgr ceph04 10.9.254.84 ceph05 10.9.254.85 5 hosts in cluster |

|

[root@ceph01 ~]# ceph -s |

|

cluster: id: 4b4cc258-6679-11ee-ad4a-005056982bba health: HEALTH_WARN OSD count 0 < osd_pool_default_size 3 services: mon: 5 daemons, quorum ceph01,ceph03,ceph04,ceph05,ceph02 (age 30m) mgr: ceph01.ywwete(active, since 93m), standbys: ceph04.ykcgel osd: 0 osds: 0 up, 0 in data: pools: 0 pools, 0 pgs objects: 0 objects, 0 B usage: 0 B used, 0 B / 0 B avail pgs: |

共有mon节点5个,我们只让带标签的添加mon角色

|

[root@ceph01 ~]# ceph orch apply mon --placement="3 label:mon" |

|

Scheduled mon update... |

|

[root@ceph01 ~]# ceph -s |

|

cluster: id: 4b4cc258-6679-11ee-ad4a-005056982bba health: HEALTH_WARN 1 stray daemon(s) not managed by cephadm OSD count 0 < osd_pool_default_size 3 services: mon: 3 daemons, quorum ceph01,ceph03,ceph02 (age 24s) #已经变成了3个mon节点 mgr: ceph01.ywwete(active, since 96m), standbys: ceph04.ykcgel osd: 0 osds: 0 up, 0 in data: pools: 0 pools, 0 pgs objects: 0 objects, 0 B usage: 0 B used, 0 B / 0 B avail pgs: |

2.5集群添加OSD

osd_pool_default_size 3修改为2

|

[root@ceph01 ~]# ceph config set global osd_pool_default_size 2 [root@ceph01 ~]# ceph -s |

|

cluster: id: 4b4cc258-6679-11ee-ad4a-005056982bba health: HEALTH_WARN 1 stray daemon(s) not managed by cephadm OSD count 0 < osd_pool_default_size 2 #osd数量小于副本数量2,所以警告 services: mon: 3 daemons, quorum ceph01,ceph03,ceph02 (age 2m) mgr: ceph01.ywwete(active, since 98m), standbys: ceph04.ykcgel osd: 0 osds: 0 up, 0 in data: pools: 0 pools, 0 pgs objects: 0 objects, 0 B usage: 0 B used, 0 B / 0 B avail pgs: |

|

[root@ceph01 ~]# ceph orch device ls |

|

HOST PATH TYPE DEVICE ID SIZE AVAILABLE REFRESHED REJECT REASONS ceph01 /dev/sdb hdd 100G Yes 20m ago ceph01 /dev/sdc hdd 100G Yes 20m ago ceph02 /dev/sdb hdd 100G Yes 7m ago ceph02 /dev/sdc hdd 100G Yes 7m ago ceph03 /dev/sdb hdd 100G Yes 20m ago ceph03 /dev/sdc hdd 100G Yes 20m ago ceph04 /dev/sdb hdd 100G Yes 20m ago ceph04 /dev/sdc hdd 100G Yes 20m ago ceph05 /dev/sdb hdd 100G Yes 10m ago ceph05 /dev/sdc hdd 100G Yes 10m ago |

|

[root@ceph01 ~]# ceph orch daemon add osd ceph01:/dev/sdb |

|

Created osd(s) 0 on host 'ceph01' |

|

[root@ceph01 ~]# ceph orch daemon add osd ceph01:/dev/sdc |

|

Created osd(s) 1 on host 'ceph01' |

|

[root@ceph01 ~]# ceph orch daemon add osd ceph02:/dev/sdb [root@ceph01 ~]# ceph orch daemon add osd ceph02:/dev/sdc [root@ceph01 ~]# ceph orch daemon add osd ceph03:/dev/sdb [root@ceph01 ~]# ceph orch daemon add osd ceph03:/dev/sdc [root@ceph01 ~]# ceph orch daemon add osd ceph04:/dev/sdb [root@ceph01 ~]# ceph orch daemon add osd ceph04:/dev/sdc [root@ceph01 ~]# ceph orch daemon add osd ceph05:/dev/sdb [root@ceph01 ~]# ceph orch daemon add osd ceph05:/dev/sdc |

|

Created osd(s) 9 on host 'ceph05' |

|

[root@ceph01 ~]# ceph -s |

|

cluster: id: 4b4cc258-6679-11ee-ad4a-005056982bba health: HEALTH_WARN 1 stray daemon(s) not managed by cephadm services: mon: 3 daemons, quorum ceph01,ceph03,ceph02 (age 17m) mgr: ceph01.ywwete(active, since 113m), standbys: ceph04.ykcgel osd: 10 osds: 10 up (since 37s), 10 in (since 88s) #已经添加了10个osd data: pools: 1 pools, 1 pgs objects: 2 objects, 449 KiB usage: 669 MiB used, 999 GiB / 1000 GiB avail pgs: 1 active+clean |

|

[root@ceph01 ~]# ceph osd tree |

|

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.97687 root default -3 0.19537 host ceph01 0 hdd 0.09769 osd.0 up 1.00000 1.00000 1 hdd 0.09769 osd.1 up 1.00000 1.00000 -5 0.19537 host ceph02 2 hdd 0.09769 osd.2 up 1.00000 1.00000 3 hdd 0.09769 osd.3 up 1.00000 1.00000 -7 0.19537 host ceph03 4 hdd 0.09769 osd.4 up 1.00000 1.00000 5 hdd 0.09769 osd.5 up 1.00000 1.00000 -9 0.19537 host ceph04 6 hdd 0.09769 osd.6 up 1.00000 1.00000 7 hdd 0.09769 osd.7 up 1.00000 1.00000 -11 0.19537 host ceph05 8 hdd 0.09769 osd.8 up 1.00000 1.00000 9 hdd 0.09769 osd.9 up 1.00000 1.00000 |

|

[root@ceph01 ~]# ceph osd perf |

|

osd commit_latency(ms) apply_latency(ms) 9 0 0 8 0 0 7 0 0 6 0 0 1 0 0 0 0 0 2 0 0 3 0 0 4 0 0 5 0 0 |

2.6OSD分类

- ceph不能自动识别磁盘类型的情况

- 设置osd分类前osd需要是未分类的,即:修改osd分类的做法是,先移除原有的分类,在添加新的分类:

|

[root@ceph01 ~]# ceph osd tree |

|

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.97687 root default -3 0.19537 host ceph01 0 hdd 0.09769 osd.0 up 1.00000 1.00000 1 hdd 0.09769 osd.1 up 1.00000 1.00000 -5 0.19537 host ceph02 2 hdd 0.09769 osd.2 up 1.00000 1.00000 3 hdd 0.09769 osd.3 up 1.00000 1.00000 -7 0.19537 host ceph03 4 hdd 0.09769 osd.4 up 1.00000 1.00000 5 hdd 0.09769 osd.5 up 1.00000 1.00000 -9 0.19537 host ceph04 6 hdd 0.09769 osd.6 up 1.00000 1.00000 7 hdd 0.09769 osd.7 up 1.00000 1.00000 -11 0.19537 host ceph05 8 hdd 0.09769 osd.8 up 1.00000 1.00000 9 hdd 0.09769 osd.9 up 1.00000 1.00000 |

|

[root@ceph01 ~]# ceph osd crush rm-device-class osd.8 |

|

done removing class of osd(s): 8 |

|

[root@ceph01 ~]# ceph osd crush set-device-class ssd osd.8 |

|

set osd(s) 8 to class 'ssd' |

|

[root@ceph01 ~]# ceph osd tree |

|

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.97687 root default -3 0.19537 host ceph01 0 hdd 0.09769 osd.0 up 1.00000 1.00000 1 hdd 0.09769 osd.1 up 1.00000 1.00000 -5 0.19537 host ceph02 2 hdd 0.09769 osd.2 up 1.00000 1.00000 3 hdd 0.09769 osd.3 up 1.00000 1.00000 -7 0.19537 host ceph03 4 hdd 0.09769 osd.4 up 1.00000 1.00000 5 hdd 0.09769 osd.5 up 1.00000 1.00000 -9 0.19537 host ceph04 6 hdd 0.09769 osd.6 up 1.00000 1.00000 7 hdd 0.09769 osd.7 up 1.00000 1.00000 -11 0.19537 host ceph05 9 hdd 0.09769 osd.9 up 1.00000 1.00000 8 ssd 0.09769 osd.8 up 1.00000 1.00000 #类型已修改为ssd |

|

[root@ceph01 ~]# ceph osd tree |

|

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.97687 root default -3 0.19537 host ceph01 1 hdd 0.09769 osd.1 up 1.00000 1.00000 0 ssd 0.09769 osd.0 up 1.00000 1.00000 -5 0.19537 host ceph02 3 hdd 0.09769 osd.3 up 1.00000 1.00000 2 ssd 0.09769 osd.2 up 1.00000 1.00000 -7 0.19537 host ceph03 5 hdd 0.09769 osd.5 up 1.00000 1.00000 4 ssd 0.09769 osd.4 up 1.00000 1.00000 -9 0.19537 host ceph04 7 hdd 0.09769 osd.7 up 1.00000 1.00000 6 ssd 0.09769 osd.6 up 1.00000 1.00000 -11 0.19537 host ceph05 9 hdd 0.09769 osd.9 up 1.00000 1.00000 8 ssd 0.09769 osd.8 up 1.00000 1.00000 |

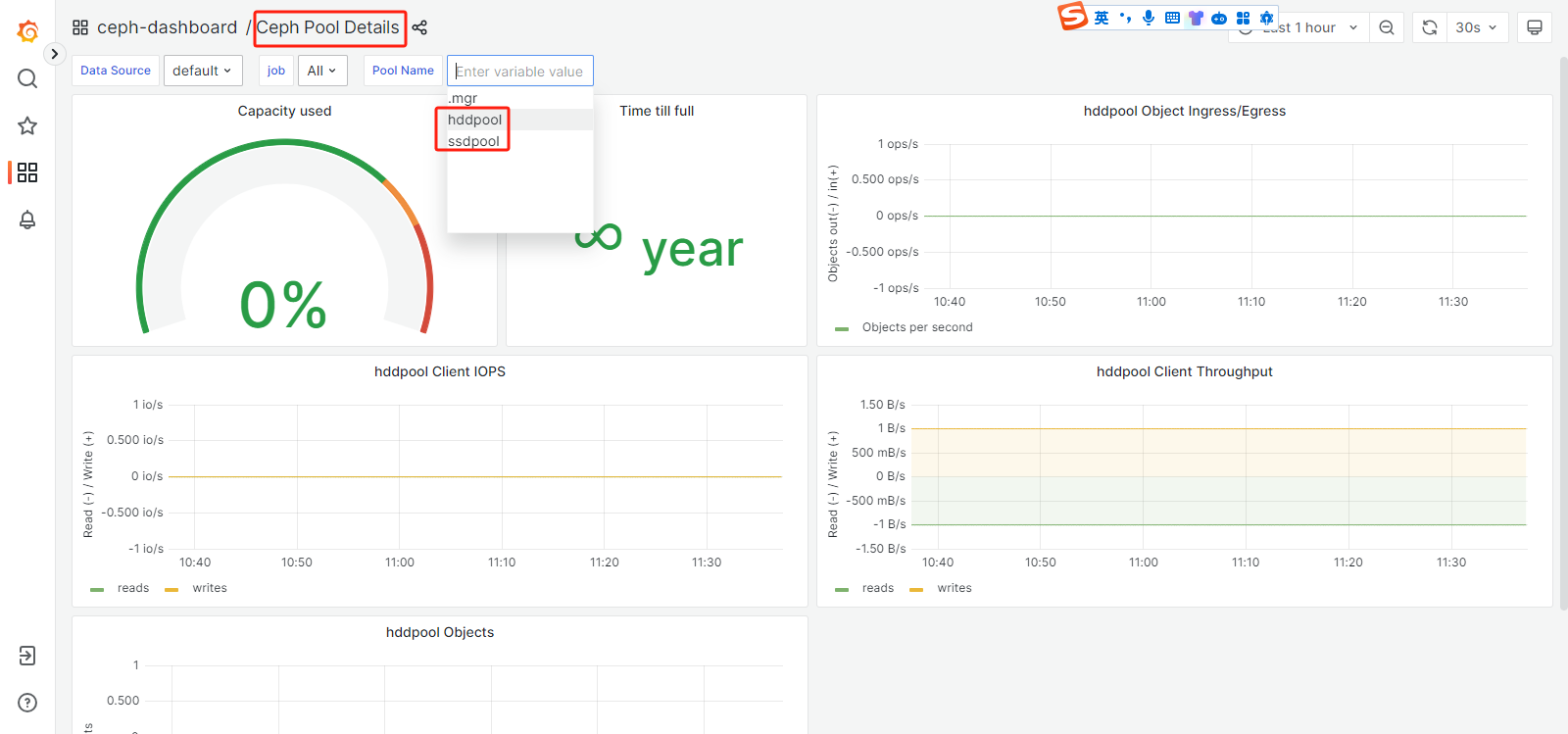

2.7创建池

创建两个存储池一个ssd,另一个hdd

|

[root@ceph01 ~]# ceph osd pool create ssdpool 128 128 |

|

pool 'ssdpool' created |

|

[root@ceph01 ~]# ceph osd pool create hddpool 128 128 |

|

pool 'hddpool' created |

|

[root@ceph01 ~]# ceph osd lspools |

|

1 .mgr 2 ssdpool 3 hddpool |

2.8创建规则以使用该设备

|

[root@ceph01 ~]# ceph osd crush rule create-replicated ssd default host ssd [root@ceph01 ~]# ceph osd crush rule create-replicated hdd default host hdd [root@ceph01 ~]# ceph osd crush rule ls |

|

replicated_rule ssd hdd |

将规则用在存储池上

|

[root@ceph01 ~]# ceph osd pool set ssdpool crush_rule ssd |

|

set pool 2 crush_rule to ssd |

|

[root@ceph01 ~]# ceph osd pool set hddpool crush_rule hdd |

|

set pool 3 crush_rule to hdd |

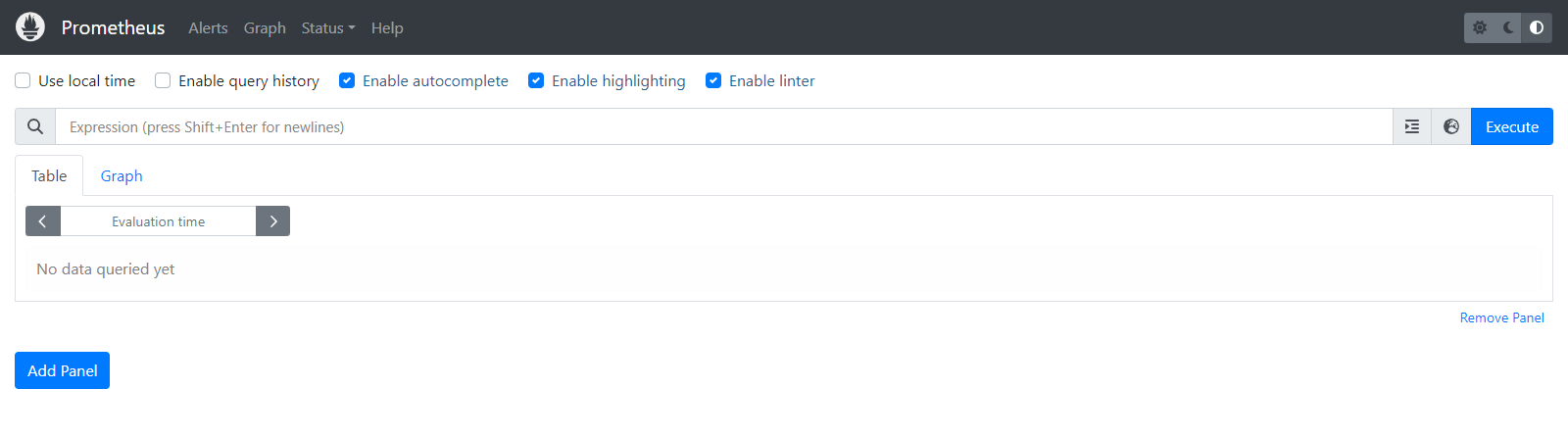

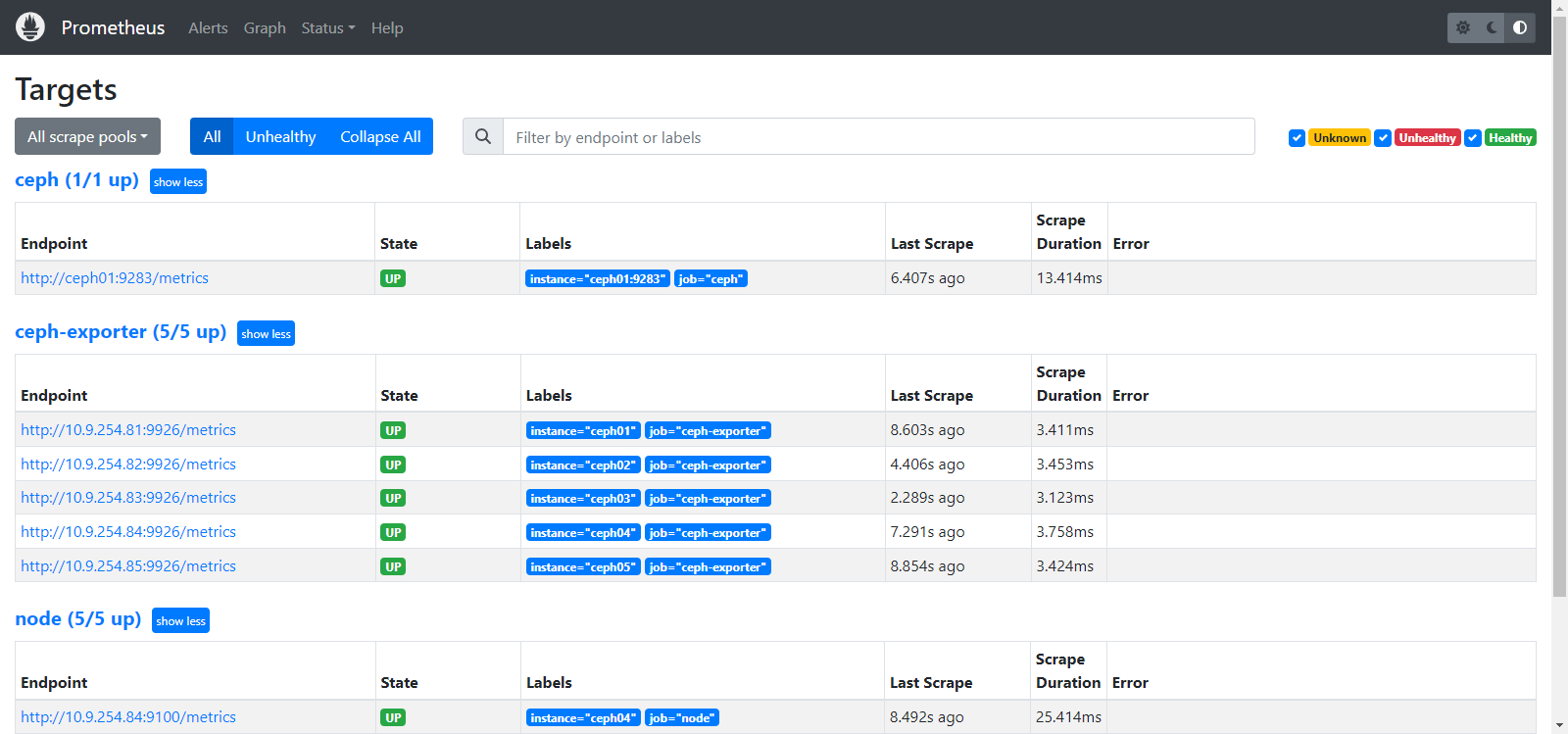

2.9配置监控组件

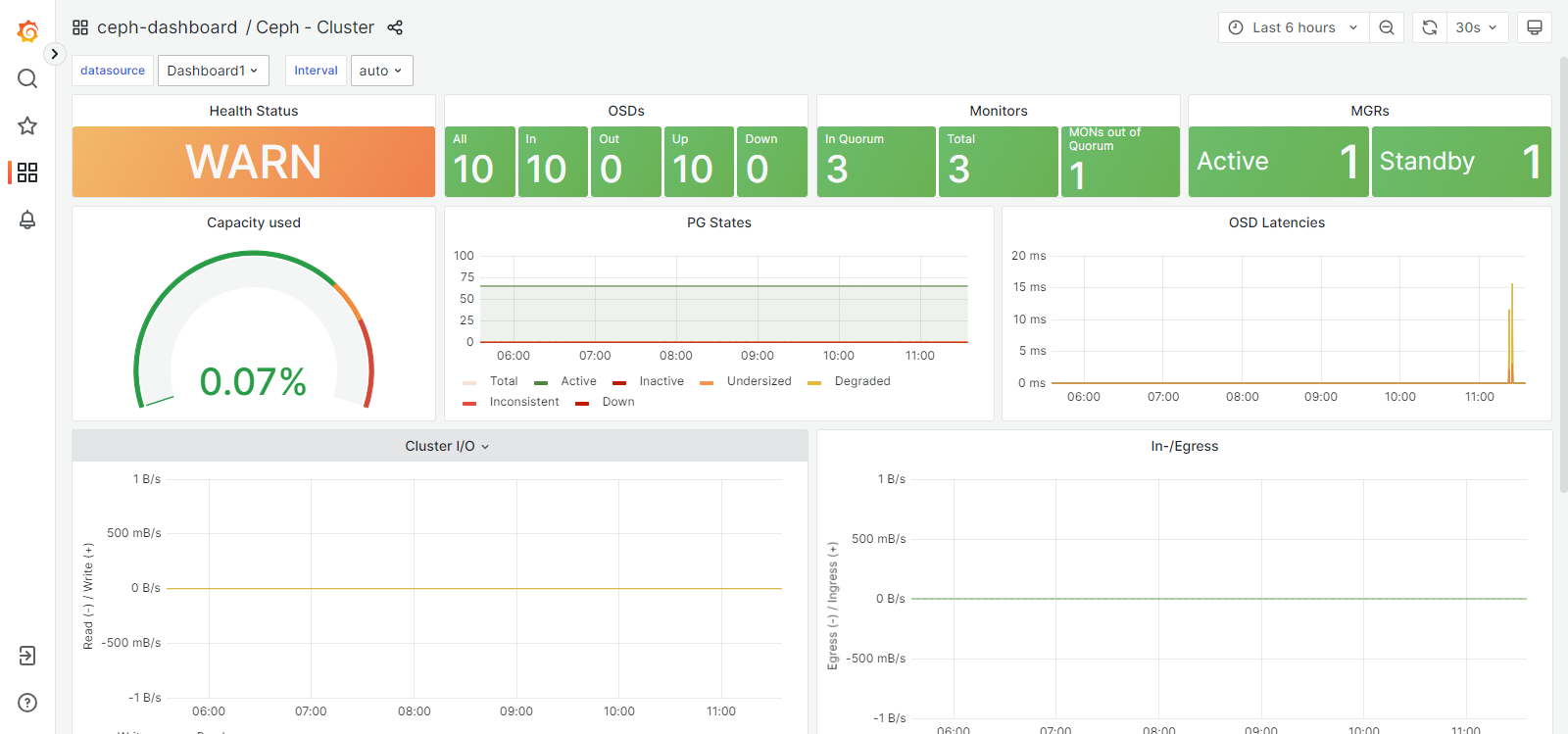

查看监控组件包括alertmanager、grafana、node-exporter、prometheus

|

[root@ceph01 ~]# ceph orch ls |

|

NAME PORTS RUNNING REFRESHED AGE PLACEMENT alertmanager ?:9093,9094 1/1 8m ago 19h count:1 ceph-exporter 5/5 8m ago 19h * crash 5/5 8m ago 19h * grafana ?:3000 1/1 8m ago 19h count:1 mgr 2/2 8m ago 19h count:2 mon 3/3 8m ago 17h count:3;label:mon node-exporter ?:9100 5/5 8m ago 19h * osd 10 8m ago - prometheus ?:9095 1/1 8m ago 19h count:1 |

|

[root@ceph01 ~]# ceph mgr services |

|

{ "dashboard": "https://10.9.254.81:8443/", "prometheus": "http://10.9.254.81:9283/" } |

|

[root@ceph01 ~]# ceph mgr module ls |

|

MODULE balancer on (always on) crash on (always on) devicehealth on (always on) orchestrator on (always on) pg_autoscaler on (always on) progress on (always on) rbd_support on (always on) status on (always on) telemetry on (always on) volumes on (always on) cephadm on dashboard on iostat on nfs on prometheus on restful on alerts - diskprediction_local - influx - insights - k8sevents - localpool - mds_autoscaler - mirroring - osd_perf_query - osd_support - rgw - rook - selftest - snap_schedule - stats - telegraf - test_orchestrator - zabbix - |

调整grafana服务在主机的位置

|

[root@ceph01 ~]# ceph orch ps |

|

NAME HOST PORTS STATUS REFRESHED AGE MEM USE MEM LIM VERSION IMAGE ID CONTAINER ID alertmanager.ceph01 ceph01 *:9093,9094 running (18h) 15s ago 19h 24.8M - 0.25.0 c8568f914cd2 03024acf467e ceph-exporter.ceph01 ceph01 running (19h) 15s ago 19h 17.5M - 18.2.0 2ddfbd2845f4 45b49253fe32 ceph-exporter.ceph02 ceph02 running (18h) 9m ago 18h 31.4M - 18.2.0 2ddfbd2845f4 2546817aab89 ceph-exporter.ceph03 ceph03 running (18h) 9m ago 18h 31.2M - 18.2.0 2ddfbd2845f4 e70e11057e78 ceph-exporter.ceph04 ceph04 running (18h) 9m ago 18h 31.1M - 18.2.0 2ddfbd2845f4 f294ff260174 ceph-exporter.ceph05 ceph05 running (18h) 5m ago 18h 31.2M - 18.2.0 2ddfbd2845f4 e87140fa642c crash.ceph01 ceph01 running (19h) 15s ago 19h 7096k - 18.2.0 2ddfbd2845f4 42b819c1d277 crash.ceph02 ceph02 running (18h) 9m ago 18h 10.1M - 18.2.0 2ddfbd2845f4 3b4fd4cadb3e crash.ceph03 ceph03 running (18h) 9m ago 18h 10.0M - 18.2.0 2ddfbd2845f4 51342f1a2ecd crash.ceph04 ceph04 running (18h) 9m ago 18h 10.1M - 18.2.0 2ddfbd2845f4 4824930bada2 crash.ceph05 ceph05 running (18h) 5m ago 18h 10.1M - 18.2.0 2ddfbd2845f4 cf92ac9f3a67 grafana.ceph01 ceph01 *:3000 running (19h) 15s ago 19h 95.8M - 9.4.7 2c41d148cca3 93485908e28c mgr.ceph01.ywwete ceph01 *:9283,8765,8443 running (19h) 15s ago 19h 621M - 18.2.0 2ddfbd2845f4 2d2752586bdc mgr.ceph04.ykcgel ceph04 *:8443,9283,8765 running (18h) 9m ago 18h 459M - 18.2.0 2ddfbd2845f4 ce7f89b9d81f mon.ceph01 ceph01 running (19h) 15s ago 19h 400M 2048M 18.2.0 2ddfbd2845f4 c45b203f0495 mon.ceph02 ceph02 running (18h) 9m ago 18h 389M 2048M 18.2.0 2ddfbd2845f4 b511e94994b0 mon.ceph03 ceph03 running (18h) 9m ago 18h 389M 2048M 18.2.0 2ddfbd2845f4 344830d1f960 node-exporter.ceph01 ceph01 *:9100 running (19h) 15s ago 19h 29.3M - 1.5.0 0da6a335fe13 a954f05187f7 node-exporter.ceph02 ceph02 *:9100 running (18h) 9m ago 18h 28.2M - 1.5.0 0da6a335fe13 8cd0b6e2eb9f node-exporter.ceph03 ceph03 *:9100 running (18h) 9m ago 18h 30.0M - 1.5.0 0da6a335fe13 5d0023a9d9ac node-exporter.ceph04 ceph04 *:9100 running (18h) 9m ago 18h 29.6M - 1.5.0 0da6a335fe13 ad2a61c7a224 node-exporter.ceph05 ceph05 *:9100 running (18h) 5m ago 18h 30.3M - 1.5.0 0da6a335fe13 dd0f095c4c68 osd.0 ceph01 running (17h) 15s ago 17h 88.2M 4096M 18.2.0 2ddfbd2845f4 601ee7e09d85 osd.1 ceph01 running (17h) 15s ago 17h 90.4M 4096M 18.2.0 2ddfbd2845f4 18a2e23daeac osd.2 ceph02 running (17h) 9m ago 17h 85.4M 1184M 18.2.0 2ddfbd2845f4 2d1dca056476 osd.3 ceph02 running (17h) 9m ago 17h 87.6M 1184M 18.2.0 2ddfbd2845f4 2a8d7f72a03b osd.4 ceph03 running (17h) 9m ago 17h 86.9M 1184M 18.2.0 2ddfbd2845f4 363db89e7f48 osd.5 ceph03 running (17h) 9m ago 17h 89.2M 1184M 18.2.0 2ddfbd2845f4 1c5b076345a0 osd.6 ceph04 running (17h) 9m ago 17h 88.2M 4096M 18.2.0 2ddfbd2845f4 8c11b3ec9f78 osd.7 ceph04 running (17h) 9m ago 17h 85.9M 4096M 18.2.0 2ddfbd2845f4 01646673ed19 osd.8 ceph05 running (17h) 5m ago 17h 84.7M 1696M 18.2.0 2ddfbd2845f4 cfd33d90afc8 osd.9 ceph05 running (17h) 5m ago 17h 86.2M 1696M 18.2.0 2ddfbd2845f4 aad7c56bc534 prometheus.ceph01 ceph01 *:9095 running (18h) 15s ago 19h 148M - 2.43.0 a07b618ecd1d beb3796d1390 |

|

[root@ceph01 ~]# ceph orch apply grafana --placement="ceph02" |

|

Scheduled grafana update... |

|

[root@ceph01 ~]# ceph orch ps |

|

NAME HOST PORTS STATUS REFRESHED AGE MEM USE MEM LIM VERSION IMAGE ID CONTAINER ID alertmanager.ceph01 ceph01 *:9093,9094 running (18h) 30s ago 19h 24.6M - 0.25.0 c8568f914cd2 03024acf467e ceph-exporter.ceph01 ceph01 running (19h) 30s ago 19h 17.5M - 18.2.0 2ddfbd2845f4 45b49253fe32 ceph-exporter.ceph02 ceph02 running (18h) 30s ago 18h 31.4M - 18.2.0 2ddfbd2845f4 2546817aab89 ceph-exporter.ceph03 ceph03 running (18h) 74s ago 18h 31.2M - 18.2.0 2ddfbd2845f4 e70e11057e78 ceph-exporter.ceph04 ceph04 running (18h) 74s ago 18h 31.1M - 18.2.0 2ddfbd2845f4 f294ff260174 ceph-exporter.ceph05 ceph05 running (18h) 8m ago 18h 31.2M - 18.2.0 2ddfbd2845f4 e87140fa642c crash.ceph01 ceph01 running (19h) 30s ago 19h 7096k - 18.2.0 2ddfbd2845f4 42b819c1d277 crash.ceph02 ceph02 running (18h) 30s ago 18h 10.1M - 18.2.0 2ddfbd2845f4 3b4fd4cadb3e crash.ceph03 ceph03 running (18h) 74s ago 18h 10.0M - 18.2.0 2ddfbd2845f4 51342f1a2ecd crash.ceph04 ceph04 running (18h) 74s ago 18h 10.1M - 18.2.0 2ddfbd2845f4 4824930bada2 crash.ceph05 ceph05 running (18h) 8m ago 18h 10.1M - 18.2.0 2ddfbd2845f4 cf92ac9f3a67 grafana.ceph02 ceph02 *:3000 running (43s) 30s ago 43s 28.7M - 9.4.7 2c41d148cca3 8805bbcf7f67 mgr.ceph01.ywwete ceph01 *:9283,8765,8443 running (19h) 30s ago 19h 621M - 18.2.0 2ddfbd2845f4 2d2752586bdc mgr.ceph04.ykcgel ceph04 *:8443,9283,8765 running (18h) 74s ago 18h 459M - 18.2.0 2ddfbd2845f4 ce7f89b9d81f mon.ceph01 ceph01 running (19h) 30s ago 19h 400M 2048M 18.2.0 2ddfbd2845f4 c45b203f0495 mon.ceph02 ceph02 running (18h) 30s ago 18h 389M 2048M 18.2.0 2ddfbd2845f4 b511e94994b0 mon.ceph03 ceph03 running (18h) 74s ago 18h 391M 2048M 18.2.0 2ddfbd2845f4 344830d1f960 node-exporter.ceph01 ceph01 *:9100 running (19h) 30s ago 19h 29.4M - 1.5.0 0da6a335fe13 a954f05187f7 node-exporter.ceph02 ceph02 *:9100 running (18h) 30s ago 18h 27.7M - 1.5.0 0da6a335fe13 8cd0b6e2eb9f node-exporter.ceph03 ceph03 *:9100 running (18h) 74s ago 18h 30.1M - 1.5.0 0da6a335fe13 5d0023a9d9ac node-exporter.ceph04 ceph04 *:9100 running (18h) 74s ago 18h 30.0M - 1.5.0 0da6a335fe13 ad2a61c7a224 node-exporter.ceph05 ceph05 *:9100 running (18h) 8m ago 18h 30.3M - 1.5.0 0da6a335fe13 dd0f095c4c68 osd.0 ceph01 running (18h) 30s ago 18h 88.2M 4096M 18.2.0 2ddfbd2845f4 601ee7e09d85 osd.1 ceph01 running (18h) 30s ago 18h 90.5M 4096M 18.2.0 2ddfbd2845f4 18a2e23daeac osd.2 ceph02 running (17h) 30s ago 17h 85.4M 1184M 18.2.0 2ddfbd2845f4 2d1dca056476 osd.3 ceph02 running (17h) 30s ago 17h 87.6M 1184M 18.2.0 2ddfbd2845f4 2a8d7f72a03b osd.4 ceph03 running (17h) 74s ago 17h 86.9M 1184M 18.2.0 2ddfbd2845f4 363db89e7f48 osd.5 ceph03 running (17h) 74s ago 17h 89.2M 1184M 18.2.0 2ddfbd2845f4 1c5b076345a0 osd.6 ceph04 running (17h) 74s ago 17h 87.8M 4096M 18.2.0 2ddfbd2845f4 8c11b3ec9f78 osd.7 ceph04 running (17h) 74s ago 17h 85.5M 4096M 18.2.0 2ddfbd2845f4 01646673ed19 osd.8 ceph05 running (17h) 8m ago 17h 84.7M 1696M 18.2.0 2ddfbd2845f4 cfd33d90afc8 osd.9 ceph05 running (17h) 8m ago 17h 86.2M 1696M 18.2.0 2ddfbd2845f4 aad7c56bc534 prometheus.ceph01 ceph01 *:9095 running (18h) 30s ago 19h 144M - 2.43.0 a07b618ecd1d beb3796d1390 |

2.10配置MDS

MDS守护进程用于Cephfs(文件系统),MDS采用的是主备模式,即cephfs仅使用1个活跃的MDS守护进程,配置MDS服务有多种方法,此处介绍2种,大同小异。

先创建CephFS,然后使用placement部署MDS

先创建CephFS池

|

[root@ceph01 ~]# ceph osd pool create cephfs_data 64 64 |

|

pool 'cephfs_data' created |

|

[root@ceph01 ~]# ceph osd pool create cephfs_metadata 64 64 |

|

pool 'cephfs_metadata' created |

|

[root@ceph01 ~]# ceph osd lspools |

|

1 .mgr 2 ssdpool 3 hddpool 4 cephfs_data 5 cephfs_metadata |

为数据池和元数据池创建文件系统

|

[root@ceph01 ~]# ceph fs new cephfs cephfs_metadata cephfs_data |

|

new fs with metadata pool 5 and data pool 4 |

使用Ceph orch apply命令部署MDS服务

|

[root@ceph01 ~]# ceph orch apply mds cephfs --placement="3 ceph01 ceph02 ceph03" |

|

Scheduled mds.cephfs update... |

|

[root@ceph01 ~]# ceph orch ls |

|

NAME PORTS RUNNING REFRESHED AGE PLACEMENT alertmanager ?:9093,9094 1/1 37s ago 20h count:1 ceph-exporter 5/5 6m ago 20h * crash 5/5 6m ago 20h * grafana ?:3000 1/1 37s ago 16m ceph02 mds.cephfs 3/3 38s ago 60s ceph01;ceph02;ceph03;count:3 mgr 2/2 6m ago 20h count:2 mon 3/3 38s ago 18h count:3;label:mon node-exporter ?:9100 5/5 6m ago 20h * osd 10 6m ago - prometheus ?:9095 1/1 37s ago 20h count:1 |

|

[root@ceph01 ~]# ceph orch ps |

|

NAME HOST PORTS STATUS REFRESHED AGE MEM USE MEM LIM VERSION IMAGE ID CONTAINER ID alertmanager.ceph01 ceph01 *:9093,9094 running (19h) 72s ago 20h 24.6M - 0.25.0 c8568f914cd2 03024acf467e ceph-exporter.ceph01 ceph01 running (20h) 72s ago 20h 17.5M - 18.2.0 2ddfbd2845f4 45b49253fe32 ceph-exporter.ceph02 ceph02 running (19h) 72s ago 19h 31.4M - 18.2.0 2ddfbd2845f4 2546817aab89 ceph-exporter.ceph03 ceph03 running (19h) 72s ago 19h 31.3M - 18.2.0 2ddfbd2845f4 e70e11057e78 ceph-exporter.ceph04 ceph04 running (19h) 7m ago 19h 31.1M - 18.2.0 2ddfbd2845f4 f294ff260174 ceph-exporter.ceph05 ceph05 running (19h) 4m ago 19h 31.2M - 18.2.0 2ddfbd2845f4 e87140fa642c crash.ceph01 ceph01 running (20h) 72s ago 20h 7096k - 18.2.0 2ddfbd2845f4 42b819c1d277 crash.ceph02 ceph02 running (19h) 72s ago 19h 10.1M - 18.2.0 2ddfbd2845f4 3b4fd4cadb3e crash.ceph03 ceph03 running (19h) 72s ago 19h 10.0M - 18.2.0 2ddfbd2845f4 51342f1a2ecd crash.ceph04 ceph04 running (19h) 7m ago 19h 10.1M - 18.2.0 2ddfbd2845f4 4824930bada2 crash.ceph05 ceph05 running (19h) 4m ago 19h 10.1M - 18.2.0 2ddfbd2845f4 cf92ac9f3a67 grafana.ceph02 ceph02 *:3000 running (17m) 72s ago 17m 160M - 9.4.7 2c41d148cca3 8805bbcf7f67 mds.cephfs.ceph01.aibnpt ceph01 running (82s) 72s ago 82s 15.5M - 18.2.0 2ddfbd2845f4 47174fba9bc0 mds.cephfs.ceph02.kzinfm ceph02 running (79s) 72s ago 79s 15.1M - 18.2.0 2ddfbd2845f4 e49ff6578da6 mds.cephfs.ceph03.pdkglx ceph03 running (83s) 72s ago 84s 15.7M - 18.2.0 2ddfbd2845f4 39761285bc18 mgr.ceph01.ywwete ceph01 *:9283,8765,8443 running (20h) 72s ago 20h 622M - 18.2.0 2ddfbd2845f4 2d2752586bdc mgr.ceph04.ykcgel ceph04 *:8443,9283,8765 running (19h) 7m ago 19h 459M - 18.2.0 2ddfbd2845f4 ce7f89b9d81f mon.ceph01 ceph01 running (20h) 72s ago 20h 401M 2048M 18.2.0 2ddfbd2845f4 c45b203f0495 mon.ceph02 ceph02 running (19h) 72s ago 19h 389M 2048M 18.2.0 2ddfbd2845f4 b511e94994b0 mon.ceph03 ceph03 running (19h) 72s ago 19h 391M 2048M 18.2.0 2ddfbd2845f4 344830d1f960 node-exporter.ceph01 ceph01 *:9100 running (20h) 72s ago 20h 29.0M - 1.5.0 0da6a335fe13 a954f05187f7 node-exporter.ceph02 ceph02 *:9100 running (19h) 72s ago 19h 28.2M - 1.5.0 0da6a335fe13 8cd0b6e2eb9f node-exporter.ceph03 ceph03 *:9100 running (19h) 72s ago 19h 30.5M - 1.5.0 0da6a335fe13 5d0023a9d9ac node-exporter.ceph04 ceph04 *:9100 running (19h) 7m ago 19h 29.8M - 1.5.0 0da6a335fe13 ad2a61c7a224 node-exporter.ceph05 ceph05 *:9100 running (19h) 4m ago 19h 30.7M - 1.5.0 0da6a335fe13 dd0f095c4c68 osd.0 ceph01 running (18h) 72s ago 18h 88.3M 4096M 18.2.0 2ddfbd2845f4 601ee7e09d85 osd.1 ceph01 running (18h) 72s ago 18h 91.0M 4096M 18.2.0 2ddfbd2845f4 18a2e23daeac osd.2 ceph02 running (18h) 72s ago 18h 86.2M 1184M 18.2.0 2ddfbd2845f4 2d1dca056476 osd.3 ceph02 running (18h) 72s ago 18h 87.4M 1184M 18.2.0 2ddfbd2845f4 2a8d7f72a03b osd.4 ceph03 running (18h) 72s ago 18h 86.8M 1184M 18.2.0 2ddfbd2845f4 363db89e7f48 osd.5 ceph03 running (18h) 72s ago 18h 90.1M 1184M 18.2.0 2ddfbd2845f4 1c5b076345a0 osd.6 ceph04 running (18h) 7m ago 18h 88.3M 4096M 18.2.0 2ddfbd2845f4 8c11b3ec9f78 osd.7 ceph04 running (18h) 7m ago 18h 86.3M 4096M 18.2.0 2ddfbd2845f4 01646673ed19 osd.8 ceph05 running (18h) 4m ago 18h 85.9M 1696M 18.2.0 2ddfbd2845f4 cfd33d90afc8 osd.9 ceph05 running (18h) 4m ago 18h 86.5M 1696M 18.2.0 2ddfbd2845f4 aad7c56bc534 prometheus.ceph01 ceph01 *:9095 running (19h) 72s ago 20h 140M - 2.43.0 a07b618ecd1d beb3796d1390 |

|

[root@ceph01 ~]# ceph fs ls |

|

name: cephfs, metadata pool: cephfs_metadata, data pools: [cephfs_data ] |

|

[root@ceph01 ~]# ceph fs status |

|

cephfs - 0 clients ====== RANK STATE MDS ACTIVITY DNS INOS DIRS CAPS 0 active cephfs.ceph03.pdkglx Reqs: 0 /s 10 13 12 0 POOL TYPE USED AVAIL cephfs_metadata metadata 64.0k 474G cephfs_data data 0 474G STANDBY MDS cephfs.ceph01.aibnpt cephfs.ceph02.kzinfm MDS version: ceph version 18.2.0 (5dd24139a1eada541a3bc16b6941c5dde975e26d) reef (stable) |

|

root@ceph01 ~]# ceph orch ps --daemon_type=mds |

|

NAME HOST PORTS STATUS REFRESHED AGE MEM USE MEM LIM VERSION IMAGE ID CONTAINER ID mds.cephfs.ceph01.aibnpt ceph01 running (70m) 6m ago 70m 26.5M - 18.2.0 2ddfbd2845f4 47174fba9bc0 mds.cephfs.ceph02.kzinfm ceph02 running (70m) 6m ago 70m 26.6M - 18.2.0 2ddfbd2845f4 e49ff6578da6 mds.cephfs.ceph03.pdkglx ceph03 running (71m) 6m ago 71m 26.4M - 18.2.0 2ddfbd2845f4 39761285bc18 |

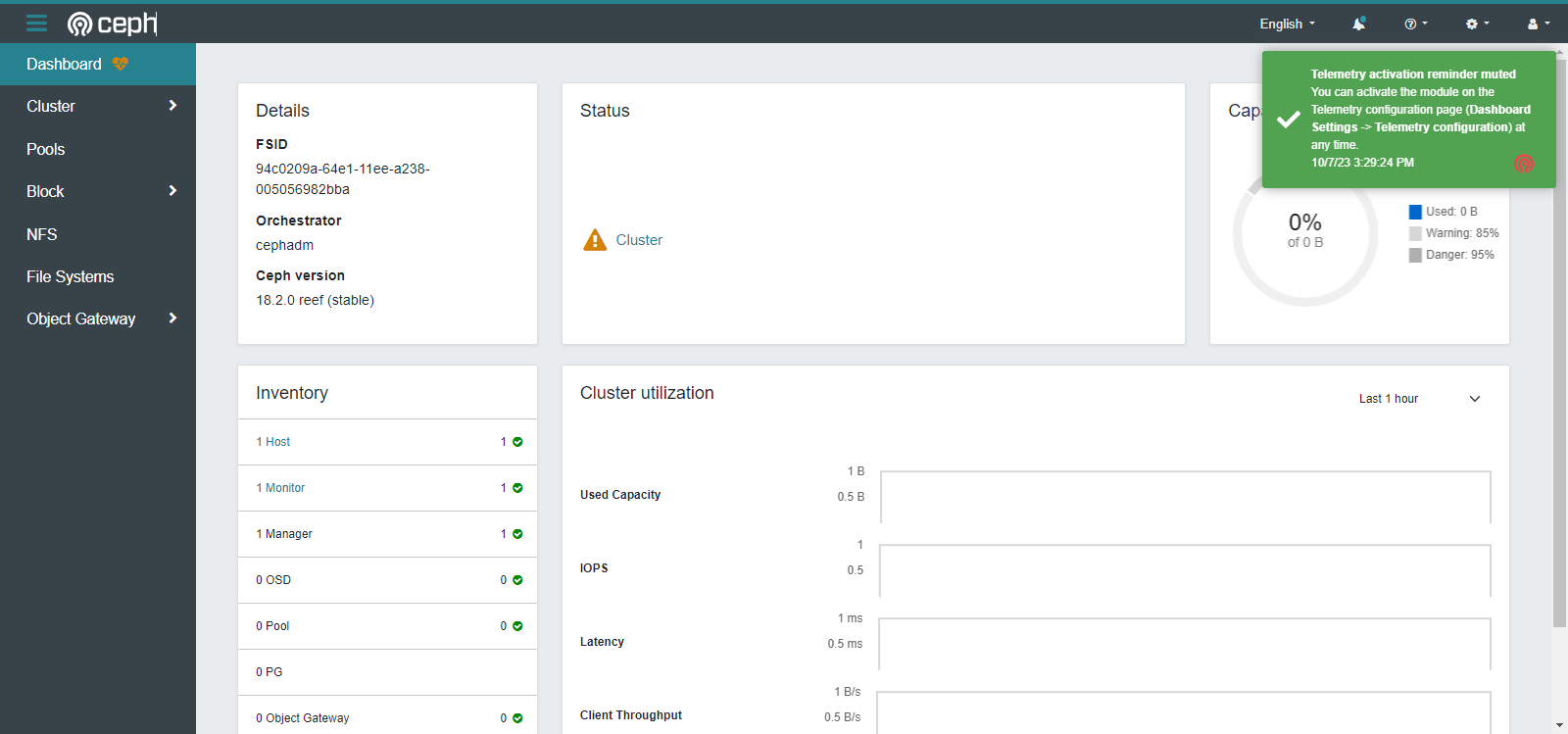

2.11Dashboard的使用

查看dashboard地址

|

[root@ceph01 ~]# ceph mgr services |

|

{ "dashboard": "https://10.9.254.81:8443/", "prometheus": "http://10.9.254.81:9283/" } |

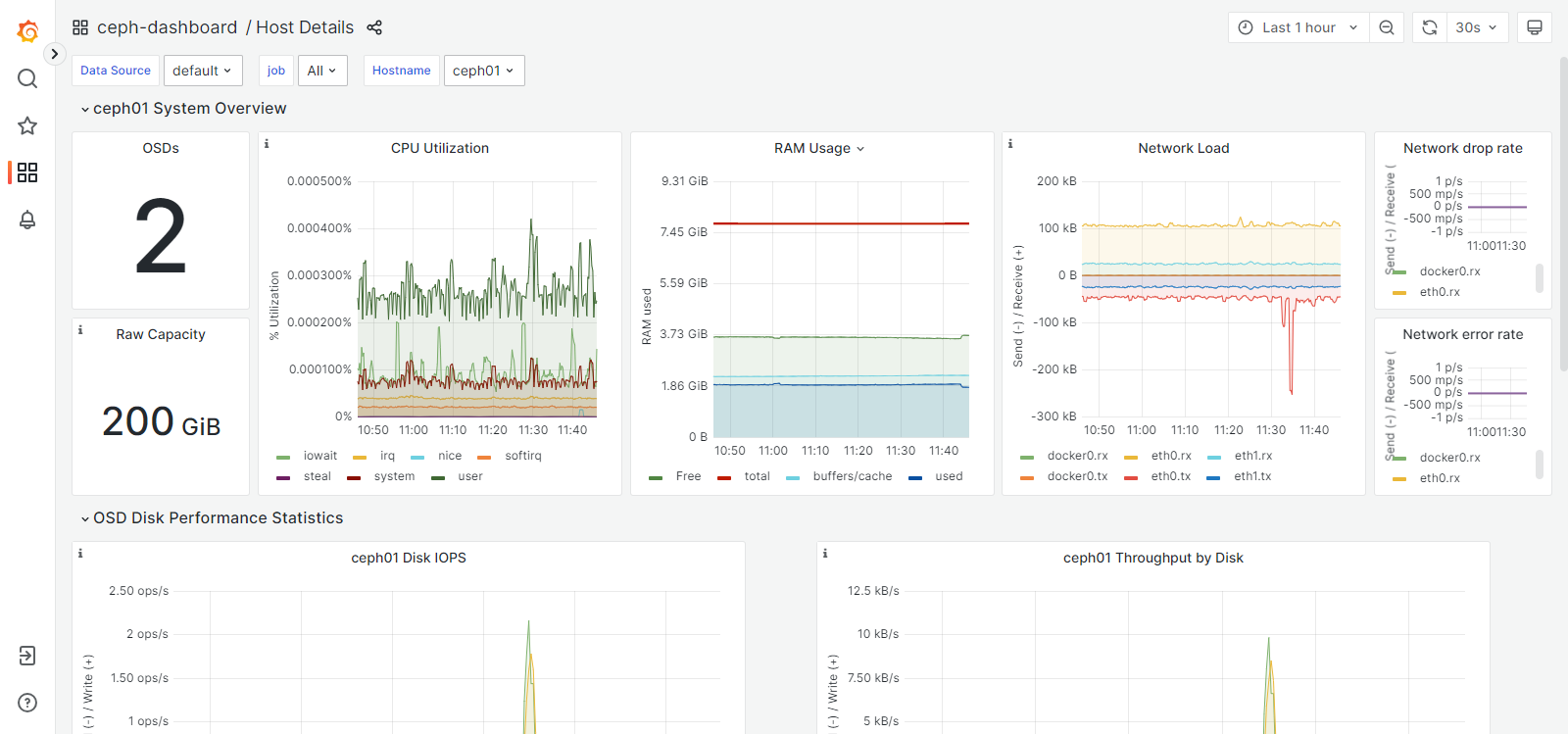

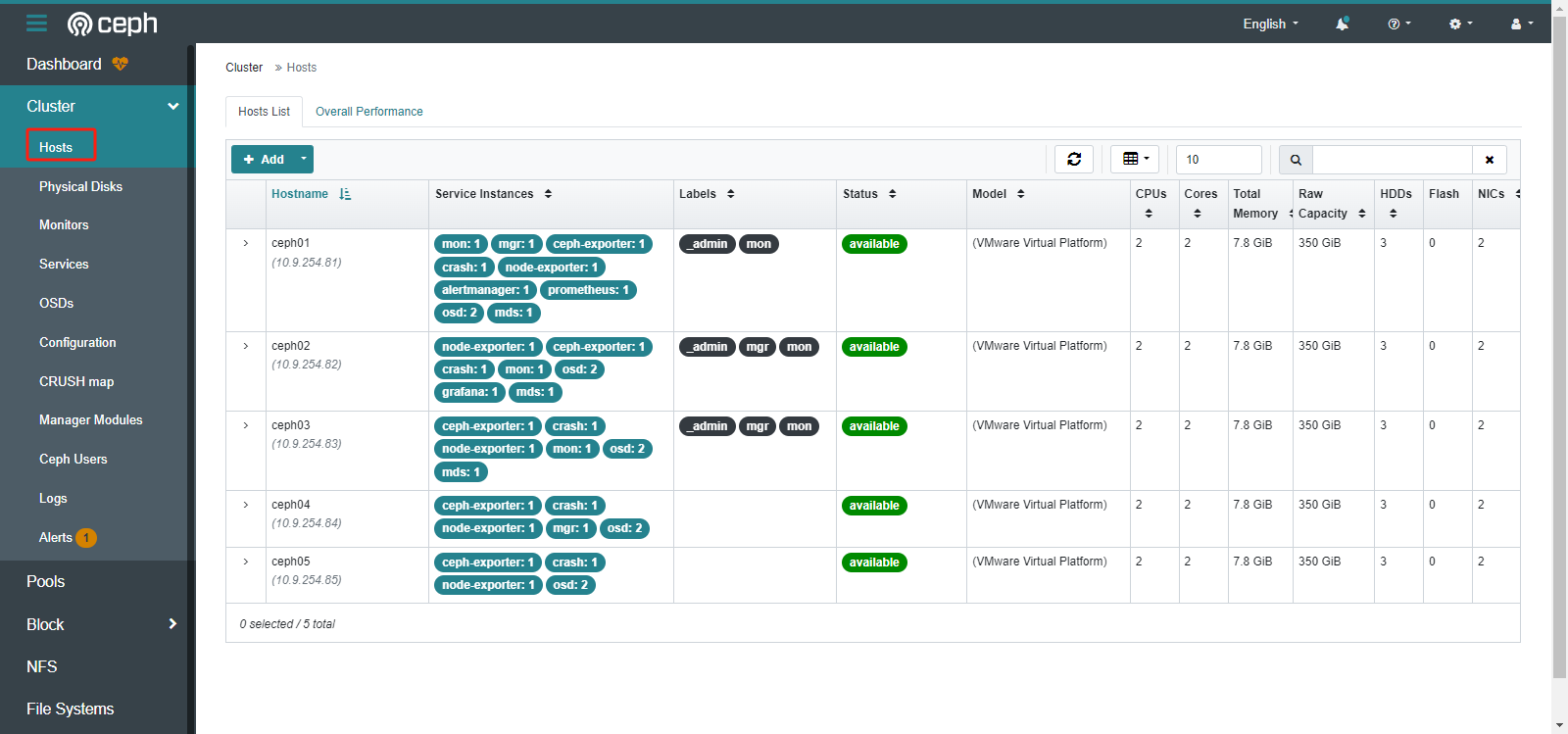

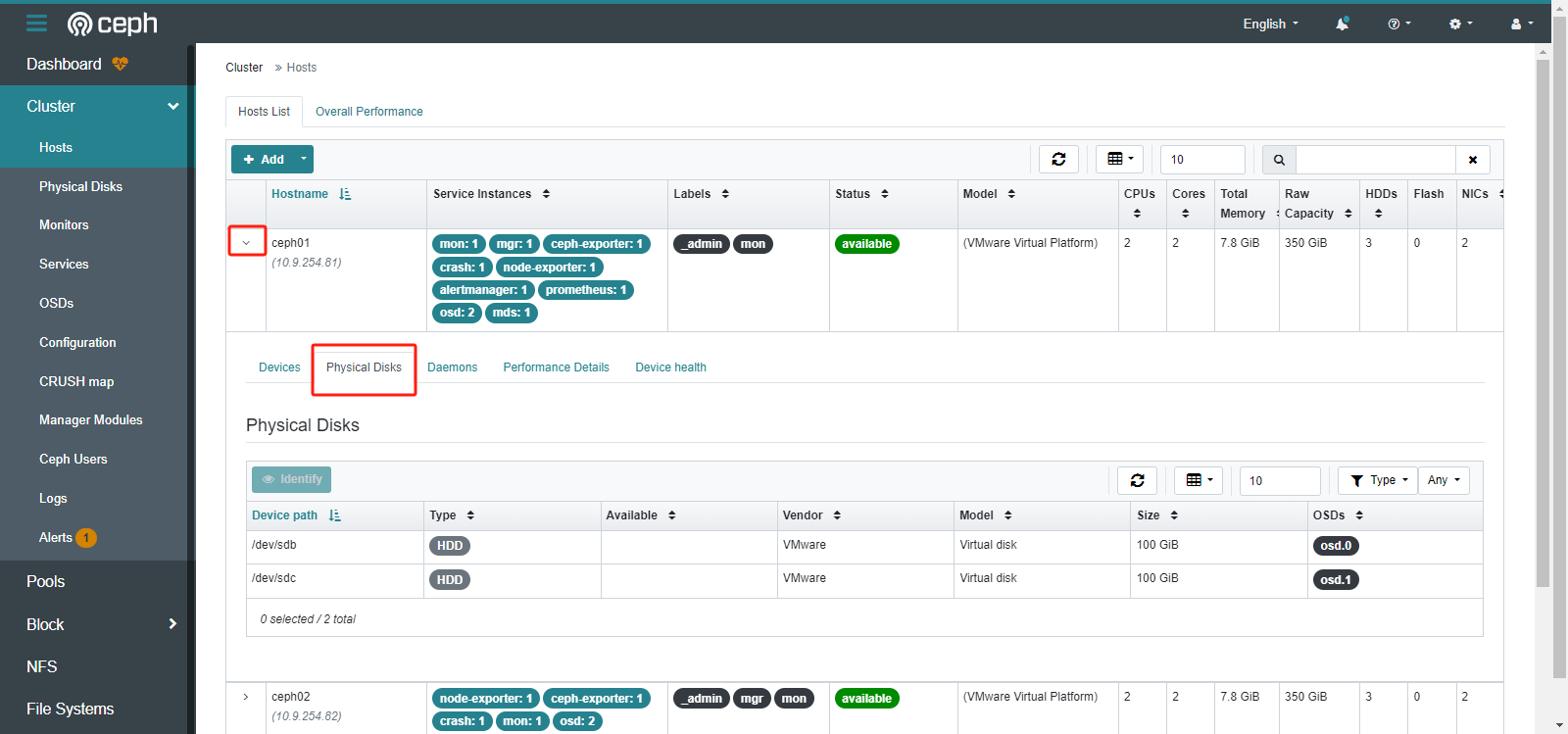

主机信息

3.管理CEPH集群

3.1管理ceph节点

3.1.1添加节点

参照第一章节完成基础环境配置

|

[root@ceph01 ~]# ceph orch host add ceph06 10.9.254.86 |

删除节点后添加

|

[root@ceph01 ~]# ceph orch host ls |

|

HOST ADDR LABELS STATUS ceph01 10.9.254.81 _admin,mon ceph02 10.9.254.82 mon,mgr,_admin ceph03 10.9.254.83 mon,_admin,mgr ceph04 10.9.254.84 4 hosts in cluster |

|

[root@ceph01 ~]# ceph orch host add ceph05 10.9.254.85 |

|

Added host 'ceph05' with addr '10.9.254.85' |

|

[root@ceph01 ~]# ceph orch daemon add osd ceph05:/dev/sdb |

|

Created osd(s) 8 on host 'ceph05' |

|

[root@ceph01 ~]# ceph orch daemon add osd ceph05:/dev/sdc |

|

Created osd(s) 9 on host 'ceph05' |

|

[root@ceph01 ~]# ceph -s |

|

cluster: id: 4b4cc258-6679-11ee-ad4a-005056982bba health: HEALTH_OK services: mon: 3 daemons, quorum ceph01,ceph03,ceph02 (age 23h) mgr: ceph01.ywwete(active, since 46h), standbys: ceph04.ykcgel mds: 1/1 daemons up, 2 standby osd: 10 osds: 10 up (since 92s), 10 in (since 44h) data: volumes: 1/1 healthy pools: 5 pools, 193 pgs objects: 24 objects, 451 KiB usage: 753 MiB used, 999 GiB / 1000 GiB avail pgs: 193 active+clean |

|

[root@ceph01 ~]# ceph orch ps ceph05 |

|

NAME HOST PORTS STATUS REFRESHED AGE MEM USE MEM LIM VERSION IMAGE ID CONTAINER ID ceph-exporter.ceph05 ceph05 running (5m) 2m ago 5m 7543k - 18.2.0 2ddfbd2845f4 18f287bed785 crash.ceph05 ceph05 running (5m) 2m ago 5m 7088k - 18.2.0 2ddfbd2845f4 a99163decd6b node-exporter.ceph05 ceph05 *:9100 running (5m) 2m ago 5m 8680k - 1.5.0 0da6a335fe13 e04a809f0376 osd.8 ceph05 running (3m) 2m ago 3m 71.9M 1696M 18.2.0 2ddfbd2845f4 7a64e10bfcb0 osd.9 ceph05 running (2m) 2m ago 2m 13.1M 1696M 18.2.0 2ddfbd2845f4 c6a2dfa7cb73 |

|

[root@ceph05 ~]# docker ps -a |

|

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES c6a2dfa7cb73 quay.io/ceph/ceph "/usr/bin/ceph-osd -…" 3 minutes ago Up 3 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-osd-9 7a64e10bfcb0 quay.io/ceph/ceph "/usr/bin/ceph-osd -…" 3 minutes ago Up 3 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-osd-8 e04a809f0376 quay.io/prometheus/node-exporter:v1.5.0 "/bin/node_exporter …" 6 minutes ago Up 6 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-node-exporter-ceph05 a99163decd6b quay.io/ceph/ceph "/usr/bin/ceph-crash…" 6 minutes ago Up 6 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-crash-ceph05 18f287bed785 quay.io/ceph/ceph "/usr/bin/ceph-expor…" 6 minutes ago Up 6 minutes ceph-4b4cc258-6679-11ee-ad4a-005056982bba-ceph-exporter-ceph05 |

|

[root@ceph01 ~]# ceph osd tree |

|

ID CLASS WEIGHT TYPE NAME STATUS REWEIGHT PRI-AFF -1 0.97687 root default -3 0.19537 host ceph01 1 hdd 0.09769 osd.1 up 1.00000 1.00000 0 ssd 0.09769 osd.0 up 1.00000 1.00000 -5 0.19537 host ceph02 3 hdd 0.09769 osd.3 up 1.00000 1.00000 2 ssd 0.09769 osd.2 up 1.00000 1.00000 -7 0.19537 host ceph03 5 hdd 0.09769 osd.5 up 1.00000 1.00000 4 ssd 0.09769 osd.4 up 1.00000 1.00000 -9 0.19537 host ceph04 7 hdd 0.09769 osd.7 up 1.00000 1.00000 6 ssd 0.09769 osd.6 up 1.00000 1.00000 -11 0.19537 host ceph05 9 hdd 0.09769 osd.9 up 1.00000 1.00000 8 ssd 0.09769 osd.8 up 1.00000 1.00000 |

使用配置文件进行添加

|

[root@ceph01 ~]# vim hosts.yaml |

|

service_type: host addr: ceph05 hostname: ceph05 labels: - osd |

|

[root@ceph01 ~]# ceph orch apply -i hosts.yaml |

3.1.2节点进入维护模式

将主机置于维护模式,可加上--force,强制操作

|

[root@ceph01 ~]# ceph orch host maintenance enter ceph05 |

|

Daemons for Ceph cluster 4b4cc258-6679-11ee-ad4a-005056982bba stopped on host ceph05. Host ceph05 moved to maintenance mode |

查看主机上服务状态,均为stopped

|

[root@ceph01 ~]# ceph orch ps ceph05 |

|

查看NAME HOST PORTS STATUS REFRESHED AGE MEM USE MEM LIM VERSION IMAGE ID CONTAINER ID ceph-exporter.ceph05 ceph05 stopped 8m ago 20h 31.2M - 18.2.0 2ddfbd2845f4 e87140fa642c crash.ceph05 ceph05 stopped 8m ago 20h 10.1M - 18.2.0 2ddfbd2845f4 cf92ac9f3a67 node-exporter.ceph05 ceph05 *:9100 stopped 8m ago 20h 30.5M - 1.5.0 0da6a335fe13 dd0f095c4c68 osd.8 ceph05 stopped 8m ago 20h 85.2M 1696M 18.2.0 2ddfbd2845f4 cfd33d90afc8 osd.9 ceph05 stopped 8m ago 19h 86.9M 1696M 18.2.0 2ddfbd2845f4 aad7c56bc534 |

查看主机状态,发现主机上添加了maintenance标签

|

[root@ceph01 ~]# ceph orch host ls |

|