Building and Optimizing a RAG Knowledge Base in Practice: From Beginner to Expert, Building an Efficient Intelligent Q&A System


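1. Introduction

1.1 What RAG Is and Where It Is Used

Retrieval-Augmented Generation (RAG) is an architecture that combines a large language model with retrieval from an external knowledge source. A purely parametric language model knows only what was in its training data, so it has a knowledge cutoff, is prone to hallucination, and often lacks specialized domain knowledge. RAG adds an external knowledge base that the model can query at request time, which lets it produce answers that are more accurate and more current.

The core value of RAG shows up in the following areas:

- Knowledge freshness: an organization can give the AI up-to-date information without retraining the model

- Reduced hallucination: answers are grounded in real documents that were retrieved

- Safe use of private data: the data stays in a knowledge base you control instead of being uploaded to a third-party platform for training (with a hosted LLM, the retrieved passages are still sent in the prompt)

- Cost: RAG is cheaper to implement than retraining or fine-tuning a large model

Typical application scenarios:

- Question answering over an internal enterprise knowledge base

- Intelligent customer service

- Document retrieval for legal, financial, and medical content

- Intelligent tutoring for education and training

1.2 RAG Workflow Overview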

RAG system workflow

  [Load documents] -> [Chunk documents] -> [Embed] -> [Store vectors]      (index-building stage)
                                  |
                                  v
                          [Vector database]
                                  ^
                                  |
  [User query] -> [Embed query] -> [Similarity search] -> [Rank results] -> [LLM generation]      (query-time stage)

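1.3 Why RAG Is Easy to Get Wrong

Common challenges in RAG development:

- Document processing complexity: PDF, Word, Markdown, and other formats are hard to parse

- Choice of chunking strategy: chunk size directly affects retrieval quality

- Embedding quality: semantically similar texts can end up far apart in vector space

- Coordination between retrieval and generation: the context can be insufficient, or it can be truncated

2. Common Document Processing Problems

2.1 Document Loading Failures

Different document formats need different parsing strategies. The first step of a RAG system is loading documents of every kind, including PDF, Word, Markdown, and plain text. In the Java ecosystem, PDFBox is the mainstream library for PDF and can extract the content of text-based PDFs. Scanned PDFs contain only page images, so they need OCR (for example Tesseract) to recognize the text.

The following code implements a unified document loader that picks a handler based on the file extension: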

// PDF document loading.
// Uses the PDFBox 2.x API; PDFBox 3.x replaces PDDocument.load with Loader.loadPDF.
import org.apache.pdfbox.pdmodel.PDDocument;
import org.apache.pdfbox.text.PDFTextStripper;

import java.io.File;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

public class PDFLoader {

    public String load(String filePath) throws IOException {
        try {
            String text = loadTextPDF(filePath);
            // A scanned PDF usually parses without error but yields no text,
            // so an empty result also triggers the OCR fallback.
            return text.isEmpty() ? loadScannedPDF(filePath) : text;
        } catch (IOException e) {
            return loadScannedPDF(filePath);
        }
    }

    private String loadTextPDF(String filePath) throws IOException {
        List<String> textParts = new ArrayList<>();
        try (PDDocument document = PDDocument.load(new File(filePath))) {
            PDFTextStripper stripper = new PDFTextStripper();
            int numPages = document.getNumberOfPages();
            // Extract page by page (PDFBox page numbers are 1-based) and skip empty pages.
            for (int i = 1; i <= numPages; i++) {
                stripper.setStartPage(i);
                stripper.setEndPage(i);
                String text = stripper.getText(document);
                if (text != null && !text.trim().isEmpty()) {
                    textParts.add(text);
                }
            }
        }
        return String.join("\n\n", textParts);
    }

    private String loadScannedPDF(String filePath) {
        // Scanned PDFs need an OCR library such as Tesseract.
        // Placeholder: returns an empty string; a real implementation would call an OCR service.
        return "";
    }
}

// Unified multi-format loader (each public class belongs in its own .java file)
public class MultiFormatLoader {

    public String load(String filePath) throws IOException {
        String extension = getFileExtension(filePath);
        switch (extension.toLowerCase()) {
            case "pdf":
                return new PDFLoader().load(filePath);
            case "txt":
                return loadTextFile(filePath);
            case "md":
                return loadMarkdownFile(filePath);
            case "docx":
                return loadWordFile(filePath);
            default:
                throw new IllegalArgumentException("Unsupported file format: " + extension);
        }
    }

    private String loadTextFile(String filePath) throws IOException {
        return new String(Files.readAllBytes(Paths.get(filePath)), StandardCharsets.UTF_8);
    }

    private String loadMarkdownFile(String filePath) throws IOException {
        // Markdown is read as plain text; the markup is kept as-is.
        return loadTextFile(filePath);
    }

    private String loadWordFile(String filePath) {
        // Word documents require the Apache POI library.
        // return new WordDocumentLoader().load(filePath);
        return "";
    }

    private String getFileExtension(String filePath) {
        int lastDot = filePath.lastIndexOf('.');
        return lastDot > 0 ? filePath.substring(lastDot + 1) : "";
    }
}
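One way to decide when a PDF should go through OCR is to look at how much text each page actually yielded. The sketch below is an illustration only: the class name `OcrFallbackHeuristic`, the cutoff of 20 characters per page, and the "more than half of the pages" rule are all assumptions, not values from the article or from PDFBox.

```java
import java.util.List;

/** Hypothetical helper: guesses whether a PDF is scanned from its per-page extracted text. */
final class OcrFallbackHeuristic {
    /** Assumed cutoff: fewer non-whitespace characters than this marks a page as "sparse". */
    static final int MIN_CHARS_PER_PAGE = 20;

    static boolean needsOcr(List<String> pageTexts) {
        if (pageTexts.isEmpty()) {
            return true; // nothing was extracted at all
        }
        long sparsePages = pageTexts.stream()
                .filter(t -> countNonWhitespace(t) < MIN_CHARS_PER_PAGE)
                .count();
        // Fall back to OCR when more than half of the pages are nearly empty.
        return sparsePages * 2 > pageTexts.size();
    }

    private static long countNonWhitespace(String text) {
        return text == null ? 0 : text.chars().filter(c -> !Character.isWhitespace(c)).count();
    }
}
```

The per-page strings would come from a text extractor such as the page loop shown above; the cutoff should be tuned on real documents.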

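2.2 Document Chunking Strategies

The chunking strategy directly affects retrieval quality. Good chunking keeps related content semantically intact: chunks that are too small lose context, and chunks that are too large pull in too much irrelevant information. This section covers three common strategies:

- Fixed-size chunking: split at a fixed number of characters; simple to implement but may cut through semantic units

- Recursive chunking: try a hierarchy of separators (paragraph, sentence, word) in turn, which preserves semantic units better

- Semantic chunking: use embedding similarity to find paragraph boundaries, which suits keeping a topic together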

// Chunking strategy implementations (each public type belongs in its own .java file)
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.regex.Pattern;

public interface ChunkingStrategy {
    List<String> chunk(String text);
}

// Fixed-size chunking
public class FixedSizeChunking implements ChunkingStrategy {
    private final int chunkSize;
    private final int overlap;

    public FixedSizeChunking(int chunkSize, int overlap) {
        if (chunkSize <= 0 || overlap < 0 || overlap >= chunkSize) {
            throw new IllegalArgumentException("Require chunkSize > 0 and 0 <= overlap < chunkSize");
        }
        this.chunkSize = chunkSize;
        this.overlap = overlap;
    }

    @Override
    public List<String> chunk(String text) {
        List<String> chunks = new ArrayList<>();
        int start = 0;
        while (start < text.length()) {
            int end = Math.min(start + chunkSize, text.length());
            chunks.add(text.substring(start, end));
            if (end == text.length()) {
                break; // otherwise stepping back by the overlap would repeat the last chunk forever
            }
            start = end - overlap;
        }
        return chunks;
    }
}

// Recursive chunking: split on a hierarchy of separators, coarsest first
public class RecursiveChunking implements ChunkingStrategy {
    private final int chunkSize;
    private final int overlap; // kept for API symmetry; this simplified version does not apply it
    private final String[] separators;

    public RecursiveChunking(int chunkSize, int overlap) {
        this.chunkSize = chunkSize;
        this.overlap = overlap;
        this.separators = new String[]{"\n\n", "\n", "。", ". ", "; ", ", ", " "};
    }

    @Override
    public List<String> chunk(String text) {
        return splitText(text, 0);
    }

    private List<String> splitText(String text, int separatorIndex) {
        if (separatorIndex >= separators.length) {
            if (text.length() <= chunkSize) {
                return Collections.singletonList(text);
            }
            // No separators left: hard-split instead of silently dropping the text.
            List<String> pieces = new ArrayList<>();
            for (int i = 0; i < text.length(); i += chunkSize) {
                pieces.add(text.substring(i, Math.min(i + chunkSize, text.length())));
            }
            return pieces;
        }

        String separator = separators[separatorIndex];
        // String.split takes a regex; quote the separator so ". " is matched literally.
        String[] parts = text.split(Pattern.quote(separator));

        List<String> chunks = new ArrayList<>();
        StringBuilder current = new StringBuilder();
        for (String part : parts) {
            String test = current.length() > 0 ? current.toString() + separator + part : part;
            if (test.length() <= chunkSize) {
                current = new StringBuilder(test);
            } else {
                if (current.length() > 0) {
                    chunks.add(current.toString());
                }
                if (part.length() > chunkSize) {
                    // The part alone is too long: retry with the next, finer separator.
                    chunks.addAll(splitText(part, separatorIndex + 1));
                    current = new StringBuilder();
                } else {
                    current = new StringBuilder(part);
                }
            }
        }
        if (current.length() > 0) {
            chunks.add(current.toString());
        }
        return chunks;
    }
}

// Semantic chunking: split where the embedding similarity of adjacent paragraphs drops
public class SemanticChunking implements ChunkingStrategy {
    private final EmbeddingService embeddingService;
    private final double threshold;

    public SemanticChunking(EmbeddingService embeddingService, double threshold) {
        this.embeddingService = embeddingService;
        this.threshold = threshold;
    }

    @Override
    public List<String> chunk(String text) {
        String[] paragraphs = text.split("\n\n");
        List<String> validParagraphs = new ArrayList<>();
        for (String p : paragraphs) {
            if (!p.trim().isEmpty()) {
                validParagraphs.add(p.trim());
            }
        }
        if (validParagraphs.size() <= 1) {
            return validParagraphs;
        }

        // Embed every paragraph once
        List<float[]> embeddings = embeddingService.encode(validParagraphs);

        List<String> chunks = new ArrayList<>();
        StringBuilder currentChunk = new StringBuilder(validParagraphs.get(0));
        for (int i = 1; i < validParagraphs.size(); i++) {
            double similarity = cosineSimilarity(embeddings.get(i - 1), embeddings.get(i));
            if (similarity < threshold) {
                // Topic shift: close the current chunk and start a new one.
                chunks.add(currentChunk.toString());
                currentChunk = new StringBuilder(validParagraphs.get(i));
            } else {
                currentChunk.append("\n\n").append(validParagraphs.get(i));
            }
        }
        if (currentChunk.length() > 0) {
            chunks.add(currentChunk.toString());
        }
        return chunks;
    }

    private double cosineSimilarity(float[] a, float[] b) {
        double dotProduct = 0;
        double normA = 0;
        double normB = 0;
        for (int i = 0; i < a.length; i++) {
            dotProduct += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        // The small epsilon avoids division by zero for all-zero vectors.
        return dotProduct / (Math.sqrt(normA) * Math.sqrt(normB) + 1e-8);
    }
}

// Embedding service interface
public interface EmbeddingService {
    List<float[]> encode(List<String> texts);

    float[] encode(String text);
}
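To make the effect of chunk size and overlap concrete, the following sketch computes only the chunk offsets. The class name `ChunkBoundaries` is an assumption for illustration. With overlap, each chunk starts `chunkSize - overlap` characters after the previous one, so a 1000-character text with `chunkSize = 400` and `overlap = 100` produces chunks starting at 0, 300, and 600.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative helper: computes [start, end) offsets for fixed-size chunks with overlap. */
final class ChunkBoundaries {
    static List<int[]> boundaries(int textLength, int chunkSize, int overlap) {
        if (chunkSize <= 0 || overlap < 0 || overlap >= chunkSize) {
            throw new IllegalArgumentException("require chunkSize > 0 and 0 <= overlap < chunkSize");
        }
        List<int[]> result = new ArrayList<>();
        int step = chunkSize - overlap; // distance between consecutive chunk starts
        for (int start = 0; start < textLength; start += step) {
            int end = Math.min(start + chunkSize, textLength);
            result.add(new int[]{start, end});
            if (end == textLength) {
                break; // the last chunk reached the end of the text
            }
        }
        return result;
    }
}
```

Requiring `overlap < chunkSize` guarantees a positive step, which is what keeps the loop from stalling.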

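2.3 Handling Table Content

Tables carry much of the structured information in a document, and ordinary text processing can break a table into meaningless fragments. The core challenge is converting a table into a retrieval-friendly format while keeping its structure.

A small table (at most 10 rows and 5 columns) can be converted directly to Markdown, which keeps the row and column relationships. For a large table, extract the header and a few representative rows and generate a summary text.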

// Table processing
import java.util.Collections;

public class TableProcessor {

    public String processTable(String[][] tableData) {
        int rows = tableData.length;
        int cols = rows > 0 ? tableData[0].length : 0;
        // Small tables keep their full structure; large ones are summarized.
        if (rows <= 10 && cols <= 5) {
            return toMarkdown(tableData);
        }
        return extractSummary(tableData);
    }

    private String toMarkdown(String[][] data) {
        if (data.length == 0) return "";
        StringBuilder sb = new StringBuilder();
        int cols = data[0].length;
        // Header row
        sb.append("| ").append(String.join(" | ", data[0])).append(" |\n");
        // Separator row
        sb.append("| ").append(String.join(" | ", Collections.nCopies(cols, "---"))).append(" |\n");
        // Data rows
        for (int i = 1; i < data.length; i++) {
            sb.append("| ").append(String.join(" | ", data[i])).append(" |\n");
        }
        return sb.toString();
    }

    private String extractSummary(String[][] data) {
        if (data.length == 0) return "";
        StringBuilder sb = new StringBuilder();
        sb.append("[Table: ").append(data.length).append(" rows x ")
          .append(data[0].length).append(" columns]\n");
        // Header
        sb.append("Header: ").append(String.join(" | ", data[0])).append("\n");
        // First three data rows (row 0 is the header, so read rows 1 to 3)
        int maxRows = Math.min(4, data.length);
        for (int i = 1; i < maxRows; i++) {
            // Bound by the header width so ragged rows cannot index past the header.
            int cols = Math.min(data[0].length, data[i].length);
            for (int j = 0; j < cols; j++) {
                sb.append(data[0][j]).append(": ").append(data[i][j]).append(" | ");
            }
            sb.append("\n");
        }
        return sb.toString();
    }
}
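For large tables, an alternative to a single summary is to turn every data row into its own self-describing line, so each row can be embedded and retrieved independently. This is a sketch of that idea rather than part of the processor above; the class name `TableRowSerializer` and the `header: value; ...` layout are assumptions.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative helper: serializes each data row as "header: value; header: value". */
final class TableRowSerializer {
    static List<String> toRowSentences(String[][] table) {
        List<String> rows = new ArrayList<>();
        if (table.length < 2) {
            return rows; // no data rows below the header
        }
        String[] header = table[0];
        for (int i = 1; i < table.length; i++) {
            StringBuilder sb = new StringBuilder();
            // Ragged rows are truncated to the header width.
            int cols = Math.min(header.length, table[i].length);
            for (int j = 0; j < cols; j++) {
                if (j > 0) {
                    sb.append("; ");
                }
                sb.append(header[j]).append(": ").append(table[i][j]);
            }
            rows.add(sb.toString());
        }
        return rows;
    }
}
```

Because every line repeats the column names, a row remains understandable even when it is retrieved without the rest of the table.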

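3. Common Embedding Problems

3.1 Choosing an Embedding Model

The embedding model is the component that connects text semantics to vector space, and it sets the upper bound on retrieval quality. For Chinese text, the BGE family is currently among the most popular choices, and BGE-large-zh is commonly regarded as the strongest of the family on Chinese semantic understanding.

Model selection suggestions:

- Quality first: BGE-large-zh (1024 dimensions; best quality but somewhat slower)

- Balanced: BGE-base-zh (768 dimensions; a compromise between quality and speed)

- Multilingual: BGE-m3 (supports multiple languages and hybrid retrieval)

- Commercial use: M3E (open source; check the license terms before commercial deployment)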

// Embedding model configuration
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Random;

public class EmbeddingWrapper implements EmbeddingService {
    private static final Map<String, String> MODELS = new HashMap<>();

    static {
        MODELS.put("bge-large-zh", "BAAI/bge-large-zh");
        MODELS.put("bge-base-zh", "BAAI/bge-base-zh");
        MODELS.put("bge-m3", "BAAI/bge-m3");
    }

    private final String modelName;
    private final Object embeddingModel; // placeholder for the loaded model object

    public EmbeddingWrapper(String modelName) {
        this.modelName = modelName;
        this.embeddingModel = loadModel(modelName);
    }

    private Object loadModel(String modelName) {
        // A real implementation would load the model through a Java embedding library
        // or call an inference service. Returns null as a placeholder.
        return null;
    }

    @Override
    public List<float[]> encode(List<String> texts) {
        // A real implementation would call the embedding model.
        // Random vectors are returned here purely as a stand-in; they carry no meaning.
        List<float[]> results = new ArrayList<>();
        Random random = new Random();
        int dimension = getDimension();
        for (String text : texts) {
            float[] embedding = new float[dimension];
            for (int i = 0; i < dimension; i++) {
                embedding[i] = random.nextFloat();
            }
            results.add(normalize(embedding));
        }
        return results;
    }

    @Override
    public float[] encode(String text) {
        return encode(Collections.singletonList(text)).get(0);
    }

    public float[] encodeQuery(String query) {
        // Retrieval models often expect an instruction prefix on queries only.
        // Use the exact instruction string documented for the chosen model.
        String prefixed = "为这个问题检索相关文档: " + query;
        return encode(prefixed);
    }

    public int getDimension() {
        switch (modelName) {
            case "bge-large-zh":
            case "bge-m3":
                return 1024;
            default:
                return 768;
        }
    }

    // L2-normalizes the vector in place so that cosine similarity reduces to a dot product.
    private float[] normalize(float[] vector) {
        double norm = 0;
        for (float v : vector) {
            norm += v * v;
        }
        norm = Math.sqrt(norm);
        if (norm > 0) {
            for (int i = 0; i < vector.length; i++) {
                vector[i] = (float) (vector[i] / norm);
            }
        }
        return vector;
    }
}
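Dimension also drives storage cost, which is worth estimating before picking a model. The sketch below computes only the raw size of float32 vectors; the class name `IndexSizeEstimate` is an assumption, and real vector databases add index structures and metadata on top of this figure.

```java
/** Illustrative helper: raw storage for float32 vectors, excluding any index overhead. */
final class IndexSizeEstimate {
    static long rawBytes(long vectorCount, int dimension) {
        // Each float32 component takes Float.BYTES (4) bytes.
        return vectorCount * dimension * Float.BYTES;
    }
}
```

For one million chunks, 1024-dimensional vectors need 1,000,000 × 1024 × 4 = 4,096,000,000 bytes (about 4.1 GB), while 768-dimensional vectors need 3,072,000,000 bytes (about 3.1 GB).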

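3.2 Improving Vector Quality

Even with a suitable embedding model, vector quality can still be a problem. Typical symptoms include semantically similar texts lying far apart in vector space, an abnormal vector distribution, and outliers.

This section provides a vector quality analyzer and query optimization helpers for diagnosing and fixing problems in the embedding step.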

// Vector quality analysis and optimization (Map.of requires Java 9+)
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;

public class VectorQualityAnalyzer {

    // Summary statistics over the L2 norms of the embeddings.
    public Map<String, Double> analyzeDistribution(List<float[]> embeddings) {
        double[] norms = embeddings.stream()
                .mapToDouble(this::calculateNorm)
                .toArray();
        return Map.of(
                "norm_mean", Arrays.stream(norms).average().orElse(0),
                "norm_std", calculateStd(norms),
                "norm_min", Arrays.stream(norms).min().orElse(0),
                "norm_max", Arrays.stream(norms).max().orElse(0)
        );
    }

    // Returns the indices of vectors whose norm lies more than `threshold`
    // standard deviations from the mean norm.
    public List<Integer> detectOutliers(List<float[]> embeddings, double threshold) {
        double[] norms = embeddings.stream()
                .mapToDouble(this::calculateNorm)
                .toArray();
        double meanNorm = Arrays.stream(norms).average().orElse(0);
        double stdNorm = calculateStd(norms);
        List<Integer> outliers = new ArrayList<>();
        for (int i = 0; i < norms.length; i++) {
            if (Math.abs(norms[i] - meanNorm) > threshold * stdNorm) {
                outliers.add(i);
            }
        }
        return outliers;
    }

    private double calculateNorm(float[] vector) {
        double sum = 0;
        for (float v : vector) {
            sum += v * v;
        }
        return Math.sqrt(sum);
    }

    // Population standard deviation
    private double calculateStd(double[] values) {
        double mean = Arrays.stream(values).average().orElse(0);
        double variance = Arrays.stream(values)
                .map(v -> (v - mean) * (v - mean))
                .average()
                .orElse(0);
        return Math.sqrt(variance);
    }
}

// Query optimization
public class QueryOptimizer {
    private final EmbeddingService embeddingService;

    public QueryOptimizer(EmbeddingService embeddingService) {
        this.embeddingService = embeddingService;
    }

    public String expandQuery(String query) {
        // Placeholder expansion: re-appends every term longer than one character,
        // which only re-weights those terms. A real implementation would add
        // synonyms or related terms instead.
        String[] words = query.split("\\s+");
        StringBuilder expanded = new StringBuilder(query);
        for (String word : words) {
            if (word.length() > 1) {
                expanded.append(" ").append(word);
            }
        }
        return expanded.toString();
    }

    public String addPrefix(String query) {
        return "Retrieve documents relevant to the following question: " + query;
    }
}

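4. Common Retrieval Problems

4.1 Low Recall

Recall is a core metric for a RAG system: the fraction of relevant documents that retrieval actually returns. Low recall has several possible causes, such as a chunking strategy that scatters key information, poor embedding quality, or flaws in the retrieval algorithm.

A diagnostic tool can measure recall on a test set and suggest optimizations according to how low it is.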

// Recall diagnostics
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.stream.Collectors;

public class RecallOptimizer {
    private final VectorStore vectorStore;

    public RecallOptimizer(VectorStore vectorStore) {
        this.vectorStore = vectorStore;
    }

    public Map<String, Object> diagnose(List<TestCase> testCases) {
        List<Double> recalls = new ArrayList<>();
        for (TestCase testCase : testCases) {
            if (testCase.relevantIds.isEmpty()) {
                continue; // recall is undefined without relevant documents
            }
            // Recall@20: how many of the relevant IDs appear in the top 20 results
            List<SearchResult> results = vectorStore.search(testCase.query, 20);
            Set<String> retrievedIds = results.stream()
                    .map(SearchResult::getId)
                    .collect(Collectors.toSet());
            double recall = (double) retrievedIds.stream()
                    .filter(testCase.relevantIds::contains)
                    .count() / testCase.relevantIds.size();
            recalls.add(recall);
        }
        double avgRecall = recalls.stream()
                .mapToDouble(Double::doubleValue)
                .average()
                .orElse(0);
        return Map.of(
                "avg_recall", avgRecall,
                "level", avgRecall > 0.7 ? "good" : avgRecall > 0.5 ? "medium" : "poor",
                "suggestions", getSuggestions(avgRecall)
        );
    }

    private List<String> getSuggestions(double recall) {
        if (recall < 0.3) {
            return Arrays.asList("Review the chunking strategy", "Try smaller chunks", "Switch the embedding model");
        } else if (recall < 0.5) {
            return Arrays.asList("Increase top_k", "Try hybrid retrieval", "Optimize the query");
        }
        return Arrays.asList("Add reranking", "Fine-tune the threshold");
    }

    public static class TestCase {
        public String query;
        public Set<String> relevantIds;

        public TestCase(String query, Set<String> relevantIds) {
            this.query = query;
            this.relevantIds = relevantIds;
        }
    }
}
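As a worked example of the metric itself, the following sketch computes recall at a cutoff k from a ranked list of document IDs. The reciprocal-rank helper is an addition that the article does not discuss; it is included because it shows how early the first relevant hit appears. The class and method names are assumptions.

```java
import java.util.List;
import java.util.Set;

/** Illustrative metrics over a ranked list of document IDs. */
final class RetrievalMetrics {
    /** Fraction of relevant IDs that appear in the first k results. */
    static double recallAtK(List<String> rankedIds, Set<String> relevant, int k) {
        if (relevant.isEmpty()) {
            return 0.0; // undefined without relevant documents; reported as 0 here
        }
        long hits = rankedIds.stream().limit(k).distinct().filter(relevant::contains).count();
        return (double) hits / relevant.size();
    }

    /** 1 / (1-based rank of the first relevant result), or 0 if none was retrieved. */
    static double reciprocalRank(List<String> rankedIds, Set<String> relevant) {
        for (int i = 0; i < rankedIds.size(); i++) {
            if (relevant.contains(rankedIds.get(i))) {
                return 1.0 / (i + 1);
            }
        }
        return 0.0;
    }
}
```

With the ranking `[d3, d1, d7, d2]` and relevant set `{d1, d2}`, recall@3 is 0.5 (only d1 is in the top three), recall@4 is 1.0, and the reciprocal rank is 0.5 because the first relevant document sits at rank 2.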

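4.2 Setting the Similarity Threshold

The similarity threshold is an important control over retrieval quality. A threshold that is too high filters out relevant documents (low recall); one that is too low lets in too many irrelevant documents (low precision). The best threshold can differ from query to query, so it has to be tuned on real data.

This section provides a threshold analysis tool and an adaptive threshold strategy for finding a suitable value in different scenarios.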

// Threshold optimization
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.stream.Collectors;

public class ThresholdOptimizer {
    private final VectorStore vectorStore;

    public ThresholdOptimizer(VectorStore vectorStore) {
        this.vectorStore = vectorStore;
    }

    // Sweeps a fixed grid of thresholds and reports precision, recall, and F1 for each.
    public Map<String, Object> analyzeThreshold(List<RecallOptimizer.TestCase> testCases) {
        double[] thresholds = {0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9};
        List<Double> precisions = new ArrayList<>();
        List<Double> recalls = new ArrayList<>();
        List<Double> f1s = new ArrayList<>();

        for (double threshold : thresholds) {
            List<Double> precList = new ArrayList<>();
            List<Double> recList = new ArrayList<>();
            for (RecallOptimizer.TestCase testCase : testCases) {
                List<SearchResult> allResults = vectorStore.search(testCase.query, 50);
                List<SearchResult> filtered = allResults.stream()
                        .filter(r -> r.getScore() >= threshold)
                        .collect(Collectors.toList());
                Set<String> retrievedIds = filtered.stream()
                        .map(SearchResult::getId)
                        .collect(Collectors.toSet());
                long tp = retrievedIds.stream()
                        .filter(testCase.relevantIds::contains)
                        .count();
                double precision = retrievedIds.isEmpty() ? 0 : (double) tp / retrievedIds.size();
                double recall = testCase.relevantIds.isEmpty() ? 0 : (double) tp / testCase.relevantIds.size();
                precList.add(precision);
                recList.add(recall);
            }
            // Average over all test cases, then compute F1 from the averages.
            double p = precList.stream().mapToDouble(Double::doubleValue).average().orElse(0);
            double r = recList.stream().mapToDouble(Double::doubleValue).average().orElse(0);
            precisions.add(p);
            recalls.add(r);
            f1s.add((p + r) > 0 ? 2 * p * r / (p + r) : 0);
        }

        int bestIdx = f1s.indexOf(Collections.max(f1s));
        return Map.of(
                "thresholds", thresholds,
                "precision", precisions,
                "recall", recalls,
                "f1", f1s,
                "best_threshold", thresholds[bestIdx]
        );
    }
}

// Adaptive threshold strategy
public class AdaptiveThreshold {
    private final double defaultThreshold = 0.5;

    public double getThreshold(String query, List<SearchResult> results) {
        if (results.isEmpty()) {
            return defaultThreshold;
        }
        double[] scores = results.stream()
                .mapToDouble(SearchResult::getScore)
                .toArray();
        double meanScore = Arrays.stream(scores).average().orElse(0);
        double stdScore = calculateStd(scores);
        double maxScore = Arrays.stream(scores).max().orElse(0);
        // When the top score clearly stands out from the rest, raise the threshold
        // so that only the strong matches are kept.
        if (maxScore - meanScore > stdScore * 2) {
            return Math.max(0.6, meanScore + stdScore * 0.5);
        }
        return defaultThreshold;
    }

    private double calculateStd(double[] values) {
        double mean = Arrays.stream(values).average().orElse(0);
        double variance = Arrays.stream(values)
                .map(v -> (v - mean) * (v - mean))
                .average()
                .orElse(0);
        return Math.sqrt(variance);
    }
}
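The trade-off between precision and recall is easiest to see on a tiny hand-made result list. The sketch below assumes four results with made-up scores and relevance labels; the class name `ThresholdDemo` is hypothetical.

```java
/** Illustrative helper: precision and recall after filtering by a score threshold. */
final class ThresholdDemo {
    /** Returns {precision, recall} when only results with score >= threshold are kept. */
    static double[] precisionRecall(double[] scores, boolean[] relevant, double threshold) {
        int kept = 0;
        int truePositives = 0;
        int totalRelevant = 0;
        for (int i = 0; i < scores.length; i++) {
            if (relevant[i]) {
                totalRelevant++;
            }
            if (scores[i] >= threshold) {
                kept++;
                if (relevant[i]) {
                    truePositives++;
                }
            }
        }
        double precision = kept == 0 ? 0.0 : (double) truePositives / kept;
        double recall = totalRelevant == 0 ? 0.0 : (double) truePositives / totalRelevant;
        return new double[]{precision, recall};
    }
}
```

For scores `{0.9, 0.8, 0.6, 0.4}` with relevance `{true, false, true, true}`, a threshold of 0.85 keeps one result (precision 1.0, recall 1/3), while a threshold of 0.3 keeps all four (precision 0.75, recall 1.0).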

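4.3 Reranking Retrieval Results

Basic vector retrieval orders results by similarity, but that simple ordering often fails to reflect how relevant a document really is. Reranking applies a second ordering pass after the initial retrieval and can noticeably improve ranking quality.

Two reranking strategies:

- Cross-Encoder reranking: the best quality but a higher compute cost, because every query-document pair needs its own inference pass

- Diversity reranking: uses the MMR (Maximal Marginal Relevance) algorithm to avoid returning results that are too similar to each other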

// Reranking
import java.util.ArrayList;
import java.util.Comparator;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Random;
import java.util.Set;
import java.util.stream.Collectors;

public class CrossEncoderReranker {
    private final Object crossEncoderModel; // placeholder for the loaded Cross-Encoder

    public CrossEncoderReranker(String modelName) {
        // Load the Cross-Encoder model here
        this.crossEncoderModel = null;
    }

    public List<SearchResult> rerank(String query, List<SearchResult> results, Integer topK) {
        if (results.isEmpty()) {
            return results;
        }
        // A real implementation would score each (query, document) pair with the Cross-Encoder.
        // Random scores are used here purely as a stand-in.
        Random random = new Random();
        for (SearchResult result : results) {
            result.setRerankScore(random.nextDouble());
        }
        return results.stream()
                .sorted(Comparator.comparing(SearchResult::getRerankScore).reversed())
                .limit(topK != null ? topK : results.size())
                .collect(Collectors.toList());
    }
}

// Diversity reranking (MMR)
public class DiversityReranker {
    private final EmbeddingService embeddingService;
    private final double diversityWeight;

    public DiversityReranker(EmbeddingService embeddingService, double diversityWeight) {
        this.embeddingService = embeddingService;
        this.diversityWeight = diversityWeight;
    }

    public List<SearchResult> rerank(String query, List<SearchResult> results, Integer topK) {
        if (results.isEmpty()) {
            return results;
        }
        float[] queryEmb = embeddingService.encode(query);
        List<float[]> docEmbs = results.stream()
                .map(r -> embeddingService.encode(r.getContent()))
                .collect(Collectors.toList());

        double[] similarities = new double[results.size()];
        for (int i = 0; i < docEmbs.size(); i++) {
            similarities[i] = cosineSimilarity(queryEmb, docEmbs.get(i));
        }

        List<Integer> selected = new ArrayList<>();
        Set<Integer> remaining = new LinkedHashSet<>();
        for (int i = 0; i < results.size(); i++) {
            remaining.add(i);
        }

        while (!remaining.isEmpty()) {
            Integer bestIdx = null;
            double bestScore = Double.NEGATIVE_INFINITY;
            for (Integer idx : remaining) {
                double relevance = similarities[idx];
                double diversity = 0;
                if (!selected.isEmpty()) {
                    // MMR penalizes a candidate by its similarity to the MOST similar
                    // already-selected document, so track the maximum, not the minimum.
                    double maxSim = Double.NEGATIVE_INFINITY;
                    for (Integer sel : selected) {
                        maxSim = Math.max(maxSim, cosineSimilarity(docEmbs.get(idx), docEmbs.get(sel)));
                    }
                    diversity = 1 - maxSim;
                }
                double score = (1 - diversityWeight) * relevance + diversityWeight * diversity;
                if (score > bestScore) {
                    bestScore = score;
                    bestIdx = idx;
                }
            }
            selected.add(bestIdx);
            remaining.remove(bestIdx);
            if (topK != null && selected.size() >= topK) {
                break;
            }
        }

        return selected.stream()
                .map(results::get)
                .collect(Collectors.toList());
    }

    private double cosineSimilarity(float[] a, float[] b) {
        double dotProduct = 0;
        double normA = 0;
        double normB = 0;
        for (int i = 0; i < a.length; i++) {
            dotProduct += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        return dotProduct / (Math.sqrt(normA) * Math.sqrt(normB) + 1e-8);
    }
}
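The behavior of MMR is easier to follow on precomputed numbers than on embeddings. The sketch below uses the common formulation `score = lambda * relevance - (1 - lambda) * maxSimilarityToSelected`; the class name `MmrSketch`, the parameter `lambda`, and the sample values are assumptions, and similarities are assumed to be non-negative.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative MMR selection over precomputed relevance and document-document similarity. */
final class MmrSketch {
    static List<Integer> select(double[] relevance, double[][] docSim, double lambda, int topK) {
        List<Integer> selected = new ArrayList<>();
        boolean[] used = new boolean[relevance.length];
        while (selected.size() < Math.min(topK, relevance.length)) {
            int best = -1;
            double bestScore = Double.NEGATIVE_INFINITY;
            for (int i = 0; i < relevance.length; i++) {
                if (used[i]) {
                    continue;
                }
                // Redundancy: similarity to the closest already-selected document (0 if none).
                double maxSim = 0.0;
                for (int j : selected) {
                    maxSim = Math.max(maxSim, docSim[i][j]);
                }
                double score = lambda * relevance[i] - (1 - lambda) * maxSim;
                if (score > bestScore) {
                    bestScore = score;
                    best = i;
                }
            }
            used[best] = true;
            selected.add(best);
        }
        return selected;
    }
}
```

Take three documents with relevance `{0.9, 0.88, 0.7}`, where documents 0 and 1 are near-duplicates (similarity 0.95) and document 2 is unrelated to both (similarity 0.1). With `lambda = 0.5` and `topK = 2`, document 0 is picked first; document 1 then scores 0.44 - 0.475 = -0.035 and document 2 scores 0.35 - 0.05 = 0.30, so the result is `[0, 2]`. With `lambda = 1.0` the redundancy term vanishes and the result is `[0, 1]`.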

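4.4 Implementing Hybrid Retrieval

Relying on vector retrieval alone does not give the best results in every scenario. Hybrid retrieval combines the strengths of vector retrieval (semantic understanding) and BM25 (keyword matching), balancing the two and making retrieval noticeably more robust.

**RRF (Reciprocal Rank Fusion)** is a common fusion method: it combines the rankings produced by different retrievers into one final ordering.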

// Hybrid retrieval: vector retrieval + BM25
import java.util.ArrayList;
import java.util.Comparator;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.stream.Collectors;

public class BM25 {
    private final double k1 = 1.5;
    private final double b = 0.75;
    private Map<String, List<String>> documents = new HashMap<>();
    private Map<String, Integer> docLengths = new HashMap<>();
    private double avgdl = 0;
    private Map<String, Double> idf = new HashMap<>();

    public void index(List<Document> docs) {
        for (Document doc : docs) {
            List<String> tokens = tokenize(doc.getContent());
            documents.put(doc.getId(), tokens);
            docLengths.put(doc.getId(), tokens.size());
        }
        avgdl = docLengths.values().stream()
                .mapToInt(Integer::intValue)
                .average()
                .orElse(0);

        // Document frequency: in how many documents each term appears
        Map<String, Integer> df = new HashMap<>();
        for (List<String> tokens : documents.values()) {
            Set<String> unique = new HashSet<>(tokens);
            for (String t : unique) {
                df.put(t, df.getOrDefault(t, 0) + 1);
            }
        }
        // IDF with the "+1 inside the log" variant, which keeps every value positive
        int N = documents.size();
        for (Map.Entry<String, Integer> entry : df.entrySet()) {
            double idfValue = Math.log((N - entry.getValue() + 0.5) / (entry.getValue() + 0.5) + 1);
            idf.put(entry.getKey(), idfValue);
        }
    }

    public List<SearchResult> search(String query, int topK) {
        List<String> queryTokens = tokenize(query);
        Map<String, Double> scores = new HashMap<>();
        for (Map.Entry<String, List<String>> entry : documents.entrySet()) {
            String docId = entry.getKey();
            List<String> tokens = entry.getValue();
            int dl = docLengths.get(docId);

            Map<String, Integer> tf = new HashMap<>();
            for (String t : tokens) {
                tf.put(t, tf.getOrDefault(t, 0) + 1);
            }

            double score = 0;
            for (String t : queryTokens) {
                if (tf.containsKey(t)) {
                    double tfVal = tf.get(t);
                    double idfVal = idf.getOrDefault(t, 0.0);
                    double numerator = tfVal * (k1 + 1);
                    double denominator = tfVal + k1 * (1 - b + b * dl / avgdl);
                    score += idfVal * numerator / denominator;
                }
            }
            if (score > 0) {
                scores.put(docId, score);
            }
        }
        return scores.entrySet().stream()
                .sorted(Map.Entry.<String, Double>comparingByValue().reversed())
                .limit(topK)
                .map(e -> new SearchResult(e.getKey(), e.getValue()))
                .collect(Collectors.toList());
    }

    private List<String> tokenize(String text) {
        // Naive tokenizer: whitespace-delimited words for non-CJK text and one token
        // per CJK character (whitespace splitting alone yields no usable tokens for
        // Chinese). A production system would use a proper Chinese word segmenter.
        List<String> tokens = new ArrayList<>();
        StringBuilder sb = new StringBuilder();
        for (char c : text.toCharArray()) {
            boolean cjk = Character.UnicodeBlock.of(c) == Character.UnicodeBlock.CJK_UNIFIED_IDEOGRAPHS;
            if (Character.isWhitespace(c) || cjk) {
                if (sb.length() > 0) {
                    tokens.add(sb.toString());
                    sb = new StringBuilder();
                }
                if (cjk) {
                    tokens.add(String.valueOf(c));
                }
            } else {
                sb.append(c);
            }
        }
        if (sb.length() > 0) {
            tokens.add(sb.toString());
        }
        return tokens;
    }
}

// Hybrid retriever
public class HybridRetriever {
    private final VectorStore vectorRetriever;
    private final BM25 bm25Retriever;
    // The weights are stored but not applied: plain RRF below treats both rankings equally.
    private final double vectorWeight;
    private final double bm25Weight;

    public HybridRetriever(VectorStore vectorRetriever, BM25 bm25Retriever, double vectorWeight) {
        this.vectorRetriever = vectorRetriever;
        this.bm25Retriever = bm25Retriever;
        this.vectorWeight = vectorWeight;
        this.bm25Weight = 1 - vectorWeight;
    }

    public List<SearchResult> search(String query, int topK) {
        int rrfK = 60;
        // Over-fetch from both retrievers so that fusion has enough candidates.
        List<SearchResult> vectorResults = vectorRetriever.search(query, topK * 3);
        List<SearchResult> bm25Results = bm25Retriever.search(query, topK * 3);

        Map<String, SearchResult> rrfScores = new HashMap<>();
        accumulate(rrfScores, vectorResults, rrfK);
        accumulate(rrfScores, bm25Results, rrfK);

        return rrfScores.values().stream()
                .sorted(Comparator.comparing(SearchResult::getScore).reversed())
                .limit(topK)
                .collect(Collectors.toList());
    }

    // Adds 1 / (rrfK + rank) for each result, where rank is 1-based.
    private void accumulate(Map<String, SearchResult> rrfScores, List<SearchResult> ranked, int rrfK) {
        for (int rank = 0; rank < ranked.size(); rank++) {
            SearchResult r = ranked.get(rank);
            SearchResult fused = rrfScores.computeIfAbsent(r.getId(), id -> new SearchResult(id, 0));
            if (fused.getContent() == null) {
                fused.setContent(r.getContent()); // keep the text available for later stages
            }
            fused.setScore(fused.getScore() + 1.0 / (rrfK + rank + 1));
        }
    }
}

// Document and search result classes
public class Document {
    private String id;
    private String content;
    private Map<String, Object> metadata;

    public Document(String id, String content) {
        this.id = id;
        this.content = content;
        this.metadata = new HashMap<>();
    }

    public String getId() { return id; }
    public String getContent() { return content; }
    public Map<String, Object> getMetadata() { return metadata; }
}

public class SearchResult {
    private String id;
    private double score;
    private String content;
    private double rerankScore;

    public SearchResult(String id, double score) {
        this.id = id;
        this.score = score;
    }

    public String getId() { return id; }
    public double getScore() { return score; }
    public void setScore(double score) { this.score = score; }
    public String getContent() { return content; }
    public void setContent(String content) { this.content = content; }
    public double getRerankScore() { return rerankScore; }
    public void setRerankScore(double rerankScore) { this.rerankScore = rerankScore; }
}

public interface VectorStore {
    List<SearchResult> search(String query, int topK);
}
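A small worked example shows how RRF rewards documents that rank well in both lists. Each document receives $\sum_i 1/(k + \mathrm{rank}_i)$ over the rankings that contain it, with 1-based ranks. The sketch below works on plain ID lists; the class name `RrfSketch` and the sample rankings are assumptions.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Illustrative Reciprocal Rank Fusion over plain lists of document IDs. */
final class RrfSketch {
    static Map<String, Double> fuse(List<List<String>> rankings, int k) {
        Map<String, Double> scores = new HashMap<>();
        for (List<String> ranking : rankings) {
            for (int rank = 0; rank < ranking.size(); rank++) {
                // rank is 0-based here, so the 1-based rank is rank + 1
                scores.merge(ranking.get(rank), 1.0 / (k + rank + 1), Double::sum);
            }
        }
        return scores;
    }
}
```

With a vector ranking `[A, B, C]`, a BM25 ranking `[B, D, A]`, and `k = 60`, B scores 1/62 + 1/61 ≈ 0.03252 and A scores 1/61 + 1/63 ≈ 0.03227, so B edges out A even though A was first in the vector list. D (1/62) and C (1/63) follow because each appears in only one list.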

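5. Common Generation Problems

5.1 Insufficient Context

Even when the retrieval system works as intended, the results may not cover enough ground for the model to generate an accurate answer. The symptoms are incomplete answers and missing key information.

Context enhancement strategies:

- Quantity expansion: retrieve more results

- Topic expansion: when the results cluster too tightly, add related content on different topics

- Iterative retrieval: generate follow-up queries from the current results and build up the context step by step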

// Context enhancement
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

public class ContextEnhancer {
    private final VectorStore vectorStore;
    private final EmbeddingService embeddingService;

    public ContextEnhancer(VectorStore vectorStore, EmbeddingService embeddingService) {
        this.vectorStore = vectorStore;
        this.embeddingService = embeddingService;
    }

    public List<SearchResult> enhance(String query, List<SearchResult> results, int targetCount) {
        // Keyed by document ID so that a second search cannot add duplicates.
        Map<String, SearchResult> byId = new LinkedHashMap<>();
        for (SearchResult r : results) {
            byId.putIfAbsent(r.getId(), r);
        }
        // Quantity expansion: fetch more candidates when there are too few.
        if (byId.size() < targetCount) {
            for (SearchResult r : vectorStore.search(query, targetCount * 2)) {
                byId.putIfAbsent(r.getId(), r);
            }
        }
        List<SearchResult> enhanced = new ArrayList<>(byId.values());
        // Topic expansion: triggered when the results are too similar to each other.
        double diversity = calculateDiversity(enhanced);
        if (diversity < 0.2) {
            enhanced.addAll(expandTopics(query, enhanced));
        }
        return enhanced.stream().limit(targetCount).collect(Collectors.toList());
    }

    // Diversity = 1 - mean pairwise cosine similarity of the result embeddings.
    private double calculateDiversity(List<SearchResult> results) {
        if (results.size() <= 1) {
            return 0.0;
        }
        List<float[]> embeddings = results.stream()
                .map(r -> embeddingService.encode(r.getContent()))
                .collect(Collectors.toList());
        double totalSim = 0;
        int count = 0;
        for (int i = 0; i < embeddings.size(); i++) {
            for (int j = i + 1; j < embeddings.size(); j++) {
                totalSim += cosineSimilarity(embeddings.get(i), embeddings.get(j));
                count++;
            }
        }
        return count > 0 ? 1 - (totalSim / count) : 0;
    }

    private List<SearchResult> expandTopics(String query, List<SearchResult> current) {
        // Placeholder: topic expansion is not implemented in this example.
        return new ArrayList<>();
    }

    private double cosineSimilarity(float[] a, float[] b) {
        double dotProduct = 0;
        double normA = 0;
        double normB = 0;
        for (int i = 0; i < a.length; i++) {
            dotProduct += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        return dotProduct / (Math.sqrt(normA) * Math.sqrt(normB) + 1e-8);
    }
}
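Iterative retrieval, the third strategy in the list, is not covered by the class above. A minimal sketch of the control loop might look like the following. Everything here is hypothetical: `search` stands in for any retriever, and `nextQuery` stands in for whatever produces a follow-up query (typically an LLM call), returning an empty `Optional` when the context is judged sufficient.

```java
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Optional;
import java.util.function.BiFunction;
import java.util.function.Function;

/** Illustrative control loop for iterative retrieval (requires Java 11+ for Optional.isEmpty). */
final class IterativeRetrieval {
    static List<String> retrieve(String query,
                                 Function<String, List<String>> search,
                                 BiFunction<String, List<String>, Optional<String>> nextQuery,
                                 int maxRounds) {
        LinkedHashSet<String> collected = new LinkedHashSet<>(); // keeps order, drops duplicates
        String current = query;
        for (int round = 0; round < maxRounds; round++) {
            boolean addedNew = collected.addAll(search.apply(current));
            if (!addedNew) {
                break; // the last query found nothing new, so stop early
            }
            Optional<String> followUp = nextQuery.apply(query, new ArrayList<>(collected));
            if (followUp.isEmpty()) {
                break; // the context is considered sufficient
            }
            current = followUp.get();
        }
        return new ArrayList<>(collected);
    }
}
```

The `maxRounds` cap matters in practice, since each round costs at least one retrieval and usually one model call.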

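5.2 Truncation Caused by Overlong Context

Large language models have a limited context window (for example 4K, 8K, or 16K tokens). When the retrieved content exceeds the limit, it has to be cut to fit (or the request is rejected outright), and with naive truncation the key information at the end is discarded.

Solutions:

- Token counting: measure content length accurately and allocate the budget deliberately

- Priority ordering: choose what to keep by relevance

- Truncation strategy: when necessary, truncate a document and keep its beginning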

// Context length management
import java.util.ArrayList;
import java.util.Comparator;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;
import java.util.stream.IntStream;

public class ContextLengthManager {
    private static final Map<String, Integer> MODEL_MAX_TOKENS = new HashMap<>();

    static {
        MODEL_MAX_TOKENS.put("gpt-3.5-turbo", 4096);
        MODEL_MAX_TOKENS.put("gpt-3.5-turbo-16k", 16385);
        MODEL_MAX_TOKENS.put("gpt-4", 8192);
        MODEL_MAX_TOKENS.put("gpt-4-32k", 32768);
        MODEL_MAX_TOKENS.put("gpt-4-turbo", 128000);
    }

    private final String modelName;
    private final int maxTokens;
    private final double reservedRatio; // share of the window reserved for the model's answer
    private final Tokenizer tokenizer;

    public ContextLengthManager(String modelName, double reservedRatio) {
        this.modelName = modelName;
        this.maxTokens = MODEL_MAX_TOKENS.getOrDefault(modelName, 4096);
        this.reservedRatio = reservedRatio;
        this.tokenizer = new Tokenizer(modelName);
    }

    public Map<String, Object> fitContext(String query, List<SearchResult> documents) {
        int available = (int) (maxTokens * (1 - reservedRatio));
        int queryTokens = tokenizer.countTokens(query) + 100; // +100 for the prompt template
        int contextTokens = available - queryTokens;

        // Highest-scoring documents are placed first.
        List<SearchResult> sortedDocs = documents.stream()
                .sorted(Comparator.comparing(SearchResult::getScore).reversed())
                .collect(Collectors.toList());

        List<SearchResult> selected = new ArrayList<>();
        int currentTokens = 0;
        for (SearchResult doc : sortedDocs) {
            int docTokens = tokenizer.countTokens(doc.getContent());
            if (currentTokens + docTokens <= contextTokens) {
                selected.add(doc);
                currentTokens += docTokens;
            } else {
                // Truncate the first document that does not fit, but only if a
                // meaningful amount of space (more than 100 tokens) is left.
                int remaining = contextTokens - currentTokens;
                if (remaining > 100) {
                    SearchResult truncated = new SearchResult(doc.getId(), doc.getScore());
                    truncated.setContent(tokenizer.truncate(doc.getContent(), remaining));
                    selected.add(truncated);
                }
                break;
            }
        }

        String context = IntStream.range(0, selected.size())
                .mapToObj(i -> "[Document " + (i + 1) + "]\n" + selected.get(i).getContent())
                .collect(Collectors.joining("\n\n---\n\n"));
        return Map.of("context", context, "documents", selected);
    }
}

// Simple tokenizer (a rough heuristic; use the model's real tokenizer in production)
public class Tokenizer {
    private final String modelName;

    public Tokenizer(String modelName) {
        this.modelName = modelName;
    }

    public int countTokens(String text) {
        // Count one unit per CJK character and one unit per whitespace-delimited word.
        int count = 0;
        boolean prevWhitespace = true;
        for (char c : text.toCharArray()) {
            if (Character.isWhitespace(c)) {
                prevWhitespace = true;
            } else if (Character.UnicodeBlock.of(c) == Character.UnicodeBlock.CJK_UNIFIED_IDEOGRAPHS) {
                count++;
                prevWhitespace = false;
            } else {
                if (prevWhitespace) {
                    count++;
                }
                prevWhitespace = false;
            }
        }
        // Rough estimate of about 1.3 tokens per counted unit, rounded up.
        // Over-estimating is the safe direction when budgeting a context window.
        return (int) Math.ceil(count * 1.3);
    }

    public String truncate(String text, int maxTokens) {
        // Conservative: every non-whitespace character counts as one token, so the
        // kept prefix is never longer than the budget under the estimate above.
        int currentTokens = 0;
        StringBuilder sb = new StringBuilder();
        for (char c : text.toCharArray()) {
            int charTokens = Character.isWhitespace(c) ? 0 : 1;
            if (currentTokens + charTokens > maxTokens) {
                break;
            }
            sb.append(c);
            currentTokens += charTokens;
        }
        return sb.toString();
    }
}
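The budget arithmetic can be checked by hand. The sketch below isolates it; the class name `TokenBudget` and the parameter `promptOverhead` (tokens used by the prompt template) are assumptions for illustration.

```java
/** Illustrative helper: tokens left for retrieved documents after reserving space. */
final class TokenBudget {
    static int contextBudget(int maxTokens, double reservedRatio, int queryTokens, int promptOverhead) {
        // Space left after reserving a share of the window for the model's answer.
        int available = (int) (maxTokens * (1 - reservedRatio));
        // Never report a negative budget.
        return Math.max(0, available - queryTokens - promptOverhead);
    }
}
```

For an 8192-token window with 25% reserved for the answer, 6144 tokens remain; a 50-token query plus 100 tokens of prompt template leaves 5994 tokens for documents. If the query and template alone exceed the available space, the budget is 0 and no documents fit.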

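5.3 Controlling Hallucination

Hallucination is a common problem with large language models: the model generates content that looks plausible but is wrong. In a RAG setting, it shows up as answers that ignore the retrieved information or that state facts not present in the context.

Hallucination control strategies:

- Prompt constraints: explicitly require the model to answer from the given content

- Response validation: check whether the answer is supported by the given context

- Source attribution: attach citations to the answer so it can be verified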

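// Hallucination control (text blocks require Java 15+)
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

public class HallucinationController {
    private final LLMService llmService;

    public HallucinationController(LLMService llmService) {
        this.llmService = llmService;
    }

    public String buildAntiHallucinationPrompt(String query, String context) {
        return String.format("""
                You are a professional question-answering assistant. Your task is to answer the user's question based on the documents provided below.

                Requirements:
                1. Answer strictly from the provided documents; do not add information that is not in them
                2. If the documents contain enough information to answer, give the answer directly
                3. If the documents do not contain enough information, state clearly: "Based on the provided documents, I cannot answer this question"
                4. Do not make up any facts, data, or citations
                5. Where appropriate, quote the original text of the documents

                ---
                Documents:
                %s
                ---
                User question: %s

                Answer:
                """, context, query);
    }

    public Map<String, Object> validateResponse(String response, String context) {
        // Only explicit refusals are detected. Nothing is checked against the context,
        // so the default result below is a placeholder rather than a real verdict.
        String[] refusalPhrases = {"cannot answer", "not provided", "not mentioned in the documents", "not enough to answer"};
        String lower = response.toLowerCase();
        for (String phrase : refusalPhrases) {
            if (lower.contains(phrase)) {
                return Map.of(
                        "is_valid", true,
                        "confidence", 0.8,
                        "reason", "explicit_refusal"
                );
            }
        }
        return Map.of("is_valid", true, "confidence", 1.0);
    }
}

// Source attribution
public class SourceAttribution {

    public String addReferences(String response, List<SearchResult> documents) {
        String[] lines = response.split("\n");
        StringBuilder result = new StringBuilder();
        for (String line : lines) {
            SearchResult matched = findMatchingDoc(line, documents);
            if (matched != null) {
                line += " [Source: document " + matched.getId() + "]";
            }
            result.append(line).append("\n");
        }
        return result.toString().trim();
    }

    // Picks the document that shares the most whitespace-delimited words with the line.
    // Note: this does not work for unsegmented Chinese text, which has no spaces.
    private SearchResult findMatchingDoc(String text, List<SearchResult> documents) {
        Set<String> textWords = new HashSet<>(Arrays.asList(text.toLowerCase().split("\\s+")));
        SearchResult bestMatch = null;
        int bestScore = 0;
        for (SearchResult doc : documents) {
            Set<String> contentWords = new HashSet<>(Arrays.asList(doc.getContent().toLowerCase().split("\\s+")));
            int overlap = (int) textWords.stream()
                    .filter(contentWords::contains)
                    .count();
            if (overlap > bestScore) {
                bestScore = overlap;
                bestMatch = doc;
            }
        }
        // Require more than three shared words before attributing a source.
        return bestScore > 3 ? bestMatch : null;
    }
}

public interface LLMService {
    String generate(String prompt);
}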
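Response validation can go one step further than spotting refusals. One crude but language-agnostic signal is how much of the response's wording also occurs in the context. The sketch below measures that with character bigrams, which works for Chinese as well as space-separated languages. The class name `GroundingCheck` is an assumption, and a high ratio only shows lexical overlap, not factual correctness.

```java
import java.util.HashSet;
import java.util.Set;

/** Illustrative grounding signal: share of response character bigrams found in the context. */
final class GroundingCheck {
    static double supportRatio(String response, String context) {
        Set<String> contextBigrams = bigrams(context);
        Set<String> responseBigrams = bigrams(response);
        if (responseBigrams.isEmpty()) {
            return 0.0; // responses shorter than two characters give no signal
        }
        long supported = responseBigrams.stream().filter(contextBigrams::contains).count();
        return (double) supported / responseBigrams.size();
    }

    private static Set<String> bigrams(String text) {
        // Whitespace is removed and case is folded before the bigrams are collected.
        String normalized = text.replaceAll("\\s+", "").toLowerCase();
        Set<String> result = new HashSet<>();
        for (int i = 0; i + 2 <= normalized.length(); i++) {
            result.add(normalized.substring(i, i + 2));
        }
        return result;
    }
}
```

A response whose ratio falls below some cutoff (which would have to be tuned on real traffic) could be flagged for review or regenerated with a stricter prompt.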

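5.4 Output Format Control

In practice, users often expect a RAG system to return structured output (JSON, tables, and so on). Large models do not control format precisely, however, and may produce malformed output or broken nesting.

Solutions:

- JSON extraction: pull the JSON portion out of the model output

- Format validation: verify that the output matches the expected schema

- Automatic repair: try to fix common formatting errors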

```java
// Output format control
import java.util.*;
import java.util.regex.*;

public class OutputFormatter {
    // Greedy match from the first '{' to the last '}' in the response.
    private static final Pattern JSON_BLOCK = Pattern.compile("\\{[\\s\\S]*\\}");

    public Map<String, Object> formatAsJson(String response, Map<String, Object> schema) {
        try {
            Matcher matcher = JSON_BLOCK.matcher(response);
            if (matcher.find()) {
                return parseJson(matcher.group());
            }
        } catch (Exception e) {
            // Parsing failed: fall through and return the raw response
        }
        if (schema != null) {
            return Map.of("raw", response, "schema", schema);
        }
        return Map.of("raw", response);
    }

    public String formatAsTable(String response) {
        List<String[]> tableRows = new ArrayList<>();
        for (String line : response.trim().split("\n")) {
            if (!line.contains("|")) {
                continue;
            }
            String[] cells = Arrays.stream(line.split("\\|"))
                    .map(String::trim)
                    .filter(s -> !s.isEmpty())
                    .toArray(String[]::new);
            // Skip empty rows and existing separator rows such as | --- | --- |
            boolean separator = Arrays.stream(cells).allMatch(c -> c.matches(":?-+:?"));
            if (cells.length > 0 && !separator) {
                tableRows.add(cells);
            }
        }
        if (tableRows.isEmpty()) {
            return response;
        }

        int columns = tableRows.get(0).length;
        StringBuilder md = new StringBuilder();
        // Header row
        md.append("| ").append(String.join(" | ", tableRows.get(0))).append(" |\n");
        // Separator row
        md.append("| ").append(String.join(" | ", Collections.nCopies(columns, "---"))).append(" |\n");
        // Data rows, padded or truncated to the header width
        for (int i = 1; i < tableRows.size(); i++) {
            String[] row = tableRows.get(i);
            String[] padded = Arrays.copyOf(row, columns);
            for (int c = row.length; c < columns; c++) {
                padded[c] = ""; // avoid printing "null" for missing cells
            }
            md.append("| ").append(String.join(" | ", padded)).append(" |\n");
        }
        return md.toString();
    }

    private Map<String, Object> parseJson(String json) {
        // Simplified placeholder: stores the JSON text as-is.
        // Real code would parse it with a JSON library.
        Map<String, Object> result = new HashMap<>();
        result.put("raw", json);
        return result;
    }
}

// Output validator
public class OutputValidator {
    private final Map<String, Object> schema;

    public OutputValidator(Map<String, Object> schema) {
        this.schema = schema;
    }

    @SuppressWarnings("unchecked")
    public Map<String, Object> validate(Map<String, Object> output) {
        List<String> errors = new ArrayList<>();
        if (output == null) {
            errors.add("Expected an object, got null");
            return Map.of("is_valid", false, "errors", errors);
        }
        List<String> required = (List<String>) schema.get("required");
        if (required != null) {
            for (String field : required) {
                if (!output.containsKey(field)) {
                    errors.add("Missing required field: " + field);
                }
            }
        }
        return Map.of("is_valid", errors.isEmpty(), "errors", errors);
    }
}
```
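The "automatic repair" step is described above but not shown in code. Here is a minimal sketch of what it might look like, assuming the most common failures are surrounding prose or Markdown fences, trailing commas, and output cut off before the closing brackets. `JsonRepair` and `repair` are hypothetical names, and the result should still be passed to a real JSON parser afterwards.

```java
import java.util.ArrayDeque;
import java.util.Deque;

// Hypothetical helper: best-effort cleanup of JSON-like model output.
final class JsonRepair {

    static String repair(String raw) {
        int start = raw.indexOf('{');
        if (start < 0) {
            return raw.trim(); // nothing that looks like a JSON object
        }
        // Drop everything before the first '{' (prose, ```json fences, ...).
        String body = raw.substring(start);
        StringBuilder out = new StringBuilder();
        Deque<Character> open = new ArrayDeque<>(); // unclosed '{' and '['
        boolean inString = false;
        boolean escaped = false;

        for (int i = 0; i < body.length(); i++) {
            char c = body.charAt(i);
            if (inString) {
                // Copy string contents verbatim, tracking escapes and the closing quote.
                out.append(c);
                if (escaped) {
                    escaped = false;
                } else if (c == '\\') {
                    escaped = true;
                } else if (c == '"') {
                    inString = false;
                }
            } else if (c == '"') {
                inString = true;
                out.append(c);
            } else if (c == '{' || c == '[') {
                open.push(c);
                out.append(c);
            } else if (c == '}' || c == ']') {
                removeTrailingComma(out); // "1,}" becomes "1}"
                open.pop();
                out.append(c);
                if (open.isEmpty()) {
                    break; // top-level object complete: ignore trailing text
                }
            } else {
                out.append(c);
            }
        }

        // Truncated output: close an open string, then any open brackets.
        if (inString) {
            out.append('"');
        }
        removeTrailingComma(out);
        while (!open.isEmpty()) {
            out.append(open.pop() == '{' ? '}' : ']');
        }
        return out.toString();
    }

    // Removes a trailing comma (and the whitespace after it), if present.
    private static void removeTrailingComma(StringBuilder sb) {
        int i = sb.length() - 1;
        while (i >= 0 && Character.isWhitespace(sb.charAt(i))) {
            i--;
        }
        if (i >= 0 && sb.charAt(i) == ',') {
            sb.setLength(i);
        }
    }
}
```

The sketch deliberately does not fix single quotes, unquoted keys, or mismatched bracket types; when the repaired text still fails to parse, re-prompting the model is usually the safer fallback.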

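6. Performance and Cost Issues

6.1 Vector Retrieval Latency Optimization

Retrieval latency directly affects user experience, especially in real-time conversation. High latency can come from an inefficient vector index, network bottlenecks, or distance computations over a large number of candidate vectors.

Optimization strategies:

- Index parameter tuning: adjust the parameters of indexes such as HNSW (ef, M, and so on)

- Query caching: cache results for identical or similar queries

- Batch processing: handle multiple queries at once to increase throughput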

```java
// Performance optimization
import java.util.*;
import java.util.concurrent.*;

public class RetrievalOptimizer {
    private final VectorStore vectorStore;

    public RetrievalOptimizer(VectorStore vectorStore) {
        this.vectorStore = vectorStore;
    }

    @SuppressWarnings("unchecked")
    public Map<String, Object> optimizeIndexParams(List<String> testQueries,
                                                   Map<String, List<?>> paramGrid) {
        List<Map<String, Object>> results = new ArrayList<>();
        List<Integer> efValues = (List<Integer>) paramGrid.getOrDefault("ef", Arrays.asList(64, 128, 256));
        List<Integer> mValues = (List<Integer>) paramGrid.getOrDefault("M", Arrays.asList(16, 32, 64));

        for (int ef : efValues) {
            for (int m : mValues) {
                // NOTE: the parameters must be applied to the index at this point
                // (store-specific; changing M usually means rebuilding the index).
                // Otherwise every combination measures the same configuration.
                Map<String, Object> params = Map.of("ef", ef, "M", m);
                List<Double> latencies = new ArrayList<>();
                for (String query : testQueries) {
                    long start = System.nanoTime();
                    vectorStore.search(query, 10);
                    long end = System.nanoTime();
                    latencies.add((end - start) / 1_000_000.0); // milliseconds
                }
                double avgLatency = latencies.stream()
                        .mapToDouble(Double::doubleValue).average().orElse(0);
                double p99Latency = latencies.stream()
                        .mapToDouble(Double::doubleValue).sorted()
                        .skip((long) (latencies.size() * 0.99))
                        .findFirst().orElse(0);
                results.add(Map.of(
                        "params", params,
                        "avg_latency", avgLatency,
                        "p99_latency", p99Latency));
            }
        }
        // Pick the combination with the lowest average latency (empty map if the grid is empty).
        return results.stream()
                .min(Comparator.comparingDouble(m -> (Double) m.get("avg_latency")))
                .orElse(Map.of());
    }
}

// Query cache with semantic (embedding-similarity) lookup
public class QueryCache {
    // LinkedHashMap keeps insertion order, so eviction below is FIFO.
    private final Map<String, CacheEntry> cache = new LinkedHashMap<>();
    private final int cacheSize;
    private final double similarityThreshold;

    public QueryCache(int cacheSize, double similarityThreshold) {
        this.cacheSize = cacheSize;
        this.similarityThreshold = similarityThreshold;
    }

    // Returns cached results for a sufficiently similar query, or null on a miss.
    // The lookup is a linear scan over all cached embeddings.
    public List<SearchResult> get(String query, EmbeddingService embeddingService) {
        float[] queryEmb = embeddingService.encode(query);
        for (CacheEntry entry : cache.values()) {
            if (cosineSimilarity(queryEmb, entry.embedding) >= similarityThreshold) {
                return entry.results;
            }
        }
        return null;
    }

    public void put(String query, float[] queryEmb, List<SearchResult> results) {
        if (cache.size() >= cacheSize) {
            String firstKey = cache.keySet().iterator().next();
            cache.remove(firstKey); // evict the oldest inserted entry
        }
        cache.put(query, new CacheEntry(queryEmb, results));
    }

    private double cosineSimilarity(float[] a, float[] b) {
        double dotProduct = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.length; i++) {
            dotProduct += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        // The small constant avoids division by zero for all-zero vectors.
        return dotProduct / (Math.sqrt(normA) * Math.sqrt(normB) + 1e-8);
    }

    private static class CacheEntry {
        final float[] embedding;
        final List<SearchResult> results;

        CacheEntry(float[] embedding, List<SearchResult> results) {
            this.embedding = embedding;
            this.results = results;
        }
    }
}
```
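The `QueryCache` above evicts the oldest inserted entry, whether or not it is still being hit. For exact-match caching of hot queries, least-recently-used eviction is often a better fit. A minimal sketch follows, using `LinkedHashMap` in access order; `LruCache` is a hypothetical name, and the class is not thread-safe as written.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical exact-match cache with least-recently-used eviction.
final class LruCache<K, V> extends LinkedHashMap<K, V> {
    private final int capacity;

    LruCache(int capacity) {
        // accessOrder = true: get() and put() move an entry to the "most recent" end.
        super(16, 0.75f, true);
        this.capacity = capacity;
    }

    // Called by LinkedHashMap after each insertion; returning true drops the
    // least recently used entry.
    @Override
    protected boolean removeEldestEntry(Map.Entry<K, V> eldest) {
        return size() > capacity;
    }
}
```

In a multi-threaded service the map would need external synchronization, for example by wrapping it with `Collections.synchronizedMap`.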

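6.2 Large-Scale Data Processing

When the vector data reaches hundreds of millions of entries, a traditional single-node setup may no longer meet performance and availability requirements, and a distributed vector database and index becomes the natural choice.

Distributed approaches:

- Data sharding: distribute data across nodes with consistent hashing

- Parallel queries: query multiple nodes at the same time and merge the results

- Incremental indexing: support real-time inserts, updates, and deletes without a full rebuild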

```java
// Distributed vector processing
import java.math.BigInteger;
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.util.*;
import java.util.concurrent.*;
import java.util.stream.Collectors;

public class DistributedVectorStore implements VectorStore {
    private final List<String> nodes;
    private final Map<String, VectorStore> nodeStores = new HashMap<>();

    public DistributedVectorStore(List<String> nodes) {
        this.nodes = nodes;
    }

    // Maps a vector ID to a node by hash modulo node count.
    // This is plain hash sharding: adding or removing a node remaps most keys.
    private String getShard(String vectorId) {
        try {
            MessageDigest md = MessageDigest.getInstance("MD5");
            byte[] digest = md.digest(vectorId.getBytes(StandardCharsets.UTF_8));
            int hash = new BigInteger(1, digest).intValue();
            // floorMod keeps the index non-negative even for negative hashes.
            return nodes.get(Math.floorMod(hash, nodes.size()));
        } catch (Exception e) {
            return nodes.get(Math.floorMod(vectorId.hashCode(), nodes.size()));
        }
    }

    @Override
    public List<SearchResult> search(String query, int topK) {
        ExecutorService executor = Executors.newFixedThreadPool(nodes.size());
        try {
            // Fan the query out to every node in parallel.
            List<Future<List<SearchResult>>> futures = new ArrayList<>();
            for (String node : nodes) {
                futures.add(executor.submit(() -> searchNode(node, query, topK)));
            }
            List<SearchResult> results = new ArrayList<>();
            for (Future<List<SearchResult>> future : futures) {
                try {
                    results.addAll(future.get());
                } catch (Exception e) {
                    // A failed node is skipped; its results are missing from the merge
                }
            }
            // Merge: keep the global top K by score.
            return results.stream()
                    .sorted(Comparator.comparing(SearchResult::getScore).reversed())
                    .limit(topK)
                    .collect(Collectors.toList());
        } finally {
            executor.shutdown();
        }
    }

    private List<SearchResult> searchNode(String node, String query, int topK) {
        // A real implementation would connect to the given node
        return new ArrayList<>();
    }
}

// Incremental indexer
public class IncrementalIndexer {
    private final VectorStore vectorStore;
    private final int batchSize;
    private final List<Map<String, Object>> pending = new ArrayList<>();

    public IncrementalIndexer(VectorStore vectorStore, int batchSize) {
        this.vectorStore = vectorStore;
        this.batchSize = batchSize;
    }

    // Buffers a vector and flushes once a full batch has accumulated.
    public void add(Map<String, Object> vector) {
        pending.add(vector);
        if (pending.size() >= batchSize) {
            flush();
        }
    }

    public void flush() {
        if (!pending.isEmpty()) {
            // Bulk-insert into the vector store
            // vectorStore.addVectors(pending);
            pending.clear();
        }
    }
}
```
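The sharding strategy above mentions consistent hashing, while `getShard` picks a node by hash modulo node count. For comparison, here is a minimal sketch of a consistent hash ring with virtual nodes, built on `TreeMap`. `ConsistentHashRing`, `nodeFor`, `removeNode`, and the `node#i` virtual-node naming are assumptions made for illustration.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical consistent hash ring: each node owns several points on the ring,
// and a key belongs to the first node point at or after the key's hash.
final class ConsistentHashRing {
    private final TreeMap<Long, String> ring = new TreeMap<>();

    ConsistentHashRing(List<String> nodes, int virtualNodes) {
        for (String node : nodes) {
            for (int i = 0; i < virtualNodes; i++) {
                ring.put(hash(node + "#" + i), node);
            }
        }
    }

    // Returns the node responsible for the key, wrapping around the ring if needed.
    String nodeFor(String key) {
        if (ring.isEmpty()) {
            throw new IllegalStateException("ring has no nodes");
        }
        Map.Entry<Long, String> entry = ring.ceilingEntry(hash(key));
        return (entry != null ? entry : ring.firstEntry()).getValue();
    }

    // Removes all ring points of a node; only keys owned by that node move.
    void removeNode(String node) {
        ring.values().removeIf(node::equals);
    }

    // Uses the first 8 bytes of the MD5 digest as a 64-bit ring position.
    private static long hash(String s) {
        try {
            byte[] digest = MessageDigest.getInstance("MD5")
                    .digest(s.getBytes(StandardCharsets.UTF_8));
            long h = 0;
            for (int i = 0; i < 8; i++) {
                h = (h << 8) | (digest[i] & 0xFF);
            }
            return h;
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 not available", e);
        }
    }
}
```

The point of the ring is that removing or adding one node relocates only the keys adjacent to that node's points, whereas modulo sharding relocates most keys when the node count changes.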

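6.3 Cost Control

The cost of a RAG system comes mainly from API call fees, compute resources, and storage. Effective cost control means finding a balance between quality and efficiency.

Cost optimization strategies:

- Model selection: choose the most cost-effective model for the requirements

- Local deployment: use open-source models to avoid API fees

- Caching: cache results of popular queries to avoid repeated computation

- Quantization: use vector quantization to reduce storage cost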

```java
// Cost control
import java.util.*;

public class CostController {
    private final double budgetLimit;
    private double totalCost = 0;
    private final Map<String, Double> costHistory = new LinkedHashMap<>();

    public CostController(double budgetLimit) {
        this.budgetLimit = budgetLimit;
    }

    public double calculateEmbeddingCost(int numTexts, String model) {
        String name = model.toLowerCase();
        if (name.contains("openai") || name.contains("cohere")) {
            // Illustrative figures: about 500 tokens per text at 0.0001 per 1K tokens
            return (numTexts * 500.0 / 1000.0) * 0.0001;
        }
        return 0; // locally deployed model: no API fee
    }

    public double calculateLLMCost(int numRequests, int avgTokens) {
        // Illustrative price of 0.01 per 1K tokens; cast first to avoid int overflow
        return ((double) numRequests * avgTokens / 1000.0) * 0.01;
    }

    public void track(String operation, double cost) {
        totalCost += cost;
        // Accumulate per operation instead of overwriting earlier entries
        costHistory.merge(operation, cost, Double::sum);
    }

    public boolean checkBudget() {
        return totalCost < budgetLimit;
    }

    public Map<String, Object> getReport() {
        double usagePct = budgetLimit > 0 ? (totalCost / budgetLimit) * 100 : 0.0;
        return Map.of(
                "total_cost", totalCost,
                "budget_remaining", budgetLimit - totalCost,
                "usage_pct", usagePct);
    }
}
```
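The quantization strategy listed above is not covered by `CostController`. As an illustration, the sketch below shows 8-bit scalar quantization, which stores each dimension in one byte instead of a four-byte float. `ScalarQuantizer` is a hypothetical name, and the value range `[min, max]` is assumed to be known in advance, for example from a sample of the embeddings.

```java
// Hypothetical 8-bit scalar quantizer: maps floats in [min, max] to bytes
// and back, trading a small reconstruction error for 4x less storage.
final class ScalarQuantizer {

    // Quantizes each component to one of 256 evenly spaced levels.
    static byte[] quantize(float[] vector, float min, float max) {
        if (max <= min) {
            throw new IllegalArgumentException("max must be greater than min");
        }
        float scale = (max - min) / 255f;
        byte[] quantized = new byte[vector.length];
        for (int i = 0; i < vector.length; i++) {
            // Clamp out-of-range values so they map to the nearest end level.
            float clamped = Math.max(min, Math.min(max, vector[i]));
            int level = Math.round((clamped - min) / scale); // 0..255
            quantized[i] = (byte) (level - 128);             // stored as -128..127
        }
        return quantized;
    }

    // Reconstructs approximate floats; the error is at most about half a level.
    static float[] dequantize(byte[] quantized, float min, float max) {
        float scale = (max - min) / 255f;
        float[] vector = new float[quantized.length];
        for (int i = 0; i < quantized.length; i++) {
            vector[i] = (quantized[i] + 128) * scale + min;
        }
        return vector;
    }
}
```

Many vector databases ship their own quantization options (scalar or product quantization); a hand-rolled version like this is mainly useful for understanding the storage and accuracy trade-off.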

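7. Engineering Issues

7.1 Incremental Data Updates

In production, the knowledge base is not static and needs continuous updates. Handling added, modified, and deleted documents efficiently without disrupting the online service is an important engineering problem.

Incremental update strategies:

- Content hash: compare content hashes to decide whether a document has changed

- Version management: record document versions to support rollback

- Change log: record every change operation to support auditing and replay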

```java
// Incremental update management
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.time.Instant;
import java.util.*;

public class IncrementalUpdateManager {
    private final VectorStore vectorStore;
    private final EmbeddingService embeddingService;
    private final Map<String, DocVersion> versions = new HashMap<>();
    private final List<ChangeLog> changeLog = new ArrayList<>();

    public IncrementalUpdateManager(VectorStore vectorStore, EmbeddingService embeddingService) {
        this.vectorStore = vectorStore;
        this.embeddingService = embeddingService;
    }

    // Creates the document, updates it if its content changed, or skips it if unchanged.
    public Map<String, Object> addDocument(Document document) {
        String docId = document.getId();
        String contentHash = hash(document.getContent());
        if (versions.containsKey(docId)) {
            DocVersion old = versions.get(docId);
            if (old.hash.equals(contentHash)) {
                return Map.of("status", "skipped", "reason", "unchanged");
            }
            return updateDocument(document, contentHash, old);
        }
        return createDocument(document, contentHash);
    }

    private Map<String, Object> createDocument(Document document, String contentHash) {
        Map<String, Object> vector = buildVector(document);
        // vectorStore.addVectors(Collections.singletonList(vector));
        long now = Instant.now().toEpochMilli();
        versions.put(document.getId(), new DocVersion(contentHash, 1, now));
        changeLog.add(new ChangeLog("add", document.getId(), now));
        return Map.of("status", "created");
    }

    private Map<String, Object> updateDocument(Document document, String contentHash, DocVersion oldVersion) {
        Map<String, Object> vector = buildVector(document);
        // vectorStore.updateVector(document.getId(), vector);
        long now = Instant.now().toEpochMilli();
        versions.put(document.getId(), new DocVersion(contentHash, oldVersion.version + 1, now));
        // Updates are logged too, so that the change log can be replayed completely
        changeLog.add(new ChangeLog("update", document.getId(), now));
        return Map.of("status", "updated");
    }

    public Map<String, Object> deleteDocument(String docId) {
        if (!versions.containsKey(docId)) {
            return Map.of("status", "not_found");
        }
        // vectorStore.deleteVector(docId);
        versions.remove(docId);
        changeLog.add(new ChangeLog("delete", docId, Instant.now().toEpochMilli()));
        return Map.of("status", "deleted");
    }

    // Re-embeds the document and packs it into the record sent to the vector store.
    private Map<String, Object> buildVector(Document document) {
        Map<String, Object> vector = new HashMap<>();
        vector.put("id", document.getId());
        vector.put("content", document.getContent());
        vector.put("embedding", embeddingService.encode(document.getContent()));
        return vector;
    }

    // SHA-256 of the content as a hex string; falls back to hashCode() if unavailable.
    private String hash(String content) {
        try {
            MessageDigest md = MessageDigest.getInstance("SHA-256");
            byte[] digest = md.digest(content.getBytes(StandardCharsets.UTF_8));
            StringBuilder sb = new StringBuilder();
            for (byte b : digest) {
                sb.append(String.format("%02x", b));
            }
            return sb.toString();
        } catch (Exception e) {
            return String.valueOf(content.hashCode());
        }
    }

    private static class DocVersion {
        final String hash;
        final int version;
        final long createdAt;

        DocVersion(String hash, int version, long createdAt) {
            this.hash = hash;
            this.version = version;
            this.createdAt = createdAt;
        }
    }

    private static class ChangeLog {
        final String operation;
        final String docId;
        final long timestamp;

        ChangeLog(String operation, String docId, long timestamp) {
            this.operation = operation;
            this.docId = docId;
            this.timestamp = timestamp;
        }
    }
}
```
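The change log is described as supporting replay. A minimal sketch of what replay could mean follows, assuming each log entry also carries the content hash of the document at that point (the `ChangeLog` class above does not store it). `ChangeLogReplayer` and `Change` are hypothetical names.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical replayer: rebuilds the "document ID -> content hash" state
// by applying change-log entries in order.
final class ChangeLogReplayer {

    // One change-log entry; contentHash is unused for deletes.
    static final class Change {
        final String operation;
        final String docId;
        final String contentHash;

        Change(String operation, String docId, String contentHash) {
            this.operation = operation;
            this.docId = docId;
            this.contentHash = contentHash;
        }
    }

    static Map<String, String> replay(List<Change> log) {
        Map<String, String> state = new LinkedHashMap<>();
        for (Change change : log) {
            switch (change.operation) {
                case "add":
                case "update":
                    state.put(change.docId, change.contentHash);
                    break;
                case "delete":
                    state.remove(change.docId);
                    break;
                default:
                    throw new IllegalArgumentException("unknown operation: " + change.operation);
            }
        }
        return state;
    }
}
```

Replaying the log against an empty state reproduces the current version table, which is one way to rebuild an index on a new node or to audit how a document reached its current state.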

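7.2 Unifying Multiple Data Sources

In enterprise settings, data may come from many different sources: file systems, databases, APIs, web pages, and so on. Unifying these heterogeneous sources into a consistent retrieval experience is a key part of engineering a RAG system.

Multi-source approach:

- Abstract data source interface: define a single interface for fetching documents

- Adapter pattern: implement an adapter for each data source

- Unified loader: combine all data sources and produce one output format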

```java
// Unified loading from multiple data sources
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.*;
import java.util.*;

public interface DataSource extends Iterable<Document> {
    String getSourceName();
}

// File system data source
public class FileSystemSource implements DataSource {
    private final String basePath;
    private final List<String> patterns; // glob patterns such as "*.md"

    public FileSystemSource(String basePath, List<String> patterns) {
        this.basePath = basePath;
        this.patterns = patterns;
    }

    @Override
    public Iterator<Document> iterator() {
        List<Document> documents = new ArrayList<>();
        Path path = Paths.get(basePath);
        for (String pattern : patterns) {
            // Lists only the files directly under basePath (not recursive);
            // try-with-resources closes the directory stream.
            try (DirectoryStream<Path> stream = Files.newDirectoryStream(path, pattern)) {
                for (Path filePath : stream) {
                    if (Files.isRegularFile(filePath)) {
                        String content = new String(Files.readAllBytes(filePath), StandardCharsets.UTF_8);
                        documents.add(new Document(filePath.toString(), content));
                    }
                }
            } catch (IOException e) {
                System.err.println("Failed to read " + basePath + " (" + pattern + "): " + e.getMessage());
            }
        }
        return documents.iterator();
    }

    @Override
    public String getSourceName() {
        return "filesystem";
    }
}

// Database data source
public class DatabaseSource implements DataSource {
    private final String connectionString;
    private final String query;

    public DatabaseSource(String connectionString, String query) {
        this.connectionString = connectionString;
        this.query = query;
    }

    @Override
    public Iterator<Document> iterator() {
        // Placeholder: run the query and map rows to documents
        return Collections.emptyIterator();
    }

    @Override
    public String getSourceName() {
        return "database";
    }
}

// Unified data loader
public class UnifiedDataLoader {
    private final List<DataSource> sources = new ArrayList<>();

    public void addSource(DataSource source) {
        sources.add(source);
    }

    // Loads from every registered source; a non-null filter list restricts the source names.
    public List<Document> loadAll(List<String> filters) {
        List<Document> allDocs = new ArrayList<>();
        for (DataSource source : sources) {
            if (filters != null && !filters.contains(source.getSourceName())) {
                continue;
            }
            try {
                for (Document doc : source) {
                    allDocs.add(doc);
                }
            } catch (Exception e) {
                // One failing source should not block the others
                System.err.println("Failed to load data source "
                        + source.getSourceName() + ": " + e.getMessage());
            }
        }
        return allDocs;
    }
}
```

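7.3 Monitoring and Observability

A production RAG system needs thorough monitoring so that problems can be detected and located quickly and quality can be improved continuously.

Metrics to monitor:

- Performance metrics: retrieval latency, throughput, error rate

- Quality metrics: recall, precision, hit rate

- Business metrics: daily active users, query success rate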

```java
// Monitoring
import java.util.*;
import java.util.concurrent.*;
import java.util.concurrent.atomic.AtomicLong;
import java.util.stream.Collectors;

public class RAGMonitor {
    // Thread-safe containers: metrics may be recorded from many request threads.
    private final Map<String, List<Double>> metrics = new ConcurrentHashMap<>();
    private final AtomicLong totalRequests = new AtomicLong();
    private final AtomicLong failedRequests = new AtomicLong();

    public RAGMonitor() {
        metrics.put("retrieval_latency", new CopyOnWriteArrayList<>());
        metrics.put("retrieval_recall", new CopyOnWriteArrayList<>());
        metrics.put("generation_latency", new CopyOnWriteArrayList<>());
    }

    public void recordRetrieval(double latency, Double recall) {
        metrics.get("retrieval_latency").add(latency);
        if (recall != null) {
            metrics.get("retrieval_recall").add(recall);
        }
        totalRequests.incrementAndGet();
    }

    public void recordGeneration(double latency) {
        metrics.get("generation_latency").add(latency);
    }

    public void recordFailure(Exception error) {
        failedRequests.incrementAndGet();
    }

    public Map<String, Object> getSummary() {
        long total = totalRequests.get();
        long failed = failedRequests.get();
        Map<String, Object> summary = new LinkedHashMap<>();
        summary.put("total_requests", total);
        summary.put("failed_requests", failed);
        summary.put("failure_rate", (double) failed / Math.max(1L, total));

        List<Double> latencies = metrics.get("retrieval_latency");
        if (!latencies.isEmpty()) {
            summary.put("avg_retrieval_latency", calculateAvg(latencies));
            summary.put("p95_retrieval_latency", calculatePercentile(latencies, 95));
        }
        if (!metrics.get("retrieval_recall").isEmpty()) {
            summary.put("avg_recall", calculateAvg(metrics.get("retrieval_recall")));
        }
        return summary;
    }

    private double calculateAvg(List<Double> values) {
        return values.stream().mapToDouble(Double::doubleValue).average().orElse(0);
    }

    // Nearest-rank percentile over a sorted copy of the values.
    private double calculatePercentile(List<Double> values, int percentile) {
        List<Double> sorted = values.stream().sorted().collect(Collectors.toList());
        int index = (int) Math.ceil(percentile / 100.0 * sorted.size()) - 1;
        return sorted.get(Math.max(0, index));
    }
}

// Retrieval quality evaluator
public class RetrievalEvaluator {
    private final VectorStore vectorStore;

    public RetrievalEvaluator(VectorStore vectorStore) {
        this.vectorStore = vectorStore;
    }

    public Map<String, Double> evaluate(List<RecallOptimizer.TestCase> testCases) {
        List<Double> precisions = new ArrayList<>();
        List<Double> recalls = new ArrayList<>();
        List<Double> mrrs = new ArrayList<>();

        for (RecallOptimizer.TestCase testCase : testCases) {
            Set<String> relevant = testCase.relevantIds;
            List<SearchResult> results = vectorStore.search(testCase.query, 20);
            Set<String> retrieved = results.stream()
                    .map(SearchResult::getId)
                    .collect(Collectors.toSet());

            long tp = retrieved.stream().filter(relevant::contains).count();
            precisions.add(retrieved.isEmpty() ? 0 : (double) tp / retrieved.size());
            recalls.add(relevant.isEmpty() ? 0 : (double) tp / relevant.size());

            // Reciprocal rank of the first relevant result (0 if none was retrieved)
            double rr = 0;
            for (int i = 0; i < results.size(); i++) {
                if (relevant.contains(results.get(i).getId())) {
                    rr = 1.0 / (i + 1);
                    break;
                }
            }
            mrrs.add(rr);
        }

        Map<String, Double> result = new LinkedHashMap<>();
        result.put("precision", calculateAvg(precisions));
        result.put("recall", calculateAvg(recalls));
        result.put("mrr", calculateAvg(mrrs));
        // Share of queries with at least one relevant result; 0 when there are no test cases
        result.put("hit_rate", mrrs.isEmpty() ? 0.0
                : (double) mrrs.stream().filter(r -> r > 0).count() / mrrs.size());
        return result;
    }

    private double calculateAvg(List<Double> values) {
        return values.stream().mapToDouble(Double::doubleValue).average().orElse(0);
    }
}
```

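8. Evaluation and Optimization

8.1 RAG Evaluation Metrics

A sound evaluation framework is the foundation for optimizing a RAG system. Evaluation has to cover both stages: retrieval and generation.

Core evaluation metrics: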

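| Dimension | Metric | Description |
|---|---|---|
| Retrieval quality | Precision@K | Proportion of relevant documents among the top-K results |
| Retrieval quality | Recall@K | Proportion of all relevant documents that are retrieved |
| Retrieval quality | MRR | Reciprocal of the rank of the first relevant document, averaged over queries |
| Retrieval quality | Hit Rate | Proportion of queries for which at least one relevant document is retrieved |
| Generation quality | Answer Relevance | How relevant the answer is to the question |
| Generation quality | Faithfulness | How faithful the answer is to the retrieved content |
| End to end | RAGAs | Evaluation framework covering both retrieval and generation |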
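To make the retrieval metrics concrete, here is a small worked sketch for a single query. `RetrievalMetrics` and its method names are hypothetical. Precision is divided by the number of results actually inspected (at most K), matching the per-query calculation in `RetrievalEvaluator`; some definitions divide by K instead.

```java
import java.util.List;
import java.util.Set;

// Hypothetical helper computing per-query retrieval metrics.
final class RetrievalMetrics {

    // Counts relevant documents among the first min(k, size) ranked results.
    private static long hitsAtK(List<String> ranked, Set<String> relevant, int k) {
        int n = Math.min(k, ranked.size());
        return ranked.subList(0, n).stream().filter(relevant::contains).count();
    }

    // Share of the inspected top-K results that are relevant.
    static double precisionAtK(List<String> ranked, Set<String> relevant, int k) {
        int n = Math.min(k, ranked.size());
        return n == 0 ? 0.0 : (double) hitsAtK(ranked, relevant, k) / n;
    }

    // Share of all relevant documents that appear in the top-K results.
    static double recallAtK(List<String> ranked, Set<String> relevant, int k) {
        return relevant.isEmpty() ? 0.0 : (double) hitsAtK(ranked, relevant, k) / relevant.size();
    }

    // 1 / rank of the first relevant result, or 0 if none is relevant.
    // MRR is the mean of this value over all queries.
    static double reciprocalRank(List<String> ranked, Set<String> relevant) {
        for (int i = 0; i < ranked.size(); i++) {
            if (relevant.contains(ranked.get(i))) {
                return 1.0 / (i + 1);
            }
        }
        return 0.0;
    }
}
```

For the ranking `[d3, d1, d7, d2, d9]` with relevant set `{d1, d2, d5}` and K = 5, two of the five results are relevant, so Precision@5 = 2/5 = 0.4 and Recall@5 = 2/3. The first relevant result is at rank 2, so the reciprocal rank is 0.5, and the query counts as a hit.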

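Evaluation methods:

- Offline evaluation: compute the metrics on a test set

- Online evaluation: collect user feedback and run A/B tests

- Automated evaluation: integrate into the CI/CD pipeline to monitor quality continuously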

```java
// RAG evaluation
import java.util.*;
import java.util.stream.Collectors;

public class RAGEvaluator {
    private final VectorStore vectorStore;
    private final LLMService llmService;

    public RAGEvaluator(VectorStore vectorStore, LLMService llmService) {
        this.vectorStore = vectorStore;
        this.llmService = llmService;
    }

    // Merges retrieval and generation metrics into a single report.
    public Map<String, Object> evaluate(List<RecallOptimizer.TestCase> testCases) {
        Map<String, Object> results = new LinkedHashMap<>(evaluateRetrieval(testCases));
        results.putAll(evaluateGeneration(testCases));
        return results;
    }

    private Map<String, Double> evaluateRetrieval(List<RecallOptimizer.TestCase> testCases) {
        RetrievalEvaluator evaluator = new RetrievalEvaluator(vectorStore);
        return evaluator.evaluate(testCases);
    }

    private Map<String, Object> evaluateGeneration(List<RecallOptimizer.TestCase> testCases) {
        List<Double> relevances = new ArrayList<>();
        for (RecallOptimizer.TestCase testCase : testCases) {
            List<SearchResult> results = vectorStore.search(testCase.query, 5);
            String context = results.stream()
                    .map(SearchResult::getContent)
                    .collect(Collectors.joining("\n\n"));
            String prompt = String.format(
                    "Answer based on the following content: %s\n\nQuestion: %s",
                    context, testCase.query);
            // String response = llmService.generate(prompt);
            // Simplified placeholder: a fixed score instead of scoring the response
            relevances.add(0.8);
        }
        return Map.of("answer_relevance", calculateAvg(relevances));
    }

    private double calculateAvg(List<Double> values) {
        return values.stream().mapToDouble(Double::doubleValue).average().orElse(0);
    }
}
```

9. Summary

RAG optimization roadmap

1. Basic setup
   - Document loading → chunking → embedding → vector storage
2. Retrieval optimization (recall)
   - Optimize the chunking strategy
   - Replace or fine-tune the embedding model
   - Hybrid retrieval (vector + BM25)
   - Query expansion and rewriting
3. Generation optimization
   - Context enrichment
   - Length management
   - Hallucination control
   - Format control
4. Performance optimization
   - Index parameter tuning
   - Query caching
   - Distributed scaling
5. Cost optimization
   - Model selection
   - Caching strategy
   - Quantization
6. Engineering
   - Incremental updates
   - Multiple data sources
   - Monitoring and evaluation

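Quick troubleshooting table

| Symptom | Likely cause | Solution |
|---|---|---|
| Low recall | Chunks too large or too small | Adjust the chunk size and use overlap |
| Low recall | Poor embedding quality | Replace or fine-tune the model |
| Low recall | Keywords not matched | Enable hybrid retrieval |
| Relevant documents ranked low | Weak ranking strategy | Add a reranking step |
| Incomplete answers | Insufficient context | Retrieve more documents |
| Hallucinated answers | Inadequate prompt | Improve the system prompt |
| Malformed output | Unstable model output | Add output validation |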
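As an illustration of the first row, the following is a minimal sketch of fixed-size chunking with overlap. `OverlapChunker` is a hypothetical name, and sizes are counted in characters for simplicity; production systems often split on tokens or sentence boundaries instead.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical chunker: fixed-size character windows where consecutive
// chunks share `overlap` characters, so text cut at a boundary still
// appears whole in one of the neighboring chunks.
final class OverlapChunker {

    static List<String> chunk(String text, int chunkSize, int overlap) {
        if (chunkSize <= 0 || overlap < 0 || overlap >= chunkSize) {
            throw new IllegalArgumentException("require chunkSize > overlap >= 0");
        }
        List<String> chunks = new ArrayList<>();
        int step = chunkSize - overlap; // how far the window advances each time
        for (int start = 0; start < text.length(); start += step) {
            int end = Math.min(text.length(), start + chunkSize);
            chunks.add(text.substring(start, end));
            if (end == text.length()) {
                break; // the last window reached the end of the text
            }
        }
        return chunks;
    }
}
```

For example, `chunk("abcdefghij", 4, 2)` yields `[abcd, cdef, efgh, ghij]`: each chunk repeats the last two characters of the previous one.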

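Advanced directions

- Multimodal RAG: retrieval over images, audio, and video

- Graph RAG: knowledge graphs combined with retrieval

- Agentic RAG: the system decides on its own when and how to retrieve

- Real-time RAG: incremental updates from streaming data

Good architecture means choosing the right design for each scenario: no architecture is perfect, only suitable.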

AI行业迎来前所未有的爆发式增长:从DeepSeek百万年薪招聘AI研究员,到百度、阿里、腾讯等大厂疯狂布局AI Agent,再到国家政策大力扶持数字经济和AI人才培养,所有信号都在告诉我们:AI的黄金十年,真的来了!

在行业火爆之下,AI人才争夺战也日趋白热化,其就业前景一片蓝海!

我给大家准备了一份全套的《AI大模型零基础入门+进阶学习资源包》,包括AI大模型入门学习思维导图、精品AI大模型学习书籍手册、视频教程、实战学习等录播视频免费分享出来。😝有需要的小伙伴,可以VX扫描下方二维码免费领取🆓

人才缺口巨大

人力资源社会保障部有关报告显示,据测算,当前,****我国人工智能人才缺口超过500万,****供求比例达1∶10。脉脉最新数据也显示:AI新发岗位量较去年初暴增29倍,超1000家AI企业释放7.2万+岗位……

单拿今年的秋招来说,各互联网大厂释放出来的招聘信息中,我们就能感受到AI浪潮,比如百度90%的技术岗都与AI相关!

就业薪资超高

在旺盛的市场需求下,AI岗位不仅招聘量大,薪资待遇更是“一骑绝尘”。企业为抢AI核心人才,薪资给的非常慷慨,过去一年,懂AI的人才普遍涨薪40%+!

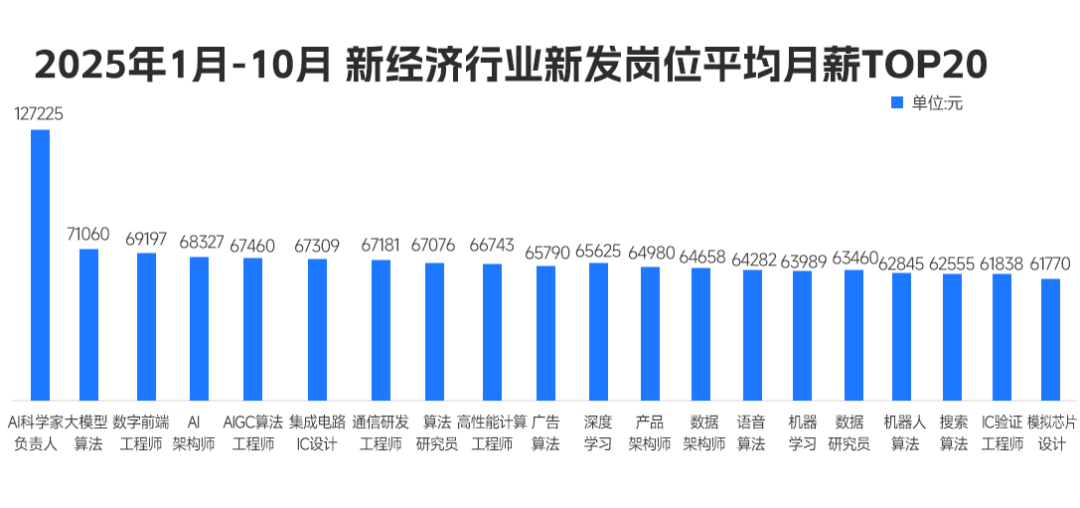

脉脉高聘发布的《2025年度人才迁徙报告》显示,在2025年1月-10月的高薪岗位Top20排行中,AI相关岗位占了绝大多数,并且平均薪资月薪都超过6w!

在去年的秋招中,小红书给算法相关岗位的薪资为50k起,字节开出228万元的超高年薪,据《2025年秋季校园招聘白皮书》,AI算法类平均年薪达36.9万,遥遥领先其他行业!

总结来说,当前人工智能岗位需求多,薪资高,前景好。在职场里,选对赛道就能赢在起跑线。抓住AI风口,轻松实现高薪就业!

但现实却是,仍有很多同学不知道如何抓住AI机遇,会遇到很多就业难题,比如:

❌ 技术过时:只会CRUD的开发者,在AI浪潮中沦为“职场裸奔者”;

❌ 薪资停滞:初级岗位内卷到白菜价,传统开发3年经验薪资涨幅不足15%;

❌ 转型无门:想学AI却找不到系统路径,83%自学党中途放弃。

他们的就业难题解决问题的关键在于:不仅要选对赛道,更要跟对老师!

我给大家准备了一份全套的《AI大模型零基础入门+进阶学习资源包》,包括AI大模型入门学习思维导图、精品AI大模型学习书籍手册、视频教程、实战学习等录播视频免费分享出来。😝有需要的小伙伴,可以VX扫描下方二维码免费领取🆓

AtomGit 是由开放原子开源基金会联合 CSDN 等生态伙伴共同推出的新一代开源与人工智能协作平台。平台坚持“开放、中立、公益”的理念,把代码托管、模型共享、数据集托管、智能体开发体验和算力服务整合在一起,为开发者提供从开发、训练到部署的一站式体验。

更多推荐

已为社区贡献144条内容

已为社区贡献144条内容

所有评论(0)