YOLO26: Printing the Model Structure Configuration and Viewing Detailed Network Parameters (Parameter Count and GFLOPs)

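About the Author

Hi everyone, I'm A Xu (阿旭).

I focus on computer vision, developing projects in object detection, image classification, image segmentation and object tracking, and I also offer model comparison experiments, Q&A and tutoring.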


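Preface

This article shows how to print and inspect the YOLO26 network's structure configuration, the details of every layer, and model statistics such as parameter count and computation (GFLOPs). The same method works for improved (modified) architectures, so it can be used to compare parameter counts and computation across different models.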

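Viewing the Config File Structure

Every time YOLO26 training starts, the model structure information is printed, as shown in the figure above. How can we print this configuration information ourselves? Reading the source code shows that it is printed inside the `DetectionModel` class. So we can simply instantiate that class with our own model config file: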

```python
from ultralytics.nn.tasks import DetectionModel

# Path to the model architecture config file
yaml_path = 'ultralytics/cfg/models/26/yolo26n.yaml'

# Path to an improved model architecture config
# yaml_path = 'ultralytics/cfg/models/v8/yolo26n-CBAM.yaml'

# Pass the architecture config as cfg; nc is the number of detection classes
DetectionModel(cfg=yaml_path, nc=80)
```

Here `cfg` is the path to the network's YAML config file, and `nc` is the number of classes your model is trained on.
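Since `nc` only feeds the detection head, you can see why the parameter count shifts slightly with the class count by recomputing the classification branch (`cv3`) by hand. This is a sketch under assumptions read off the detailed printout further down, not taken from the Ultralytics source: per scale, the branch is depthwise 3×3 Conv, 1×1 Conv, depthwise 3×3 Conv, 1×1 Conv, then a plain 1×1 `Conv2d` with bias; the head input channels for yolo26n are 64/128/256; and the hidden width is `c3 = max(64, min(nc, 100))`. The function names `conv_bn` and `cls_branch_params` are hypothetical.

```python
def conv_bn(c_in, c_out, k, groups=1):
    """Parameters of a Conv block as printed above: bias-free Conv2d + BatchNorm2d."""
    return c_out * (c_in // groups) * k * k + 2 * c_out


def cls_branch_params(nc, ch=(64, 128, 256)):
    """Parameters of the cv3 classification branches (one per detection scale).

    Assumption: hidden width c3 = max(ch[0], min(nc, 100)), inferred from the printout.
    """
    c3 = max(ch[0], min(nc, 100))
    total = 0
    for x in ch:
        total += conv_bn(x, x, 3, groups=x)      # depthwise 3x3 on the scale's input channels
        total += conv_bn(x, c3, 1)               # pointwise 1x1 projection to c3
        total += conv_bn(c3, c3, 3, groups=c3)   # depthwise 3x3
        total += conv_bn(c3, c3, 1)              # pointwise 1x1
        total += c3 * nc + nc                    # final Conv2d(c3, nc, 1) with bias
    return total


for nc in (1, 20, 80):
    print(nc, cls_branch_params(nc))
```

For `nc=80`, this reproduces the sum of all `model.23.cv3.*` rows in the detailed listing. That listing also contains an identical `one2one_cv3` copy, so the nc-dependent part is counted twice in the model total.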

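After running the code, the output is as follows:

Note on the output: `nc` is set to 80 here. With a different number of classes, the parameter count will differ slightly.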

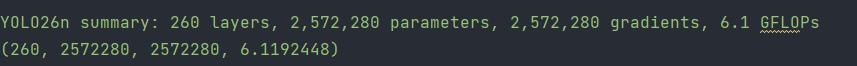

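As you can see, the model config has 24 rows (indices 0 to 23). `params` is the parameter count of each layer, `module` is the layer's module name, and `arguments` are the arguments passed to that module. The final summary line gives the overall figures: the model has 260 layers, 2,572,280 parameters and 6.1 GFLOPs.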
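The per-layer numbers in these summaries follow directly from tensor shapes. As a sanity check, the sketch below recomputes the parameters of a Conv2d + BatchNorm2d pair and an approximate FLOP count for one convolution. Assumptions: a square 640×640 input, padding `k // 2`, a stride of 2 for `model.0` (as in earlier YOLO backbones), and the convention that reported FLOPs are 2 × multiply-accumulates (our understanding of Ultralytics' thop-based FLOP helper). BatchNorm and activation costs are ignored, so summing this over all layers will not reproduce the 6.1 GFLOPs total exactly. The helper names are hypothetical.

```python
def conv2d_params(c_in, c_out, k, groups=1, bias=False):
    """Parameters of nn.Conv2d: the weight shape is [c_out, c_in // groups, k, k]."""
    return c_out * (c_in // groups) * k * k + (c_out if bias else 0)


def bn2d_params(c):
    """Learnable parameters of nn.BatchNorm2d: one weight and one bias per channel."""
    return 2 * c


def conv2d_flops(c_in, c_out, k, in_size, stride=1, groups=1):
    """Approximate FLOPs (2 x MACs) of one convolution on a square input."""
    pad = k // 2
    out_size = (in_size + 2 * pad - k) // stride + 1
    # Each output pixel costs one MAC per weight (bias ignored).
    macs = conv2d_params(c_in, c_out, k, groups) * out_size * out_size
    return 2 * macs


# model.0 in the detailed listing: Conv2d weight [16, 3, 3, 3] followed by BatchNorm2d(16)
p0 = conv2d_params(3, 16, 3) + bn2d_params(16)
# Assumption: model.0 uses stride 2, so a 640x640 input becomes 320x320
f0 = conv2d_flops(3, 16, 3, in_size=640, stride=2)
print(p0, f0 / 1e9)
```

`p0` comes out to 464 (432 conv weights plus 16 + 16 BatchNorm parameters), matching the first three parameter rows of the detailed listing.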

Viewing the Detailed Network Structure

The output above only covers the per-row information from the config file. To see finer-grained details, use `model.info()` directly:

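Load a trained model or a model architecture config file:

```python
from ultralytics import YOLO

# Load a trained model or a model architecture config file
model = YOLO('best.pt')
# model = YOLO('ultralytics/cfg/models/26/yolo26n.yaml')
```

Print the model parameter summary:

```python
# Print the model parameter summary
print(model.info())
```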

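The result is as follows (`info()` logs the summary itself and also returns it as a tuple of layers, parameters, gradients and GFLOPs, which `print()` then displays):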

Print the structure of every layer:

Just add the `detailed` argument to the code above:

```python
print(model.info(detailed=True))
```

The printed output is as follows:

```text
layer name type gradient parameters shape mu sigma
0 model.0.conv.weight Conv2d True 432 [16, 3, 3, 3] 0.00561 0.11 float32
1 model.0.bn.weight BatchNorm2d True 16 [16] 1 0 float32
1 model.0.bn.bias BatchNorm2d True 16 [16] 0 0 float32
2 model.0.act SiLU False 0 [] - - -
3 model.1.conv.weight Conv2d True 4608 [32, 16, 3, 3] 0.000793 0.0481 float32
4 model.1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
4 model.1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
5 model.2.cv1.conv.weight Conv2d True 1024 [32, 32, 1, 1] -0.00737 0.0986 float32
6 model.2.cv1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
6 model.2.cv1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
7 model.2.cv2.conv.weight Conv2d True 3072 [64, 48, 1, 1] 8.7e-06 0.0836 float32
8 model.2.cv2.bn.weight BatchNorm2d True 64 [64] 1 0 float32
8 model.2.cv2.bn.bias BatchNorm2d True 64 [64] 0 0 float32
9 model.2.m.0.cv1.conv.weight Conv2d True 1152 [8, 16, 3, 3] -0.00195 0.0484 float32
10 model.2.m.0.cv1.bn.weight BatchNorm2d True 8 [8] 1 0 float32
10 model.2.m.0.cv1.bn.bias BatchNorm2d True 8 [8] 0 0 float32
11 model.2.m.0.cv2.conv.weight Conv2d True 1152 [16, 8, 3, 3] 0.000551 0.0669 float32
12 model.2.m.0.cv2.bn.weight BatchNorm2d True 16 [16] 1 0 float32
12 model.2.m.0.cv2.bn.bias BatchNorm2d True 16 [16] 0 0 float32
13 model.3.conv.weight Conv2d True 36864 [64, 64, 3, 3] 1.05e-05 0.024 float32
14 model.3.bn.weight BatchNorm2d True 64 [64] 1 0 float32
14 model.3.bn.bias BatchNorm2d True 64 [64] 0 0 float32
15 model.4.cv1.conv.weight Conv2d True 4096 [64, 64, 1, 1] -0.003 0.072 float32
16 model.4.cv1.bn.weight BatchNorm2d True 64 [64] 1 0 float32
16 model.4.cv1.bn.bias BatchNorm2d True 64 [64] 0 0 float32
17 model.4.cv2.conv.weight Conv2d True 12288 [128, 96, 1, 1] 9.33e-05 0.0589 float32
18 model.4.cv2.bn.weight BatchNorm2d True 128 [128] 1 0 float32
18 model.4.cv2.bn.bias BatchNorm2d True 128 [128] 0 0 float32
19 model.4.m.0.cv1.conv.weight Conv2d True 4608 [16, 32, 3, 3] 0.000497 0.0344 float32
20 model.4.m.0.cv1.bn.weight BatchNorm2d True 16 [16] 1 0 float32
20 model.4.m.0.cv1.bn.bias BatchNorm2d True 16 [16] 0 0 float32
21 model.4.m.0.cv2.conv.weight Conv2d True 4608 [32, 16, 3, 3] -0.000254 0.0481 float32
22 model.4.m.0.cv2.bn.weight BatchNorm2d True 32 [32] 1 0 float32
22 model.4.m.0.cv2.bn.bias BatchNorm2d True 32 [32] 0 0 float32
23 model.5.conv.weight Conv2d True 147456 [128, 128, 3, 3] -7.69e-05 0.017 float32
24 model.5.bn.weight BatchNorm2d True 128 [128] 1 0 float32
24 model.5.bn.bias BatchNorm2d True 128 [128] 0 0 float32
25 model.6.cv1.conv.weight Conv2d True 16384 [128, 128, 1, 1] -0.000179 0.0513 float32
26 model.6.cv1.bn.weight BatchNorm2d True 128 [128] 1 0 float32
26 model.6.cv1.bn.bias BatchNorm2d True 128 [128] 0 0 float32
27 model.6.cv2.conv.weight Conv2d True 24576 [128, 192, 1, 1] -0.000338 0.0416 float32
28 model.6.cv2.bn.weight BatchNorm2d True 128 [128] 1 0 float32
28 model.6.cv2.bn.bias BatchNorm2d True 128 [128] 0 0 float32
29 model.6.m.0.cv1.conv.weight Conv2d True 2048 [32, 64, 1, 1] 0.00224 0.0713 float32
30 model.6.m.0.cv1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
30 model.6.m.0.cv1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
31 model.6.m.0.cv2.conv.weight Conv2d True 2048 [32, 64, 1, 1] -0.0002 0.072 float32
32 model.6.m.0.cv2.bn.weight BatchNorm2d True 32 [32] 1 0 float32
32 model.6.m.0.cv2.bn.bias BatchNorm2d True 32 [32] 0 0 float32
33 model.6.m.0.cv3.conv.weight Conv2d True 4096 [64, 64, 1, 1] -0.00139 0.0721 float32
34 model.6.m.0.cv3.bn.weight BatchNorm2d True 64 [64] 1 0 float32
34 model.6.m.0.cv3.bn.bias BatchNorm2d True 64 [64] 0 0 float32
35 model.6.m.0.m.0.cv1.conv.weight Conv2d True 9216 [32, 32, 3, 3] -0.000845 0.0341 float32
36 model.6.m.0.m.0.cv1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
36 model.6.m.0.m.0.cv1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
37 model.6.m.0.m.0.cv2.conv.weight Conv2d True 9216 [32, 32, 3, 3] -0.000381 0.0339 float32
38 model.6.m.0.m.0.cv2.bn.weight BatchNorm2d True 32 [32] 1 0 float32
38 model.6.m.0.m.0.cv2.bn.bias BatchNorm2d True 32 [32] 0 0 float32
39 model.6.m.0.m.1.cv1.conv.weight Conv2d True 9216 [32, 32, 3, 3] 5.29e-05 0.0342 float32
40 model.6.m.0.m.1.cv1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
40 model.6.m.0.m.1.cv1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
41 model.6.m.0.m.1.cv2.conv.weight Conv2d True 9216 [32, 32, 3, 3] 0.000267 0.0341 float32
42 model.6.m.0.m.1.cv2.bn.weight BatchNorm2d True 32 [32] 1 0 float32
42 model.6.m.0.m.1.cv2.bn.bias BatchNorm2d True 32 [32] 0 0 float32
43 model.7.conv.weight Conv2d True 294912 [256, 128, 3, 3] 8.58e-06 0.017 float32
44 model.7.bn.weight BatchNorm2d True 256 [256] 1 0 float32
44 model.7.bn.bias BatchNorm2d True 256 [256] 0 0 float32
45 model.8.cv1.conv.weight Conv2d True 65536 [256, 256, 1, 1] -0.000264 0.036 float32
46 model.8.cv1.bn.weight BatchNorm2d True 256 [256] 1 0 float32
46 model.8.cv1.bn.bias BatchNorm2d True 256 [256] 0 0 float32
47 model.8.cv2.conv.weight Conv2d True 98304 [256, 384, 1, 1] 1.15e-06 0.0295 float32
48 model.8.cv2.bn.weight BatchNorm2d True 256 [256] 1 0 float32
48 model.8.cv2.bn.bias BatchNorm2d True 256 [256] 0 0 float32
49 model.8.m.0.cv1.conv.weight Conv2d True 8192 [64, 128, 1, 1] 0.00135 0.051 float32
50 model.8.m.0.cv1.bn.weight BatchNorm2d True 64 [64] 1 0 float32
50 model.8.m.0.cv1.bn.bias BatchNorm2d True 64 [64] 0 0 float32
51 model.8.m.0.cv2.conv.weight Conv2d True 8192 [64, 128, 1, 1] -0.00153 0.051 float32
52 model.8.m.0.cv2.bn.weight BatchNorm2d True 64 [64] 1 0 float32
52 model.8.m.0.cv2.bn.bias BatchNorm2d True 64 [64] 0 0 float32
53 model.8.m.0.cv3.conv.weight Conv2d True 16384 [128, 128, 1, 1] -5.22e-05 0.0512 float32
54 model.8.m.0.cv3.bn.weight BatchNorm2d True 128 [128] 1 0 float32
54 model.8.m.0.cv3.bn.bias BatchNorm2d True 128 [128] 0 0 float32
55 model.8.m.0.m.0.cv1.conv.weight Conv2d True 36864 [64, 64, 3, 3] 0.000173 0.0241 float32
56 model.8.m.0.m.0.cv1.bn.weight BatchNorm2d True 64 [64] 1 0 float32
56 model.8.m.0.m.0.cv1.bn.bias BatchNorm2d True 64 [64] 0 0 float32
57 model.8.m.0.m.0.cv2.conv.weight Conv2d True 36864 [64, 64, 3, 3] -0.000226 0.0241 float32
58 model.8.m.0.m.0.cv2.bn.weight BatchNorm2d True 64 [64] 1 0 float32
58 model.8.m.0.m.0.cv2.bn.bias BatchNorm2d True 64 [64] 0 0 float32
59 model.8.m.0.m.1.cv1.conv.weight Conv2d True 36864 [64, 64, 3, 3] 0.000178 0.0241 float32
60 model.8.m.0.m.1.cv1.bn.weight BatchNorm2d True 64 [64] 1 0 float32
60 model.8.m.0.m.1.cv1.bn.bias BatchNorm2d True 64 [64] 0 0 float32
61 model.8.m.0.m.1.cv2.conv.weight Conv2d True 36864 [64, 64, 3, 3] -2.65e-05 0.0241 float32
62 model.8.m.0.m.1.cv2.bn.weight BatchNorm2d True 64 [64] 1 0 float32
62 model.8.m.0.m.1.cv2.bn.bias BatchNorm2d True 64 [64] 0 0 float32
63 model.9.cv1.conv.weight Conv2d True 32768 [128, 256, 1, 1] -0.000108 0.036 float32
64 model.9.cv1.bn.weight BatchNorm2d True 128 [128] 1 0 float32
64 model.9.cv1.bn.bias BatchNorm2d True 128 [128] 0 0 float32
65 model.9.cv1.act Identity False 0 [] - - -
66 model.9.cv2.conv.weight Conv2d True 131072 [256, 512, 1, 1] 9.26e-05 0.0255 float32
67 model.9.cv2.bn.weight BatchNorm2d True 256 [256] 1 0 float32
67 model.9.cv2.bn.bias BatchNorm2d True 256 [256] 0 0 float32
68 model.9.m MaxPool2d False 0 [] - - -
69 model.10.cv1.conv.weight Conv2d True 65536 [256, 256, 1, 1] -0.000111 0.036 float32
70 model.10.cv1.bn.weight BatchNorm2d True 256 [256] 1 0 float32
70 model.10.cv1.bn.bias BatchNorm2d True 256 [256] 0 0 float32
71 model.10.cv2.conv.weight Conv2d True 65536 [256, 256, 1, 1] -0.00023 0.0361 float32
72 model.10.cv2.bn.weight BatchNorm2d True 256 [256] 1 0 float32
72 model.10.cv2.bn.bias BatchNorm2d True 256 [256] 0 0 float32
73 model.10.m.0.attn.qkv.conv.weight Conv2d True 32768 [256, 128, 1, 1] -0.000127 0.0513 float32
74 model.10.m.0.attn.qkv.bn.weight BatchNorm2d True 256 [256] 1 0 float32
74 model.10.m.0.attn.qkv.bn.bias BatchNorm2d True 256 [256] 0 0 float32
75 model.10.m.0.attn.qkv.act Identity False 0 [] - - -
76 model.10.m.0.attn.proj.conv.weight Conv2d True 16384 [128, 128, 1, 1] -0.00013 0.0512 float32
77 model.10.m.0.attn.proj.bn.weight BatchNorm2d True 128 [128] 1 0 float32
77 model.10.m.0.attn.proj.bn.bias BatchNorm2d True 128 [128] 0 0 float32
78 model.10.m.0.attn.proj.act Identity False 0 [] - - -
79 model.10.m.0.attn.pe.conv.weight Conv2d True 1152 [128, 1, 3, 3] -0.0054 0.194 float32
80 model.10.m.0.attn.pe.bn.weight BatchNorm2d True 128 [128] 1 0 float32
80 model.10.m.0.attn.pe.bn.bias BatchNorm2d True 128 [128] 0 0 float32
81 model.10.m.0.attn.pe.act Identity False 0 [] - - -
82 model.10.m.0.ffn.0.conv.weight Conv2d True 32768 [256, 128, 1, 1] -0.0002 0.0513 float32
83 model.10.m.0.ffn.0.bn.weight BatchNorm2d True 256 [256] 1 0 float32
83 model.10.m.0.ffn.0.bn.bias BatchNorm2d True 256 [256] 0 0 float32
84 model.10.m.0.ffn.1.conv.weight Conv2d True 32768 [128, 256, 1, 1] 0.000212 0.0362 float32
85 model.10.m.0.ffn.1.bn.weight BatchNorm2d True 128 [128] 1 0 float32
85 model.10.m.0.ffn.1.bn.bias BatchNorm2d True 128 [128] 0 0 float32
86 model.10.m.0.ffn.1.act Identity False 0 [] - - -
87 model.11 Upsample False 0 [] - - -
88 model.12 Concat False 0 [] - - -
89 model.13.cv1.conv.weight Conv2d True 49152 [128, 384, 1, 1] -2.59e-05 0.0295 float32
90 model.13.cv1.bn.weight BatchNorm2d True 128 [128] 1 0 float32
90 model.13.cv1.bn.bias BatchNorm2d True 128 [128] 0 0 float32
91 model.13.cv2.conv.weight Conv2d True 24576 [128, 192, 1, 1] 0.000164 0.0416 float32
92 model.13.cv2.bn.weight BatchNorm2d True 128 [128] 1 0 float32
92 model.13.cv2.bn.bias BatchNorm2d True 128 [128] 0 0 float32
93 model.13.m.0.cv1.conv.weight Conv2d True 2048 [32, 64, 1, 1] 0.00378 0.073 float32
94 model.13.m.0.cv1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
94 model.13.m.0.cv1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
95 model.13.m.0.cv2.conv.weight Conv2d True 2048 [32, 64, 1, 1] -0.00286 0.0723 float32
96 model.13.m.0.cv2.bn.weight BatchNorm2d True 32 [32] 1 0 float32
96 model.13.m.0.cv2.bn.bias BatchNorm2d True 32 [32] 0 0 float32
97 model.13.m.0.cv3.conv.weight Conv2d True 4096 [64, 64, 1, 1] 0.000587 0.0717 float32
98 model.13.m.0.cv3.bn.weight BatchNorm2d True 64 [64] 1 0 float32
98 model.13.m.0.cv3.bn.bias BatchNorm2d True 64 [64] 0 0 float32
99 model.13.m.0.m.0.cv1.conv.weight Conv2d True 9216 [32, 32, 3, 3] -0.000176 0.0339 float32
100 model.13.m.0.m.0.cv1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
100 model.13.m.0.m.0.cv1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
101 model.13.m.0.m.0.cv2.conv.weight Conv2d True 9216 [32, 32, 3, 3] 5.28e-05 0.034 float32
102 model.13.m.0.m.0.cv2.bn.weight BatchNorm2d True 32 [32] 1 0 float32
102 model.13.m.0.m.0.cv2.bn.bias BatchNorm2d True 32 [32] 0 0 float32
103 model.13.m.0.m.1.cv1.conv.weight Conv2d True 9216 [32, 32, 3, 3] 0.000276 0.0338 float32
104 model.13.m.0.m.1.cv1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
104 model.13.m.0.m.1.cv1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
105 model.13.m.0.m.1.cv2.conv.weight Conv2d True 9216 [32, 32, 3, 3] 0.000775 0.0338 float32
106 model.13.m.0.m.1.cv2.bn.weight BatchNorm2d True 32 [32] 1 0 float32
106 model.13.m.0.m.1.cv2.bn.bias BatchNorm2d True 32 [32] 0 0 float32
107 model.14 Upsample False 0 [] - - -
108 model.15 Concat False 0 [] - - -
109 model.16.cv1.conv.weight Conv2d True 16384 [64, 256, 1, 1] -9.75e-05 0.036 float32
110 model.16.cv1.bn.weight BatchNorm2d True 64 [64] 1 0 float32
110 model.16.cv1.bn.bias BatchNorm2d True 64 [64] 0 0 float32
111 model.16.cv2.conv.weight Conv2d True 6144 [64, 96, 1, 1] 2.59e-05 0.0592 float32
112 model.16.cv2.bn.weight BatchNorm2d True 64 [64] 1 0 float32
112 model.16.cv2.bn.bias BatchNorm2d True 64 [64] 0 0 float32
113 model.16.m.0.cv1.conv.weight Conv2d True 512 [16, 32, 1, 1] 0.00212 0.102 float32
114 model.16.m.0.cv1.bn.weight BatchNorm2d True 16 [16] 1 0 float32
114 model.16.m.0.cv1.bn.bias BatchNorm2d True 16 [16] 0 0 float32
115 model.16.m.0.cv2.conv.weight Conv2d True 512 [16, 32, 1, 1] 0.00287 0.0999 float32
116 model.16.m.0.cv2.bn.weight BatchNorm2d True 16 [16] 1 0 float32
116 model.16.m.0.cv2.bn.bias BatchNorm2d True 16 [16] 0 0 float32
117 model.16.m.0.cv3.conv.weight Conv2d True 1024 [32, 32, 1, 1] 0.00347 0.104 float32
118 model.16.m.0.cv3.bn.weight BatchNorm2d True 32 [32] 1 0 float32
118 model.16.m.0.cv3.bn.bias BatchNorm2d True 32 [32] 0 0 float32
119 model.16.m.0.m.0.cv1.conv.weight Conv2d True 2304 [16, 16, 3, 3] -0.000425 0.0483 float32
120 model.16.m.0.m.0.cv1.bn.weight BatchNorm2d True 16 [16] 1 0 float32
120 model.16.m.0.m.0.cv1.bn.bias BatchNorm2d True 16 [16] 0 0 float32
121 model.16.m.0.m.0.cv2.conv.weight Conv2d True 2304 [16, 16, 3, 3] -0.00143 0.0478 float32
122 model.16.m.0.m.0.cv2.bn.weight BatchNorm2d True 16 [16] 1 0 float32
122 model.16.m.0.m.0.cv2.bn.bias BatchNorm2d True 16 [16] 0 0 float32
123 model.16.m.0.m.1.cv1.conv.weight Conv2d True 2304 [16, 16, 3, 3] -0.00044 0.0481 float32
124 model.16.m.0.m.1.cv1.bn.weight BatchNorm2d True 16 [16] 1 0 float32
124 model.16.m.0.m.1.cv1.bn.bias BatchNorm2d True 16 [16] 0 0 float32
125 model.16.m.0.m.1.cv2.conv.weight Conv2d True 2304 [16, 16, 3, 3] -0.000684 0.0485 float32
126 model.16.m.0.m.1.cv2.bn.weight BatchNorm2d True 16 [16] 1 0 float32
126 model.16.m.0.m.1.cv2.bn.bias BatchNorm2d True 16 [16] 0 0 float32
127 model.17.conv.weight Conv2d True 36864 [64, 64, 3, 3] 0.000129 0.024 float32
128 model.17.bn.weight BatchNorm2d True 64 [64] 1 0 float32
128 model.17.bn.bias BatchNorm2d True 64 [64] 0 0 float32
129 model.18 Concat False 0 [] - - -
130 model.19.cv1.conv.weight Conv2d True 24576 [128, 192, 1, 1] -0.000114 0.0416 float32
131 model.19.cv1.bn.weight BatchNorm2d True 128 [128] 1 0 float32
131 model.19.cv1.bn.bias BatchNorm2d True 128 [128] 0 0 float32
132 model.19.cv2.conv.weight Conv2d True 24576 [128, 192, 1, 1] -0.000339 0.0415 float32
133 model.19.cv2.bn.weight BatchNorm2d True 128 [128] 1 0 float32
133 model.19.cv2.bn.bias BatchNorm2d True 128 [128] 0 0 float32
134 model.19.m.0.cv1.conv.weight Conv2d True 2048 [32, 64, 1, 1] -4.37e-05 0.0722 float32
135 model.19.m.0.cv1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
135 model.19.m.0.cv1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
136 model.19.m.0.cv2.conv.weight Conv2d True 2048 [32, 64, 1, 1] -0.0011 0.0725 float32
137 model.19.m.0.cv2.bn.weight BatchNorm2d True 32 [32] 1 0 float32
137 model.19.m.0.cv2.bn.bias BatchNorm2d True 32 [32] 0 0 float32
138 model.19.m.0.cv3.conv.weight Conv2d True 4096 [64, 64, 1, 1] 8.43e-05 0.0717 float32
139 model.19.m.0.cv3.bn.weight BatchNorm2d True 64 [64] 1 0 float32
139 model.19.m.0.cv3.bn.bias BatchNorm2d True 64 [64] 0 0 float32
140 model.19.m.0.m.0.cv1.conv.weight Conv2d True 9216 [32, 32, 3, 3] 0.000496 0.0336 float32
141 model.19.m.0.m.0.cv1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
141 model.19.m.0.m.0.cv1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
142 model.19.m.0.m.0.cv2.conv.weight Conv2d True 9216 [32, 32, 3, 3] 0.00015 0.0341 float32
143 model.19.m.0.m.0.cv2.bn.weight BatchNorm2d True 32 [32] 1 0 float32
143 model.19.m.0.m.0.cv2.bn.bias BatchNorm2d True 32 [32] 0 0 float32
144 model.19.m.0.m.1.cv1.conv.weight Conv2d True 9216 [32, 32, 3, 3] -0.000129 0.0341 float32
145 model.19.m.0.m.1.cv1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
145 model.19.m.0.m.1.cv1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
146 model.19.m.0.m.1.cv2.conv.weight Conv2d True 9216 [32, 32, 3, 3] -0.000211 0.034 float32
147 model.19.m.0.m.1.cv2.bn.weight BatchNorm2d True 32 [32] 1 0 float32
147 model.19.m.0.m.1.cv2.bn.bias BatchNorm2d True 32 [32] 0 0 float32
148 model.20.conv.weight Conv2d True 147456 [128, 128, 3, 3] -5.3e-06 0.017 float32
149 model.20.bn.weight BatchNorm2d True 128 [128] 1 0 float32
149 model.20.bn.bias BatchNorm2d True 128 [128] 0 0 float32
150 model.21 Concat False 0 [] - - -
151 model.22.cv1.conv.weight Conv2d True 98304 [256, 384, 1, 1] -6.51e-05 0.0294 float32
152 model.22.cv1.bn.weight BatchNorm2d True 256 [256] 1 0 float32
152 model.22.cv1.bn.bias BatchNorm2d True 256 [256] 0 0 float32
153 model.22.cv2.conv.weight Conv2d True 98304 [256, 384, 1, 1] -2.61e-05 0.0295 float32
154 model.22.cv2.bn.weight BatchNorm2d True 256 [256] 1 0 float32
154 model.22.cv2.bn.bias BatchNorm2d True 256 [256] 0 0 float32
155 model.22.m.0.0.cv1.conv.weight Conv2d True 73728 [64, 128, 3, 3] -0.000181 0.017 float32
156 model.22.m.0.0.cv1.bn.weight BatchNorm2d True 64 [64] 1 0 float32
156 model.22.m.0.0.cv1.bn.bias BatchNorm2d True 64 [64] 0 0 float32
157 model.22.m.0.0.cv2.conv.weight Conv2d True 73728 [128, 64, 3, 3] -4.27e-05 0.0241 float32
158 model.22.m.0.0.cv2.bn.weight BatchNorm2d True 128 [128] 1 0 float32
158 model.22.m.0.0.cv2.bn.bias BatchNorm2d True 128 [128] 0 0 float32
159 model.22.m.0.1.attn.qkv.conv.weight Conv2d True 32768 [256, 128, 1, 1] -0.000304 0.051 float32
160 model.22.m.0.1.attn.qkv.bn.weight BatchNorm2d True 256 [256] 1 0 float32
160 model.22.m.0.1.attn.qkv.bn.bias BatchNorm2d True 256 [256] 0 0 float32
161 model.22.m.0.1.attn.qkv.act Identity False 0 [] - - -
162 model.22.m.0.1.attn.proj.conv.weight Conv2d True 16384 [128, 128, 1, 1] 0.000286 0.0512 float32
163 model.22.m.0.1.attn.proj.bn.weight BatchNorm2d True 128 [128] 1 0 float32
163 model.22.m.0.1.attn.proj.bn.bias BatchNorm2d True 128 [128] 0 0 float32
164 model.22.m.0.1.attn.proj.act Identity False 0 [] - - -
165 model.22.m.0.1.attn.pe.conv.weight Conv2d True 1152 [128, 1, 3, 3] -0.00486 0.19 float32
166 model.22.m.0.1.attn.pe.bn.weight BatchNorm2d True 128 [128] 1 0 float32
166 model.22.m.0.1.attn.pe.bn.bias BatchNorm2d True 128 [128] 0 0 float32
167 model.22.m.0.1.attn.pe.act Identity False 0 [] - - -
168 model.22.m.0.1.ffn.0.conv.weight Conv2d True 32768 [256, 128, 1, 1] 2.31e-06 0.0512 float32
169 model.22.m.0.1.ffn.0.bn.weight BatchNorm2d True 256 [256] 1 0 float32
169 model.22.m.0.1.ffn.0.bn.bias BatchNorm2d True 256 [256] 0 0 float32
170 model.22.m.0.1.ffn.1.conv.weight Conv2d True 32768 [128, 256, 1, 1] 6.89e-05 0.0361 float32
171 model.22.m.0.1.ffn.1.bn.weight BatchNorm2d True 128 [128] 1 0 float32
171 model.22.m.0.1.ffn.1.bn.bias BatchNorm2d True 128 [128] 0 0 float32
172 model.22.m.0.1.ffn.1.act Identity False 0 [] - - -
173 model.23.cv2.0.0.conv.weight Conv2d True 9216 [16, 64, 3, 3] 0.000251 0.0242 float32
174 model.23.cv2.0.0.bn.weight BatchNorm2d True 16 [16] 1 0 float32
174 model.23.cv2.0.0.bn.bias BatchNorm2d True 16 [16] 0 0 float32
175 model.23.cv2.0.1.conv.weight Conv2d True 2304 [16, 16, 3, 3] 0.00189 0.0482 float32
176 model.23.cv2.0.1.bn.weight BatchNorm2d True 16 [16] 1 0 float32
176 model.23.cv2.0.1.bn.bias BatchNorm2d True 16 [16] 0 0 float32
177 model.23.cv2.0.2.weight Conv2d True 64 [4, 16, 1, 1] -0.0287 0.153 float32
177 model.23.cv2.0.2.bias Conv2d True 4 [4] 2 0 float32
178 model.23.cv2.1.0.conv.weight Conv2d True 18432 [16, 128, 3, 3] -0.000184 0.0171 float32
179 model.23.cv2.1.0.bn.weight BatchNorm2d True 16 [16] 1 0 float32
179 model.23.cv2.1.0.bn.bias BatchNorm2d True 16 [16] 0 0 float32
180 model.23.cv2.1.1.conv.weight Conv2d True 2304 [16, 16, 3, 3] -0.000105 0.0486 float32
181 model.23.cv2.1.1.bn.weight BatchNorm2d True 16 [16] 1 0 float32
181 model.23.cv2.1.1.bn.bias BatchNorm2d True 16 [16] 0 0 float32
182 model.23.cv2.1.2.weight Conv2d True 64 [4, 16, 1, 1] -0.0153 0.15 float32
182 model.23.cv2.1.2.bias Conv2d True 4 [4] 2 0 float32
183 model.23.cv2.2.0.conv.weight Conv2d True 36864 [16, 256, 3, 3] -6.12e-06 0.012 float32
184 model.23.cv2.2.0.bn.weight BatchNorm2d True 16 [16] 1 0 float32
184 model.23.cv2.2.0.bn.bias BatchNorm2d True 16 [16] 0 0 float32
185 model.23.cv2.2.1.conv.weight Conv2d True 2304 [16, 16, 3, 3] -0.00112 0.0479 float32
186 model.23.cv2.2.1.bn.weight BatchNorm2d True 16 [16] 1 0 float32
186 model.23.cv2.2.1.bn.bias BatchNorm2d True 16 [16] 0 0 float32
187 model.23.cv2.2.2.weight Conv2d True 64 [4, 16, 1, 1] 0.00415 0.146 float32
187 model.23.cv2.2.2.bias Conv2d True 4 [4] 2 0 float32
188 model.23.cv3.0.0.0.conv.weight Conv2d True 576 [64, 1, 3, 3] -0.0059 0.191 float32
189 model.23.cv3.0.0.0.bn.weight BatchNorm2d True 64 [64] 1 0 float32
189 model.23.cv3.0.0.0.bn.bias BatchNorm2d True 64 [64] 0 0 float32
190 model.23.cv3.0.0.1.conv.weight Conv2d True 5120 [80, 64, 1, 1] -0.000175 0.0727 float32
191 model.23.cv3.0.0.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
191 model.23.cv3.0.0.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
192 model.23.cv3.0.1.0.conv.weight Conv2d True 720 [80, 1, 3, 3] 0.02 0.19 float32
193 model.23.cv3.0.1.0.bn.weight BatchNorm2d True 80 [80] 1 0 float32
193 model.23.cv3.0.1.0.bn.bias BatchNorm2d True 80 [80] 0 0 float32
194 model.23.cv3.0.1.1.conv.weight Conv2d True 6400 [80, 80, 1, 1] -0.00191 0.0648 float32
195 model.23.cv3.0.1.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
195 model.23.cv3.0.1.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
196 model.23.cv3.0.2.weight Conv2d True 6400 [80, 80, 1, 1] 0.000251 0.0648 float32
196 model.23.cv3.0.2.bias Conv2d True 80 [80] -11.5 1.92e-06 float32
197 model.23.cv3.1.0.0.conv.weight Conv2d True 1152 [128, 1, 3, 3] 0.00123 0.192 float32
198 model.23.cv3.1.0.0.bn.weight BatchNorm2d True 128 [128] 1 0 float32
198 model.23.cv3.1.0.0.bn.bias BatchNorm2d True 128 [128] 0 0 float32
199 model.23.cv3.1.0.1.conv.weight Conv2d True 10240 [80, 128, 1, 1] -0.000151 0.0506 float32
200 model.23.cv3.1.0.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
200 model.23.cv3.1.0.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
201 model.23.cv3.1.1.0.conv.weight Conv2d True 720 [80, 1, 3, 3] 0.00242 0.192 float32
202 model.23.cv3.1.1.0.bn.weight BatchNorm2d True 80 [80] 1 0 float32
202 model.23.cv3.1.1.0.bn.bias BatchNorm2d True 80 [80] 0 0 float32
203 model.23.cv3.1.1.1.conv.weight Conv2d True 6400 [80, 80, 1, 1] 0.00125 0.0645 float32
204 model.23.cv3.1.1.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
204 model.23.cv3.1.1.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
205 model.23.cv3.1.2.weight Conv2d True 6400 [80, 80, 1, 1] 0.000722 0.0644 float32
205 model.23.cv3.1.2.bias Conv2d True 80 [80] -10.2 0 float32
206 model.23.cv3.2.0.0.conv.weight Conv2d True 2304 [256, 1, 3, 3] 0.00326 0.189 float32
207 model.23.cv3.2.0.0.bn.weight BatchNorm2d True 256 [256] 1 0 float32
207 model.23.cv3.2.0.0.bn.bias BatchNorm2d True 256 [256] 0 0 float32
208 model.23.cv3.2.0.1.conv.weight Conv2d True 20480 [80, 256, 1, 1] 0.000189 0.0361 float32
209 model.23.cv3.2.0.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
209 model.23.cv3.2.0.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
210 model.23.cv3.2.1.0.conv.weight Conv2d True 720 [80, 1, 3, 3] -0.00601 0.195 float32
211 model.23.cv3.2.1.0.bn.weight BatchNorm2d True 80 [80] 1 0 float32
211 model.23.cv3.2.1.0.bn.bias BatchNorm2d True 80 [80] 0 0 float32
212 model.23.cv3.2.1.1.conv.weight Conv2d True 6400 [80, 80, 1, 1] -0.000445 0.0638 float32
213 model.23.cv3.2.1.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
213 model.23.cv3.2.1.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
214 model.23.cv3.2.2.weight Conv2d True 6400 [80, 80, 1, 1] -0.00132 0.0645 float32
214 model.23.cv3.2.2.bias Conv2d True 80 [80] -8.76 0 float32
215 model.23.dfl Identity False 0 [] - - -
216 model.23.one2one_cv2.0.0.conv.weight Conv2d True 9216 [16, 64, 3, 3] 0.000251 0.0242 float32
217 model.23.one2one_cv2.0.0.bn.weight BatchNorm2d True 16 [16] 1 0 float32
217 model.23.one2one_cv2.0.0.bn.bias BatchNorm2d True 16 [16] 0 0 float32
218 model.23.one2one_cv2.0.0.act SiLU False 0 [] - - -
219 model.23.one2one_cv2.0.1.conv.weight Conv2d True 2304 [16, 16, 3, 3] 0.00189 0.0482 float32
220 model.23.one2one_cv2.0.1.bn.weight BatchNorm2d True 16 [16] 1 0 float32
220 model.23.one2one_cv2.0.1.bn.bias BatchNorm2d True 16 [16] 0 0 float32
221 model.23.one2one_cv2.0.2.weight Conv2d True 64 [4, 16, 1, 1] -0.0287 0.153 float32
221 model.23.one2one_cv2.0.2.bias Conv2d True 4 [4] 2 0 float32
222 model.23.one2one_cv2.1.0.conv.weight Conv2d True 18432 [16, 128, 3, 3] -0.000184 0.0171 float32
223 model.23.one2one_cv2.1.0.bn.weight BatchNorm2d True 16 [16] 1 0 float32
223 model.23.one2one_cv2.1.0.bn.bias BatchNorm2d True 16 [16] 0 0 float32
224 model.23.one2one_cv2.1.1.conv.weight Conv2d True 2304 [16, 16, 3, 3] -0.000105 0.0486 float32
225 model.23.one2one_cv2.1.1.bn.weight BatchNorm2d True 16 [16] 1 0 float32
225 model.23.one2one_cv2.1.1.bn.bias BatchNorm2d True 16 [16] 0 0 float32
226 model.23.one2one_cv2.1.2.weight Conv2d True 64 [4, 16, 1, 1] -0.0153 0.15 float32
226 model.23.one2one_cv2.1.2.bias Conv2d True 4 [4] 2 0 float32
227 model.23.one2one_cv2.2.0.conv.weight Conv2d True 36864 [16, 256, 3, 3] -6.12e-06 0.012 float32
228 model.23.one2one_cv2.2.0.bn.weight BatchNorm2d True 16 [16] 1 0 float32
228 model.23.one2one_cv2.2.0.bn.bias BatchNorm2d True 16 [16] 0 0 float32
229 model.23.one2one_cv2.2.1.conv.weight Conv2d True 2304 [16, 16, 3, 3] -0.00112 0.0479 float32
230 model.23.one2one_cv2.2.1.bn.weight BatchNorm2d True 16 [16] 1 0 float32
230 model.23.one2one_cv2.2.1.bn.bias BatchNorm2d True 16 [16] 0 0 float32
231 model.23.one2one_cv2.2.2.weight Conv2d True 64 [4, 16, 1, 1] 0.00415 0.146 float32
231 model.23.one2one_cv2.2.2.bias Conv2d True 4 [4] 2 0 float32
232 model.23.one2one_cv3.0.0.0.conv.weight Conv2d True 576 [64, 1, 3, 3] -0.0059 0.191 float32
233 model.23.one2one_cv3.0.0.0.bn.weight BatchNorm2d True 64 [64] 1 0 float32
233 model.23.one2one_cv3.0.0.0.bn.bias BatchNorm2d True 64 [64] 0 0 float32
234 model.23.one2one_cv3.0.0.0.act SiLU False 0 [] - - -
235 model.23.one2one_cv3.0.0.1.conv.weight Conv2d True 5120 [80, 64, 1, 1] -0.000175 0.0727 float32
236 model.23.one2one_cv3.0.0.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
236 model.23.one2one_cv3.0.0.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
237 model.23.one2one_cv3.0.1.0.conv.weight Conv2d True 720 [80, 1, 3, 3] 0.02 0.19 float32
238 model.23.one2one_cv3.0.1.0.bn.weight BatchNorm2d True 80 [80] 1 0 float32
238 model.23.one2one_cv3.0.1.0.bn.bias BatchNorm2d True 80 [80] 0 0 float32
239 model.23.one2one_cv3.0.1.1.conv.weight Conv2d True 6400 [80, 80, 1, 1] -0.00191 0.0648 float32
240 model.23.one2one_cv3.0.1.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
240 model.23.one2one_cv3.0.1.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
241 model.23.one2one_cv3.0.2.weight Conv2d True 6400 [80, 80, 1, 1] 0.000251 0.0648 float32
241 model.23.one2one_cv3.0.2.bias Conv2d True 80 [80] -11.5 1.92e-06 float32
242 model.23.one2one_cv3.1.0.0.conv.weight Conv2d True 1152 [128, 1, 3, 3] 0.00123 0.192 float32
243 model.23.one2one_cv3.1.0.0.bn.weight BatchNorm2d True 128 [128] 1 0 float32
243 model.23.one2one_cv3.1.0.0.bn.bias BatchNorm2d True 128 [128] 0 0 float32
244 model.23.one2one_cv3.1.0.1.conv.weight Conv2d True 10240 [80, 128, 1, 1] -0.000151 0.0506 float32
245 model.23.one2one_cv3.1.0.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
245 model.23.one2one_cv3.1.0.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
246 model.23.one2one_cv3.1.1.0.conv.weight Conv2d True 720 [80, 1, 3, 3] 0.00242 0.192 float32
247 model.23.one2one_cv3.1.1.0.bn.weight BatchNorm2d True 80 [80] 1 0 float32
247 model.23.one2one_cv3.1.1.0.bn.bias BatchNorm2d True 80 [80] 0 0 float32
248 model.23.one2one_cv3.1.1.1.conv.weight Conv2d True 6400 [80, 80, 1, 1] 0.00125 0.0645 float32
249 model.23.one2one_cv3.1.1.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
249 model.23.one2one_cv3.1.1.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
250 model.23.one2one_cv3.1.2.weight Conv2d True 6400 [80, 80, 1, 1] 0.000722 0.0644 float32
250 model.23.one2one_cv3.1.2.bias Conv2d True 80 [80] -10.2 0 float32
251 model.23.one2one_cv3.2.0.0.conv.weight Conv2d True 2304 [256, 1, 3, 3] 0.00326 0.189 float32
252 model.23.one2one_cv3.2.0.0.bn.weight BatchNorm2d True 256 [256] 1 0 float32
252 model.23.one2one_cv3.2.0.0.bn.bias BatchNorm2d True 256 [256] 0 0 float32
253 model.23.one2one_cv3.2.0.1.conv.weight Conv2d True 20480 [80, 256, 1, 1] 0.000189 0.0361 float32
254 model.23.one2one_cv3.2.0.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
254 model.23.one2one_cv3.2.0.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
255 model.23.one2one_cv3.2.1.0.conv.weight Conv2d True 720 [80, 1, 3, 3] -0.00601 0.195 float32
256 model.23.one2one_cv3.2.1.0.bn.weight BatchNorm2d True 80 [80] 1 0 float32
256 model.23.one2one_cv3.2.1.0.bn.bias BatchNorm2d True 80 [80] 0 0 float32
257 model.23.one2one_cv3.2.1.1.conv.weight Conv2d True 6400 [80, 80, 1, 1] -0.000445 0.0638 float32
258 model.23.one2one_cv3.2.1.1.bn.weight BatchNorm2d True 80 [80] 1 0 float32
258 model.23.one2one_cv3.2.1.1.bn.bias BatchNorm2d True 80 [80] 0 0 float32
259 model.23.one2one_cv3.2.2.weight Conv2d True 6400 [80, 80, 1, 1] -0.00132 0.0645 float32
259 model.23.one2one_cv3.2.2.bias Conv2d True 80 [80] -8.76 0 float32
YOLO26n summary: 260 layers, 2,572,280 parameters, 2,572,280 gradients, 6.1 GFLOPs
(260, 2572280, 2572280, 6.1192448)
```

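As you can see, this prints the name, parameter count and shape of every layer in the network. The `gradient` column shows whether a tensor is trainable, and `mu`/`sigma` give the mean and standard deviation of its values.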
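If you save the detailed printout to a text file, a few lines of Python can regroup the rows by top-level layer (`model.N`), which makes them easy to compare against the compact table from `DetectionModel`. This minimal sketch assumes the whitespace-separated column order shown above (index, name, type, gradient, parameters, shape, mu, sigma, dtype). `params_per_layer` is a hypothetical helper, not part of Ultralytics.

```python
import re


def params_per_layer(text):
    """Sum the 'parameters' column of a model.info(detailed=True) dump per top-level model.N layer."""
    totals = {}
    for line in text.splitlines():
        tokens = line.split()
        # Data rows start with an integer index; header, summary and blank lines are skipped.
        if len(tokens) < 5 or not tokens[0].isdigit():
            continue
        match = re.match(r"model\.(\d+)", tokens[1])
        if match is None:
            continue
        try:
            # float() also accepts exponent notation such as 1.2e+06.
            count = int(float(tokens[4]))
        except ValueError:
            continue
        layer = int(match.group(1))
        totals[layer] = totals.get(layer, 0) + count
    return totals


sample = """
layer name type gradient parameters shape mu sigma
0 model.0.conv.weight Conv2d True 432 [16, 3, 3, 3] 0.00561 0.11 float32
1 model.0.bn.weight BatchNorm2d True 16 [16] 1 0 float32
1 model.0.bn.bias BatchNorm2d True 16 [16] 0 0 float32
2 model.0.act SiLU False 0 [] - - -
3 model.1.conv.weight Conv2d True 4608 [32, 16, 3, 3] 0.000793 0.0481 float32
4 model.1.bn.weight BatchNorm2d True 32 [32] 1 0 float32
4 model.1.bn.bias BatchNorm2d True 32 [32] 0 0 float32
"""
print(params_per_layer(sample))
```

For a full dump, pass the file contents instead, e.g. `params_per_layer(open('info.txt').read())`, where `info.txt` is a hypothetical file holding the copied output.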

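The method in this article works the same way for other model architectures in the Ultralytics framework, so you can use it to compare parameter counts and computation across models.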
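`model.info()` returns a tuple of (layers, parameters, gradients, GFLOPs), as the last line of the output above shows. That means comparing a baseline with an improved model comes down to a small formatting helper. The sketch below takes those tuples as plain data, so it does not import Ultralytics itself. `compare_summaries` is a hypothetical name, and the only numbers used are the yolo26n values printed above.

```python
def compare_summaries(summaries, baseline):
    """Format (layers, params, gradients, GFLOPs) tuples relative to a baseline entry."""
    base_params, base_flops = summaries[baseline][1], summaries[baseline][3]
    lines = [f"{'model':<12}{'layers':>8}{'params':>12}{'GFLOPs':>9}{'d_params':>10}{'d_GFLOPs':>10}"]
    for name, (layers, params, _grads, gflops) in summaries.items():
        # Relative change versus the baseline, in percent
        d_params = (params - base_params) / base_params * 100
        d_flops = (gflops - base_flops) / base_flops * 100
        lines.append(
            f"{name:<12}{layers:>8}{params:>12,}{gflops:>9.1f}{d_params:>+9.1f}%{d_flops:>+9.1f}%"
        )
    return "\n".join(lines)


# Baseline taken from the yolo26n printout above
summaries = {"yolo26n": (260, 2572280, 2572280, 6.1192448)}
print(compare_summaries(summaries, "yolo26n"))
```

To compare an improved model, add its tuple as another entry, for example `summaries["yolo26n-CBAM"] = YOLO(improved_yaml_path).info()`, where `improved_yaml_path` stands for your own config path.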


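That's all for this article. If you liked it, please give it a like and follow; more content is on the way.

If you have any suggestions or comments about this article, feel free to leave them in the comments!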

