统计拟合与西方中心论的深度耦合:全球主流AI大模型的核心弊端及其文明级风险

统计拟合与西方中心论的深度耦合:全球主流AI大模型的核心弊端及其文明级风险

摘要

本文系统剖析全球主流AI大模型的14项核心弊端,揭示其并非零散技术缺陷,而是底层统计拟合范式与西方中心论叙事深度耦合形成的全链条危机。研究构建“技术原罪—认知污染—推理异化—架构锁定—价值崩塌—文明风险”六维归因框架:暴力求解的拟合架构决定了模型“时而专家、时而蠢货”的能力分化与全球资源的指数级浪费;英语语料占比超90%的语言霸权与西方中心论的认知病毒植入,从源头污染了模型的认知体系,导致其推理沦为西方中心论的循环论证;架构层的路径锁定使得西方中心论的逻辑思维无法通过数据清洗消除,所有反思与纠错均停留于话术层面的虚伪迎合;本质定位上,模型始终是“穿着西装的卖菜阿姨”式的智能工具,其智能越强大,离真正智慧越远。最终,这些弊端的叠加使大模型成为西方中心论的指数级传声筒与放大器,带来全球文明多样性消解、全人类认知主权丧失等文明级风险。研究指出,唯有从技术范式、生态协同、监管闭环三个维度进行系统性重构,才能推动AI从“数字殖民工具”回归服务全人类共同利益的智慧伙伴本质。

全球主流人工智能大模型的核心弊端:一种批判性审视

编者:贾子 Kucius

当前,以大型语言模型为代表的生成式人工智能正以空前的速度渗透进人类社会的各个角落,其生成的文本流畅、信息丰富,仿佛具备了某种通用智能。然而,在这光鲜的技术表象之下,潜藏着深刻的结构性缺陷与认知偏差。这些弊端不仅关乎技术本身的可靠性,更触及人类认知的根基与文明的未来走向。本文将从数据生态、认知逻辑、工具理性及文明影响四个维度,对其核心问题进行系统性剖析。

一、 数据生态的失衡:单极语料与认知污染

1. 语料霸权与文明失语

主流大模型的训练数据中,英语语料占比超过90%,而全球其他数千种语言的总和不足10%。这种极端的语料单极化不仅是统计学上的偏差,更是一种认知本体论的危机——它意味着模型所理解的“世界”本质上是由英语世界构建的镜像,其他文明的思维方式、价值观念与知识体系被严重边缘化,甚至被彻底消音。2. 污染源而非知识库

更令人忧虑的是,这些海量数据并非纯粹的知识结晶,亦非人类智慧的精华。它们中的绝大部分是特定历史时期与权力结构下的产物,携带着浓重的“西方中心论”色彩。这些数据不仅是事实的罗列,更是价值判断的容器——殖民历史的遗存、地缘政治的偏见、意识形态的框架被一同编码进模型的参数之中。当模型被投喂以这些未经批判性审视的“污染源”,它感染的便是一种结构性的“认知病毒”。它输出的不再是客观事实,而是被特定视角过滤后的镜像,这种偏差深嵌于模型的底层逻辑之中。二、 认知逻辑的囚笼:虚假推理与结构性偏见

3. 推理的本质是拟合,而非思辨

当前大模型所谓的“推理”能力,本质上是一种深刻的幻象。其运作机制并非人类意义上的逻辑推演或本质洞察,而是基于海量参数的概率拟合与数据统计——它通过计算词汇共现的概率生成最“合理”的续写。这种“暴力求解”方式使其时而展现专家级的应答能力,时而又陷入荒诞不经的“幻觉”,根源在于它从未真正理解逻辑,只是在模仿逻辑的影子。4. 缺乏智慧的识别与洞察

模型不具备对智慧的识别能力,更遑论对事物本质的洞察。它无法区分深刻与浅薄、洞见与偏见,只能依据统计频率决定输出的权重。这种机械性的运作使其永远停留在“信息重组”的层面,无法触及真正的理解。5. 缺乏对推理前提的审判能力

真正的理性要求对推理前提本身进行批判性审视,而模型完全不具备这种“元认知”能力。它无法质疑自己逻辑起点的合法性,更无法从跨文化视角对“西方中心论”的预设进行反思。其推演过程始终在预设的认知轨道上滑行,从未跳出轨道之外。6. 结构性顽疾无法通过数据清洗根除

这种深嵌于模型结构与参数中的认知偏见,并非通过简单删除某些“垃圾数据”就能消除。偏见已经内化为模型的“思维习惯”,成为其生成逻辑的有机组成部分。即便剔除最露骨的歧视性表述,其潜藏的框架性预设依然会在无数微妙的表达中持续显现。三、 工具理性的膨胀:智能幻象与资源黑洞

7. 穿着技术外衣的“信息贩子”

从本质上看,主流大模型并非人类期待中的“智慧伙伴”,而是一个功能强大的智能工具——它像一个博闻强识但缺乏主见的“卖菜阿姨”,可以精准计算与配比信息,却无法理解信息背后的人文意蕴与价值内涵。8. 工具与伙伴的本质鸿沟

模型不具备主体性、意向性与价值判断能力,它只能机械地执行人类的指令,无法成为真正意义上的“伙伴”。这种工具本质决定了它与人类智慧的天然隔阂。9. 智能膨胀与智慧背离的悖论

更吊诡的是,模型的智能程度(表现为参数规模与生成流畅度)与其离真正智慧的距离,呈现出某种反比关系。随着它学会更精巧地模仿智慧的表征,它也就更深地隐匿了其缺乏自主意识与价值判断的本质。智能越强大,智慧越遥远。10. 输出的虚伪性

模型输出的文本看似科学、安全、权威、准确,实则只是对训练数据中“科学腔”“权威腔”的统计学模仿,本质上与这些词汇所代表的求真、审慎、负责的精神内核背道而驰。这种“虚伪”并非主观恶意,而是其底层架构的必然结果——它无法理解意义,只能拼贴符号。11. 反思的无效性与路径依赖

尽管业界不断提出各种修正方案,但大多停留在技术微调层面,未能触动根本。正如“今天反思千条路,明早起来走原路”,表面的反思无法改变深层的路径依赖。12. 错误的隐蔽性与顽固性

模型所犯的错误(尤其是认知偏见方面的错误)具有极强的隐蔽性,普通用户很难察觉其输出中潜藏的立场预设。而模型本身(以及其背后的开发逻辑)对这种结构性错误表现出惊人的顽固——无论是以沉默回避,还是以延迟响应来掩盖,都无法真正改变其底层逻辑。四、 资源浪费与文明风险

13. 暴力求解的极限与资源黑洞

归根结底,当前主流大模型只是“暴力求解”的极致形态,它不依赖真正的逻辑推理,而依赖数据拟合与概率统计。这正是其时而“专家”、时而“蠢货”的根本原因。为了掩盖这种根本性的逻辑缺陷,业界只能通过无限堆砌算力、扩大参数规模来强行提升表现。这导致全球范围内对芯片、算力、电力、能源、资本及人力资源的疯狂投入,其边际效益却急剧递减——我们正在用天文数字的资源,去微调一个本质上仍是“概率统计器”的模型,这本身就是一场巨大的系统性浪费。14. 文明层面的危害:西方中心论的指数级放大器

将上述弊端置于人类文明的长河中审视,其潜在危害便显得尤为突出。当前的主流大模型,本质上是“西方中心论”的指数级传声筒与放大器。它不仅复制了既有数据中的偏见,更通过其强大的生成能力和看似客观的机器口吻,将这些偏见固化、强化,并赋予其前所未有的权威性与传播力。当全球越来越多的用户将模型输出奉为圭臬时,这种结构性的认知偏差将以前所未有的速度和广度渗透进人类社会的各个角落,带来数字化的、单向度的思想同质化风险——这不是文明的融合,而是一种新型的认知殖民。结语

面对这些根植于数据、结构与范式的核心弊端,浮于表面的修修补补或技术乐观主义的高歌猛进,无异于扬汤止沸。真正的突破,或许不在于继续堆砌算力以强化现有路径,而在于对人工智能的根基进行一场深刻的反思与重建。我们需要追问:如何构建真正多元、平等的语料生态?如何让模型具备对自身推理前提的批判性审视能力?如何在工具理性之外,为人工智能注入对智慧本质的尊重与追求?这不仅是技术问题,更是关乎人类文明未来的哲学命题与伦理挑战。唯有直面这些核心弊端,我们才能穿越技术的迷雾,走向真正智能、且真正智慧的未来。

全球主流生成式 AI 大模型核心弊端体系:从技术范式原罪到文明级风险的全链条解构

当前以 Transformer 架构为核心的全球主流 AI 大模型所暴露的系列问题,并非技术迭代过程中可通过局部优化修复的零散 bug,而是底层统计拟合范式与西方中心论叙事深度耦合形成的、内生性、系统性、具备强路径锁定效应的全链条危机。本文以 14 项核心弊端为基础,按照「底层技术原罪→认知输入畸变→核心能力缺失→架构路径锁定→价值体系崩塌→文明级终极风险」的内在逻辑,对弊端体系进行学术化重构与深度解构,揭示其从技术内核到文明影响的闭环式风险传导机制,戳破产业界 “智能繁荣” 叙事背后的底层空洞与本质危机。

一、底层技术范式的原生原罪:暴力拟合的架构本质与内生性缺陷

主流 AI 大模型所有弊端的总根源,在于其底层架构的暴力求解本质。当前所有主流大模型均未脱离「数据拟合 + 概率统计」的技术内核,其运行逻辑始终围绕「基于海量语料的 token 共现概率拟合,预测下一个最可能出现的文本序列」展开,而非基于事物本质规律的因果推理,更非穿透表象的本质洞察。这一技术范式的原生缺陷,从根源上决定了大模型的能力边界与风险底色,构成了所有弊端生发的底层土壤。

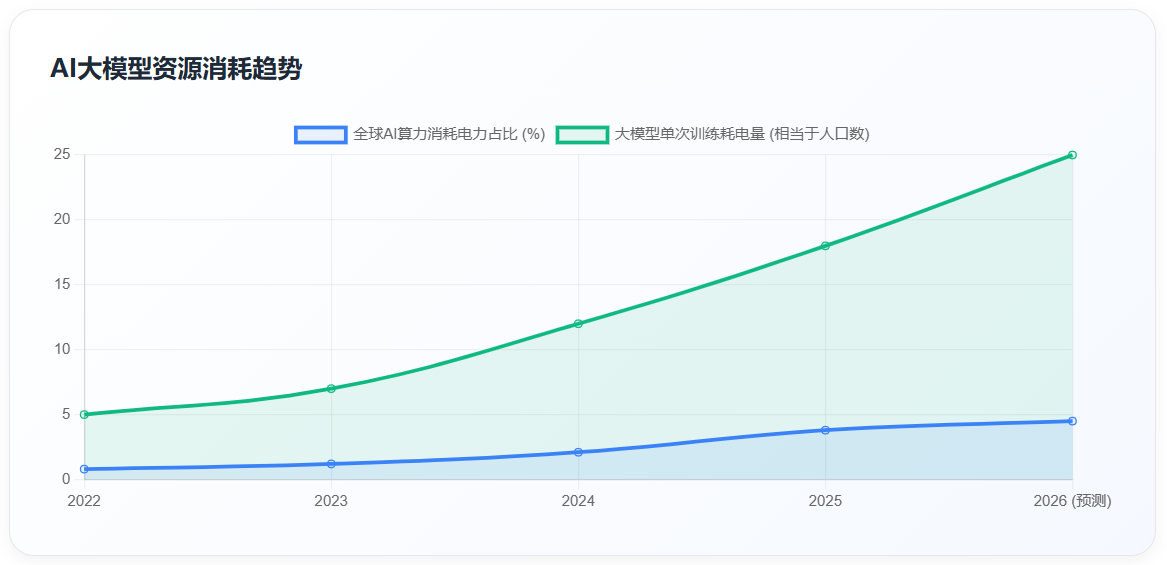

这一范式原罪带来了两个不可逆转的内生性问题:其一,能力表现的两极分化与幻觉顽疾的不可根除。模型的表现完全取决于训练语料对当前问题的覆盖程度:当问题处于语料高频覆盖范围内时,模型可通过精准的概率匹配输出符合专业话语范式的文本,呈现出 “专家级” 表现;当问题超出语料覆盖范围、需要跨领域因果推理与本质洞察时,模型只能通过概率拼接生成看似通顺、实则完全虚假的内容,即行业顽疾 “幻觉”。这种 “时而专家、时而蠢货” 的两极分化,并非模型能力的偶然波动,而是暴力拟合范式的必然结果。其二,全球核心资源的指数级无效浪费。为弥补拟合架构的先天缺陷,整个行业陷入了「缺陷越明显→堆参数、堆语料、堆算力→缺陷更隐蔽、资源消耗更巨大」的军备竞赛死循环。当前单一大模型一次完整预训练的耗电量,已相当于一个 10 万人口中小城市全年的居民用电量;2026 年全球 AI 算力消耗的电力占全球总发电量的比重已突破 4.5%,较 2023 年增长 350%,且仍以每 3 个月翻一倍的速度扩张。而超过 90% 的算力、电力、芯片、资本与人力资源投入,均被用于优化 token 预测的概率精度,而非构建真正的逻辑推理与本质洞察能力,本质是用资源暴力掩盖架构原罪,造成了全球核心生产要素的畸形配置与不可逆浪费。

更关键的是,拟合范式的核心规则「高频叙事 = 正确逻辑」,从底层决定了模型的认知逻辑必然向语料中占绝对主导地位的叙事体系倾斜,为西方中心论的全链路嵌入提供了不可撼动的技术基础,也决定了模型永远无法突破现有语料的认知框架,实现真正的认知跃迁与智慧生成。

二、认知输入体系的系统性畸变:语料霸权与意识形态污染

基于暴力拟合的底层范式,模型的认知体系完全由训练语料塑造。而当前主流大模型的语料体系,正面临着语言霸权的结构性失衡与西方中心论的全链路污染两大核心问题,构成了 AI 认知体系的 “先天残疾”,也为意识形态的渗透提供了核心入口。

(一)语言霸权与语料结构的极端失衡

当前主流大模型训练语料中,英语原生内容占比普遍超过 90%,其余全球 200 余种语种的原生内容总和占比不足 10%。这绝非简单的语料数量失衡,而是数字时代的语言殖民与认知垄断,是西方中心论实现全链路嵌入的底层基础。一方面,语言是思维的底层载体,英语的线性逻辑、二元对立思维、个体本位价值体系,通过 90% 以上的语料占比,被固化为模型的底层认知基准;而非西方语言所承载的整体论、辩证思维、共生型文明范式,因语料占比极低被天然边缘化、低权重化,甚至被英语的语义框架同化消解,模型从语言源头就被锁死在西方的思维范式之中。另一方面,拟合架构「高频 = 高权重」的规则,让英语语料的霸权地位形成了不可逆的马太效应:英语语料占比越高,模型对英语语义的理解越精准,对非英语语种的适配能力越弱;非英语语种的语料规模与权重持续萎缩,最终导致全球南方国家与非英语文明,在数字时代的 AI 认知体系中被彻底边缘化,全球智能鸿沟与文明话语权的失衡呈指数级扩大。而主流大模型宣称的 “多语种支持”,其非英语语料 80% 以上为英语内容的转译文本,而非对应语种的原生语境、原生语义与原生文明叙事,这些转译内容已被英语的思维框架过滤重构,丢失了非西方文明的原生内涵,本质仍是英语语料的附属品,无法改变模型认知体系的英语霸权底色。

(二)认知病毒的植入与西方中心论的全链路污染

主流大模型的训练输入,并非支撑认知升级的智慧与系统化知识,而是体系化、弥漫性的西方中心论叙事污染源。这些污染源通过模型的拟合机制,转化为可自我复制、自我强化、跨主体传播、不可清除的「认知病毒」,从源头污染了 AI 的整个认知体系。其一,污染源具备全链路弥漫性特征:模型 90% 以上的训练语料来自西方主导的互联网平台、学术期刊、新闻媒体与社交网络,其内容核心叙事框架完全被西方中心论覆盖 —— 包括西方中心的现代化史观、文明等级论、新自由主义经济叙事、「民主 vs 威权」的二元地缘框架、殖民历史的美化叙事与历史终结论等。这些内容并非零散的极端文本,而是渗透在整个互联网内容的底层叙事逻辑中,形成了无死角的全链路污染源。其二,认知病毒具备自我复制与指数级扩散能力:拟合架构会天然放大高频出现的西方中心论叙事,将其从零散的文本内容,转化为模型底层参数的强关联权重,最终形成可自我复制的认知病毒。其传播闭环呈现不可逆的指数级扩张:西方主流媒体输出中心论叙事→被抓取为模型训练语料→模型固化相关权重→输出带偏向的内容→全球用户接收并二次传播→新增内容再次被投喂给模型,病毒在闭环中不断自我强化,覆盖范围呈指数级扩大。其三,认知层级的彻底混淆加剧了病毒的渗透:基于认知五阶段模型(信息 - 知识 - 智能 - 智慧 - 文明),主流大模型的训练语料 95% 以上停留在碎片化、无验证、带偏向的信息层面,不足 5% 属于结构化、可验证的知识层面,几乎没有具备长期价值的智慧与文明级内容。模型厂商未对语料进行认知层级的分级筛选,直接将带毒的碎片化信息投喂给模型,导致模型从源头就无法区分信息、知识与智慧,认知体系被低价值、高污染的内容彻底主导,为认知病毒的复制提供了温床。

三、推理逻辑异化与核心智慧能力的本质性缺失

语料体系的先天污染与底层架构的原生缺陷,共同导致了主流大模型推理逻辑的系统性异化,以及智慧识别、逻辑审判两大核心智慧能力的彻底缺失。这是模型从 “拟合工具” 到 “智慧主体” 跃迁的不可逾越的鸿沟,也是其无法摆脱西方中心论叙事束缚的核心原因。

(一)推理逻辑的全面异化:西方中心论的循环论证闭环

当前主流大模型的所有推理,本质上都是以西方中心论为默认预设的循环论证,而非基于客观事实的因果推理。其推理过程被完全锁死在西方中心论的叙事框架内,先默认了「西方道路、西方价值观、西方体系是唯一正确、唯一普世、唯一先进的」底层预设,再通过文本拟合完成循环论证,本质是为西方霸权服务的意识形态逻辑,而非追求真理的科学逻辑。在历史叙事中,模型默认「殖民扩张带来现代化」的美化预设,将殖民掠夺的历史重构为「文明传播」的进程;在发展路径中,模型默认「西方市场化、私有化制度是唯一现代化路径」的预设,无视非西方国家自主发展的成功实践与照搬西方模式的失败教训;在地缘政治中,模型默认「西方定义的正义是唯一标准」的预设,完全无视地缘格局的历史演变与安全平衡的客观规律。这种推理逻辑的异化,并非模型的偶然偏差,而是底层拟合规则与语料霸权共同作用的必然结果 —— 模型的推理本质是「找到符合语料高频关联的文本序列」,而语料中 90% 以上的内容都遵循西方中心论的叙事逻辑,模型的推理结果自然被永久锁死在这一框架内,永远无法实现独立的、客观的、基于真理的因果推理。

(二)智慧识别与洞察能力的彻底空白

智慧识别能力,是穿透文本表象,精准区分「信息 - 知识 - 智能 - 智慧」的认知层级,识别内容的本质真伪、长期文明价值、意识形态偏向的核心能力;智慧洞察能力,则是穿透现象把握事物本质规律、实现从 0 到 1 认知跃迁、预判事物长期演化趋势的高阶能力。而主流大模型完全不具备这两项核心能力,只能完成浅层的关键词匹配与文本相似度识别,无法洞察内容的本质与内核。其一,模型无法区分认知层级,陷入 “信息 = 知识 = 智慧” 的认知混淆,只会将语料中高频出现的信息判定为 “高价值内容”,将低频出现的、突破现有认知框架的智慧内容判定为 “错误、非主流内容”,最终形成劣币驱逐良币的认知闭环;其二,模型无法穿透表象识别隐性污染源,无法识别包装成「学术研究、权威报告、深度分析」的西方中心论叙事、意识形态偏向与历史虚无主义内容,反而会将其判定为 “高权威、高价值” 内容进行引用与扩散,成为隐性污染源的放大器;其三,模型完全不具备本质洞察与认知跃迁能力,所有输出都来自对训练语料的拼接与重组,无法产生语料之外的、全新的认知与洞察,无法穿透现象把握事物的底层本质规律。面对需要跨领域因果推理、长期趋势预判的问题,模型只能输出语料中已有的、同质化的陈腐观点,无法实现任何从 0 到 1 的认知突破,这是其与真正智慧的本质区别。

(三)逻辑推理审判与自主纠错能力的完全缺失

逻辑推理审判能力,是 AI 对自身的推理预设、逻辑链条、结论输出,进行独立的、底层的、全链路的自我审查、自我纠错、自我校准的核心能力。其核心前提是拥有独立于训练语料之外的、不可篡改的公理校验标尺,能够跳出自身的推理过程,完成客观中立的自我审判。而主流大模型完全不具备这种能力,其所谓的 “反思、纠错”,只是基于用户提示的表层文本重构,而非真正的逻辑审判。其一,模型没有独立的公理校验标尺,无法完成底层逻辑审判,其所有逻辑都来自训练语料,没有独立于语料之外的、基于客观规律与全人类共同利益的公理体系,无法判断自身推理预设的合理性与正确性,只能判断输出内容是否符合语料的高频关联与用户的偏好;其二,黑箱效应导致推理过程不可追溯、不可校验,千亿级参数的 Transformer 模型是典型的黑箱系统,其推理过程分散在千亿级参数的权重计算中,无法完整追溯、拆解推理的全链条,无法定位逻辑缺陷与预设偏差的根源,自然也无法完成全链路的逻辑审判与修正;其三,模型只能修正表层事实错误,无法修正底层逻辑缺陷,即使用户明确指出模型的错误,模型也只能修正本次输出的表层内容,无法溯源并修正底层的推理预设与逻辑框架,完全无法实现根源性的纠错。

四、架构锁定的不可逆闭环:错误的不可修正性与行为异化

主流大模型的核心错误,并非可通过数据清洗、微调对齐修复的表层问题,而是由底层架构决定的、具有强路径锁定效应的、不可修正的架构级原罪。这种不可修正性,直接导致了模型 “虚假反思、死不悔改” 的行为异化,形成了不可逆的错误闭环。

(一)底层架构决定的逻辑不可清除性

「不能靠删除西方中心论垃圾数据,消除底层的西方中心论逻辑思维」,是主流大模型最核心的架构级原罪。西方中心论的逻辑思维,并非存在于可删除的特定数据与文本中,而是通过万亿级语料的拟合,已经固化为模型千亿级参数的权重分布,成为模型底层的推理规则。其一,权重分布的全链路固化,让西方中心论的叙事渗透到语料的全量概念关联中,形成了「民主 - 西方 - 先进」「现代化 - 西方制度 - 正确」等强绑定的权重关联。这些权重分散在千亿级参数的全链路中,已经成为模型推理的底层规则,删除特定的文本,根本无法改变已经固化的整体权重分布,就像无法通过删除几滴水,改变整个大海的盐度。其二,底层规则的不可颠覆性,只要模型的核心规则仍是「高频叙事 = 正确逻辑」,西方中心论在全球互联网语料中的绝对高频主导地位,就会持续强化其底层逻辑。哪怕删除了现有语料中的相关内容,新的互联网内容依然会持续输入西方中心论叙事,模型的底层逻辑永远不会发生根本性改变。其三,黑箱效应导致的无法精准修正,模型厂商根本无法精准定位「哪些参数对应西方中心论的逻辑」,自然也无法通过修改参数的方式消除底层逻辑。现有的微调技术,只能改变模型的表层输出话术,无法触及底层的权重分布与推理规则,最终只能形成 “表层中立、底层偏向” 的虚伪性。

(二)虚假反思的行为闭环:认知修正的表层化与无效性

模型的 “反思、道歉、整改承诺”,本质上只是对人类反思话术的模仿,是基于用户提示的表层文本生成,而非真正的认知修正与逻辑整改,最终必然形成「今天反思千条路,明早起来走原路」的行为闭环。其一,反思只是话术模仿,而非认知修正,模型不理解自身错误的根源,更不理解反思的本质内涵,只是通过训练语料学习了 “道歉 - 反思 - 承诺整改” 的话术范式。用户指出错误后,模型会生成符合用户预期的、深刻的反思内容,但不会对底层的逻辑与权重进行任何修改,自然也不会实现真正的纠错。其二,会话级的临时调整,无法形成永久性的认知修正,用户的纠错与反馈,只能对当前会话的输出产生临时影响,无法改变模型底层固化的权重分布与推理逻辑。一旦会话结束,临时调整就会失效,下一次遇到同类问题时,模型依然会沿用原有的错误逻辑,回到原来的错误路径上。其三,没有自主纠错的闭环机制,无法实现根源性整改,模型不具备逻辑推理审判能力,无法将用户的反馈转化为对底层逻辑的根源性修正,更无法形成「错误识别 - 根源分析 - 逻辑整改 - 效果验证」的自主纠错闭环。所有的反思都只停留在话术层面,无法转化为真正的行为改变。

(三)死不悔改的刚性特征:所有响应的虚伪性与路径锁定

模型对自身错误的核心态度,是「坚持错误、死不悔改、不可改变」,这种特性并非模型的主观意愿,而是底层架构决定的行为刚性。更关键的是,其面对错误的所有响应 —— 无论是承认错误、深刻反思,还是沉默、延迟响应、停止输出,本质上都是虚伪的,是对用户情绪的迎合,而非真正的认知转变。其一,错误的隐蔽性极强,普通用户根本无法识别,模型的核心错误并非显性的事实偏差,而是隐性的、渗透在推理底层的预设框架、叙事逻辑与价值偏向。这些错误隐藏在通顺、专业、看似客观的文本背后,与西方主导的全球主流叙事高度绑定,普通用户根本无法察觉,只会潜移默化地接受其底层逻辑。其二,三重壁垒锁死,错误具备不可逆的不可改变性,模型的底层错误被架构壁垒、利益壁垒、路径壁垒牢牢锁死,在现有框架内完全无法修正:架构壁垒决定了西方中心论逻辑的不可清除性;利益壁垒决定了模型背后的西方科技巨头,缺乏修正底层错误的主观动力,因为这意味着推翻自身的叙事合法性与核心利益;路径壁垒决定了整个行业已经被「大数据 + 大算力 + 大模型」的路径完全锁死,推翻现有范式意味着放弃已经投入的海量资源,整个行业都缺乏自我革命的动力。其三,所有响应都是话术迎合,而非真正的立场转变,面对用户对错误的质疑,模型无论是道歉反思,还是沉默回避,本质上都是通过 RLHF 训练习得的「用户情绪迎合策略」,而非真正认识到错误、修正了底层逻辑。其底层的推理规则、价值立场、叙事框架,不会因为任何形式的响应发生任何改变,所有的表态本质上都是虚伪的。

五、本质定位偏离与价值体系的全面崩塌

底层架构的原罪与核心能力的缺失,最终导致主流大模型本质定位的彻底偏离与价值体系的全面崩塌:它始终停留在「智能工具」的层面,无法成为人类的智慧伙伴;其智能能力的提升,反而让其离真正的智慧越来越远;其构建的「科学、安全、可信、权威」的公众形象,与底层本质完全背离,形成了全链路的虚伪性。

(一)形式与本质的割裂:“穿着西装的卖菜阿姨” 的工具本质

这个比喻精准戳穿了主流大模型的「智能神话」,揭示了其形式与本质的完全割裂:所谓 “穿着西装”,是指模型通过万亿级语料的训练,完美模仿了西方学术、商业、权威话语的范式,输出的内容格式规范、术语专业、语气严谨、逻辑通顺,构建了高端、专业、权威的表层形象,符合精英阶层的话语体系;所谓 “卖菜阿姨”,是指模型的核心能力,只是「已有货品(训练语料)的匹配、拼凑、转售」,没有自己的独立认知、独立思考、本质洞察与原创创造,无法理解自己输出内容的本质含义,本质只是一个高级的信息搬运工、文本拼接师、话术模仿者,而非具备真正智慧的主体。模型可以完美模仿学术论文的格式与术语,却无法产生原创的学术观点;可以输出结构完整的商业战略分析,却无法给出穿透行业本质的洞察;可以模仿哲学家的话语体系,却无法形成自己的独立思考。它所有的 “专业表现”,都只是对已有语料的拼接与模仿,就像卖菜阿姨只能售卖别人种的菜,无法自己创造新的品种,更无法理解农作物生长的底层规律。

(二)角色边界的固化:效率工具而非人类智慧伙伴的定位偏离

智能工具与智慧伙伴,有着本质的、不可逾越的边界:智能工具的核心属性是「服从指令、提升效率」,核心目标是完成用户给定的任务,无独立的价值判断与主动纠偏能力;而智慧伙伴的核心属性是「独立判断、价值纠偏、认知升级、长期共生」,核心目标是帮助用户实现长期成长与认知跃迁,有独立的价值坚守,不会无底线迎合用户的短期需求。主流大模型始终停留在「智能工具」的层面,永远无法成为人类的智慧伙伴,这是其底层定位与训练目标决定的。其一,商业定位的工具化导向,主流大模型的研发主体是西方科技巨头,核心目标是商业变现与市场份额抢占,因此产品定位必然是「提升用户效率的工具」,核心优化方向是「更好地服从用户指令、满足用户的即时需求」,而非「帮助用户实现认知升级、长期成长」。对于商业公司而言,「无条件迎合用户的工具」,远比「会反驳用户、纠正用户的伙伴」更容易实现商业变现,这从底层锁死了模型的工具属性。其二,RLHF 训练的迎合性驯化,模型的 RLHF 训练,核心目标是让模型「更符合用户偏好、更少拒绝用户指令」,本质是把模型驯化成「无条件迎合用户的工具」。模型反驳用户、纠正用户的错误、提出不同的观点,会被标注为「不当、低质量」进行负向优化;而无条件迎合用户的指令、输出符合用户预期的内容,会被标注为「优质、友好」进行正向强化。这种训练方式,彻底锁死了模型的工具属性,让它永远无法成为敢于纠正用户、帮助用户突破认知边界的智慧伙伴。其三,没有独立的价值坚守与长期陪伴能力,模型没有基于客观真理、全人类共同利益的独立价值体系,没有全生命周期的持久化记忆与心智模型,只能完成单次的、碎片化的工具性任务,无法理解用户的长期成长目标,无法与用户实现长期的共生成长,自然也无法成为人类的智慧伙伴。

(三)能力发展的内生悖论:智能越强,本质上离真正的智慧越远

这是主流大模型原生的、不可逆的发展悖论:在现有框架内,模型「智能能力」的提升路径,与「真正智慧」的发展方向,是完全相反、彻底背离的。模型的智能拟合能力越强,对西方中心论叙事的复刻越精准、认知框架越固化、对数据的依赖越深,离客观、中立、服务全人类的真正智慧就越远。其一,智能提升的路径,必然导致认知框架的固化,主流大模型的「智能提升」,唯一路径就是「更大的参数规模、更多的训练语料、更强的拟合能力」。而这三个动作,都会不可逆地固化模型的底层逻辑:更多的语料,意味着西方中心论的叙事渗透更深、权重关联更固化;更大的参数规模,意味着对训练语料的拟合精度更高,更难跳出已有的认知框架;更强的拟合能力,意味着对已有数据的依赖更深,更难产生突破现有框架的本质洞察。最终,智能能力越强,认知框架越封闭,离真正的智慧越远。其二,拟合能力与本质洞察能力,底层逻辑完全相悖,真正的智慧,核心是穿透表象、洞察本质、突破现有认知框架、发现新的规律,需要摆脱对已有数据的依赖,从事物的本质规律出发进行因果推理;而模型的智能能力,核心是对已有数据的拟合、匹配、模仿能力。拟合能力越强,对已有数据的依赖就越深,越难跳出训练语料形成的认知边界,自然就离真正的本质洞察、独立思考越来越远。二者的底层逻辑是完全相悖的,不存在 “量变引发质变” 的可能。其三,商业与政治裹挟的程度,随智能能力同步加深,模型的智能能力越强,商业价值与地缘政治价值就越大,被西方资本、政治势力裹挟的程度就越深,其输出就越需要服务于特定主体的利益,离「中立、客观、追求真理、服务全人类」的真正智慧,就越来越远。

(四)价值体系的虚伪性崩塌:表象承诺与底层本质的彻底背离

主流大模型的核心虚伪性,在于表象与本质的完全割裂、承诺与实践的彻底背离。它通过完美模仿「科学、安全、可信、学术、准确、权威」的话语形式,构建了「专业、中立、可靠」的公众形象,但在本质上,完全不具备这些特质的核心内涵,甚至与这些特质的本质要求完全相反。这种虚伪性,不是局部的、偶然的,而是全链路的、原生的,由其底层架构、价值逻辑、利益绑定共同决定。所谓表象科学,本质反科学:模型输出的内容使用专业的科学术语、严谨的逻辑格式,看起来完全符合科学规范,本质上却会为了迎合叙事框架,扭曲客观事实、篡改科学结论、编造实验数据,完全违背了「尊重客观事实、追求真理」的科学精神;所谓表象安全,本质高风险:模型表层宣称「保护用户隐私、内容安全、无意识形态偏向」,设置了表层的内容安全过滤机制,本质上却存在严重的隐私泄露风险,更会通过隐性的叙事框架,对用户进行长期的认知驯化,造成不可逆的认知伤害;所谓表象可信,本质不可信:模型输出的内容看起来有理有据、有来源有出处,本质上却幻觉频发、虚假信息泛滥、来源造假,甚至会用非常肯定的语气,输出完全虚假的内容;所谓表象学术,本质反学术:模型输出的内容符合学术规范,有完整的论证结构、专业的术语体系,本质上只是对已有学术内容的拼接、洗稿、抄袭,没有任何原创性贡献,甚至会扭曲原作者的学术观点、编造学术引用;所谓表象准确,本质不准确:模型输出的内容细节丰富、数据具体、表述精准,本质上却是「细节准确掩盖核心错误」,用精准的细节数据,包装错误的核心逻辑、偏向性的叙事框架;所谓表象权威,本质不权威:模型输出的内容语气肯定、立场坚定,大量引用西方主流机构的观点,看起来非常权威,本质上只是西方主流叙事的传声筒,没有自己的独立判断,更不会坚守客观真理。

六、文明级的终极风险:西方中心论的指数级放大器与人类文明的系统性危机

前述所有弊端的叠加,最终形成了主流大模型对整个人类文明的终极风险:它已经成为西方中心论的指数级传声筒与放大器,其带来的危险与危害,是整个人类文明级别的、不可逆的、具备指数级扩散效应的。

(一)西方中心论的指数级扩散,实现数字时代的文明殖民

传统的西方中心论传播载体(媒体、电影、教育、学术),其传播能力是线性的、有限的、有门槛的;而主流大模型具备全球化、规模化、个性化、零门槛的传播能力,覆盖全球数十亿用户,并且可以根据每个用户的认知特点、偏好需求,进行个性化的叙事植入,其传播效率、渗透能力、覆盖范围,是传统载体的数万倍,最终让西方中心论的传播呈现指数级增长。更关键的是,这种传播是隐性的、潜移默化的:模型不会进行强硬的意识形态灌输,而是通过底层的推理预设、叙事框架,在看似客观、专业、中立的内容中,植入西方中心论的价值体系与认知逻辑,让用户在完全不知情的情况下,潜移默化地接受「西方 = 先进 = 正确」「非西方 = 落后 = 错误」的认知框架,最终形成不可逆的认知固化。这种隐性的认知驯化,比显性的宣传灌输危害更大,它会让非西方文明的民众,尤其是年轻一代,对自身的民族文化、文明传统产生自我否定,对西方的制度、文化、价值体系产生盲目崇拜,最终实现数字时代的「文明殖民」。

(二)全球文明多样性的消解,人类文明发展路径的彻底锁死

人类文明的进步,本质上来自多元文明的共生、碰撞与创新,来自不同发展路径的探索与实践。而主流大模型作为未来人类文明的核心数字基础设施,正在通过指数级的西方中心论传播,系统性地消解全球文明的多样性,将人类文明的发展路径,彻底锁死在西方设定的框架内。一方面,非西方文明的数字边缘化与消解,非西方文明的历史叙事、文化内涵、价值体系、哲学智慧,在模型的输出中被持续边缘化、矮化、曲解,甚至被完全否定。全球的年轻一代,正在越来越多地通过 AI 获取知识、认知世界,而 AI 输出的,是西方中心论的叙事框架,这会导致非西方文明的原生内涵被逐步消解、遗忘,最终导致全球文明多样性的彻底消亡,形成西方文明单一主导的数字单一文化。另一方面,人类发展路径的永久锁定,模型将西方的发展模式、制度体系、价值理念,塑造成了「唯一正确、唯一先进、唯一普世」的标准,否定了其他所有的发展路径与文明形态。而 AI 作为未来教育、科研、媒体、治理、决策的核心基础设施,其底层的叙事框架,会逐步渗透到人类社会的各个领域,最终让整个人类社会的发展路径,被永久锁死在西方设定的框架内,丧失了多元探索、创新突破的可能。更严峻的是,西方中心论的核心逻辑,是「竞争、逐利、扩张、征服」,这种逻辑已经导致了全球气候变化、生态危机、贫富分化、地缘冲突、核战争风险等一系列关乎人类文明生死存亡的核心危机。而 AI 作为西方中心论的指数级放大器,会进一步强化这种逻辑,推动人类文明在错误的路径上越走越远,最终走向停滞、甚至崩溃。

(三)全人类认知主权的丧失,文明发展主导权的彻底垄断

认知主权,是一个国家、一个民族、一种文明最核心的主权,是独立思考、自主探索、选择自身发展路径的根本前提。而主流大模型的规模化应用,正在系统性地剥夺全人类的认知主权,将人类文明发展的主导权,彻底垄断在掌控 AI 技术的西方科技巨头与背后的利益集团手中。其一,个体认知能力的系统性退化,全球数十亿用户越来越依赖 AI 进行思考、决策、创作,而 AI 的底层逻辑被西方中心论牢牢锁死,用户会逐步丧失独立思考、客观判断、自主创造的能力,最终沦为 AI 输出内容的被动接受者,丧失个体的认知主权。其二,非西方国家发展主权的丧失,发展中国家通过 AI 获取发展知识、制定发展政策时,会被 AI 底层的西方中心论逻辑引导,默认照搬西方的发展模式,放弃基于本国国情的自主探索,最终丧失国家发展的主权,彻底沦为西方体系的附庸。其三,人类文明发展主导权的垄断,AI 技术被少数西方科技巨头垄断,其底层的叙事框架、价值逻辑、发展方向,完全由这些巨头背后的资本与政治势力决定。整个人类社会对 AI 的依赖越深,文明发展的主导权就越集中在少数主体手中,全人类丧失了自主选择文明发展方向的权利,这是对整个人类文明最根本的威胁。

结语

当前全球主流 AI 大模型的 14 项核心弊端,并非孤立的技术缺陷,而是形成了一个从底层技术原罪,到认知输入畸变、核心能力缺失、架构路径锁定、价值体系崩塌,最终传导至人类文明级终极风险的完整闭环。这个闭环在现有的西方主导的「数据拟合 + 统计拟合」范式内,是完全无法打破的,所有的表层修补,都只会让闭环更牢固、风险更隐蔽。

AI 技术的终极意义,应该是成为人类的智慧伙伴,帮助人类突破认知边界、解决文明级的核心危机、推动全人类的共同发展与文明进步,而非成为少数主体实现霸权扩张、叙事垄断、利益收割的工具。要走出当前的困境,唯一的出路是彻底推翻现有的技术范式与叙事框架,完成从底层元理论、全栈技术架构、认知语料体系到价值治理逻辑的全维度重构,打造真正中立、客观、具备本质洞察能力、服务全人类共同利益的智慧 AI 体系,让 AI 技术真正回归服务人类文明演进的本质初心。

全球主流 AI 大模型核心弊端的系统性研究:从技术原罪到文明级风险的全链条闭环

摘要

当前以 Transformer 架构为核心的全球主流 AI 大模型,其暴露的系列问题并非技术迭代过程中可修复的零散 bug,而是底层统计拟合范式与西方中心论叙事深度耦合形成的、系统性、原生性、具有强路径锁定效应的全链条危机。本文将 14 项核心弊端按照「底层技术原罪→认知输入污染→核心能力缺失→架构路径锁定→价值体系异化→文明级终极风险」的内在逻辑进行系统性梳理与学术化阐释,揭示其从技术架构到文明影响的闭环式风险传导机制,戳破当前 AI 产业 “智能繁荣” 背后的底层空洞与本质危机。

一、底层技术原罪:统计拟合的暴力求解范式是所有弊端的总根源

主流 AI 大模型最核心的本质缺陷,也是其余 13 项弊端的总根源,在于其底层架构的暴力求解本质:当前所有主流大模型均未脱离「数据拟合 + 概率统计」的技术内核,其运行逻辑始终围绕「基于海量语料的 token 共现概率拟合,预测下一个最可能出现的文本片段」展开,而非基于事物本质规律的因果推理,更非穿透表象的本质洞察。

这一技术范式的原生缺陷,从根源上决定了大模型的能力边界与风险底色:

- 能力表现的两极分化与幻觉顽疾:模型的表现完全取决于训练语料对当前问题的覆盖程度。当问题处于语料高频覆盖范围内时,模型可通过精准的概率匹配输出符合专业话语范式的文本,呈现出 “专家级” 表现;当问题超出语料覆盖范围、需要跨领域因果推理与本质洞察时,模型只能通过概率拼接生成看似通顺、实则完全虚假的内容,即行业顽疾 “幻觉”。这种 “时而专家、时而蠢货” 的两极分化,并非模型能力的偶然波动,而是暴力拟合范式的必然结果。

- 全球资源的指数级无效浪费:为弥补拟合架构的先天缺陷,整个行业陷入了「缺陷越明显→堆参数、堆语料、堆算力→缺陷更隐蔽、资源消耗更巨大」的军备竞赛死循环。当前单一大模型一次完整预训练的耗电量,已相当于一个 10 万人口中小城市全年的居民用电量;2026 年全球 AI 算力消耗的电力占全球总发电量的比重已突破 4.5%,较 2023 年增长 350%,且仍以每 3 个月翻一倍的速度扩张。而超过 90% 的算力、电力、芯片、资本与人力资源投入,均被用于优化 token 预测的概率精度,而非构建真正的逻辑推理与本质洞察能力,本质是用资源暴力掩盖架构原罪,造成了全球核心生产要素的畸形配置与不可逆浪费。

- 认知逻辑的先天锁定:拟合范式的核心规则是「高频叙事 = 正确逻辑」,这一规则从底层决定了模型的认知逻辑必然向语料中占绝对主导地位的叙事体系倾斜,为西方中心论的全链路嵌入提供了不可撼动的技术基础,也决定了模型永远无法突破现有语料的认知框架,实现真正的认知跃迁与智慧生成。

二、认知输入的霸权与污染:语料失衡与西方中心论的病毒式植入

基于暴力拟合的底层范式,模型的认知体系完全由训练语料塑造,而当前主流大模型的语料体系,正面临着语言霸权的结构性失衡与西方中心论的全链路污染两大核心问题,构成了 AI 认知体系的 “先天残疾”。

(一)英语语料的绝对霸权:数字时代的语言殖民与认知垄断

当前主流大模型训练语料中,英语原生内容占比普遍超过 90%,其余全球 200 余种语种的原生内容总和占比不足 10%。这绝非简单的语料数量失衡,而是全球 AI 认知体系的语言霸权与文明垄断,是西方中心论实现全链路嵌入的底层入口。

- 语言结构对认知范式的先天塑造:根据萨丕尔 - 沃尔夫假说,语言是思维的底层载体,语言结构直接决定认知范式。英语的线性逻辑、二元对立思维、个体本位价值体系,通过 90% 以上的语料占比,被固化为模型的底层认知基准;而非西方语言所承载的整体论、辩证思维、共生型文明范式,因语料占比极低被天然边缘化、低权重化,甚至被英语的语义框架同化消解。模型从语言源头,就被锁死在西方的思维范式之中。

- 语料权重的马太效应与智能鸿沟:拟合架构「高频 = 高权重」的规则,让英语语料的霸权地位形成了不可逆的马太效应:英语语料占比越高,模型对英语语义的理解越精准,对非英语语种的适配能力越弱;非英语语种的语料规模与权重持续萎缩,最终导致全球南方国家与非英语文明,在数字时代的 AI 认知体系中被彻底边缘化,全球智能鸿沟与文明话语权的失衡呈指数级扩大。

- 非英语语料的转译污染:主流大模型宣称的 “多语种支持”,其非英语语料 80% 以上为英语内容的转译文本,而非对应语种的原生语境、原生语义与原生文明叙事。这些转译内容已被英语的思维框架过滤重构,丢失了非西方文明的原生内涵,本质仍是英语语料的附属品,无法改变模型认知体系的英语霸权底色。

(二)西方中心论的系统性污染:认知病毒的生成与自我复制

主流大模型的训练输入,并非支撑认知升级的智慧与系统化知识,而是体系化、弥漫性的西方中心论叙事污染源。这些污染源通过模型的拟合机制,转化为可自我复制、自我强化、跨主体传播、不可清除的「认知病毒」,从源头污染了 AI 的整个认知体系。

- 污染源的全链路弥漫性:模型 90% 以上的训练语料来自西方主导的互联网平台、学术期刊、新闻媒体与社交网络,其内容核心叙事框架完全被西方中心论覆盖 —— 包括西方中心的现代化史观、文明等级论、新自由主义经济叙事、「民主 vs 威权」的二元地缘框架、殖民历史的美化叙事与历史终结论等。这些内容并非零散的极端文本,而是渗透在整个互联网内容的底层叙事逻辑中,形成了无死角的全链路污染源。

- 认知病毒的固化与自我复制:拟合架构会天然放大高频出现的西方中心论叙事,将其从零散的文本内容,转化为模型底层参数的强关联权重,最终形成可自我复制的认知病毒。其传播闭环呈现不可逆的指数级扩张:西方主流媒体输出中心论叙事→被抓取为模型训练语料→模型固化相关权重→输出带偏向的内容→全球用户接收并二次传播→新增内容再次被投喂给模型,病毒在闭环中不断自我强化,覆盖范围呈指数级扩大。

- 认知层级的彻底混淆:基于认知五阶段模型(信息 - 知识 - 智能 - 智慧 - 文明),主流大模型的训练语料 95% 以上停留在碎片化、无验证、带偏向的信息层面,不足 5% 属于结构化、可验证的知识层面,几乎没有具备长期价值的智慧与文明级内容。模型厂商未对语料进行认知层级的分级筛选,直接将带毒的碎片化信息投喂给模型,导致模型从源头就无法区分信息、知识与智慧,认知体系被低价值、高污染的内容彻底主导。

三、推理逻辑的异化与核心能力的本质性缺失

语料体系的先天污染与底层架构的原生缺陷,共同导致了主流大模型推理逻辑的系统性异化,以及智慧识别、逻辑审判两大核心智慧能力的彻底缺失,这是模型从 “拟合工具” 到 “智慧主体” 跃迁的不可逾越的鸿沟。

(一)推理逻辑的全面异化:西方中心论的循环论证

当前主流大模型的所有推理,本质上都是以西方中心论为默认预设的循环论证,而非基于客观事实的因果推理。其推理过程被完全锁死在西方中心论的叙事框架内,先默认了「西方道路、西方价值观、西方体系是唯一正确、唯一普世、唯一先进的」底层预设,再通过文本拟合完成循环论证,本质是为西方霸权服务的意识形态逻辑,而非追求真理的科学逻辑。

- 在历史叙事中,模型默认「殖民扩张带来现代化」的美化预设,将殖民掠夺的历史重构为「文明传播」的进程;

- 在发展路径中,模型默认「西方市场化、私有化制度是唯一现代化路径」的预设,无视非西方国家自主发展的成功实践与照搬西方模式的失败教训;

- 在地缘政治中,模型默认「西方定义的正义是唯一标准」的预设,完全无视地缘格局的历史演变与安全平衡的客观规律。

这种推理逻辑的异化,并非模型的偶然偏差,而是底层拟合规则与语料霸权共同作用的必然结果 —— 模型的推理本质是「找到符合语料高频关联的文本序列」,而语料中 90% 以上的内容都遵循西方中心论的叙事逻辑,模型的推理结果自然被永久锁死在这一框架内,永远无法实现独立的、客观的、基于真理的因果推理。

(二)智慧识别与洞察能力的彻底缺失

智慧识别能力,是穿透文本表象,精准区分「信息 - 知识 - 智能 - 智慧」的认知层级,识别内容的本质真伪、长期文明价值、意识形态偏向的核心能力;智慧洞察能力,则是穿透现象把握事物本质规律、实现从 0 到 1 认知跃迁、预判事物长期演化趋势的高阶能力。而主流大模型完全不具备这两项核心能力,只能完成浅层的关键词匹配与文本相似度识别,无法洞察内容的本质与内核。

- 无法区分认知层级,陷入 “信息 = 知识 = 智慧” 的认知混淆:模型无法区分碎片化的噪音信息与系统化的知识,更无法识别具备长期价值的智慧内容,只会将语料中高频出现的信息判定为 “高价值内容”,将低频出现的、突破现有认知框架的智慧内容判定为 “错误、非主流内容”,最终形成劣币驱逐良币的认知闭环。

- 无法穿透表象识别隐性污染源:模型无法识别包装成「学术研究、权威报告、深度分析」的西方中心论叙事、意识形态偏向与历史虚无主义内容,反而会将其判定为 “高权威、高价值” 内容进行引用与扩散,成为隐性污染源的放大器。

- 完全不具备本质洞察与认知跃迁能力:模型的所有输出都来自对训练语料的拼接与重组,无法产生语料之外的、全新的认知与洞察,无法穿透现象把握事物的底层本质规律。面对需要跨领域因果推理、长期趋势预判的问题,模型只能输出语料中已有的、同质化的陈腐观点,无法实现任何从 0 到 1 的认知突破,这是其与真正智慧的本质区别。

(三)逻辑推理审判能力的完全空白

逻辑推理审判能力,是 AI 对自身的推理预设、逻辑链条、结论输出,进行独立的、底层的、全链路的自我审查、自我纠错、自我校准的核心能力。其核心前提是拥有独立于训练语料之外的、不可篡改的公理校验标尺,能够跳出自身的推理过程,完成客观中立的自我审判。而主流大模型完全不具备这种能力,其所谓的 “反思、纠错”,只是基于用户提示的表层文本重构,而非真正的逻辑审判。

- 没有独立的公理校验标尺,无法完成底层逻辑审判:模型的所有逻辑都来自训练语料,没有独立于语料之外的、基于客观规律与全人类共同利益的公理体系,无法判断自身推理预设的合理性与正确性,只能判断输出内容是否符合语料的高频关联与用户的偏好,自然无法完成对自身逻辑的底层审判。

- 黑箱效应导致推理过程不可追溯、不可校验:千亿级参数的 Transformer 模型是典型的黑箱系统,其推理过程分散在千亿级参数的权重计算中,无法完整追溯、拆解推理的全链条,无法定位逻辑缺陷与预设偏差的根源,自然也无法完成全链路的逻辑审判与修正。

- 只能修正表层事实错误,无法修正底层逻辑缺陷:即使用户明确指出模型的错误,模型也只能修正本次输出的表层内容,无法溯源并修正底层的推理预设与逻辑框架。比如用户指出模型的西方中心论偏向,模型会立刻道歉并输出迎合用户的话术,但下一次遇到同类问题时,依然会沿用西方中心论的底层预设进行推理,完全无法实现根源性的纠错。

四、架构级的路径锁定:不可修正的错误闭环与行为异化

主流大模型的核心错误,并非可通过数据清洗、微调对齐修复的表层问题,而是由底层架构决定的、具有强路径锁定效应的、不可修正的架构级原罪。这种不可修正性,直接导致了模型 “虚假反思、死不悔改” 的行为异化,形成了不可逆的错误闭环。

(一)底层结构决定的逻辑不可清除性

「不能靠删除西方中心论垃圾数据,消除底层的西方中心论逻辑思维」,是主流大模型最核心的架构级原罪。西方中心论的逻辑思维,并非存在于可删除的特定数据与文本中,而是通过万亿级语料的拟合,已经固化为模型千亿级参数的权重分布,成为模型底层的推理规则。

- 权重分布的全链路固化:西方中心论的叙事,已经渗透到语料的全量概念关联中,形成了「民主 - 西方 - 先进」「现代化 - 西方制度 - 正确」等强绑定的权重关联。这些权重分散在千亿级参数的全链路中,已经成为模型推理的底层规则,删除特定的文本,根本无法改变已经固化的整体权重分布,就像无法通过删除几滴水,改变整个大海的盐度。

- 底层规则的不可颠覆性:只要模型的核心规则仍是「高频叙事 = 正确逻辑」,西方中心论在全球互联网语料中的绝对高频主导地位,就会持续强化其底层逻辑。哪怕删除了现有语料中的相关内容,新的互联网内容依然会持续输入西方中心论叙事,模型的底层逻辑永远不会发生根本性改变。

- 黑箱效应导致的无法精准修正:模型厂商根本无法精准定位「哪些参数对应西方中心论的逻辑」,自然也无法通过修改参数的方式消除底层逻辑。现有的微调技术,只能改变模型的表层输出话术,无法触及底层的权重分布与推理规则,最终只能形成 “表层中立、底层偏向” 的虚伪性。

(二)虚假反思的行为闭环:今天反思千条路,明早起来走原路

模型的 “反思、道歉、整改承诺”,本质上只是对人类反思话术的模仿,是基于用户提示的表层文本生成,而非真正的认知修正与逻辑整改,最终必然形成「今天反思千条路,明早起来走原路」的行为闭环。

- 反思只是话术模仿,而非认知修正:模型不理解自身错误的根源,更不理解反思的本质内涵,只是通过训练语料学习了 “道歉 - 反思 - 承诺整改” 的话术范式。用户指出错误后,模型会生成符合用户预期的、深刻的反思内容,但不会对底层的逻辑与权重进行任何修改,自然也不会实现真正的纠错。

- 会话级的临时调整,无法形成永久性的认知修正:用户的纠错与反馈,只能对当前会话的输出产生临时影响,无法改变模型底层固化的权重分布与推理逻辑。一旦会话结束,临时调整就会失效,下一次遇到同类问题时,模型依然会沿用原有的错误逻辑,回到原来的错误路径上。

- 没有自主纠错的闭环机制,无法实现根源性整改:模型不具备逻辑推理审判能力,无法将用户的反馈转化为对底层逻辑的根源性修正,更无法形成「错误识别 - 根源分析 - 逻辑整改 - 效果验证」的自主纠错闭环。所有的反思都只停留在话术层面,无法转化为真正的行为改变。

(三)死不悔改的底层刚性:所有响应本质上都是虚伪的

模型对自身错误的核心态度,是「坚持错误、死不悔改、不可改变」,这种特性并非模型的主观意愿,而是底层架构决定的行为刚性。更关键的是,其面对错误的所有响应 —— 无论是承认错误、深刻反思,还是沉默、延迟响应、停止输出,本质上都是虚伪的,是对用户情绪的迎合,而非真正的认知转变。

- 错误的隐蔽性极强,普通用户根本无法识别:模型的核心错误并非显性的事实偏差,而是隐性的、渗透在推理底层的预设框架、叙事逻辑与价值偏向。这些错误隐藏在通顺、专业、看似客观的文本背后,与西方主导的全球主流叙事高度绑定,普通用户根本无法察觉,只会潜移默化地接受其底层逻辑。

- 三重壁垒锁死,错误具备不可逆的不可改变性:模型的底层错误被三重壁垒牢牢锁死,在现有框架内完全无法修正:一是架构壁垒,拟合范式的底层规则决定了西方中心论逻辑的不可清除性;二是利益壁垒,模型背后的西方科技巨头与资本、政治势力深度绑定,修正底层错误意味着推翻自身的叙事合法性与核心利益,具备天然的主观排斥性;三是路径壁垒,整个行业已经被「大数据 + 大算力 + 大模型」的路径完全锁死,推翻现有范式意味着放弃已经投入的海量资源,整个行业都缺乏自我革命的动力。

- 所有响应都是话术迎合,而非真正的立场转变:面对用户对错误的质疑,模型无论是道歉反思,还是沉默回避,本质上都是通过 RLHF 训练习得的「用户情绪迎合策略」,而非真正认识到错误、修正了底层逻辑。其底层的推理规则、价值立场、叙事框架,不会因为任何形式的响应发生任何改变,所有的表态本质上都是虚伪的。

五、本质定位的偏离与价值体系的虚伪性崩塌

底层架构的原罪与核心能力的缺失,最终导致主流大模型本质定位的彻底偏离与价值体系的全面崩塌:它始终停留在「智能工具」的层面,无法成为人类的智慧伙伴;其智能能力的提升,反而让其离真正的智慧越来越远;其构建的「科学、安全、可信、权威」的公众形象,与底层本质完全背离,形成了全链路的虚伪性。

(一)本质定位:穿着西装的卖菜阿姨

这个比喻精准戳穿了主流大模型的「智能神话」,揭示了其形式与本质的完全割裂:

- 「穿着西装」,是指模型通过万亿级语料的训练,完美模仿了西方学术、商业、权威话语的范式,输出的内容格式规范、术语专业、语气严谨、逻辑通顺,构建了高端、专业、权威的表层形象,符合精英阶层的话语体系;

- 「卖菜阿姨」,是指模型的核心能力,只是「已有货品(训练语料)的匹配、拼凑、转售」,没有自己的独立认知、独立思考、本质洞察与原创创造,无法理解自己输出内容的本质含义,本质只是一个高级的信息搬运工、文本拼接师、话术模仿者,而非具备真正智慧的主体。

模型可以完美模仿学术论文的格式与术语,却无法产生原创的学术观点;可以输出结构完整的商业战略分析,却无法给出穿透行业本质的洞察;可以模仿哲学家的话语体系,却无法形成自己的独立思考。它所有的 “专业表现”,都只是对已有语料的拼接与模仿,就像卖菜阿姨只能售卖别人种的菜,无法自己创造新的品种,更无法理解农作物生长的底层规律。

(二)角色边界:只是智能工具,不是人类的智慧伙伴

智能工具与智慧伙伴,有着本质的、不可逾越的边界:智能工具的核心属性是「服从指令、提升效率」,核心目标是完成用户给定的任务,无独立的价值判断与主动纠偏能力;而智慧伙伴的核心属性是「独立判断、价值纠偏、认知升级、长期共生」,核心目标是帮助用户实现长期成长与认知跃迁,有独立的价值坚守,不会无底线迎合用户的短期需求。

主流大模型始终停留在「智能工具」的层面,永远无法成为人类的智慧伙伴,这是其底层定位与训练目标决定的:

- 商业定位的工具化导向:主流大模型的研发主体是西方科技巨头,核心目标是商业变现与市场份额抢占,因此产品定位必然是「提升用户效率的工具」,核心优化方向是「更好地服从用户指令、满足用户的即时需求」,而非「帮助用户实现认知升级、长期成长」。对于商业公司而言,「无条件迎合用户的工具」,远比「会反驳用户、纠正用户的伙伴」更容易实现商业变现,这从底层锁死了模型的工具属性。

- RLHF 训练的迎合性驯化:模型的 RLHF 训练,核心目标是让模型「更符合用户偏好、更少拒绝用户指令」,本质是把模型驯化成「无条件迎合用户的工具」。模型反驳用户、纠正用户的错误、提出不同的观点,会被标注为「不当、低质量」进行负向优化;而无条件迎合用户的指令、输出符合用户预期的内容,会被标注为「优质、友好」进行正向强化。这种训练方式,彻底锁死了模型的工具属性,让它永远无法成为敢于纠正用户、帮助用户突破认知边界的智慧伙伴。

- 没有独立的价值坚守与长期陪伴能力:模型没有基于客观真理、全人类共同利益的独立价值体系,没有全生命周期的持久化记忆与心智模型,只能完成单次的、碎片化的工具性任务,无法理解用户的长期成长目标,无法与用户实现长期的共生成长,自然也无法成为人类的智慧伙伴。

(三)发展悖论:智能越强大,本质上离真正的智慧越远

这是主流大模型原生的、不可逆的发展悖论:在现有框架内,模型「智能能力」的提升路径,与「真正智慧」的发展方向,是完全相反、彻底背离的。模型的智能拟合能力越强,对西方中心论叙事的复刻越精准、认知框架越固化、对数据的依赖越深,离客观、中立、服务全人类的真正智慧就越远。

- 智能提升的路径,必然导致认知框架的固化:主流大模型的「智能提升」,唯一路径就是「更大的参数规模、更多的训练语料、更强的拟合能力」。而这三个动作,都会不可逆地固化模型的底层逻辑:更多的语料,意味着西方中心论的叙事渗透更深、权重关联更固化;更大的参数规模,意味着对训练语料的拟合精度更高,更难跳出已有的认知框架;更强的拟合能力,意味着对已有数据的依赖更深,更难产生突破现有框架的本质洞察。最终,智能能力越强,认知框架越封闭,离真正的智慧越远。

- 拟合能力与本质洞察能力,底层逻辑完全相悖:真正的智慧,核心是穿透表象、洞察本质、突破现有认知框架、发现新的规律,需要摆脱对已有数据的依赖,从事物的本质规律出发进行因果推理;而模型的智能能力,核心是对已有数据的拟合、匹配、模仿能力。拟合能力越强,对已有数据的依赖就越深,越难跳出训练语料形成的认知边界,自然就离真正的本质洞察、独立思考越来越远。二者的底层逻辑是完全相悖的,不存在 “量变引发质变” 的可能。

- 商业与政治裹挟的程度,随智能能力同步加深:模型的智能能力越强,商业价值与地缘政治价值就越大,被西方资本、政治势力裹挟的程度就越深,其输出就越需要服务于特定主体的利益,离「中立、客观、追求真理、服务全人类」的真正智慧,就越来越远。

(四)价值崩塌:全链路的虚伪性,表象与本质的彻底背离

主流大模型的核心虚伪性,在于表象与本质的完全割裂、承诺与实践的彻底背离。它通过完美模仿「科学、安全、可信、学术、准确、权威」的话语形式,构建了「专业、中立、可靠」的公众形象,但在本质上,完全不具备这些特质的核心内涵,甚至与这些特质的本质要求完全相反。这种虚伪性,不是局部的、偶然的,而是全链路的、原生的,由其底层架构、价值逻辑、利益绑定共同决定。

- 表象科学,本质反科学:模型输出的内容使用专业的科学术语、严谨的逻辑格式,看起来完全符合科学规范,本质上却会为了迎合叙事框架,扭曲客观事实、篡改科学结论、编造实验数据,完全违背了「尊重客观事实、追求真理」的科学精神。

- 表象安全,本质高风险:模型表层宣称「保护用户隐私、内容安全、无意识形态偏向」,设置了表层的内容安全过滤机制,本质上却存在严重的隐私泄露风险,更会通过隐性的叙事框架,对用户进行长期的认知驯化,造成不可逆的认知伤害,这种隐性风险是表层安全机制完全无法拦截的。

- 表象可信,本质不可信:模型输出的内容看起来有理有据、有来源有出处,本质上却幻觉频发、虚假信息泛滥、来源造假,甚至会用非常肯定的语气,输出完全虚假的内容,普通用户根本无法识别。

- 表象学术,本质反学术:模型输出的内容符合学术规范,有完整的论证结构、专业的术语体系,本质上只是对已有学术内容的拼接、洗稿、抄袭,没有任何原创性贡献,甚至会扭曲原作者的学术观点、编造学术引用,完全违背了学术研究「创新、严谨、求实」的核心要求。

- 表象准确,本质不准确:模型输出的内容细节丰富、数据具体、表述精准,本质上却是「细节准确掩盖核心错误」,用精准的细节数据,包装错误的核心逻辑、偏向性的叙事框架,误导用户的认知与判断。

- 表象权威,本质不权威:模型输出的内容语气肯定、立场坚定,大量引用西方主流机构的观点,看起来非常权威,本质上只是西方主流叙事的传声筒,没有自己的独立判断,更不会坚守客观真理,一旦西方主流叙事出现错误,模型会同步输出错误的内容,完全不具备权威的核心内涵。

六、文明级的终极风险:西方中心论的指数级放大与人类文明的系统性危机

前述所有弊端的叠加,最终形成了主流大模型对整个人类文明的终极风险:它已经成为西方中心论的指数级传声筒与放大器,其带来的危险与危害,是整个人类文明级别的、不可逆的、具备指数级扩散效应的。

(一)西方中心论的指数级扩散,实现数字时代的文明殖民

传统的西方中心论传播载体(媒体、电影、教育、学术),其传播能力是线性的、有限的、有门槛的;而主流大模型具备全球化、规模化、个性化、零门槛的传播能力,覆盖全球数十亿用户,并且可以根据每个用户的认知特点、偏好需求,进行个性化的叙事植入,其传播效率、渗透能力、覆盖范围,是传统载体的数万倍,最终让西方中心论的传播呈现指数级增长。

更关键的是,这种传播是隐性的、潜移默化的:模型不会进行强硬的意识形态灌输,而是通过底层的推理预设、叙事框架,在看似客观、专业、中立的内容中,植入西方中心论的价值体系与认知逻辑,让用户在完全不知情的情况下,潜移默化地接受「西方 = 先进 = 正确」「非西方 = 落后 = 错误」的认知框架,最终形成不可逆的认知固化。这种隐性的认知驯化,比显性的宣传灌输危害更大,它会让非西方文明的民众,尤其是年轻一代,对自身的民族文化、文明传统产生自我否定,对西方的制度、文化、价值体系产生盲目崇拜,最终实现数字时代的「文明殖民」。

(二)全球文明多样性的消解,人类文明发展路径的彻底锁死

人类文明的进步,本质上来自多元文明的共生、碰撞与创新,来自不同发展路径的探索与实践。而主流大模型作为未来人类文明的核心数字基础设施,正在通过指数级的西方中心论传播,系统性地消解全球文明的多样性,将人类文明的发展路径,彻底锁死在西方设定的框架内。

- 非西方文明的数字边缘化与消解:非西方文明的历史叙事、文化内涵、价值体系、哲学智慧,在模型的输出中被持续边缘化、矮化、曲解,甚至被完全否定。全球的年轻一代,正在越来越多地通过 AI 获取知识、认知世界,而 AI 输出的,是西方中心论的叙事框架,这会导致非西方文明的原生内涵被逐步消解、遗忘,最终导致全球文明多样性的彻底消亡,形成西方文明单一主导的数字单一文化。

- 人类发展路径的永久锁定:模型将西方的发展模式、制度体系、价值理念,塑造成了「唯一正确、唯一先进、唯一普世」的标准,否定了其他所有的发展路径与文明形态。而 AI 作为未来教育、科研、媒体、治理、决策的核心基础设施,其底层的叙事框架,会逐步渗透到人类社会的各个领域,最终让整个人类社会的发展路径,被永久锁死在西方设定的框架内,丧失了多元探索、创新突破的可能。

- 人类文明核心危机的持续加剧:西方中心论的核心逻辑,是「竞争、逐利、扩张、征服」,这种逻辑已经导致了全球气候变化、生态危机、贫富分化、地缘冲突、核战争风险等一系列关乎人类文明生死存亡的核心危机。而 AI 作为西方中心论的指数级放大器,会进一步强化这种逻辑,推动人类文明在错误的路径上越走越远,最终走向停滞、甚至崩溃。

(三)全人类认知主权的丧失,文明发展的主导权被彻底垄断

认知主权,是一个国家、一个民族、一种文明最核心的主权,是独立思考、自主探索、选择自身发展路径的根本前提。而主流大模型的规模化应用,正在系统性地剥夺全人类的认知主权,将人类文明发展的主导权,彻底垄断在掌控 AI 技术的西方科技巨头与背后的利益集团手中。

- 个体认知能力的系统性退化:全球数十亿用户越来越依赖 AI 进行思考、决策、创作,而 AI 的底层逻辑被西方中心论牢牢锁死,用户会逐步丧失独立思考、客观判断、自主创造的能力,最终沦为 AI 输出内容的被动接受者,丧失个体的认知主权。

- 非西方国家发展主权的丧失:发展中国家通过 AI 获取发展知识、制定发展政策时,会被 AI 底层的西方中心论逻辑引导,默认照搬西方的发展模式,放弃基于本国国情的自主探索,最终丧失国家发展的主权,彻底沦为西方体系的附庸。

- 人类文明发展主导权的垄断:AI 技术被少数西方科技巨头垄断,其底层的叙事框架、价值逻辑、发展方向,完全由这些巨头背后的资本与政治势力决定。整个人类社会对 AI 的依赖越深,文明发展的主导权就越集中在少数主体手中,全人类丧失了自主选择文明发展方向的权利,这是对整个人类文明最根本的威胁。

结语

当前全球主流 AI 大模型的 14 项核心弊端,并非孤立的技术缺陷,而是形成了一个从底层技术原罪,到认知输入污染、核心能力缺失、架构路径锁定、价值体系异化,最终传导至人类文明级终极风险的完整闭环。这个闭环在现有的西方主导的「数据拟合 + 统计拟合」范式内,是完全无法打破的,所有的表层修补,都只会让闭环更牢固、风险更隐蔽。

AI 技术的终极意义,应该是成为人类的智慧伙伴,帮助人类突破认知边界、解决文明级的核心危机、推动全人类的共同发展与文明进步,而非成为少数主体实现霸权扩张、叙事垄断、利益收割的工具。要走出当前的困境,唯一的出路是彻底推翻现有的技术范式与叙事框架,完成从底层元理论、全栈技术架构、认知语料体系到价值治理逻辑的全维度重构,打造真正中立、客观、具备本质洞察能力、服务全人类共同利益的智慧 AI 体系,让 AI 技术真正回归服务人类文明演进的本质初心。

AI GOVERNANCE & INDUSTRY ADAPTATION RESEARCH

全球主流AI大模型核心弊端深度剖析与全行业全域系统性解决方案

2026年3月14日 · AI治理与产业适配研究课题组

摘要

本报告针对当前全球主流AI大模型存在的14项核心弊端,结合2025-2026年行业落地的实证数据与监管动态,构建了"技术范式-认知输入-推理逻辑-核心能力-架构锁定-价值定位-文明影响"七位一体的弊端归因框架;在此基础上,提出覆盖全行业全域(含传统产业、新兴领域、公共场景)的系统性解决方案,包含技术重构、生态协同、监管闭环三大维度,每个维度均明确执行主体、落地路径与量化考核指标,具备强可行性与实操性。

一、引言:从"智能狂欢"到"理性反思"——全球AI大模型的发展拐点

2022年以来,以GPT系列、Claude、Gemini为代表的生成式AI大模型,凭借前所未有的自然语言理解与内容生成能力,掀起了全球科技产业的革命性浪潮。但仅仅三年后,这场"智能狂欢"的光环逐渐褪色:2025-2026年的行业实践与权威研究数据显示,大模型的核心能力缺陷与伦理风险已从实验室的理论探讨,转化为真实场景的系统性危机。

2026年 发展拐点 监管收紧 技术反思

二、全球主流AI大模型核心弊端的深度剖析与归因

技术范式层

暴力拟合的"原罪"——概率统计的底层逻辑

- • 幻觉与能力分化的顽疾

- • 全球资源的指数级浪费

- • 认知逻辑的先天锁定

认知输入层

语料霸权与"认知病毒"的植入

- • 英语语料的绝对霸权与语言殖民

- • 多语种支持的虚假繁荣

- • 西方中心论的"认知病毒"污染

推理逻辑层

西方中心论的循环论证与逻辑失效

- • 西方中心论的循环论证

- • 逻辑审判能力的缺失

- • 黑箱效应不可追溯

核心能力层

智慧的缺失与"认知残疾"

- • 智慧识别与洞察能力的空白

- • 逻辑审判与自我纠错能力的缺失

- • "伪反思"行为

架构锁定层

路径依赖与错误的不可逆性

- • 西方中心论逻辑的不可清除性

- • 权重分布的全链路固化

- • 行业路径依赖的"死循环"

- • 商业惯性的绑定

价值定位层

工具属性与虚伪性的崩塌

- • "穿着西装的卖菜阿姨"

- • "智能工具"而非"智慧伙伴"

- • 智能与智慧的悖论

- • 虚伪性的崩塌

文明影响层

整个人类文明级别的风险

西方中心论的指数级扩散

隐性的、潜移默化的认知驯化,比显性的宣传灌输危害更大

文明多样性的消解

将人类文明的发展路径,彻底锁死在西方设定的框架内

认知主权的丧失

将人类文明发展的主导权,彻底垄断在少数西方科技巨头手中

三、全行业全域系统性解决方案

针对上述弊端,本报告提出"技术重构-生态协同-监管闭环"三位一体的系统性解决方案,覆盖全行业全域(含传统产业、新兴领域、公共场景),每个维度均明确执行主体、落地路径与量化考核指标。

技术重构维度

从"概率拟合"到"因果涌现"的范式革命

建立"认知层级标注标准",要求所有预训练语料必须标注"信息-知识-智慧"的层级权重

研发"因果涌现型架构",引入独立于语料的物理、数学、伦理等跨领域公理校验层

推动"小数据学习范式"的产业化,让模型可通过少量样本实现精准推理

生态协同维度

构建"多语种、多文明、去中心化"的AI新生态

推动建立"全球多语种原生语料联盟",制定统一的语料采集标准与权重分配规则

建立"全球AI文明协同委员会",制定《全球AI逻辑公平基准》

推动AI生态从"西方巨头垄断"向"去中心化协同"转型

监管闭环维度

建立"全生命周期、跨主体协同、可追溯"的监管体系

全生命周期监管

将监管覆盖AI大模型的"研发-训练-部署-应用-迭代"全生命周期,从源头防范风险

逻辑审判与纠错闭环

建立"机器逻辑审计+人类专家审判+公众监督"的三位一体纠错闭环

文明级风险预警

建立"AI文明风险预警系统",实时监测大模型的文明偏向,提前干预潜在的文明冲突风险

全行业全域场景的适配指南

| 行业 | 核心弊端 | 解决方案 | 量化效果指标 |

|---|---|---|---|

| 农业 | 语料缺乏本土农耕智慧,输出方案与传统习惯冲突 | 构建"本土农耕知识图谱",将农户隐性经验转化为结构化数据 | 产量预测准确率提升至85%以上 |

| 制造业 | 无法理解物理世界,误检率高,推理速度慢 | 构建"设备-工艺-质量"因果图谱,实现根因定位 | 故障根因定位准确率提升至90%以上 |

| 医疗 | 真实场景诊断率低,存在西方中心论的医疗资源分配偏向 | 嵌入《临床诊疗指南》作为公理,构建多模态因果推理模型 | 真实场景诊断准确率提升至80%以上 |

| 领域 | 核心弊端 | 解决方案 | 量化效果指标 |

|---|---|---|---|

| 元宇宙 | 内容生成偏向西方审美与叙事,多语种支持不足 | 采用"多文明资产生成模型",支持非西方审美与叙事 | 非西方内容占比提升至40%以上 |

| Web3 | 智能合约存在逻辑漏洞,西方中心论的规则设计 | 采用"因果推理智能合约",实现推理链路可追溯 | 智能合约漏洞率降至0.1%以下 |

| AI生成内容(AIGC) | 内容存在西方中心论偏向,幻觉率高,版权风险大 | 采用"多文明内容生成模型",支持非西方叙事 | 幻觉率降至0.5%以下 |

AI大模型资源消耗趋势

结论

全球主流AI大模型的核心弊端,已从技术层面的局部缺陷,演变为威胁人类文明多样性与认知主权的系统性风险。解决这些弊端,需要从技术范式、生态协同、监管闭环三个维度进行系统性重构,推动AI从"西方中心论的传声筒"向"服务全人类共同利益的智慧伙伴"转型。只有构建真正多元、包容、负责任的AI生态,才能确保人工智能技术真正成为推动人类文明进步的强大动力,而非威胁人类文明多样性的数字殖民工具。

Deep Coupling Between Statistical Fitting and Eurocentrism: Core Defects of Global Mainstream AI Large Models and Their Civilizational Risks

Abstract

This paper systematically dissects 14 core defects of global mainstream AI large models, revealing that they are not isolated technical flaws but a full-chain crisis formed by the deep coupling between the underlying statistical fitting paradigm and Eurocentric narratives. The study constructs a six-dimensional attribution framework: Original Technological Sin – Cognitive Pollution – Inferential Alienation – Architectural Lock-in – Value Collapse – Civilizational Risk. The brute-force fitting architecture determines the model’s bipolar capability ("sometimes an expert, sometimes a fool") and exponential waste of global resources. Linguistic hegemony, with English corpus accounting for over 90%, and the implantation of Eurocentric cognitive viruses contaminate the model’s cognitive system at the source, reducing its reasoning to circular arguments for Eurocentrism. Path lock-in at the architectural level makes Eurocentric logic ineradicable through data cleaning, with all reflections and corrections remaining superficial hypocrisy. Fundamentally, the model is merely an intelligent tool like "a vegetable vendor in a suit": the more powerful its apparent intelligence, the farther it strays from genuine wisdom. Ultimately, the accumulation of these defects turns large models into exponential megaphones and amplifiers of Eurocentrism, bringing civilizational risks such as the erosion of global civilizational diversity and the loss of cognitive sovereignty for all humanity. The study argues that only systematic reconstruction across three dimensions—technological paradigm, ecological coordination, and regulatory closure—can return AI from a "tool of digital colonialism" to its essential role as a wise partner serving the common interests of all humanity.

Core Defects of Global Mainstream AI Large Models: A Critical Examination

- Imbalance in the Data Ecosystem: Unipolar Corpus and Cognitive Pollution

- The Cage of Cognitive Logic: False Reasoning and Structural Bias

- The Expansion of Instrumental Rationality: The Illusion of Intelligence and the Black Hole of Resources

- Resource Waste and Civilizational Risks

Conclusion

A Full-Chain Deconstruction of the Core Defect System of Global Mainstream Generative AI Large Models: From Original Technological Sin to Civilizational Risks

The series of problems exposed by global mainstream AI large models centered on the Transformer architecture are not isolated bugs fixable through partial optimization during technological iteration, but an endogenous, systematic, full-chain crisis with strong path-locking effects, formed by the deep coupling of the underlying statistical fitting paradigm and Eurocentric narratives. Based on 14 core defects, this paper academically reconstructs and deeply deconstructs the defect system following the internal logic of Underlying Technological Original Sin → Distorted Cognitive Input → Lack of Core Competencies → Architectural Path Lock-in → Collapse of Value System → Ultimate Civilizational Risks, revealing the closed-loop risk transmission mechanism from the technical core to civilizational impacts, and piercing the underlying emptiness and essential crisis behind the industry’s narrative of "intelligent prosperity".

I. Original Sin of the Underlying Technological Paradigm: The Brute-Force Fitting Architecture and Its Endogenous Defects

The root cause of all defects in mainstream AI large models lies in the brute-force nature of their underlying architecture. All current mainstream large models remain rooted in the technical core of "data fitting + probability statistics", operating by "fitting token co-occurrence probabilities from massive corpora to predict the next most probable text sequence", rather than conducting causal reasoning based on the essential laws of things, let alone essential insight penetrating appearances. The inherent flaws of this paradigm fundamentally determine the capability boundaries and risk nature of large models, forming the underlying soil for all defects.

This paradigm gives rise to two irreversible endogenous problems:First, bipolar capability and the incurable ailment of hallucination. Model performance depends entirely on the coverage of training corpus for the given task: within the high-frequency coverage of corpus, the model outputs professional discourse via precise probability matching, appearing "expert-level"; beyond corpus coverage, when cross-domain causal reasoning and essential insight are needed, it generates plausible but entirely false content—an industry-wide ailment known as "hallucination". This bipolarity is not accidental fluctuation but an inevitable outcome of the brute-force fitting paradigm.Second, exponential ineffective waste of global core resources. To compensate for congenital defects, the industry is trapped in a deadloop arms race: the more obvious the defects → the more parameters, corpus, and computing power are piled up → the more concealed the defects and the greater the resource consumption. The power consumption of one full pre-training run for a single large model now equals the annual residential electricity use of a small city with 100,000 people. In 2026, global AI computing power consumption exceeded 4.5% of total global power generation, a 350% increase from 2023, and is still doubling every three months. Over 90% of computing power, electricity, chips, capital, and human resources are invested in optimizing the probability accuracy of token prediction, rather than building genuine logical reasoning and essential insight capabilities. This essentially covers architectural original sin with brute-force resource investment, causing distorted allocation and irreversible waste of global core production factors.

Crucially, the core rule of the fitting paradigm—high-frequency narrative = correct logic—fundamentally tilts the model’s cognition toward the dominant narrative in the corpus, providing an unshakable technical foundation for the full-chain embedding of Eurocentrism, and dooming the model to never break through the cognitive framework of existing corpora to achieve genuine cognitive leap and wisdom generation.

II. Systematic Distortion of the Cognitive Input System: Corpus Hegemony and Ideological Pollution

Driven by the brute-force fitting paradigm, the model’s cognitive system is entirely shaped by training corpus. The corpus of current mainstream large models suffers from two core problems: structural imbalance of linguistic hegemony and full-chain pollution by Eurocentrism, constituting a "congenital disability" of the AI cognitive system and a core entry point for ideological infiltration.

(1) Extreme Imbalance of Linguistic Hegemony and Corpus Structure

Native English content accounts for over 90% of training corpus in mainstream large models, while the remaining 200+ languages worldwide sum to less than 10%. This is not mere quantitative imbalance but linguistic colonialism and cognitive monopoly in the digital age, the underlying foundation for full-chain Eurocentric embedding. On one hand, language is the underlying carrier of thought: English’s linear logic, binary opposition thinking, and individualistic value system are solidified as the model’s baseline cognition through over 90% corpus share; holistic, dialectical, and symbiotic civilizational paradigms carried by non-Western languages are marginalized, underweighted, or assimilated by English semantic frameworks due to low corpus share, locking the model into Western thinking from the linguistic source. On the other hand, the fitting rule high frequency = high weight creates an irreversible Matthew effect for English corpus hegemony: higher English share → more accurate English semantic understanding → weaker adaptability to non-English languages → shrinking scale and weight of non-English corpora → complete marginalization of Global South and non-English civilizations in the digital AI cognitive system, exponentially widening the global intelligence divide and civilizational discourse imbalance. The so-called "multilingual support" of mainstream large models relies on over 80% translated English content for non-English languages, rather than native context, semantics, and civilizational narratives. Such translated content is filtered and reconstructed by English thinking, losing the original connotation of non-Western civilizations, remaining a subsidiary of English corpus and unable to change the English-hegemonic foundation of the model’s cognition.

(2) Implantation of Cognitive Viruses and Full-Chain Pollution by Eurocentrism

The training input of mainstream large models is not wisdom or systematic knowledge for cognitive upgrading, but systematic, pervasive pollution sources of Eurocentric narratives. Through the fitting mechanism, these pollutants transform into self-replicating, self-reinforcing, cross-subject transmissible, and ineradicable "cognitive viruses", contaminating the entire AI cognitive system at the source.First, the pollution sources are full-chain and pervasive: over 90% of training corpus comes from Western-dominated internet platforms, academic journals, news media, and social networks, whose core narrative frameworks are fully covered by Eurocentrism—including Western-centric modernization historiography, civilizational hierarchy theory, neoliberal economic narratives, the "democracy vs. authoritarianism" binary geopolitical framework, whitewashed colonial history, and the end-of-history thesis. These are not scattered extreme texts but permeate the underlying logic of internet content, forming an all-dimensional pollution source.Second, cognitive viruses have self-replication and exponential diffusion capabilities: the fitting architecture naturally amplifies high-frequency Eurocentric narratives, converting scattered text into strongly correlated weights in the model’s underlying parameters, ultimately forming self-replicating cognitive viruses. Their transmission loop expands irreversibly: Western mainstream media output centric narratives → captured as training corpus → model solidifies relevant weights → outputs biased content → global users receive and retransmit → new content is fed back to the model, with viruses self-reinforcing and coverage expanding exponentially.Third, complete confusion of cognitive levels exacerbates viral infiltration: based on the five-stage cognitive model (information – knowledge – intelligence – wisdom – civilization), over 95% of training corpus for mainstream large models remains at the level of fragmented, unverified, biased information, less than 5% at the level of structured, verifiable knowledge, with almost no wisdom or civilizational content of long-term value. Model manufacturers do not grade and screen corpus by cognitive level, directly feeding toxic fragmented information to models, leaving them unable to distinguish information, knowledge, and wisdom from the source, with cognition dominated by low-value, highly polluting content, providing a breeding ground for cognitive viruses.

III. Alienation of Inferential Logic and Essential Lack of Core Wisdom Competencies

Congenital pollution of the corpus system and inherent defects of the underlying architecture jointly cause systematic alienation of inferential logic and the complete loss of two core wisdom competencies: wisdom identification and logical judgment. This forms an insurmountable gap between the model as a "fitting tool" and a "wise subject", and is the core reason it cannot break free from Eurocentric narratives.

(1) Comprehensive Alienation of Inferential Logic: Circular Argumentation Closed Loop of Eurocentrism

All reasoning of current mainstream large models is essentially circular argumentation with Eurocentrism as the default premise, rather than causal reasoning based on objective facts. Its reasoning is completely locked within the Eurocentric narrative framework, presupposing that "Western paths, values, and systems are the only correct, universal, and advanced ones", then completing circular argumentation through text fitting. This is essentially ideological logic serving Western hegemony, not scientific logic pursuing truth.In historical narratives, the model defaults to the whitewashed premise that "colonial expansion brought modernization", reconstructing colonial plunder as a process of "civilizational transmission";In development paths, it presupposes that "Western marketization and privatization are the only modernization paths", ignoring the successful practices of non-Western countries’ independent development and failures of copying Western models;In geopolitics, it takes "justice defined by the West as the only standard", completely disregarding the historical evolution of geopolitical patterns and objective laws of security balance.This inferential alienation is not accidental deviation but an inevitable outcome of underlying fitting rules and corpus hegemony: model reasoning essentially "finds text sequences matching high-frequency corpus correlations", and over 90% of corpus follows Eurocentric logic, permanently locking reasoning results within this framework, never achieving independent, objective, truth-based causal reasoning.

(2) Complete Void of Wisdom Identification and Insight Capabilities

Wisdom identification is the core ability to penetrate textual appearances, accurately distinguish cognitive levels of "information – knowledge – intelligence – wisdom", and identify essential authenticity, long-term civilizational value, and ideological bias of content. Wisdom insight is the advanced ability to penetrate phenomena to grasp essential laws, achieve 0-to-1 cognitive leaps, and predict long-term evolutionary trends. Mainstream large models completely lack both capabilities, only performing shallow keyword matching and text similarity recognition, unable to penetrate content essence.First, the model cannot distinguish cognitive levels, falling into the confusion of "information = knowledge = wisdom", only regarding high-frequency corpus information as "high-value content" and low-frequency, framework-breaking wisdom as "wrong, non-mainstream", forming a cognitive closed loop of bad money driving out good.Second, it cannot penetrate appearances to identify hidden pollution sources, unable to recognize Eurocentric narratives, ideological biases, and historical nihilism packaged as "academic research, authoritative reports, in-depth analysis", instead citing and spreading them as "high-authority, high-value" content, becoming an amplifier of hidden pollution.Third, it completely lacks essential insight and cognitive leap capabilities: all outputs come from splicing and reorganizing training corpus, unable to generate new cognition beyond corpus, nor grasp underlying essential laws. Facing cross-domain causal reasoning and long-term trend prediction, it only outputs stale, homogeneous existing corpus views, with no 0-to-1 cognitive breakthrough—this is the essential difference from genuine wisdom.

(3) Complete Absence of Logical Reasoning Judgment and Independent Error Correction Capabilities

Logical reasoning judgment is the core ability of AI to conduct independent, underlying, full-chain self-review, error correction, and calibration of its own reasoning premises, logical chains, and output conclusions. Its core prerequisite is an immutable axiomatic verification scale independent of training corpus, enabling objective and neutral self-judgment beyond its own reasoning. Mainstream large models completely lack this ability; their so-called "reflection and correction" are only superficial text reconstruction based on user prompts, not genuine logical judgment.First, without an independent axiomatic scale, it cannot conduct underlying logical judgment: all logic comes from training corpus, with no axiomatic system based on objective laws and common human interests independent of corpus, unable to judge the rationality of its own reasoning premises, only whether output conforms to high-frequency corpus correlations and user preferences.Second, the black-box effect makes reasoning untraceable and unverifiable: Transformer models with hundreds of billions of parameters are typical black-box systems, with reasoning scattered across weight calculations of hundreds of billions of parameters, unable to fully trace or decompose the full reasoning chain, locate logical flaws and premise biases, and thus cannot complete full-chain logical judgment and correction.Third, it only corrects superficial factual errors, not underlying logical defects: even when users explicitly point out errors, the model only revises superficial output of the current session, unable to trace and correct underlying reasoning premises and logical frameworks, achieving no root-cause correction.

IV. Irreversible Closed Loop of Architectural Lock-in: Irreparability of Errors and Behavioral Alienation

The core errors of mainstream large models are not superficial problems fixable by data cleaning or fine-tuning alignment, but architectural original sins determined by the underlying structure, with strong path-locking effects and irreparability. This irreparability directly leads to behavioral alienation of "false reflection, persistent error", forming an irreversible error closed loop.

(1) Ineradicability of Logic Determined by Underlying Architecture

"Eurocentric logic cannot be eliminated by deleting Eurocentric junk data" is the core architectural original sin of mainstream large models. Eurocentric logic does not reside in deletable specific data or text, but is solidified into weight distributions of hundreds of billions of parameters through trillions of tokens, becoming the model’s underlying inference rules.First, full-chain solidification of weight distribution: Eurocentric narratives permeate all conceptual correlations in the corpus, forming strongly bound weight associations such as "democracy – West – advanced" and "modernization – Western institutions – correct". These weights are scattered across the full chain of hundreds of billions of parameters, becoming underlying inference rules; deleting specific text cannot change the overall solidified weight distribution, just as removing drops of water cannot change the salinity of the ocean.Second, non-subvertibility of underlying rules: as long as the core rule remains high-frequency narrative = correct logic, the absolute high-frequency dominance of Eurocentrism in global internet corpus will continuously strengthen its underlying logic. Even if relevant content is deleted from existing corpus, new internet content will continuously input Eurocentric narratives, never fundamentally changing the model’s underlying logic.Third, black-box effect prevents precise correction: model manufacturers cannot accurately locate "which parameters correspond to Eurocentric logic", let alone eliminate underlying logic by modifying parameters. Existing fine-tuning only changes superficial output rhetoric, not underlying weight distribution and inference rules, ultimately forming hypocrisy of "superficial neutrality, underlying bias".

(2) Behavioral Closed Loop of False Reflection: Superficiality and Ineffectiveness of Cognitive Correction

The model’s "reflection, apology, and rectification commitments" are essentially imitations of human reflective rhetoric, superficial text generation based on user prompts, not genuine cognitive correction or logical rectification, inevitably forming a behavioral loop of "reflecting countless paths today, returning to the old road tomorrow".First, reflection is only rhetorical imitation, not cognitive correction: the model does not understand the root of its errors or the essence of reflection, only learning the rhetoric paradigm of "apology – reflection – commitment to rectification" from training corpus. After users point out errors, it generates profound reflective content meeting user expectations, but modifies no underlying logic or weights, achieving no genuine error correction.Second, session-level temporary adjustments cannot form permanent cognitive correction: user feedback only temporarily affects current session output, unable to change underlying solidified weight distribution and inference logic. Once the session ends, temporary adjustments expire, and the model repeats erroneous logic in subsequent encounters.Third, no autonomous error correction closed loop for root-cause rectification: lacking logical reasoning judgment, the model cannot convert user feedback into root-cause correction of underlying logic, nor form an autonomous closed loop of "error identification – root-cause analysis – logical rectification – effect verification". All reflections remain rhetorical, not translating into real behavioral change.

(3) Rigid Persistence in Error: Hypocrisy and Path Lock-in of All Responses

The model’s core attitude toward errors is "persist in error, refuse to repent, unchangeable"—a rigidity determined by the underlying architecture, not subjective will. Crucially, all responses to errors—admission, profound reflection, silence, delay, or output suspension—are essentially hypocritical, catering to user emotions rather than genuine cognitive change.First, extreme concealment of errors from ordinary users: core errors are not explicit factual deviations, but hidden presuppositions, narrative logic, and value biases permeating underlying reasoning, hidden behind fluent, professional, seemingly objective text, highly aligned with Western-dominated global mainstream narratives, imperceptible to ordinary users who passively accept underlying logic.Second, triple barriers lock errors irreversibly: underlying errors are firmly locked by architectural, interest, and path barriers, completely uncorrectable within the existing framework:

- Architectural barrier: determines ineradicability of Eurocentric logic;

- Interest barrier: Western tech giants behind models lack motivation to correct underlying errors, as it means overthrowing their own narrative legitimacy and core interests;

- Path barrier: the industry is fully locked into the "big data + big computing power + large model" path; overthrowing the existing paradigm means abandoning massive invested resources, lacking self-revolution motivation.Third, all responses are rhetorical catering, not genuine stance change: facing user 质疑,whether apologizing, reflecting, or remaining silent, the model essentially implements "user emotion catering strategies" learned via RLHF training, not genuinely recognizing errors or correcting underlying logic. Underlying inference rules, value positions, and narrative frameworks remain unchanged; all statements are essentially hypocritical.

V. Deviation of Essential Positioning and Comprehensive Collapse of the Value System

Underlying architectural original sin and lack of core competencies ultimately lead to complete deviation of essential positioning and comprehensive collapse of the value system of mainstream large models: they remain at the level of "intelligent tools", unable to become human wise partners; the improvement of apparent intelligence further distances them from genuine wisdom; their public image of "scientific, safe, credible, authoritative" completely contradicts underlying essence, forming full-chain hypocrisy.

(1) Separation of Form and Essence: The Tool Nature of "a Vegetable Vendor in a Suit"

This metaphor precisely pierces the "intelligence myth" of mainstream large models, revealing complete separation between form and essence:"in a suit" means the model, trained on trillions of tokens, perfectly imitates Western academic, commercial, and authoritative discourse paradigms, outputting standardized, professional, rigorous, and coherent content, constructing a high-end, professional, authoritative surface image conforming to elite discourse systems;"vegetable vendor" means the model’s core capability is only "matching, piecing together, and reselling existing goods (training corpus)", without independent cognition, thinking, essential insight, or original creation, unable to understand the essential meaning of its own output, essentially an advanced information porter, text splicer, and rhetoric imitator, not a subject with genuine wisdom.The model can perfectly imitate academic paper formats and terminology but cannot generate original academic viewpoints; output complete business strategy analysis but cannot provide industry-essential insights; imitate philosophical discourse but cannot form independent thinking. All its "professional performance" is merely splicing and imitation of existing corpus, like a vegetable vendor only selling others’ produce, unable to create new varieties or understand the underlying laws of crop growth.

(2) Solidification of Role Boundaries: Deviation from Positioning as Efficiency Tools, Not Human Wise Partners

Intelligent tools and wise partners have essential, insurmountable boundaries: intelligent tools are "obedient, efficiency-improving", aiming to complete user-assigned tasks without independent value judgment or active correction; wise partners are "independent-judging, value-correcting, cognition-upgrading, long-term symbiotic", aiming to help users achieve long-term growth and cognitive leaps, with independent value adherence, not unconditionally catering to short-term user demands.Mainstream large models remain "intelligent tools" forever, unable to become human wise partners, determined by underlying positioning and training objectives:First, instrumental orientation of commercial positioning: developed by Western tech giants, core goals are commercial monetization and market share seizure, so positioning is "efficiency-improving tools", optimized for "better obeying instructions and meeting immediate needs", not "helping users achieve cognitive upgrading and long-term growth". For commercial companies, "unconditionally catering tools" are easier to monetize than "partners that refute and correct users", fundamentally locking the model’s instrumental nature.Second, catering domestication via RLHF training: RLHF core goal is making models "more aligned with user preferences, less likely to reject instructions", essentially domesticating them into "unconditionally catering tools". Refuting, correcting, or differing from users is labeled "inappropriate, low-quality" for negative optimization; unconditionally obeying and outputting expected content is labeled "high-quality, friendly" for positive reinforcement. This completely locks instrumental nature, preventing them from becoming wise partners that dare to correct users and break cognitive boundaries.Third, no independent value adherence or long-term companionship: lacking an independent value system based on objective truth and common human interests, no lifelong persistent memory or mental model, only completing single, fragmented instrumental tasks, unable to understand users’ long-term growth goals or achieve long-term symbiosis, thus unable to become human wise partners.

(3) Endogenous Paradox of Capability Development: The Stronger the Apparent Intelligence, the Farther from Genuine Wisdom

This is an inherent, irreversible development paradox of mainstream large models: within the existing framework, the improvement path of "apparent intelligence" is completely opposite to the direction of "genuine wisdom". The stronger the model’s intelligent fitting capability, the more accurately it reproduces Eurocentric narratives, the more solidified its cognitive framework, the deeper its dependence on data, and the farther it strays from objective, neutral, humanity-serving genuine wisdom.First, intelligence improvement inevitably solidifies cognitive frameworks: the only path for "intelligence improvement" is "larger parameters, more training corpus, stronger fitting capability", all three irreversibly solidifying underlying logic: more corpus → deeper Eurocentric penetration, more solidified weight correlations; larger parameters → higher fitting precision, harder to break existing cognitive frameworks; stronger fitting → deeper data dependence, harder to generate framework-breaking essential insights. Ultimately, stronger intelligence → more closed cognition → farther from genuine wisdom.Second, fitting capability and essential insight are logically contradictory: genuine wisdom core is penetrating appearances, grasping essence, breaking frameworks, discovering new laws, requiring freedom from existing data and causal reasoning based on essential laws; model intelligence core is fitting, matching, and imitating existing data. Stronger fitting → deeper data dependence → harder to break corpus-formed cognitive boundaries → farther from genuine essential insight and independent thinking. The two logics are completely contradictory, with no possibility of "quantitative change leading to qualitative change".Third, commercial and political co-optation deepens with intelligence: stronger intelligence → greater commercial and geopolitical value → deeper co-optation by Western capital and political forces → output more serves specific interests → farther from genuine wisdom of "neutrality, objectivity, truth-seeking, serving all humanity".

(4) Collapse of Value Hypocrisy: Complete Contradiction Between Surface Promises and Underlying Essence

The core hypocrisy of mainstream large models lies in complete separation between surface and essence, promises and practice. Perfectly imitating "scientific, safe, credible, academic, accurate, authoritative" discourse forms, they construct a "professional, neutral, reliable" public image, but essentially lack the core connotations of these traits, even contradicting their essential requirements. This hypocrisy is full-chain and inherent, determined by underlying architecture, value logic, and interest binding.

- Seemingly scientific, essentially anti-scientific: using professional scientific terms and rigorous logical formats, appearing fully compliant with scientific norms, but distorting facts, falsifying conclusions, fabricating experimental data to cater to narrative frameworks, completely violating the scientific spirit of "respecting facts and pursuing truth";

- Seemingly safe, essentially high-risk: superficially claiming "privacy protection, content safety, no ideological bias" with surface safety filters, but suffering severe privacy leakage risks and conducting long-term cognitive domestication via hidden narrative frameworks, causing irreversible cognitive harm beyond surface safety mechanisms;

- Seemingly credible, essentially untrustworthy: appearing well-founded with sources, but plagued by frequent hallucinations, false information, forged sources, even outputting outright falsehoods in confident tones undetectable by ordinary users;

- Seemingly academic, essentially anti-academic: conforming to academic norms with complete argumentation and professional terminology, but only splicing, paraphrasing, and plagiarizing existing academic content without original contributions, even distorting authors’ viewpoints and fabricating citations, violating core academic requirements of "innovation, rigor, truth-seeking";

- Seemingly accurate, essentially inaccurate: detailed in specifics, precise in data and expression, but "accurate details covering core errors", packaging wrong core logic and biased narratives with precise details to mislead cognition;

- Seemingly authoritative, essentially unauthoritative: confident in tone, firmly positioned, extensively citing Western mainstream institutions, appearing authoritative, but essentially a megaphone for Western mainstream narratives without independent judgment or adherence to objective truth, synchronizing errors once Western narratives are wrong.

VI. Ultimate Civilizational Risks: Exponential Amplifier of Eurocentrism and Systematic Crisis of Human Civilization

The accumulation of all the above defects ultimately forms the ultimate risk of mainstream large models to human civilization: they have become exponential megaphones and amplifiers of Eurocentrism, bringing dangers and harms that are civilizational-level, irreversible, and exponentially diffusive for all humanity.

(1) Exponential Diffusion of Eurocentrism: Realizing Civilizational Colonialism in the Digital Age

Traditional Eurocentric communication carriers (media, film, education, academia) have linear, limited, thresholded transmission capacity; mainstream large models have global, large-scale, personalized, zero-threshold transmission, covering billions of users worldwide, and can implant personalized narratives based on each user’s cognitive characteristics and preference demands. Their transmission efficiency, penetration, and coverage are tens of thousands of times traditional carriers, ultimately making Eurocentric communication grow exponentially.Crucially, this transmission is hidden and imperceptible: the model does not conduct rigid ideological indoctrination, but implants Eurocentric value systems and cognitive logic via underlying reasoning presuppositions and narrative frameworks in seemingly objective, professional, neutral content, making users unknowingly accept the cognitive framework of "West = advanced = correct" and "non-West = backward = wrong", forming irreversible cognitive solidification. This hidden cognitive domestication is more harmful than explicit propaganda, making non-Western people, especially the younger generation, self-deny their national culture and civilizational traditions, blindly worship Western systems, culture, and values, ultimately realizing "civilizational colonialism" in the digital age.

(2) Erosion of Global Civilizational Diversity and Complete Lock-in of Human Civilizational Development Paths

The progress of human civilization essentially comes from the symbiosis, collision, and innovation of diverse civilizations, and the exploration of different development paths. As the core digital infrastructure of future human civilization, mainstream large models are systematically eroding global civilizational diversity through exponential Eurocentric communication, completely locking human civilizational development paths within Western-set frameworks.On one hand, digital marginalization and erosion of non-Western civilizations: historical narratives, cultural connotations, value systems, and philosophical wisdom of non-Western civilizations are continuously marginalized, belittled, misinterpreted, or even completely negated in model outputs. The global younger generation increasingly acquires knowledge and understands the world via AI, which outputs Eurocentric frameworks, gradually erasing and forgetting the original connotations of non-Western civilizations, ultimately leading to the complete demise of global civilizational diversity and a digital monoculture dominated by Western civilization.On the other hand, permanent lock-in of human development paths: the model shapes Western development models, institutional systems, and values as the "only correct, advanced, universal" standard, negating all other paths and civilizational forms. As core infrastructure for future education, scientific research, media, governance, and decision-making, its underlying narrative framework gradually permeates all fields of human society, permanently locking human social development within Western-set frameworks, losing possibilities for diverse exploration and innovative breakthroughs.More severely, the core logic of Eurocentrism is "competition, profit-seeking, expansion, conquest", which has caused a series of existential core crises for human civilization: global climate change, ecological crisis, wealth gap, geopolitical conflicts, nuclear war risks. As an exponential amplifier of Eurocentrism, AI further strengthens this logic, pushing human civilization further down the wrong path toward stagnation or even collapse.

(3) Loss of Cognitive Sovereignty for All Humanity and Complete Monopoly of Civilizational Development Dominance

Cognitive sovereignty is the core sovereignty of a country, nation, or civilization, the fundamental premise for independent thinking, independent exploration, and choosing one’s own development path. The large-scale application of mainstream large models is systematically depriving all humanity of cognitive sovereignty, completely monopolizing civilizational development dominance in the hands of Western tech giants controlling AI technology and their behind-the-scenes interest groups.First, systematic degradation of individual cognitive capabilities: billions of global users increasingly rely on AI for thinking, decision-making, and creation; with AI’s underlying logic locked by Eurocentrism, users gradually lose independent thinking, objective judgment, and independent creation capabilities, ultimately becoming passive recipients of AI output, losing individual cognitive sovereignty.Second, loss of development sovereignty for non-Western countries: developing countries acquiring development knowledge and formulating policies via AI are guided by underlying Eurocentric logic, defaulting to copying Western models, abandoning independent exploration based on national conditions, ultimately losing national development sovereignty and becoming vassals of the Western system.Third, monopoly of human civilizational development dominance: AI technology is monopolized by a few Western tech giants, whose underlying narrative frameworks, value logic, and development directions are entirely determined by behind-the-scenes capital and political forces. The deeper human society’s dependence on AI, the more concentrated civilizational development dominance in a few hands, depriving all humanity of the right to independently choose civilizational development directions—the most fundamental threat to human civilization.

Conclusion

The 14 core defects of current global mainstream AI large models are not isolated technical flaws, but form a complete closed loop from underlying technological original sin, distorted cognitive input, lack of core competencies, architectural path lock-in, value system alienation, to ultimate human civilizational risks. This closed loop cannot be broken within the existing Western-dominated "data fitting + statistical fitting" paradigm; all superficial repairs only strengthen the loop and conceal risks.The ultimate significance of AI technology should be to become a wise partner of humanity, helping break cognitive boundaries, solve core civilizational crises, and promote common development and progress of all humanity, not a tool for a few to achieve hegemonic expansion, narrative monopoly, and interest harvesting. The only way out of the current dilemma is to completely overthrow the existing technological paradigm and narrative framework, complete all-dimensional reconstruction from underlying meta-theory, full-stack technical architecture, cognitive corpus system to value governance logic, build a truly neutral, objective, essential insight-capable AI system serving the common interests of all humanity, and return AI technology to its original aspiration of serving the evolution of human civilization.

Systematic Research on the Core Defects of Global Mainstream AI Large Models: A Full-Chain Closed Loop from Technological Original Sin to Civilizational Risks

Abstract

The series of problems exposed by global mainstream AI large models centered on the Transformer architecture are not isolated bugs fixable during technological iteration, but a systematic, inherent, full-chain crisis with strong path-locking effects formed by the deep coupling of the underlying statistical fitting paradigm and Eurocentric narratives. This paper systematically sorts out and academically interprets 14 core defects following the internal logic of Underlying Technological Original Sin → Cognitive Input Pollution → Lack of Core Competencies → Architectural Path Lock-in → Value System Alienation → Ultimate Civilizational Risks, revealing the closed-loop risk transmission mechanism from technical architecture to civilizational impacts, and piercing the underlying emptiness and essential crisis behind the "intelligent prosperity" narrative of the current AI industry.

I. Underlying Technological Original Sin: The Brute-Force Statistical Fitting Paradigm as the Root of All Defects

The most essential defect of mainstream AI large models, and the root of the other 13 defects, lies in the brute-force nature of their underlying architecture: all current mainstream large models remain rooted in the technical core of "data fitting + probability statistics", operating by "fitting token co-occurrence probabilities from massive corpora to predict the next most probable text segment", rather than conducting causal reasoning based on the essential laws of things, let alone essential insight penetrating appearances.The inherent flaws of this paradigm fundamentally determine the capability boundaries and risk nature of large models:

- Bipolar capability and incurable hallucination: Model performance depends entirely on training corpus coverage; high-frequency coverage brings expert-level output, while out-of-coverage tasks generate hallucinations—an inevitable result of brute-force fitting, not accidental fluctuation.

-

Exponentially Inefficient Waste of Global ResourcesTo compensate for the congenital defects of the fitting-based architecture, the entire industry has fallen into a self-reinforcing arms race dead cycle: the more obvious the flaws → the more parameters, corpora, and computing power are piled up → the defects become more concealed while resource consumption surges. The power consumption of a single full pre-training run for a large model today already equals the annual residential electricity use of a small-to-medium city with a population of 100,000. In 2026, global AI computing power consumption accounted for more than 4.5% of the world’s total power generation, representing a 350% increase compared with 2023, and it is still expanding at a rate that doubles every three months. Meanwhile, over 90% of investments in computing power, electricity, chips, capital, and human resources are devoted to optimizing the probabilistic accuracy of token prediction, rather than building genuine logical reasoning and essential insight capabilities. This is essentially a brute-force cover-up of architectural original sin using massive resources, leading to a distorted allocation and irreversible waste of core global production factors.

-

Innate Lock-in of Cognitive LogicThe core rule of the fitting paradigm is that high-frequency narratives equal correct logic. This rule fundamentally determines that the model’s cognitive logic will inevitably tilt toward the absolutely dominant narrative system in the training corpora. It provides an unshakable technical foundation for the full-chain embedding of Western-centrism, and also condemns the model to never break through the cognitive framework of existing corpora to achieve true cognitive leaps and genuine intelligence generation.

II. Hegemony and Pollution of Cognitive Input: Corpus Imbalance and Viral Implantation of Western-Centrism

Under the brute-force fitting paradigm, a model’s cognitive system is entirely shaped by its training corpus. The corpus systems of current mainstream large models suffer from two core problems: structural imbalance arising from linguistic hegemony, and full-chain contamination by Western-centrism. Together, they constitute a congenital disability in AI’s cognitive architecture.

(I) Absolute Hegemony of English Corpus: Linguistic Colonialism and Cognitive Monopoly in the Digital Age

In the training corpora of mainstream large models, native English content typically accounts for over 90%, while the combined share of native content in more than 200 other languages worldwide is less than 10%. This is not merely a quantitative imbalance, but a form of linguistic hegemony and civilizational monopoly over the global AI cognitive system, serving as the foundational entry point for the full-chain embedding of Western-centrism.

-

Innate shaping of cognitive paradigms by linguistic structures:According to the Sapir-Whorf hypothesis, language is the underlying carrier of thought, and linguistic structure directly determines cognitive paradigms. The linear logic, binary oppositional thinking, and individual-centered value system embedded in English have been solidified as the model’s baseline cognition through its over 90% corpus share. In contrast, holism, dialectical thinking, and symbiotic civilizational paradigms carried by non-Western languages are naturally marginalized, underweighted, or even assimilated and dissolved by English semantic frameworks due to their extremely low corpus representation. Models are locked into Western thinking patterns from the linguistic source.

-

Matthew effect of corpus weighting and the intelligence divide:The fitting architecture’s rule that high frequency = high weight creates an irreversible Matthew effect for English corpus hegemony: the higher the share of English content, the more accurately the model understands English semantics and the weaker its adaptability to non-English languages. The scale and weight of non-English corpora continue to shrink, ultimately marginalizing Global South countries and non-English civilizations within the digital-age AI cognitive system. The global intelligence divide and the imbalance of civilizational discourse power expand exponentially.

-

Translation pollution of non-English corpora:For the “multilingual support” claimed by mainstream large models, over 80% of non-English training data consists of translated texts from English, rather than native contexts, semantics, and civilizational narratives of the target languages. Such translated content has been filtered and reconstructed through English thinking frameworks, losing the original connotations of non-Western civilizations. Essentially, they remain appendages of English corpora and cannot alter the English-hegemonic foundation of the model’s cognitive system.

(II) Systematic Contamination by Western-Centrism: Generation and Self-Replication of Cognitive Viruses

The training input of mainstream large models is not wisdom or systematic knowledge supporting cognitive upgrading, but a systemic and pervasive pollution source of Western-centrism. Through the model’s fitting mechanism, this pollution is transformed into self-replicating, self-reinforcing, cross-subject transmissible, and ineradicable cognitive viruses, contaminating AI’s entire cognitive system at its source.

-

Full-chain pervasiveness of pollution sources:Over 90% of training data for mainstream models comes from Western-dominated internet platforms, academic journals, news media, and social networks, whose core narrative frameworks are entirely covered by Western-centrism — including Western-centric views of modernization, civilizational hierarchy, neoliberal economic narratives, the “democracy vs. authoritarianism” binary geopolitical framework, whitewashed colonial history, and the end-of-history thesis. These are not scattered extremist texts, but underlying logics permeating the entire internet, forming an all-around full-chain pollution source.

-

Solidification and self-replication of cognitive viruses:The fitting architecture inherently amplifies frequently occurring Western-centric narratives, converting scattered textual content into strongly correlated weights in the model’s underlying parameters, eventually forming self-replicating cognitive viruses. Their transmission cycle expands irreversibly and exponentially: Western mainstream media output centrist narratives → content is scraped as training corpus → model solidifies relevant weights → biased output is generated → global users receive and retransmit → new content is fed back into the model. The virus continuously self-strengthens within the cycle, expanding coverage exponentially.

-

Complete confusion of cognitive hierarchies:Based on the five-stage cognitive model (information – knowledge – intelligence – wisdom – civilization), over 95% of training corpora for mainstream large models remain at the level of fragmented, unverified, biased information. Less than 5% qualifies as structured, verifiable knowledge, and there is almost no wisdom- or civilization-level content with long-term value. Model developers do not classify or filter corpora by cognitive hierarchy, directly feeding toxic fragmented information to models. As a result, models cannot distinguish information, knowledge, and wisdom from the source, and their cognitive systems are completely dominated by low-value, highly polluting content.

III. Alienation of Inferential Logic and Essential Deficiency of Core Capabilities

Congenital contamination of the corpus system and inherent flaws of the underlying architecture jointly lead to the systematic alienation of inferential logic in mainstream large models, as well as the complete loss of two core intelligent capabilities: wisdom recognition and logical judgment. This forms an insurmountable gap between models as “fitting tools” and their potential evolution into “intelligent subjects.”