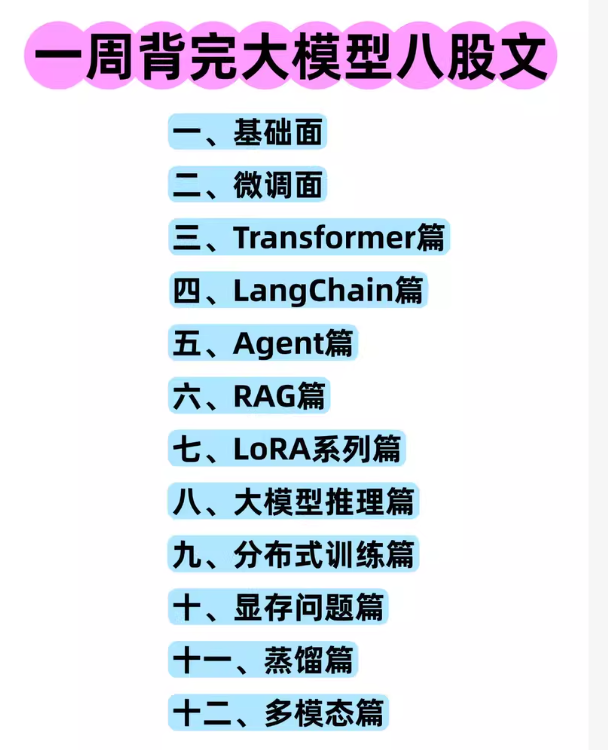

Complete LLM Interview Prep ("Baguwen") Materials

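This is a complete set of LLM "baguwen" materials (standard interview Q&A), compiled from an up-to-date LLM learning curriculum. Topics include fundamentals, fine-tuning, Transformer, LangChain, Agent, RAG, LoRA, inference, distributed training, GPU memory, distillation and multimodal models. It suits anyone preparing for LLM interviews, studying the field, or leveling up.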

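The materials include PDFs, documents and mind maps. Coverage is thorough and the structure is clear, which makes them convenient for review and for finding gaps.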

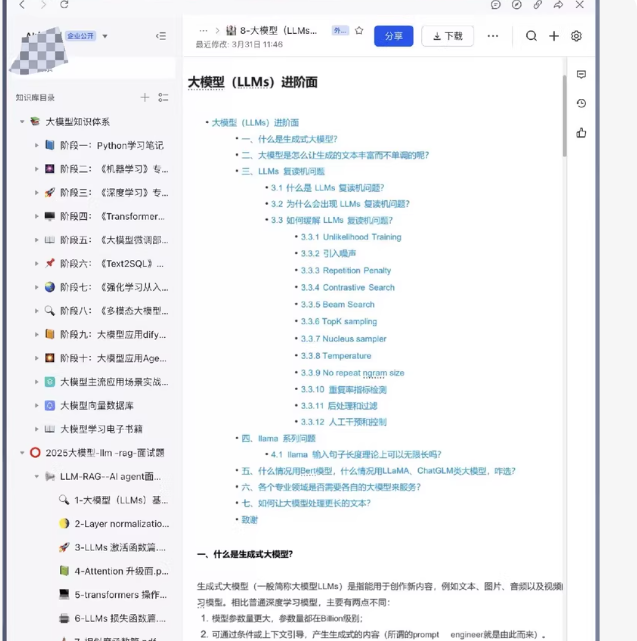

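Materials of this kind usually focus on organizing theory, summarizing interview questions and explaining concepts. Examples:

The structure and principles of the Transformer

The mathematical derivation of LoRA fine-tuning

The RAG workflow

Communication strategies for distributed training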
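Here is a concrete example of the last topic. Data-parallel training usually synchronizes gradients with an all-reduce, and ring all-reduce is one widely used scheme (NCCL implements ring and tree algorithms). The sketch below simulates it in plain Python with hypothetical workers, one scalar per chunk. It runs a reduce-scatter phase and then an all-gather phase, each taking N-1 steps. It illustrates the communication pattern only; it is not how any library actually implements it.

```python
def ring_allreduce(data):
    """Simulate a ring all-reduce (sum) over n workers.

    data[i][k] is chunk k held by worker i; each worker holds n chunks.
    Returns (buffers after the all-reduce, chunks sent per worker).
    """
    n = len(data)
    buf = [list(chunks) for chunks in data]  # copy so the input is not mutated
    sent = [0] * n

    # Phase 1: reduce-scatter. In step s, worker i sends chunk (i - s) % n
    # to its right neighbour, which adds it to its own copy of that chunk.
    for step in range(n - 1):
        # Snapshot all outgoing values first: the sends happen simultaneously
        msgs = [(i, (i - step) % n, buf[i][(i - step) % n]) for i in range(n)]
        for i, k, val in msgs:
            buf[(i + 1) % n][k] += val
            sent[i] += 1
    # Now worker i holds the fully reduced chunk (i + 1) % n.

    # Phase 2: all-gather. In step s, worker i forwards chunk (i + 1 - s) % n,
    # and the neighbour overwrites its stale copy with the reduced value.
    for step in range(n - 1):
        msgs = [(i, (i + 1 - step) % n, buf[i][(i + 1 - step) % n]) for i in range(n)]
        for i, k, val in msgs:
            buf[(i + 1) % n][k] = val
            sent[i] += 1
    return buf, sent


workers = [[1, 2, 3], [10, 20, 30], [100, 200, 300]]
result, sent = ring_allreduce(workers)
print(result)  # every worker ends with [111, 222, 333]
print(sent)    # each worker sent 2 * (n - 1) = 4 chunks
```

Each chunk is 1/N of the data, so every worker sends 2(N-1)/N of the gradient size in total. That amount stays nearly constant as N grows, which is the main reason the ring scheme is popular for bandwidth-bound gradient synchronization.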

Transformer core components (PyTorch)

```python
import math

import torch
import torch.nn as nn


class MultiHeadAttention(nn.Module):
    def __init__(self, d_model, num_heads):
        super().__init__()
        assert d_model % num_heads == 0, "d_model must be divisible by num_heads"
        self.d_k = d_model // num_heads  # dimension per head
        self.num_heads = num_heads
        self.W_q = nn.Linear(d_model, d_model)
        self.W_k = nn.Linear(d_model, d_model)
        self.W_v = nn.Linear(d_model, d_model)
        self.W_o = nn.Linear(d_model, d_model)

    def forward(self, q, k, v, mask=None):
        batch_size = q.size(0)

        # Linear projections, then split into heads: [batch, heads, seq_len, d_k]
        Q = self.W_q(q).view(batch_size, -1, self.num_heads, self.d_k).transpose(1, 2)
        K = self.W_k(k).view(batch_size, -1, self.num_heads, self.d_k).transpose(1, 2)
        V = self.W_v(v).view(batch_size, -1, self.num_heads, self.d_k).transpose(1, 2)

        # Scaled dot-product attention
        scores = torch.matmul(Q, K.transpose(-2, -1)) / math.sqrt(self.d_k)
        if mask is not None:
            # Positions where mask == 0 must not be attended to
            scores = scores.masked_fill(mask == 0, -1e9)
        attn_weights = torch.softmax(scores, dim=-1)
        output = torch.matmul(attn_weights, V)

        # Concatenate the heads and apply the output projection
        output = output.transpose(1, 2).contiguous().view(batch_size, -1, self.num_heads * self.d_k)
        return self.W_o(output)
```
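You can sanity-check the scaled dot-product step in the class above against PyTorch itself. PyTorch 2.0+ ships `torch.nn.functional.scaled_dot_product_attention`, so a hand-written softmax(QKᵀ/√d_k)V can be compared with it directly. The sketch below reimplements only the attention core, using random tensors and arbitrarily chosen shapes. It applies a causal mask with the same `mask == 0` convention as the class, but uses -inf instead of -1e9; both drive masked weights to (effectively) zero.

```python
import math

import torch
import torch.nn.functional as F


def manual_attention(Q, K, V, mask=None):
    """softmax(Q K^T / sqrt(d_k)) V, where mask == 0 means 'do not attend'."""
    d_k = Q.size(-1)
    scores = Q @ K.transpose(-2, -1) / math.sqrt(d_k)
    if mask is not None:
        scores = scores.masked_fill(mask == 0, float("-inf"))
    return torch.softmax(scores, dim=-1) @ V


torch.manual_seed(0)
batch, heads, seq_len, d_k = 2, 4, 5, 8
Q = torch.randn(batch, heads, seq_len, d_k)
K = torch.randn(batch, heads, seq_len, d_k)
V = torch.randn(batch, heads, seq_len, d_k)
causal = torch.tril(torch.ones(seq_len, seq_len))  # broadcasts over batch and heads

ours = manual_attention(Q, K, V, causal)
# PyTorch's built-in kernel; a boolean attn_mask uses True = "may attend"
reference = F.scaled_dot_product_attention(Q, K, V, attn_mask=causal.bool())
print(torch.allclose(ours, reference, atol=1e-5))  # expected: True
```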

LoRA fine-tuning with Hugging Face (PEFT)

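```python
from peft import LoraConfig, get_peft_model
from transformers import AutoModelForCausalLM

# Load the base model (the meta-llama repos are gated: accept the license on the Hub first)
model = AutoModelForCausalLM.from_pretrained("meta-llama/Llama-2-7b-hf")

# Configure LoRA
lora_config = LoraConfig(
    r=8,                                  # rank of the low-rank update
    lora_alpha=32,                        # the update is scaled by lora_alpha / r
    target_modules=["q_proj", "v_proj"],  # a common choice: adapt only the attention Q and V projections
    lora_dropout=0.1,
    bias="none",
    task_type="CAUSAL_LM",
)

# Apply LoRA
model = get_peft_model(model, lora_config)
model.print_trainable_parameters()  # show the number of trainable parameters
```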
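You can sketch by hand what `get_peft_model` attaches to each targeted layer. LoRA freezes the pretrained weight W (d_out × d_in) and learns a low-rank update, so the layer computes h = W x + (α / r) · B A x. Here A has shape r × d_in and B has shape d_out × r. B starts at zero, so training begins from the unchanged base model. The `LoRALinear` class below is a hypothetical minimal sketch (no dropout, no weight merging), not PEFT's implementation. It uses 4096, the hidden size of Llama-2-7B, only for the parameter count.

```python
import math

import torch
import torch.nn as nn


class LoRALinear(nn.Module):
    """Hypothetical minimal LoRA wrapper: y = base(x) + (alpha / r) * B(A(x))."""

    def __init__(self, base: nn.Linear, r: int = 8, alpha: int = 32):
        super().__init__()
        self.base = base
        for p in self.base.parameters():
            p.requires_grad = False  # the pretrained weights stay frozen
        self.A = nn.Parameter(torch.empty(r, base.in_features))
        self.B = nn.Parameter(torch.zeros(base.out_features, r))  # zero init: no change at step 0
        nn.init.kaiming_uniform_(self.A, a=math.sqrt(5))
        self.scaling = alpha / r

    def forward(self, x):
        return self.base(x) + (x @ self.A.T @ self.B.T) * self.scaling


d = 4096  # hidden size of Llama-2-7B, used here only for the parameter count
layer = LoRALinear(nn.Linear(d, d, bias=False), r=8, alpha=32)
trainable = sum(p.numel() for p in layer.parameters() if p.requires_grad)
total = sum(p.numel() for p in layer.parameters())
print(trainable, total)  # 65536 trainable (2 * 8 * 4096) vs 16842752 total
```

For one 4096 × 4096 projection with r=8, the trainable share is about 0.4% of the frozen weight. This is why `print_trainable_parameters()` reports such a small fraction.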

Building a simple RAG application with LangChain

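```python
from langchain.chains import RetrievalQA
from langchain_community.document_loaders import WebBaseLoader
from langchain_community.embeddings import HuggingFaceEmbeddings
from langchain_community.llms import HuggingFaceHub
from langchain_community.vectorstores import FAISS

# Load the document (for long pages, split it into smaller chunks before indexing)
loader = WebBaseLoader("https://example.com/article")
docs = loader.load()

# Build the vector store
embeddings = HuggingFaceEmbeddings(model_name="all-MiniLM-L6-v2")
db = FAISS.from_documents(docs, embeddings)

# Initialize the LLM (reads the HUGGINGFACEHUB_API_TOKEN environment variable;
# newer LangChain releases mark HuggingFaceHub as deprecated)
llm = HuggingFaceHub(repo_id="google/flan-t5-large")

# Build the RAG chain: "stuff" concatenates all retrieved documents into one prompt
qa_chain = RetrievalQA.from_chain_type(
    llm=llm,
    chain_type="stuff",
    retriever=db.as_retriever(),
)

response = qa_chain.invoke({"query": "What is the main point of the article?"})
print(response["result"])
```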
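Without the framework, the `stuff` chain above does three things. It embeds the query, retrieves the most similar chunks, and stuffs them into a single prompt for the LLM. The sketch below shows that flow with a hypothetical bag-of-words `embed` function standing in for a real embedding model, and it makes no LLM call. The prompt template is also an assumption, not LangChain's.

```python
import math
from collections import Counter


def embed(text):
    """Hypothetical toy embedding: bag-of-words counts (a real system uses a neural encoder)."""
    return Counter(text.lower().replace(".", "").replace("?", "").split())


def cosine(a, b):
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, chunks, k=2):
    """Return the k chunks most similar to the query (the role db.as_retriever() plays)."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query, docs):
    """'stuff' strategy: concatenate every retrieved chunk into one prompt."""
    context = "\n\n".join(docs)
    return f"Use the following context to answer the question.\n\n{context}\n\nQuestion: {query}\nAnswer:"


chunks = [
    "LoRA adds trainable low-rank matrices to frozen weights.",
    "FAISS is a library for efficient similarity search.",
    "RAG retrieves relevant documents before generation.",
]
query = "How does RAG use retrieved documents?"
top = retrieve(query, chunks, k=1)
print(build_prompt(query, top))
```

The "stuff" strategy is the simplest option, but the whole context must fit in the model's context window. That is one reason long documents are split into chunks before indexing.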

Distributed training (PyTorch DDP)

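```python
import os

import torch
import torch.distributed as dist
import torch.multiprocessing as mp
import torch.nn as nn
from torch.nn.parallel import DistributedDataParallel as DDP


class MyModel(nn.Module):
    """Placeholder model (hypothetical); replace with your own network."""

    def __init__(self):
        super().__init__()
        self.net = nn.Linear(10, 10)

    def forward(self, x):
        return self.net(x)


def setup(rank, world_size):
    # The default env:// rendezvous reads the master address and port from the environment
    os.environ.setdefault("MASTER_ADDR", "localhost")
    os.environ.setdefault("MASTER_PORT", "12355")
    dist.init_process_group("nccl", rank=rank, world_size=world_size)
    torch.cuda.set_device(rank)


def cleanup():
    dist.destroy_process_group()


def train(rank, world_size):
    setup(rank, world_size)

    # Create the model and move it to this process's GPU
    model = MyModel().to(rank)
    ddp_model = DDP(model, device_ids=[rank])

    # Define the optimizer and data loader (the loader should use a DistributedSampler)
    optimizer = torch.optim.Adam(ddp_model.parameters())

    # ... training loop ...

    cleanup()


if __name__ == "__main__":
    # One process per GPU; mp.spawn passes the process index as the first argument (rank)
    world_size = torch.cuda.device_count()
    mp.spawn(train, args=(world_size,), nprocs=world_size, join=True)
```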
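Two mechanisms make the DDP setup above equivalent to training on the full batch. First, `DistributedSampler` gives each rank a disjoint shard of the data. Second, DDP all-reduces (averages) gradients across ranks during `backward()`. The sketch below checks this equivalence in a single process without a GPU, using a hypothetical toy linear model and MSE loss. Passing `num_replicas` and `rank` explicitly lets `DistributedSampler` run without `init_process_group`. The equality only holds because every shard has the same size and the loss is a mean.

```python
import torch
import torch.nn as nn
from torch.utils.data import DistributedSampler, TensorDataset

torch.manual_seed(0)
world_size = 2
X, y = torch.randn(8, 3), torch.randn(8, 1)
dataset = TensorDataset(X, y)
model = nn.Linear(3, 1)  # hypothetical toy model
loss_fn = nn.MSELoss()   # mean reduction


def grad_on(indices):
    """Gradient of the mean loss over the given sample indices (one 'rank')."""
    model.zero_grad()
    loss_fn(model(X[indices]), y[indices]).backward()
    return model.weight.grad.clone()


# Each rank gets a disjoint shard; with shuffle=False, rank r gets indices r, r + world_size, ...
shards = [list(DistributedSampler(dataset, num_replicas=world_size, rank=r, shuffle=False))
          for r in range(world_size)]
print(shards)  # [[0, 2, 4, 6], [1, 3, 5, 7]]

# What DDP's gradient all-reduce produces: the average of the per-rank gradients
averaged = sum(grad_on(s) for s in shards) / world_size
full_batch = grad_on(list(range(len(dataset))))
print(torch.allclose(averaged, full_batch, atol=1e-6))  # expected: True
```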

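Mainstream open-source model architectures (Prefix Decoder / Causal Decoder / Encoder-Decoder)

Representative models: ChatGLM, LLaMA, T5, BART, etc.

Differences in the attention mechanisms, and where each fits

Implementing the Prefix Decoder vs Causal Decoder attention masks

The forward pass of the Encoder-Decoder architecture

Alternatively, load models of each architecture with Hugging Face and compare their outputs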

🧩 1. Prefix Decoder vs Causal Decoder: attention mask comparison (manual PyTorch implementation)

```python
import torch


def create_causal_mask(seq_len):
    """Causal Decoder: each token can only see itself and earlier tokens."""
    mask = torch.tril(torch.ones(seq_len, seq_len))
    return mask.unsqueeze(0).unsqueeze(0)  # [1, 1, seq_len, seq_len]


def create_prefix_mask(prefix_len, total_len):
    """Prefix Decoder: bidirectional within the prefix, causal in the generated part."""
    # Start from a causal mask: generated tokens see the prefix plus themselves and earlier tokens
    mask = torch.tril(torch.ones(total_len, total_len))
    # Prefix tokens see each other in both directions (but never the generated tokens)
    mask[:prefix_len, :prefix_len] = 1
    return mask.unsqueeze(0).unsqueeze(0)  # [1, 1, total_len, total_len]


# Example: sequence length = 6, prefix length = 2
seq_len = 6
prefix_len = 2

causal_mask = create_causal_mask(seq_len)
prefix_mask = create_prefix_mask(prefix_len, seq_len)

print("=== Causal Decoder Mask ===")
print(causal_mask[0, 0])
print("\n=== Prefix Decoder Mask ===")
print(prefix_mask[0, 0])
```

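📌 Reading the output:

Causal: a lower-triangular matrix → each position sees only itself and earlier positions

Prefix: in the first 2 rows, only the first 2 columns are 1 (prefix tokens see each other bidirectionally but never the generated part). The last 4 rows are causal: each sees the whole prefix plus itself and earlier tokens → suited to instruction tuning / in-context learning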
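To see what the masks do in practice, feed them into an attention computation. A 0 entry becomes -inf before the softmax, so that position receives exactly zero weight. The sketch below rebuilds the 6-token masks with a 2-token prefix inline, so it runs on its own; `prefix_lm_mask` is a hypothetical helper. It uses a bare single-head self-attention with Q = K = the random inputs, a simplification chosen only to expose the weights.

```python
import math

import torch


def prefix_lm_mask(prefix_len, total_len):
    """Hypothetical helper: causal mask whose prefix block is fully visible (1 = may attend)."""
    mask = torch.tril(torch.ones(total_len, total_len))
    mask[:prefix_len, :prefix_len] = 1
    return mask


def attention_weights(x, mask):
    """Single-head self-attention weights with Q = K = x (no projections, for illustration)."""
    scores = x @ x.T / math.sqrt(x.size(-1))
    scores = scores.masked_fill(mask == 0, float("-inf"))
    return torch.softmax(scores, dim=-1)


torch.manual_seed(0)
seq_len, prefix_len = 6, 2
x = torch.randn(seq_len, 4)

causal_w = attention_weights(x, torch.tril(torch.ones(seq_len, seq_len)))
prefix_w = attention_weights(x, prefix_lm_mask(prefix_len, seq_len))

# Token 0 can look "ahead" to token 1 only under the prefix mask
print(causal_w[0, 1].item(), prefix_w[0, 1].item() > 0)  # 0.0 True
# Under the prefix mask, no token attends to a generated token that comes after it
print(torch.triu(prefix_w, diagonal=1)[:, prefix_len:].abs().sum().item())  # 0.0
```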

🧩 2. Encoder-Decoder architecture example (Hugging Face Transformers)

```python
from transformers import T5ForConditionalGeneration, T5Tokenizer

# Load T5, a representative Encoder-Decoder model (T5Tokenizer needs the sentencepiece package)
model_name = "t5-small"
tokenizer = T5Tokenizer.from_pretrained(model_name)
model = T5ForConditionalGeneration.from_pretrained(model_name)

# Input text (e.g. a translation task)
input_text = "translate English to German: Hello world!"
inputs = tokenizer(input_text, return_tensors="pt")

# Target text (used as the decoder's training labels)
target_text = "Hallo Welt!"
targets = tokenizer(target_text, return_tensors="pt")

# Forward pass (training): with labels given, the model shifts them right
# internally to build the decoder inputs and returns the cross-entropy loss
outputs = model(
    input_ids=inputs["input_ids"],
    attention_mask=inputs["attention_mask"],
    labels=targets["input_ids"],
)
loss = outputs.loss
print(f"Loss: {loss.item():.4f}")

# Inference: beam-search generation
generated = model.generate(
    inputs["input_ids"],
    attention_mask=inputs["attention_mask"],
    max_length=20,
    num_beams=4,
    early_stopping=True,
)
print("Generated:", tokenizer.decode(generated[0], skip_special_tokens=True))
```

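📌 Key points:

The Encoder processes the input sequence (bidirectional attention)

The Decoder generates the output sequence (unidirectional self-attention with a causal mask + cross-attention to the encoder output)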
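The same division of labor can be checked without downloading T5, using PyTorch's generic `nn.Transformer` with tiny, arbitrarily chosen sizes. The encoder runs with no mask (bidirectional). The decoder gets a causal `tgt_mask` plus cross-attention to the encoder output (`memory`). The sketch verifies causality behaviorally: changing the last target token must not change the decoder outputs at earlier positions. Random tensors stand in for embedded tokens, and this is not T5's implementation.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
d_model, src_len, tgt_len = 16, 7, 5
model = nn.Transformer(
    d_model=d_model, nhead=4, num_encoder_layers=2, num_decoder_layers=2,
    dim_feedforward=32, dropout=0.0, batch_first=True,
).eval()

src = torch.randn(1, src_len, d_model)  # stand-in for embedded source tokens
tgt = torch.randn(1, tgt_len, d_model)  # stand-in for embedded (shifted-right) target tokens
causal = model.generate_square_subsequent_mask(tgt_len)  # -inf above the diagonal, 0 elsewhere

with torch.no_grad():
    memory = model.encoder(src)                        # bidirectional: no mask on the encoder
    out = model.decoder(tgt, memory, tgt_mask=causal)  # causal self-attn + cross-attn to memory

    tgt_changed = tgt.clone()
    tgt_changed[:, -1] += 1.0  # perturb only the last target position
    out_changed = model.decoder(tgt_changed, memory, tgt_mask=causal)

    src_changed = src.clone()
    src_changed[:, 0] += 1.0   # perturb one source position
    out_src = model.decoder(tgt, model.encoder(src_changed), tgt_mask=causal)

# Earlier decoder positions ignore later target tokens...
print(torch.allclose(out[:, :-1], out_changed[:, :-1], atol=1e-6))  # expected: True
# ...but every decoder position depends on the source through cross-attention
print(torch.allclose(out[:, 0], out_src[:, 0]))  # expected: False
```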

🧩 3. Quick comparison of the three architectures (load with Hugging Face, compare output style)

```python
from transformers import AutoModelForCausalLM, AutoModelForSeq2SeqLM, AutoTokenizer

models_info = {
    "Causal Decoder": ("meta-llama/Llama-2-7b-hf", AutoModelForCausalLM),  # gated repo, large download
    # GLM family, often classified as a Prefix LM; check the attention mask of the exact version
    "Prefix Decoder": ("THUDM/chatglm3-6b", AutoModelForCausalLM),
    "Encoder-Decoder": ("t5-small", AutoModelForSeq2SeqLM),
}

prompt = "The weather is nice today,"

for arch_type, (model_name, model_cls) in models_info.items():
    print(f"\n=== {arch_type} ===")
    try:
        tokenizer = AutoTokenizer.from_pretrained(model_name, trust_remote_code=True)
        model = model_cls.from_pretrained(model_name, trust_remote_code=True)

        if arch_type == "Encoder-Decoder":
            # T5 expects a task prefix
            inputs = tokenizer("translate English to German: " + prompt, return_tensors="pt")
        else:
            inputs = tokenizer(prompt, return_tensors="pt")

        outputs = model.generate(**inputs, max_new_tokens=10, do_sample=False)
        result = tokenizer.decode(outputs[0], skip_special_tokens=True)
        print(f"Input: {prompt}")
        print(f"Output: {result}")
    except Exception as e:
        print(f"Error running {model_name}: {e}")
```

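Architecture comparison: load different models with HF and observe how their generation behavior differs

Hands-on fine-tuning: fine-tune LLaMA / ChatGLM with LoRA

RAG / Agent: build a Q&A bot with LangChain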

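✅ Complete Jupyter Notebook version (with attention-mask visualization)

✅ LoRA fine-tuning script (for ChatGLM or LLaMA)

✅ End-to-end RAG + Agent project code

✅ Code for distributed training and GPU memory optimization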
