ModelEngine System Architecture in Depth: From the Plugin Mechanism to Multi-Agent Collaboration


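In today's fiercely competitive landscape of AI application development platforms, ModelEngine is redefining the standards for agent development with its distinctive technical architecture and innovative feature design. As a technical specialist with long experience in AI platform architecture, I will analyze ModelEngine's core technical characteristics from a system-design perspective and use practical examples to show its value in enterprise applications.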

Architectural Philosophy: Balancing Extensibility and Ease of Use

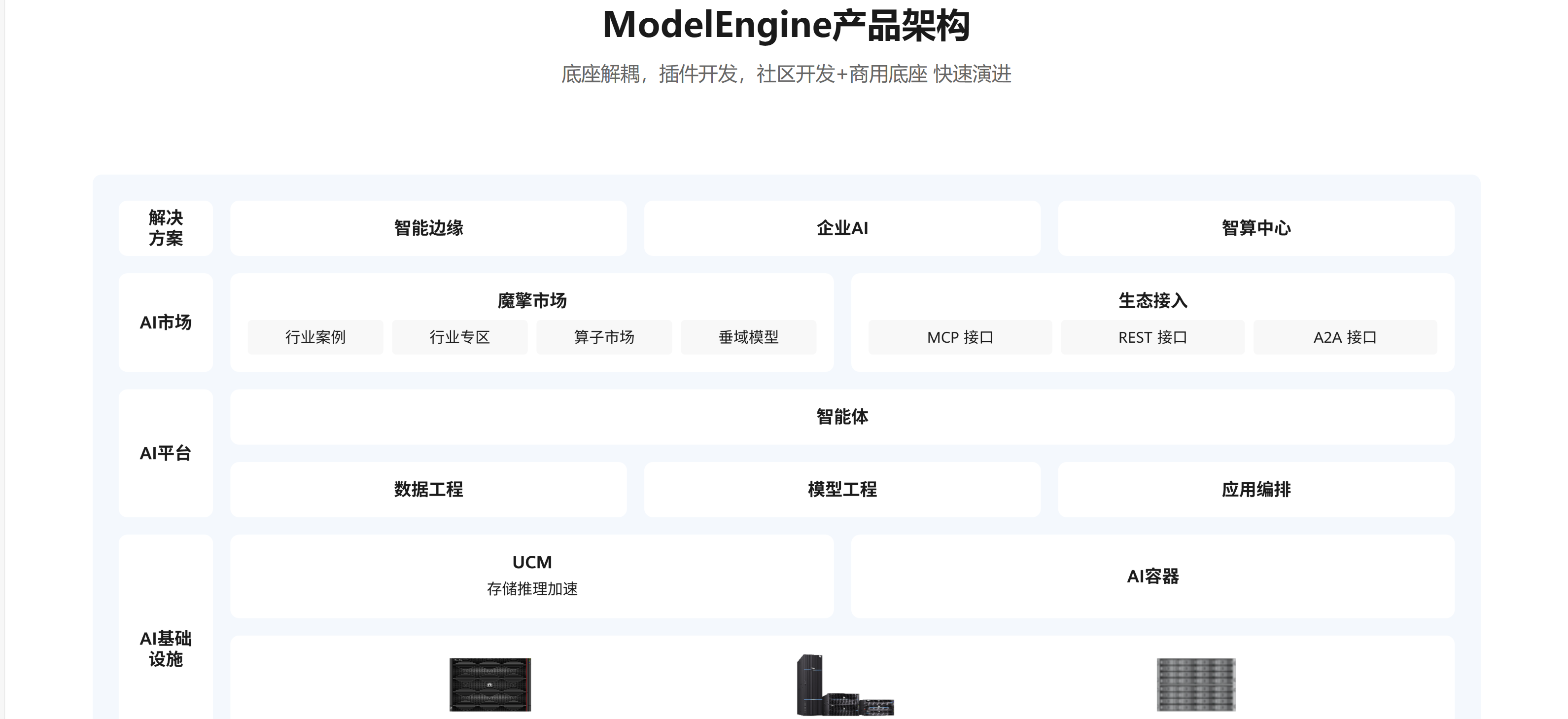

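ModelEngine's architecture is built on three core principles: layered abstraction, pluggability, and visual design. Compared with traditional AI platforms, it breaks new ground not only in its technical implementation but also in the development paradigm it offers.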

Core Architectural Layers

Infrastructure Layer

# Example infrastructure configuration
infrastructure_config = {
    "compute_backend": {
        "gpu_acceleration": "auto_scale",
        "memory_management": "dynamic_allocation",
        "model_serving": "multi_tenant"
    },
    "storage_architecture": {
        "vector_database": "distributed_cluster",
        "file_storage": "object_storage",
        "cache_strategy": "multi_level"
    },
    "networking": {
        "api_gateway": "load_balanced",
        "service_mesh": "istio_based",
        "security_layer": "zero_trust"
    }
}

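Core Service Layer

ModelEngine's core services follow a microservice architecture, with each service focused on a specific domain:

- Agent management service: manages the agent lifecycle
- Knowledge base service: handles document parsing, vectorization, and retrieval
- Workflow engine: executes business processes built with the visual orchestrator
- Plugin runtime: manages the execution environment for custom plugins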

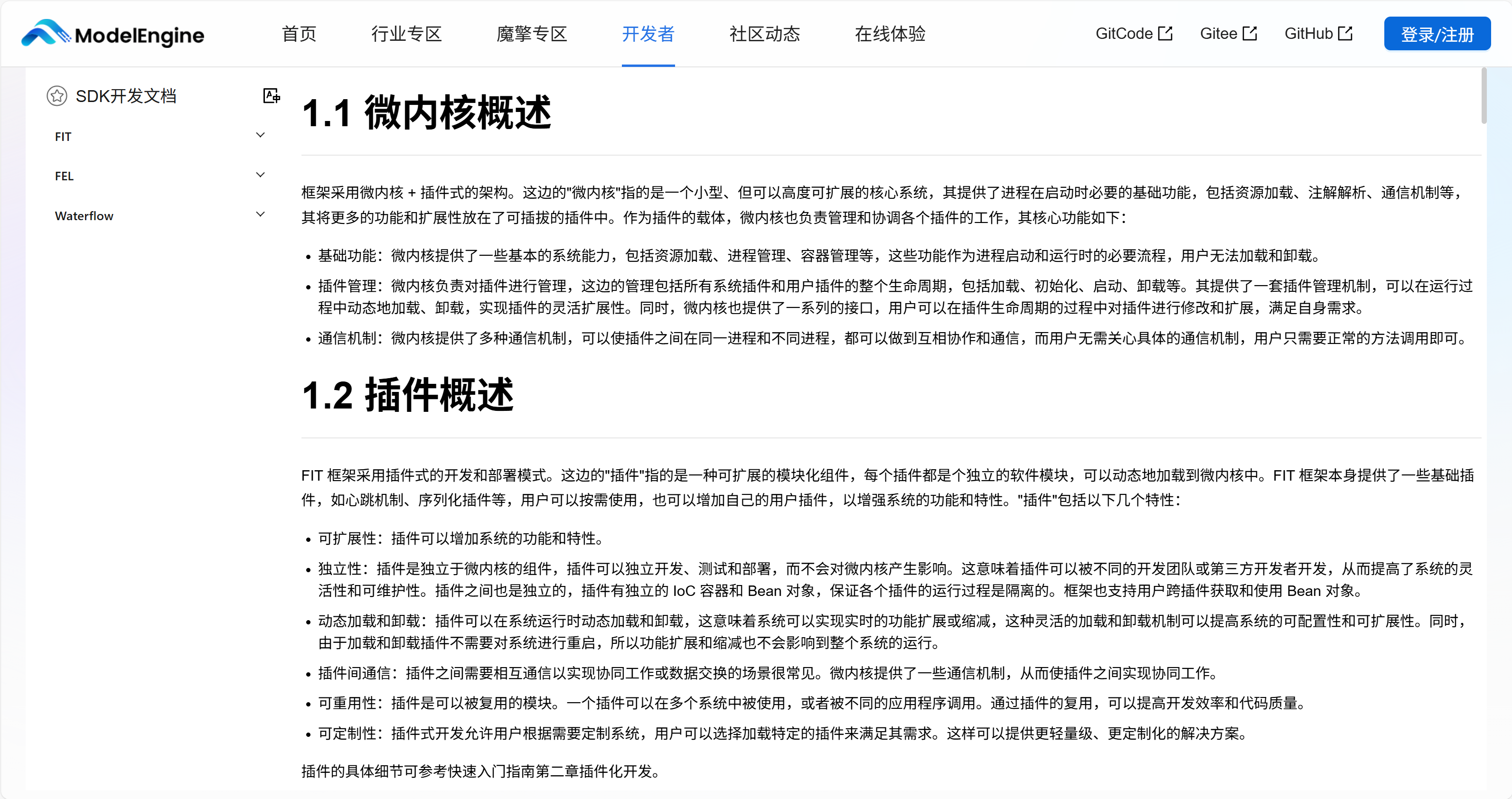

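The Plugin Extension Mechanism: The Cornerstone of an Open-Ended System

Plugin Architecture in Depth

ModelEngine's plugin system uses an innovative "hot-plug" architecture that supports loading and unloading plugins dynamically at runtime without restarting the system. This design provides a solid foundation for continuous delivery of enterprise applications.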
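The article does not show how plugin code is physically loaded and unloaded while the process keeps running. In a Python runtime, one common way to get this hot-plug behavior is the standard-library `importlib` machinery. The sketch below assumes each plugin is a single `.py` file that may define hypothetical `setup()` and `teardown()` hooks; it illustrates the technique and is not ModelEngine's implementation.

```python
import importlib.util
import sys
from types import ModuleType


class HotPluginLoader:
    """Load and unload single-file plugin modules at runtime, without a restart."""

    def __init__(self) -> None:
        self.loaded: dict[str, ModuleType] = {}

    def load(self, plugin_id: str, path: str) -> ModuleType:
        if plugin_id in self.loaded:
            raise ValueError(f"plugin already loaded: {plugin_id}")
        module_name = f"_plugin_{plugin_id}"
        spec = importlib.util.spec_from_file_location(module_name, path)
        if spec is None or spec.loader is None:
            raise ImportError(f"cannot load plugin from {path}")
        module = importlib.util.module_from_spec(spec)
        sys.modules[module_name] = module
        try:
            spec.loader.exec_module(module)      # run the plugin's top-level code
            if hasattr(module, "setup"):
                module.setup()                   # hypothetical initialization hook
        except Exception:
            sys.modules.pop(module_name, None)   # leave no half-loaded module behind
            raise
        self.loaded[plugin_id] = module
        return module

    def unload(self, plugin_id: str) -> None:
        module = self.loaded.pop(plugin_id)
        if hasattr(module, "teardown"):
            module.teardown()                    # hypothetical cleanup hook
        sys.modules.pop(module.__name__, None)
```

Removing a module from `sys.modules` does not free objects that other code still references. A production loader would also have to drop the plugin's registry entries, routes, and event subscriptions before the old code is truly gone.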

Plugin Lifecycle Management

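class PluginDependencyError(Exception):
    """Raised when a plugin's dependencies cannot be satisfied."""


class PluginSecurityError(Exception):
    """Raised when a plugin fails the security scan."""


class PluginLifecycleManager:
    # DependencyGraph and the underscore-prefixed helpers used below are not
    # shown in the article; they must be supplied by the implementation.
    def __init__(self):
        self.plugin_registry = {}
        self.dependency_graph = DependencyGraph()

    async def load_plugin(self, plugin_config):
        """Dynamically load a plugin."""
        plugin_id = plugin_config['id']

        # Dependency check
        if not await self._check_dependencies(plugin_config):
            raise PluginDependencyError("Dependency check failed")

        # Security verification
        security_result = await self._security_scan(plugin_config)
        if not security_result.passed:
            raise PluginSecurityError("Security scan failed")

        # Instantiate the plugin
        plugin_instance = await self._instantiate_plugin(plugin_config)

        # Register the services it provides
        await self._register_services(plugin_instance)

        # Update the routing table
        await self._update_routing_table(plugin_instance)

        self.plugin_registry[plugin_id] = {
            'instance': plugin_instance,
            'config': plugin_config,
            'status': 'active'
        }
        return plugin_id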
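`load_plugin` delegates the dependency check to a `DependencyGraph` whose internals the article does not show. A minimal sketch, assuming each plugin config lists the IDs it depends on under a hypothetical `depends_on` key, can use the standard library's `graphlib.TopologicalSorter` (Python 3.9+) to compute a safe load order and to reject cycles or missing dependencies:

```python
from graphlib import CycleError, TopologicalSorter


class PluginDependencyError(Exception):
    """Raised when dependencies are missing or form a cycle."""


def resolve_load_order(plugin_configs: list[dict]) -> list[str]:
    """Return plugin IDs ordered so that every plugin loads after its dependencies."""
    known = {cfg["id"] for cfg in plugin_configs}
    sorter = TopologicalSorter()
    for cfg in plugin_configs:
        deps = cfg.get("depends_on", [])        # hypothetical config key
        missing = [d for d in deps if d not in known]
        if missing:
            raise PluginDependencyError(f"{cfg['id']} is missing dependencies: {missing}")
        sorter.add(cfg["id"], *deps)            # deps are predecessors of this plugin
    try:
        return list(sorter.static_order())
    except CycleError as exc:
        # The second element of exc.args lists the nodes forming the cycle
        raise PluginDependencyError(f"dependency cycle: {exc.args[1]}") from exc
```

Unloading can reuse the same graph in reverse: a plugin should only be removed after every plugin that depends on it has been unloaded.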

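Plugin Communication

Plugins communicate through an event-based asynchronous messaging model, which decouples publishers from subscribers and helps keep the system performant and scalable: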

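import asyncio
import uuid
from collections import defaultdict
from datetime import datetime, timezone


class PluginEventBus:
    def __init__(self):
        # channel name -> list of async callbacks
        self.channels = defaultdict(list)
        # Keep references to in-flight notification tasks so they are not
        # garbage-collected before they finish
        self._pending = set()

    async def publish(self, channel, message):
        """Publish an event."""
        event = {
            'id': str(uuid.uuid4()),
            'channel': channel,
            'timestamp': datetime.now(timezone.utc).isoformat(),
            'data': message
        }
        # Notify subscribers asynchronously without blocking the publisher
        task = asyncio.create_task(self._notify_subscribers(event))
        self._pending.add(task)
        task.add_done_callback(self._pending.discard)

    async def subscribe(self, channel, callback):
        """Subscribe an async callback to a channel."""
        self.channels[channel].append(callback)

    async def _notify_subscribers(self, event):
        """Notify subscribers; a failing callback does not affect the others."""
        callbacks = list(self.channels[event['channel']])
        tasks = [callback(event) for callback in callbacks]
        await asyncio.gather(*tasks, return_exceptions=True)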

Enterprise Plugin Development in Practice

Database Connector Plugin

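from cachetools import LRUCache  # third-party package: pip install cachetools


class DatabaseConnectorPlugin:
    # Assumes an asyncpg-style pool: pool.acquire() yields a connection that
    # exposes fetch(), execute() and transaction(). _get_connection_key,
    # _create_connection_pool and _generate_cache_key are not shown in the article.
    def __init__(self, config):
        self.config = config
        self.connection_pool = {}
        self.query_cache = LRUCache(maxsize=1000)

    async def _get_pool(self, db_config):
        """Return (connection_key, pool), creating the pool on first use."""
        connection_key = self._get_connection_key(db_config)
        if connection_key not in self.connection_pool:
            await self._create_connection_pool(db_config)
        return connection_key, self.connection_pool[connection_key]

    async def execute_query(self, db_config, query, params=None):
        """Run a read-only query; params is a sequence of positional arguments."""
        connection_key, pool = await self._get_pool(db_config)

        # Scope cache entries to the database so identical queries against
        # different databases do not collide. Writes do not invalidate this
        # cache, so only cache data that tolerates staleness.
        cache_key = (connection_key, self._generate_cache_key(query, params))
        if cache_key in self.query_cache:
            return self.query_cache[cache_key]

        async with pool.acquire() as conn:
            result = await conn.fetch(query, *(params or ()))

        self.query_cache[cache_key] = result
        return result

    async def transactional_operation(self, db_config, operations):
        """Run several statements atomically in one transaction."""
        _, pool = await self._get_pool(db_config)
        async with pool.acquire() as conn:
            # Transactions belong to a single connection, not to the pool
            async with conn.transaction():
                results = []
                for op in operations:
                    result = await conn.execute(op['query'], *op.get('params', ()))
                    results.append(result)
                return results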
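`execute_query` relies on a `_generate_cache_key` helper that the article leaves out. One way to implement it, sketched here as an assumption rather than the plugin's actual code, is to hash a canonical JSON serialization of the query text and its parameters with `hashlib`, so that logically identical calls map to the same key:

```python
import hashlib
import json
from typing import Any


def generate_cache_key(query: str, params: Any = None) -> str:
    """Deterministic cache key for a query and its parameters."""
    payload = json.dumps(
        {"q": query, "p": params},
        sort_keys=True,         # dict params hash identically regardless of key order
        default=str,            # fall back to str() for dates, Decimals, etc.
        separators=(",", ":"),  # compact, stable formatting
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()
```

A key built this way identifies only the query, not the target database, so in a cache shared across databases it should be combined with a per-database identifier.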

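The Visual Orchestration Engine: Expressing Business Logic Visually

Orchestration Engine Architecture

ModelEngine's visual orchestration engine uses an execution model based on a DAG (directed acyclic graph) and supports modeling complex business processes: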

class WorkflowEngine:
    # DAGParser, NodeExecutor, WorkflowStateManager and _execute_node are not
    # shown in the article.
    def __init__(self):
        self.dag_parser = DAGParser()
        self.node_executor = NodeExecutor()
        self.state_manager = WorkflowStateManager()

    async def execute_workflow(self, workflow_definition, input_data):
        """Execute a workflow."""
        # Parse the DAG
        dag = self.dag_parser.parse(workflow_definition)

        # Initialize the execution state
        execution_id = await self.state_manager.initialize_execution(dag, input_data)

        try:
            # A topological sort determines the execution order
            execution_order = self.dag_parser.topological_sort(dag)

            # Execute the nodes one at a time in dependency order
            for node_id in execution_order:
                await self._execute_node(execution_id, node_id, dag.nodes[node_id])

            # Collect the final result
            return await self.state_manager.get_final_result(execution_id)
        except Exception as e:
            await self.state_manager.mark_failed(execution_id, str(e))
            raise
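`execute_workflow` runs nodes strictly one after another in topological order. Nodes with no path between them are independent, so a DAG executor can also run each "ready" set of nodes concurrently. The sketch below shows this with `graphlib.TopologicalSorter` and `asyncio`; the `run_node` callback and the dict-of-dependencies workflow format are assumptions for illustration, not ModelEngine's API.

```python
import asyncio
from graphlib import TopologicalSorter
from typing import Awaitable, Callable


async def run_dag(
    dependencies: dict[str, set[str]],
    run_node: Callable[[str, dict], Awaitable[object]],
) -> dict[str, object]:
    """Execute a DAG layer by layer, running all ready nodes in parallel."""
    sorter = TopologicalSorter(dependencies)   # node -> set of predecessors
    sorter.prepare()                           # raises CycleError if not a DAG
    results: dict[str, object] = {}
    while sorter.is_active():
        ready = sorter.get_ready()             # nodes whose predecessors are done
        outputs = await asyncio.gather(*(run_node(n, results) for n in ready))
        for node, output in zip(ready, outputs):
            results[node] = output
            sorter.done(node)                  # unlock successors
    return results
```

Waiting for a whole layer keeps the sketch short. A fully asynchronous scheduler would call `done()` as each node finishes and immediately start any node that became ready.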

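Smart Node Type System

ModelEngine's node system supports type inference and automatic connection, which substantially improves development efficiency:

Node Type Definitions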

# Node type system configuration
node_type_system = {
    "data_processing": {
        "input_ports": ["raw_data"],
        "output_ports": ["processed_data"],
        "validation_rules": {
            "data_schema": "enforced",
            "quality_metrics": ["completeness", "accuracy"]
        }
    },
    "ai_inference": {
        "input_ports": ["input_text", "model_parameters"],
        "output_ports": ["prediction", "confidence_score"],
        "resource_requirements": {
            "gpu_memory": "variable",
            "execution_timeout": 30000  # unit not stated; presumably milliseconds (30 s)
        }
    },
    "business_rule": {
        "input_ports": ["business_data", "rule_definition"],
        "output_ports": ["decision", "reasoning"],
        "execution_mode": ["synchronous", "asynchronous"]
    }
}
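The node type configuration lists port names but not the data types behind them, so the article does not say how automatic connection decides which ports fit together. A minimal sketch, assuming each port carries a hypothetical type tag, is to connect an upstream output to a downstream input only when the tags match:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Port:
    name: str
    type_tag: str  # hypothetical type annotation, e.g. "text" or "table"


@dataclass
class NodeType:
    name: str
    inputs: list[Port]
    outputs: list[Port]


def auto_connect(upstream: NodeType, downstream: NodeType) -> list[tuple[str, str]]:
    """Pair each downstream input with the first upstream output of the same type."""
    connections = []
    for in_port in downstream.inputs:
        match = next((o for o in upstream.outputs if o.type_tag == in_port.type_tag), None)
        if match is not None:
            connections.append((match.name, in_port.name))
    return connections
```

Inputs left unmatched (for example a `model_parameters` port with no compatible upstream output) would have to be wired manually or filled from defaults.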

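Multi-Agent Collaboration Framework: A New Paradigm for Distributed Intelligence

Agent Communication Protocol

ModelEngine's multi-agent collaboration is built on a distributed architecture based on the Actor model: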

import asyncio


class AgentExecutionError(Exception):
    """Raised when an agent reports a failed subtask."""


class MultiAgentCoordinator:
    # AgentRegistry, MessageRouter, ConsensusEngine and the underscore-prefixed
    # helpers are not shown in the article.
    def __init__(self, cluster_config):
        self.cluster_config = cluster_config
        self.agent_registry = AgentRegistry()
        self.message_router = MessageRouter()
        self.consensus_engine = ConsensusEngine()
        # Context shared with every agent; it must exist before subtasks run
        self.shared_context = {}

    async def coordinate_agents(self, task_description, available_agents):
        """Coordinate multiple agents to complete a task."""
        # Decompose the task
        subtasks = await self._decompose_task(task_description)

        # Assign subtasks to agents (agent_id -> subtask)
        assignments = await self._assign_subtasks(subtasks, available_agents)

        # Run the subtasks in parallel and wait for all results
        results = await asyncio.gather(*(
            self._execute_subtask(agent_id, subtask)
            for agent_id, subtask in assignments.items()
        ))

        # Integrate the results
        return await self._integrate_results(results)

    async def _execute_subtask(self, agent_id, subtask):
        """Execute a single subtask."""
        agent = self.agent_registry.get_agent(agent_id)

        # Send the task request
        response = await agent.send_message({
            'type': 'task_request',
            'task': subtask,
            'context': self.shared_context
        })

        # Handle the response
        if response['status'] == 'success':
            return response['result']
        raise AgentExecutionError(f"Agent execution failed: {response.get('error')}")
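The coordinator is described as Actor-based, but its code shows only request/response calls. The core Actor idea is that each agent owns a private mailbox and processes one message at a time, so its internal state needs no locks. A minimal `asyncio` sketch of that idea follows; `Actor`, `ask`, and the `EchoAgent` stand-in are hypothetical names, not ModelEngine classes.

```python
import asyncio
from typing import Any, Optional


class Actor:
    """Minimal actor: a private mailbox drained by a single worker task."""

    def __init__(self) -> None:
        self.mailbox: asyncio.Queue = asyncio.Queue()
        self._worker: Optional[asyncio.Task] = None

    def start(self) -> None:
        self._worker = asyncio.create_task(self._run())

    async def ask(self, message: Any) -> Any:
        """Send a message and wait for its reply (request/response)."""
        reply = asyncio.get_running_loop().create_future()
        await self.mailbox.put((message, reply))
        return await reply

    async def stop(self) -> None:
        await self.mailbox.put(None)            # sentinel ends the worker loop
        if self._worker is not None:
            await self._worker

    async def _run(self) -> None:
        # Messages are handled strictly one at a time, in arrival order
        while (item := await self.mailbox.get()) is not None:
            message, reply = item
            try:
                reply.set_result(await self.handle(message))
            except Exception as exc:            # report failures to the sender
                reply.set_exception(exc)

    async def handle(self, message: Any) -> Any:
        raise NotImplementedError


class EchoAgent(Actor):
    """Hypothetical agent whose state is touched only by its own worker."""

    def __init__(self) -> None:
        super().__init__()
        self.handled = 0

    async def handle(self, message: Any) -> Any:
        self.handled += 1
        return {"status": "success", "result": f"done: {message['task']}"}
```

Because only the worker task touches `handled`, concurrent `ask` calls from many coordinators cannot race on it; this is the property the Actor model trades throughput for.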

Agent Collaboration Patterns

ModelEngine supports several collaboration patterns to suit different business scenarios:

Master-Slave Mode

master_slave_config = {
    "master_agent": {
        "role": "coordinator",
        "capabilities": ["task_decomposition", "result_aggregation"],
        "decision_authority": "final"
    },
    "slave_agents": {
        "role": "executor",
        "specializations": ["data_analysis", "content_generation", "validation"],
        "communication_protocol": "request_response"
    }
}

Peer-to-Peer Mode

peer_to_peer_config = {
    "communication": {
        "pattern": "broadcast",
        "consensus_mechanism": "majority_vote",
        "conflict_resolution": "auto_negotiation"
    },
    "knowledge_sharing": {
        "enabled": True,
        "sync_strategy": "incremental",
        "privacy_preserving": True
    }
}
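The peer-to-peer configuration names `majority_vote` as its consensus mechanism without defining it. A minimal sketch, assuming each peer submits one discrete answer and that a strict majority (more than half of all votes) is required, with anything short of that left to the configured conflict-resolution step:

```python
from collections import Counter
from typing import Hashable, Optional


def majority_vote(votes: dict[str, Hashable]) -> Optional[Hashable]:
    """Return the answer backed by more than half of the agents, or None."""
    if not votes:
        return None
    answer, count = Counter(votes.values()).most_common(1)[0]
    # Require a strict majority; otherwise signal that negotiation is needed
    return answer if count * 2 > len(votes) else None
```

Returning `None` instead of the plurality answer keeps the outcome explicit: the caller can hand a split decision to whatever negotiation mechanism is configured rather than silently accepting a minority view.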

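Multi-Source Tool Integration: Connectors for the Enterprise Ecosystem

Unified Integration Framework

ModelEngine's tool integration framework is built on the adapter pattern, which makes it quick to connect a wide range of external systems: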

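class IntegrationError(Exception):
    """Raised when an external system fails its connection test."""


class AdapterNotFoundError(Exception):
    """Raised when no adapter is registered for a system type."""


class UnifiedIntegrationFramework:
    # ConnectionPool, SecurityGateway and the underscore-prefixed helpers are
    # not shown in the article.
    def __init__(self):
        self.adapters = {}
        self.connection_pool = ConnectionPool()
        self.security_gateway = SecurityGateway()

    async def register_adapter(self, system_type, adapter_config):
        """Register an adapter for an external system."""
        adapter_class = self._get_adapter_class(system_type)
        adapter_instance = adapter_class(adapter_config)

        # Only register adapters that pass a connection test
        if not await adapter_instance.test_connection():
            raise IntegrationError(f"Connection test failed for system: {system_type}")
        self.adapters[system_type] = adapter_instance
        return True

    async def execute_operation(self, system_type, operation, data):
        """Execute a cross-system operation."""
        adapter = self.adapters.get(system_type)
        if not adapter:
            raise AdapterNotFoundError(f"No adapter found for: {system_type}")

        # Security check
        await self.security_gateway.validate_operation(system_type, operation)

        # Execute the operation on a pooled connection
        async with self.connection_pool.get_connection(system_type) as conn:
            result = await adapter.execute(conn, operation, data)

        # Audit log
        await self._log_operation(system_type, operation, data, result)
        return result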

Enterprise System Integration Example

CRM System Integration

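from datetime import datetime, timezone


class CRMIntegrationAdapter:
    # crm_api and the underscore-prefixed helpers are not shown in the article;
    # _set_last_sync_timestamp is a hypothetical counterpart to
    # _get_last_sync_timestamp.
    def __init__(self, crm_api):
        self.crm_api = crm_api

    async def sync_customer_data(self, customer_filters):
        """Synchronize customer data."""
        # Incremental sync: fetch only records changed since the last run.
        # Capture the new watermark before fetching, so records modified
        # while this sync runs are picked up on the next one.
        sync_started_at = datetime.now(timezone.utc)
        last_sync = await self._get_last_sync_timestamp()
        customers = await self.crm_api.get_customers(
            filters=customer_filters,
            modified_since=last_sync
        )

        # Transform into the local data model
        normalized_data = await self._normalize_customer_data(customers)

        # Update local storage, then persist the watermark
        await self._update_local_database(normalized_data)
        await self._set_last_sync_timestamp(sync_started_at)

        return {
            "synced_records": len(customers),
            "sync_timestamp": sync_started_at.isoformat()
        }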
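Incremental sync hinges on when the "last sync" watermark is recorded. The self-contained sketch below uses a plain callable in place of the CRM API (a hypothetical stand-in, not a real CRM client) to show the watermark pattern: capture the time before fetching, upsert by primary key, and advance the watermark only after the local write succeeds. A record modified while a sync is running is then picked up on the next run instead of being skipped.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass
class IncrementalSyncer:
    # Returns records modified at or after the given time (all records if None)
    fetch_changed: Callable[[Optional[datetime]], list[dict]]
    clock: Callable[[], datetime] = lambda: datetime.now(timezone.utc)
    watermark: Optional[datetime] = None
    local_store: dict = field(default_factory=dict)

    def sync(self) -> int:
        started_at = self.clock()                  # capture before fetching
        records = self.fetch_changed(self.watermark)
        for rec in records:
            self.local_store[rec["id"]] = rec      # idempotent upsert by primary key
        self.watermark = started_at                # advance only after success
        return len(records)
```

Fetching with an inclusive bound (`>=`) may re-deliver records at the boundary, which is harmless because the upsert is idempotent; an exclusive bound risks losing them.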

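Platform Comparison: An In-Depth Technical Evaluation

Architecture Design Comparison

Architectural Differences from Dify

- Extensibility: ModelEngine's plugin system is more flexible and allows lower-level customization
- Performance: ModelEngine is better optimized for executing complex workflows
- Enterprise features: ModelEngine addresses security and compliance more comprehensively

Technical Comparison with Coze

- Agent collaboration: ModelEngine's multi-agent framework is more mature
- Integration: it supports deep integration with a wider range of enterprise systems
- Developer experience: it provides better debugging tools while remaining feature-rich

Engineering Comparison with Versatile

- Deployment flexibility: ModelEngine supports a wider variety of deployment modes
- Monitoring: it provides more complete operations monitoring
- Cost control: it manages resource utilization and cost optimization at a finer granularity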

Performance Benchmarks

In real-world performance tests, ModelEngine performed well in the following scenarios:

High-Concurrency Processing

performance_metrics = {
    "concurrent_users": 1000,
    "average_response_time": "245ms",
    "throughput": "1250 requests/second",
    "error_rate": "0.05%",
    "resource_utilization": {
        "cpu": "45%",
        "memory": "60%",
        "network": "35%"
    }
}
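These figures can be cross-checked with Little's law, L = λW: the average number of requests in flight equals throughput multiplied by average response time. The article does not describe the load model, so the sketch below assumes a closed-loop test in which each of the 1000 users waits ("thinks") between requests, and derives the implied number of in-flight requests and the per-user think time under that assumption.

```python
def littles_law_check(users: int, throughput_rps: float, avg_response_s: float) -> dict:
    """Cross-check load-test figures with Little's law (L = lambda * W)."""
    in_flight = throughput_rps * avg_response_s   # requests being served at once
    cycle_time = users / throughput_rps           # closed loop: N = X * (R + Z)
    think_time = cycle_time - avg_response_s      # Z, idle time per user per cycle
    return {"in_flight": in_flight, "think_time_s": think_time}


metrics = littles_law_check(users=1000, throughput_rps=1250, avg_response_s=0.245)
print(metrics)
```

About 306 requests in flight for 1000 concurrent users implies that each user is idle for roughly 0.56 s per request cycle. If all 1000 users instead had a request outstanding at every moment, Little's law would require an average response time of 0.8 s at this throughput, so the load model matters when interpreting such numbers.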

Large-Scale Knowledge Base Retrieval

retrieval_performance = {
    "document_count": 100000,
    "index_size": "15GB",
    "query_latency": {
        "average": "120ms",
        "p95": "280ms",
        "p99": "450ms"
    },
    "recall_rate": "98.7%"
}
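The retrieval figures report a 98.7% recall rate without saying at what cutoff or against which relevance labels it was measured. One common definition is recall@k: the fraction of a query's known-relevant documents that appear in the top k results, averaged over a set of labeled queries. A minimal sketch of that definition:

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        raise ValueError("recall is undefined for a query with no relevant documents")
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)


def mean_recall_at_k(runs: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@k over (retrieved, relevant) pairs, one per query."""
    return sum(recall_at_k(r, rel, k) for r, rel in runs) / len(runs)
```

The same ranking can score very differently at k = 5 and k = 50, which is why a recall figure is only comparable across platforms when k and the labeled query set are stated.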

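Technical Insights and Best Practices

System Design Recommendations

Plugin Development Guidelines

- Interface design: follow the unified plugin interface standard to ensure compatibility
- Error handling: implement thorough error handling and recovery mechanisms
- Performance optimization: make judicious use of caching and asynchronous operations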

Architecture Optimization Strategies

architecture_best_practices = {
    "scalability": {
        "horizontal_scaling": "stateless_design",
        "vertical_scaling": "resource_optimization",
        "caching_strategy": "multi_layer"
    },
    "reliability": {
        "fault_tolerance": "circuit_breaker",
        "disaster_recovery": "multi_region",
        "data_consistency": "eventual_consistency"
    },
    "maintainability": {
        "modular_design": "microservices",
        "documentation": "openapi_spec",
        "testing_strategy": "comprehensive_coverage"
    }
}
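The reliability settings name `circuit_breaker` as the fault-tolerance strategy. A minimal single-threaded sketch of the classic pattern, with illustrative thresholds: after `failure_threshold` consecutive failures the breaker opens and rejects calls immediately; once `reset_timeout` seconds have passed it becomes half-open and lets a trial call through, which either closes it again or reopens it.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    """Consecutive-failure circuit breaker (not thread-safe)."""

    def __init__(self, failure_threshold: int = 5, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half_open"   # a trial call is allowed
        return "open"

    def call(self, func: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self.state == "open":
            raise CircuitOpenError("circuit open; call rejected")
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            # A failed trial call, or reaching the threshold, (re)opens the breaker
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0        # success closes the breaker
        self.opened_at = None
        return result
```

Failing fast while open protects both the caller (no waiting on timeouts) and the struggling downstream service (no retry storm), which is why the pattern pairs well with the stateless, horizontally scaled design listed above.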

Security and Compliance Considerations

Enterprise Security Architecture

security_framework = {
    "data_protection": {
        "encryption": {
            "at_rest": "AES-256",
            "in_transit": "TLS_1.3"
        },
        "data_masking": {
            "sensitive_fields": ["PII", "financial_data"],
            "masking_techniques": ["tokenization", "format_preserving"]
        }
    },
    "access_control": {
        "authentication": "multi_factor",
        "authorization": "attribute_based",
        "audit_trail": "immutable_logging"
    },
    "compliance": {
        "regulations": ["GDPR", "CCPA", "HIPAA"],
        "data_sovereignty": "region_specific",
        "privacy_by_design": "enforced"
    }
}
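The security framework lists `format_preserving` among its masking techniques. True format-preserving encryption (such as the FF1 and FF3-1 modes in NIST SP 800-38G) needs a vetted cryptographic library. The sketch below shows only the simpler idea of format-preserving masking for display: digits are replaced, while length, separators, and the last few digits stay intact. The function name and defaults are illustrative.

```python
def mask_preserving_format(value: str, keep_last: int = 4, mask_char: str = "*") -> str:
    """Mask all but the last `keep_last` digits, keeping separators and length."""
    total_digits = sum(ch.isdigit() for ch in value)
    digits_seen = 0
    out = []
    for ch in value:
        if ch.isdigit():
            digits_seen += 1
            # Only the final `keep_last` digits remain visible
            out.append(ch if digits_seen > total_digits - keep_last else mask_char)
        else:
            out.append(ch)  # keep separators such as '-' or spaces
    return "".join(out)
```

Unlike tokenization or encryption, masking is one-way: the original value cannot be recovered from the output, so it suits display and logging but not fields that downstream systems must process.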

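Summary and Outlook

This in-depth analysis of ModelEngine's system architecture highlights its technical strengths in plugin extensibility, visual orchestration, multi-agent collaboration, and multi-source tool integration. These capabilities not only address current pain points in AI application development but also lay a solid foundation for future intelligent applications.

ModelEngine's core value lies in:

- Technical sophistication: modern architectural principles and a modern technology stack
- Enterprise readiness: comprehensive security, compliance, and operations support
- Ecosystem openness: strong extensibility and integration support

Looking ahead, as AI technology continues to advance, we expect ModelEngine to keep evolving in the following directions:

- Adaptive learning: self-optimization and intelligent self-tuning of the system
- Edge computing: support for cooperative computation across distributed edge nodes
- Cross-chain interoperability: seamless integration across different blockchains and distributed systems

For technical decision-makers and architects, ModelEngine is more than an AI development platform; it is infrastructure for building the intelligent businesses of the future. The depth of thought in its system design and the care taken in its technical implementation set it apart in a crowded AI platform market and make it an ideal technology partner for enterprises undergoing intelligent transformation.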
