YOLOv8/v10剪枝全过程

1 约束训练

1.1 修改YOLOv8代码:

ultralytics/yolo/engine/trainer.py

添加内容:

# Backward

self.scaler.scale(self.loss).backward()

# ========== 新增 ==========

l1_lambda = 1e-2 * (1 - 0.9 * epoch / self.epochs)

for k, m in self.model.named_modules():

if isinstance(m, nn.BatchNorm2d):

m.weight.grad.data.add_(l1_lambda * torch.sign(m.weight.data))

m.bias.grad.data.add_(1e-2 * torch.sign(m.bias.data))

# ========== 新增 ==========

# Optimize - https://pytorch.org/docs/master/notes/amp_examples.html

if ni - last_opt_step >= self.accumulate:

self.optimizer_step()

last_opt_step = ni

1.2 训练

需要注意的就是amp=False

命令行输入:

yolo train model=yolov8s.yaml epochs=100 amp=False

训练完会得到一个best.pt和last.pt,推荐用last.pt

1.3 约束训练可视化

已实现在tensorboard可视化约束训练过程BN参数的分布变化

随着训练进行(纵轴是epoch),BN层参数会逐渐从最上面的正太分布趋向于0附近。

以下是正常训练和稀疏训练的BN层参数值分布图:

右图的稀疏训练明显太早就全到0了,这样会影响精度,可以把系数1e-2改小一点1e-3,这样会稀疏的慢一点,l1_lambda = 1e-2 * (1 - 0.9 * epoch / self.epochs)

如下图:左为1e-2, 右为0.3*1e-2

2 剪枝

上一步得到的last.pt作为剪枝对象,自己创建一个prun.py文件:

这里的剪枝代码仅适用yolov8原模型,如有模块/模型的更改,则需要修改剪枝代码

需要定制改模型后的剪枝的可以私信

from ultralytics import YOLO

import torch

from ultralytics.nn.modules import Bottleneck, Conv, C2f, SPPF, Detect

yolo = YOLO("last.pt") # 第一步约束训练得到的pt文件

model = yolo.model

ws = []

bs = []

for _, m in model.named_modules():

if isinstance(m, torch.nn.BatchNorm2d):

w = m.weight.abs().detach()

b = m.bias.abs().detach()

ws.append(w)

bs.append(b)

factor = 0.8 # 通道保留比率

ws = torch.cat(ws)

threshold = torch.sort(ws, descending=True)[0][int(len(ws) * factor)]

print(threshold)

def _prune(c1, c2):

wet = c1.bn.weight.data.detach()

bis = c1.bn.bias.data.detach()

list = []

_threshold = threshold

while len(list) < 8:

list = torch.where(wet.abs() >= _threshold)[0]

_threshold = _threshold * 0.5

i = len(list)

c1.bn.weight.data = wet[list]

c1.bn.bias.data = bis[list]

c1.bn.running_var.data = c1.bn.running_var.data[list]

c1.bn.running_mean.data = c1.bn.running_mean.data[list]

c1.bn.num_features = i

c1.conv.weight.data = c1.conv.weight.data[list]

c1.conv.out_channels = i

if c1.conv.bias is not None:

c1.conv.bias.data = c1.conv.bias.data[list]

if not isinstance(c2, list):

c2 = [c2]

for item in c2:

if item is not None:

if isinstance(item, Conv):

conv = item.conv

else:

conv = item

conv.in_channels = i

conv.weight.data = conv.weight.data[:, list]

def prune(m1, m2):

if isinstance(m1, C2f):

m1 = m1.cv2

if not isinstance(m2, list):

m2 = [m2]

for i, item in enumerate(m2):

if isinstance(item, C2f) or isinstance(item, SPPF):

m2[i] = item.cv1

_prune(m1, m2)

for _, m in model.named_modules():

if isinstance(m, Bottleneck):

_prune(m.cv1, m.cv2)

for _, p in yolo.model.named_parameters():

p.requires_grad = True

# yolo.export(format="onnx") # 导出为onnx文件

# yolo.train(data="VOC.yaml", epochs=100) # 剪枝后直接训练微调

torch.save(yolo.ckpt, "prune.pt")

print("done")上述代码只需修改:

1. 最顶上的yolo = YOLO("last.pt")改为第一步约束训练得到的文件路径,一般为runs/detect/train/weights/last.pt

2. 最下面的torch.save(yolo.ckpt, "prune.pt")改为想要保存的路径

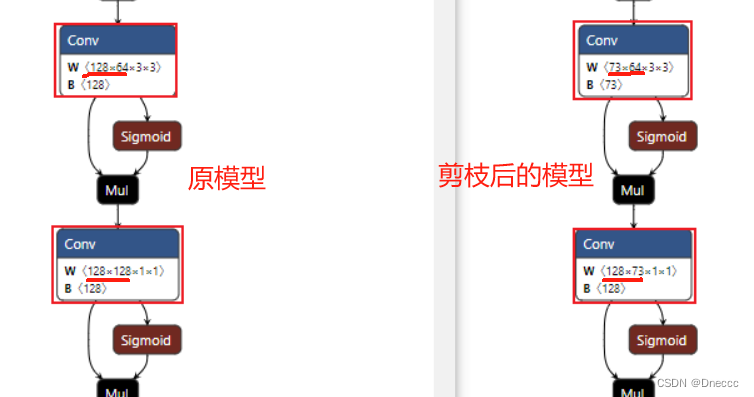

运行完会得到prune.pt和prune.onnx可以在netron.app网站拖入onnx文件查看是否剪枝成功了,成功的话可以看到某些通道数字为单数或者一些不规律的数字,如下图:

3 回调训练(finetune)

3.1 先要把第一步约束训练的代码注释掉

3.2 修改相关代码

修改位置:yolo/engine/trainer.py的443行左右

self.model = self.get_model(cfg=cfg, weights=weights, verbose=RANK == -1) # calls Model(cfg, weights)

# ========== 新增该行代码 ==========

self.model = weights

# ========== 新增该行代码 ==========

return ckpt

3.3 修改完代码就可以进行finetun训练了

命令行输入:

yolo train model=prune.pt epochs=100

结果展示:

约束训练last.pt:

剪枝后的prune.pt:

回调后的finetune.pt:

可以看到精度损失很小,但是参数量和浮点运算量下去了很多,推理速度在cpu上测试是变快了的,gpu上好像没啥变化

剪枝前后各层通道数对比:

更多推荐

已为社区贡献3条内容

已为社区贡献3条内容

所有评论(0)