Fixing the error MaxRetryError("HTTPSConnectionPool(host='huggingface.co', port=443):xxx")


Full error message

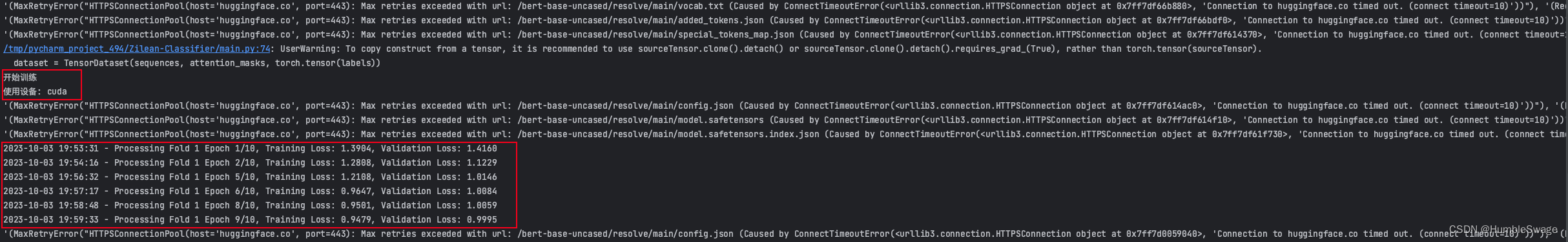

'(MaxRetryError("HTTPSConnectionPool(host='huggingface.co', port=443): Max retries exceeded with url: /bert-base-uncased/resolve/main/vocab.txt (Caused by ConnectTimeoutError(<urllib3.connection.HTTPSConnection object at 0x7f1320354880>, 'Connection to huggingface.co timed out. (connect timeout=10)'))"), '(Request ID: 625af900-631f-4614-9358-30364ecacefe)')' thrown while requesting HEAD https://huggingface.co/bert-base-uncased/resolve/main/vocab.txt

'(MaxRetryError("HTTPSConnectionPool(host='huggingface.co', port=443): Max retries exceeded with url: /bert-base-uncased/resolve/main/added_tokens.json (Caused by ConnectTimeoutError(<urllib3.connection.HTTPSConnection object at 0x7f1320354d60>, 'Connection to huggingface.co timed out. (connect timeout=10)'))"), '(Request ID: 1679a995-7441-4afe-a685-9a7bd6da9f2a)')' thrown while requesting HEAD https://huggingface.co/bert-base-uncased/resolve/main/added_tokens.json

'(MaxRetryError("HTTPSConnectionPool(host='huggingface.co', port=443): Max retries exceeded with url: /bert-base-uncased/resolve/main/special_tokens_map.json (Caused by ConnectTimeoutError(<urllib3.connection.HTTPSConnection object at 0x7f13202fb250>, 'Connection to huggingface.co timed out. (connect timeout=10)'))"), '(Request ID: 9af5b73e-5230-45d7-8886-5d37d38f09a8)')' thrown while requesting HEAD https://huggingface.co/bert-base-uncased/resolve/main/special_tokens_map.json

'(MaxRetryError("HTTPSConnectionPool(host='huggingface.co', port=443): Max retries exceeded with url: /bert-base-uncased/resolve/main/tokenizer_config.json (Caused by ConnectTimeoutError(<urllib3.connection.HTTPSConnection object at 0x7f13202fb730>, 'Connection to huggingface.co timed out. (connect timeout=10)'))"), '(Request ID: 12136040-d033-4099-821c-dcb80fb50018)')' thrown while requesting HEAD https://huggingface.co/bert-base-uncased/resolve/main/tokenizer_config.json

Traceback (most recent call last):

File "/tmp/pycharm_project_494/Zilean-Classifier/main.py", line 48, in <module>

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

File "/root/miniconda3/envs/DL/lib/python3.8/site-packages/transformers/tokenization_utils_base.py", line 1838, in from_pretrained

raise EnvironmentError(

OSError: Can't load tokenizer for 'bert-base-uncased'. If you were trying to load it from 'https://huggingface.co/models', make sure you don't have a local directory with the same name. Otherwise, make sure 'bert-base-uncased' is the correct path to a directory containing all relevant files for a BertTokenizer tokenizer.

The main cause of this error is that your server cannot reach huggingface.co. You can check this directly on the server by pinging the host:

ping huggingface.co

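On my machine, no data came back at all. This test only means something if the server has a working network connection in the first place (you can run ping www.baidu.com to check that).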
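Note that ping uses ICMP, while the error above is a TCP connect timeout on port 443, so the two can disagree. As a rough complement to the ping test, here is a minimal sketch that tries to open a TCP connection the same way the failing request does. The helper name `can_connect` is made up for this illustration, and the 10-second default simply mirrors the `connect timeout=10` in the log.

```python
import socket


def can_connect(host: str, port: int = 443, timeout: float = 10.0) -> bool:
    """Return True if a TCP connection to host:port opens within `timeout` seconds."""
    try:
        # create_connection resolves the host name and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # OSError covers DNS failures, refused connections, and timeouts.
        return False
```

Calling `can_connect("huggingface.co")` and `can_connect("www.baidu.com")` on the server should tell you whether only huggingface.co is unreachable or the machine has no outbound network at all.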

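Solution 1

Use a VPN. This works best when the machine is your own. On a machine that is not yours, setting up a VPN is more of a hassle, because rented servers are wiped periodically. Setting up a VPN on Linux is itself straightforward, and a quick search turns up plenty of guides.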

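Solution 2 [Recommended]

The second approach suits rented machines: upload a pretrained model that has already been downloaded on your local machine (which also needs to be able to reach the external internet). If you have already run the code locally, the model is in your local cache; if not, download it from the official site. First, work out where the files live on your local machine [on Windows there is a .cache folder under your user directory; make sure hidden folders are shown]: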

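- Download the chosen model to your local machine [the local machine needs to be able to get past the firewall]

      from transformers import BertModel, BertTokenizer

      # Uses bert-large-uncased here; substitute the model your code loads
      model = BertModel.from_pretrained('bert-large-uncased')
      tokenizer = BertTokenizer.from_pretrained('bert-large-uncased')

  Once this finishes, the downloaded model files are in your local cache.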

- Locate the downloaded model files on your local machine

  - Windows users: look under your user folder for .cache/huggingface/hub/ (make sure hidden files are shown).
  - macOS users: look in ~/.cache/huggingface/hub/models--bert-base-uncased

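- Upload the files to the server

  Upload the local files to ~/.cache/huggingface/hub/models--bert-base-uncased on the server, and you can then run your code. One small caveat: at run time you will still see errors saying these files cannot be downloaded. Be patient; after those messages your code runs normally.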
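The locate-and-upload steps above can be sketched as a small script that finds the model's cache folder and packs it into one archive for transfer. This is an illustration under stated assumptions, not the author's procedure: the function names are invented here, and the use of the `HF_HUB_CACHE` and `HF_HOME` environment variables to override the default `~/.cache/huggingface/hub` location is an assumption about your setup that you should verify.

```python
import os
import tarfile
from pathlib import Path


def hub_cache_dir() -> Path:
    """Best-effort guess at the local Hugging Face hub cache directory."""
    if os.environ.get("HF_HUB_CACHE"):
        return Path(os.environ["HF_HUB_CACHE"])
    hf_home = os.environ.get("HF_HOME") or str(Path.home() / ".cache" / "huggingface")
    return Path(hf_home) / "hub"


def model_cache_dir(model_id: str, cache_dir: Path) -> Path:
    """Map a model id to its cache folder, e.g. models--bert-base-uncased."""
    return cache_dir / ("models--" + model_id.replace("/", "--"))


def pack_model(model_id: str, cache_dir: Path, out_path: Path) -> Path:
    """Pack the cached model folder into a .tar.gz archive for upload."""
    src = model_cache_dir(model_id, cache_dir)
    if not src.is_dir():
        raise FileNotFoundError(f"no cached copy of {model_id!r} at {src}")
    with tarfile.open(out_path, "w:gz") as tar:
        # arcname keeps only the models--... folder name inside the archive.
        # Symlinks inside the cache are stored as symlinks (tarfile's default).
        tar.add(src, arcname=src.name)
    return out_path
```

For example, `pack_model("bert-base-uncased", hub_cache_dir(), Path("bert.tar.gz"))` produces an archive you can copy to the server with any file transfer tool and unpack inside ~/.cache/huggingface/hub/ there.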

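If you do not want those errors to show up at all, change how the model is loaded. The original call was:

    config = BertConfig.from_pretrained(model_name)

Change it to:

    import transformers

    # Path to the local BERT model directory
    bert_model_dir = "/path/to/bert/model"
    config = transformers.BertConfig.from_pretrained(bert_model_dir)

and that is all.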
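If the model was uploaded as a hub cache folder (models--bert-base-uncased), the directory that actually holds config.json and vocab.txt normally sits one level deeper, under snapshots/ in a folder named after a revision hash. The sketch below looks that folder up so it can be used as the local model path. The helper name `find_snapshot_dir` is hypothetical, and the snapshots/ layout is an assumption about the cache format of your installed version, so check it against what is on disk.

```python
from pathlib import Path
from typing import Optional


def find_snapshot_dir(model_cache: Path, required: str = "config.json") -> Optional[Path]:
    """Return the newest snapshot folder that contains `required`, or None."""
    snapshots = model_cache / "snapshots"
    if not snapshots.is_dir():
        return None
    # Keep only revision folders where the required file really resolves
    # (exists() follows symlinks, so dangling links are skipped).
    candidates = [
        d for d in snapshots.iterdir()
        if d.is_dir() and (d / required).exists()
    ]
    if not candidates:
        return None
    # If several revisions are cached, prefer the most recently modified one.
    return max(candidates, key=lambda d: d.stat().st_mtime)
```

Something like `find_snapshot_dir(Path.home() / ".cache/huggingface/hub/models--bert-base-uncased")` would give a candidate value for `bert_model_dir`. Passing `local_files_only=True` to `from_pretrained` is another way to keep the library from contacting huggingface.co.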
